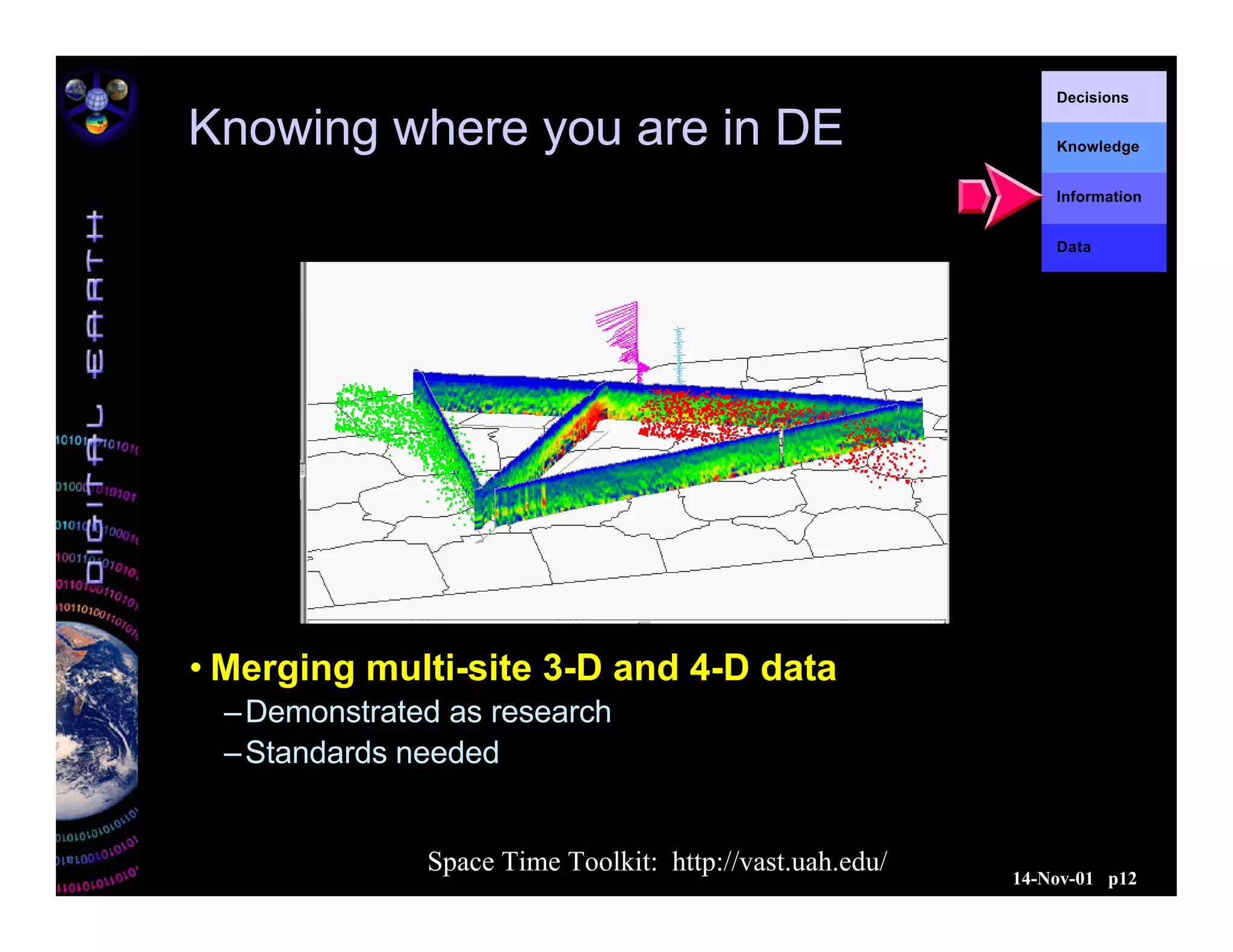

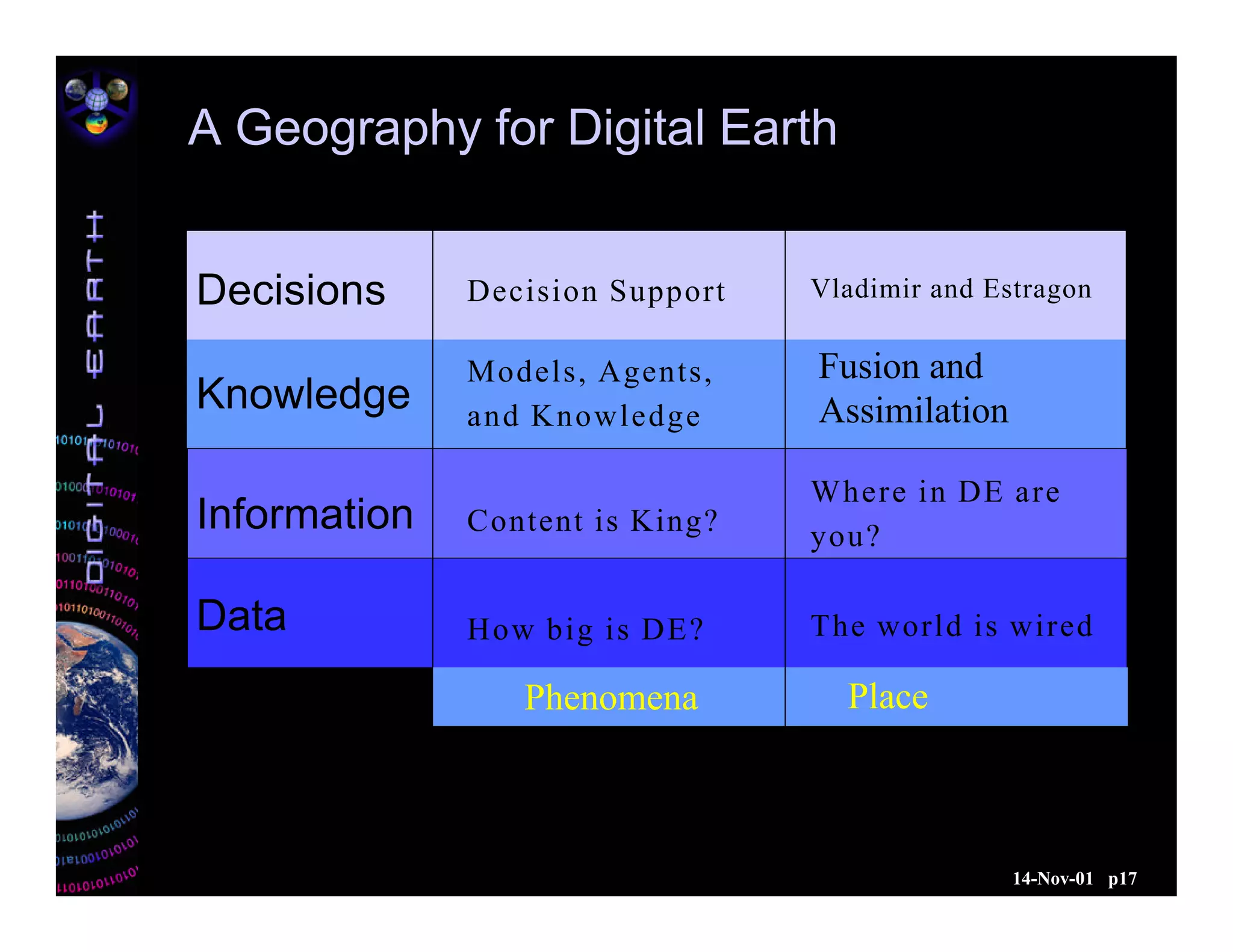

The document outlines the concept of Digital Earth, a virtual representation of the planet that integrates vast amounts of geographic data for exploration and interaction. It discusses the technological advances needed to make that data accessible, the challenges of managing it, and the importance of interoperability among data sources. It also emphasizes the role of decision support systems and the need for geographic references to support understanding of Earth-related phenomena and effective decision-making about them.