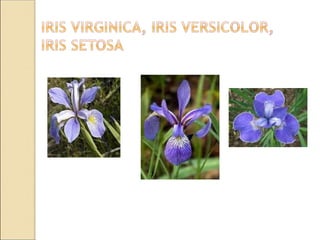

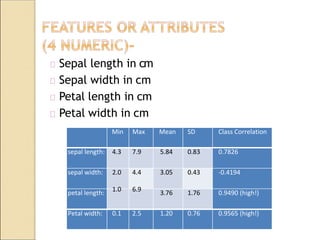

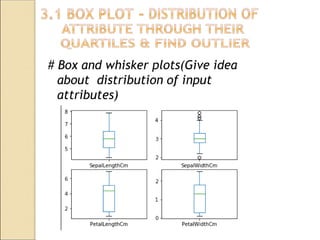

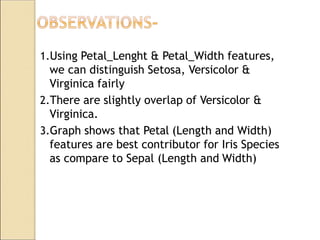

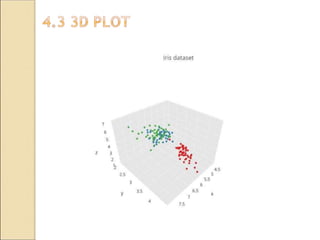

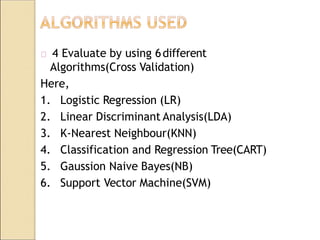

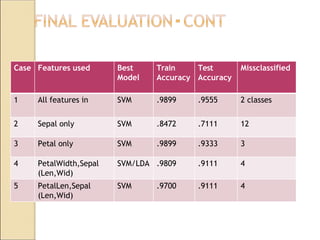

This presentation covers optimization with genetic algorithms, using the Iris flower dataset as a case study. It focuses on techniques that make genetic algorithms more effective on complex optimization and search problems. It explains how genetic algorithms are structured and applied, weighs their advantages and disadvantages, and evaluates performance across several classification algorithms. In the case study, iris flowers are classified by species from their physical features.
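The general structure of a genetic algorithm (a population, fitness-based selection, crossover, and mutation repeated over generations) can be sketched as follows. This is a minimal illustration, not the presentation's implementation: the bit-string encoding, the toy `one_max` fitness, tournament selection, elitism, and every function name and parameter value here are assumptions chosen for brevity. In an Iris setting the fitness would presumably be something like classification accuracy, but the slides' actual encoding and objective are not given here.

```python
import random


def one_max(bits):
    """Toy fitness (an assumption): number of 1-bits, higher is better."""
    return sum(bits)


def tournament(population, fitness, rng, k=3):
    """Selection: return the fittest of k randomly sampled individuals."""
    return max(rng.sample(population, k), key=fitness)


def crossover(a, b, rng):
    """Single-point crossover: prefix of a joined to suffix of b."""
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]


def mutate(bits, rng, rate):
    """Flip each bit independently with probability `rate`."""
    return [1 - b if rng.random() < rate else b for b in bits]


def run_ga(fitness=one_max, n_bits=20, pop_size=30, generations=60,
           mutation_rate=0.02, seed=0):
    """Evolve a population of bit strings and return the best one found."""
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(n_bits)]
                  for _ in range(pop_size)]
    best = max(population, key=fitness)
    for _ in range(generations):
        # Elitism: copy the current best into the next generation unchanged,
        # so the best fitness never decreases.
        next_gen = [best[:]]
        while len(next_gen) < pop_size:
            parent_a = tournament(population, fitness, rng)
            parent_b = tournament(population, fitness, rng)
            child = mutate(crossover(parent_a, parent_b, rng),
                           rng, mutation_rate)
            next_gen.append(child)
        population = next_gen
        best = max(population, key=fitness)
    return best


if __name__ == "__main__":
    best = run_ga()
    print(one_max(best), best)
```

Swapping `one_max` for a function that scores a candidate against labeled data would turn the same loop into a search over classifier parameters or feature subsets; the loop itself does not depend on what the bits represent.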
