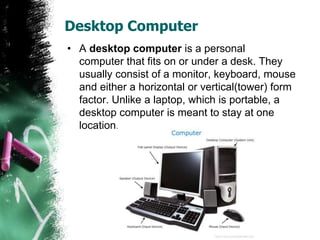

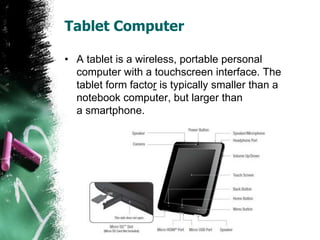

The document outlines the evolution of computers through five generations, each marked by a defining technology: vacuum tubes in the first, transistors in the second, integrated circuits in the third, very-large-scale integration (VLSI) in the fourth, and ultra-large-scale integration (ULSI) in the current fifth generation, which emphasizes artificial intelligence and powerful microprocessors. It also categorizes types of computers, including desktops, laptops, tablets, smartphones, mainframes, and supercomputers.