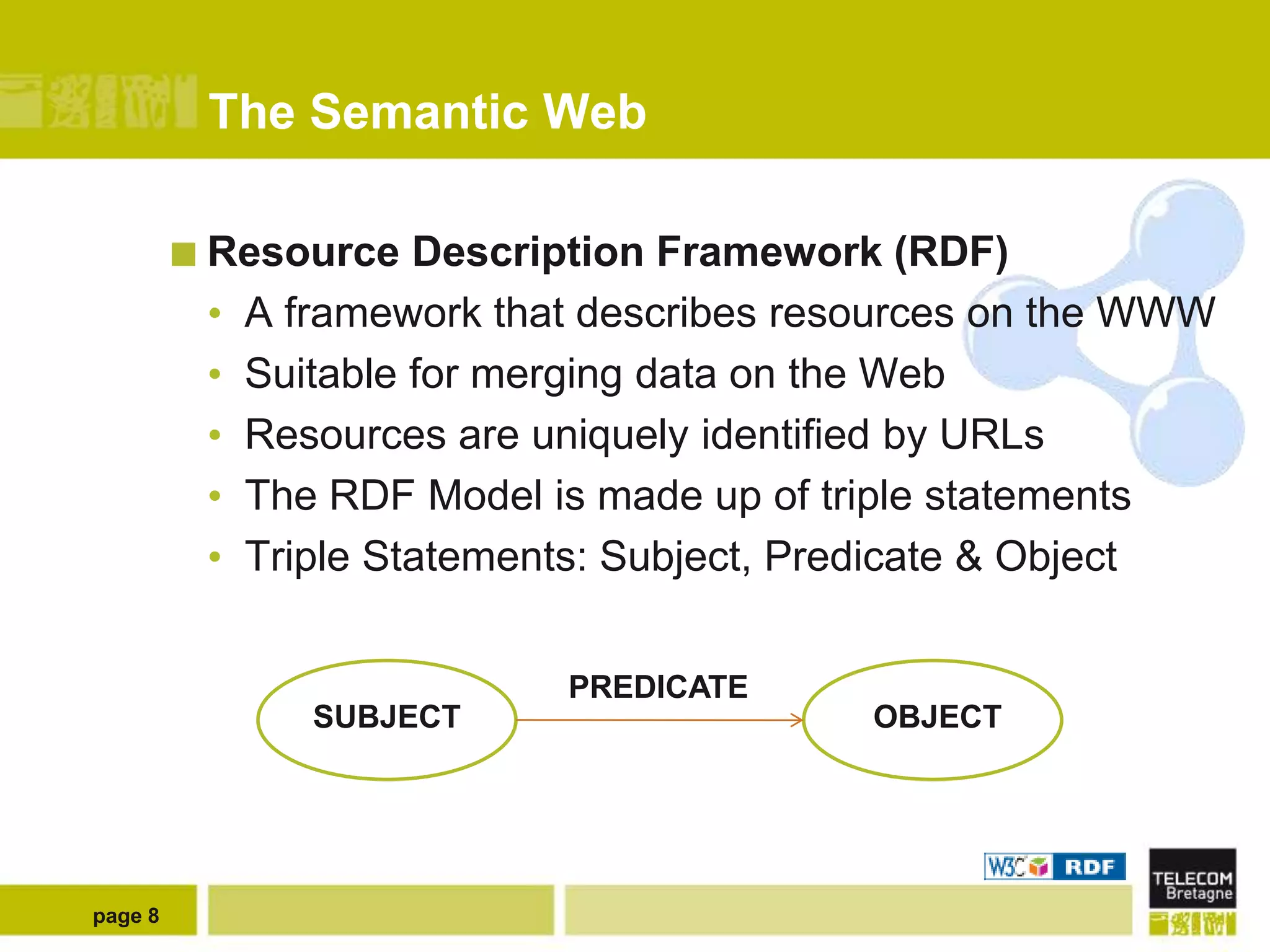

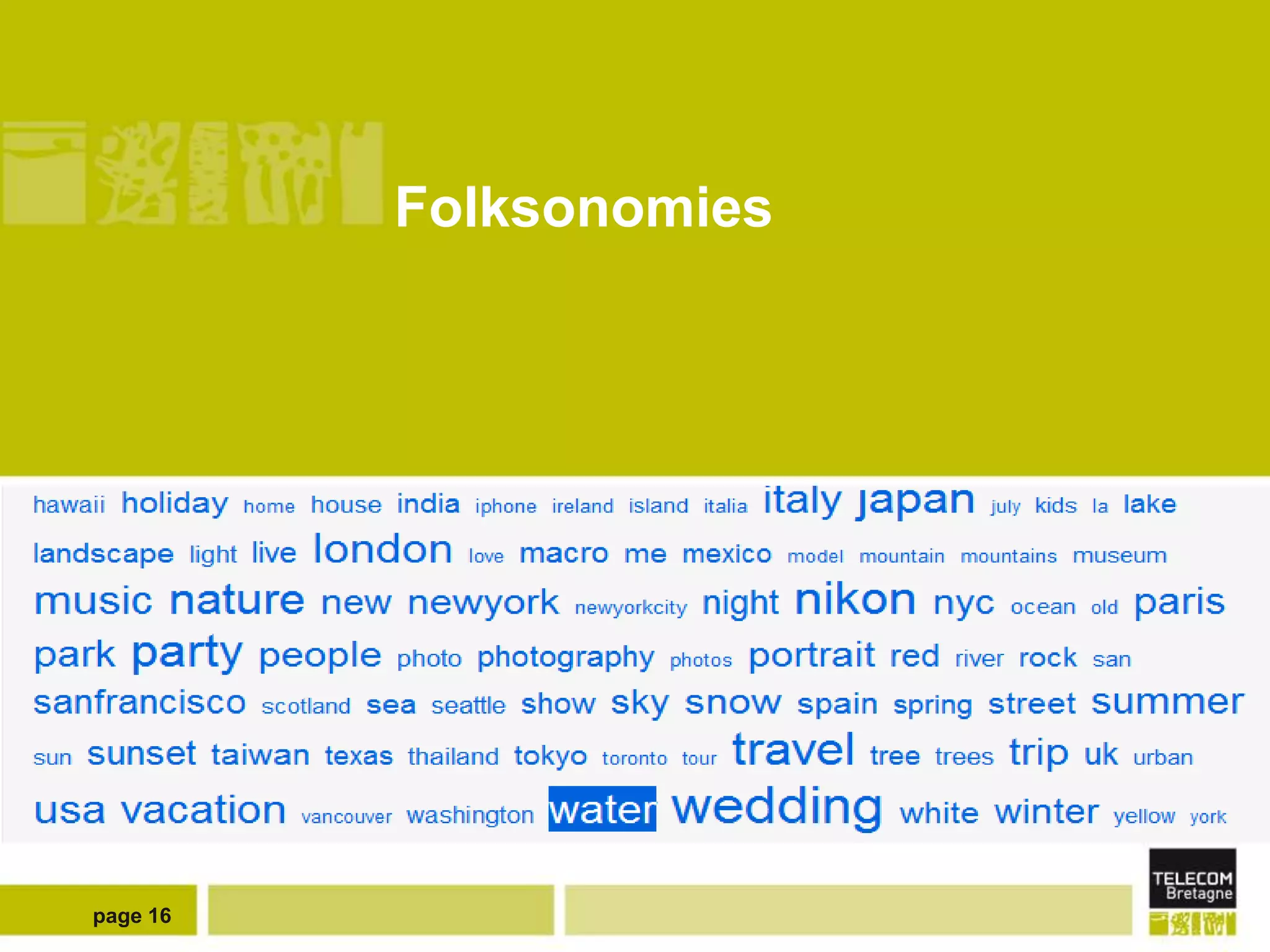

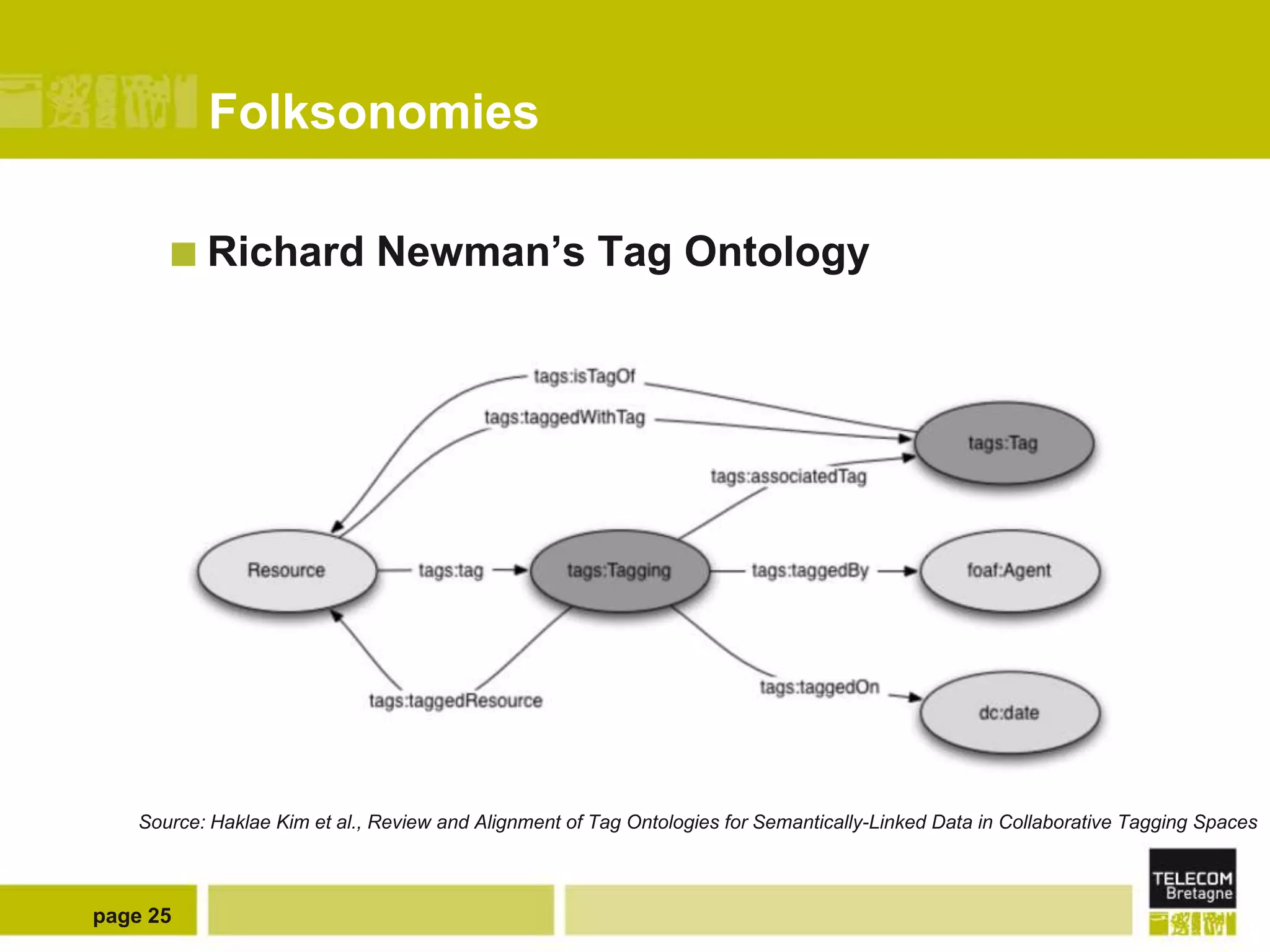

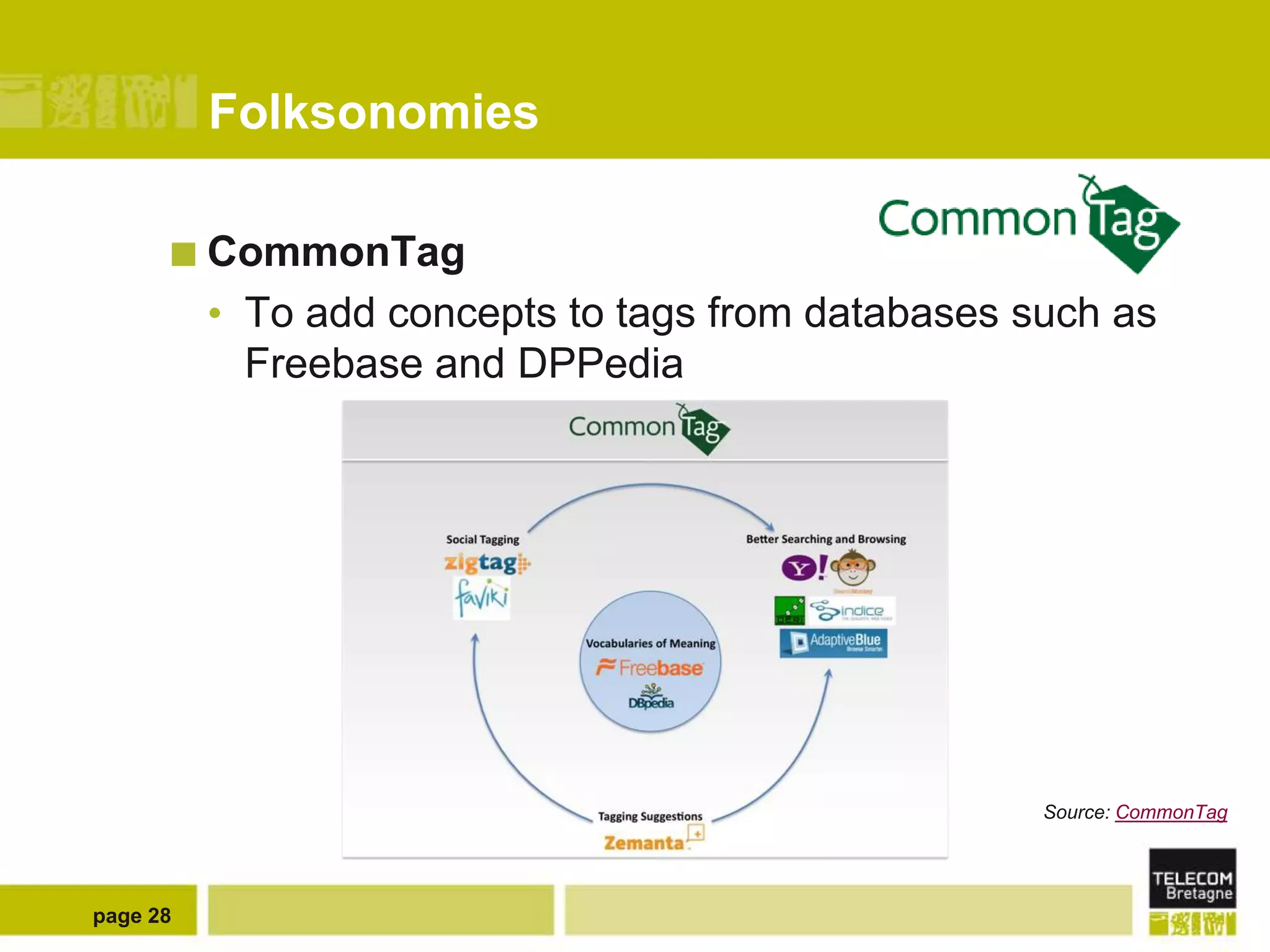

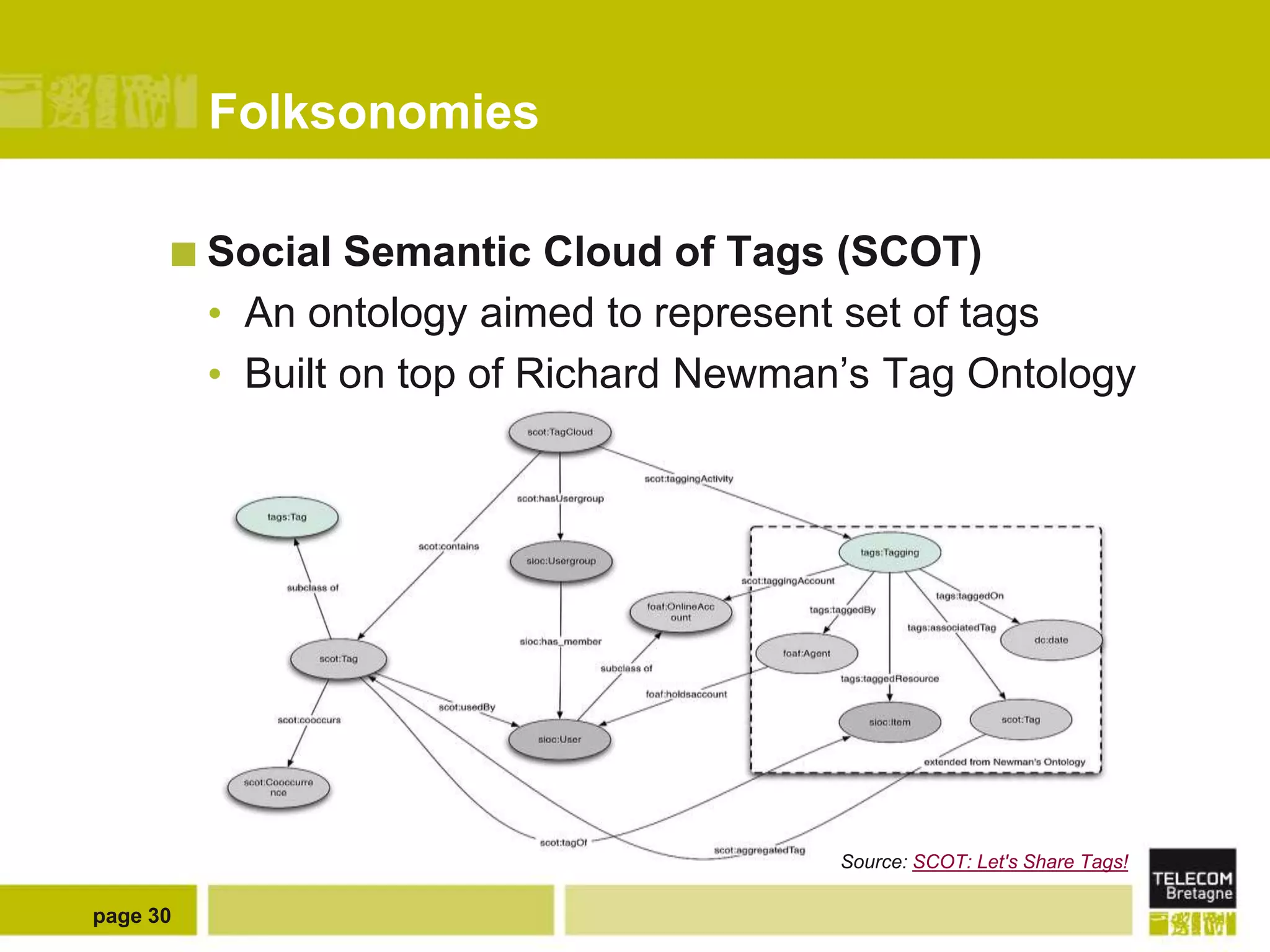

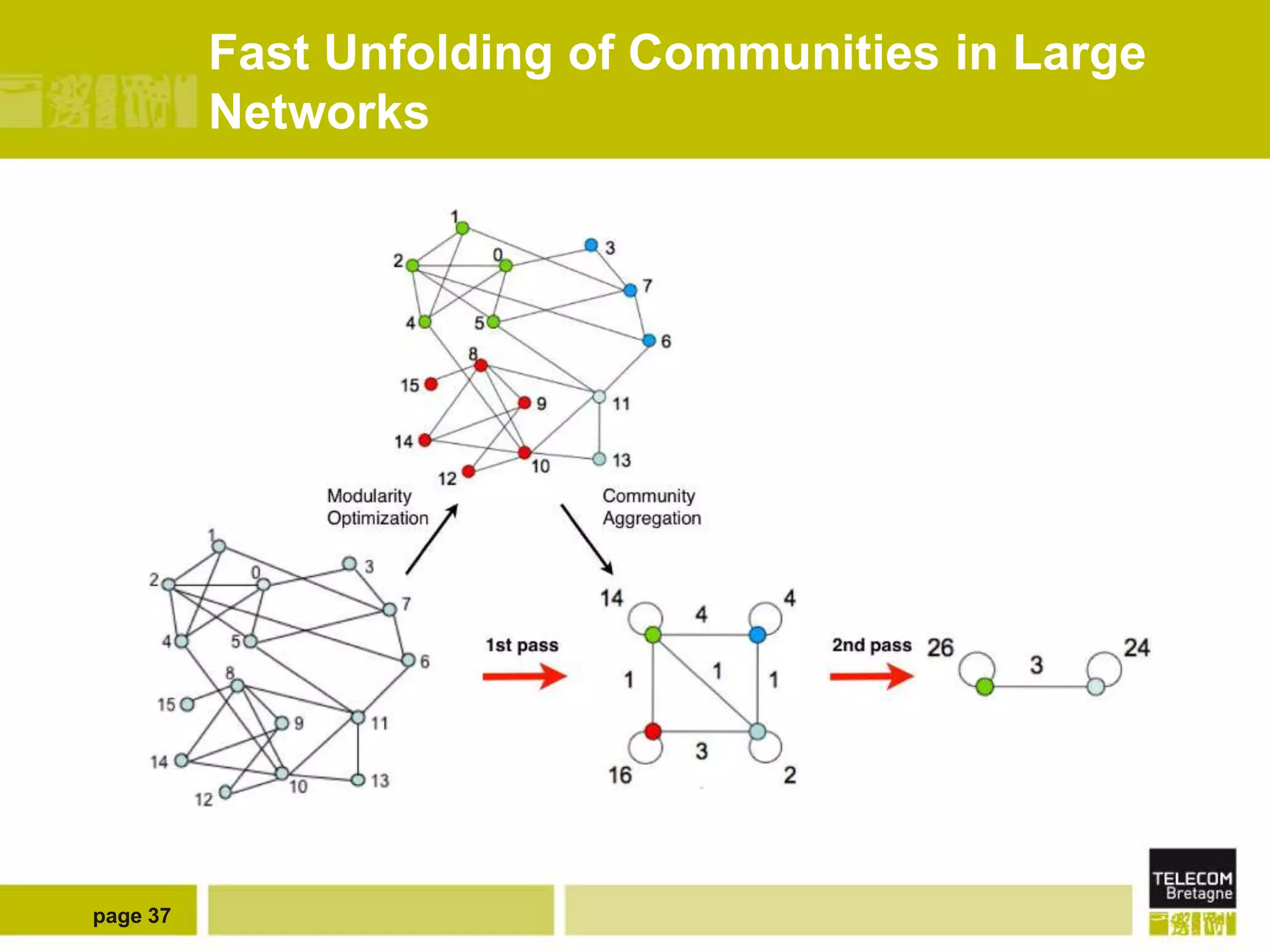

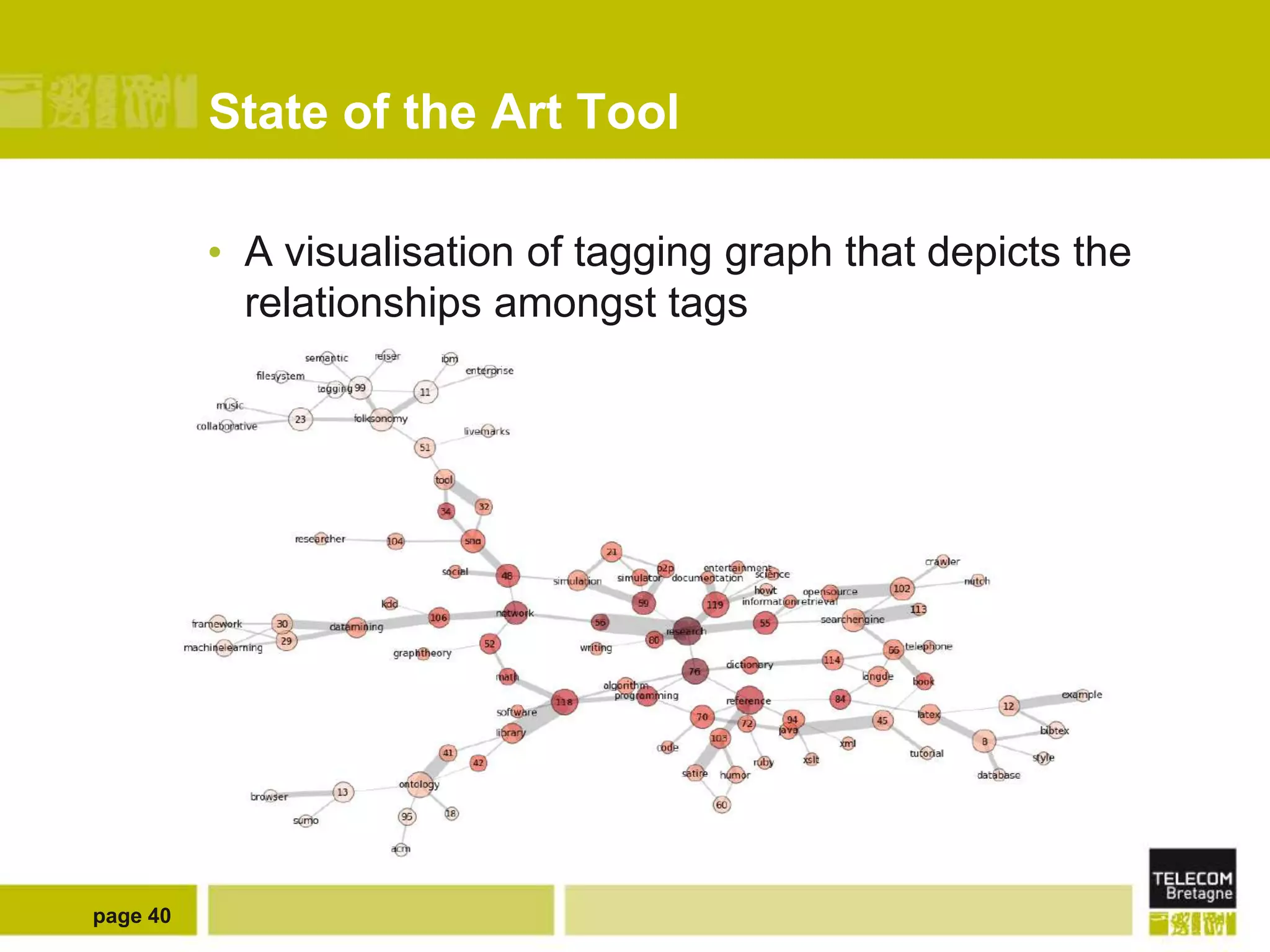

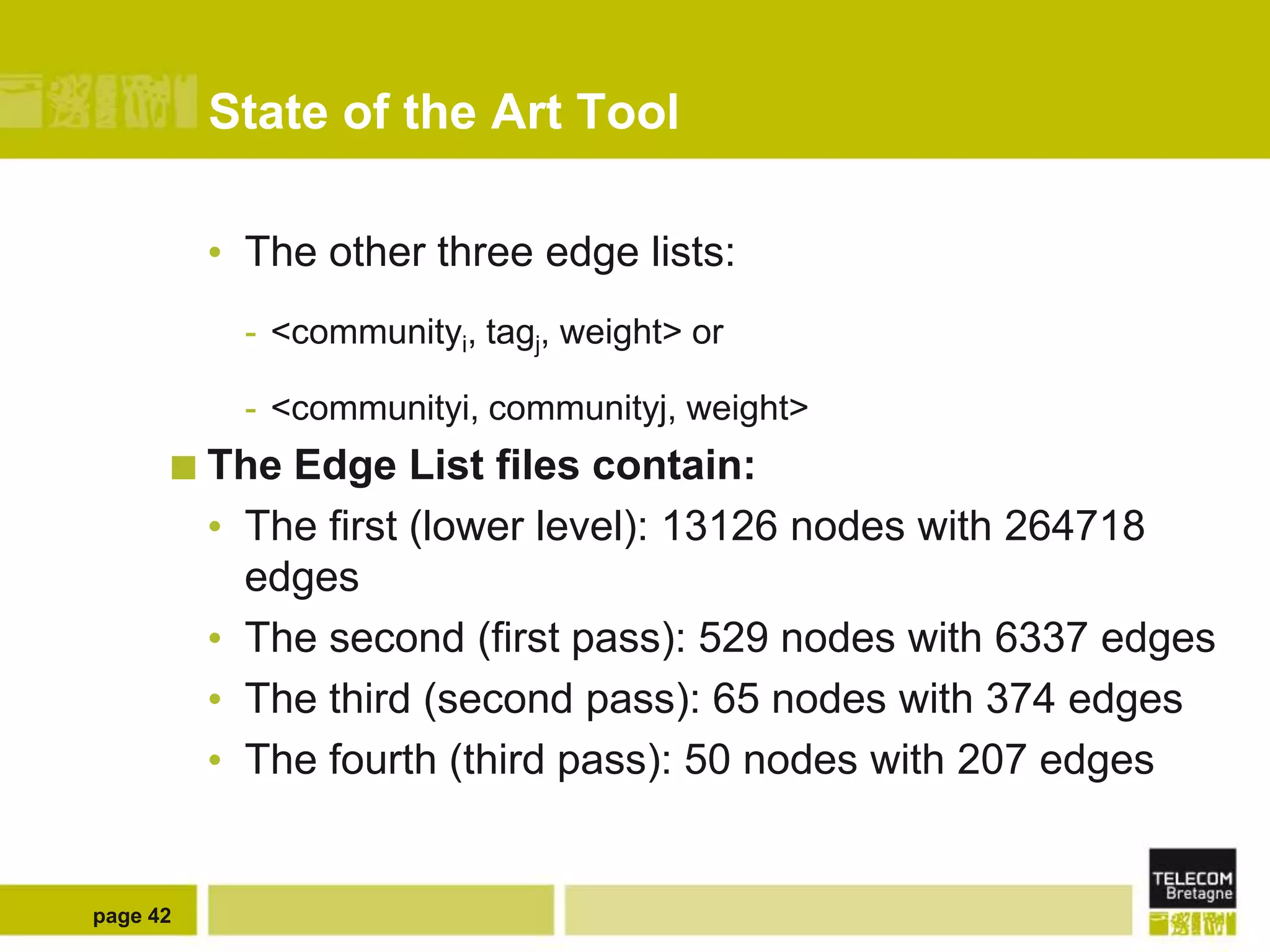

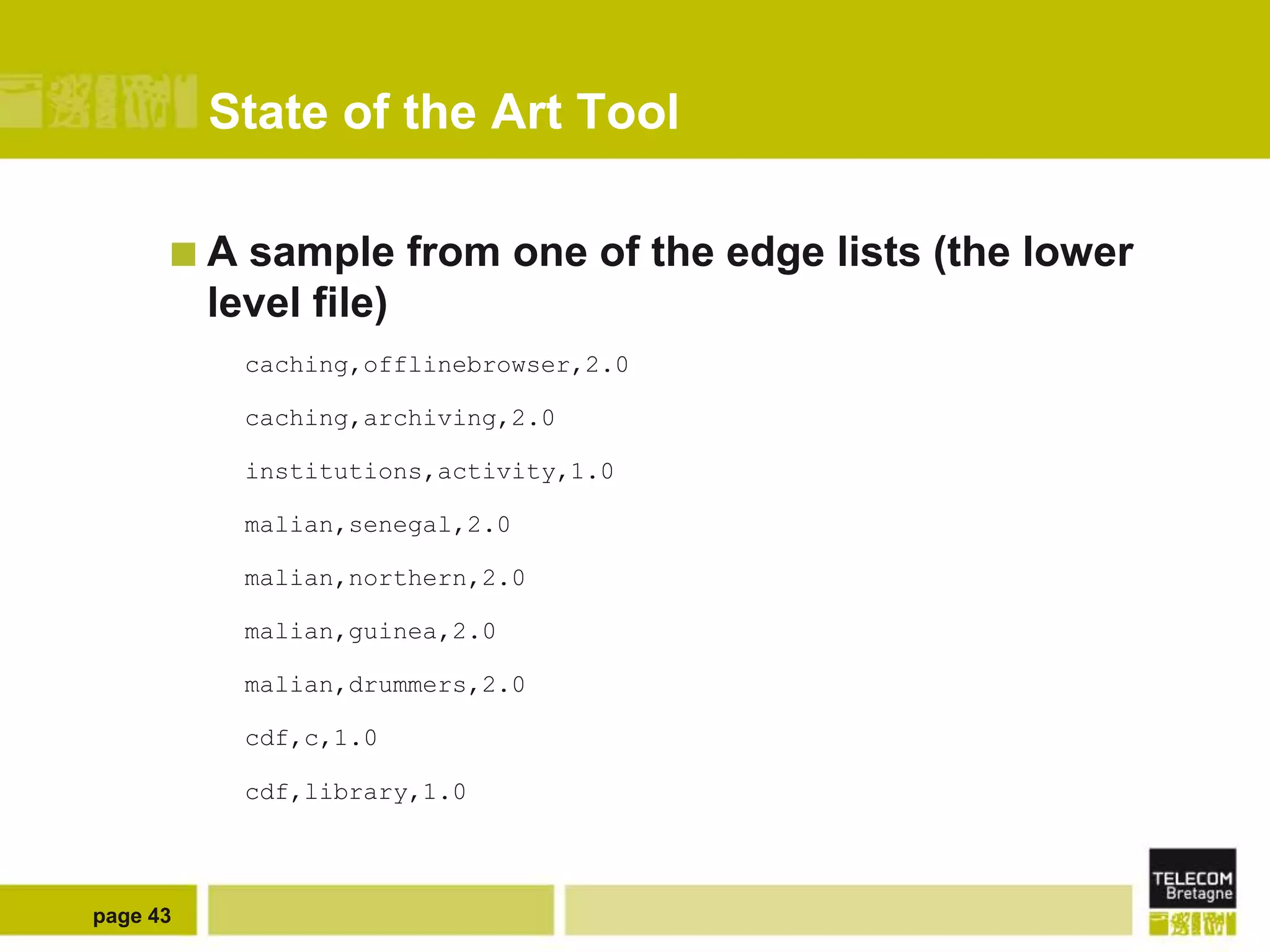

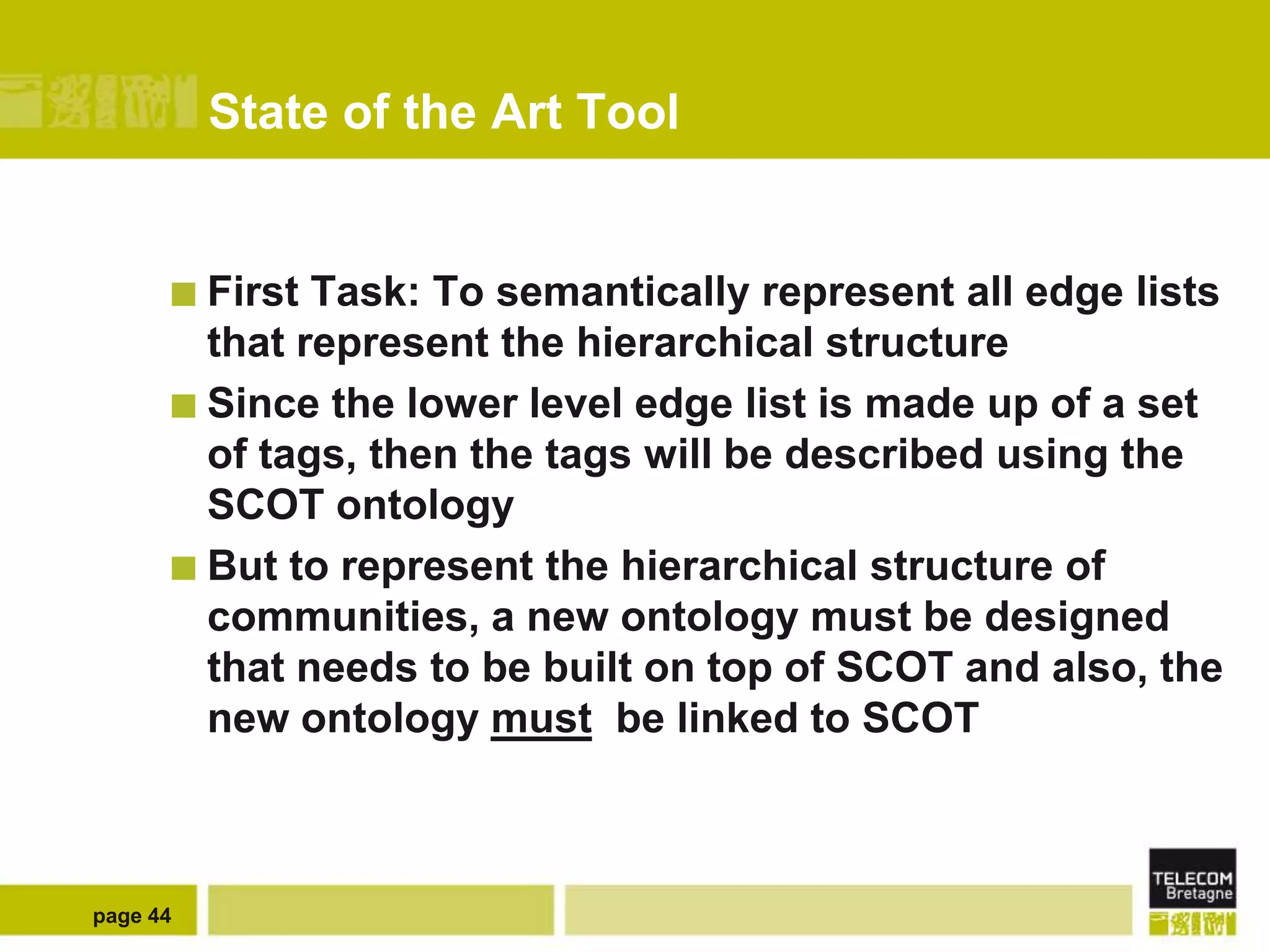

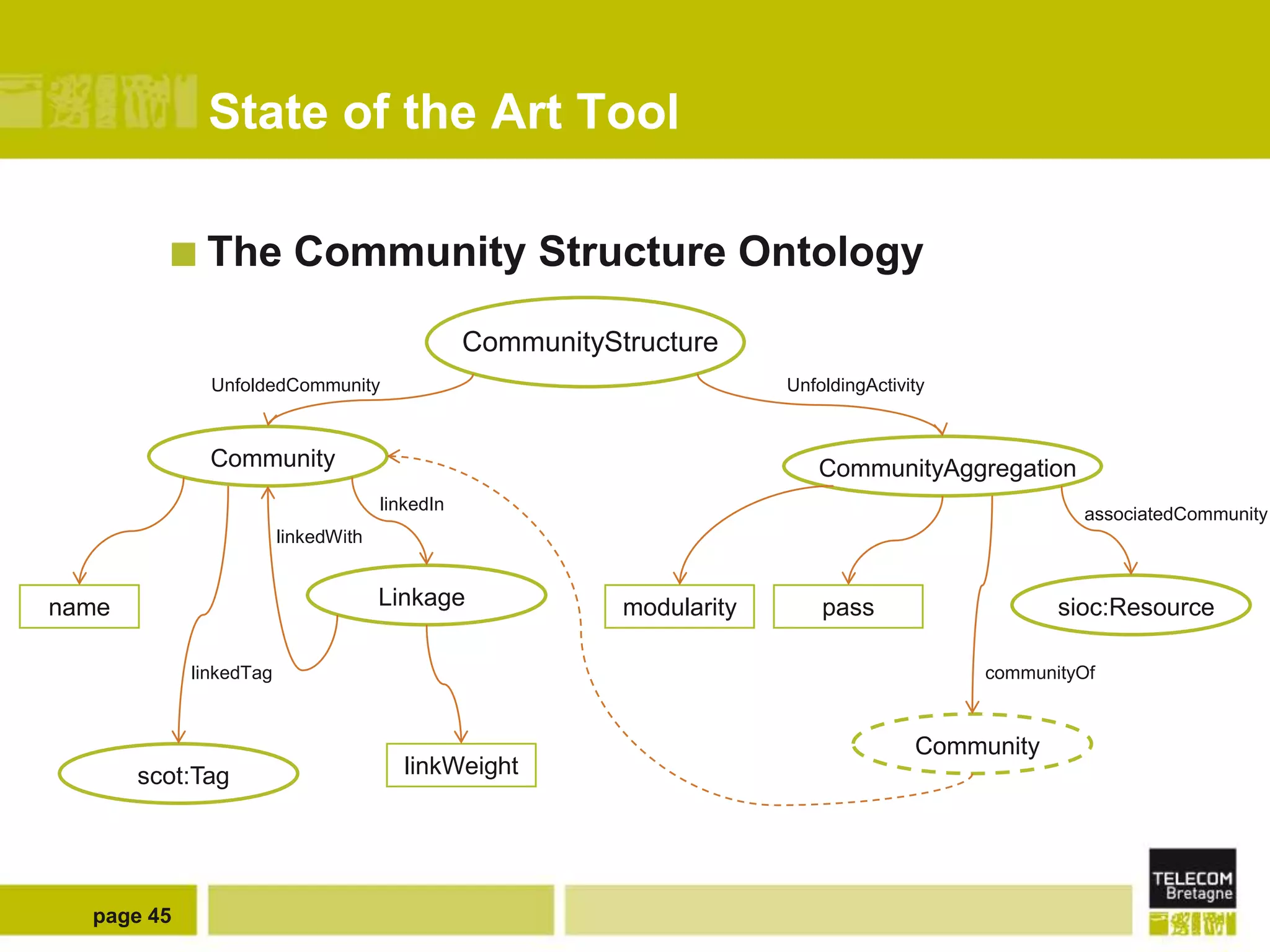

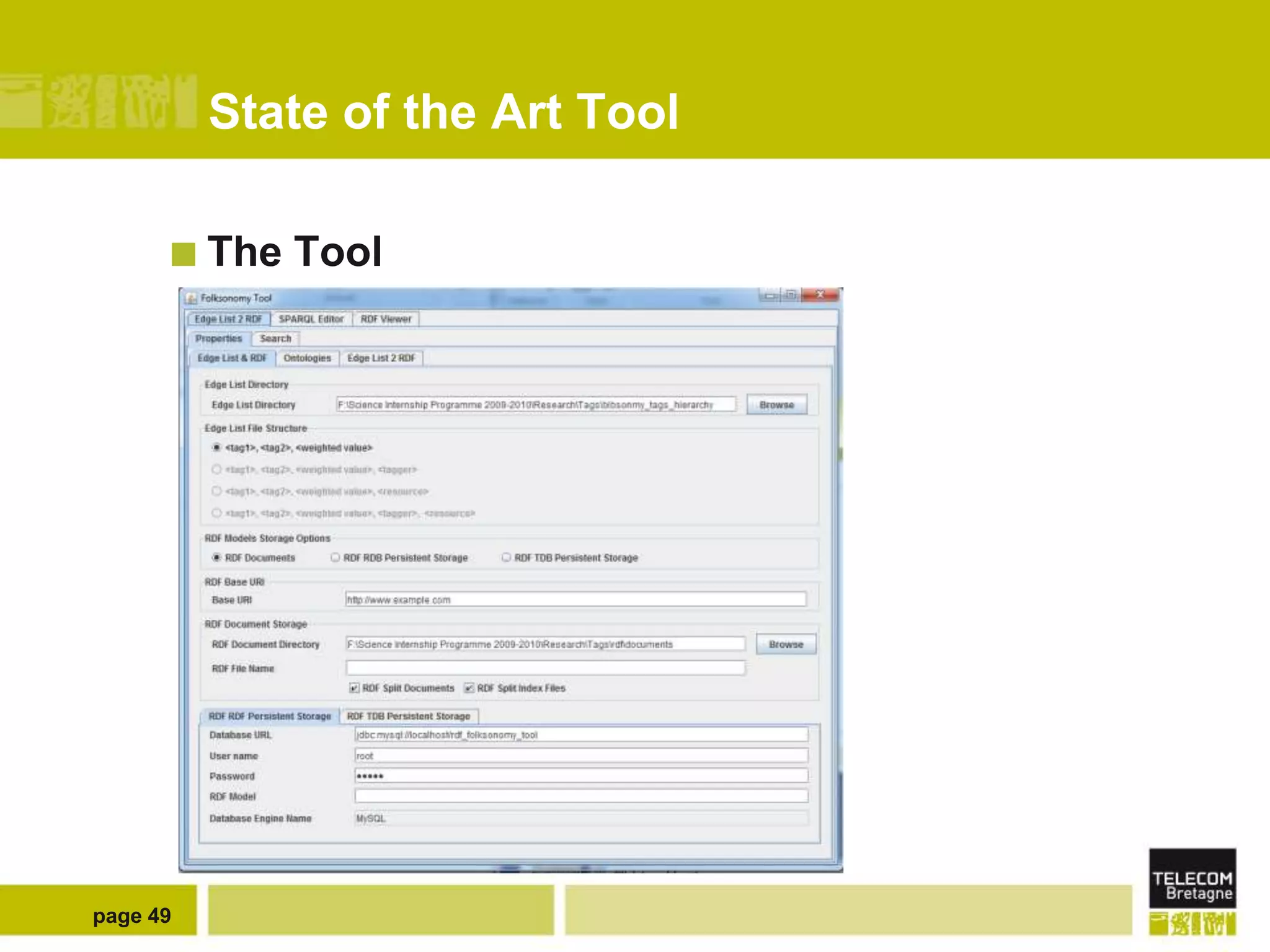

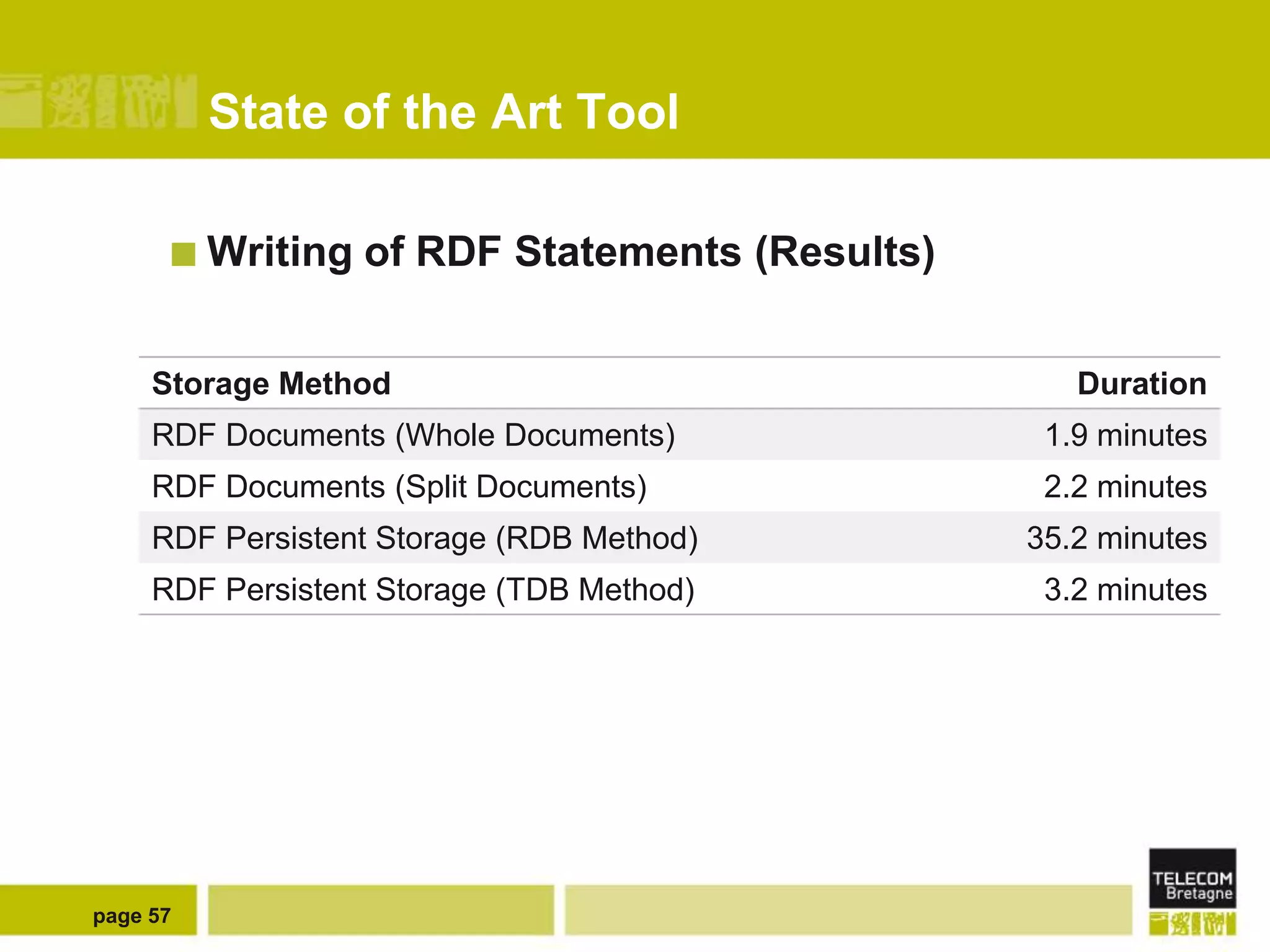

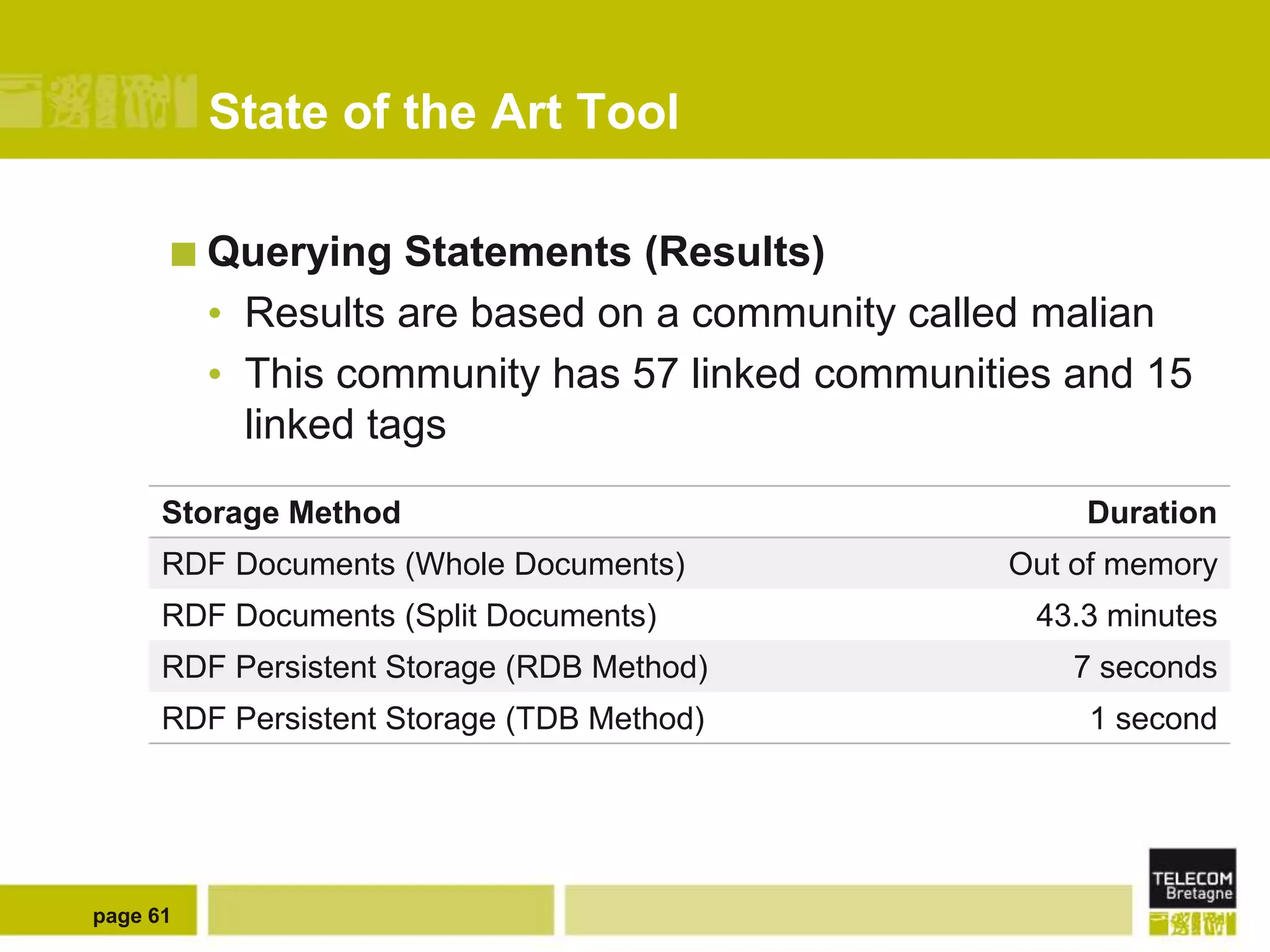

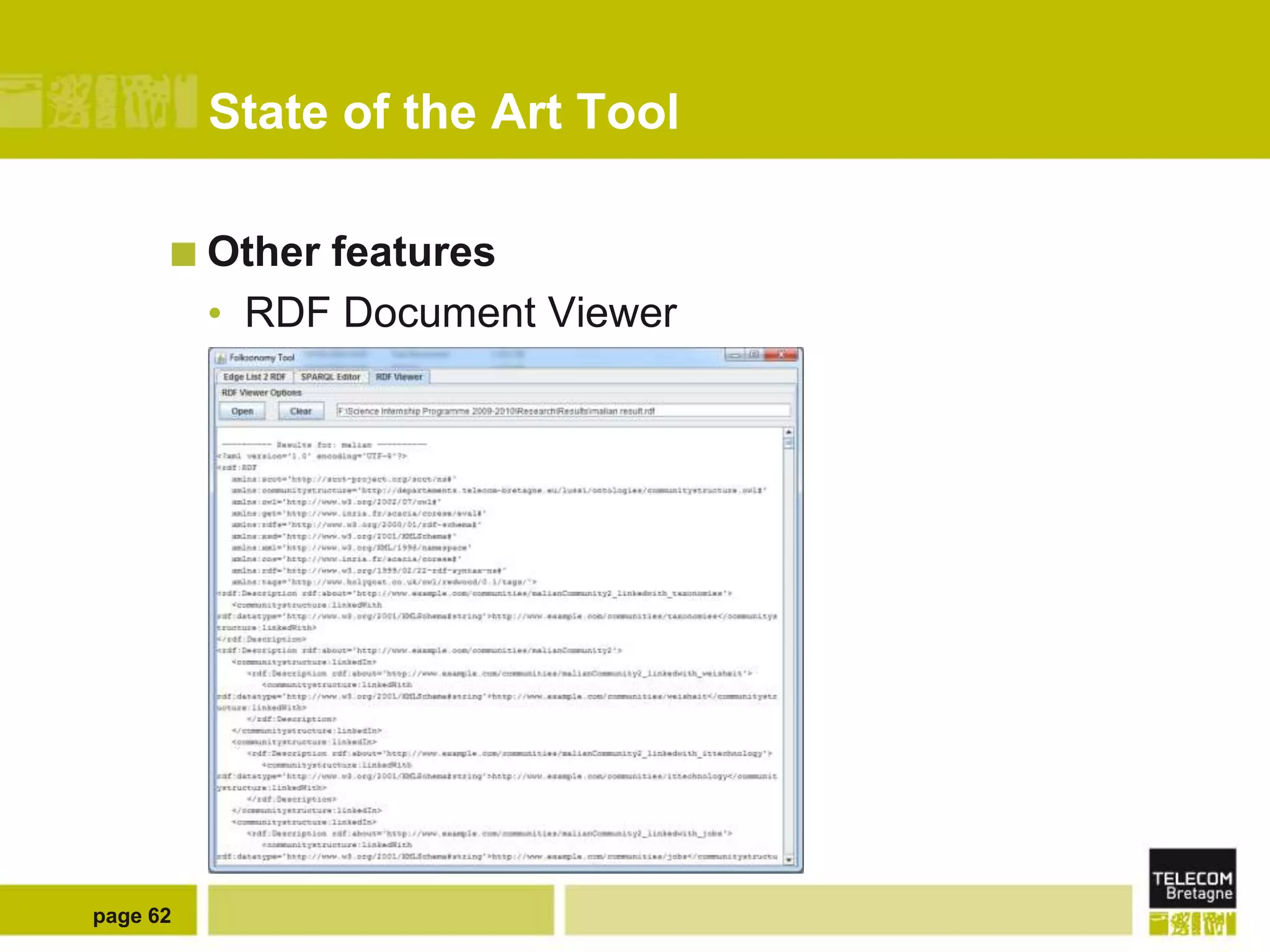

The document discusses how semantic web technologies can be used to represent and exploit folksonomies, focusing on integrating user-assigned tags with the semantic web. It outlines several methods for analyzing community structure and improving data representation, including the "fast unfolding of communities in large networks" algorithm (the Louvain method). Planned enhancements include applying the approach to larger tag models and optimizing how data is queried and written.
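The summary does not say how the community-detection step is wired to the tag data. The sketch below illustrates the first (local-moving) phase of the Louvain method on a tag co-occurrence graph. It rests on several assumptions. Two tags are linked when they annotate the same post, and the edge weight counts the shared posts. The function names `build_graph`, `local_moving` and `modularity` are hypothetical. The second (aggregation) phase of the algorithm is omitted. It is an illustration of the technique, not the document's implementation.

```python
from collections import defaultdict


def build_graph(posts):
    """Weighted, undirected tag co-occurrence graph (hypothetical helper).

    Assumption: tags are linked when they annotate the same post; the edge
    weight counts how many posts the two tags share.
    """
    adj = defaultdict(lambda: defaultdict(float))
    for tags in posts:
        ordered = sorted(set(tags))  # sorted so node order is deterministic
        for i, a in enumerate(ordered):
            for b in ordered[i + 1:]:
                adj[a][b] += 1.0
                adj[b][a] += 1.0
    return adj


def modularity(adj, comm):
    """Newman modularity Q of the partition `comm` (node -> community label)."""
    two_m = sum(w for u in adj for w in adj[u].values())
    internal = defaultdict(float)  # sum of A_ij over ordered pairs inside a community
    total = defaultdict(float)     # sum of node degrees per community
    for u in adj:
        total[comm[u]] += sum(adj[u].values())
        for v, w in adj[u].items():
            if comm[u] == comm[v]:
                internal[comm[u]] += w
    return sum(internal[c] / two_m - (total[c] / two_m) ** 2 for c in total)


def local_moving(adj):
    """First (local-moving) phase of the Louvain method; aggregation omitted.

    Every node starts in its own community and is repeatedly moved to the
    neighbouring community with the largest modularity gain until no single
    move improves Q.
    """
    comm = {u: u for u in adj}
    degree = {u: sum(adj[u].values()) for u in adj}
    two_m = sum(degree.values())
    tot = dict(degree)  # tot[c]: sum of degrees of the nodes currently in c
    improved = True
    while improved:
        improved = False
        for u in adj:
            cu = comm[u]
            links = defaultdict(float)  # edge weight from u into each community
            for v, w in adj[u].items():
                links[comm[v]] += w
            tot[cu] -= degree[u]  # take u out of its current community
            # Gain of inserting u into c, up to the positive factor 1/m:
            # k_u,in(c) - tot[c] * k_u / (2m)
            best = cu
            best_gain = links.get(cu, 0.0) - tot[cu] * degree[u] / two_m
            for c, k_in in links.items():
                gain = k_in - tot[c] * degree[u] / two_m
                if gain > best_gain + 1e-12:
                    best, best_gain = c, gain
            tot[best] += degree[u]
            if best != cu:
                comm[u] = best
                improved = True
    return comm


# Toy folksonomy (invented data): two tag clusters joined by one bridging post.
posts = [
    {"rdf", "owl", "sparql"},
    {"numpy", "pandas", "python"},
    {"sparql", "python"},
]
adj = build_graph(posts)
comm = local_moving(adj)
print(comm)
print(round(modularity(adj, comm), 4))
```

On this toy input the single bridging post is not enough to merge the clusters. The semantic web tags and the Python tags end up in separate communities. A full Louvain run would next collapse each community into a single node and repeat the local-moving phase on that smaller graph.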