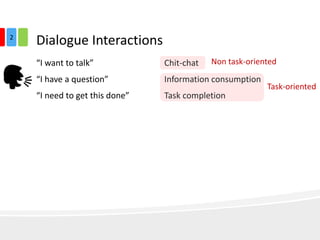

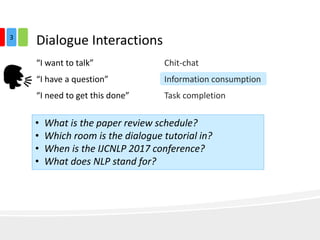

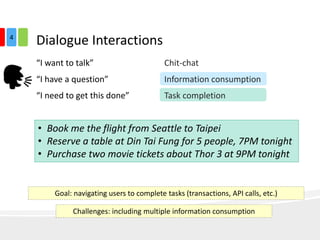

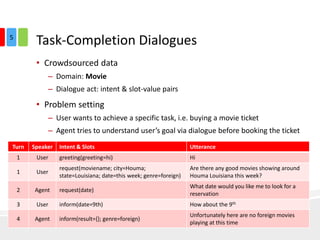

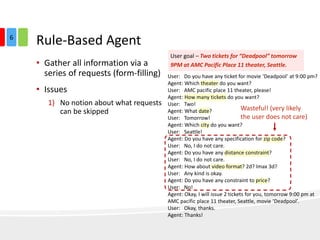

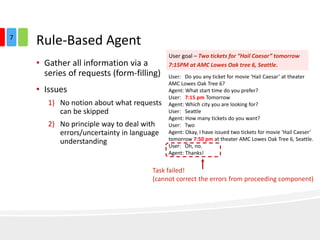

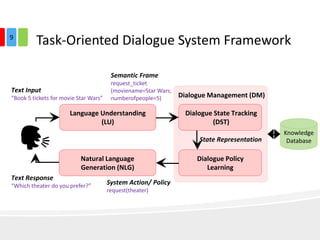

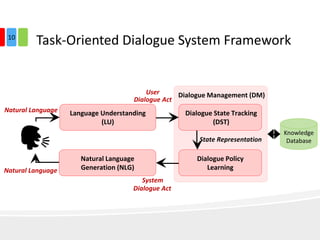

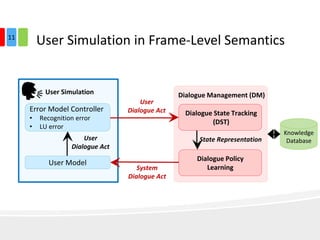

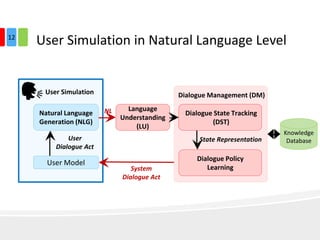

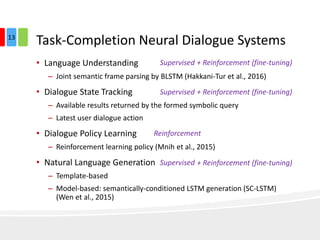

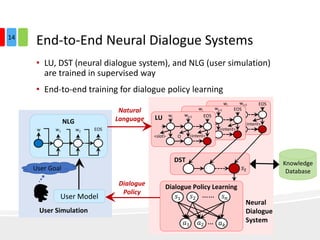

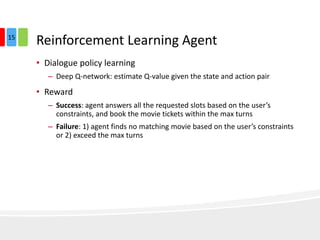

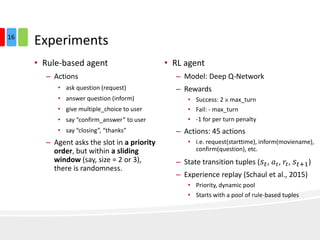

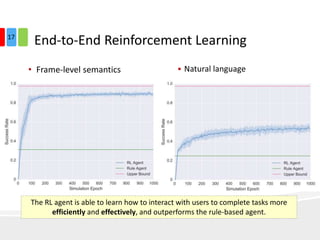

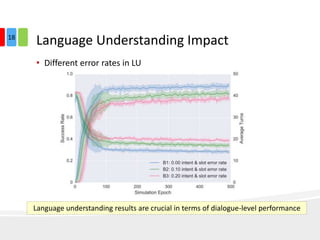

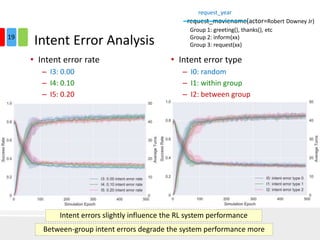

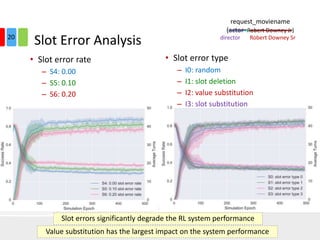

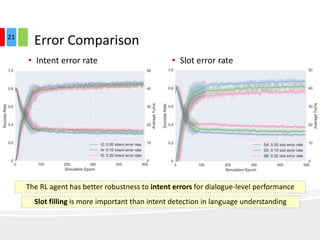

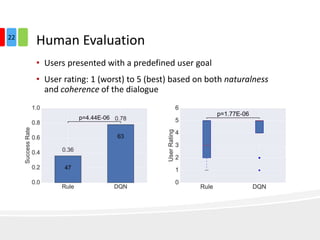

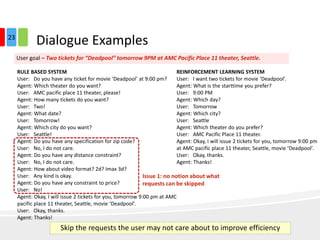

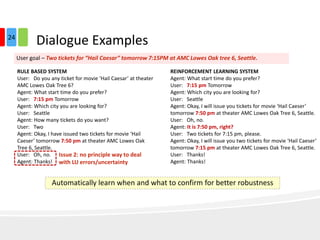

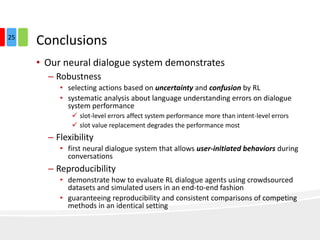

The document discusses end-to-end task-completion neural dialogue systems, highlighting their ability to manage dialogue interactions for various user intents, such as completing tasks or providing information. It compares rule-based agents with reinforcement learning agents, noting that the latter handle user requests and errors more efficiently and robustly. The authors emphasize that addressing slot-level errors is more important than addressing intent-level errors, and they propose a systematic approach to evaluating dialogue agents using crowdsourced data.