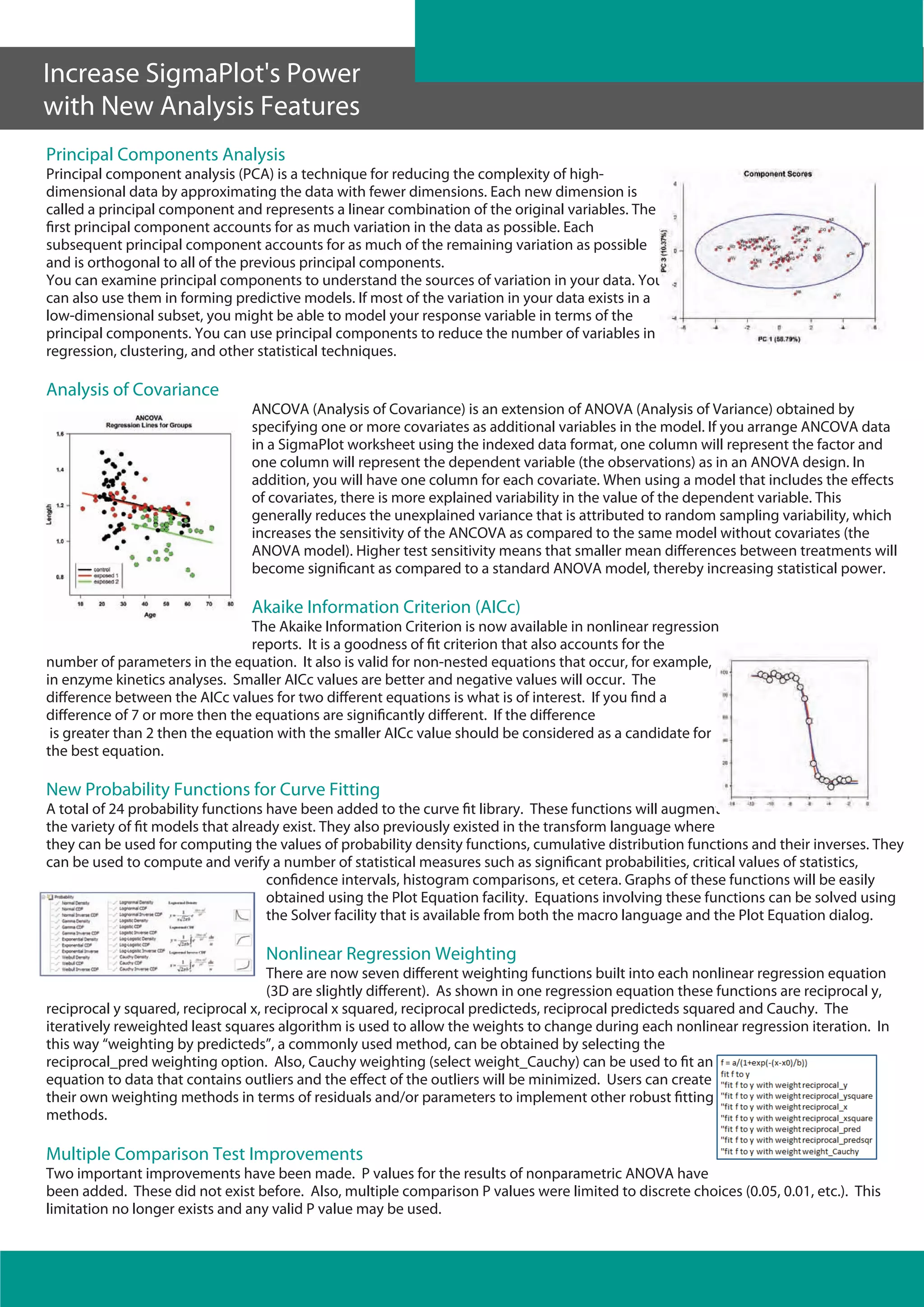

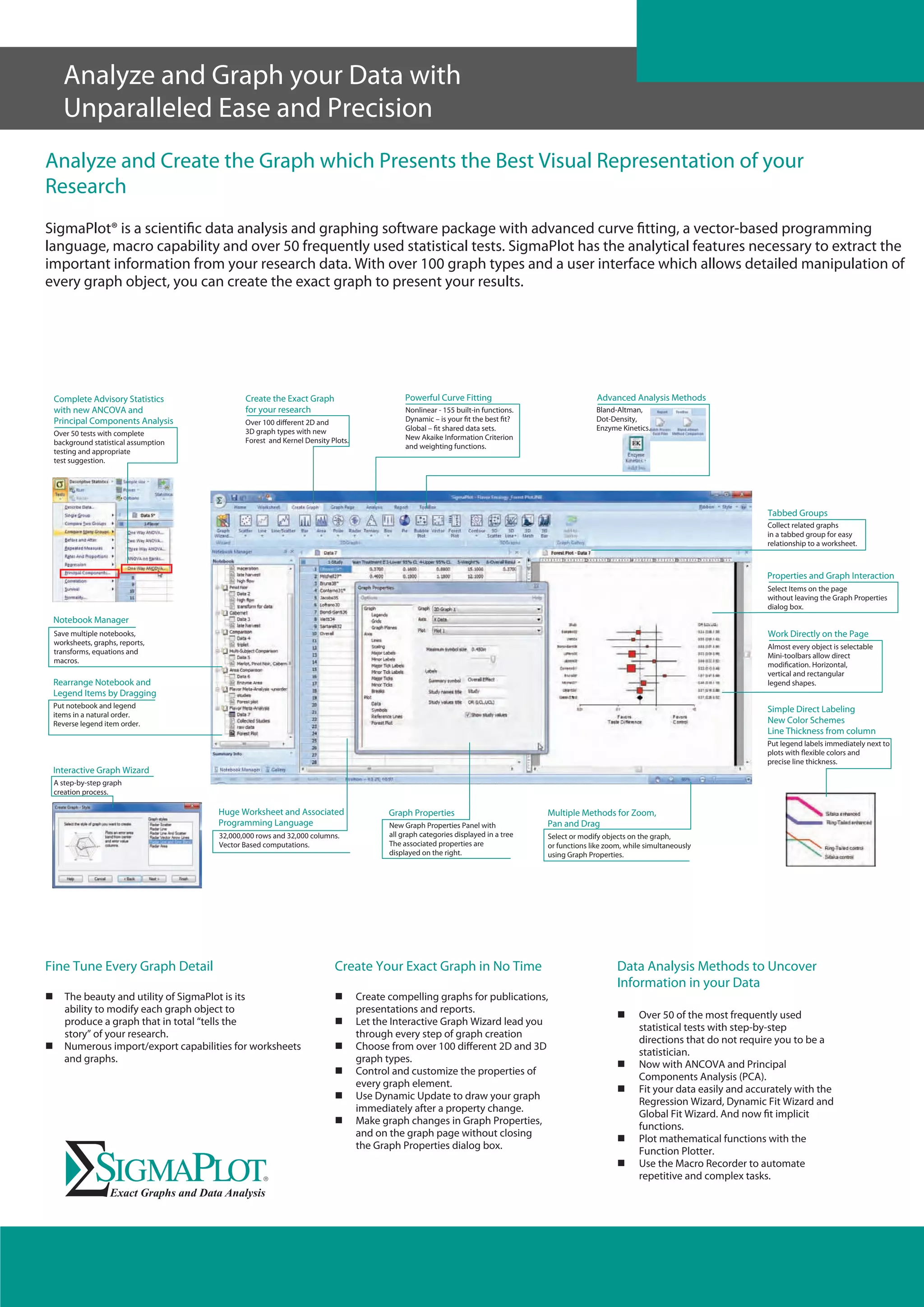

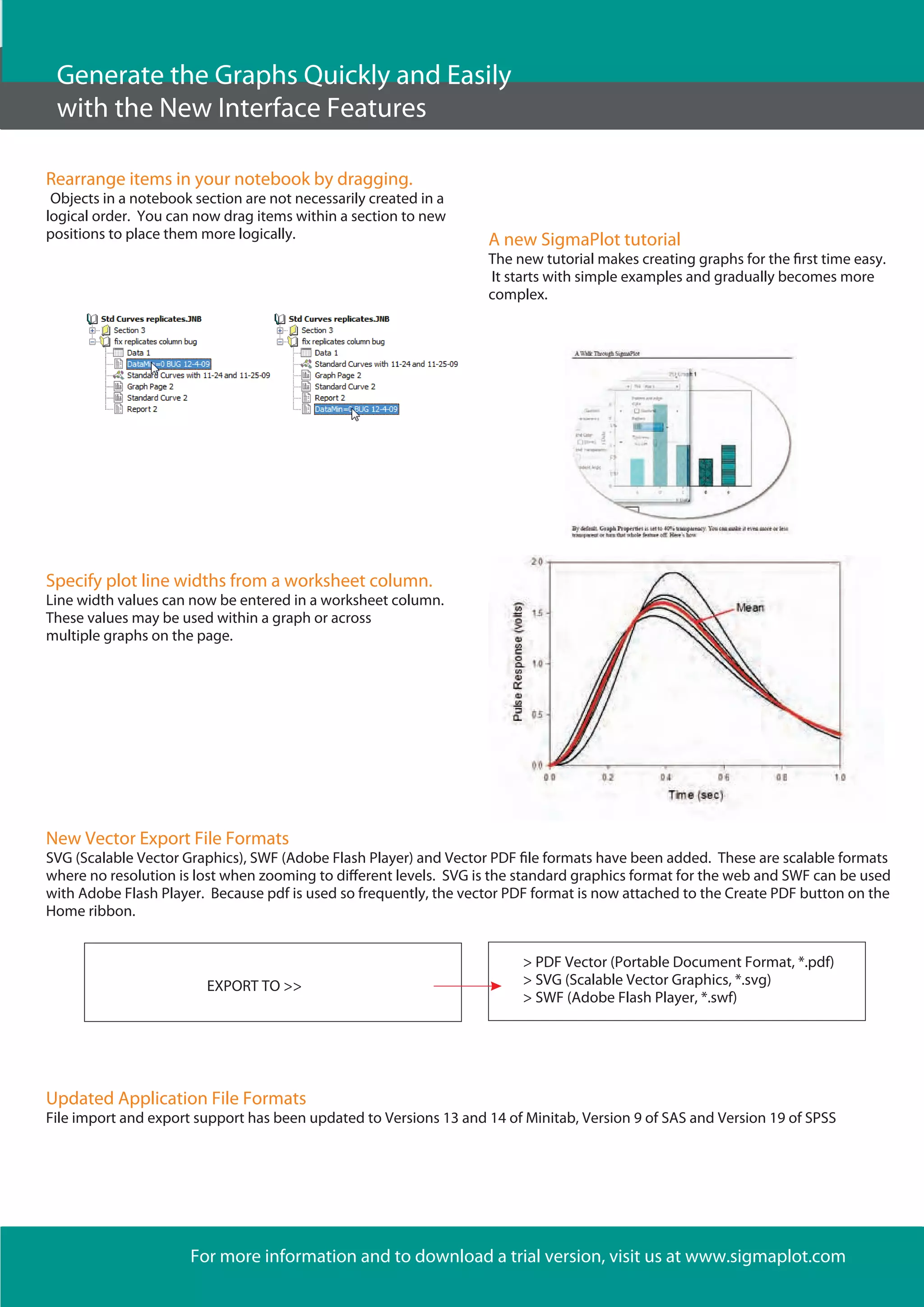

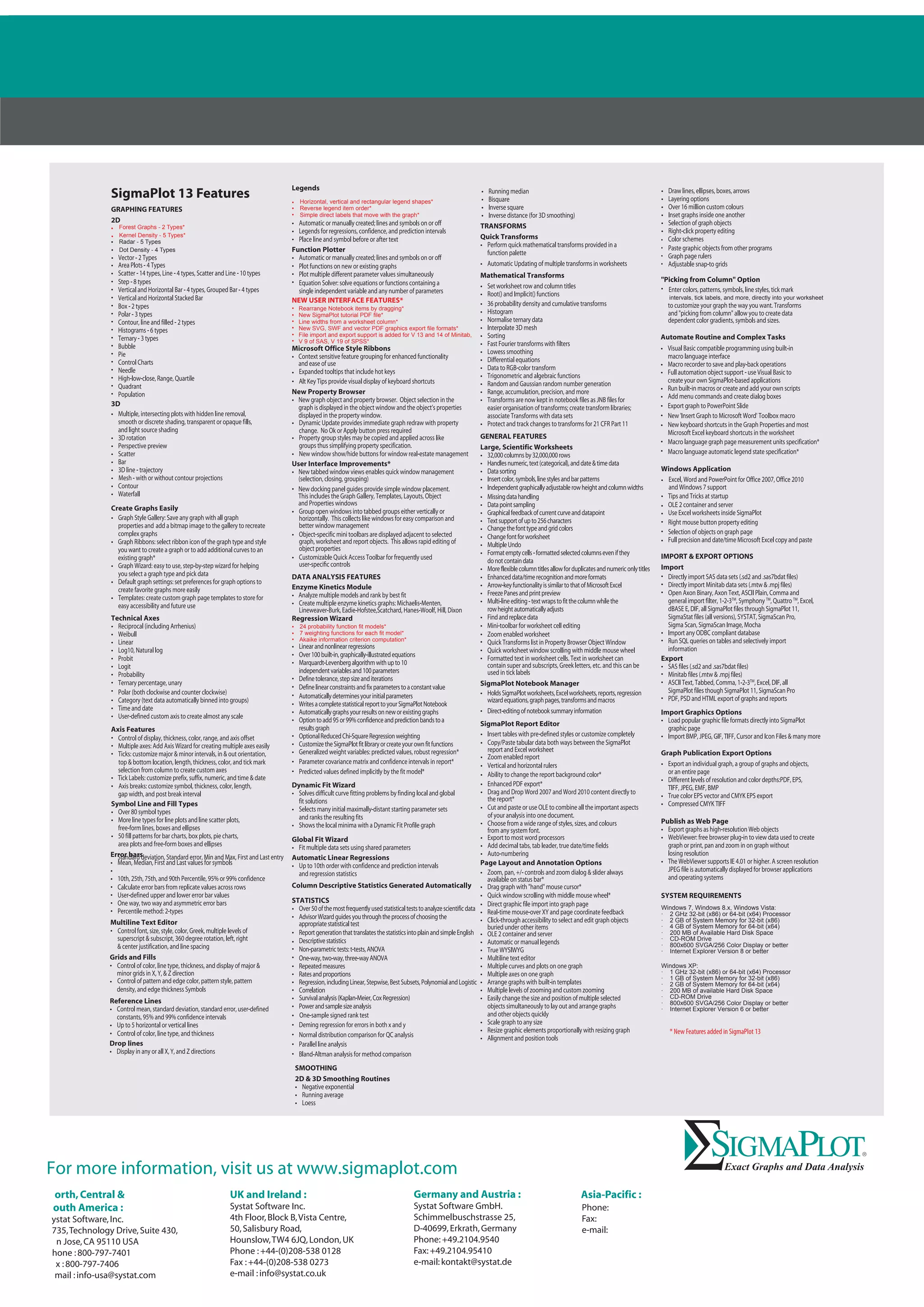

This document summarizes the new features in the latest version of SigmaPlot, a statistical analysis and graphing package. On the graphing side, the release adds new graph types such as forest plots and kernel density plots, more options for customizing legends, the ability to set line widths from data columns, and additional file export options. On the analysis side, it adds more statistical models for curve fitting, new weighting functions, and an improved Akaike information criterion for model selection.
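
To make the model-selection idea concrete, here is a minimal sketch of how an Akaike information criterion can rank competing curve fits. It is not SigmaPlot's implementation: the summary does not say which variant the software uses, so the sketch assumes the common least-squares form with Gaussian errors, AIC = n ln(RSS/n) + 2k, plus the small-sample correction usually written AICc. The function names, the parameter-counting convention for `k`, and the example numbers are all hypothetical.

```python
import math


def aic_least_squares(rss, n, k):
    """AIC for a least-squares fit, up to an additive constant.

    rss: residual sum of squares of the fit
    n:   number of data points
    k:   number of fitted parameters (assumed convention; some texts
         also count the error variance, which shifts every score equally
         only when all models use the same convention)
    """
    return n * math.log(rss / n) + 2 * k


def aicc_least_squares(rss, n, k):
    """Small-sample corrected AIC (AICc); requires n > k + 1."""
    if n - k - 1 <= 0:
        raise ValueError("AICc needs more data points than parameters + 1")
    return aic_least_squares(rss, n, k) + 2 * k * (k + 1) / (n - k - 1)


def select_model(candidates, n):
    """Return the candidate with the lowest AICc, plus all scores.

    candidates: dict mapping a model name to a (rss, k) tuple
    """
    scores = {
        name: aicc_least_squares(rss, n, k)
        for name, (rss, k) in candidates.items()
    }
    best = min(scores, key=scores.get)
    return best, scores


# Illustrative, made-up fit results for 20 data points.
candidates = {"linear": (12.0, 2), "cubic": (11.5, 4)}
best, scores = select_model(candidates, n=20)
print(best, scores)
```

In this made-up example the cubic model has a slightly smaller residual sum of squares, but the penalty for its two extra parameters outweighs that gain, so the linear model gets the lower score. Only differences between scores computed on the same data are meaningful; the absolute values are not.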