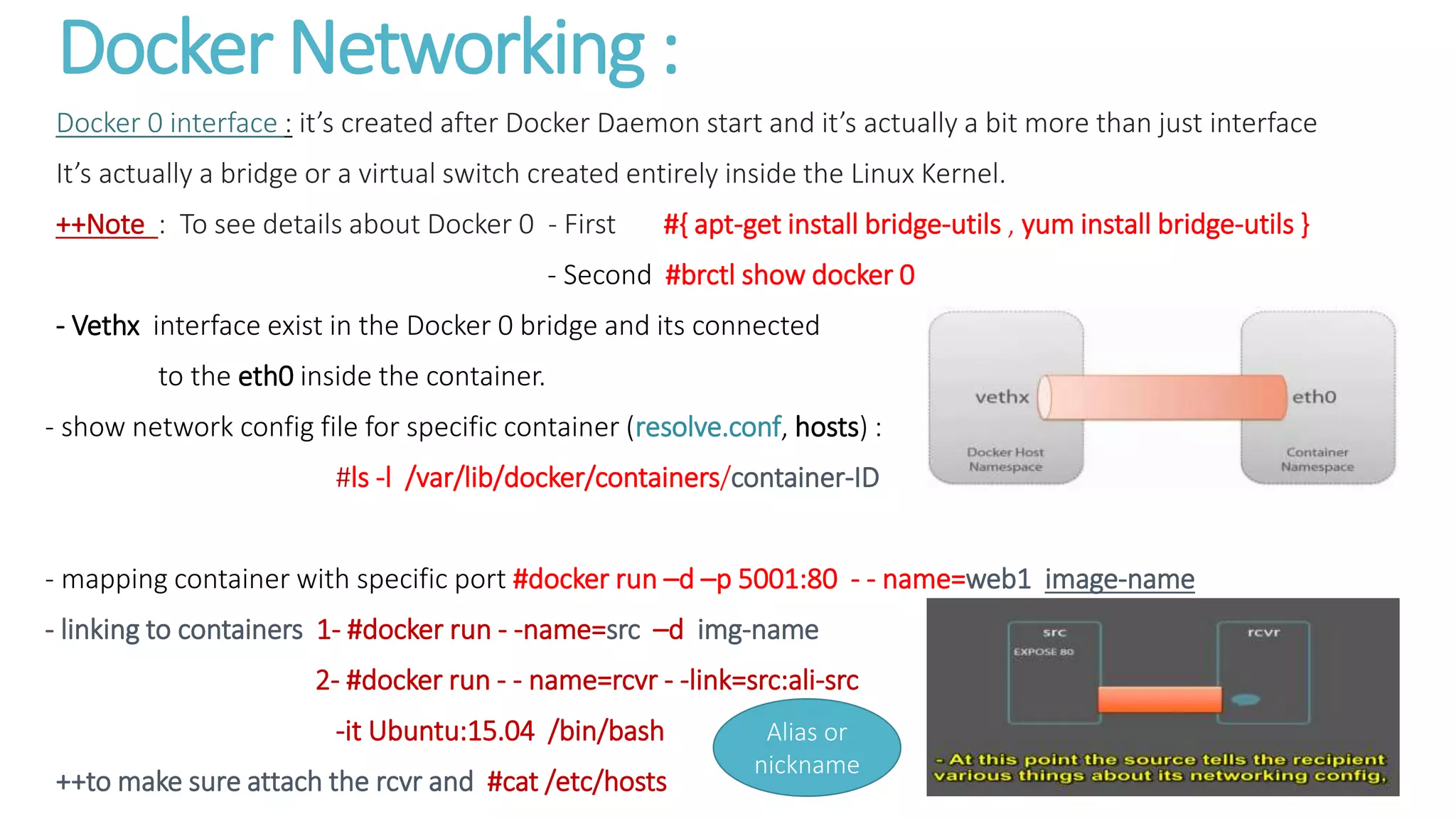

This document provides an overview of Docker. It covers Docker's core components, such as the Docker client and daemon, and explains the difference between stateless and stateful applications. It discusses how Docker works through execution drivers, container registries, and orchestration tools, and it gives installation instructions and common commands for managing Docker images and containers. It also covers Docker networking and the structure of Dockerfiles used to build images.