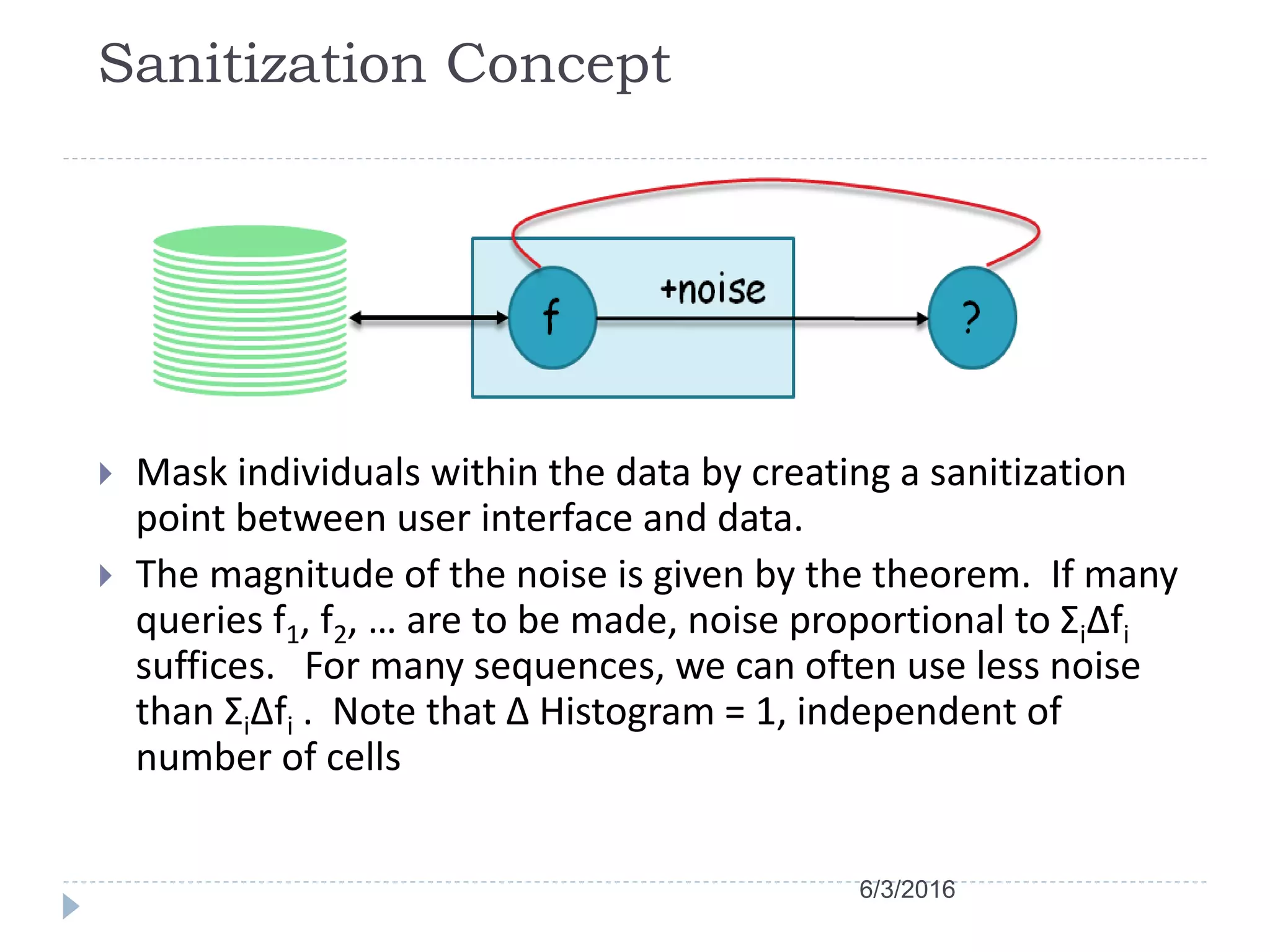

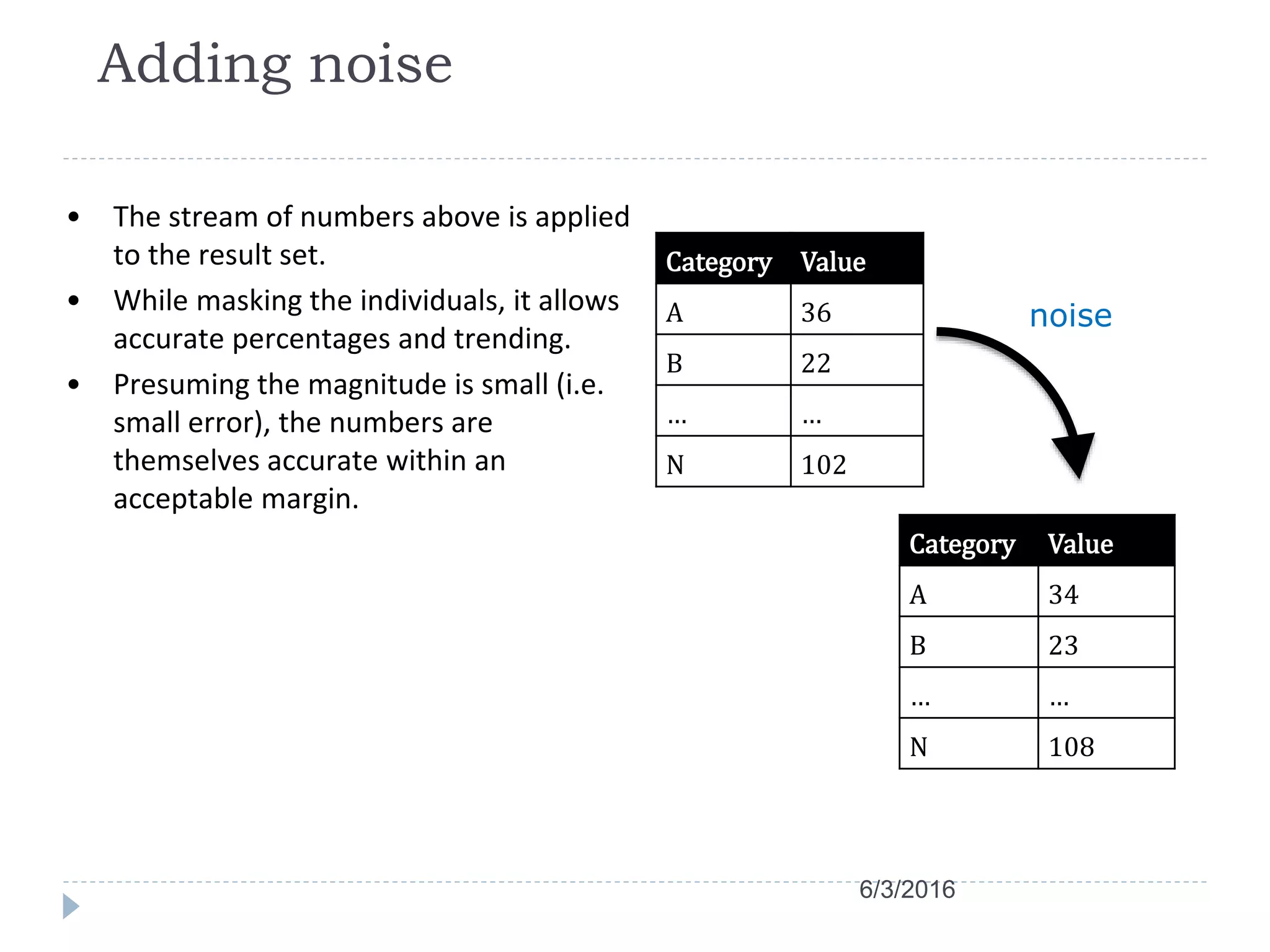

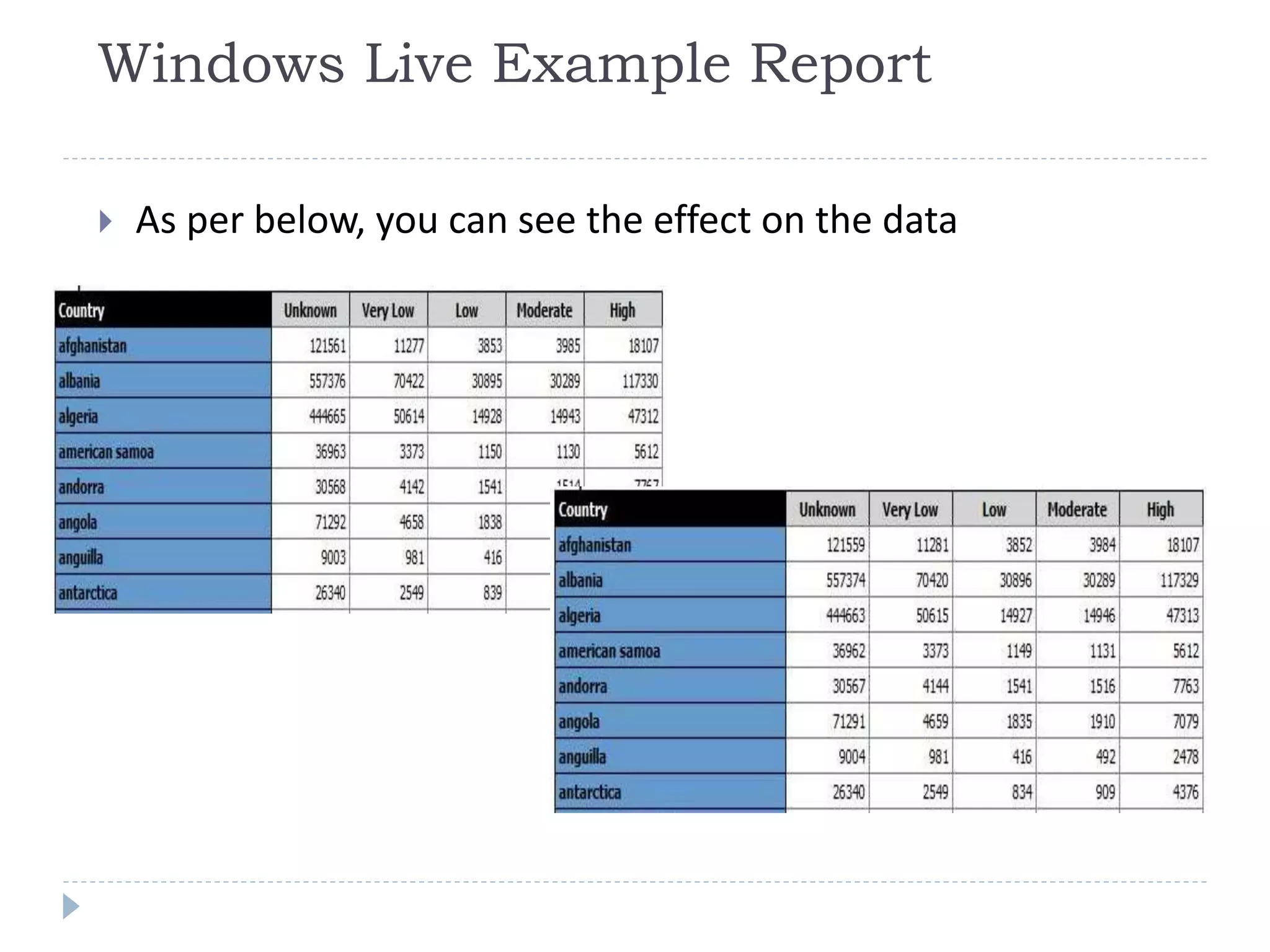

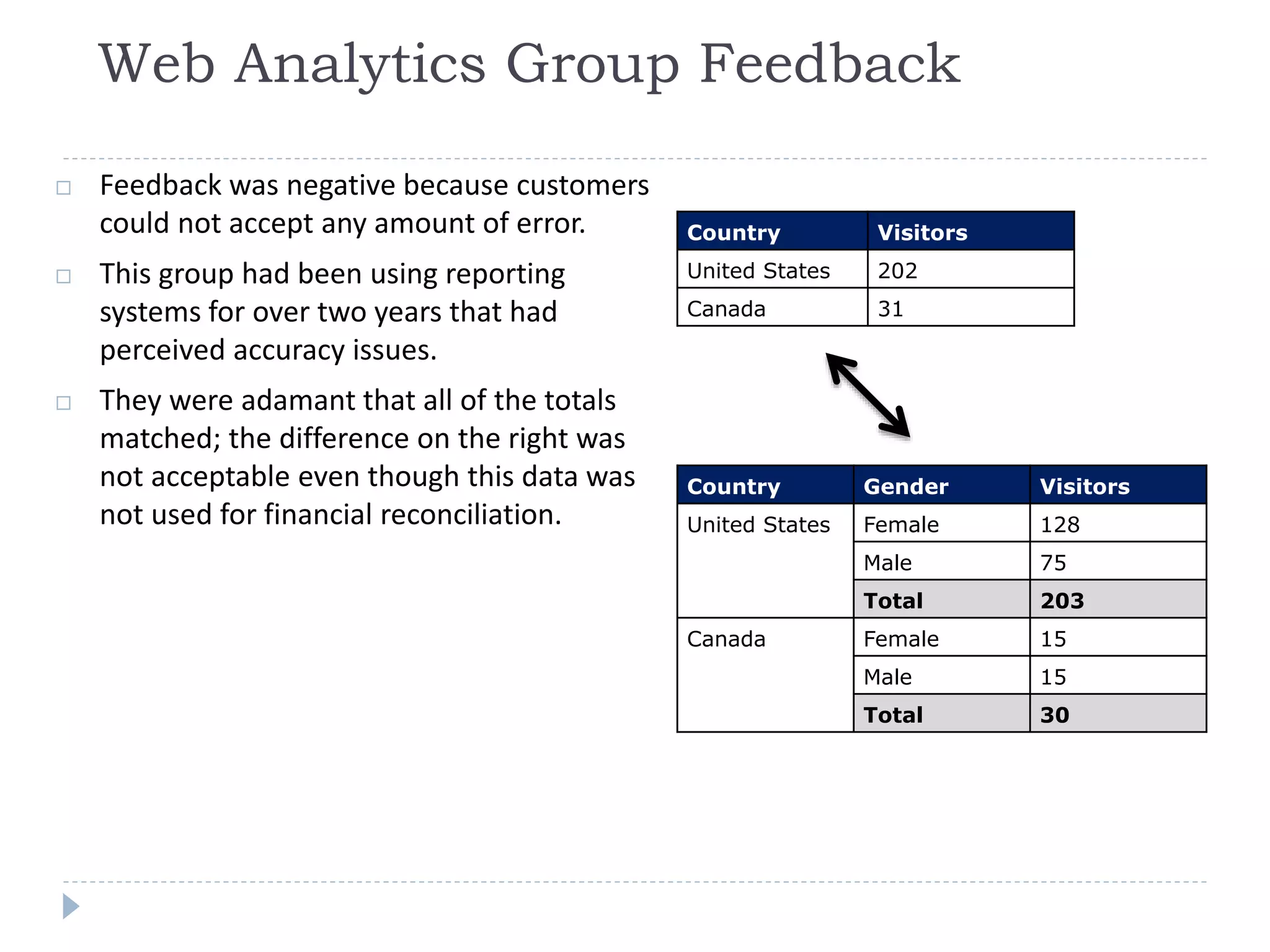

This document presents case studies in using differential privacy to analyze sensitive data. One analyzes Windows Live user data to study web analytics and customer churn. Another examines clinical researchers' perspectives on differential privacy: the researchers wanted their statistics to be unaffected and wanted the ability to access the original data if needed. A future collaboration with OHSU aims to develop a healthcare template for applying differential privacy.