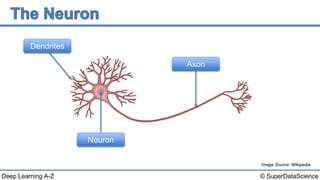

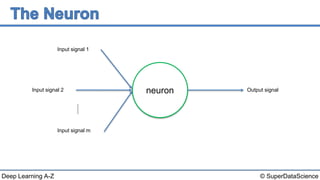

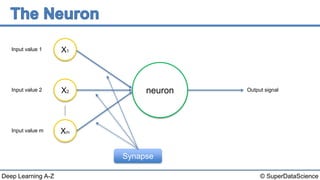

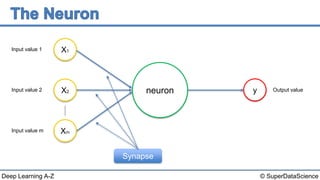

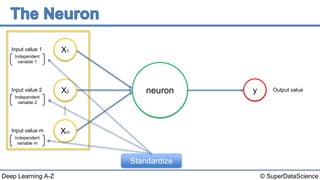

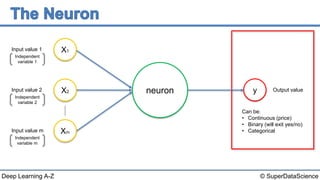

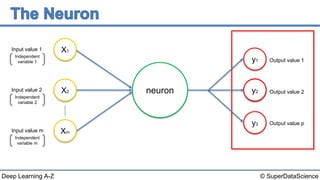

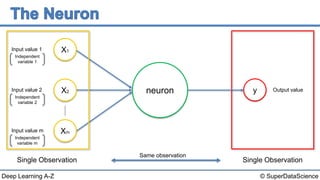

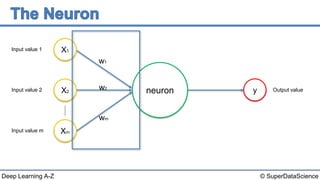

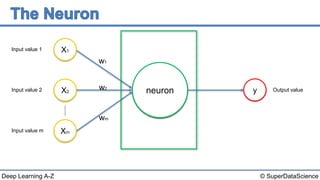

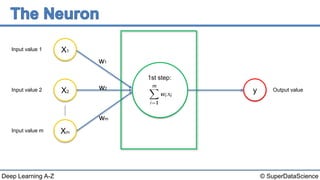

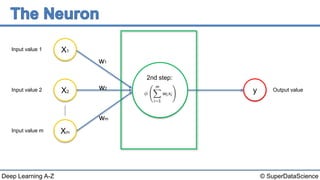

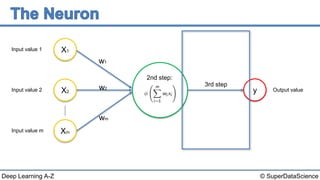

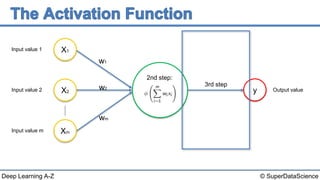

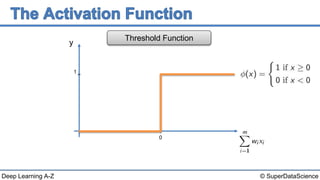

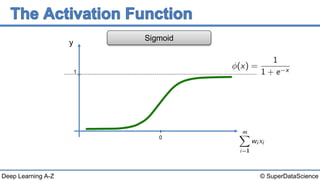

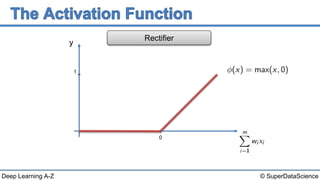

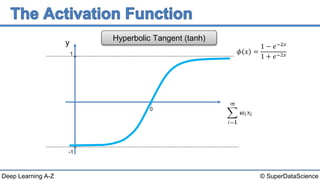

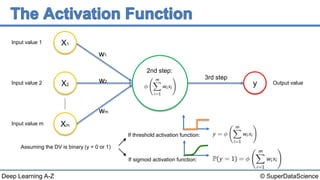

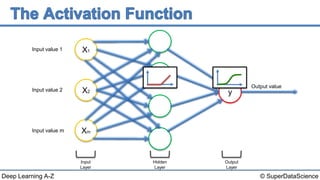

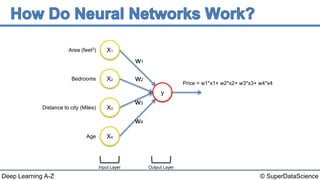

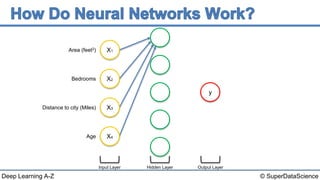

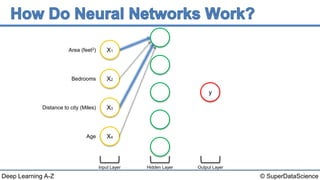

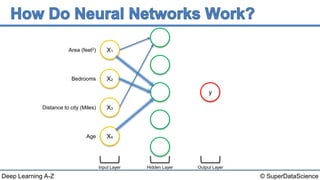

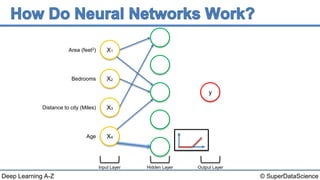

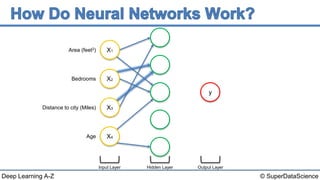

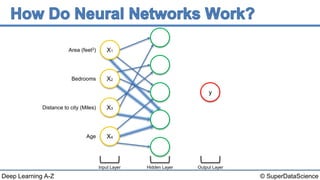

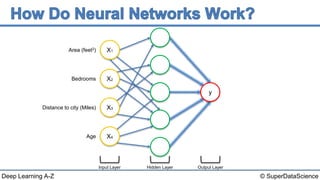

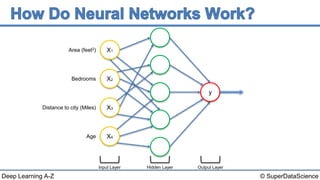

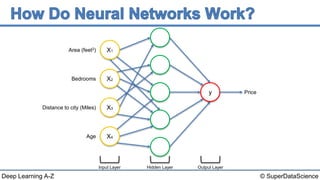

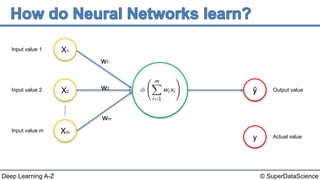

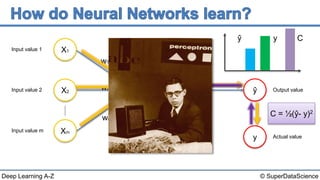

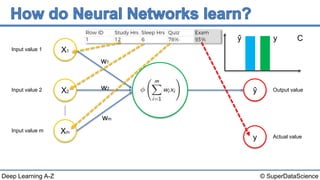

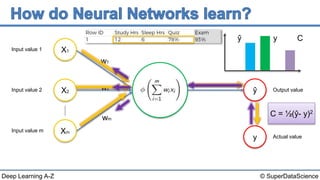

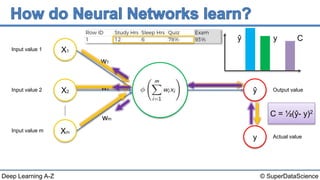

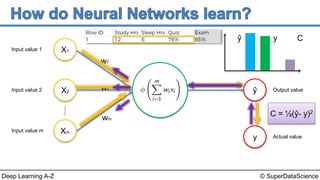

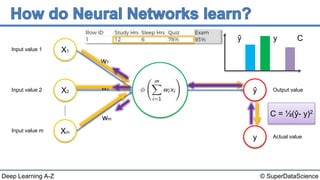

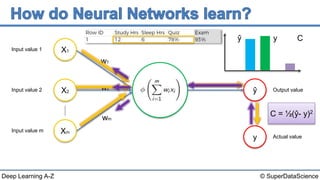

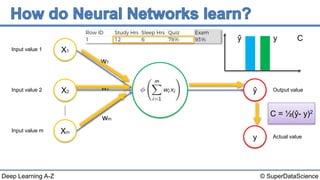

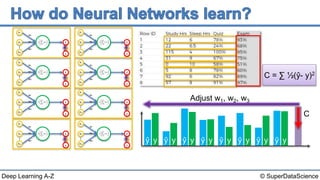

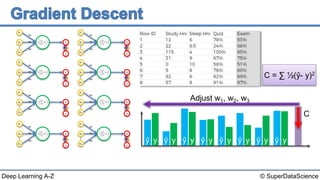

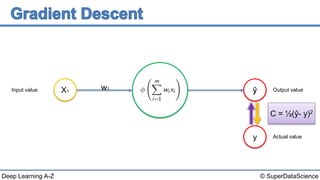

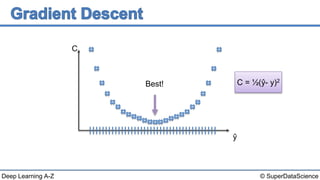

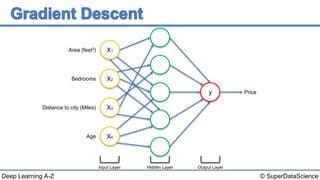

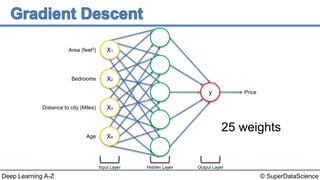

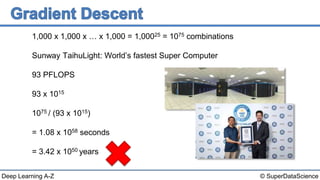

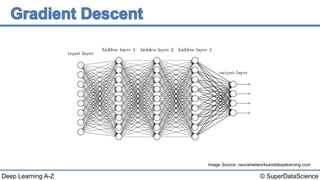

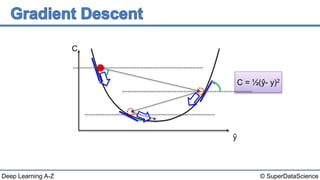

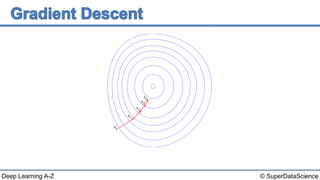

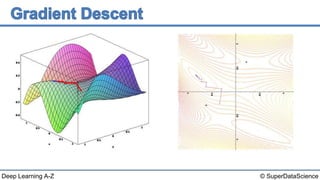

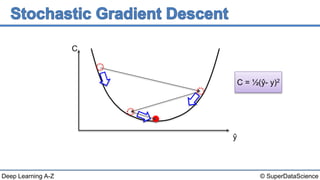

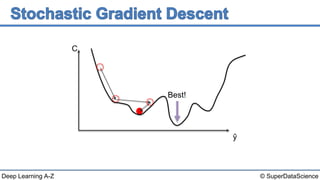

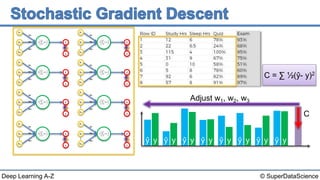

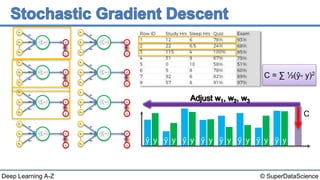

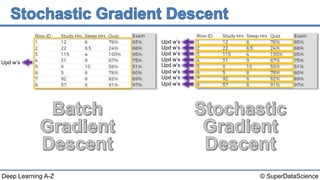

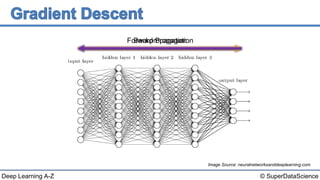

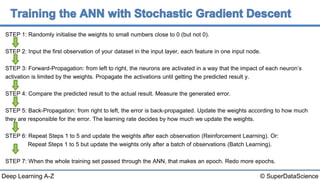

The document gives an overview of key neural network concepts: neurons, activation functions, and how networks compute their outputs and learn. It discusses gradient descent and backpropagation, which let a network learn by minimizing a cost function that measures the difference between predicted and actual outputs. It also gives examples of single-layer and multi-layer networks applied to problems such as predicting housing prices from input features.
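
As a rough illustration of the learning loop summarized above, the sketch below trains a single neuron with plain gradient descent to predict a house price from two input features. Everything in it is an assumption made for illustration and is not taken from the slides: the feature choice (area and bedrooms), the made-up data, the identity activation, the half mean squared error cost, and the names `predict`, `cost`, and `train`.

```python
# Toy, made-up data: (area in 100 m^2, bedrooms) -> price in arbitrary units.
# Prices are generated from price = 2.0*area + 0.5*bedrooms + 1.0,
# so the parameters the neuron should recover are known in advance.
FEATURES = [(1.0, 1.0), (1.0, 3.0), (2.0, 1.0), (2.0, 3.0), (1.5, 2.0)]
PRICES = [2.0 * area + 0.5 * beds + 1.0 for area, beds in FEATURES]


def predict(weights, bias, x):
    """Single neuron with identity activation: weighted sum of inputs plus bias."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def cost(weights, bias):
    """Half mean squared error between predicted and actual prices."""
    n = len(FEATURES)
    total = sum((predict(weights, bias, x) - y) ** 2
                for x, y in zip(FEATURES, PRICES))
    return total / (2 * n)


def train(learning_rate=0.1, epochs=5000):
    """Batch gradient descent on the cost above, starting from zero parameters."""
    weights, bias = [0.0, 0.0], 0.0
    n = len(FEATURES)
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for x, y in zip(FEATURES, PRICES):
            error = predict(weights, bias, x) - y  # derivative of the per-sample cost w.r.t. the prediction
            for j, xj in enumerate(x):
                grad_w[j] += error * xj / n        # chain rule: d(cost)/d(w_j) = error * x_j
            grad_b += error / n                    # d(cost)/d(bias) = error
        # Step each parameter against its gradient to reduce the cost.
        weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b
    return weights, bias


if __name__ == "__main__":
    w, b = train()
    print("weights:", w, "bias:", b, "final cost:", cost(w, b))
```

With a single neuron the gradient can be written down directly. In a multi-layer network, backpropagation applies the same chain rule layer by layer to obtain the gradient for every weight, and the update step stays the same.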