
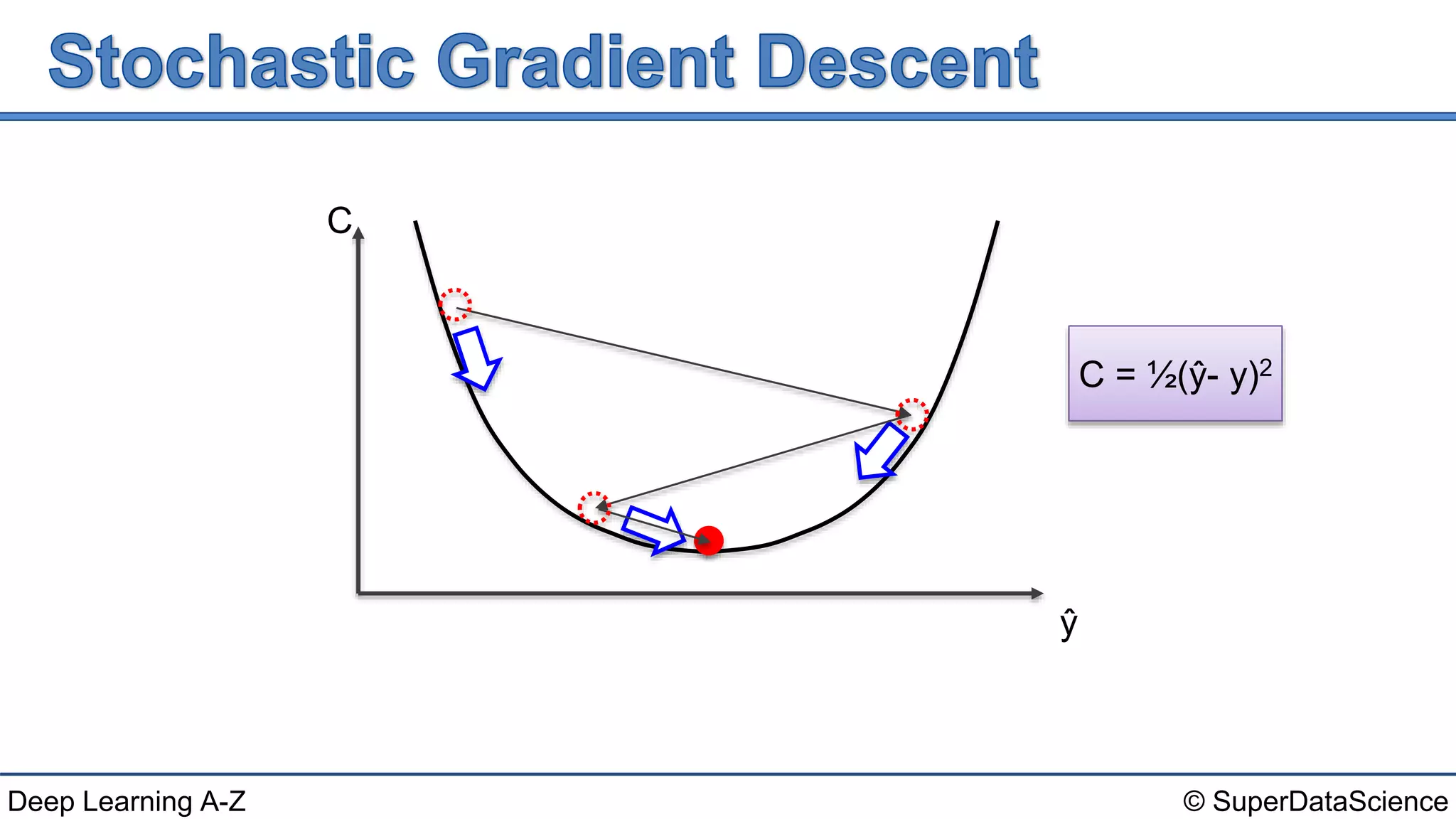

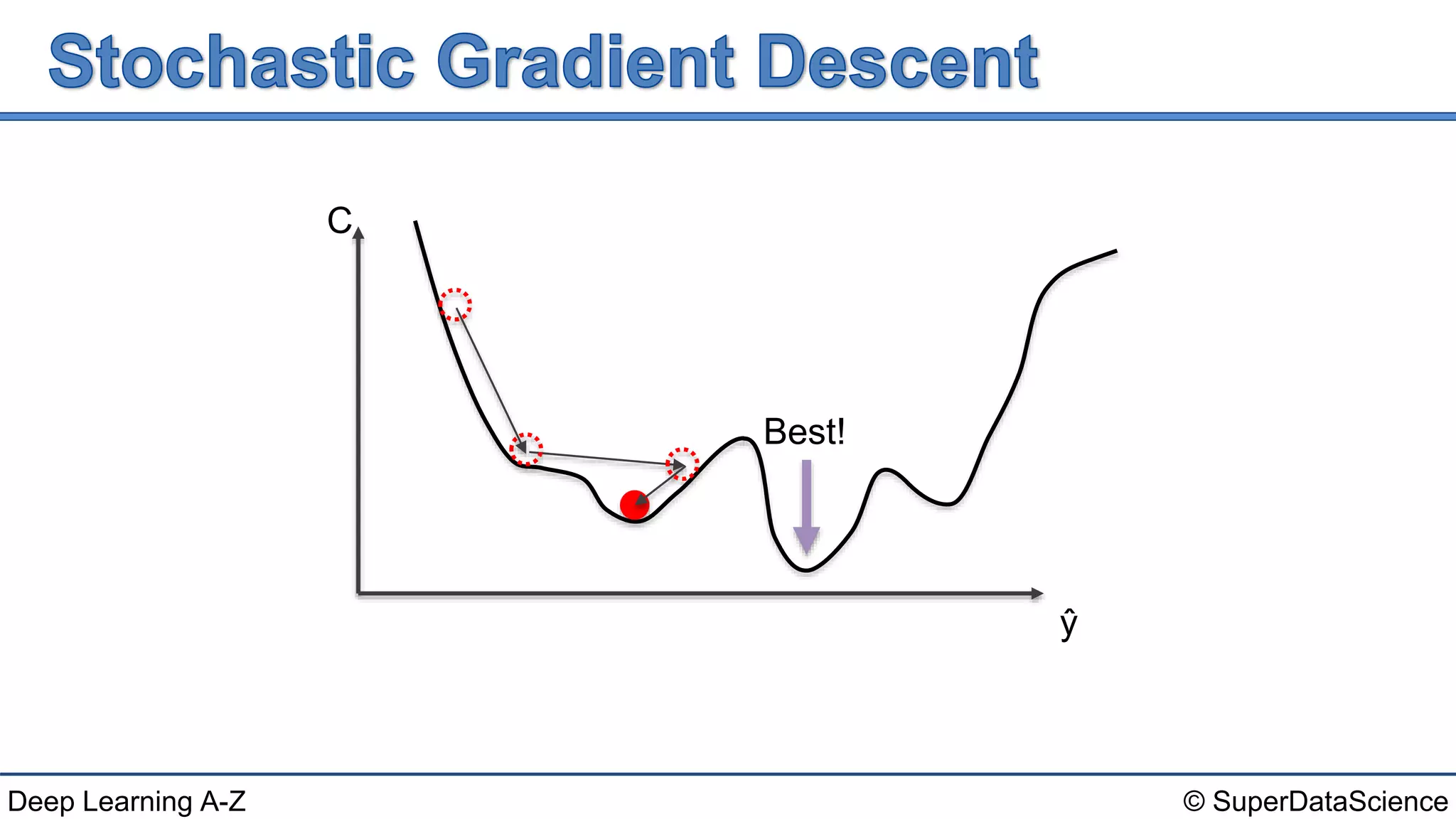

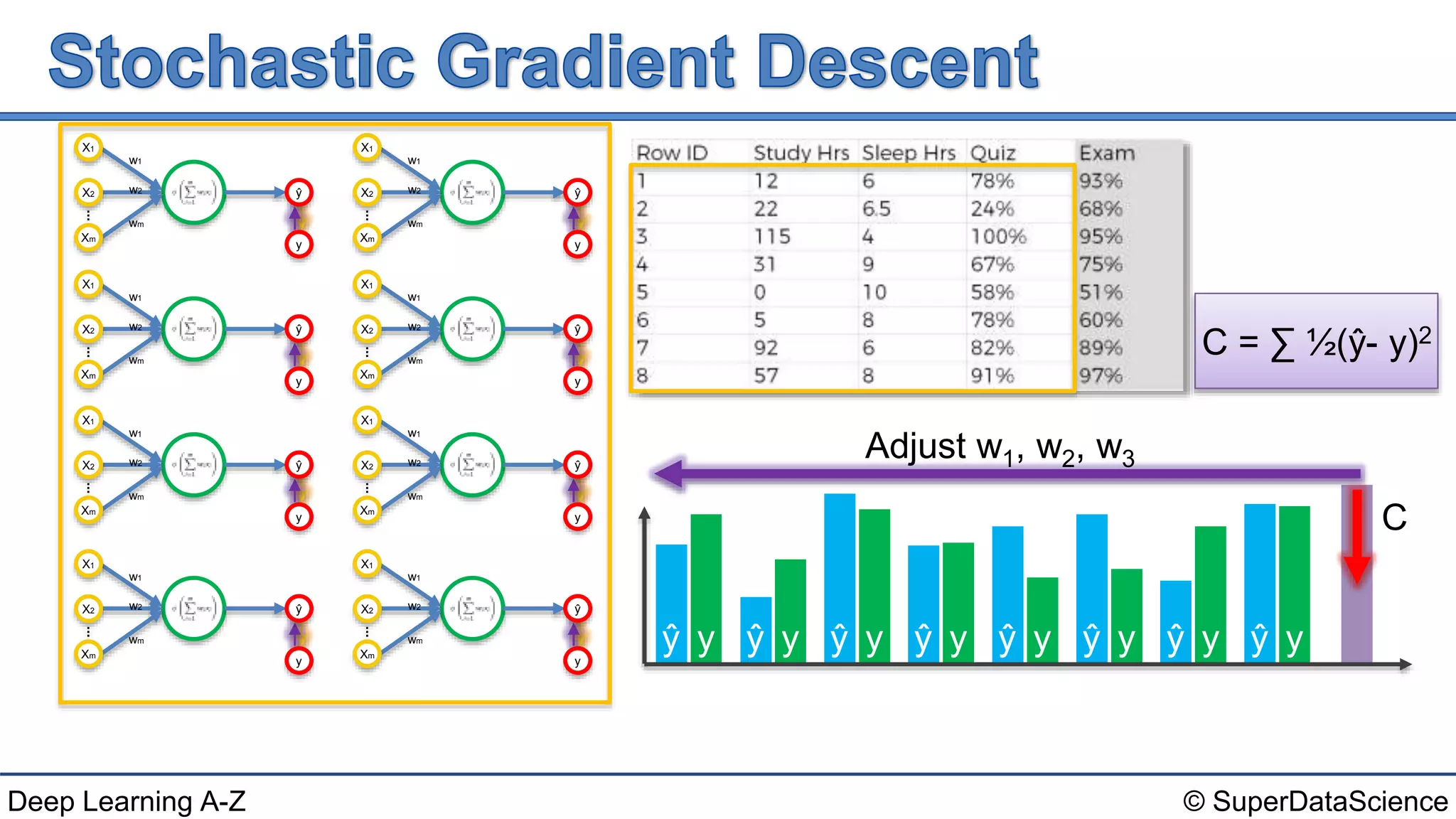

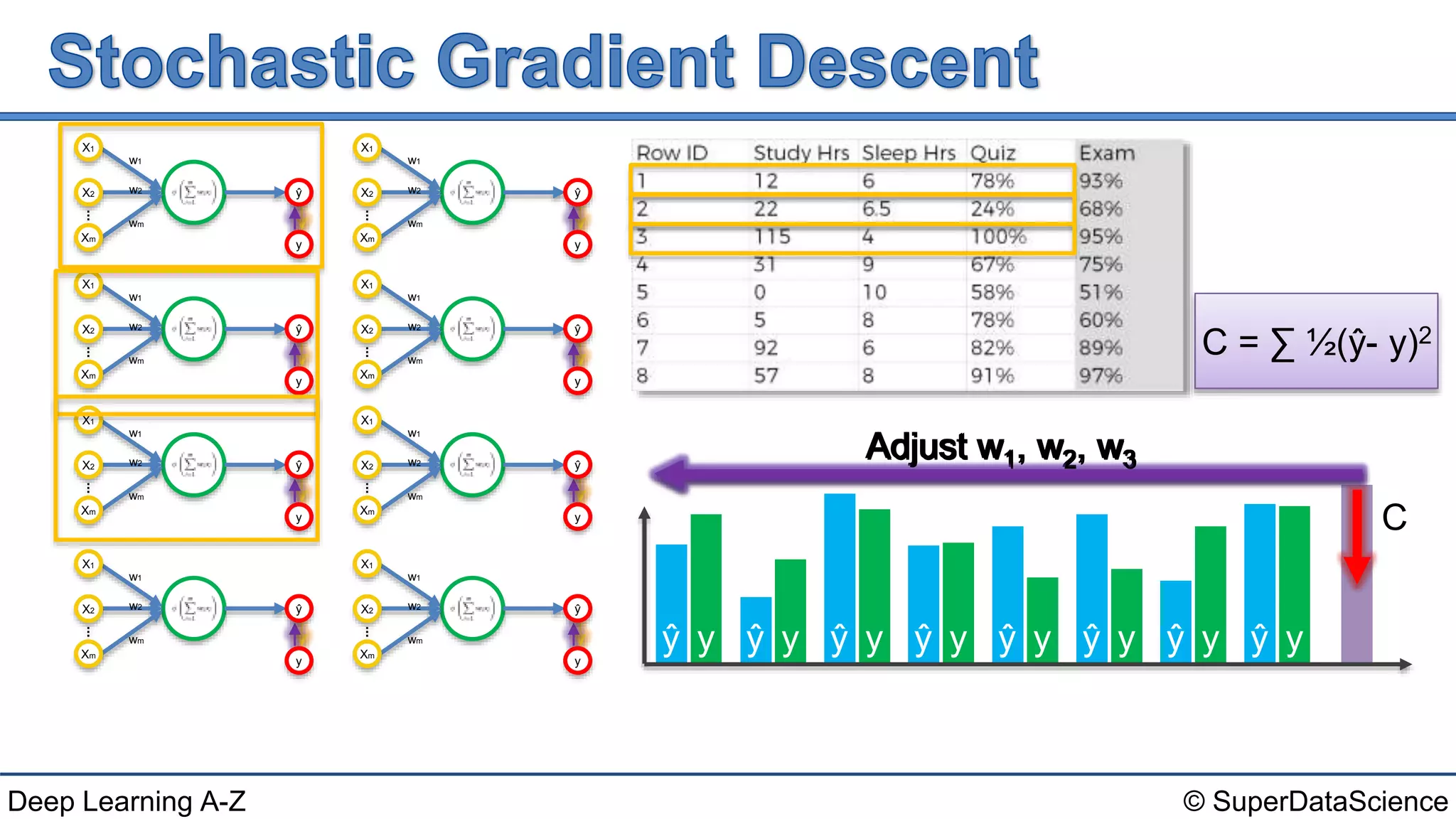

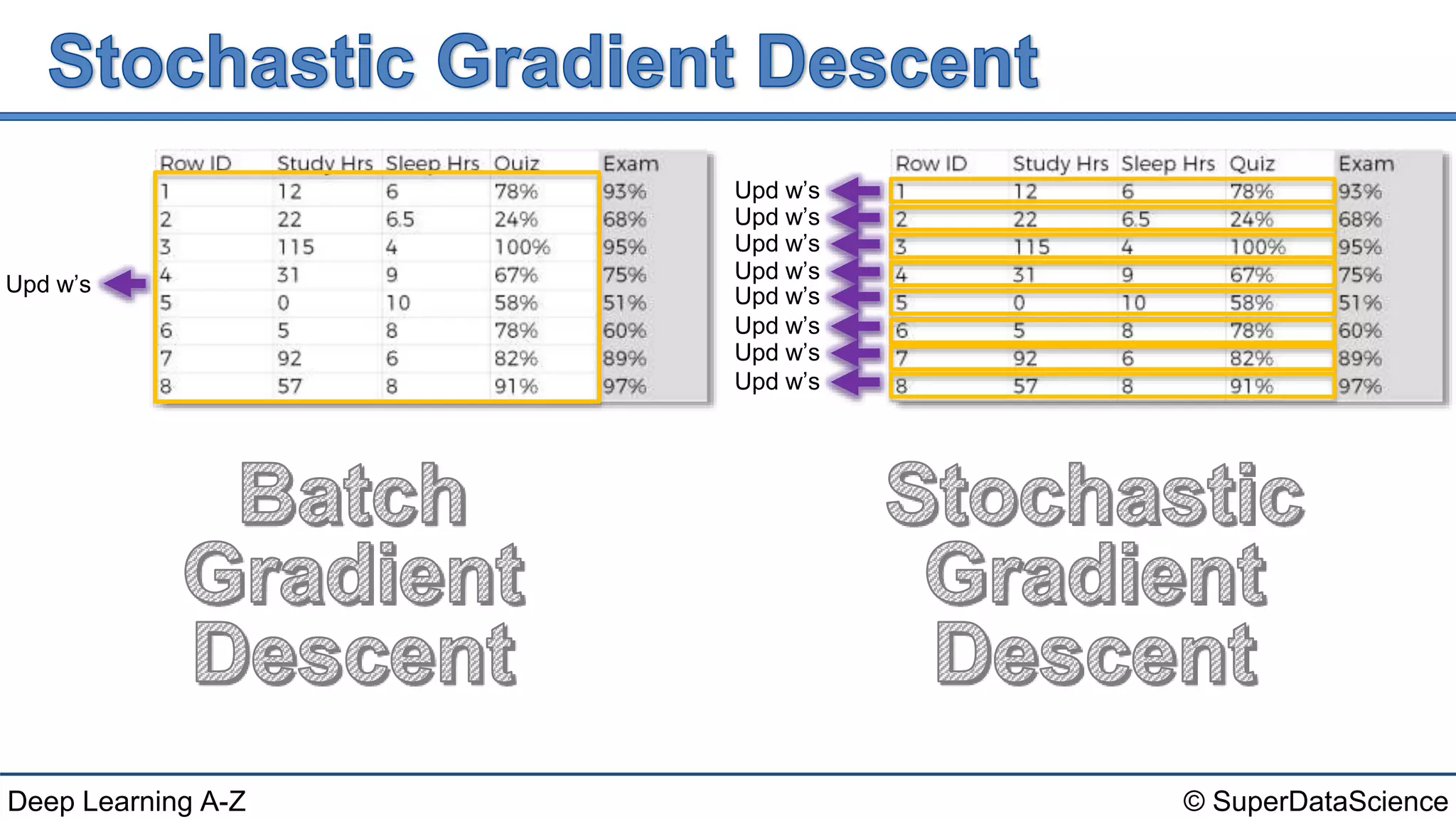

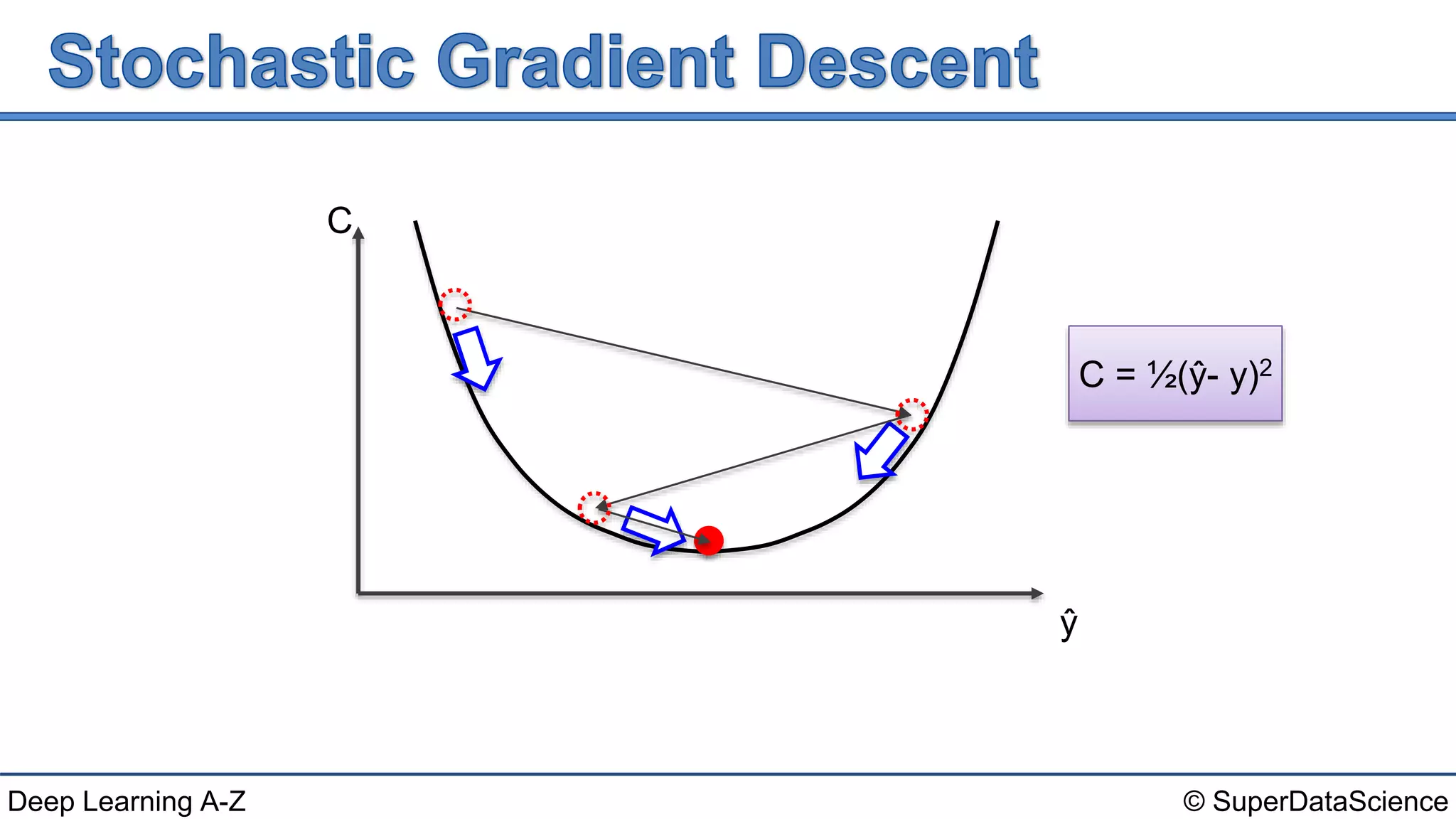

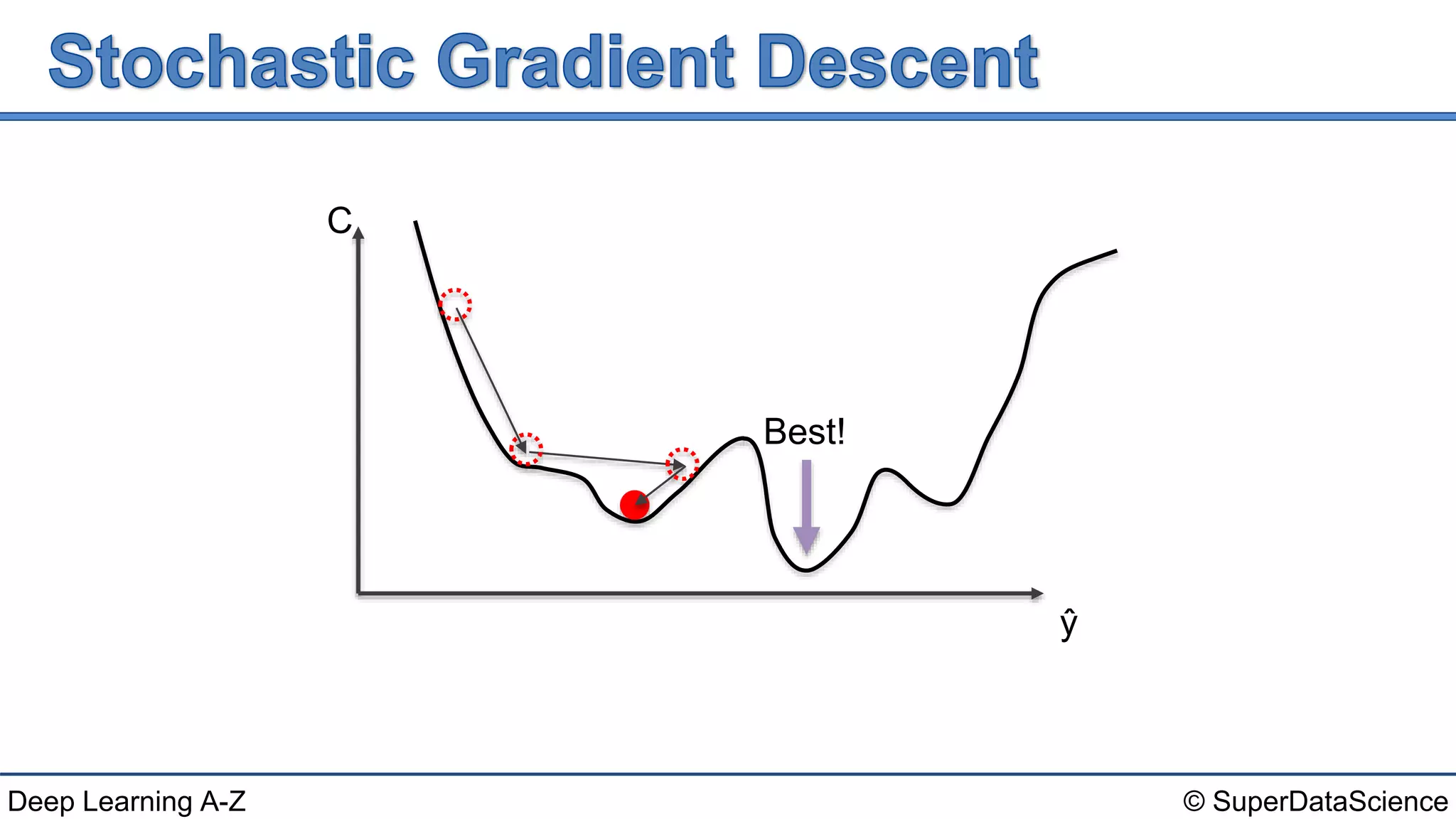

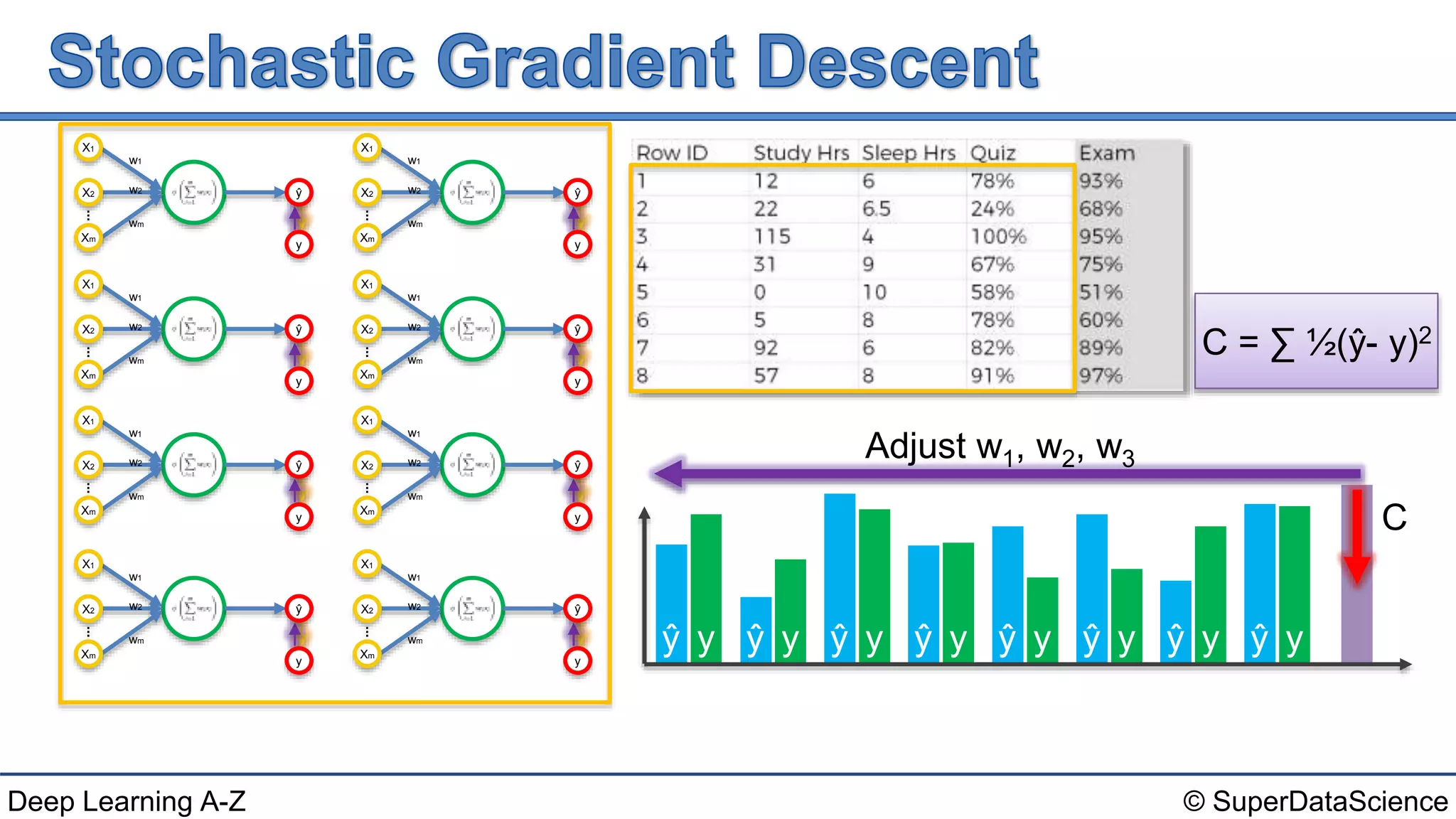

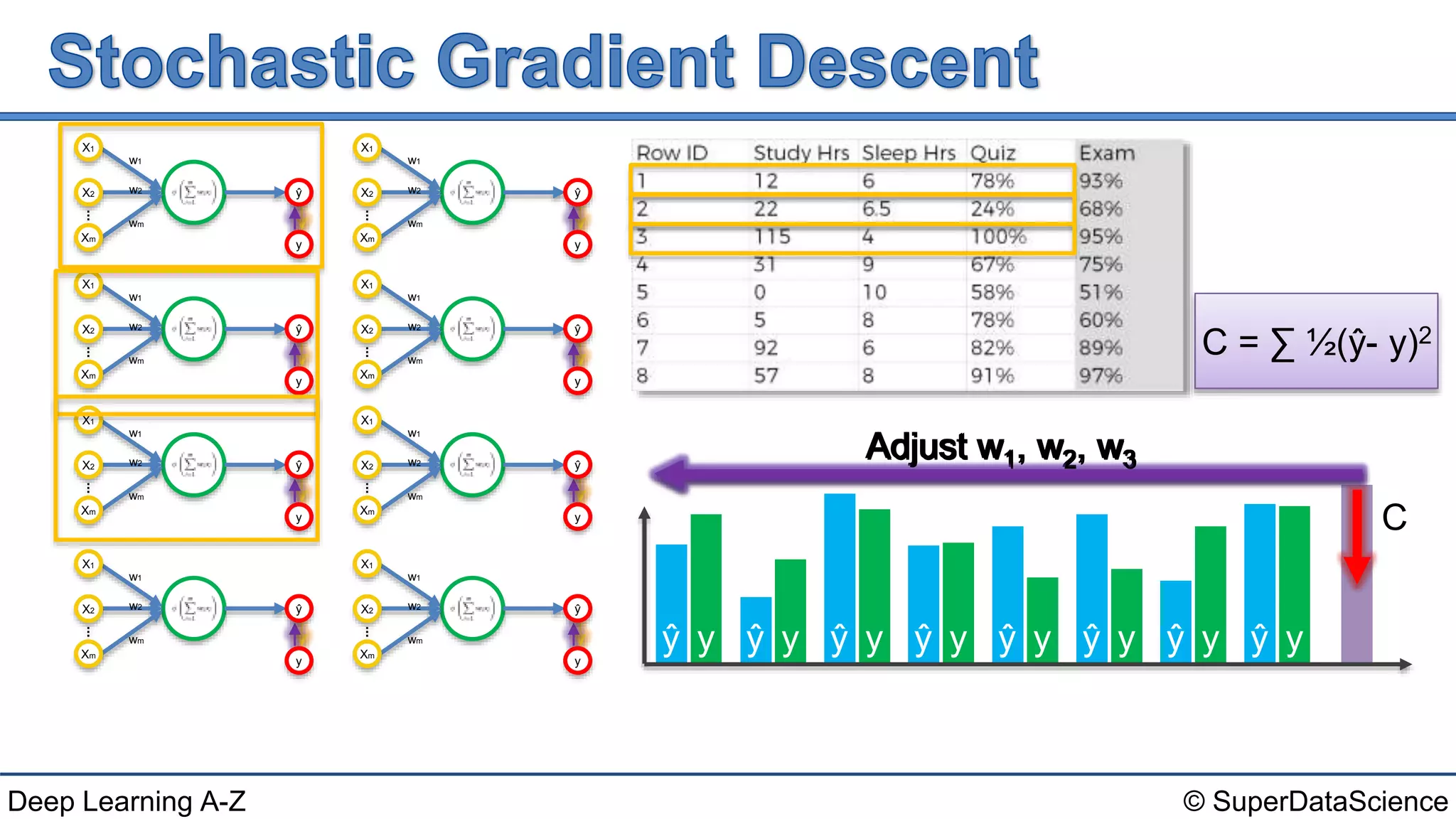

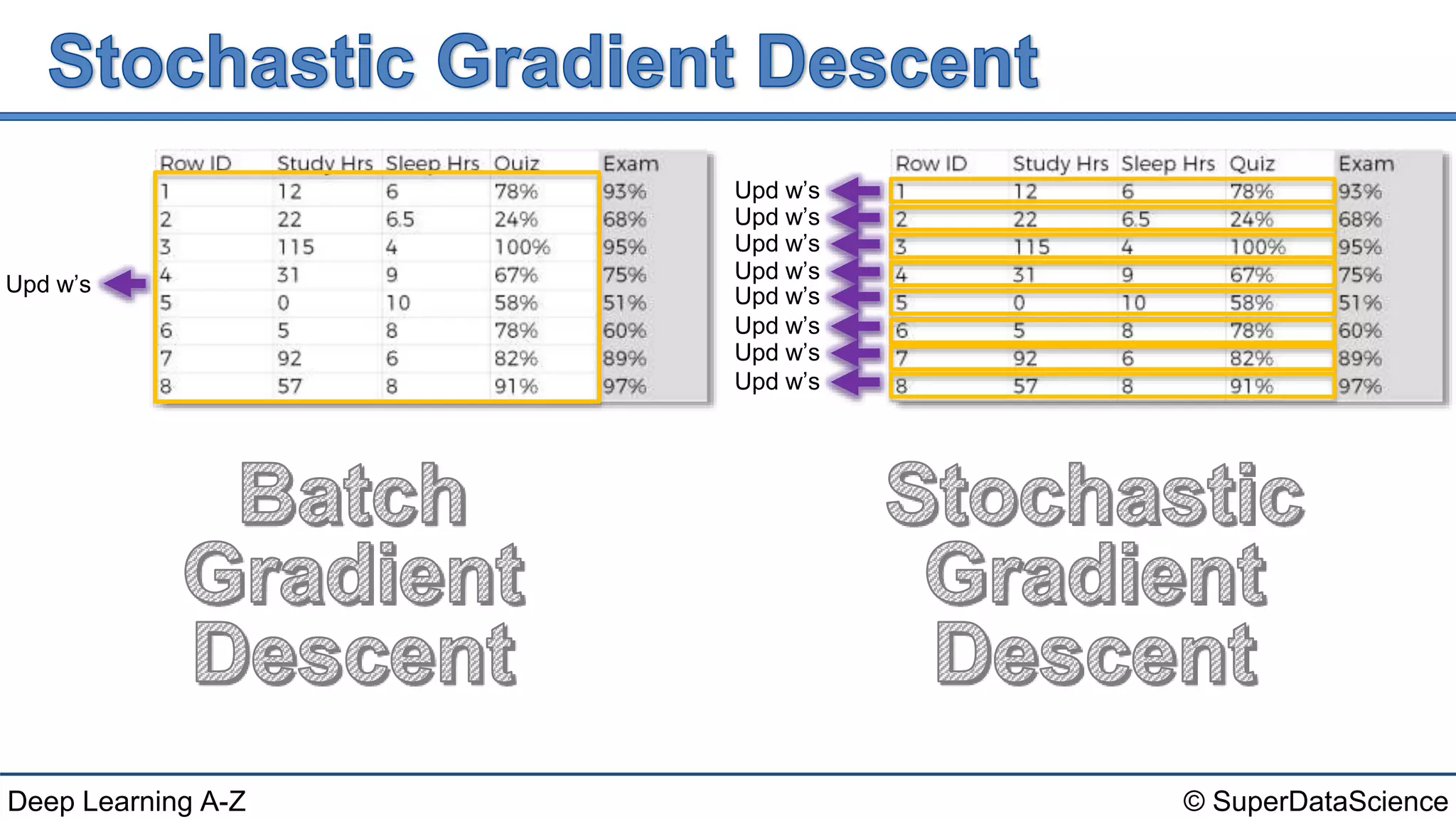

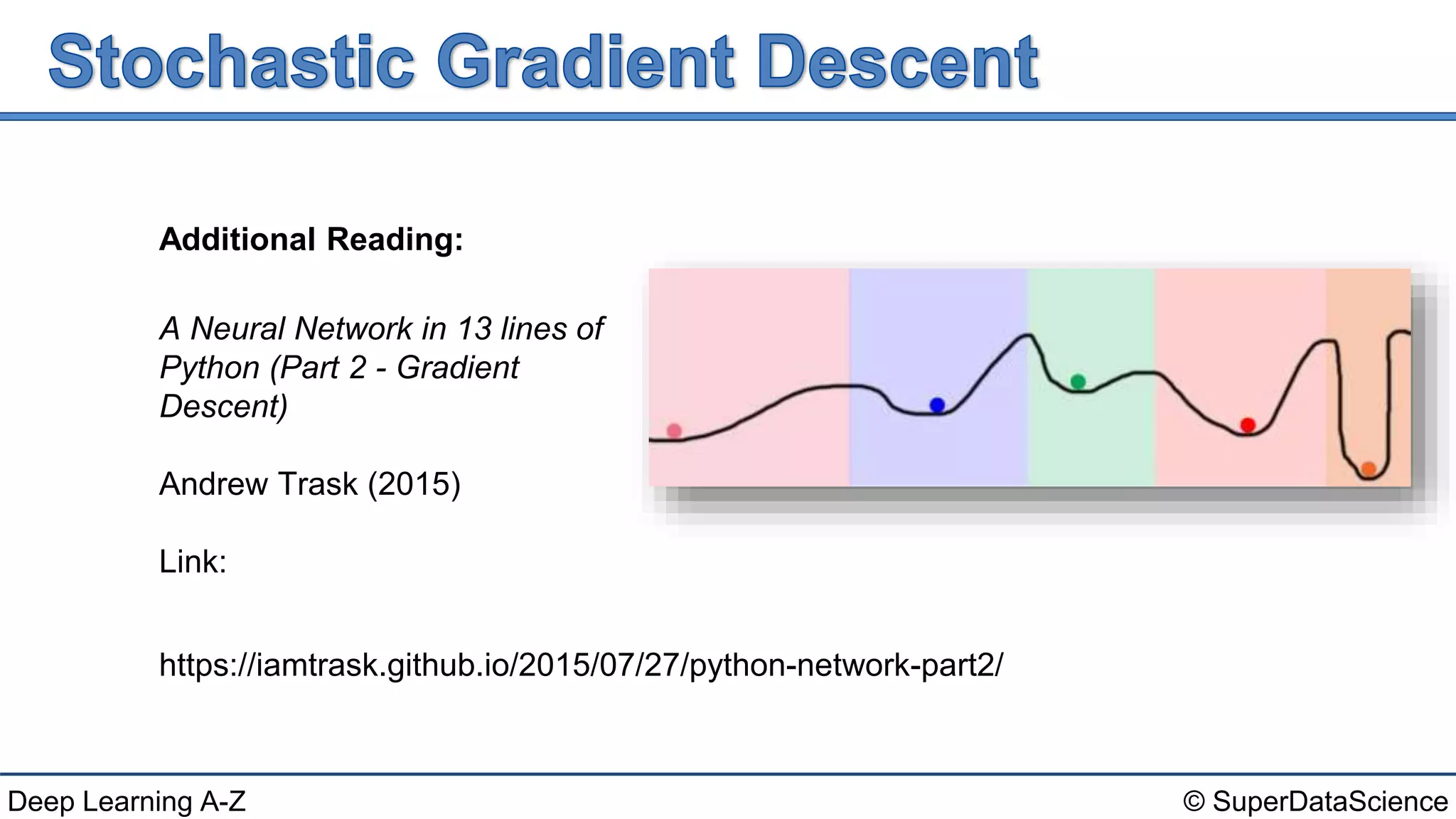

The document discusses gradient descent and how to update the weights (w) of a neural network. It defines the cost C as the sum of squared errors between the predicted values (ŷ) and the true values (y). It then describes adjusting the weights w1, w2, w3 with gradient descent to minimize that cost. References are provided for further reading on implementing neural networks in Python and on the fundamentals of neural networks and deep learning.
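The slides are images, so the exact network they use is not recoverable here. The sketch below is minimal and assumes a single linear neuron $\hat{y} = w_1x_1 + w_2x_2 + w_3x_3$ as a hypothetical stand-in for that network. Under this assumption the cost $C = \sum (\hat{y} - y)^2$ has gradient $\partial C/\partial w_j = \sum 2(\hat{y} - y)\,x_j$. Each gradient descent step moves every weight against its gradient: $w_j \leftarrow w_j - \eta\,\partial C/\partial w_j$. The function names, the learning rate $\eta$ (`lr`), the step count, and the toy data are illustrative assumptions, not taken from the slides.

```python
# Minimal sketch: gradient descent on three weights w1, w2, w3.
# Assumption (not from the slides): a single linear neuron y_hat = w1*x1 + w2*x2 + w3*x3.
# All names here (predict, cost, gradient, gradient_descent) are hypothetical.

def predict(w, x):
    """Weighted sum of the inputs: y_hat = sum_j w_j * x_j."""
    return sum(wj * xj for wj, xj in zip(w, x))


def cost(w, X, Y):
    """C = sum over examples of (y_hat - y)^2."""
    return sum((predict(w, x) - y) ** 2 for x, y in zip(X, Y))


def gradient(w, X, Y):
    """dC/dw_j = sum over examples of 2 * (y_hat - y) * x_j."""
    grad = [0.0] * len(w)
    for x, y in zip(X, Y):
        err = predict(w, x) - y
        for j, xj in enumerate(x):
            grad[j] += 2 * err * xj
    return grad


def gradient_descent(X, Y, lr=0.01, steps=1000):
    """Repeatedly step each weight against its gradient: w_j <- w_j - lr * dC/dw_j."""
    w = [0.0, 0.0, 0.0]  # w1, w2, w3 start at zero (an arbitrary choice)
    for _ in range(steps):
        g = gradient(w, X, Y)
        w = [wj - lr * gj for wj, gj in zip(w, g)]
    return w


if __name__ == "__main__":
    # Hypothetical toy data generated exactly by y = 1*x1 + 2*x2 - 3*x3.
    X = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 1], [2, 1, 0]]
    Y = [1, 2, -3, 0, 4]
    w = gradient_descent(X, Y)
    print("weights:", [round(wj, 3) for wj in w])
    print("cost:", round(cost(w, X, Y), 6))
```

Because the toy data is generated exactly by the weights (1, 2, -3), the learned weights should converge toward those values and the cost toward zero. A fixed learning rate keeps the sketch short. If the value is too large, the updates can overshoot and diverge instead of settling.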