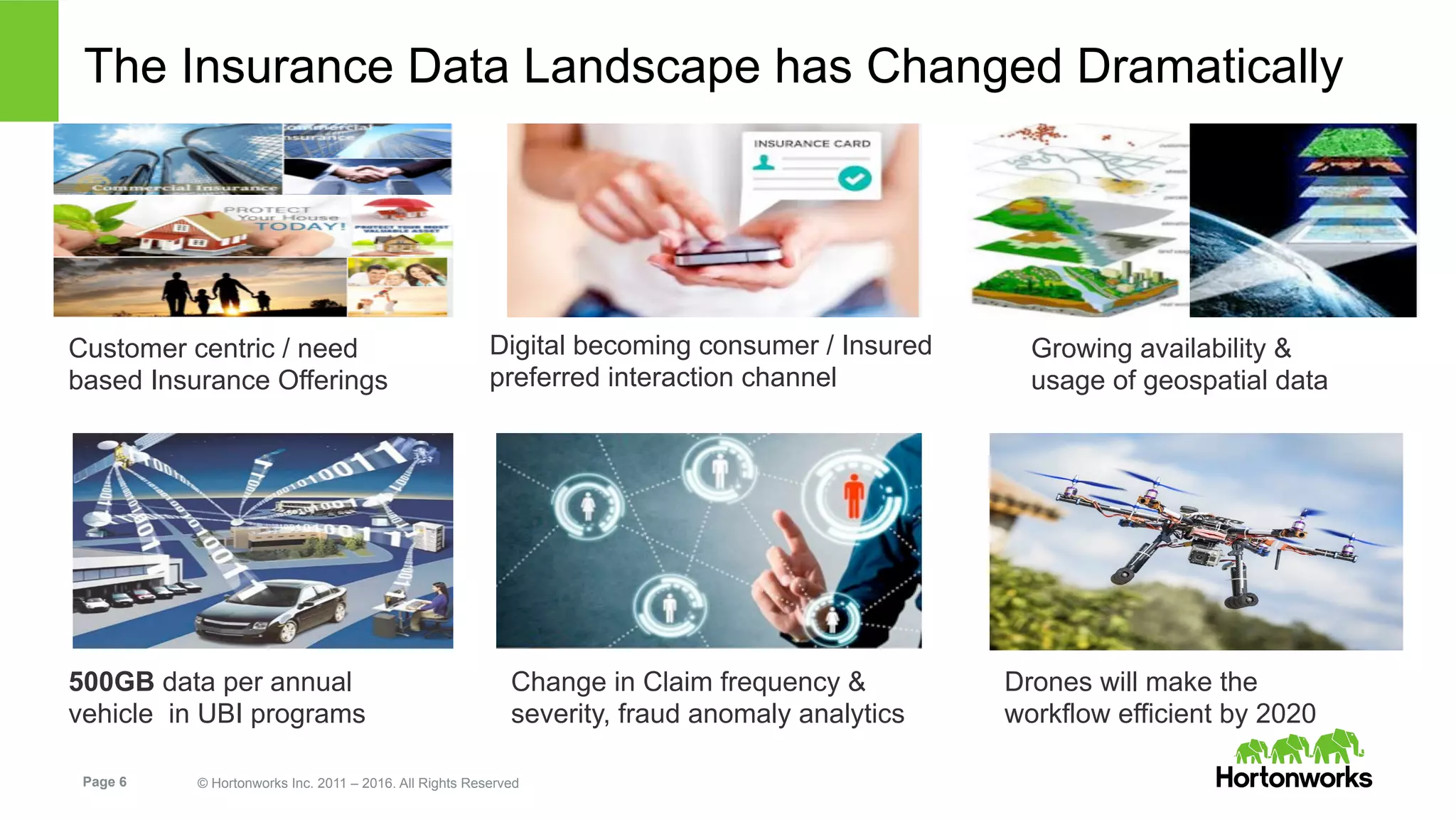

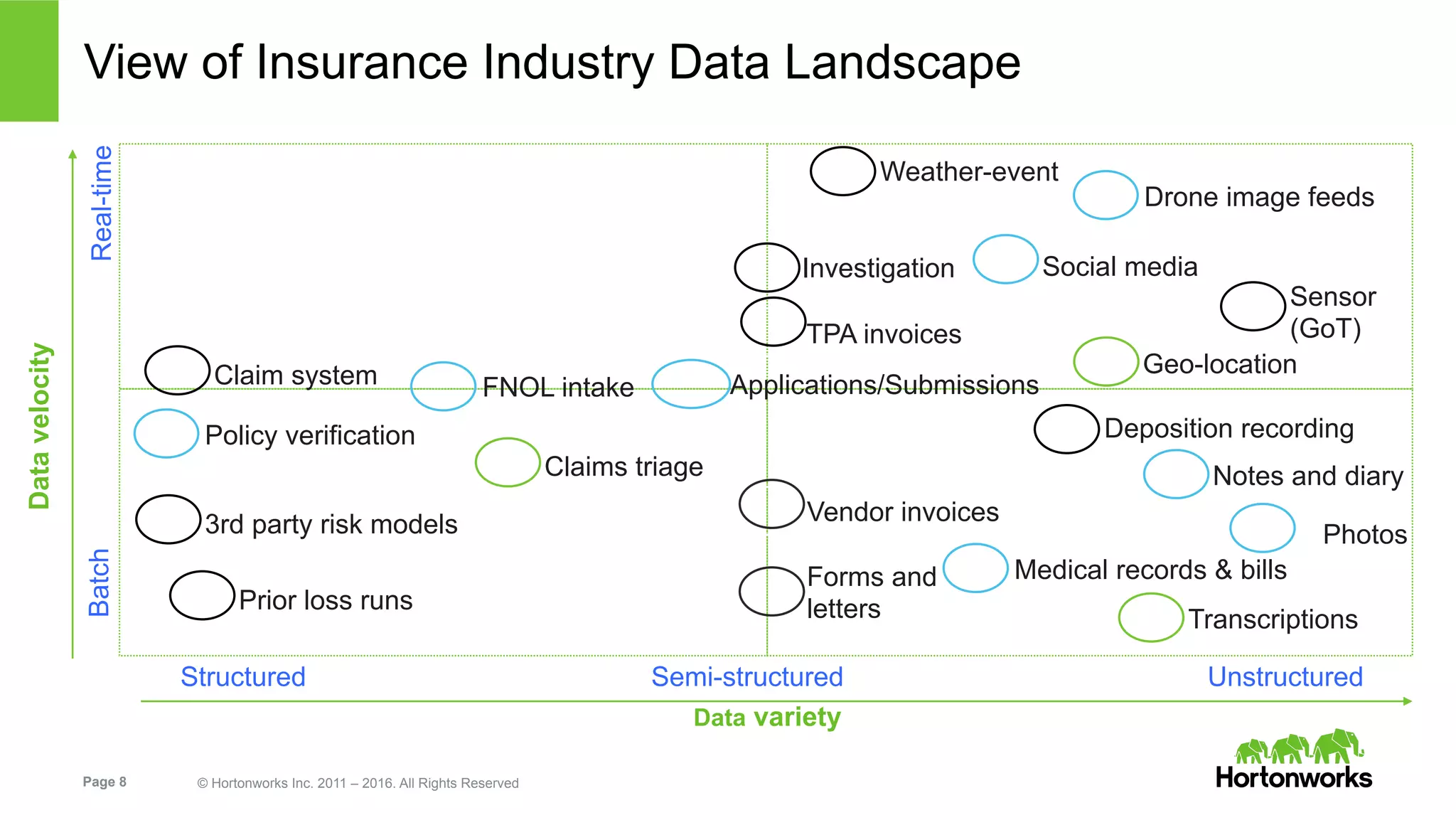

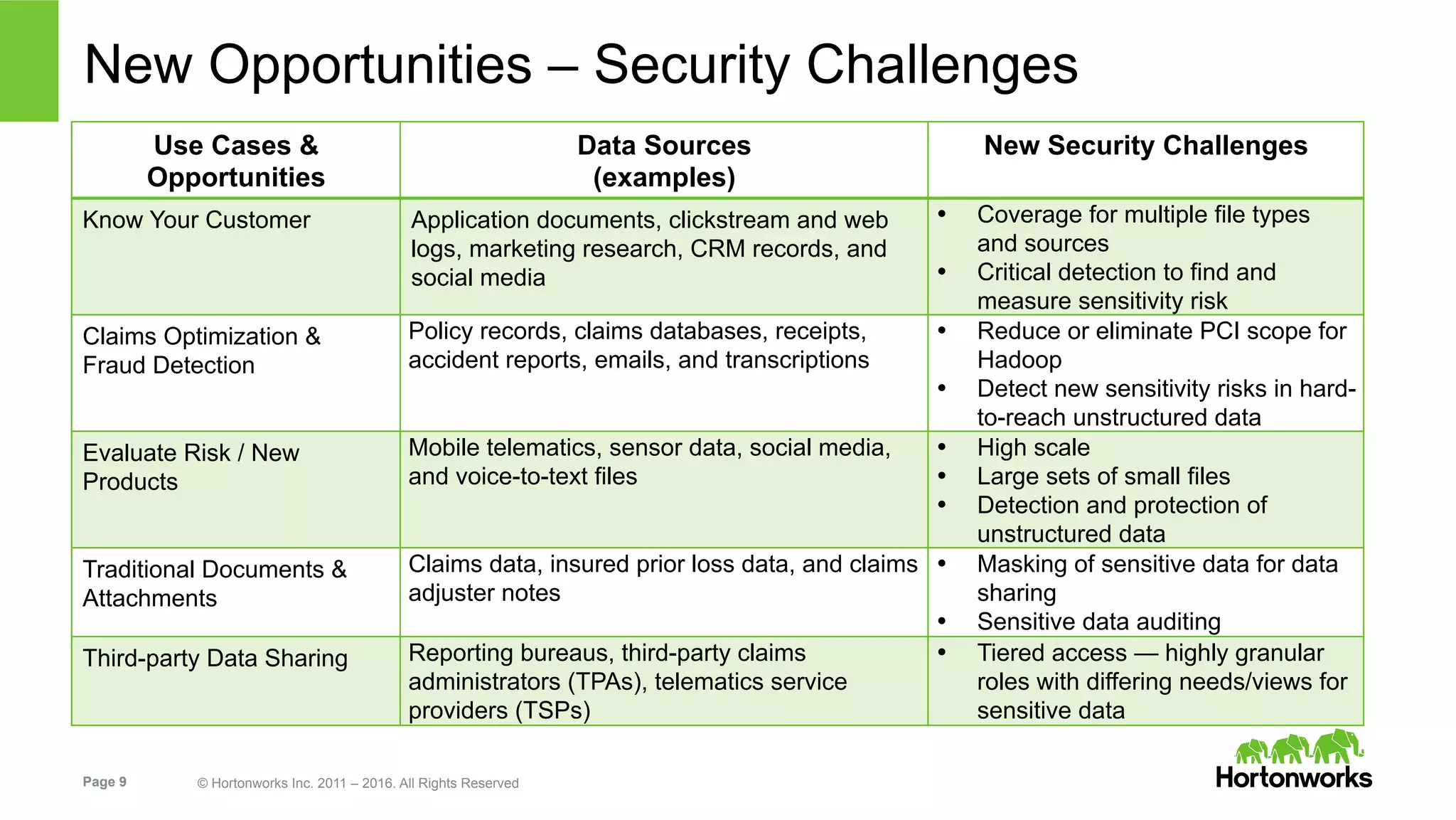

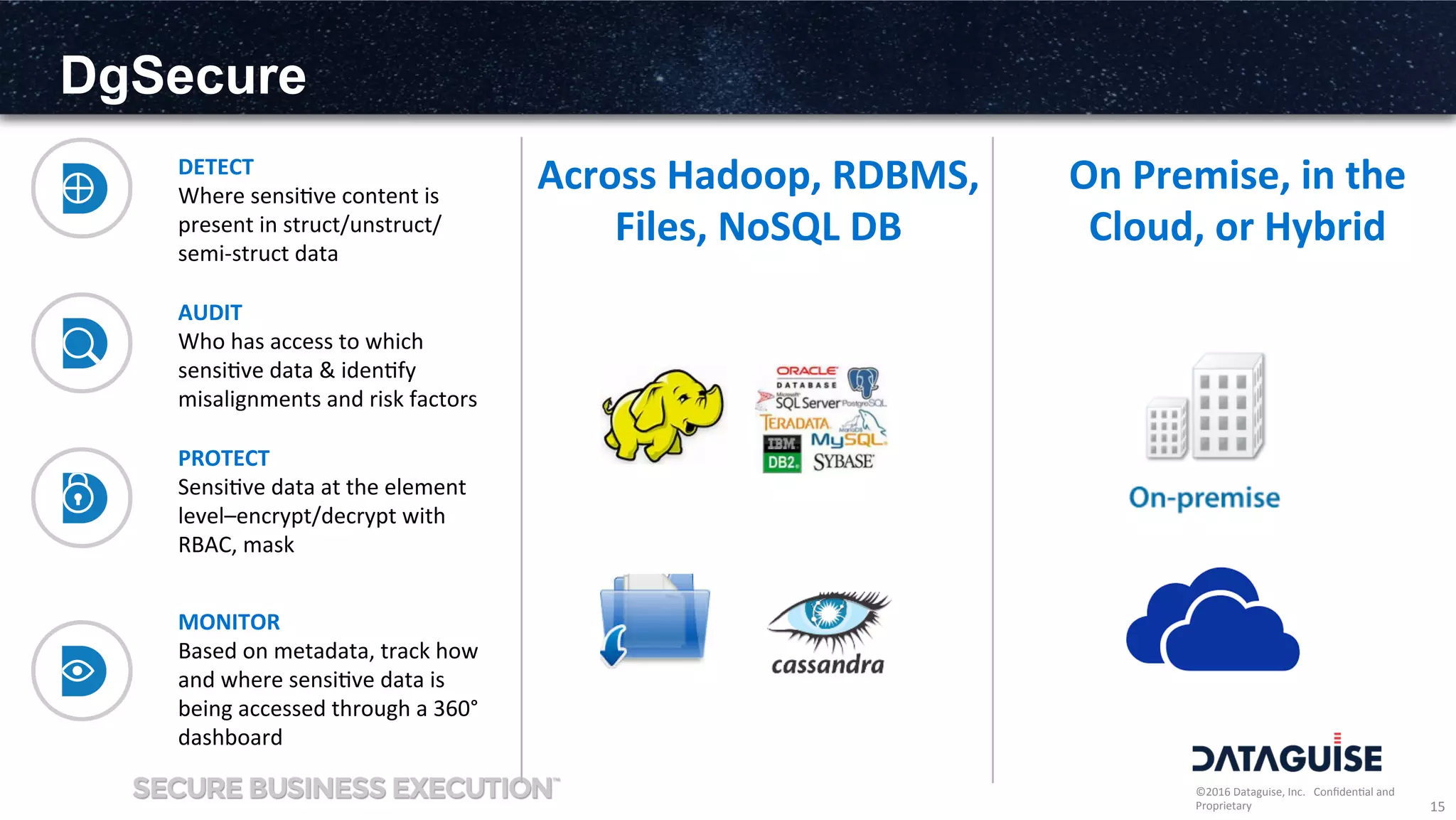

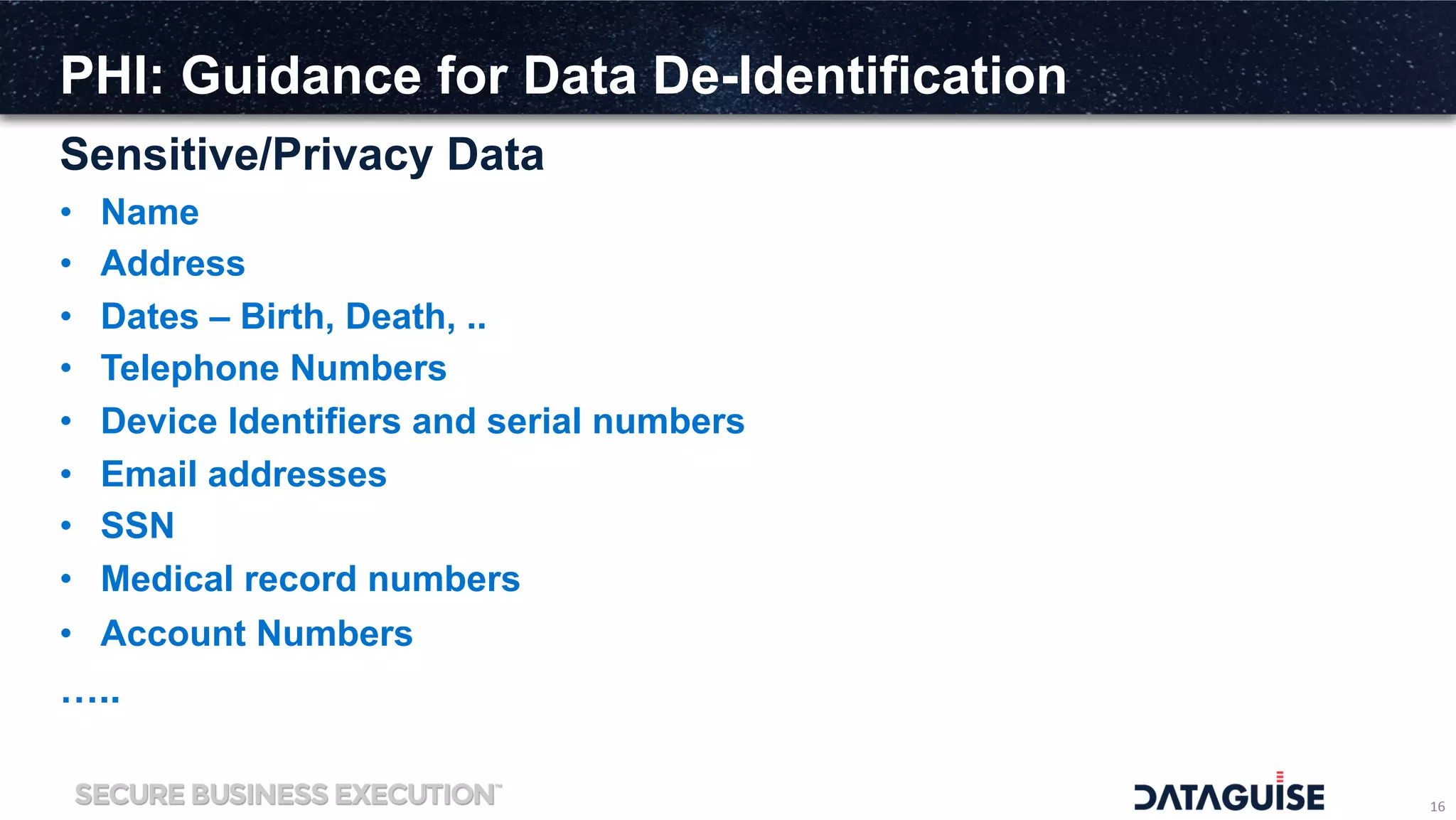

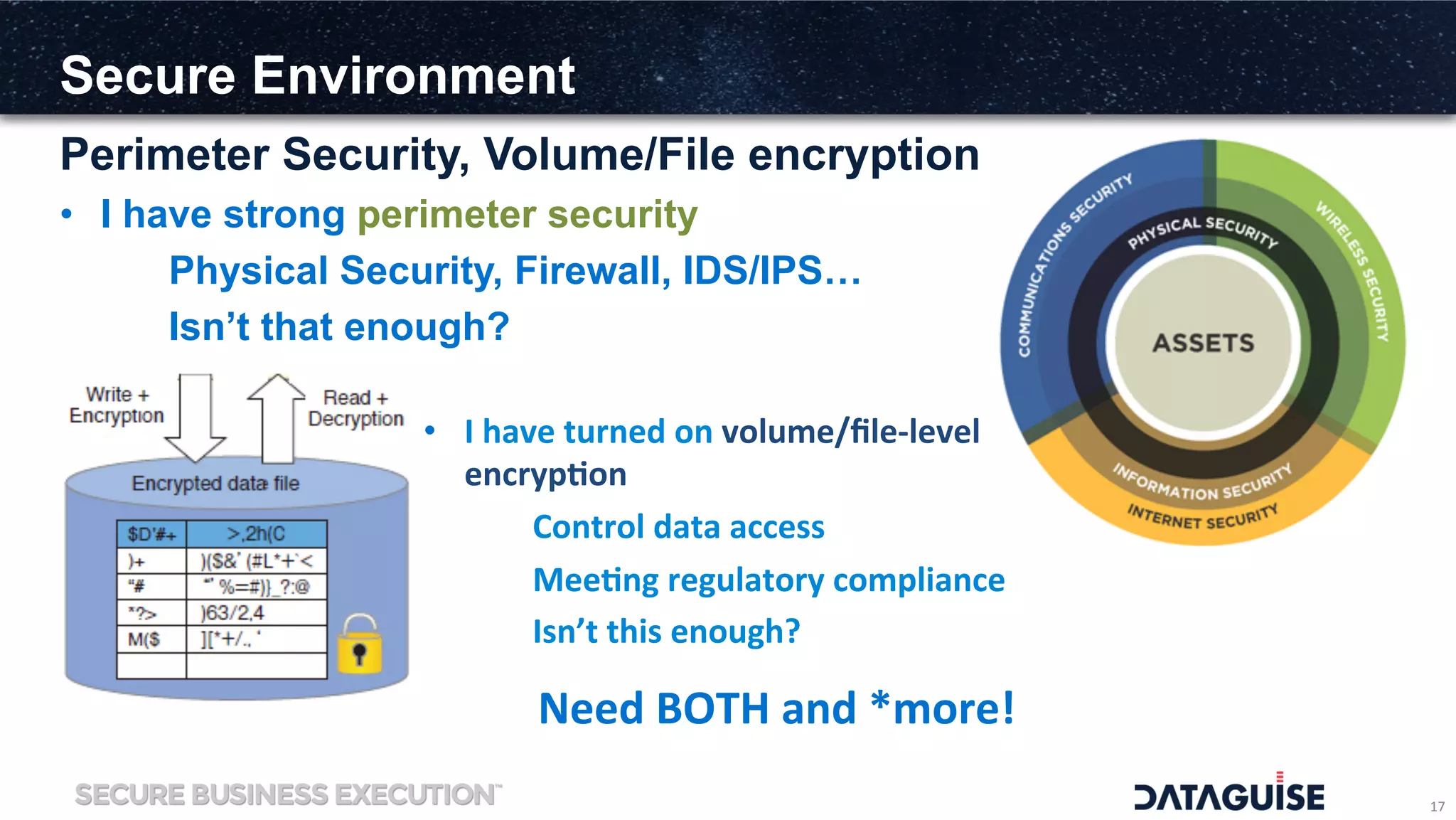

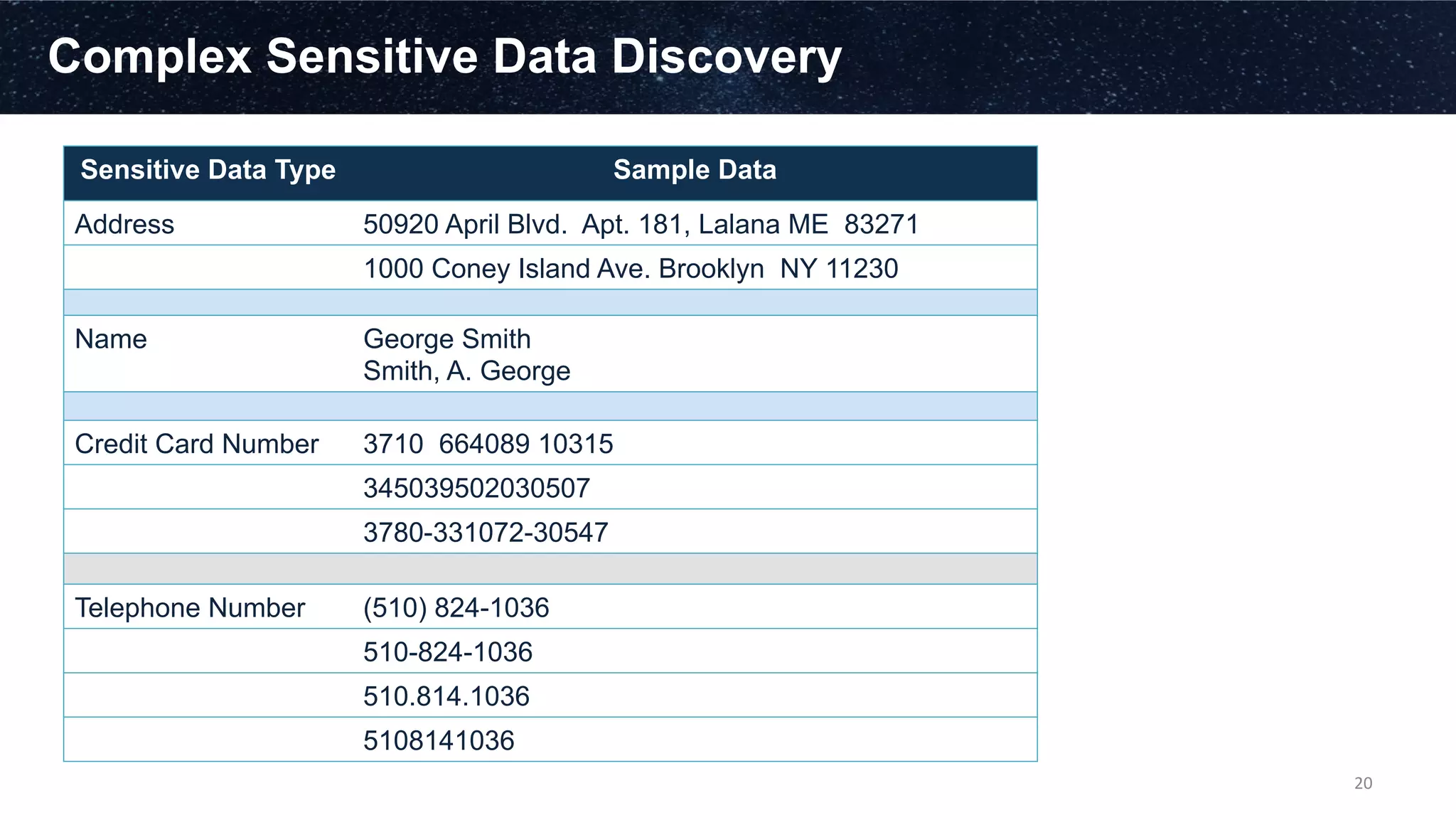

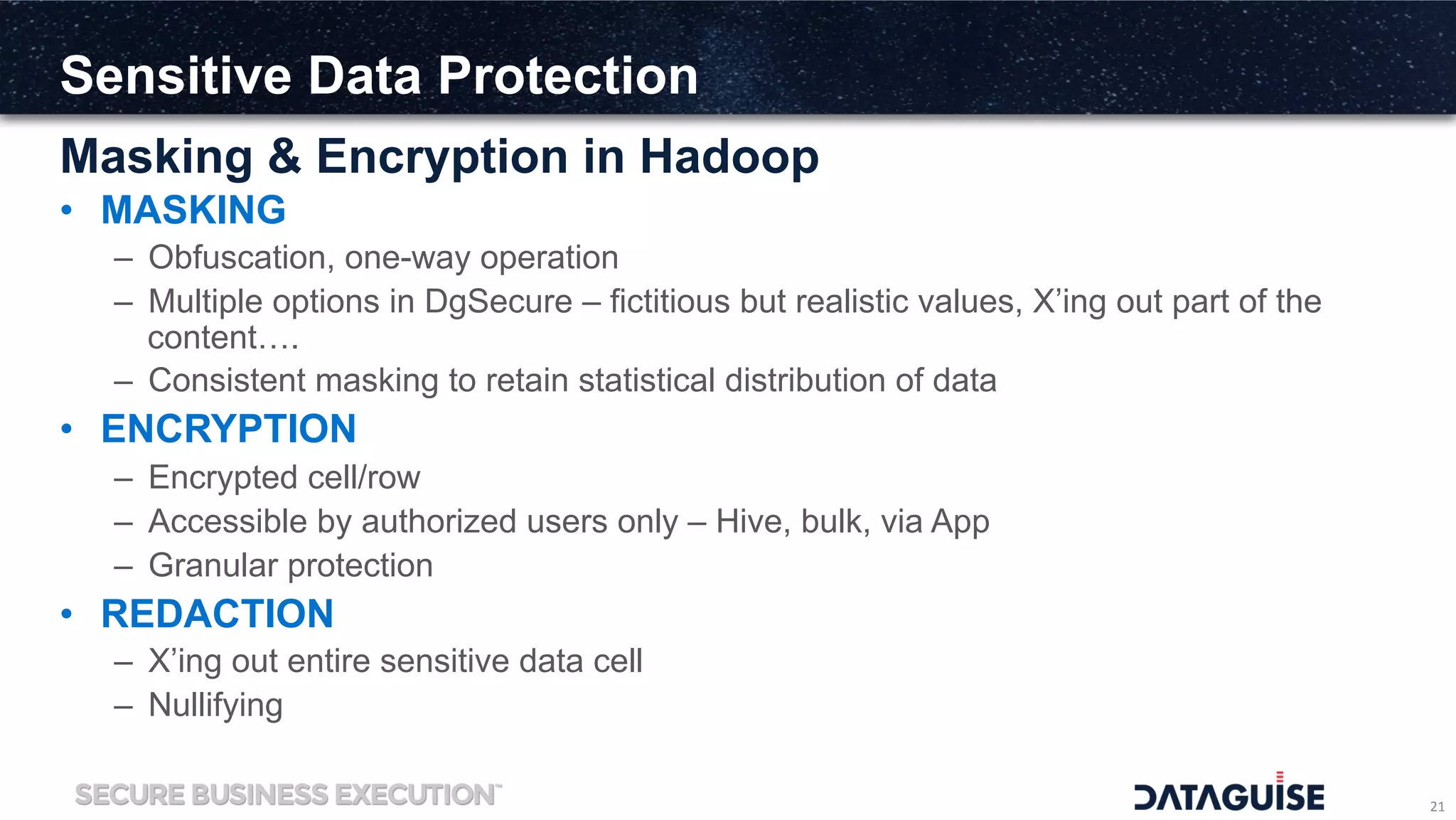

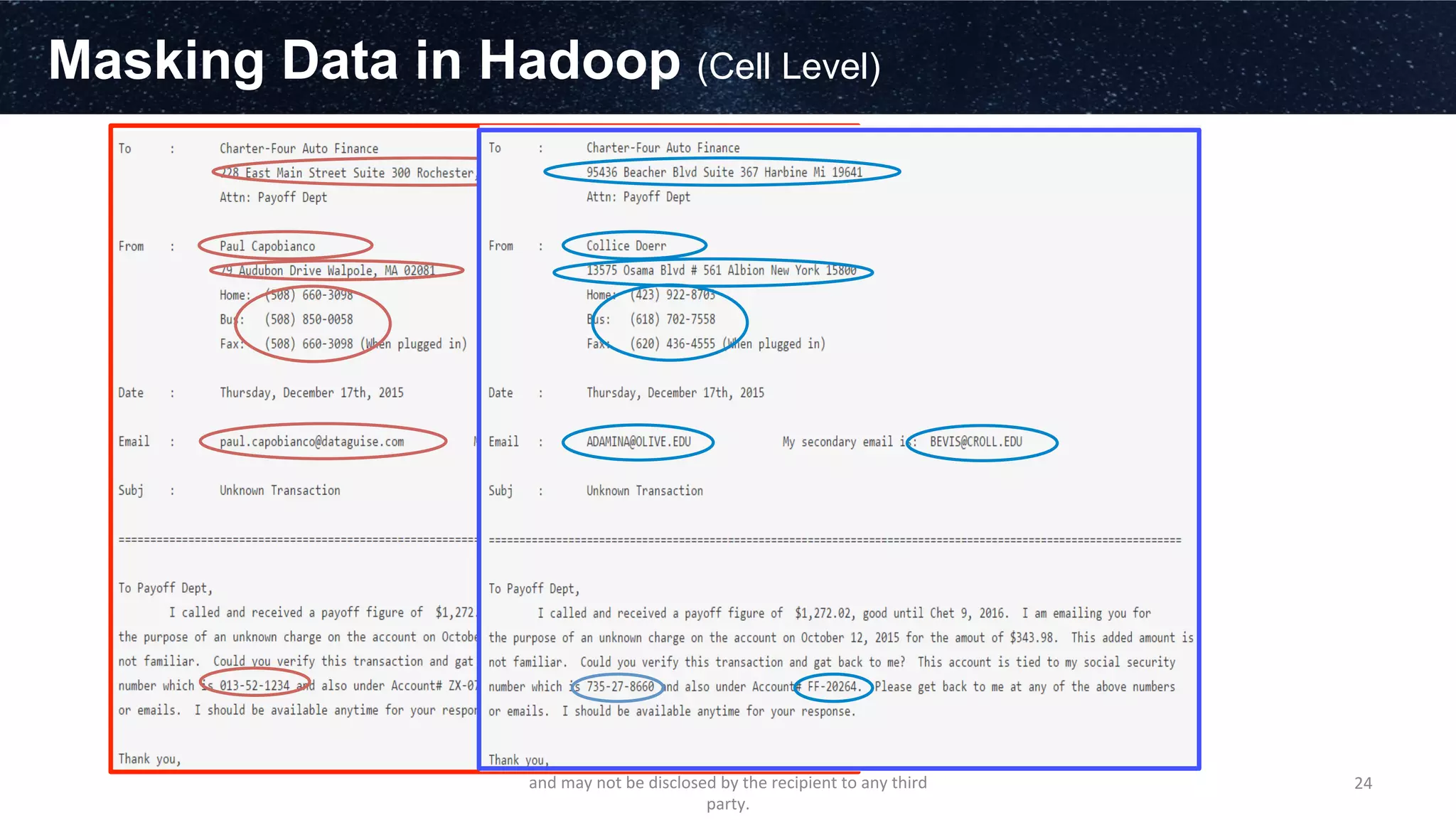

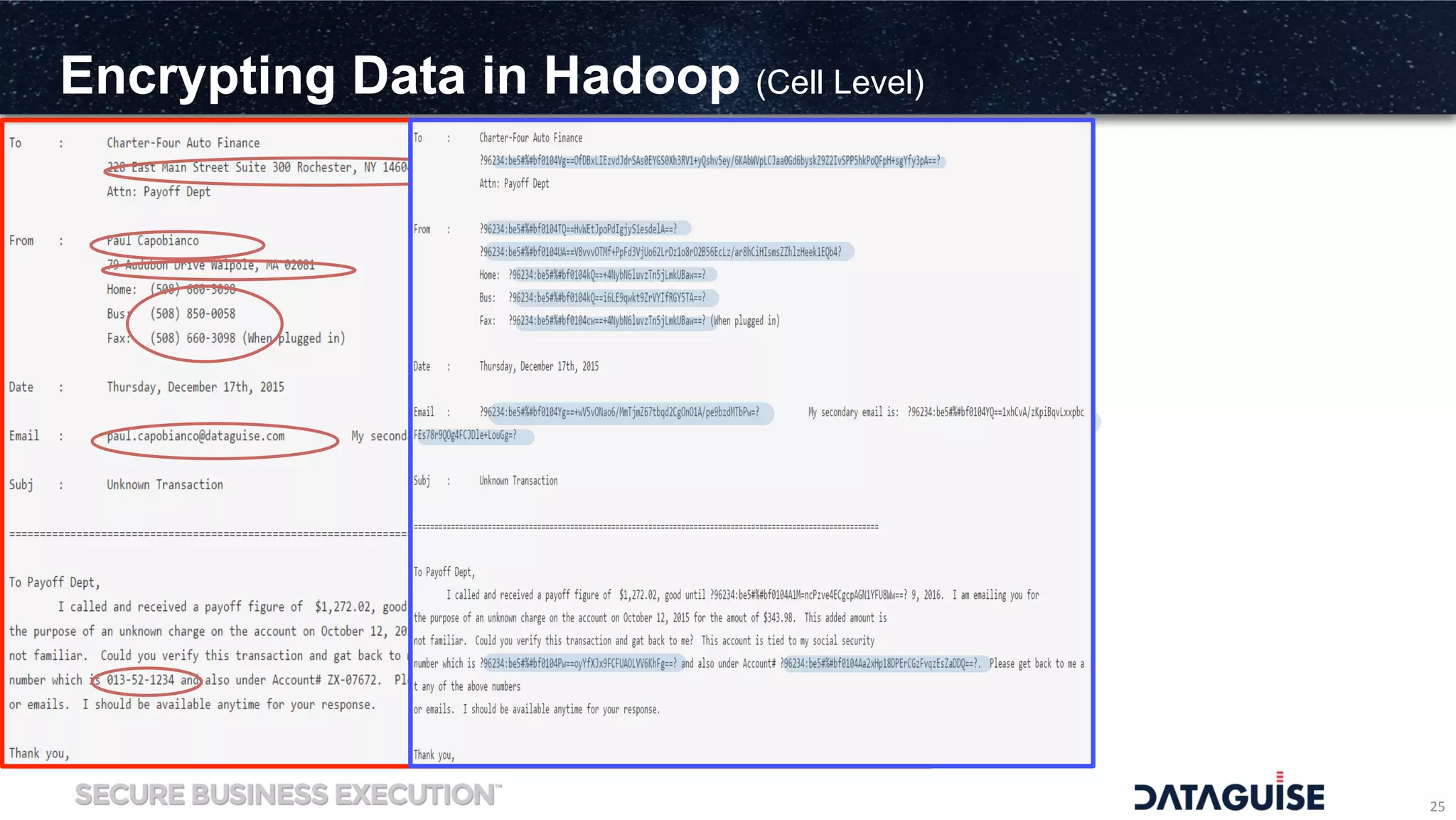

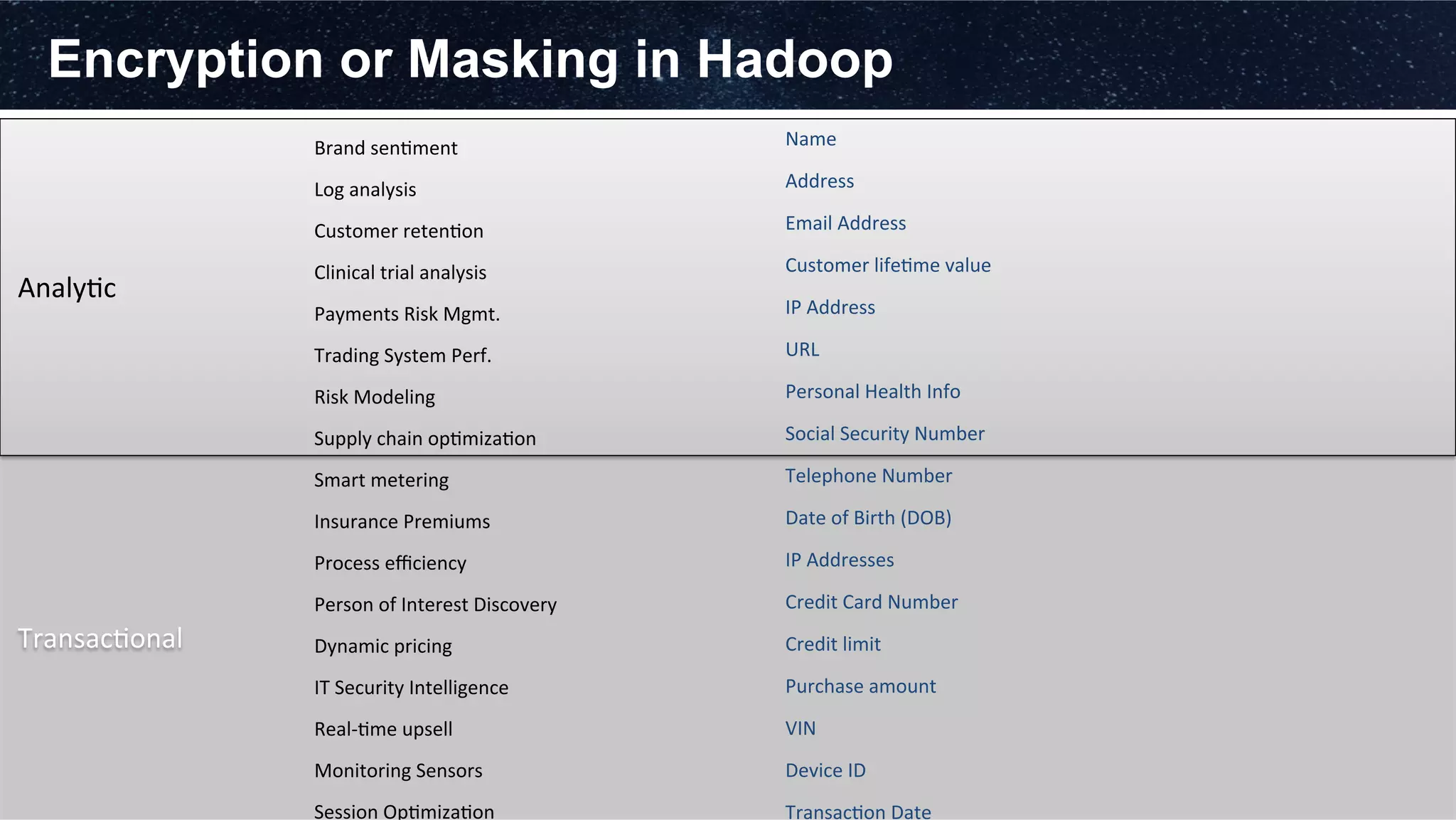

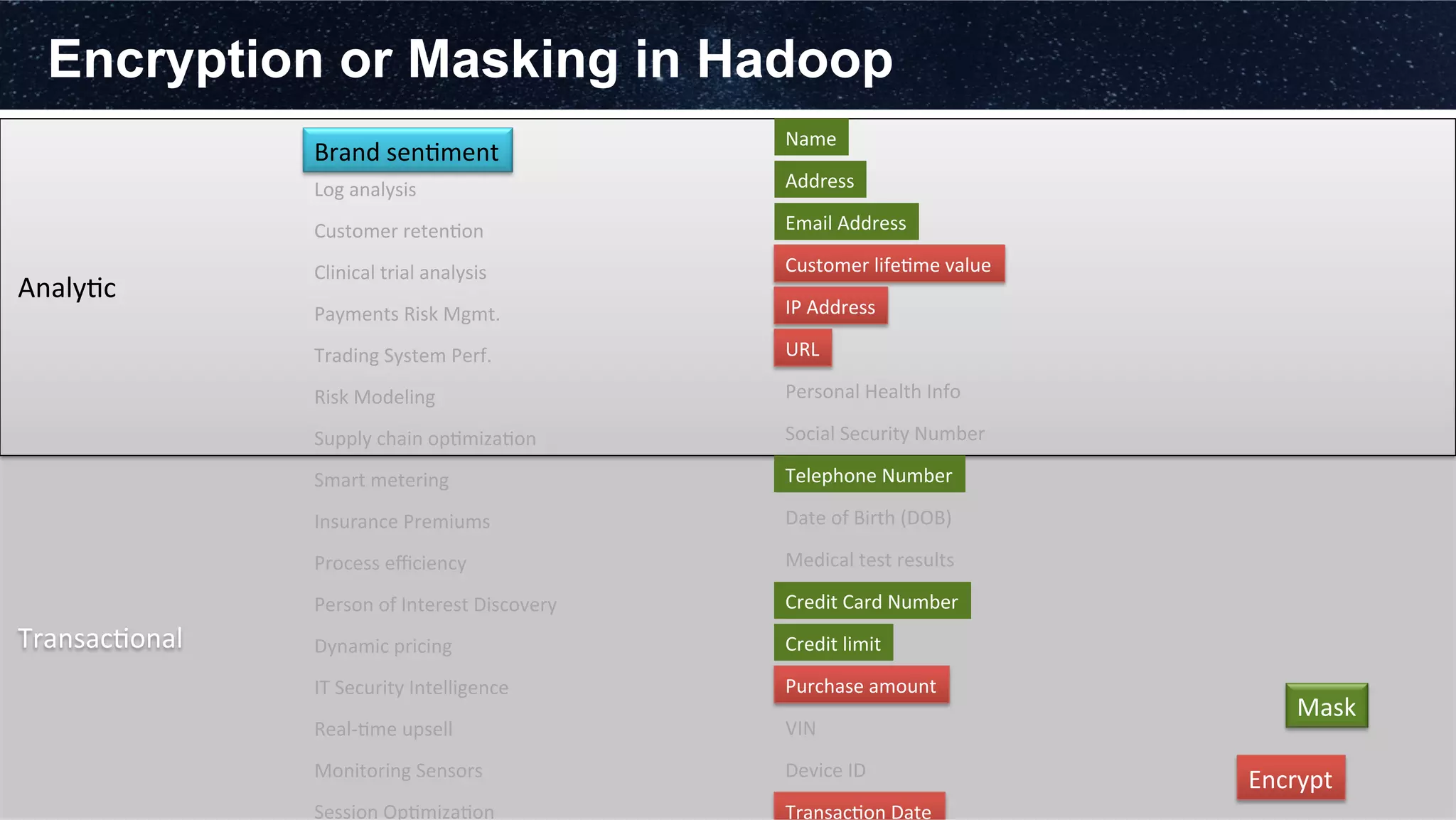

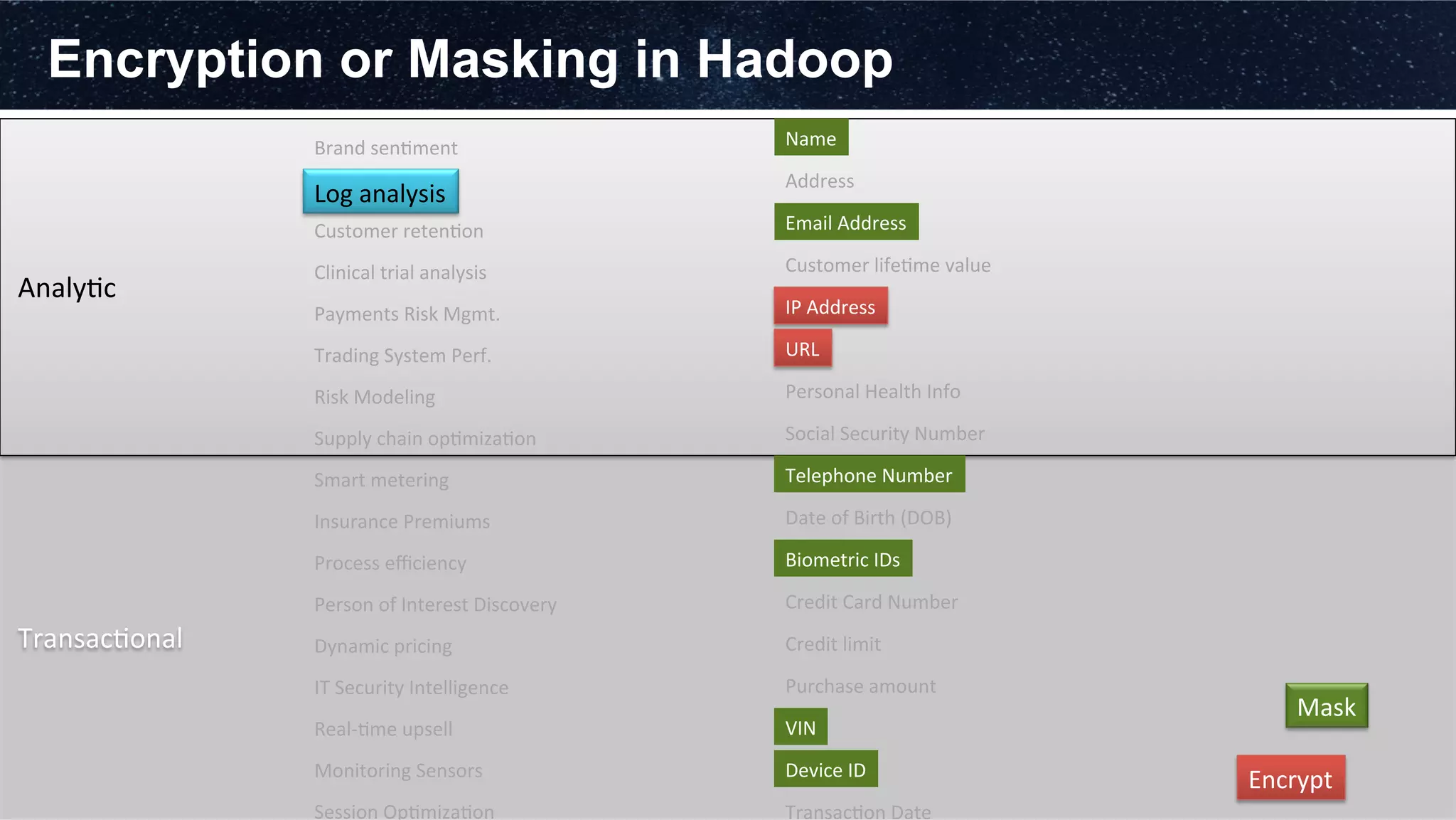

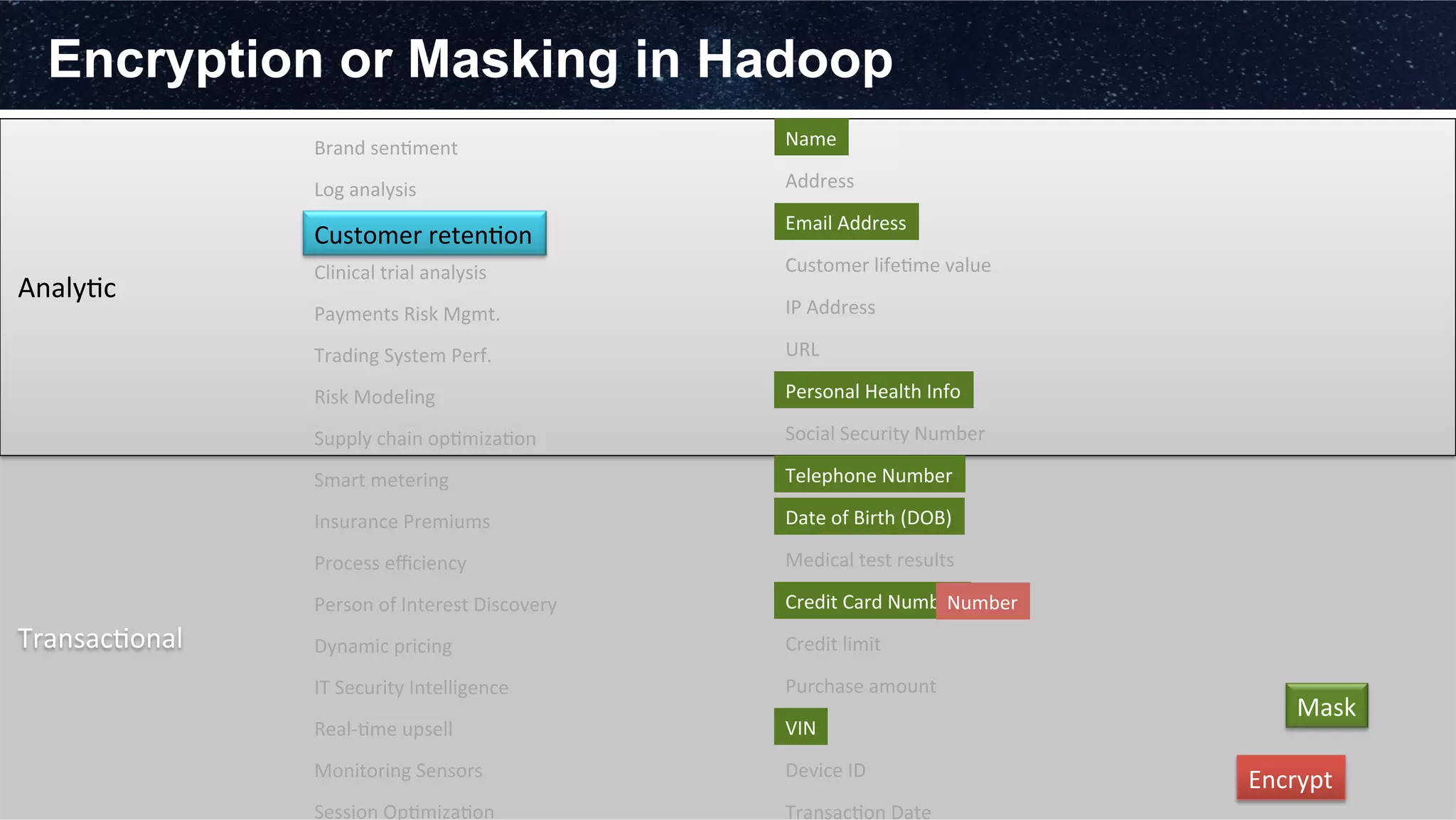

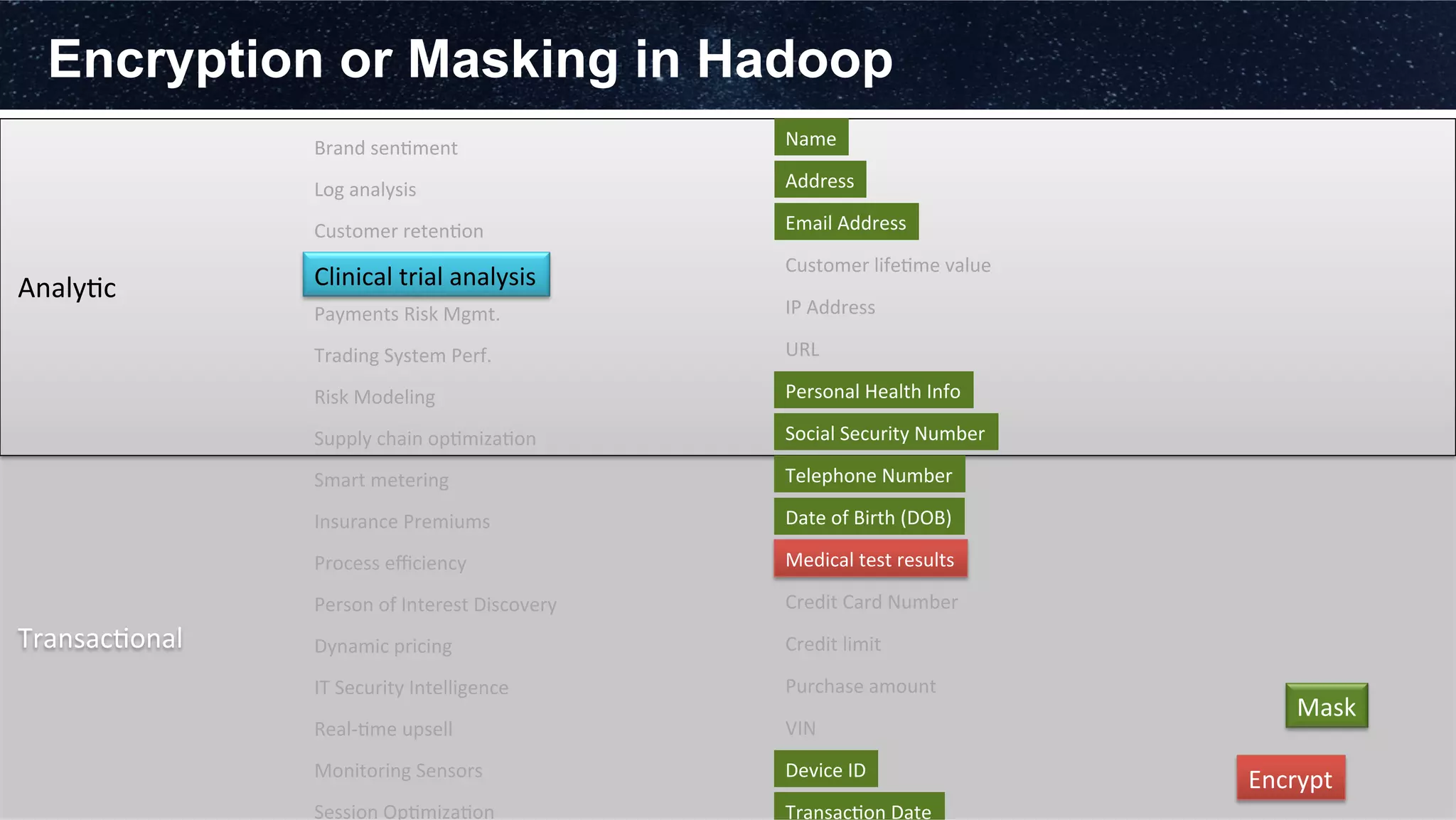

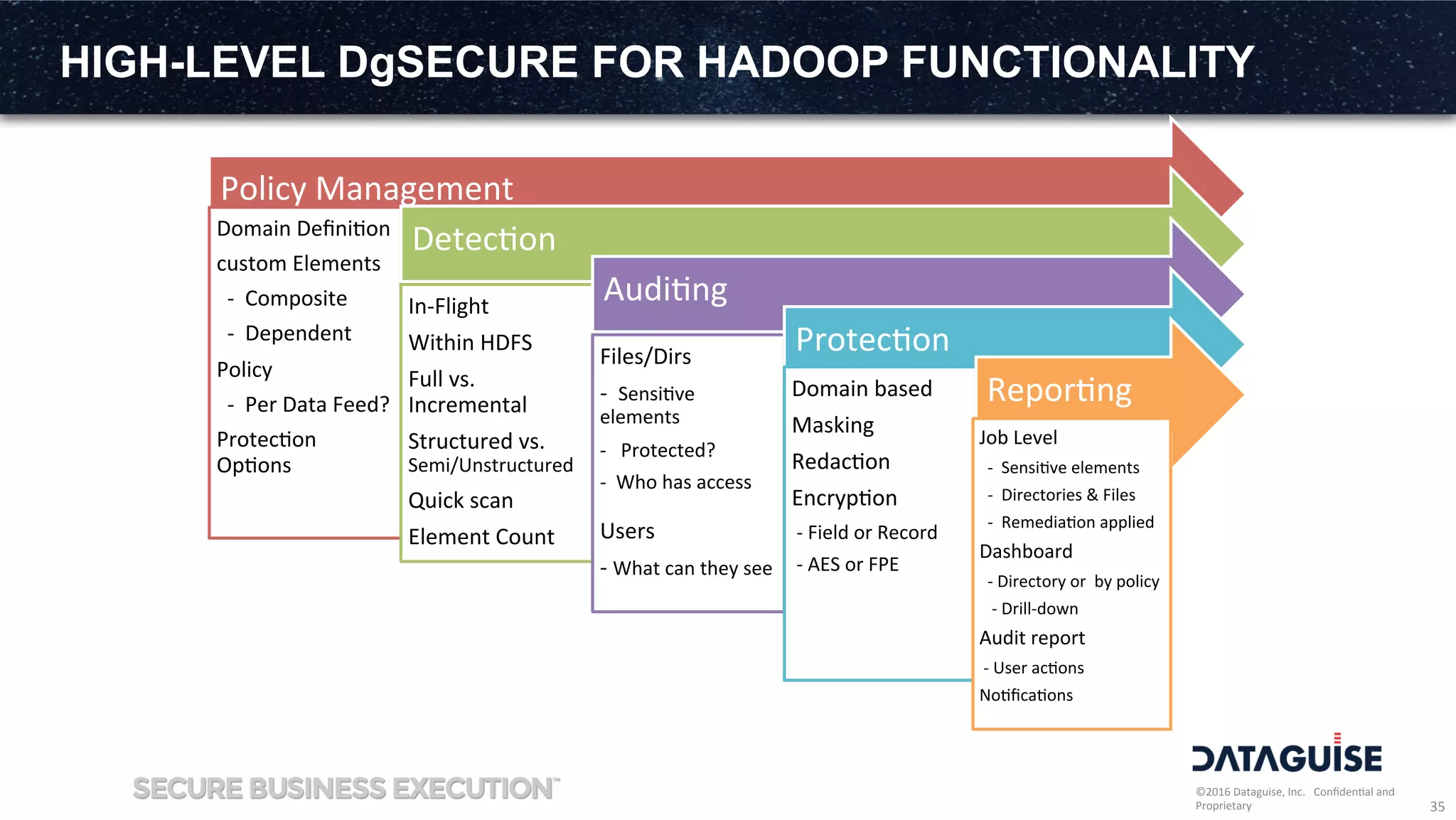

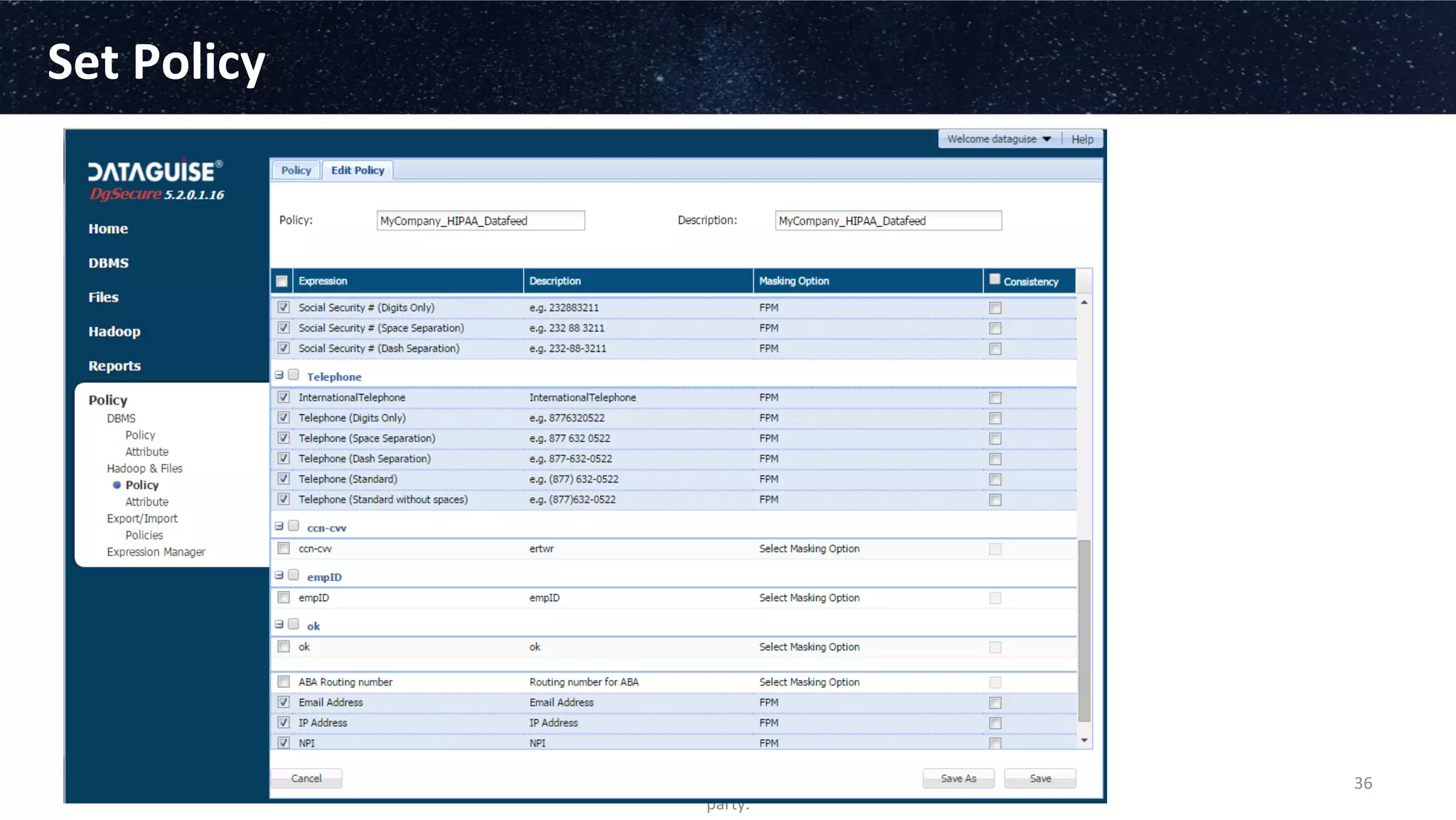

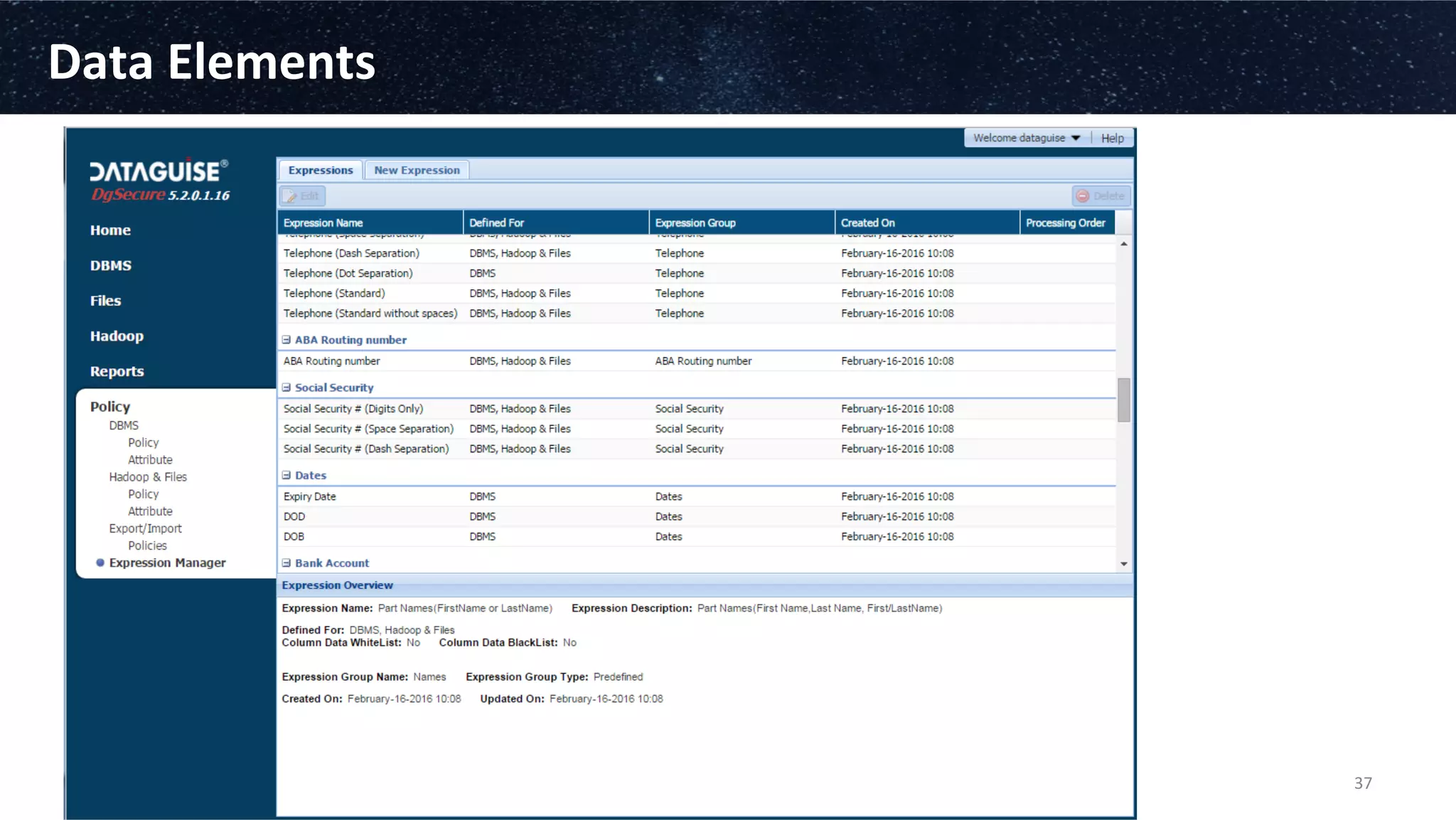

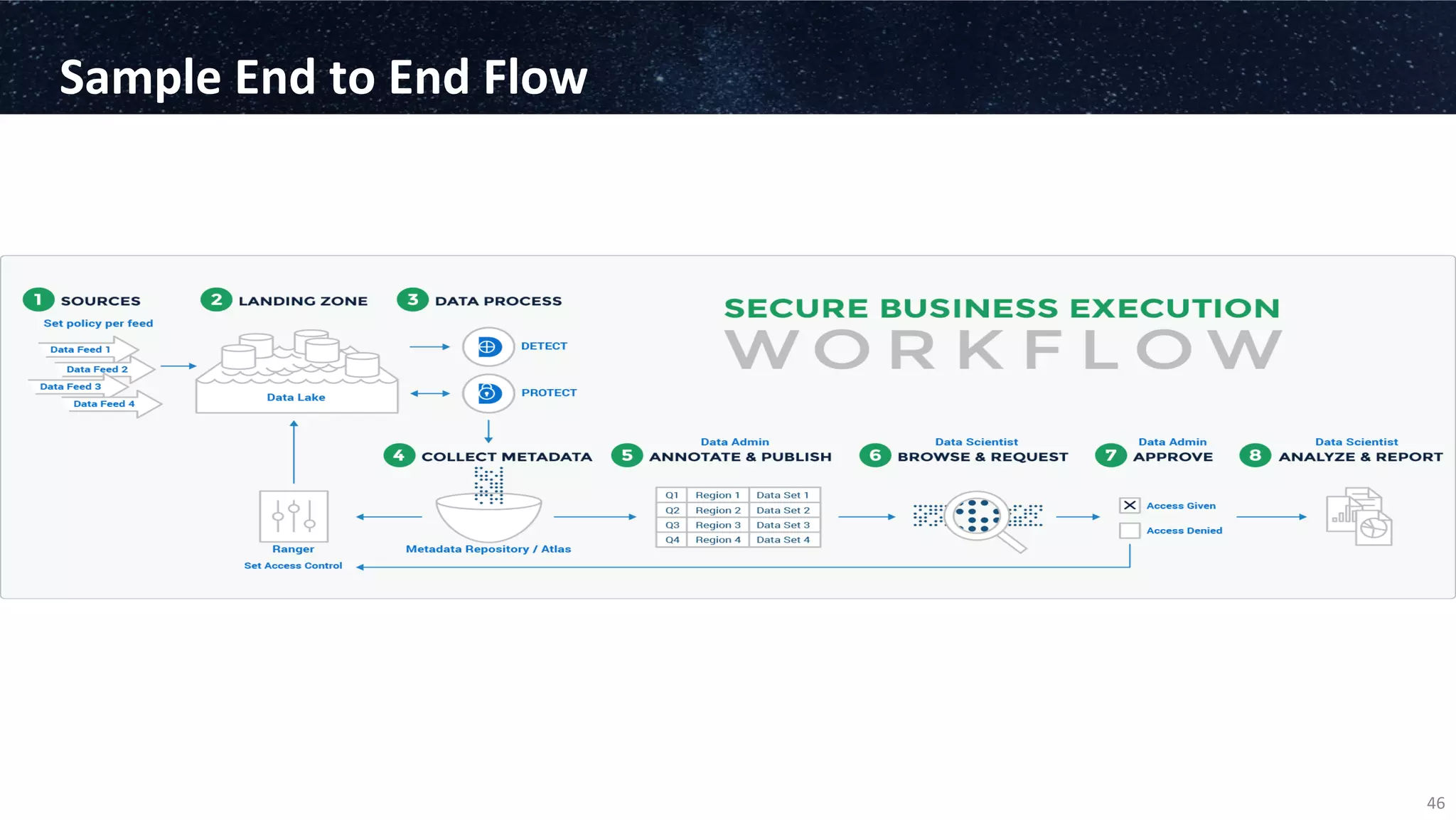

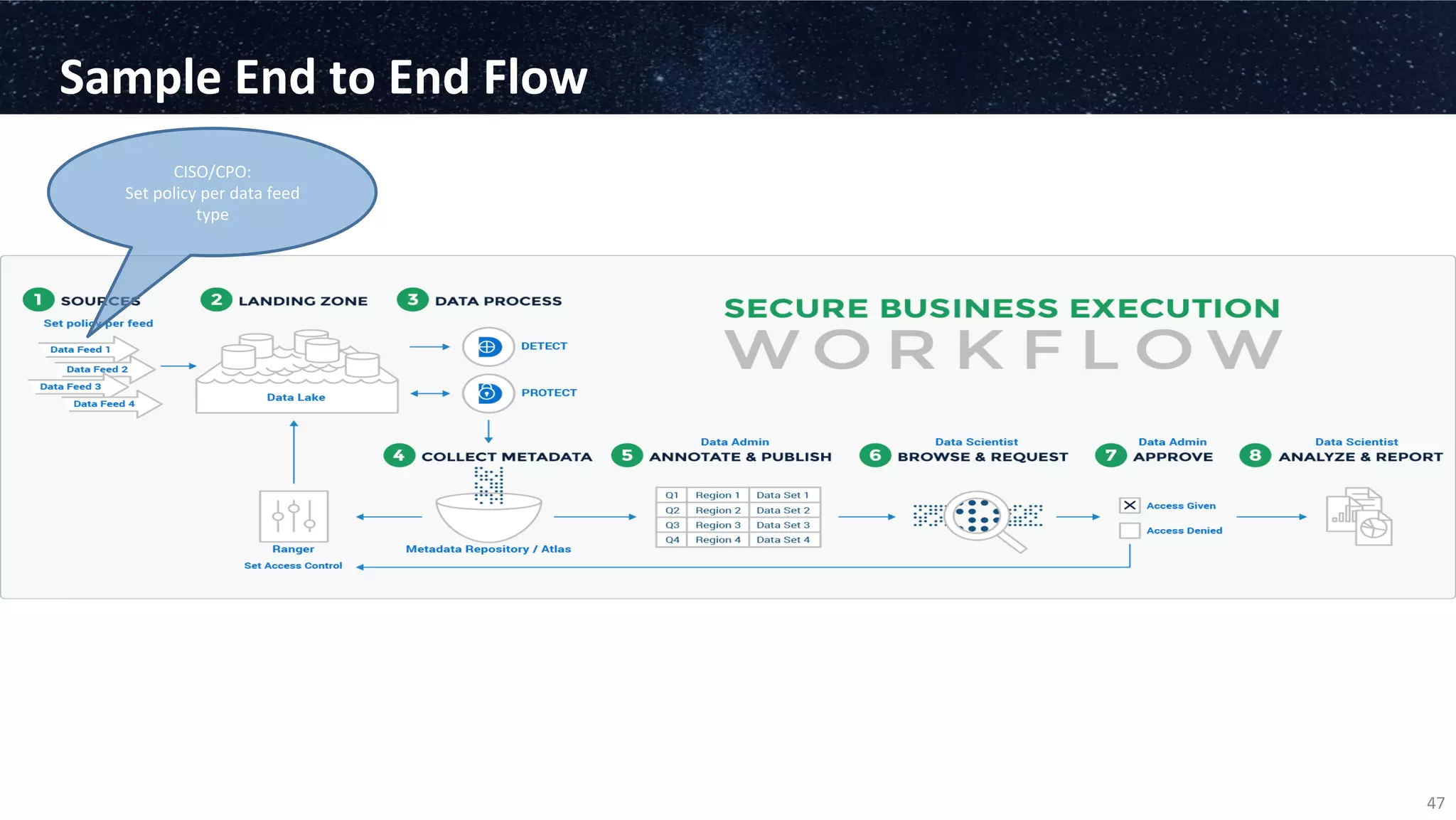

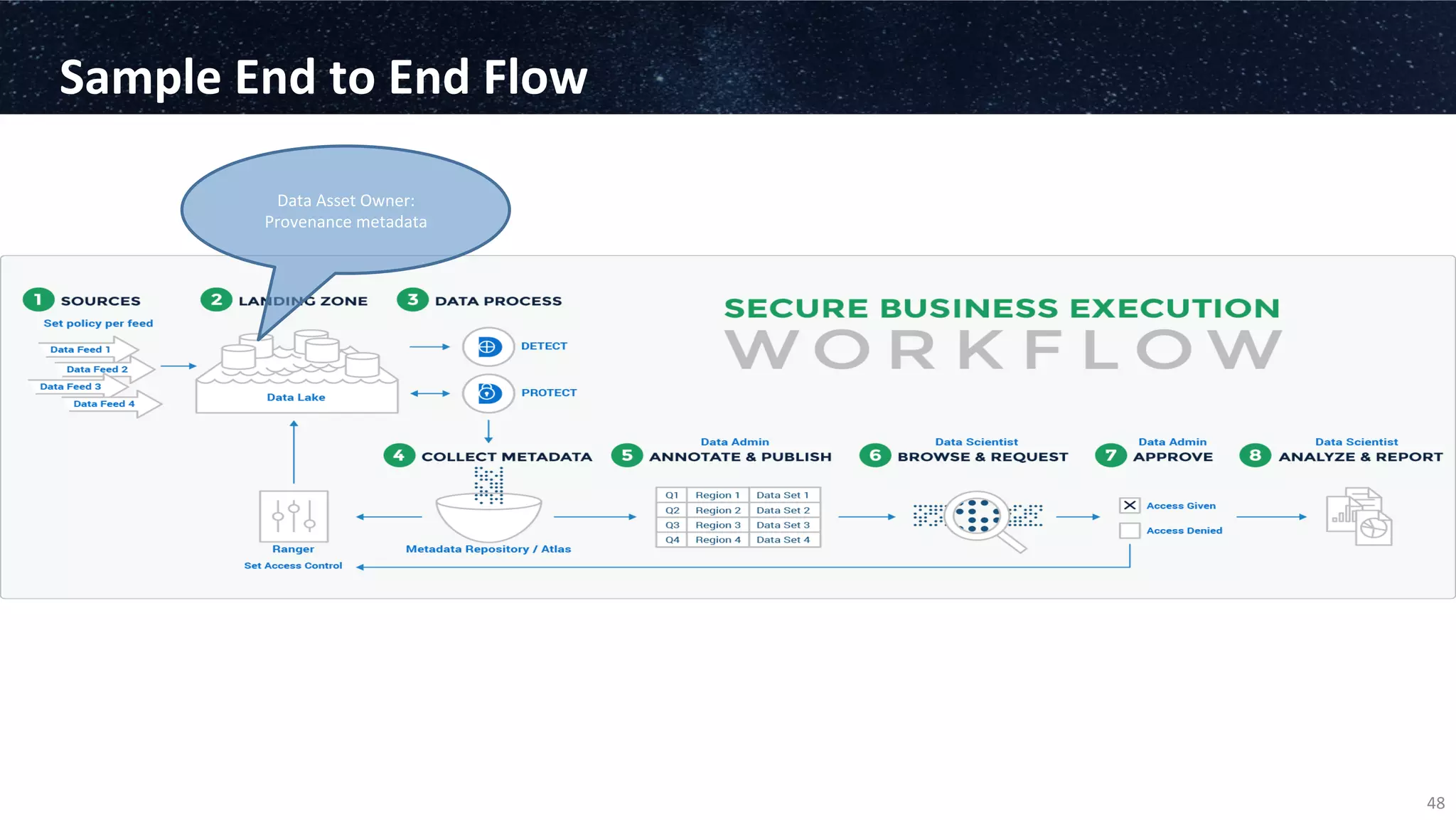

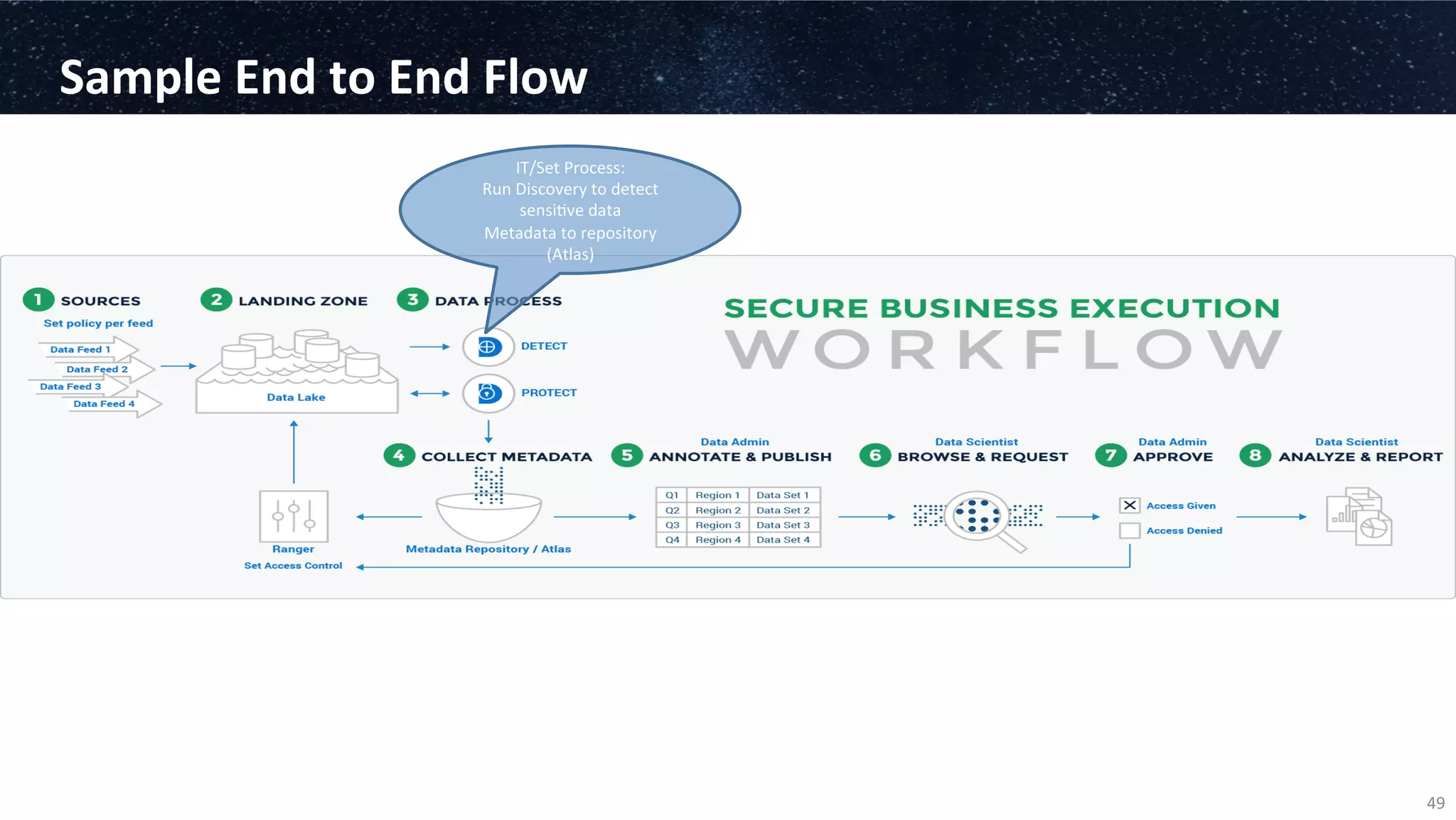

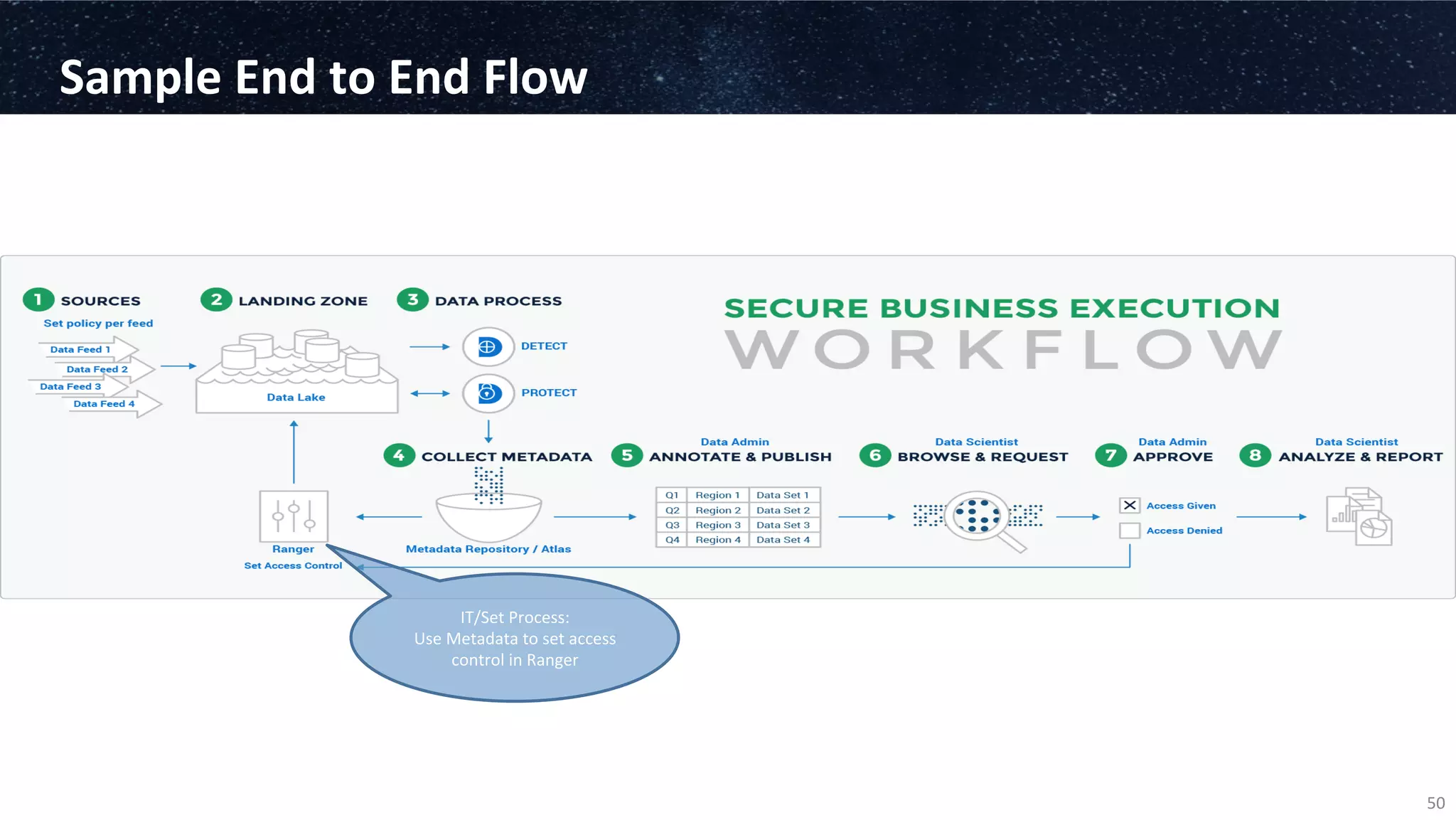

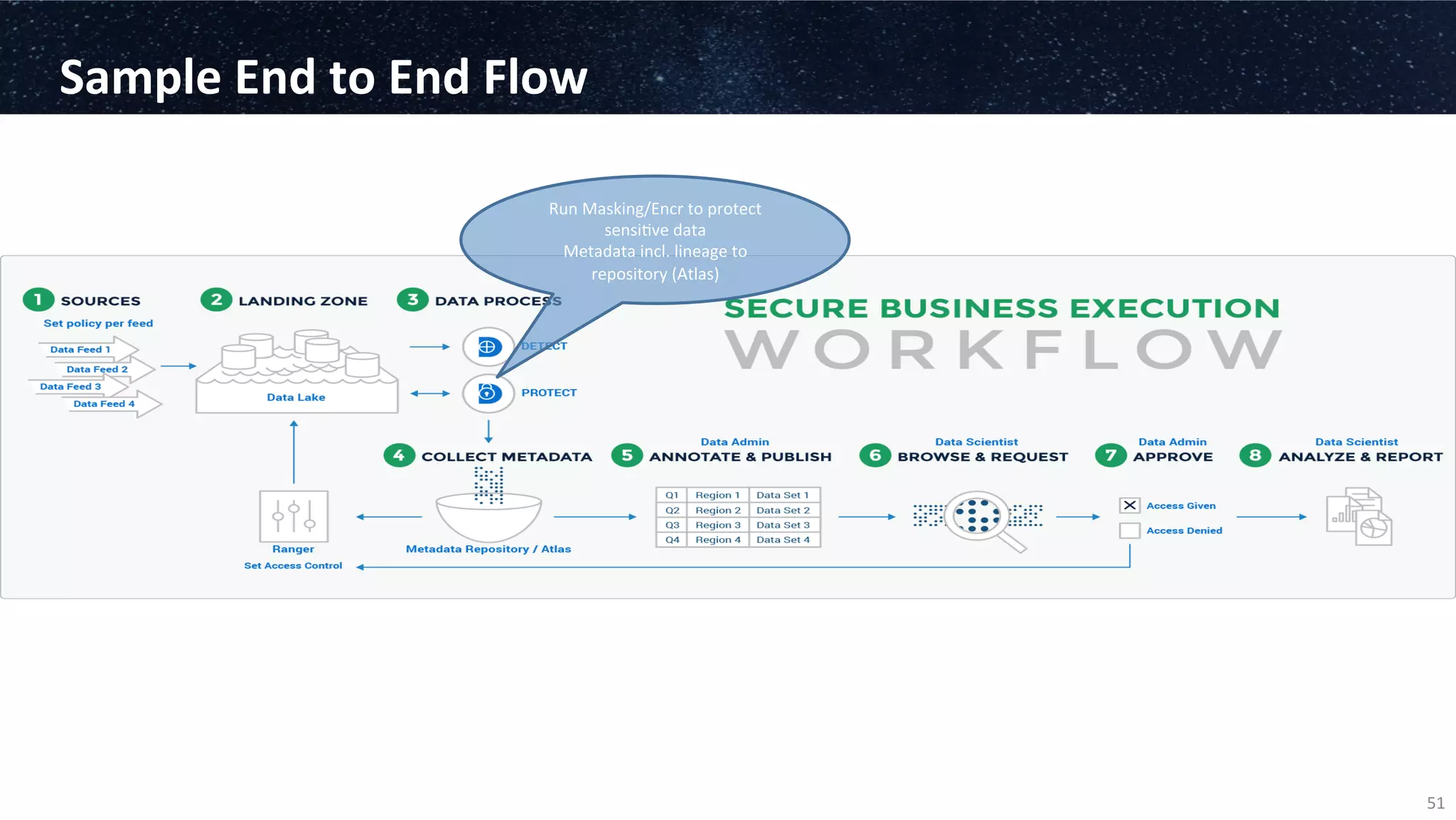

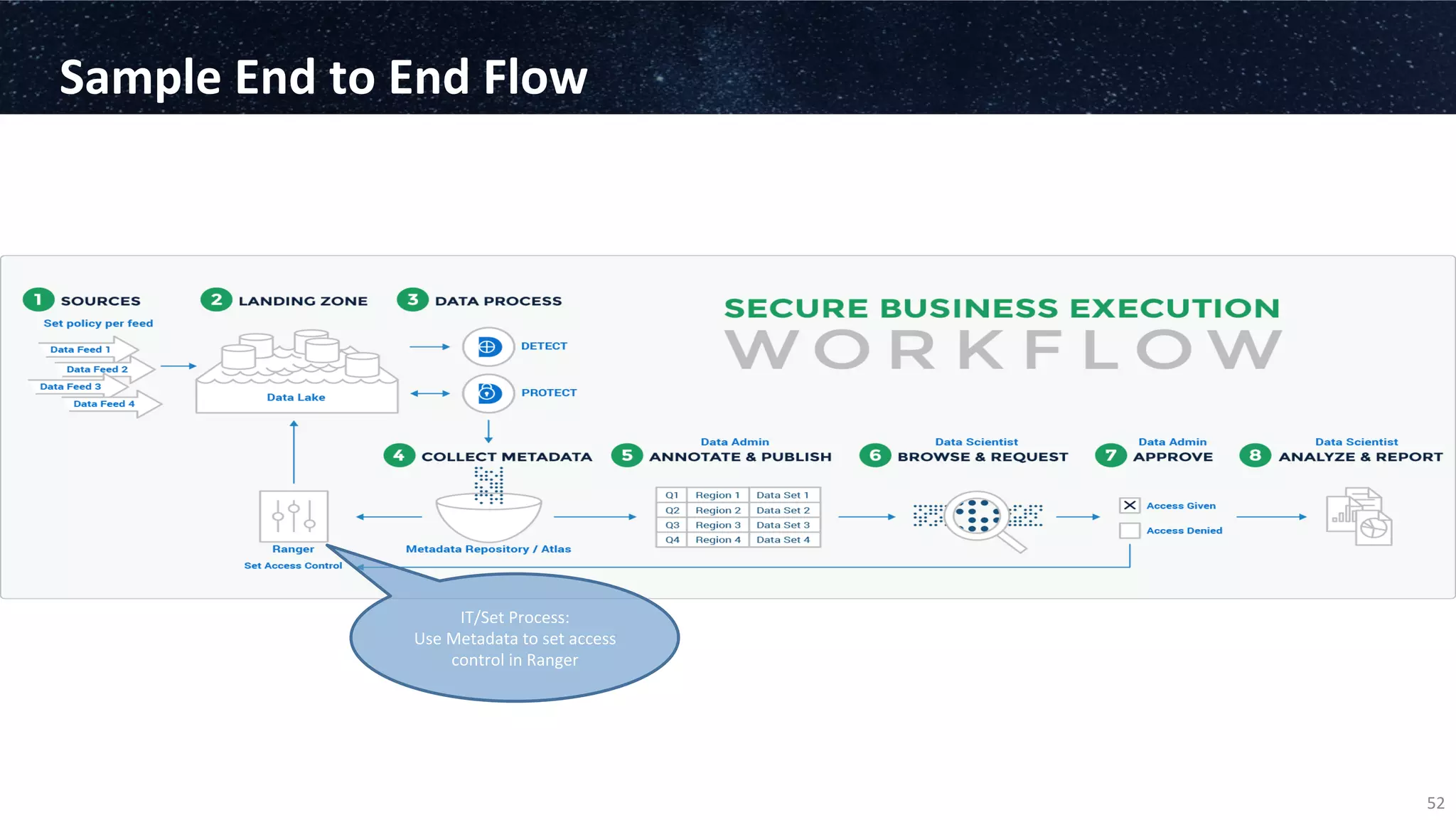

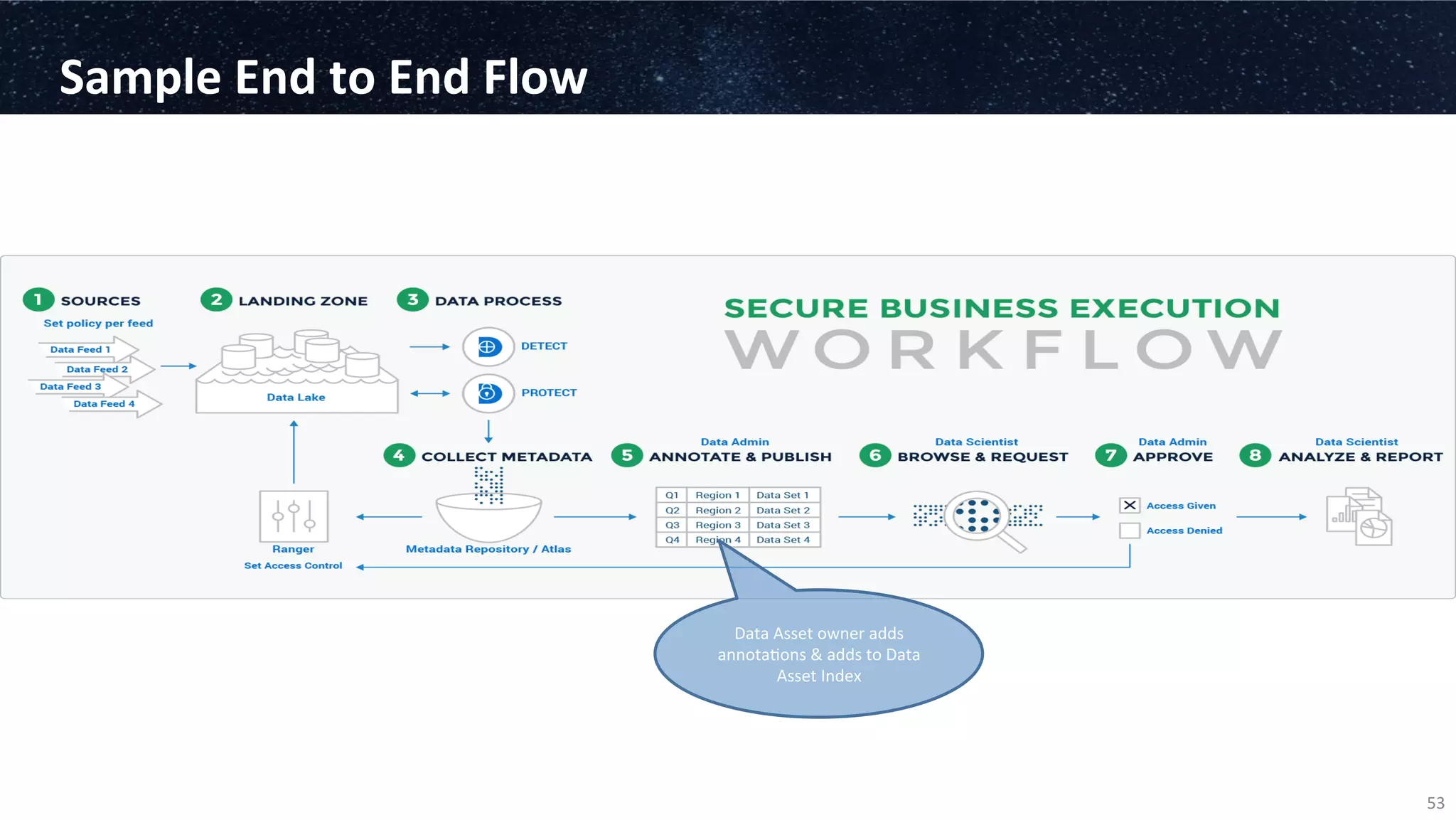

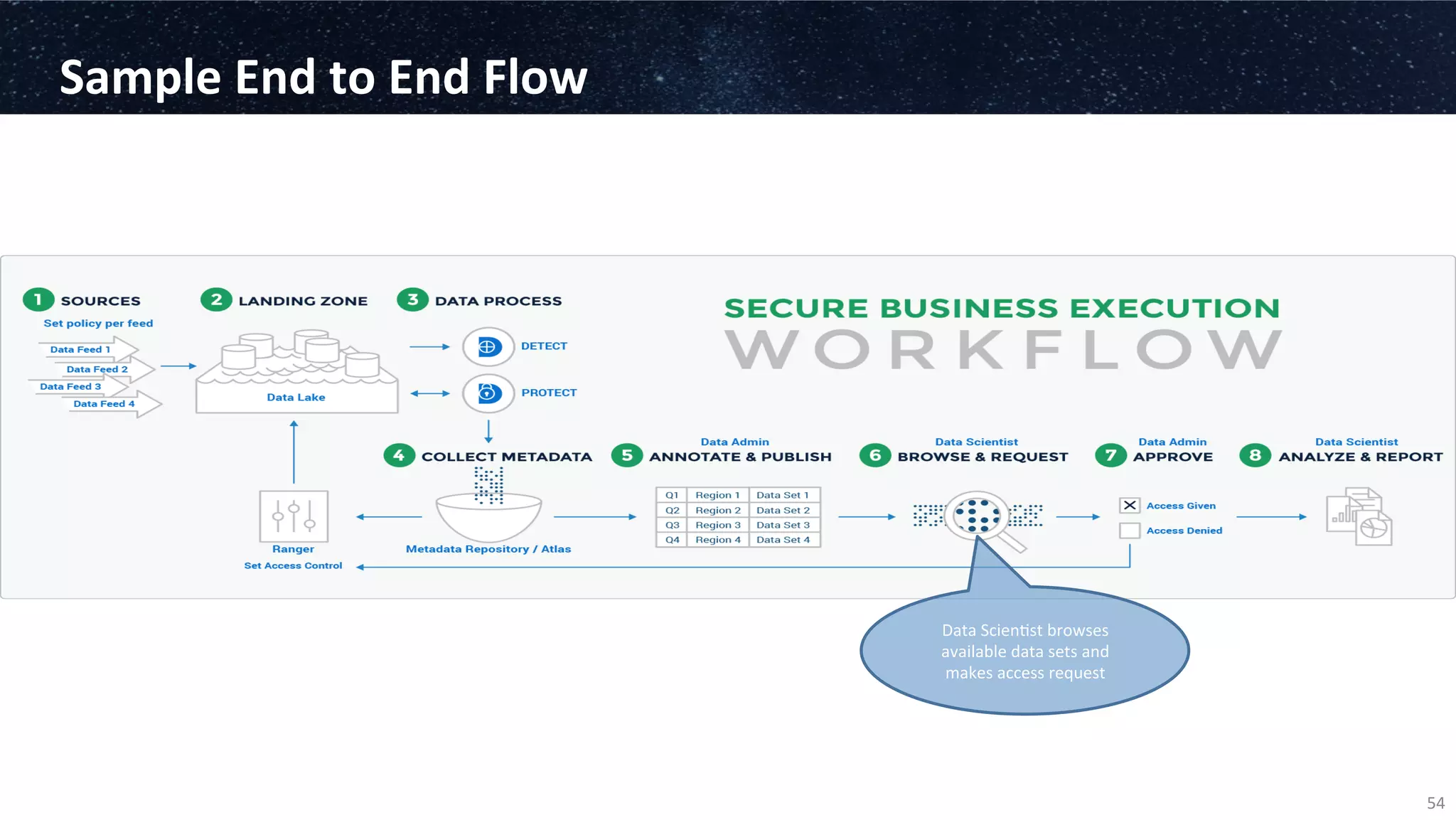

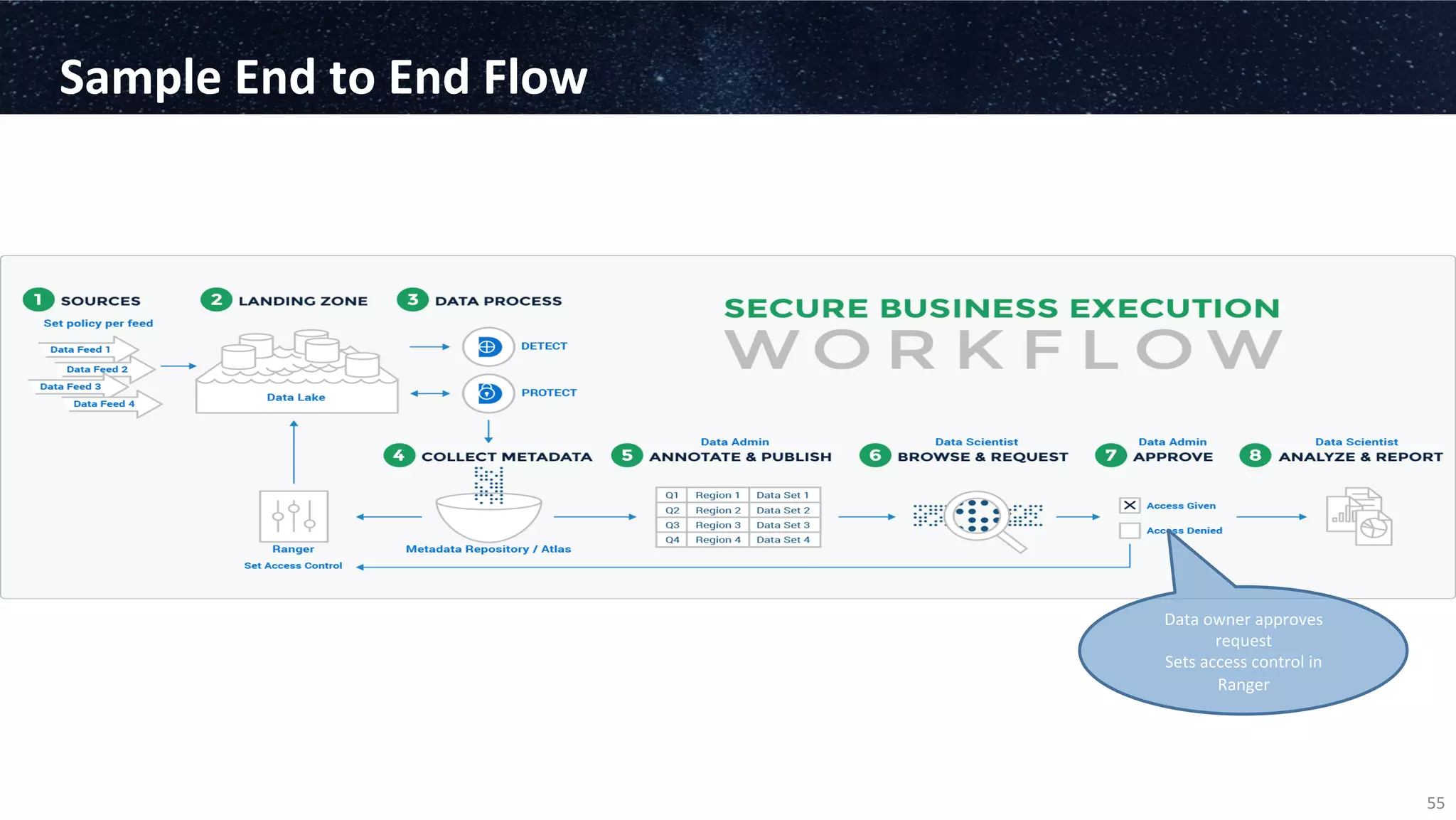

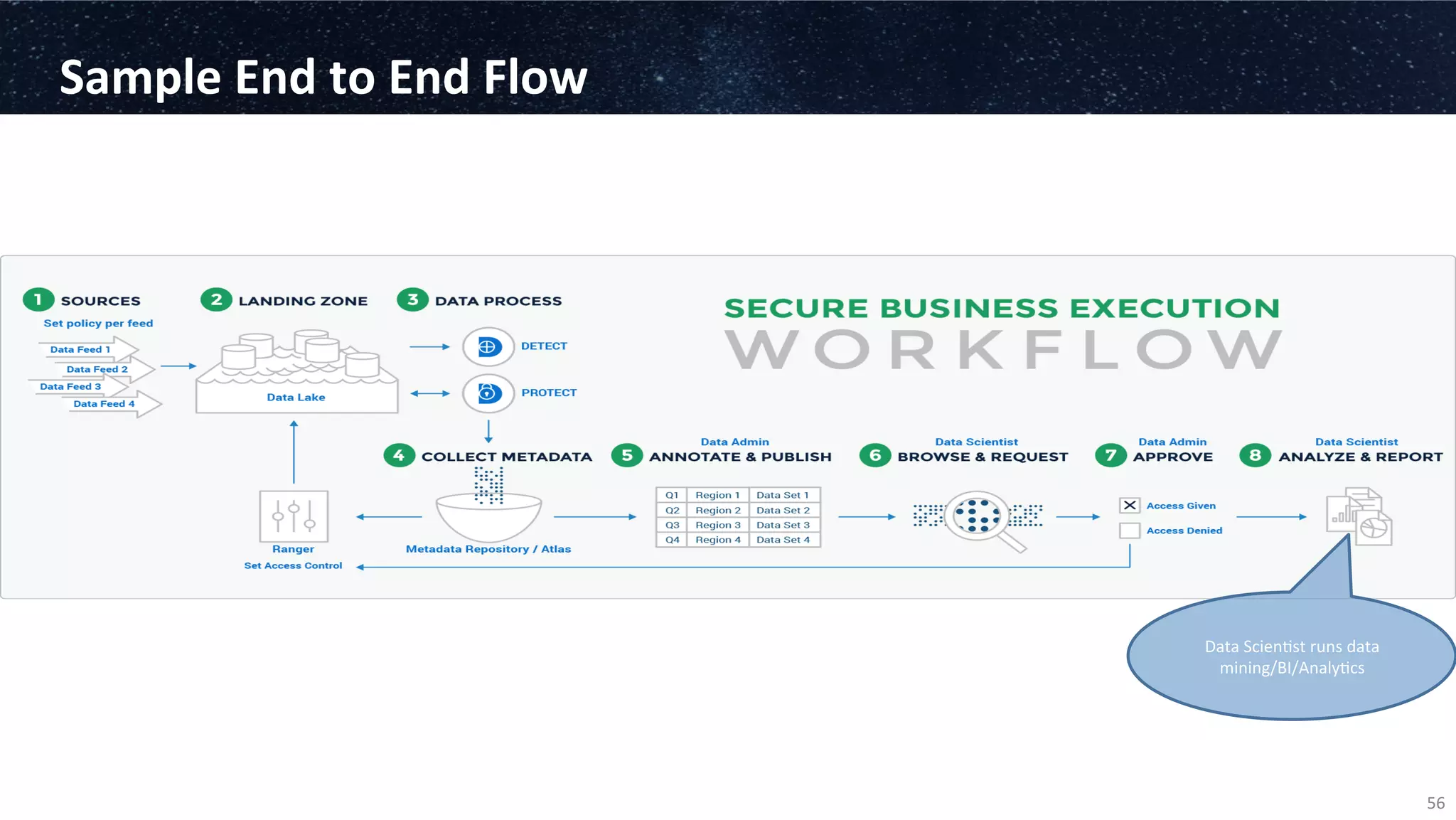

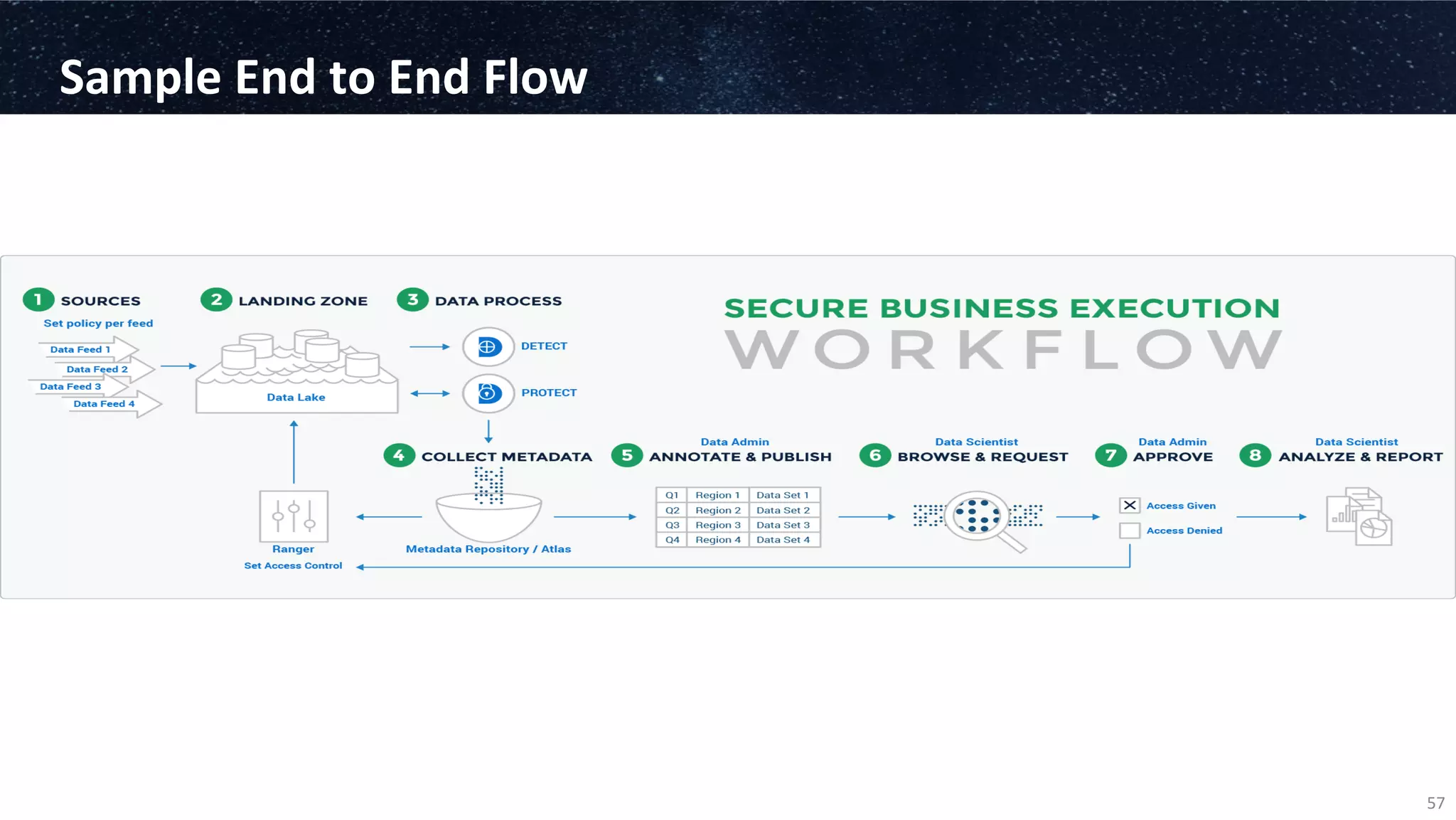

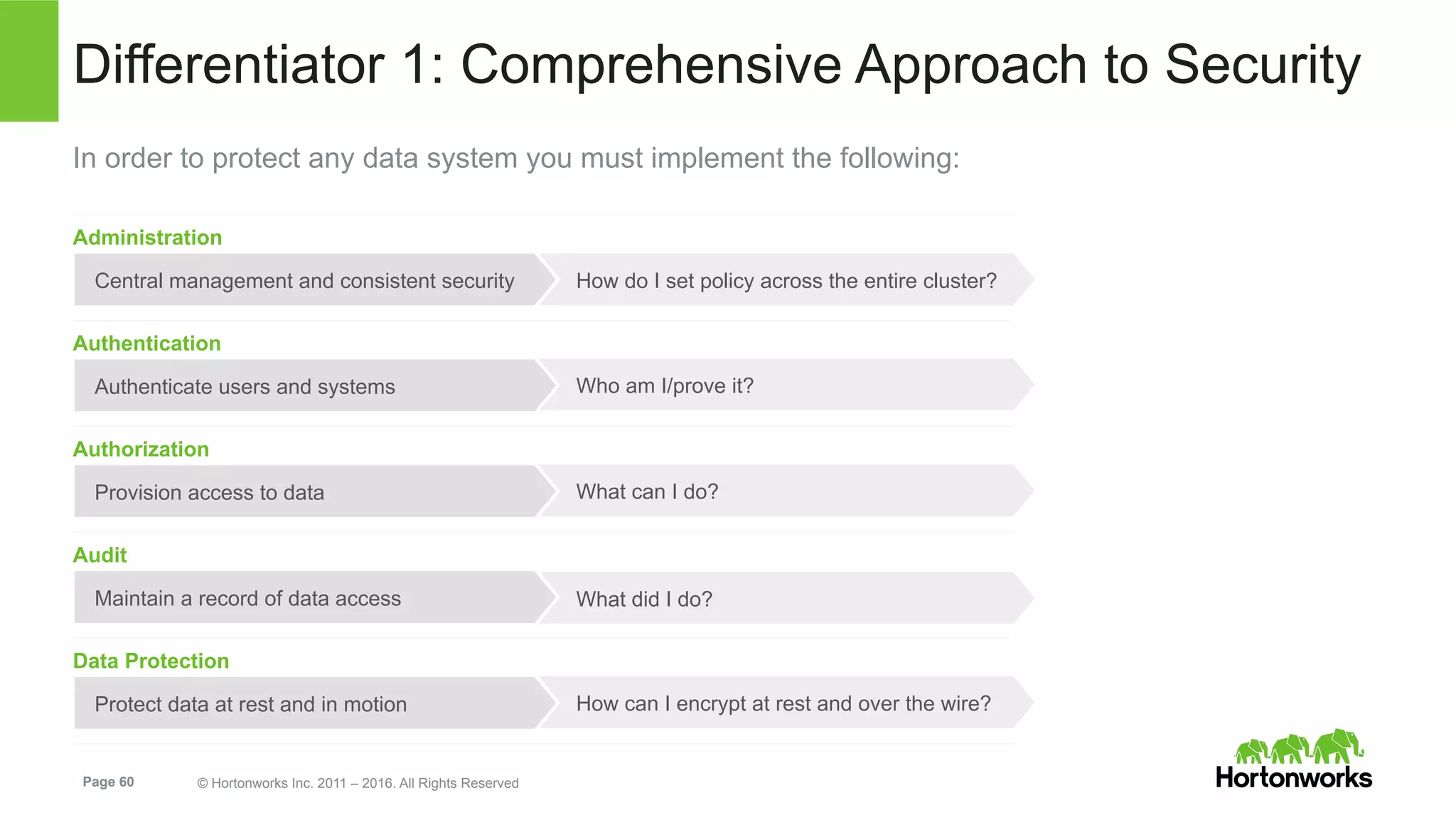

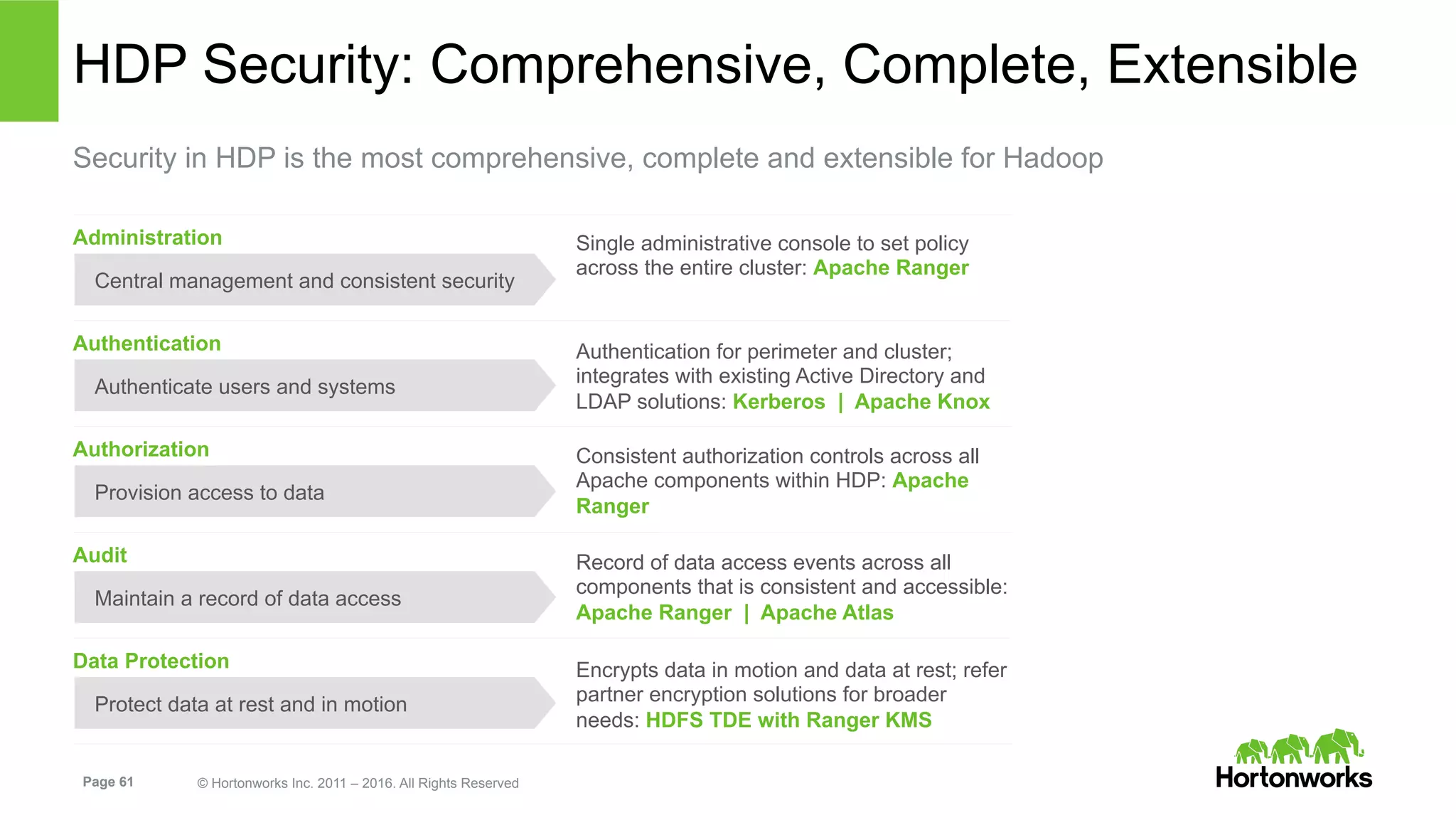

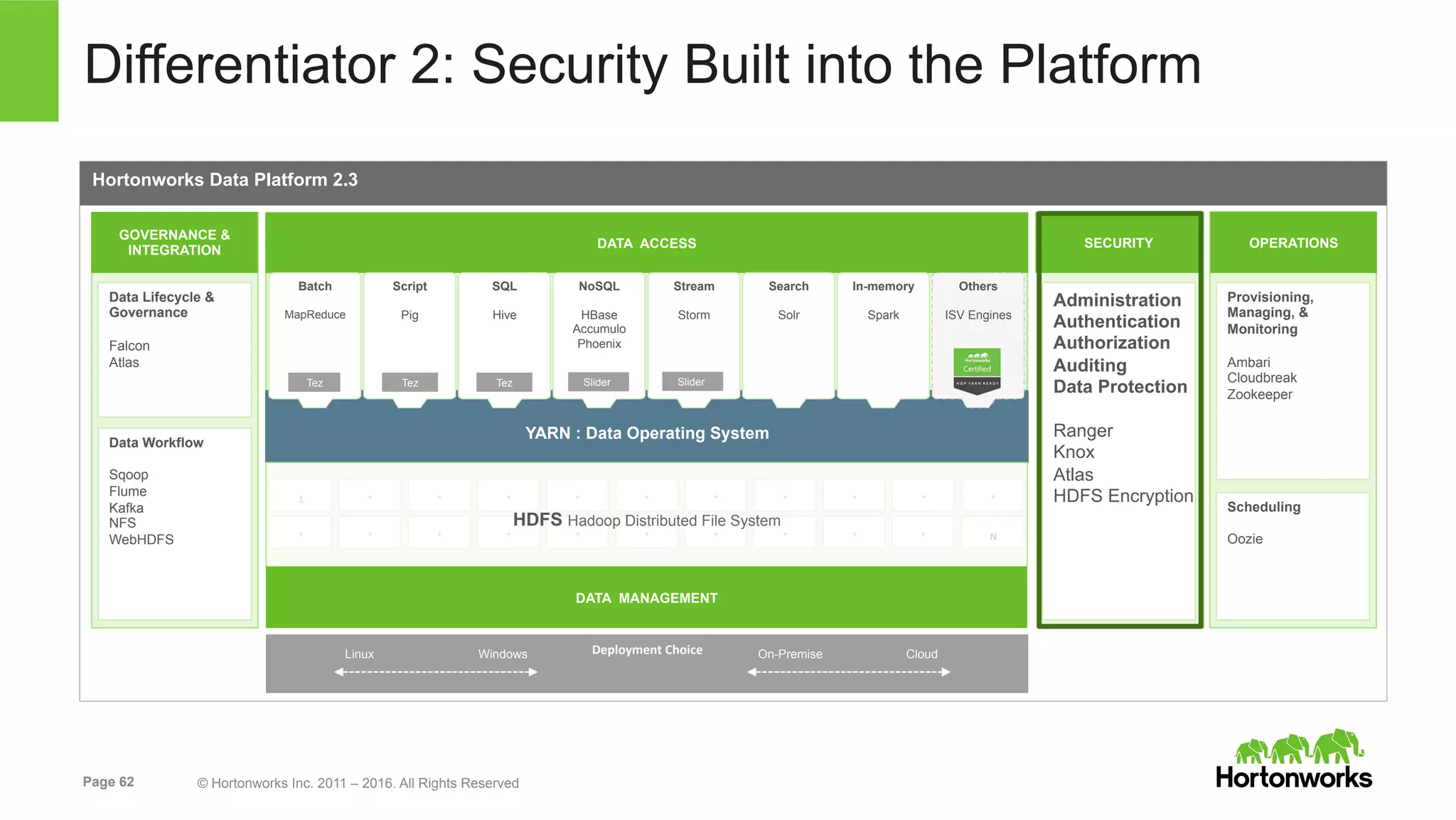

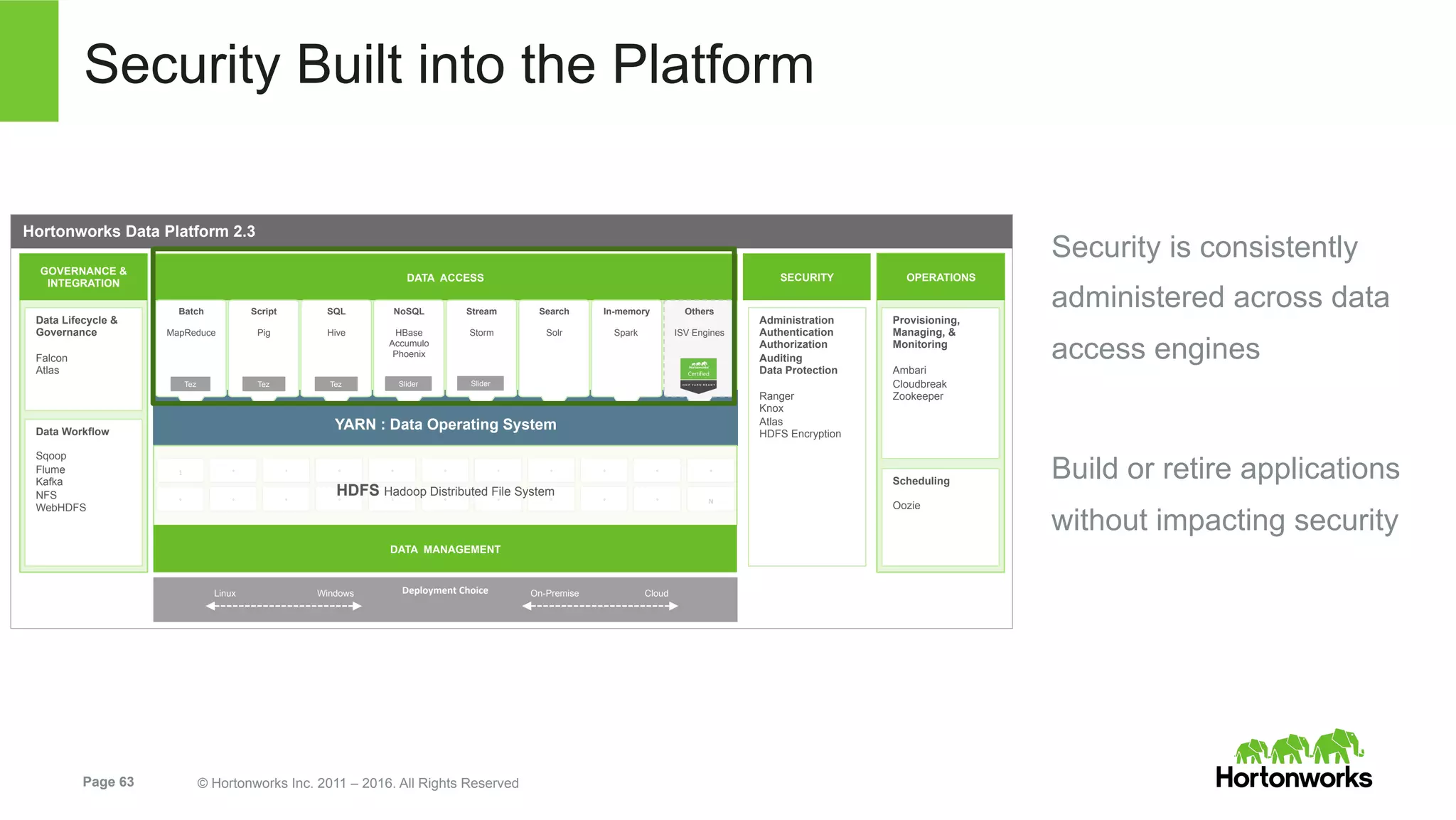

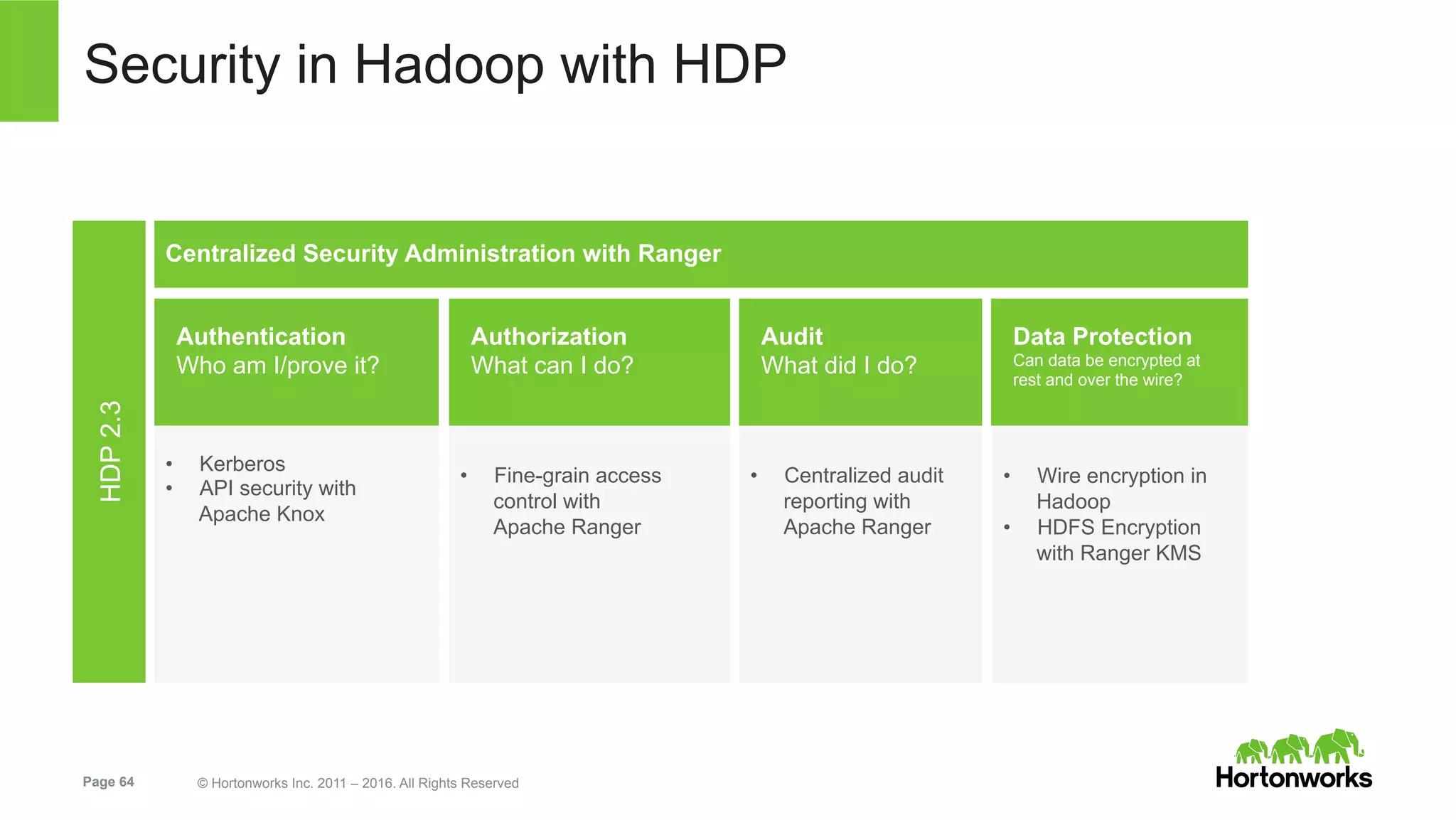

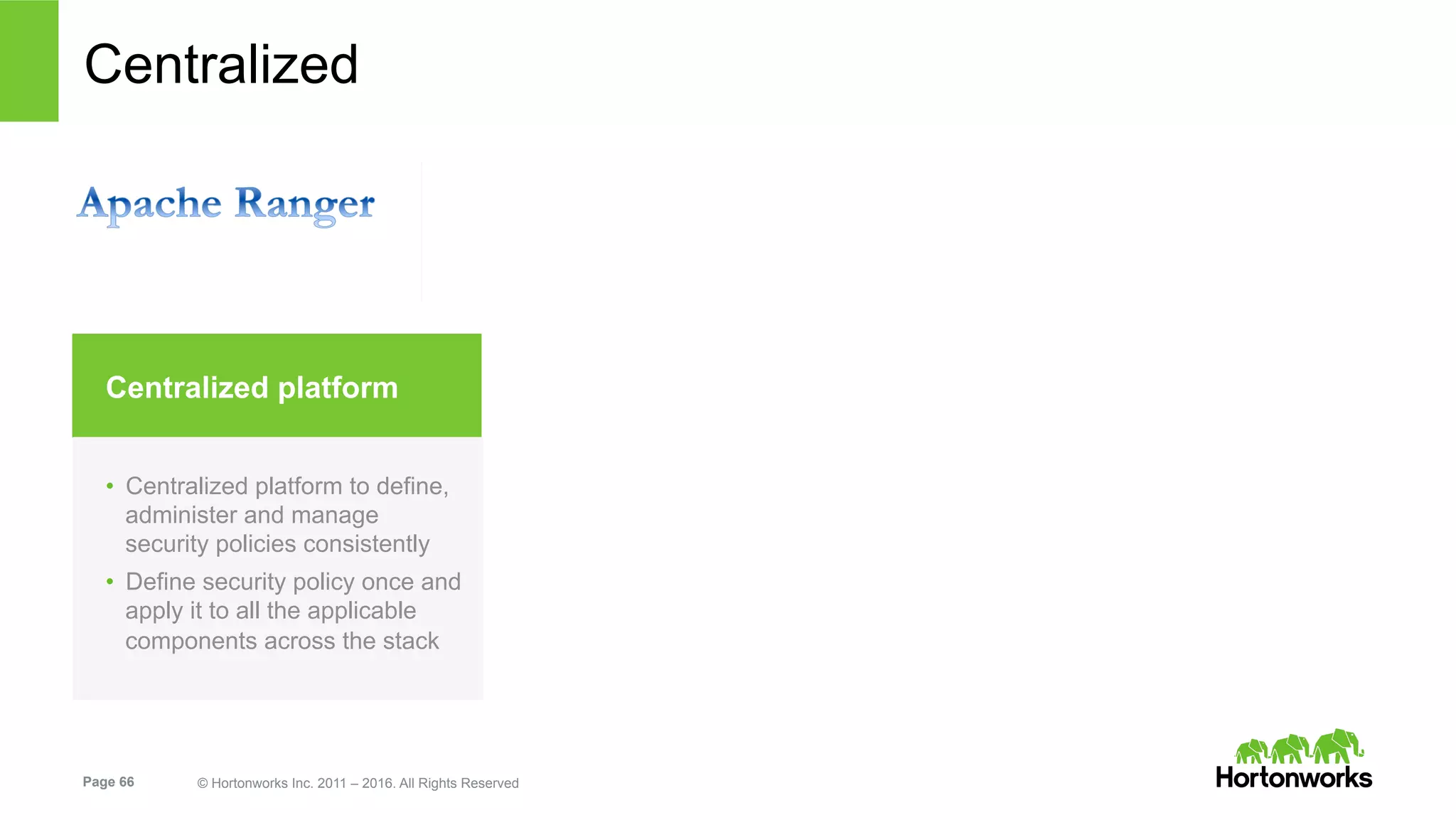

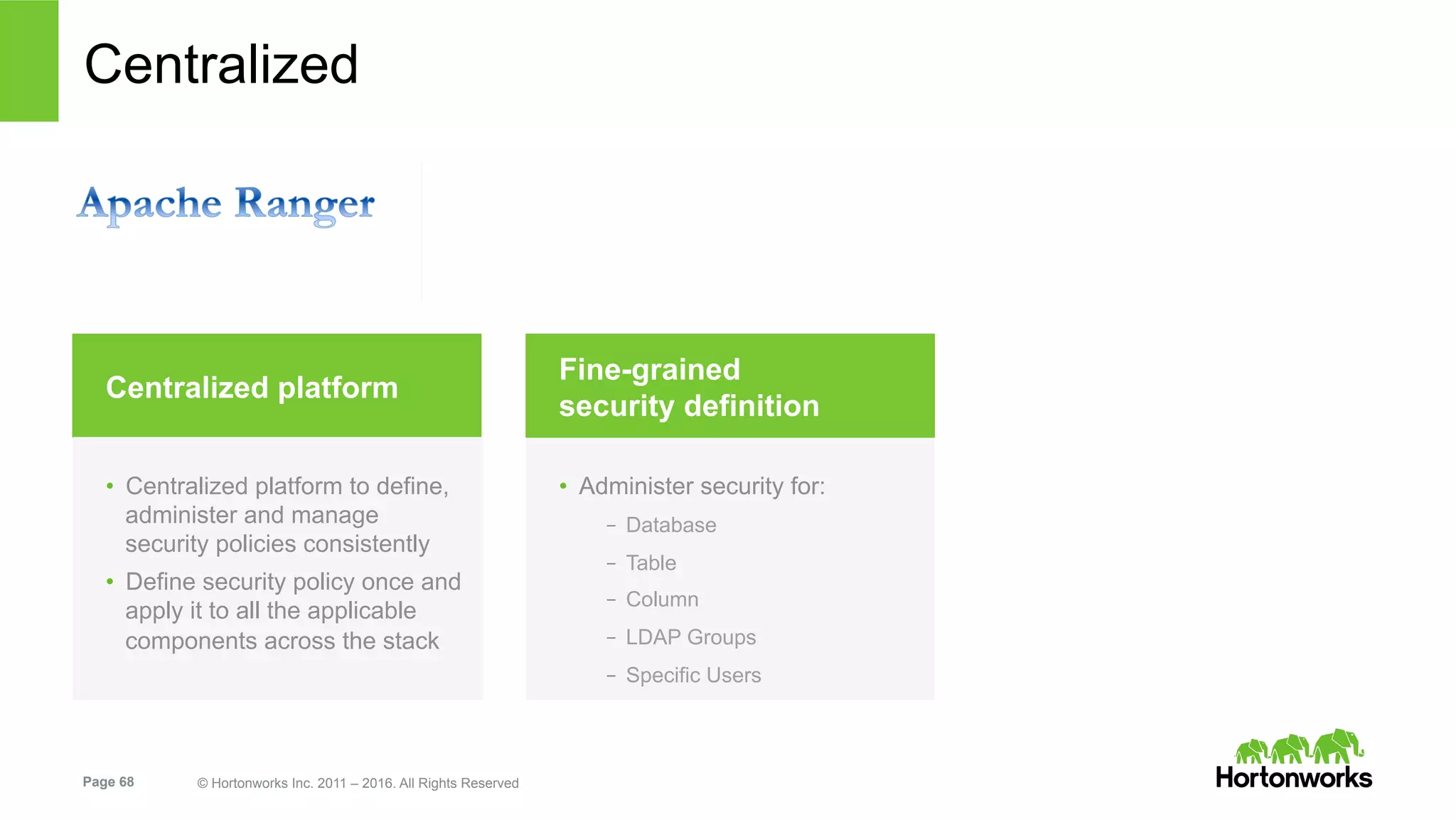

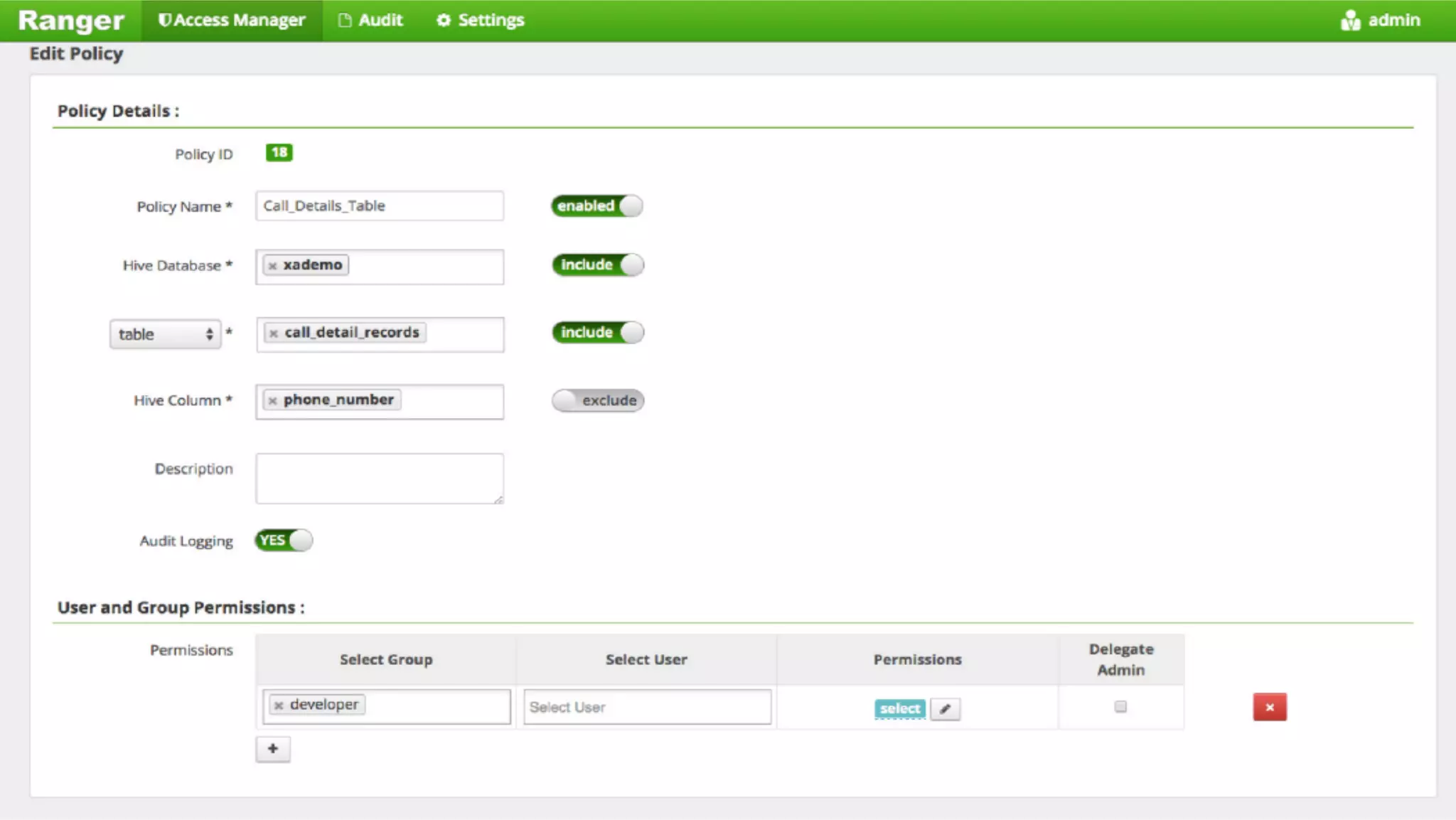

The document discusses the challenges and opportunities of managing sensitive data, such as personally identifiable information (PII) and protected health information (PHI), in the insurance sector as demand for big data analytics grows. It highlights the need for robust data protection solutions, such as those offered by Dataguise, so that insurers can stay compliant and secure while still gaining insights from diverse data sources. The presentation emphasizes integrating security at every stage of data management to support business intelligence and operational efficiency.