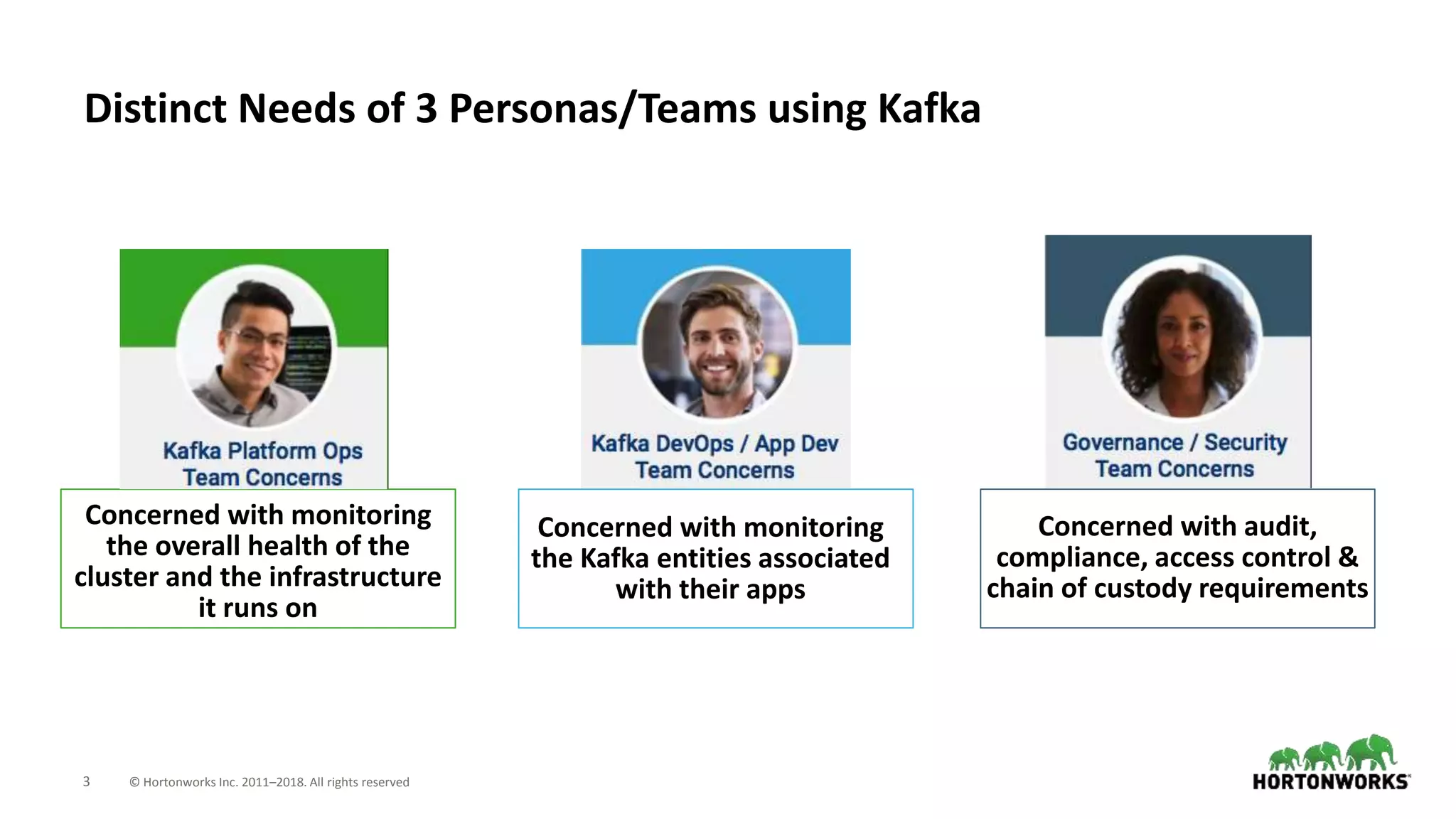

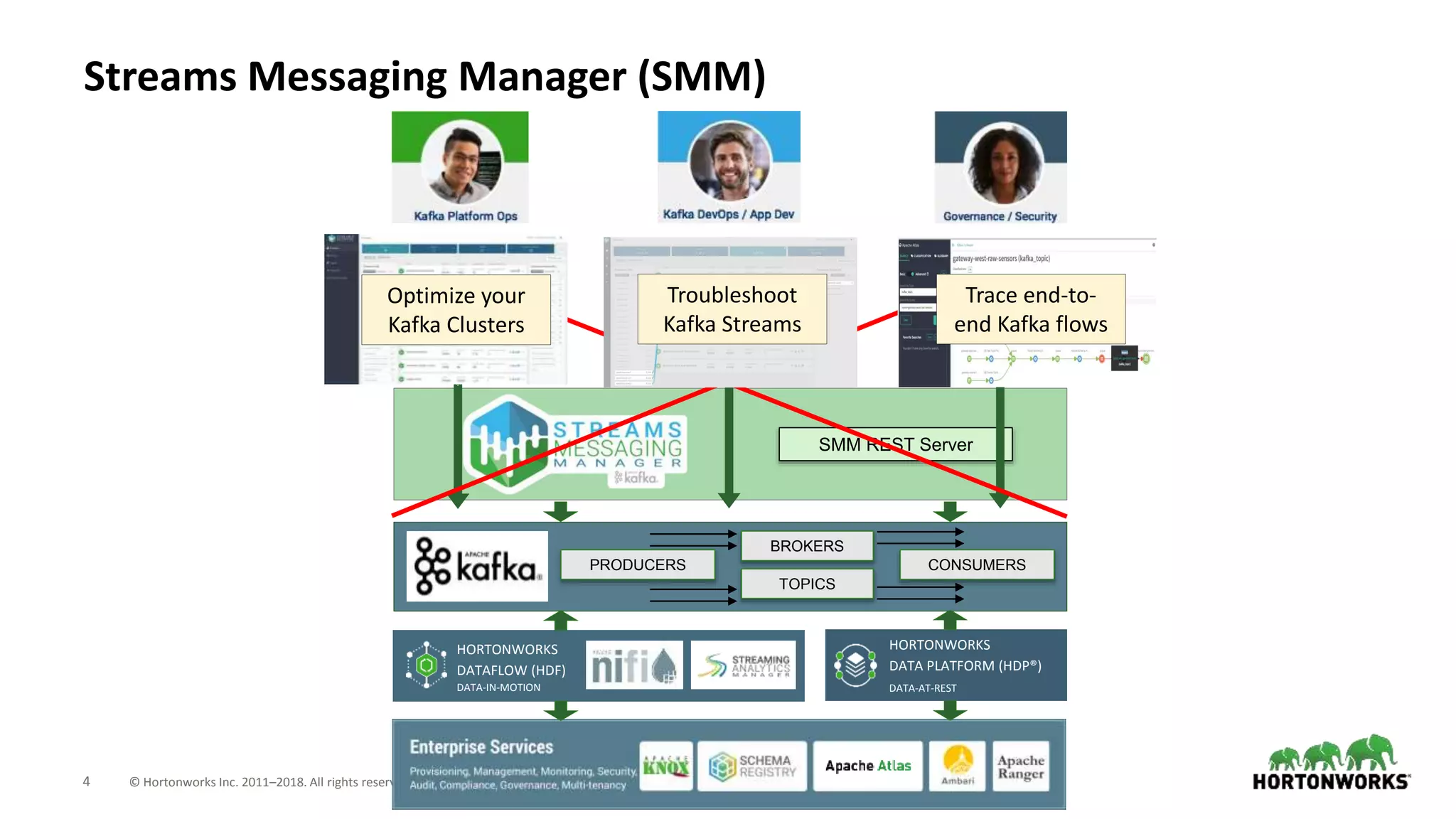

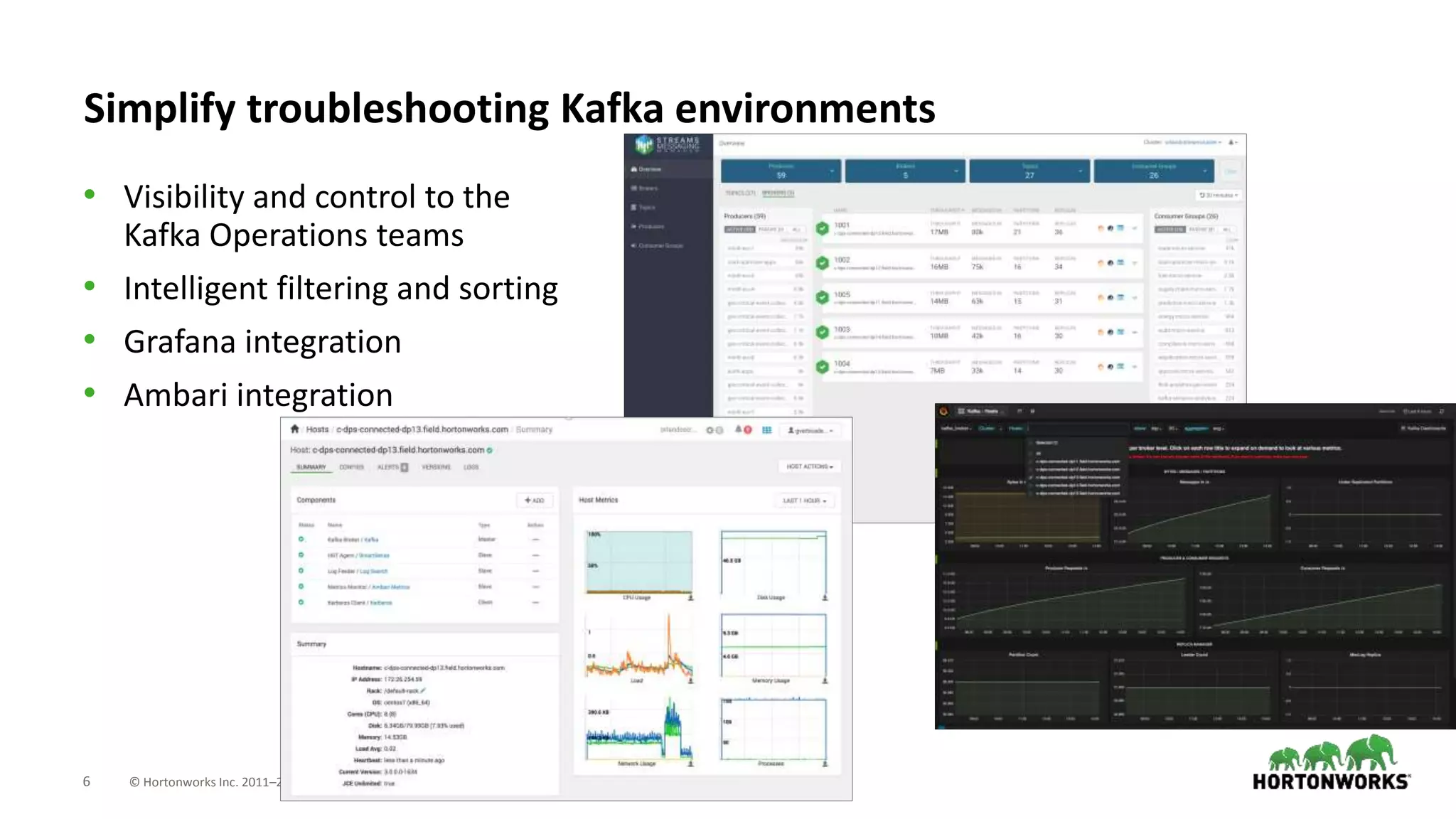

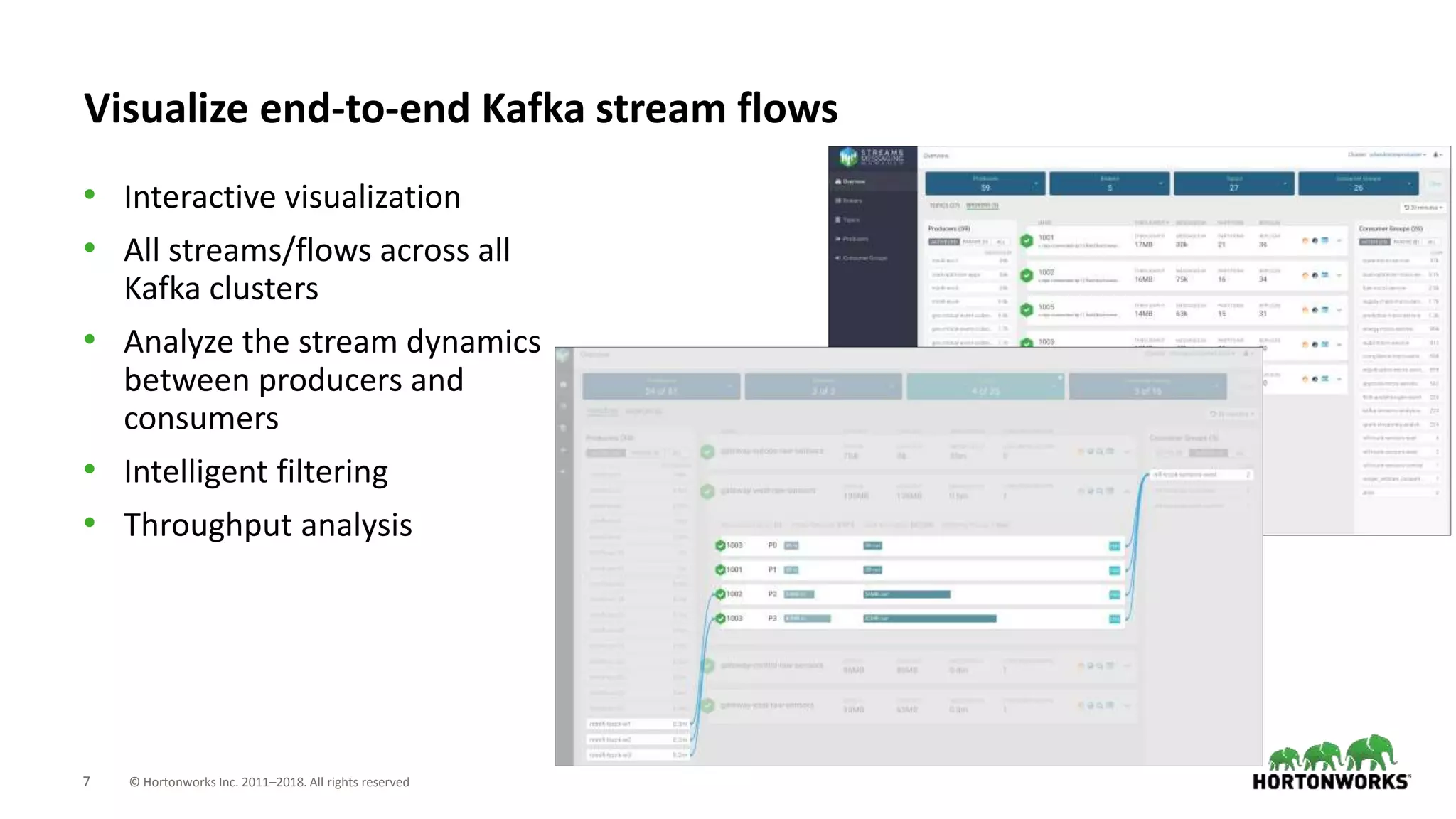

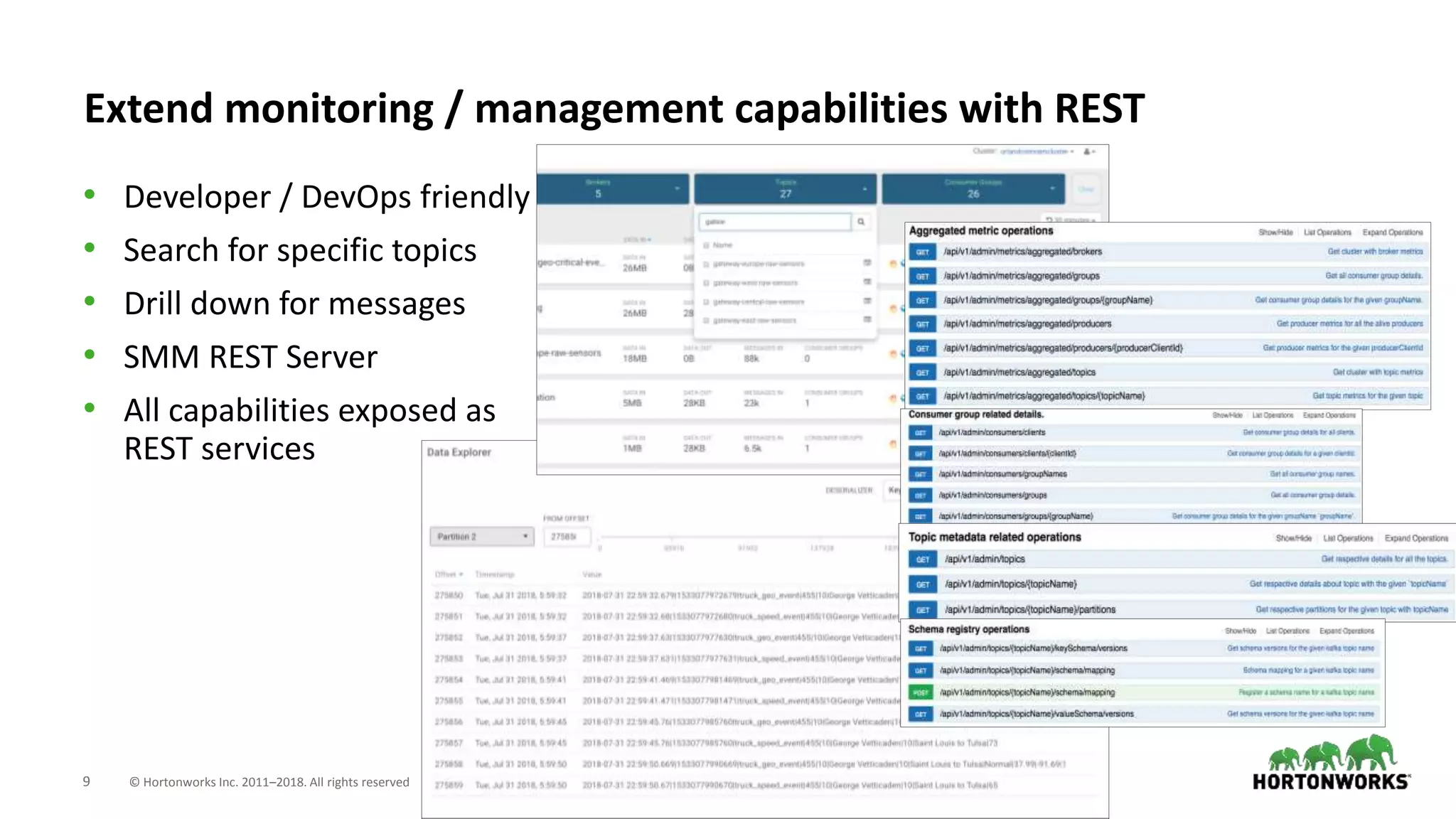

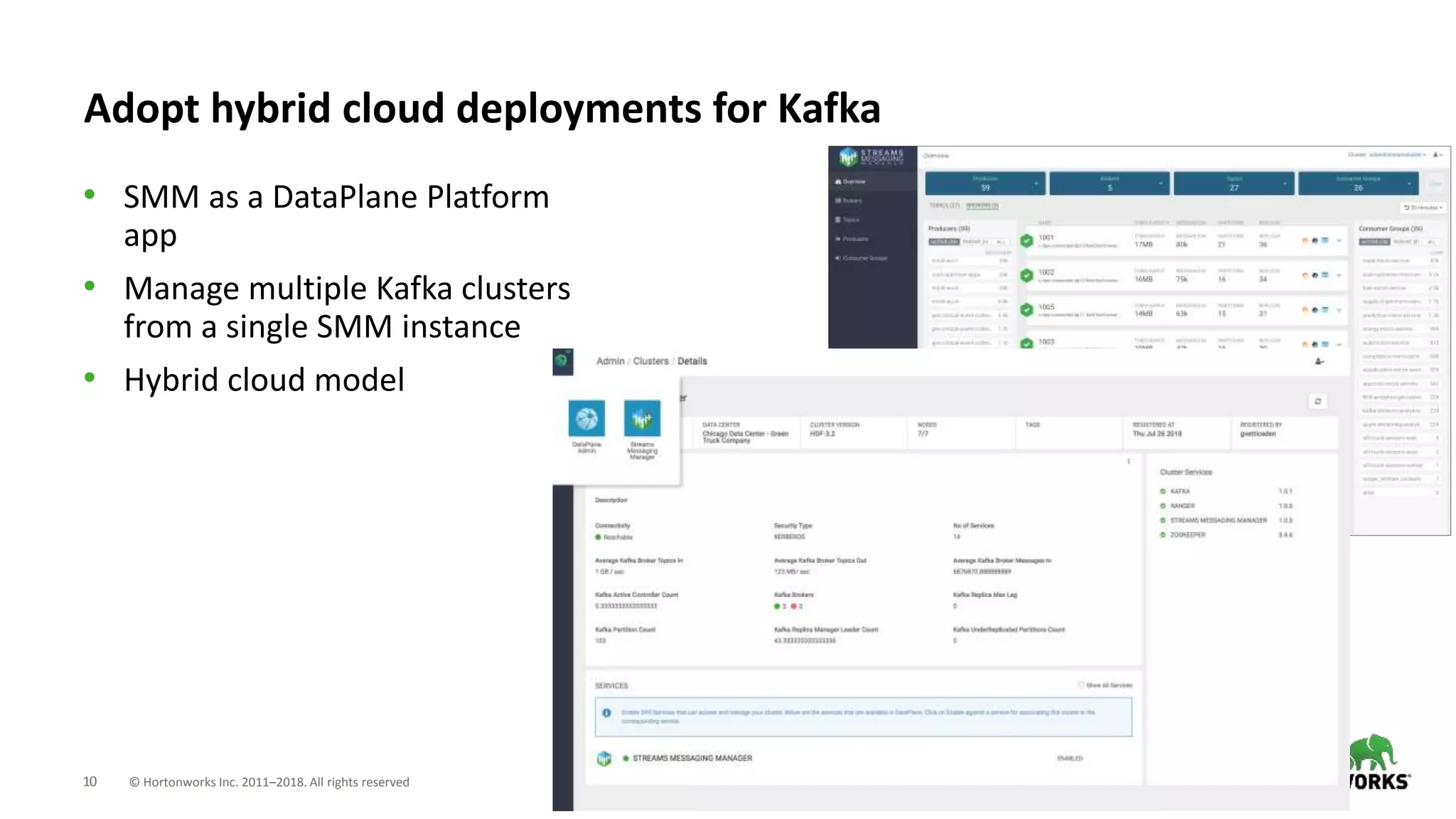

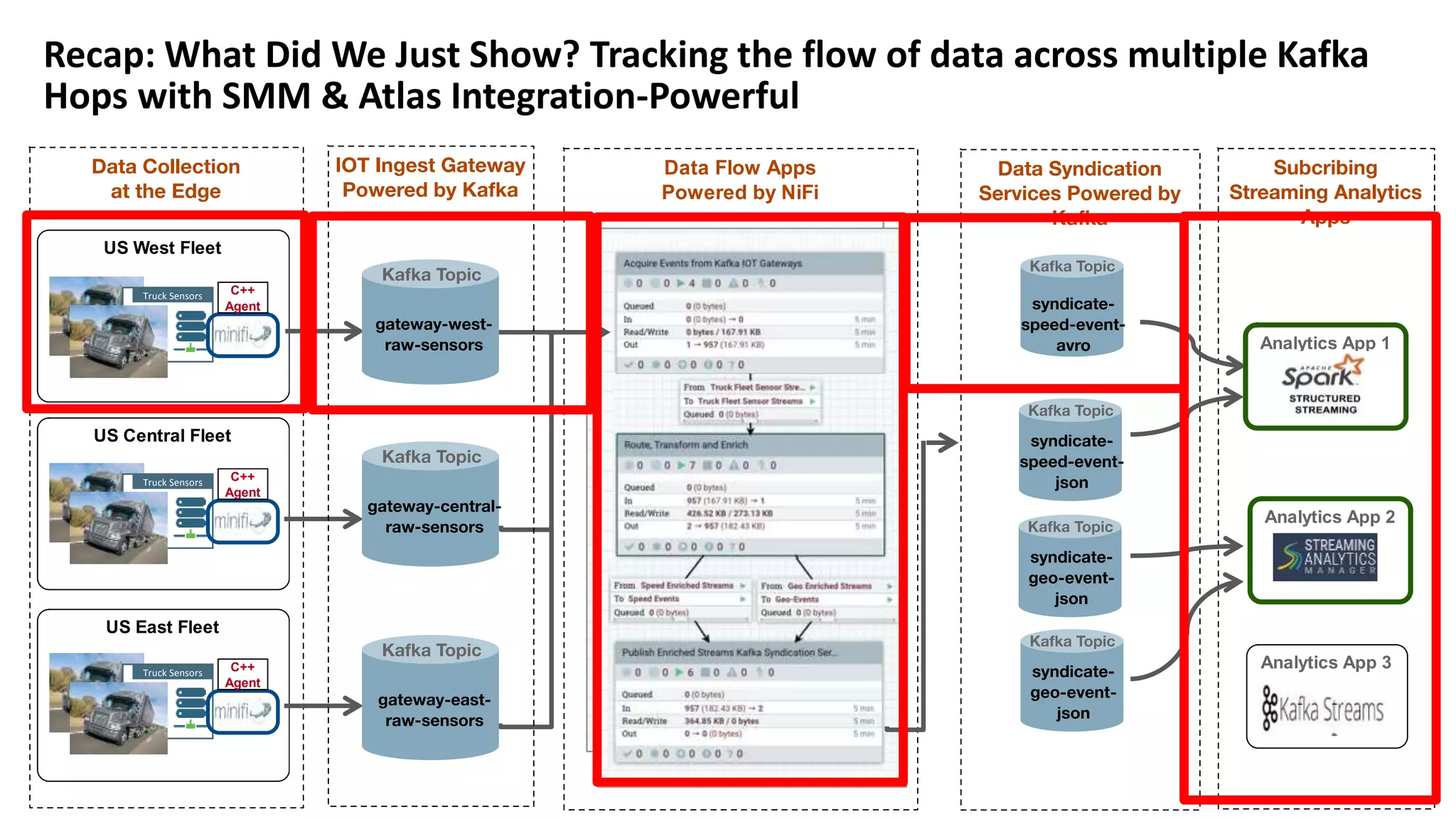

This document introduces Hortonworks Streams Messaging Manager (SMM), a tool for managing and monitoring Apache Kafka environments that addresses 'Kafka blindness', the lack of visibility into what is happening inside a Kafka deployment. SMM provides a single monitoring dashboard for Kafka entities (brokers, producers, topics, and consumers), integrates with Apache Atlas for data lineage, and supports hybrid cloud deployments. Key features include improved troubleshooting, end-to-end visualization of data streams, and REST API access for developers.