DataCite workshop at BL April 2011

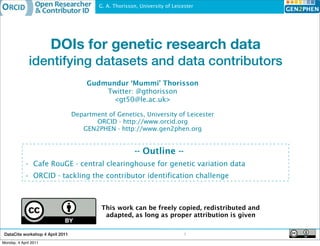

- 1. G. A. Thorisson, University of Leicester. DOIs for genetic research data: identifying datasets and data contributors
  - Gudmundur ‘Mummi’ Thorisson, Department of Genetics, University of Leicester. Twitter: @gthorisson, email: <gt50@le.ac.uk>
  - ORCID - http://www.orcid.org
  - GEN2PHEN - http://www.gen2phen.org
  - Outline:
    - Cafe RouGE - central clearinghouse for genetic variation data
    - ORCID - tackling the contributor identification challenge
  - This work can be freely copied, redistributed and adapted, as long as proper attribution is given
  - DataCite workshop, Monday, 4 April 2011

- 3. Cafe RouGE - Café for Routine Genetic data Exchange
  - Data flow: (1) diagnostic laboratories publish data to (2) a central ‘clearinghouse’, from which (3) end-users (e.g. LSDB curators) retrieve Atom feeds
  - Submitting mutations from diagnostic labs using “Café RouGE enabled” software via simple button click
  - Data are shared with diverse 3rd parties via manual retrieval or automated feed-based monitoring/retrieval
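The feed-based monitoring described on this slide can be sketched roughly as follows. This is a minimal illustration, not the Cafe RouGE client: the feed content, entry IDs and the `unseen_entries` helper are all hypothetical, and only the standard Atom namespace is taken as given.

```python
import xml.etree.ElementTree as ET

ATOM_NS = {"atom": "http://www.w3.org/2005/Atom"}

# Hypothetical feed content, made up for illustration only.
SAMPLE_FEED = """<feed xmlns="http://www.w3.org/2005/Atom">
  <title>Example submissions feed</title>
  <entry>
    <id>urn:example:submission:1</id>
    <title>Example submission 1</title>
    <updated>2011-01-21T10:00:00Z</updated>
  </entry>
  <entry>
    <id>urn:example:submission:2</id>
    <title>Example submission 2</title>
    <updated>2011-01-22T10:00:00Z</updated>
  </entry>
</feed>"""


def unseen_entries(feed_xml, seen_ids):
    """Return (id, title, updated) for feed entries whose id is not in seen_ids."""
    root = ET.fromstring(feed_xml)
    result = []
    for entry in root.findall("atom:entry", ATOM_NS):
        entry_id = entry.findtext("atom:id", namespaces=ATOM_NS)
        if entry_id in seen_ids:
            continue  # already retrieved on an earlier poll
        title = entry.findtext("atom:title", namespaces=ATOM_NS)
        updated = entry.findtext("atom:updated", namespaces=ATOM_NS)
        result.append((entry_id, title, updated))
    return result


# A monitoring client would remember the IDs it has already processed.
seen = {"urn:example:submission:1"}
for entry_id, title, updated in unseen_entries(SAMPLE_FEED, seen):
    print(entry_id, title, updated)
```

A real client would fetch the feed over HTTP on a schedule and persist the set of seen IDs between runs.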

- 4. Aim: publication credit for Cafe submissions
  - Journal publication: Thorisson, G.A. Accreditation and attribution in data sharing. Nature Biotechnology 27, 984-985 (2009). doi:10.1038/nbt1109-984b => http://www.nature.com/nbt/journal/v27/n11/full/nbt1109-984b.html
  - Data publication: G. A. Thorisson. 4x variants in BRCA2 gene. Published online via Cafe RouGE. 21 January (2011). doi:10.1255/caferouge.BRCA2-2352354 => http://api.caferouge.org/atomserver/v1/caferouge/mutations/2352354
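The `doi:... => URL` arrows on this slide stand for DOI resolution. As a rough sketch, a citation-style DOI can be turned into a resolver link as below. The `doi_to_url` helper is hypothetical, and the use of the public `https://doi.org/` proxy is an assumption; the Cafe RouGE DOI on the slide is an example and may not actually be registered.

```python
from urllib.parse import quote

# Assumption: the public DOI proxy is used as the resolver.
DOI_RESOLVER = "https://doi.org/"


def doi_to_url(doi: str) -> str:
    """Turn a citation-style DOI ('doi:10.1038/...') into a resolver URL."""
    doi = doi.strip()
    if doi.lower().startswith("doi:"):
        doi = doi[4:]
    if not doi.startswith("10.") or "/" not in doi:
        raise ValueError(f"not a DOI: {doi!r}")
    # Keep '/' as-is and percent-encode anything else unsafe in a URL path.
    return DOI_RESOLVER + quote(doi, safe="/")


print(doi_to_url("doi:10.1038/nbt1109-984b"))
print(doi_to_url("doi:10.1255/caferouge.BRCA2-2352354"))
```

The resolver then redirects to whatever landing URL the DOI's registrant has recorded, which is what lets a data citation stay stable if the repository URL changes.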

- 5. Data shared with diverse 3rd parties and data usage/citation tracked via DOI
  - Submission from diag. lab to Cafe RouGE Depot: ✔ DOI assigned to incoming data upload
  - dbSNP (coding), UniProt, PhenCode: already stable IDs so no DOI assigned
  - Attribution given to data submitters via ORCID unique identifier
  - Metadata describing variation data published elsewhere

- 7. The ORCID initiative - tackling contributor identification challenges
  - Diagram labels (name variants to be disambiguated): ORCID ID: B-1242-2010; F67572010; G. Thorisson, Univ. Leicester; G. A. Thorisson, Univ. Leicester; G. A. Thorisson, Cold Spring Harbor Lab.; ORCID ID: G-1442-2009; J. Smith, Univ. North Pole; ORCID ID: D-2400-2010; J. Smith, Luthor Corporation
  - Global registry of disambiguated IDs for contributors:
    - i) UI for researchers to manage & use their ORCID ID
    - ii) facilitate linking content creators with their work (attribution links)
    - iii) interact with other systems (publishers, digital libraries, universities etc.)

- 8. Key use case: submitting manuscript to journal
  - Attribution statement deposited into ORCID:
    - A-883-2010 <created> 10.4259/psycho-review.gbilder2010.Foobar

- 10. > 200 organizations - http://www.orcid.org

- 11. Attributing other types of ‘published work’
  - G. Thorisson, Univ. Leicester, gthorisson@gmail.com, ORCID ID: A-883-2010
  - Thorisson, G. (A-883-2010), Bilder, G.W. (C-035-2009) and Fenner, M. (A-101-2010). Icelandic 9th century viking bowl. Psychoceramics Archive. Sep 2 2010. doi:10.4259/psycho.5gtpq-thorisson

- 12. Attributing other types of ‘published work’
  - G. Thorisson, Univ. Leicester, gthorisson@gmail.com, ORCID ID: A-883-2010
  - Thorisson, G. (A-883-2010), Bilder, G.W. (C-035-2009) and Fenner, M. (A-101-2010). Icelandic 9th century viking bowl. Psychoceramics Archive. Sep 2 2010. doi:10.4259/psycho.5gtpq-thorisson
  - Attribution statements:
    - A-883-2010 <created> 10.4259/psycho-image5gtpq-thorisson
    - C-035-2009 <created> 10.4259/psycho-image5gtpq-thorisson
    - A-101-2010 <created> 10.4259/psycho-image5gtpq-thorisson
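The attribution statements on this slide read as simple contributor/relation/work triples. A minimal sketch of handling them that way is shown below. The `contributor <relation> DOI` text form is the slide's own notation, not a documented ORCID format, and the `Attribution` type and `parse_statement` helper are hypothetical names introduced for illustration.

```python
from collections import defaultdict
from typing import NamedTuple


class Attribution(NamedTuple):
    contributor: str  # contributor ID as written on the slide
    relation: str     # e.g. "created"
    work: str         # DOI of the attributed work


def parse_statement(line: str) -> Attribution:
    """Parse 'A-883-2010 <created> 10.4259/...' into its three parts."""
    contributor, relation, work = line.split()
    if not (relation.startswith("<") and relation.endswith(">")):
        raise ValueError(f"expected <relation>, got {relation!r}")
    return Attribution(contributor, relation[1:-1], work)


# The three statements shown on the slide.
statements = [
    "A-883-2010 <created> 10.4259/psycho-image5gtpq-thorisson",
    "C-035-2009 <created> 10.4259/psycho-image5gtpq-thorisson",
    "A-101-2010 <created> 10.4259/psycho-image5gtpq-thorisson",
]

# Group contributor IDs by the work they are credited with creating.
creators_by_work = defaultdict(list)
for statement in statements:
    attribution = parse_statement(statement)
    if attribution.relation == "created":
        creators_by_work[attribution.work].append(attribution.contributor)

print(dict(creators_by_work))
```

Keeping one statement per contributor means each person's record can be queried independently, while grouping by DOI recovers the full author list of a work.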

- 13. Issues for discussion
  - Some DOI issues for discussion
    - Granularity & versioning
      - citing datasets vs citing collections of datasets (including databases)
      - organization - datasets within databases, aggregation
      - databases =~ journals, BUT changing resources?
      - DOI identifies a work which may be a new version of a previous work
    - When is it “appropriate” to assign DOIs?
      - original datasets published via the online repository
      - derived datasets resulting from analysis of primary data
      - republished datasets acquired from elsewhere?
    - Identifier structure: desire for branding vs opacity (10.163/caferouge.325fff5)
  - Contributor recognition: ORCID IDs for data creators / contributors
    - ORCID will support datasets registered via DataCite
      - Early adopter outreach - summer/autumn 2011
    - DataCite metadata schema: where do contributor IDs fit in? (Creator field)
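The branding-vs-opacity question above concerns the DOI suffix, the part after the first `/`. The sketch below splits the slide's example DOI and contrasts two ways a repository might mint suffixes. The helper names and both minting schemes are assumptions for illustration, not Cafe RouGE's or DataCite's actual practice.

```python
import secrets


def split_doi(doi: str):
    """Split a DOI into (prefix, suffix) at the first '/'."""
    prefix, sep, suffix = doi.partition("/")
    if not sep or not prefix.startswith("10.") or not suffix:
        raise ValueError(f"not a DOI: {doi!r}")
    return prefix, suffix


def branded_suffix(brand: str, local_id: str) -> str:
    """Suffix that carries the repository name, as in the slide's example."""
    return f"{brand}.{local_id}"


def opaque_suffix(nbytes: int = 4) -> str:
    """Random hex suffix that reveals nothing about the source or content."""
    return secrets.token_hex(nbytes)


prefix, suffix = split_doi("10.163/caferouge.325fff5")
print(prefix, suffix)
print(f"{prefix}/{branded_suffix('caferouge', '325fff5')}")
print(f"{prefix}/{opaque_suffix()}")
```

A branded suffix is recognizable in citations but bakes the repository name into an identifier meant to outlive it; an opaque suffix avoids that at the cost of readability.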

- 14. Coming autumn 2011, to a venue near you! Int’l workshop on researcher identity
  - Co-organized by CSC (Finland IT Centre for Science)
  - Provisional title: “Identity in research infrastructure and scientific communication” - IRISC
  - Location: Helsinki
  - Dates: September 12-13

- 15. Acknowledgements
  - GEN2PHEN Consortium - http://www.gen2phen.org/about-gen2phen/partners
  - This work has received funding from the European Community's Seventh Framework Programme (FP7/2007-2013) under grant agreement number 200754 - the GEN2PHEN project.
  - Anthony J. Brookes Bioinformatics Group
  - Contact me! Gudmundur ‘Mummi’ Thorisson <gt50@le.ac.uk> | <gthorisson@gmail.com>
    - http://friendfeed.com/mummit
    - http://www.linkedin.com/in/mummi
    - http://www.twitter.com/gthorisson