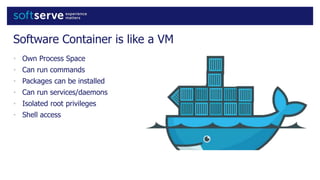

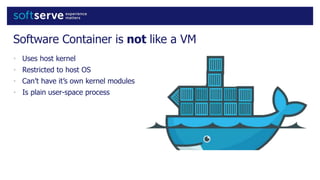

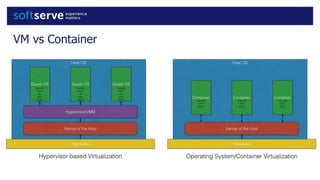

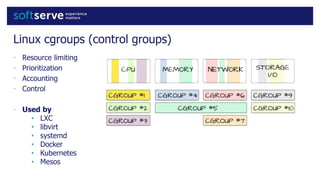

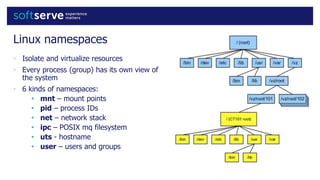

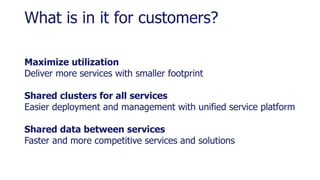

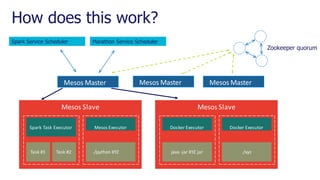

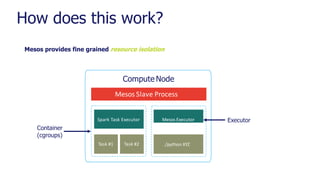

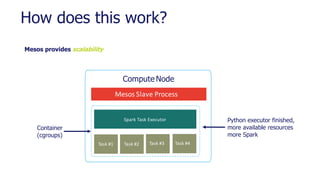

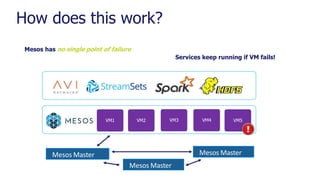

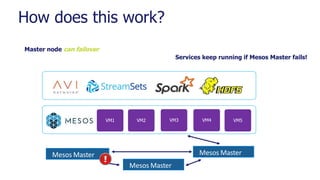

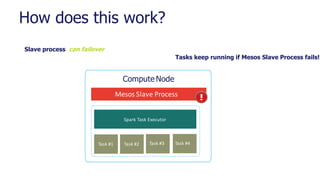

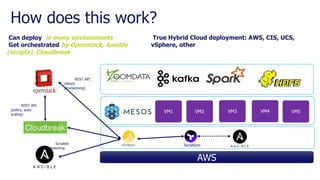

Containerization standardizes and isolates application deployment across infrastructure, using container runtimes such as Docker. Three features make containers possible: namespaces, which isolate processes and give each its own view of the system; cgroups, which limit and account for resource usage; and copy-on-write storage, which keeps application packaging efficient. A container orchestration system such as Mesos adds scalability, fault tolerance, and unified resource management across clusters. Flexible scheduling of containerized applications and services on shared clusters in turn raises infrastructure utilization.
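
To make the idea of scheduling onto a shared cluster concrete, here is a minimal sketch of first-fit placement. It is a toy illustration, not Mesos's actual allocation mechanism or API. The `Node` and `Task` classes, the `first_fit` function, and all node names and resource figures are hypothetical and chosen only for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A cluster machine with its remaining free resources (hypothetical model)."""
    name: str
    cpus: float
    mem_mb: int
    tasks: list = field(default_factory=list)


@dataclass
class Task:
    """A containerized workload with its resource request (hypothetical model)."""
    name: str
    cpus: float
    mem_mb: int


def first_fit(tasks, nodes):
    """Place each task on the first node with enough free CPU and memory.

    Returns the names of tasks that could not be placed anywhere.
    """
    unplaced = []
    for task in tasks:
        for node in nodes:
            if node.cpus >= task.cpus and node.mem_mb >= task.mem_mb:
                # Reserve the resources, much as a cgroup limit would cap them.
                node.cpus -= task.cpus
                node.mem_mb -= task.mem_mb
                node.tasks.append(task.name)
                break
        else:
            # No node had enough free capacity for this task.
            unplaced.append(task.name)
    return unplaced


# Illustrative cluster and workload; all values are made up.
nodes = [
    Node("node-a", cpus=4, mem_mb=8192),
    Node("node-b", cpus=2, mem_mb=4096),
]
tasks = [
    Task("web", cpus=2, mem_mb=2048),
    Task("batch", cpus=2, mem_mb=4096),
    Task("cache", cpus=1, mem_mb=3072),
    Task("big", cpus=8, mem_mb=1024),
]

unplaced = first_fit(tasks, nodes)
for node in nodes:
    print(node.name, node.tasks, f"free: {node.cpus} cpu, {node.mem_mb} MB")
print("unplaced:", unplaced)
```

In this sketch, different kinds of workloads share the same machines, and a task is rejected only when no node has room for it. A real orchestrator layers fairness policies, failure handling, and rescheduling on top of this basic packing step.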