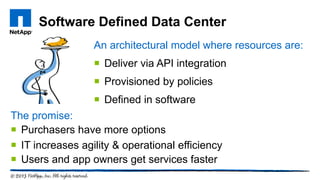

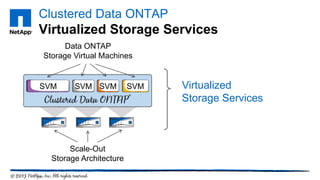

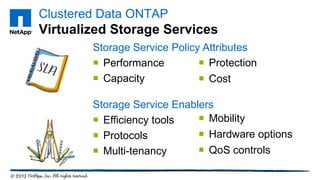

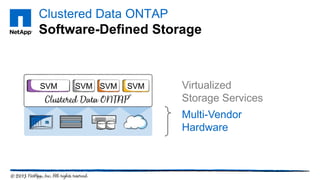

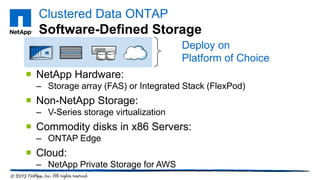

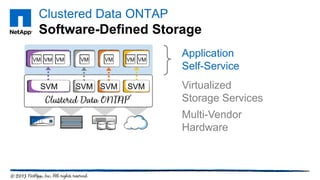

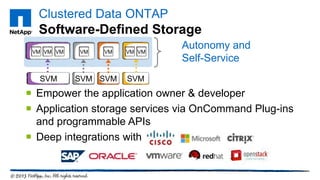

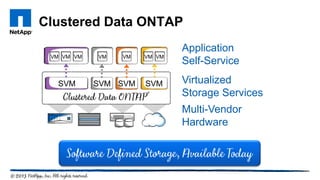

The document discusses software-defined storage (SDS) and its role in moving IT toward a service-provider model. It emphasizes features such as application self-service, policy-based provisioning, and support for diverse hardware, all of which improve agility and efficiency. It also highlights an event showcasing how companies use SDS and FlexPod to improve data center performance.