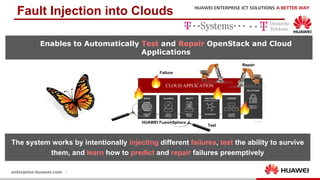

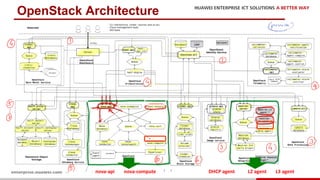

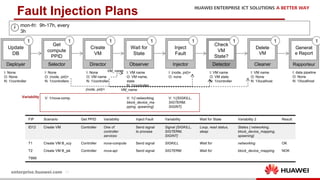

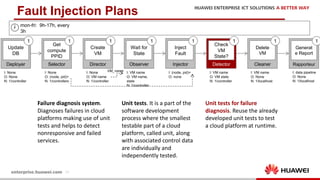

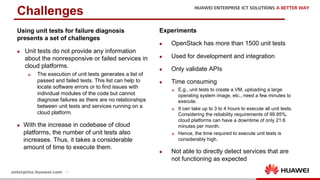

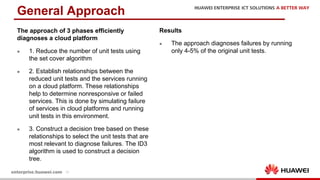

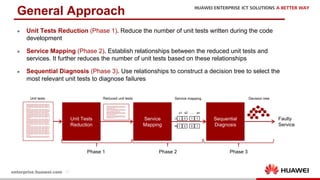

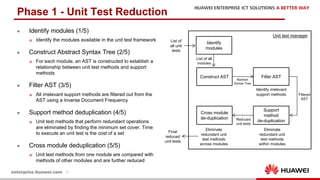

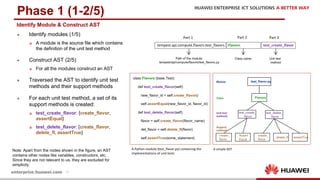

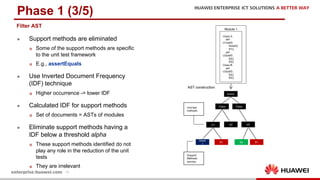

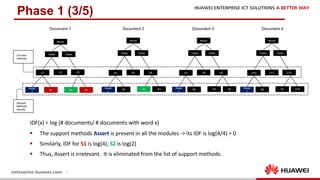

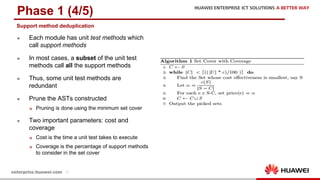

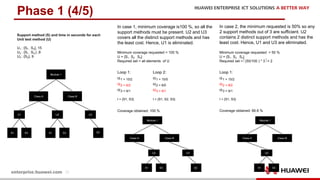

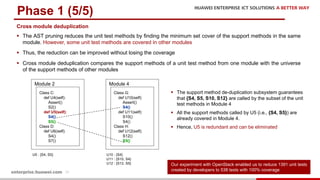

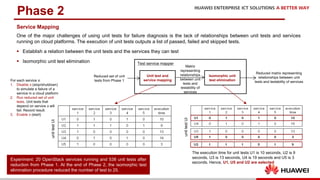

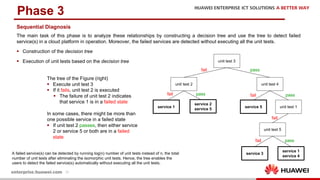

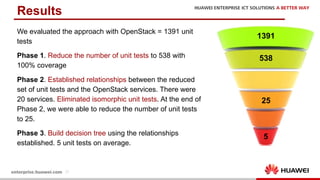

The document covers the 9th International Workshop on Software Engineering for Resilient Systems, held in Geneva, and highlights Dr. Jorge Cardoso's presentation on using fault injection to improve cloud reliability, demonstrated on OpenStack. It emphasizes the importance of tools such as Netflix's Chaos Monkey for testing cloud applications, and it proposes a method for diagnosing failures efficiently by reducing the number of unit tests that must be run and by establishing the relationships between services. The approach aims to diagnose failures using only 4-5% of the original unit tests, in line with operational reliability requirements.
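The summary does not say how the unit tests are reduced or how service relationships are used, so the following is only a minimal sketch of one plausible reading, not the presenter's method. It assumes a test-to-service map is already known, picks a small subset of tests with a greedy set cover, and then narrows a failure down to the services that every failing test touches and no passing test touches. The test names, the `coverage` map, and the functions `reduce_tests` and `suspect_services` are all hypothetical; only the service names correspond to real OpenStack components.

```python
from typing import Dict, Iterable, List, Set

# Hypothetical map: unit test name -> services the test exercises.
coverage: Dict[str, Set[str]] = {
    "test_boot_server": {"nova", "glance", "neutron", "keystone"},
    "test_create_volume": {"cinder", "keystone"},
    "test_list_images": {"glance", "keystone"},
    "test_create_network": {"neutron", "keystone"},
    "test_auth_token": {"keystone"},
}


def reduce_tests(coverage: Dict[str, Set[str]]) -> List[str]:
    """Greedy set cover: select tests until every service is exercised."""
    uncovered: Set[str] = set()
    for services in coverage.values():
        uncovered |= services
    selected: List[str] = []
    while uncovered:
        # Pick the test covering the most still-uncovered services.
        # Iterating over sorted names makes tie-breaking deterministic.
        best = max(sorted(coverage), key=lambda t: len(coverage[t] & uncovered))
        selected.append(best)
        uncovered -= coverage[best]
    return selected


def suspect_services(
    coverage: Dict[str, Set[str]],
    failed: Iterable[str],
    passed: Iterable[str],
) -> Set[str]:
    """Services touched by every failed test and by no passing test."""
    failed = list(failed)
    if not failed:
        return set()
    suspects = set.intersection(*(coverage[t] for t in failed))
    for test in passed:
        suspects -= coverage[test]
    return suspects


reduced = reduce_tests(coverage)
print(reduced)  # ['test_boot_server', 'test_create_volume']

# Suppose a fault is injected and only the first selected test fails.
print(sorted(suspect_services(coverage, ["test_boot_server"], ["test_create_volume"])))
# ['glance', 'neutron', 'nova']
```

In this toy example two of five tests are enough to exercise every service. A smaller suite localizes faults less precisely, so a real reduction would have to balance suite size against diagnostic resolution.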