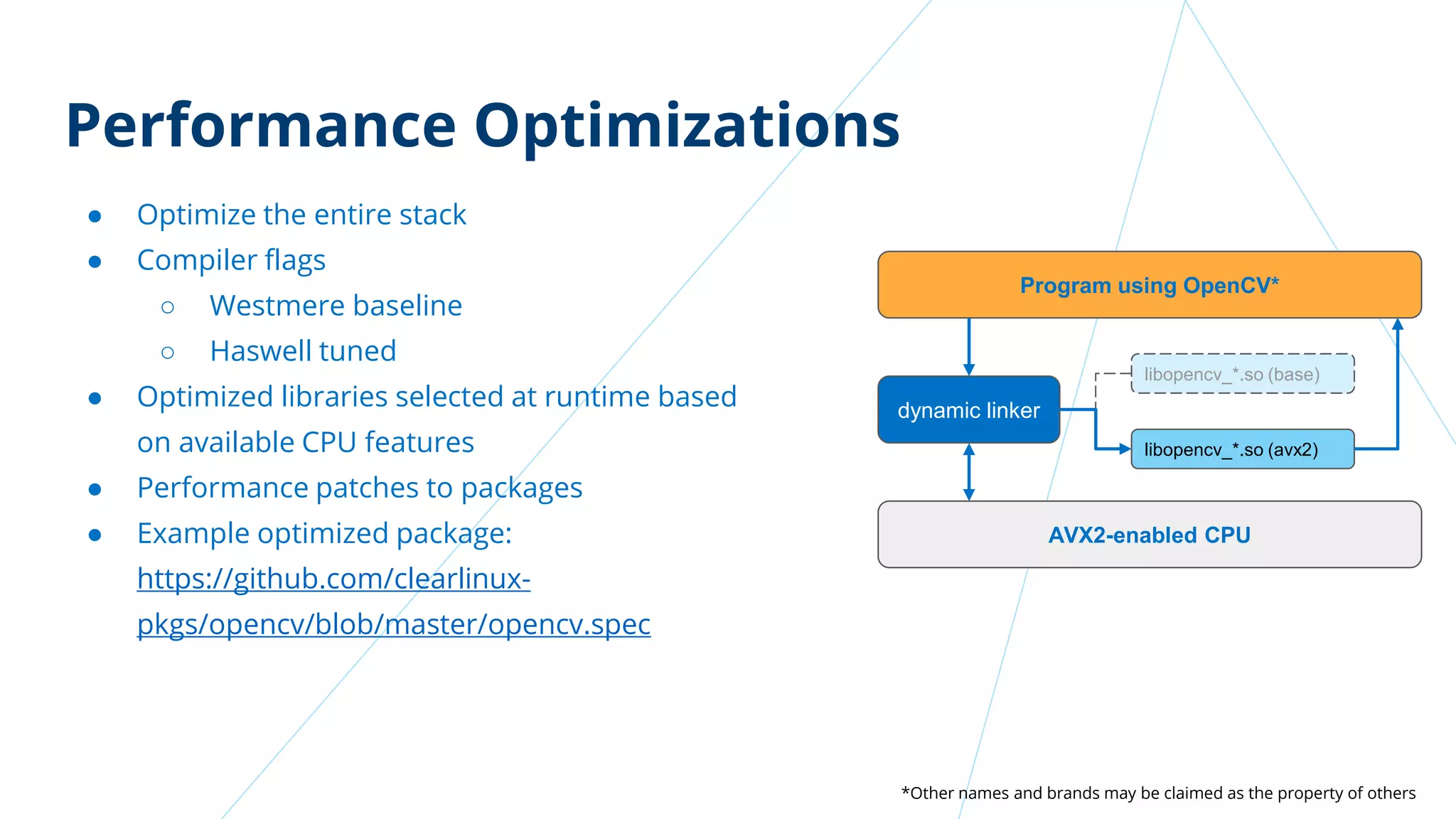

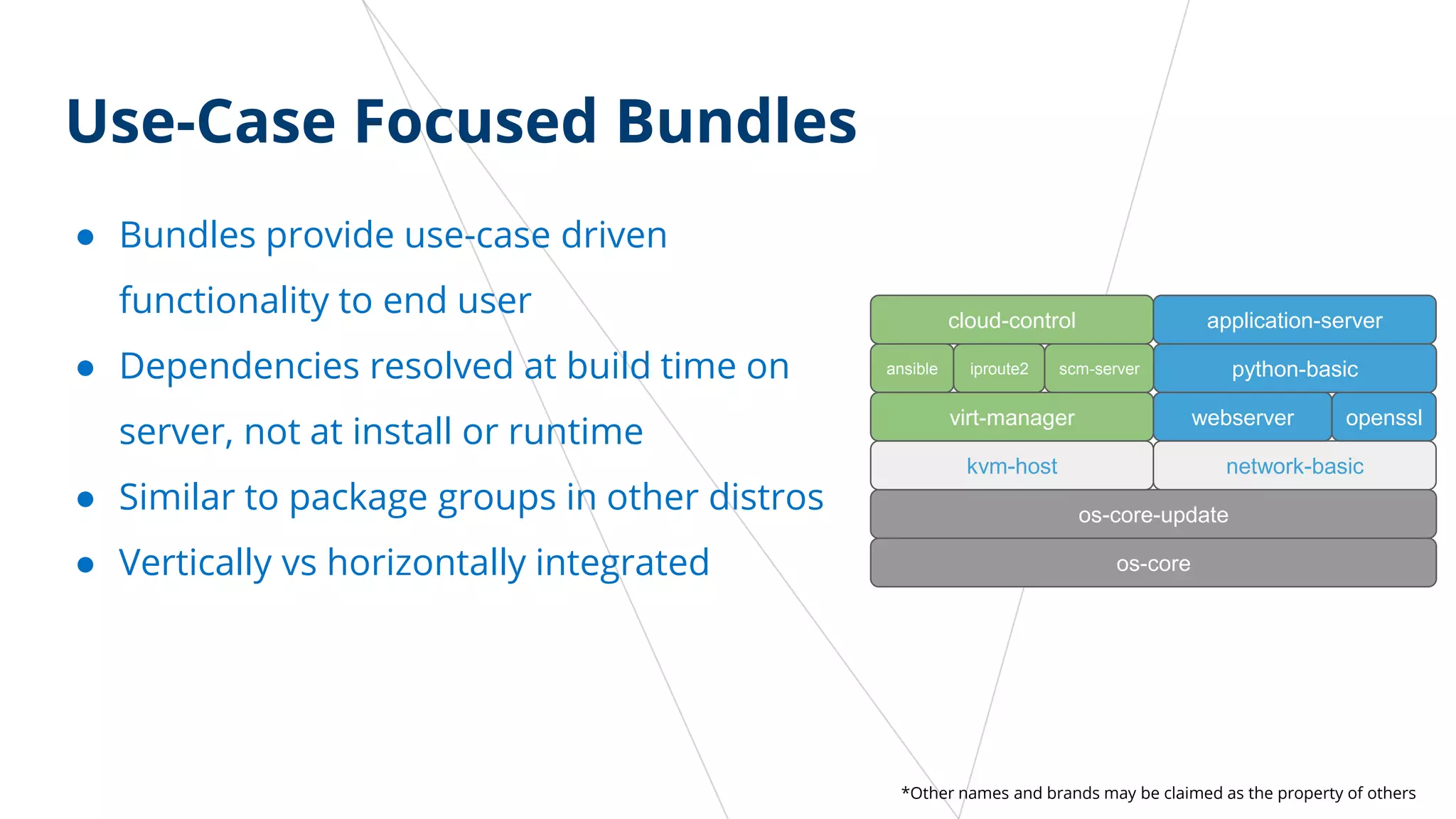

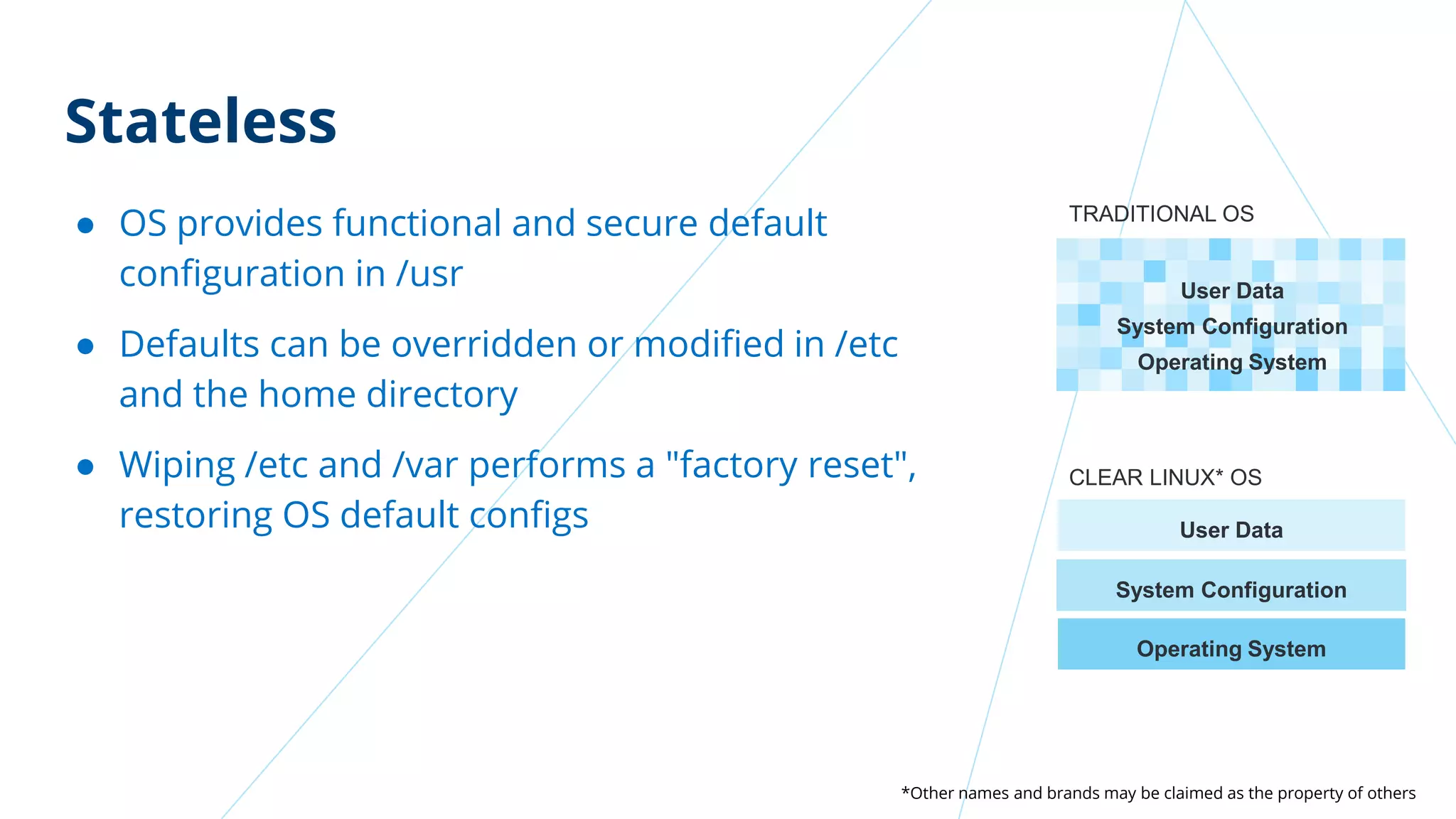

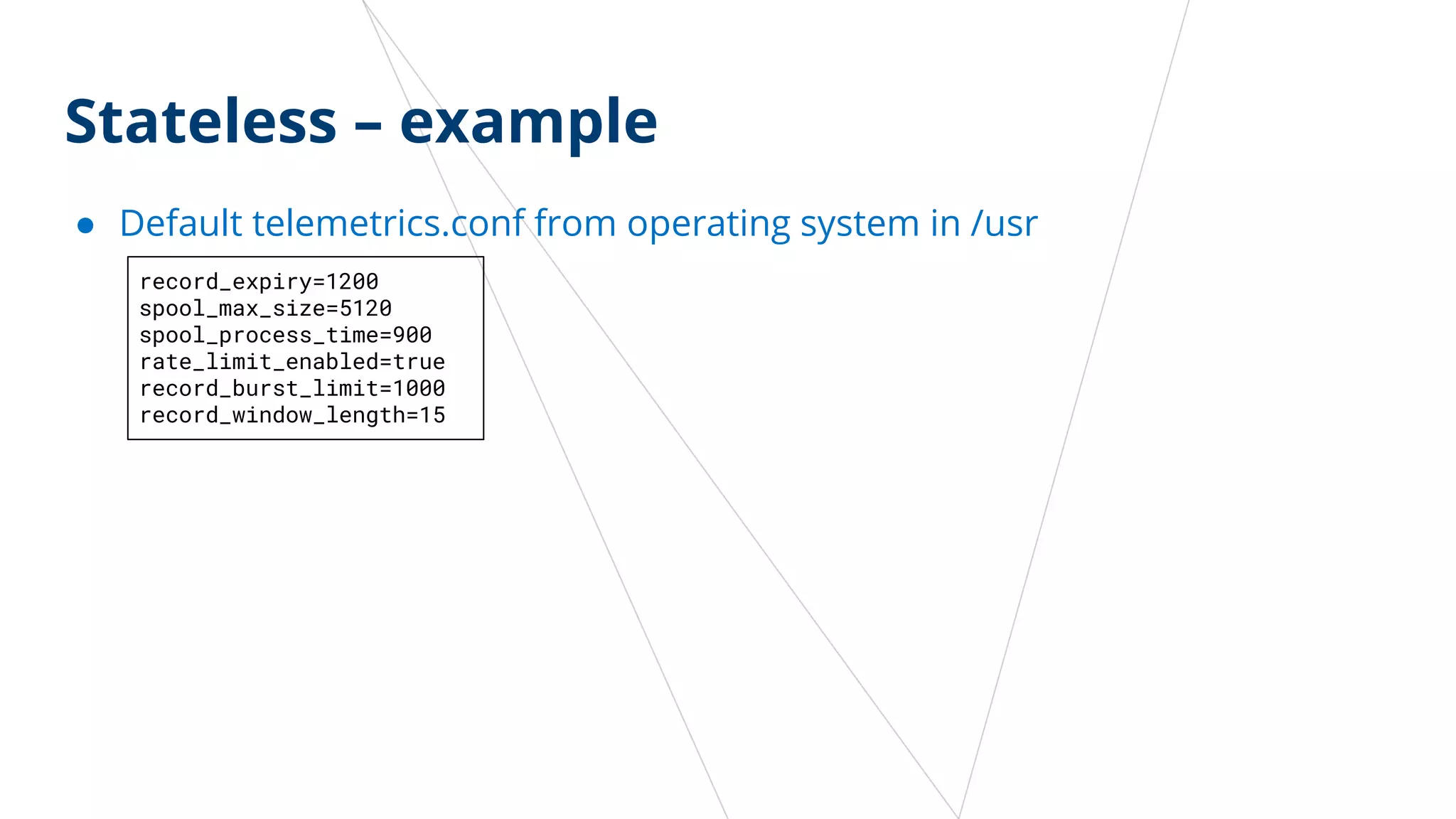

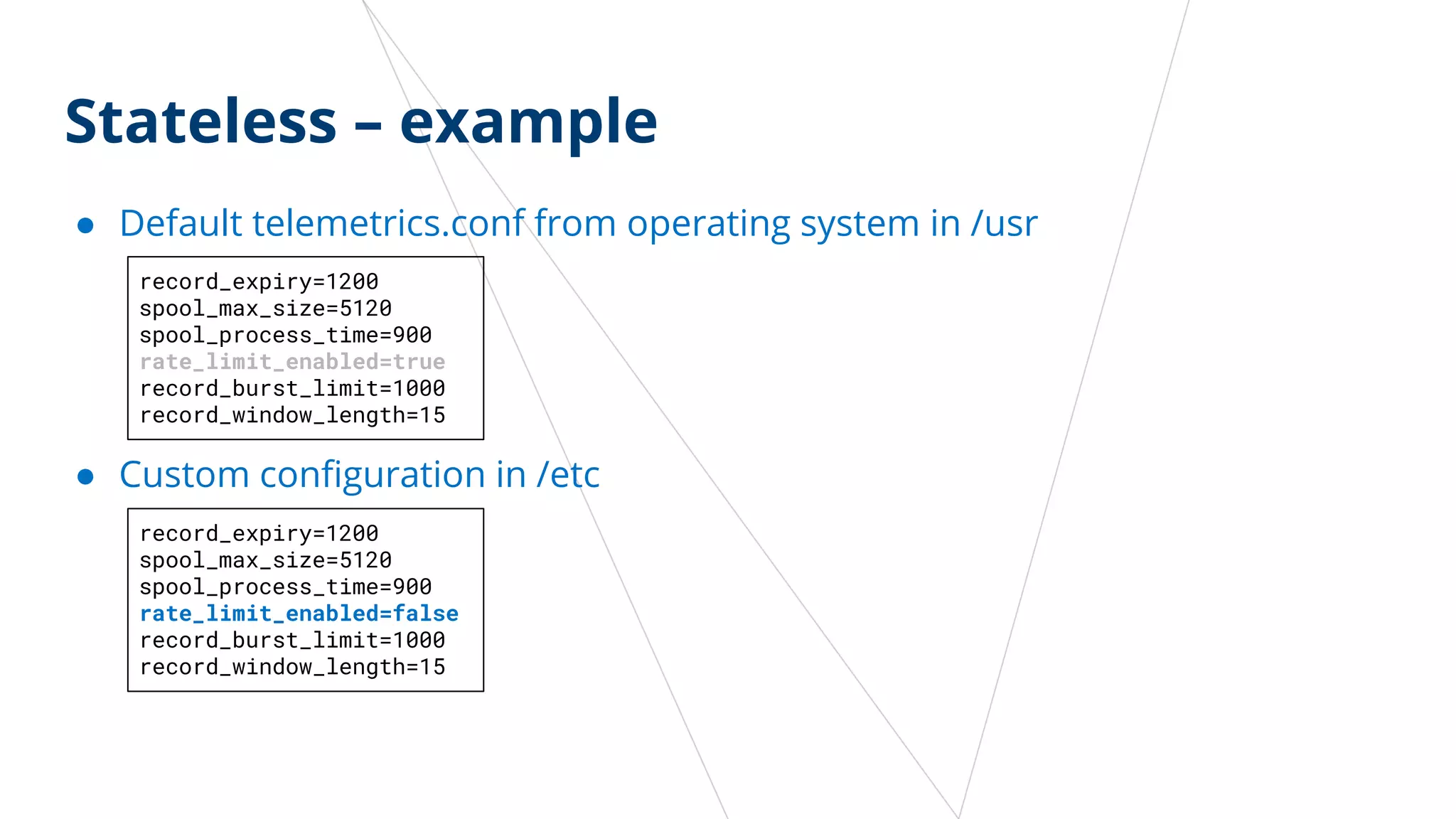

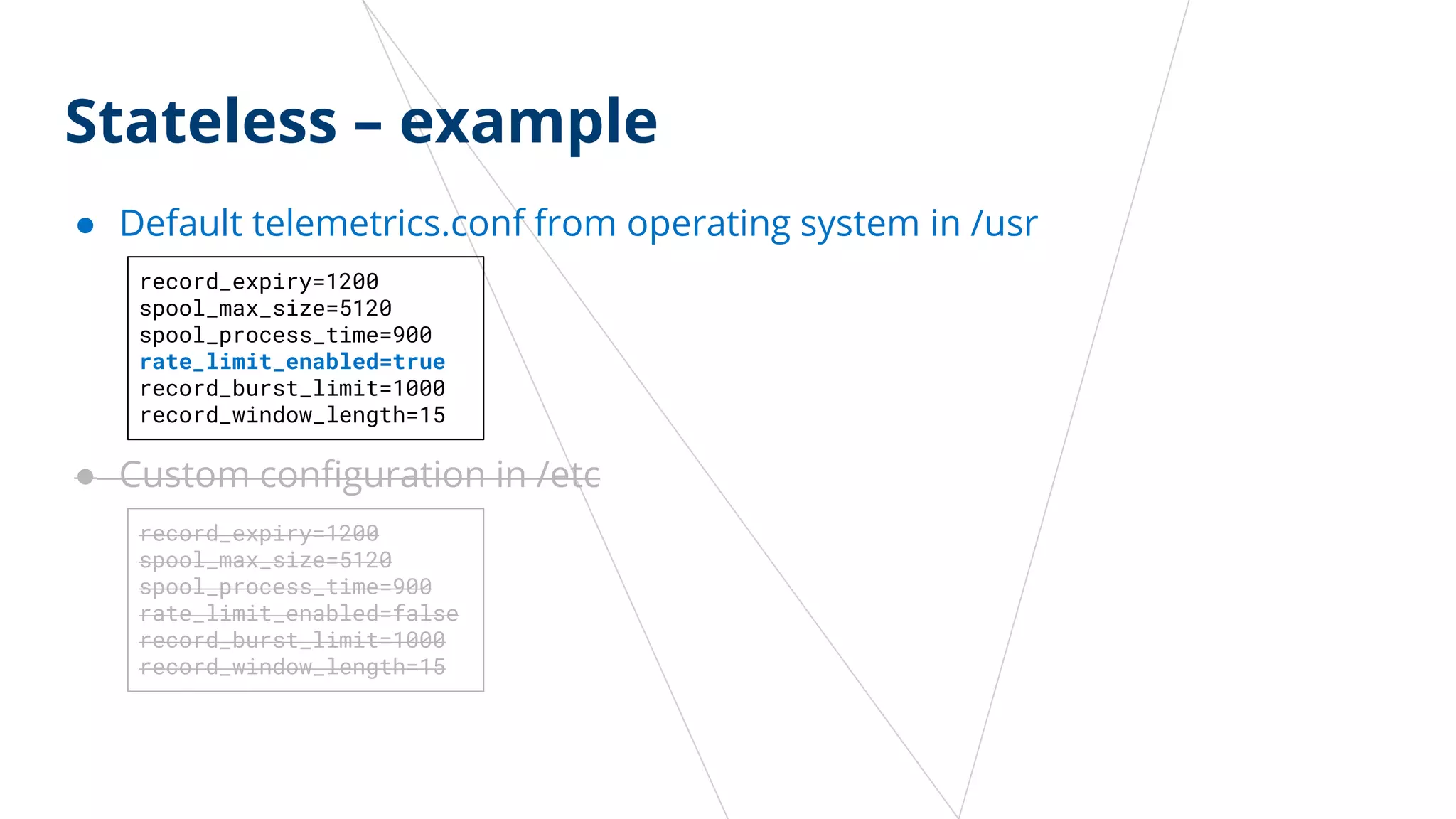

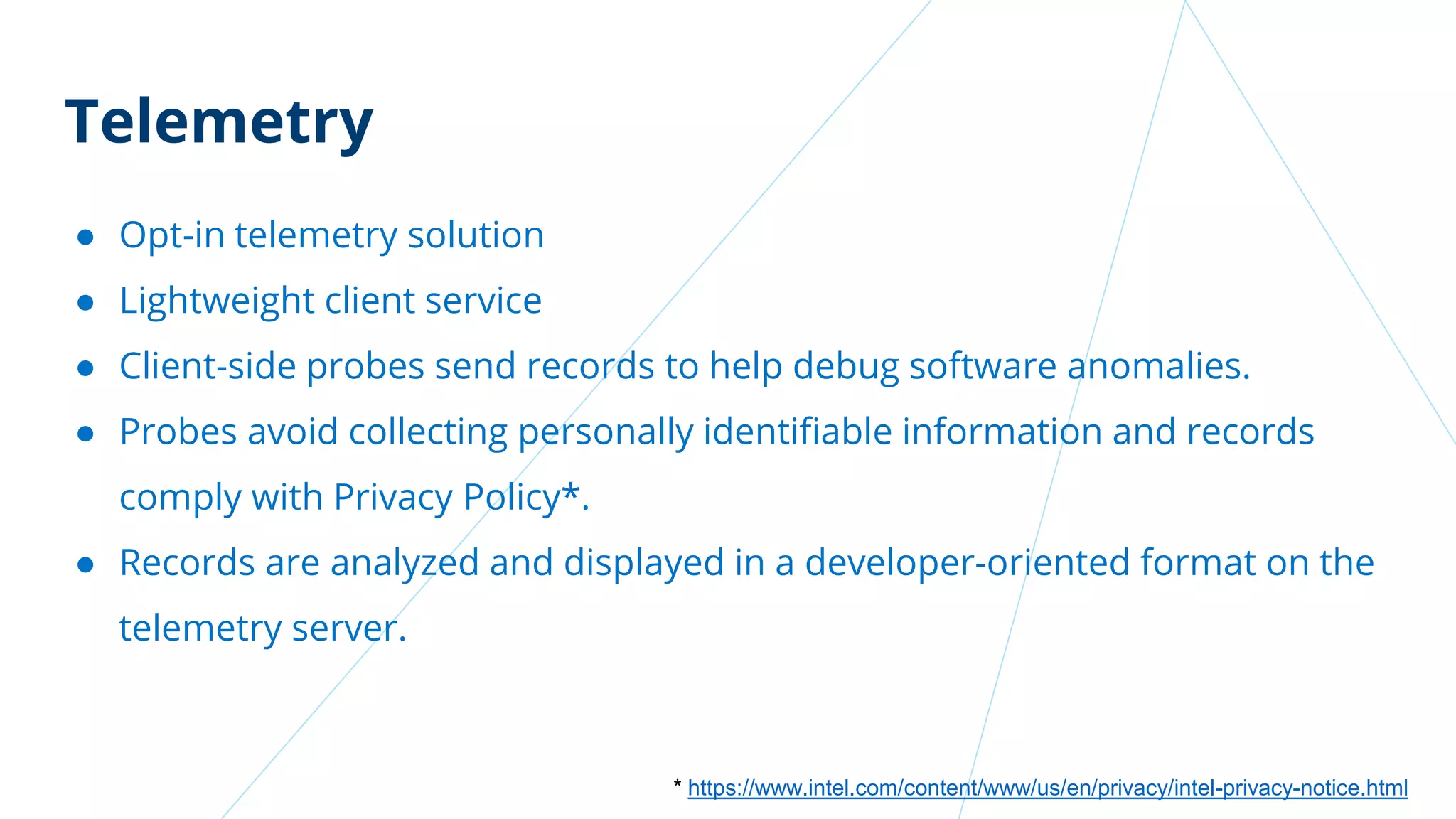

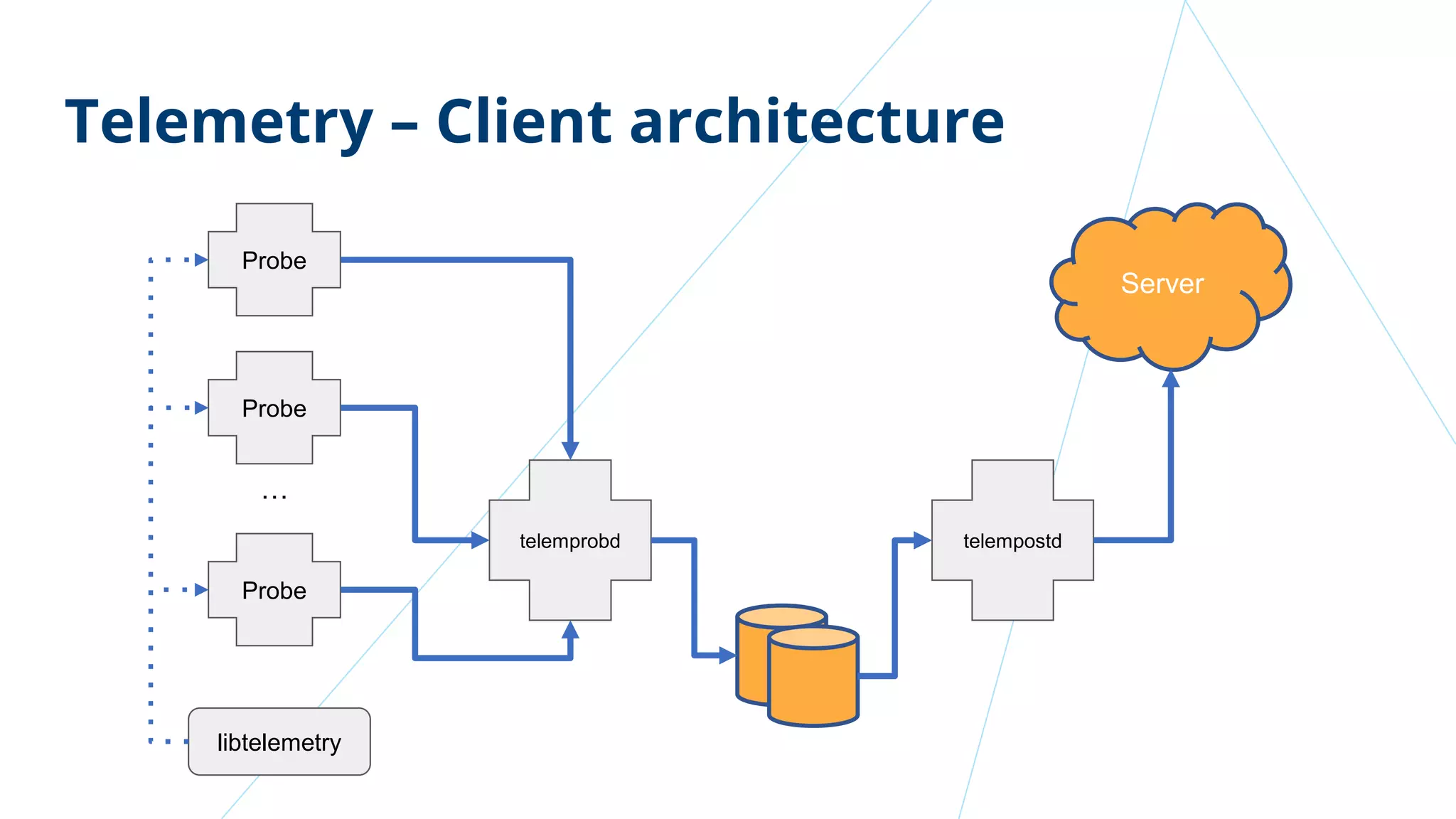

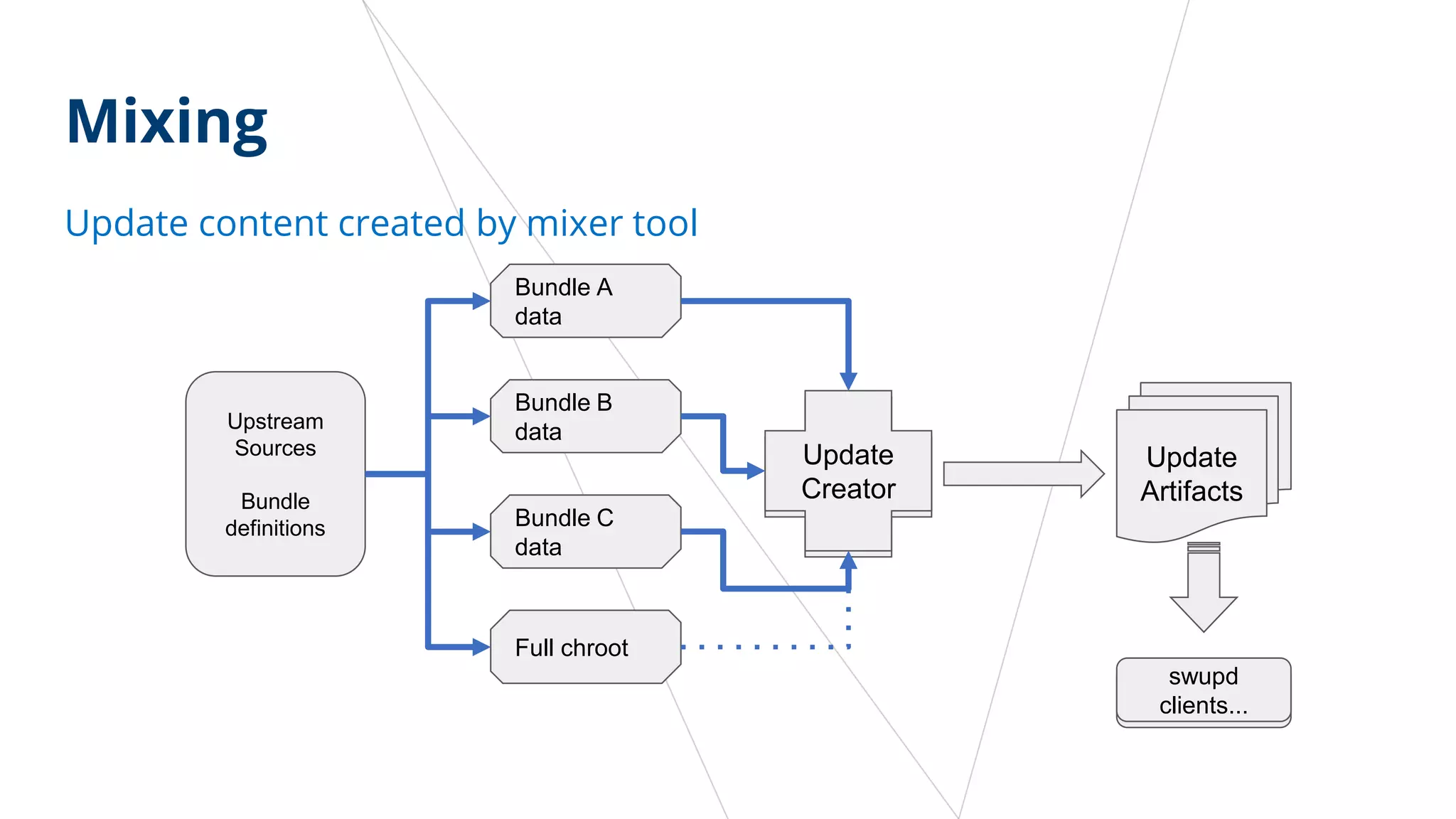

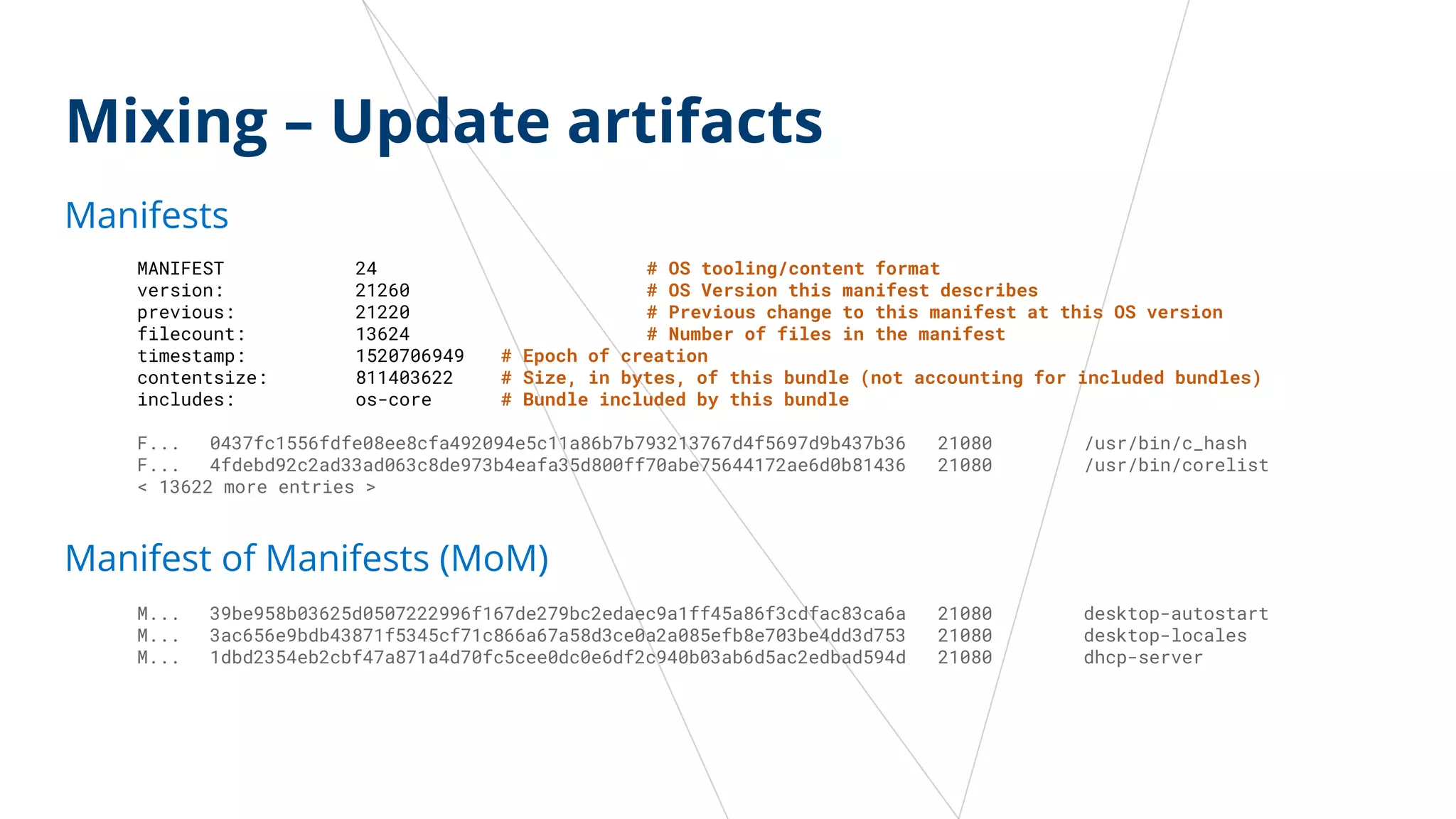

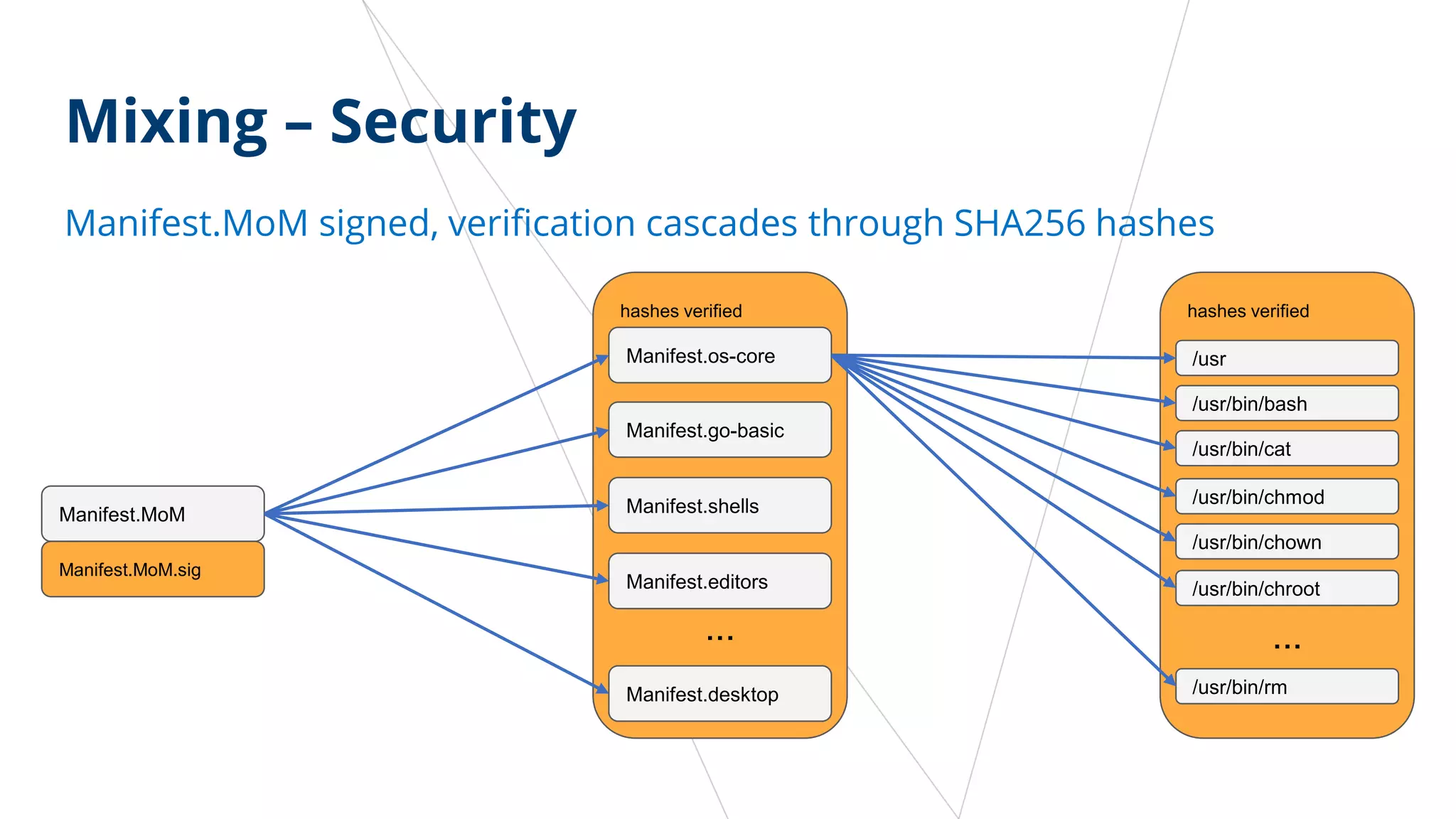

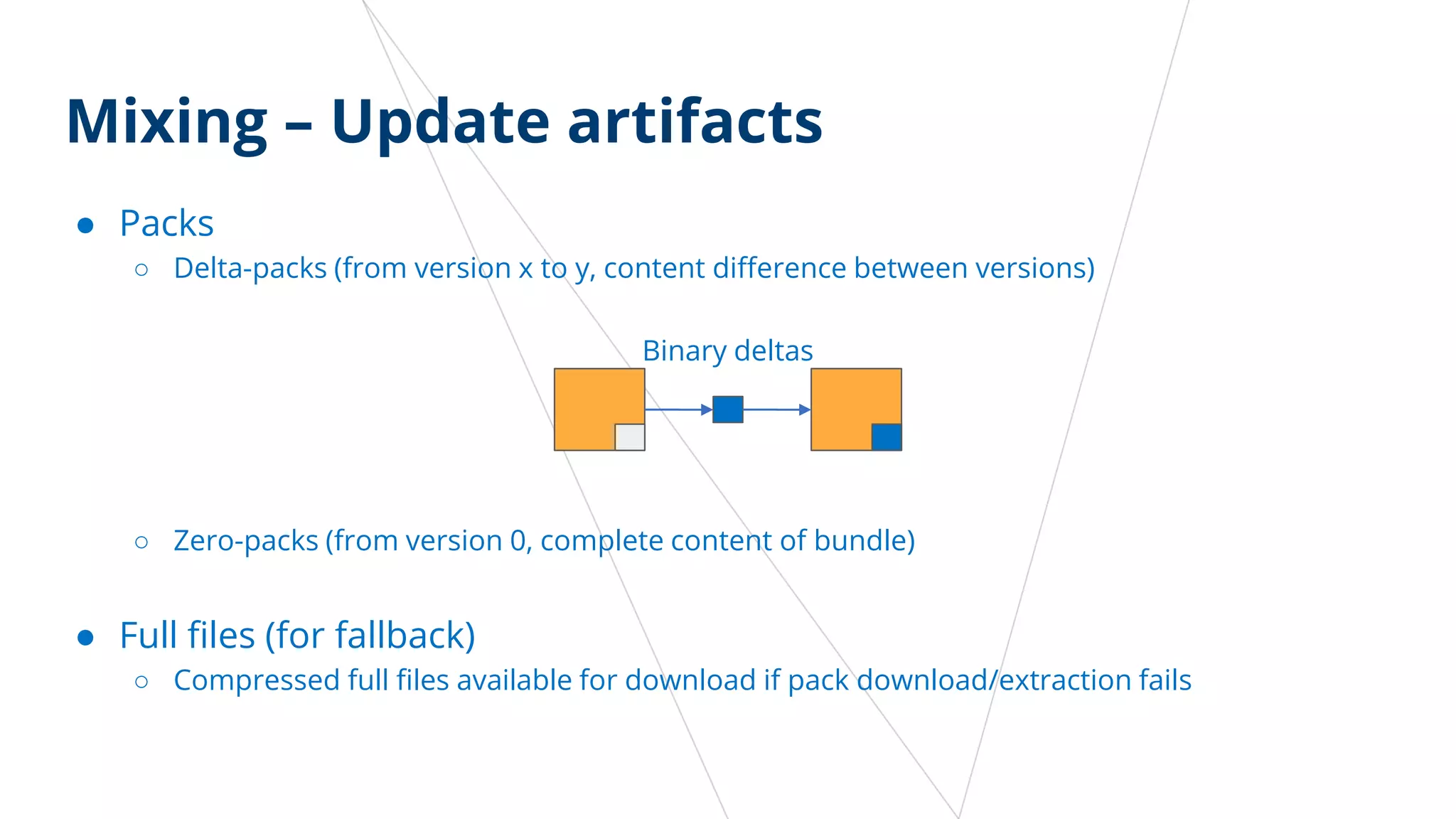

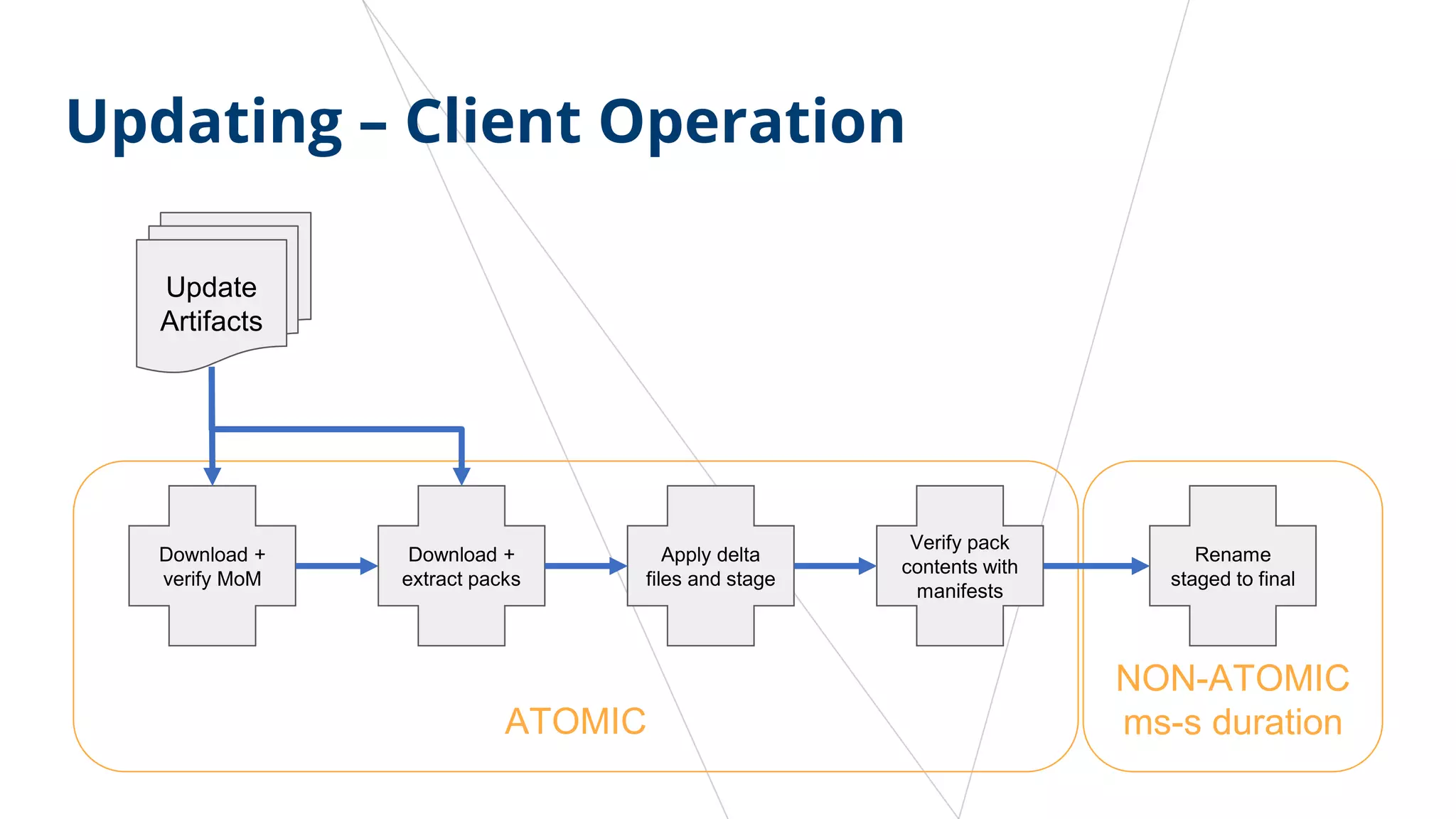

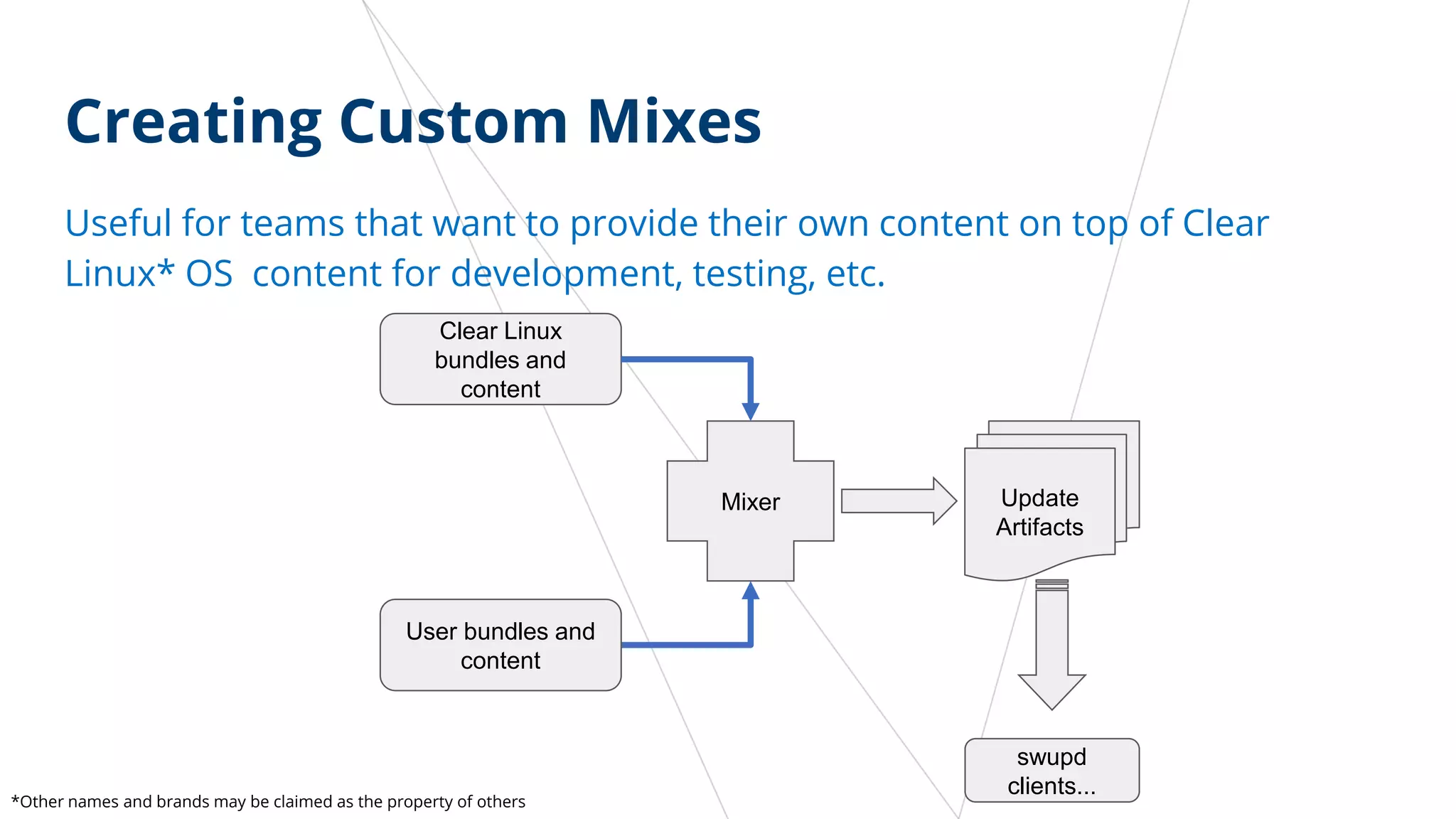

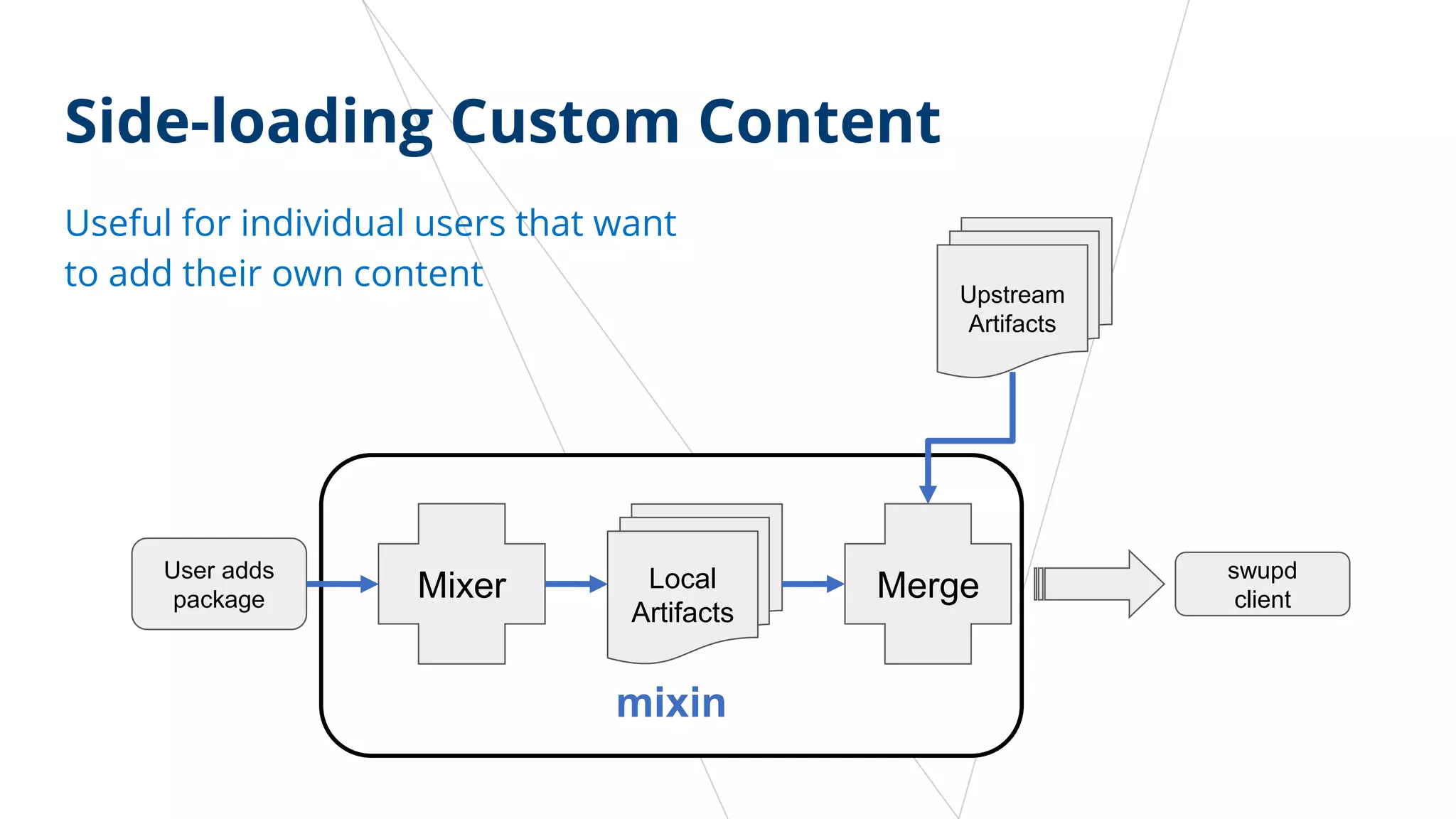

This document gives an overview of Clear Linux OS, focusing on its architecture, performance optimizations, and stateless design. It highlights the rolling-release model, developer-focused bundles, and an opt-in telemetry solution for monitoring software performance. It also covers the update process and the customization options available to individual users and teams, with attention to keeping system updates secure and efficient.