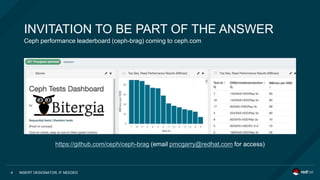

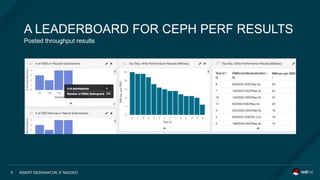

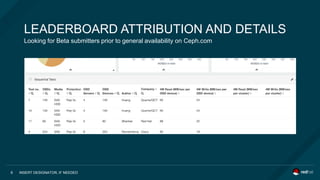

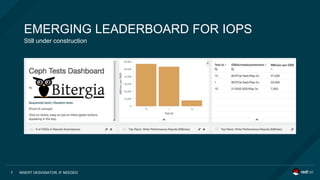

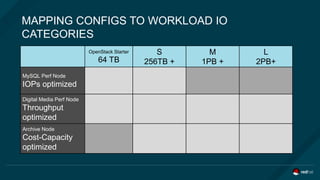

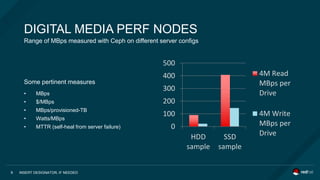

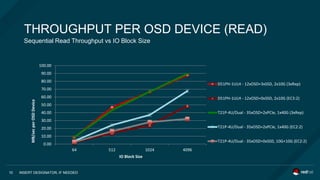

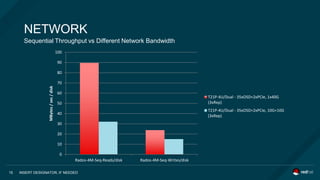

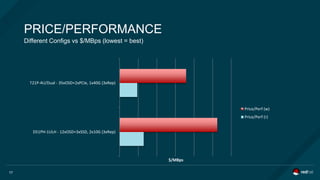

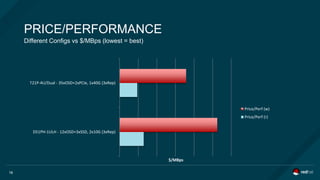

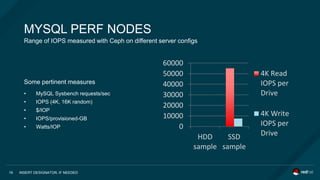

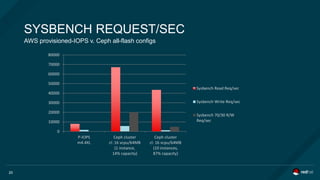

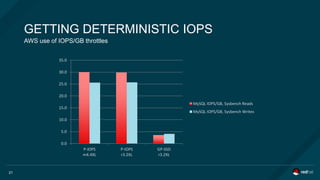

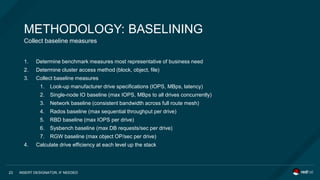

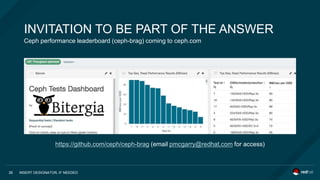

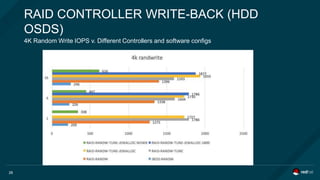

The document discusses Ceph performance profiling and addresses common community questions about how Ceph performs under various workloads and configurations. It presents performance metrics, including IOPS and throughput, across different setups, and outlines methodologies for benchmarking and capacity planning. It also introduces a performance leaderboard where users can compare results and optimize their configurations.