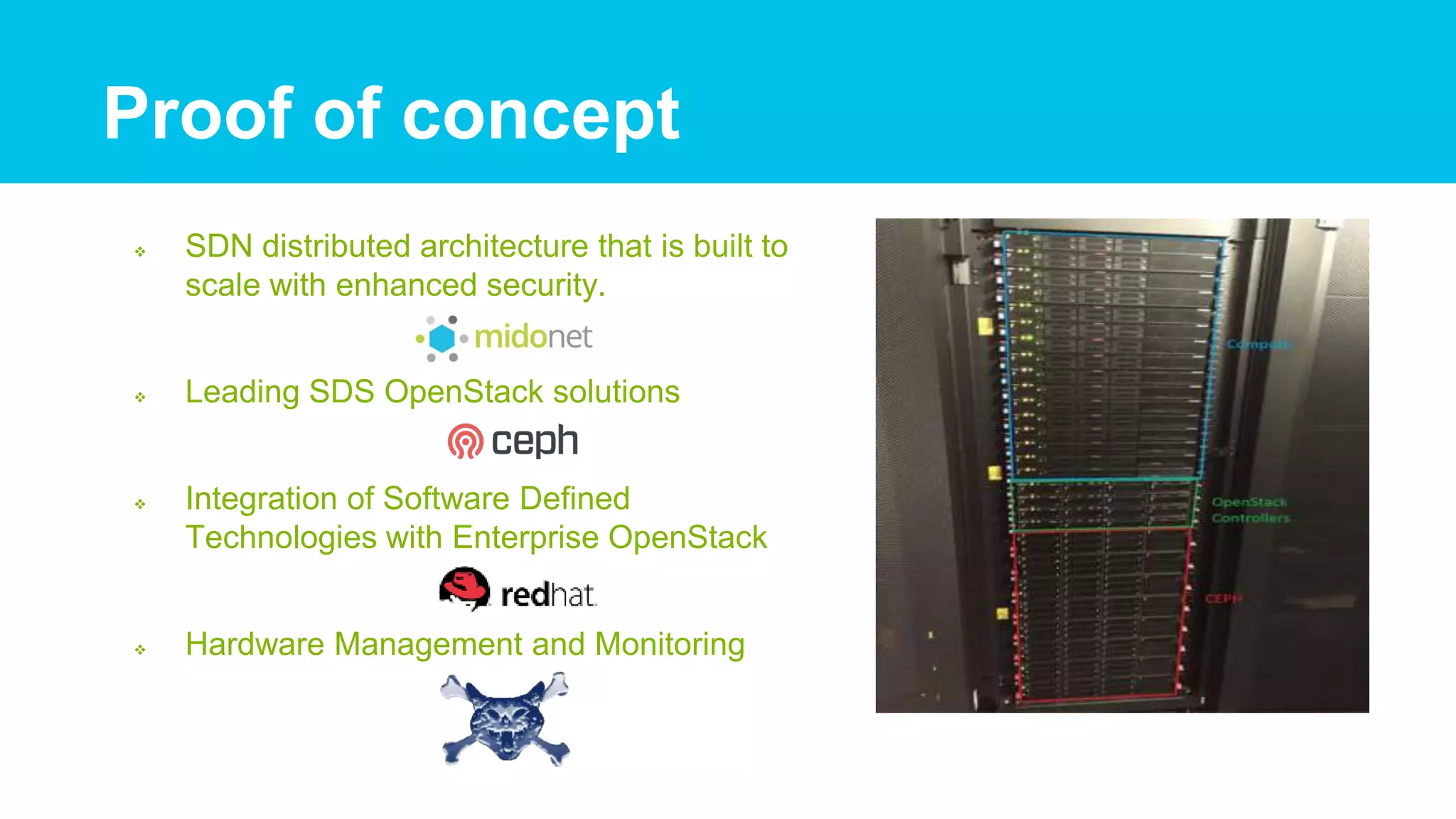

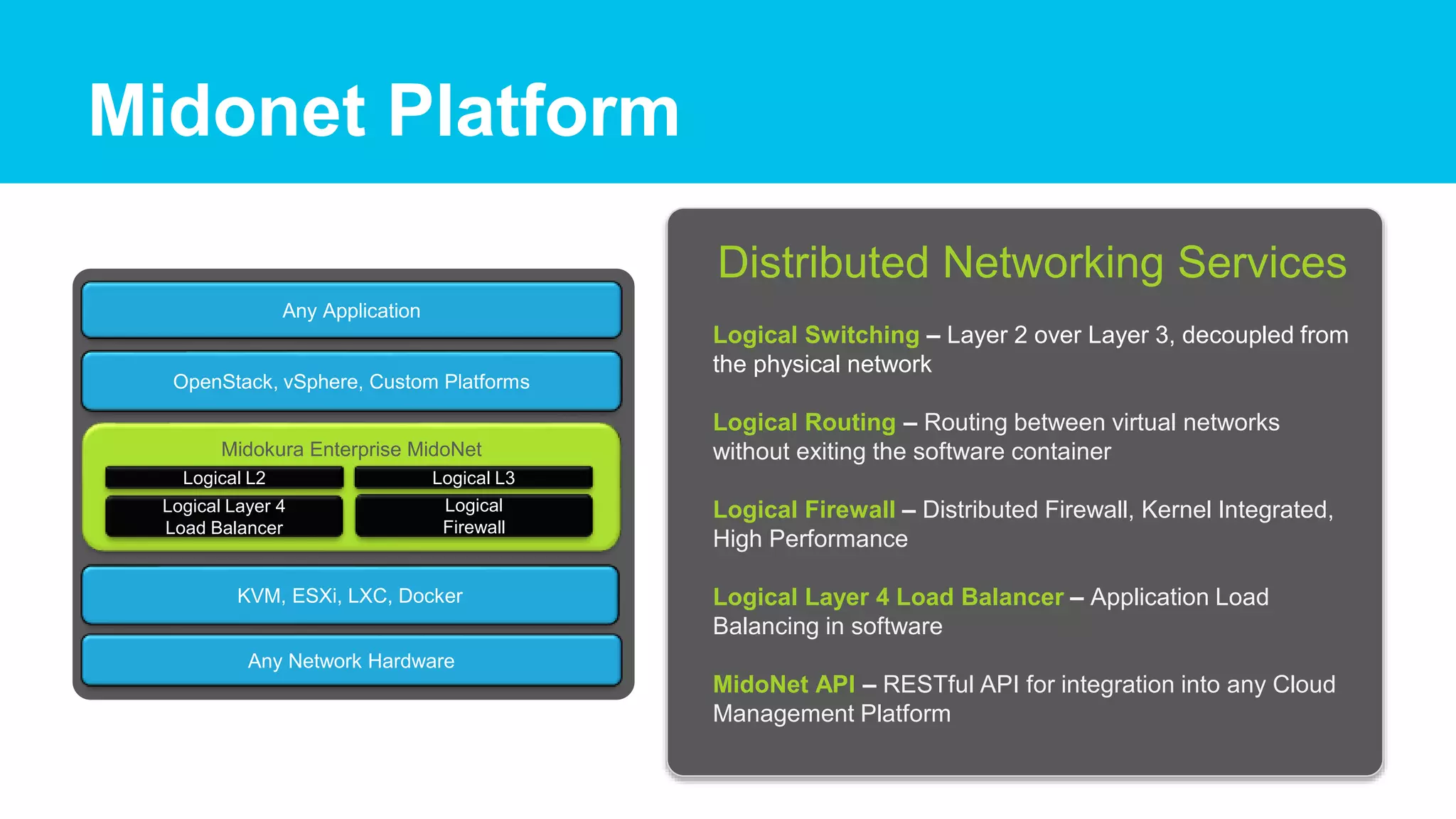

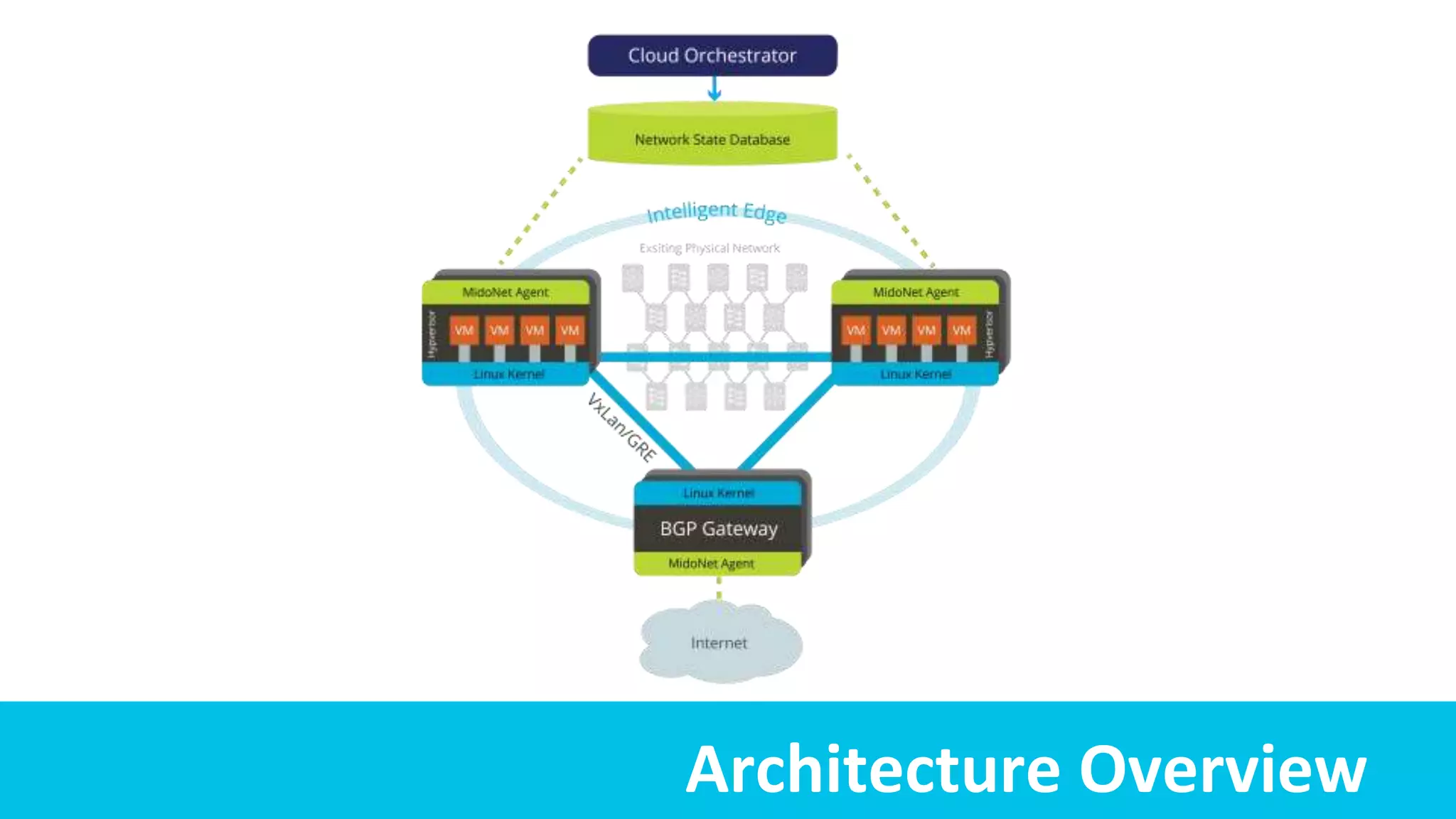

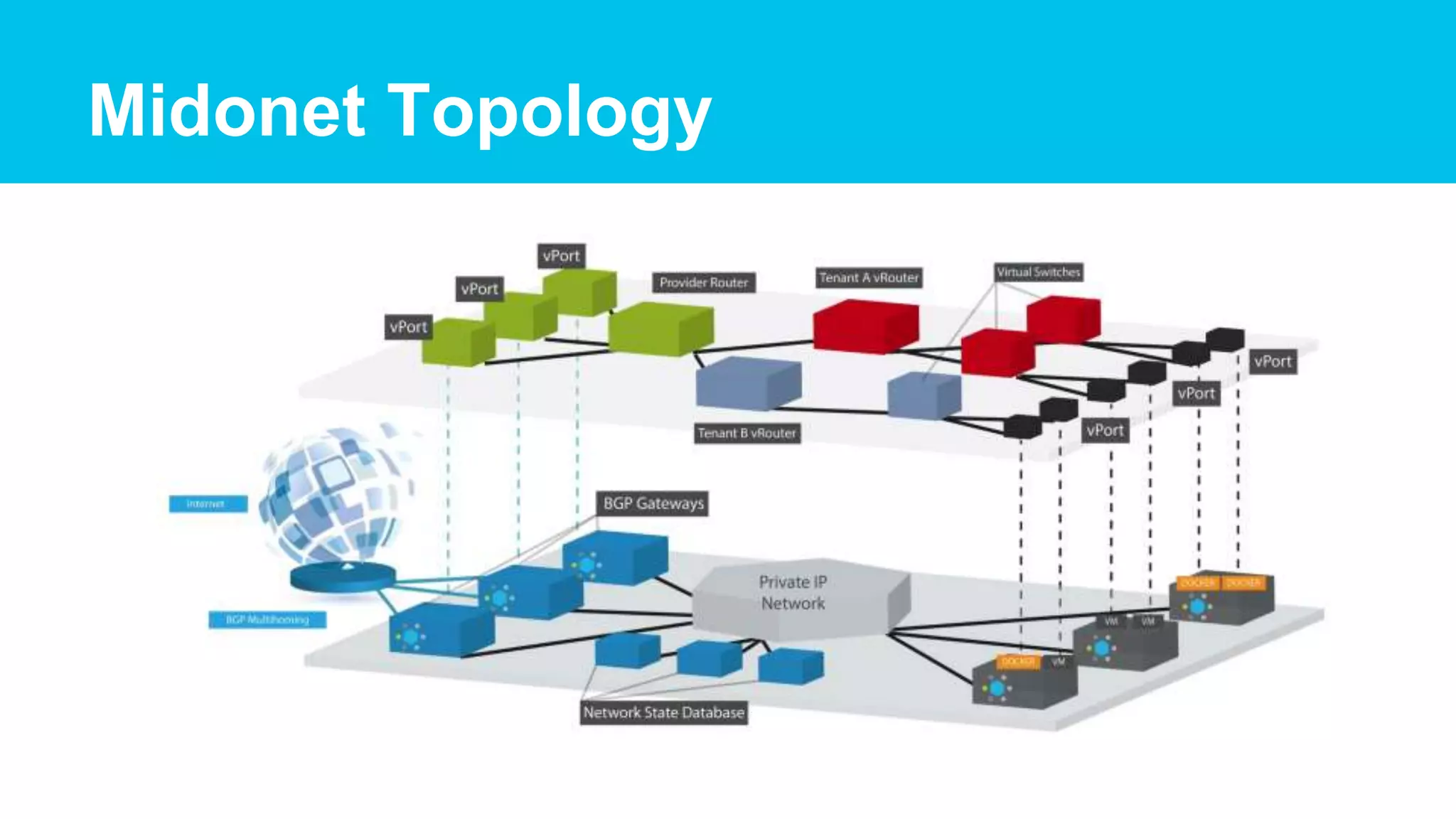

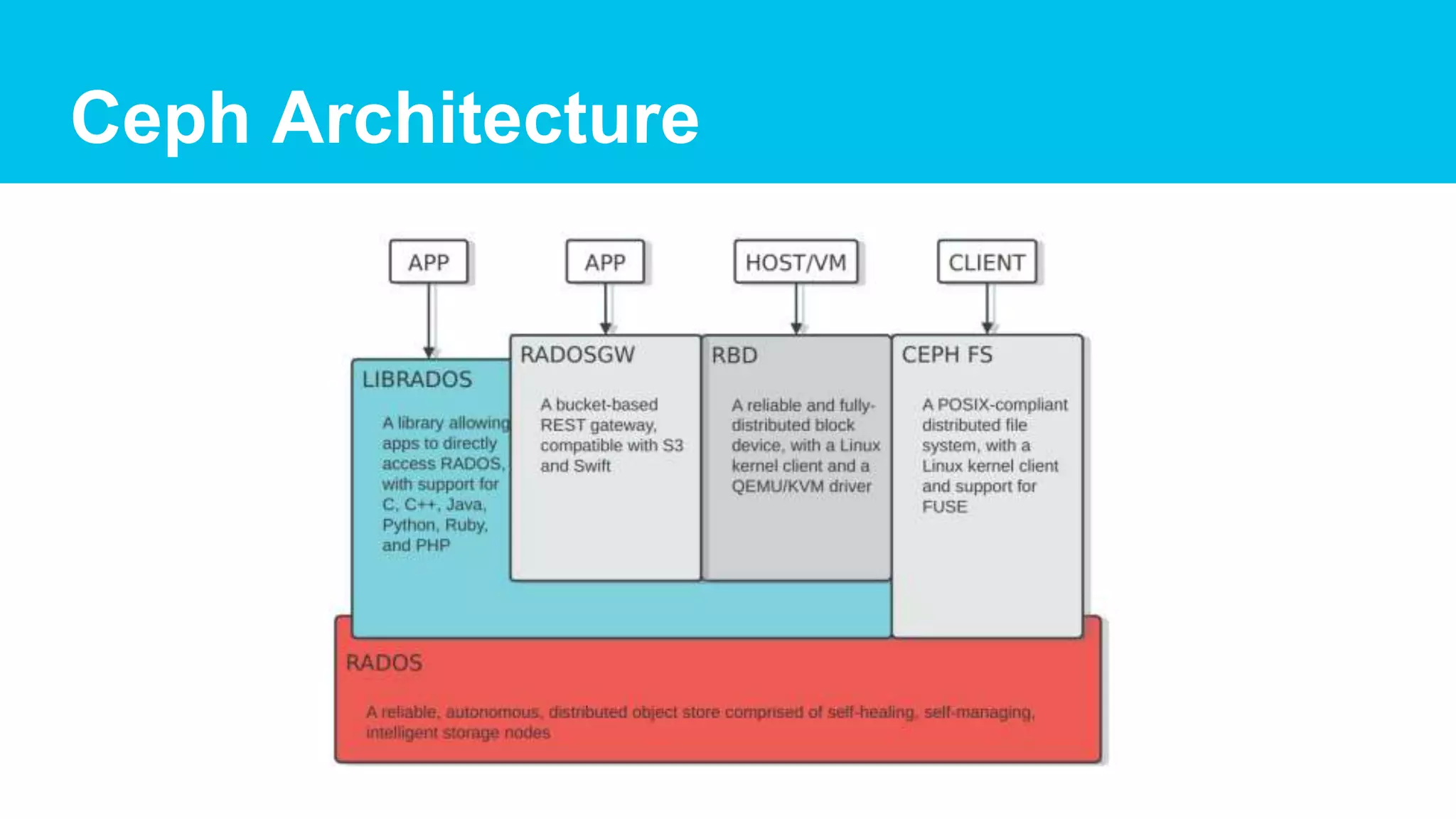

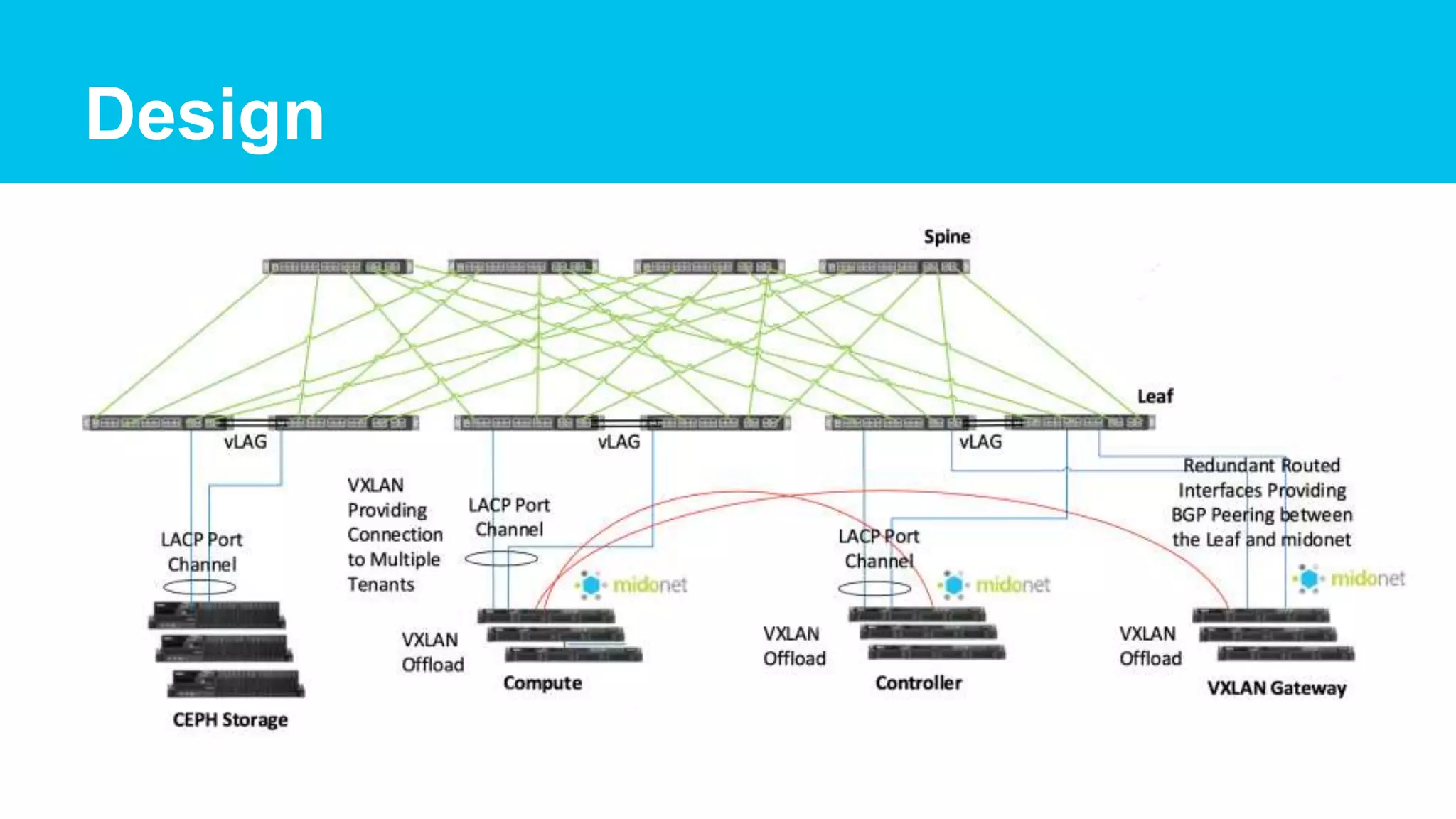

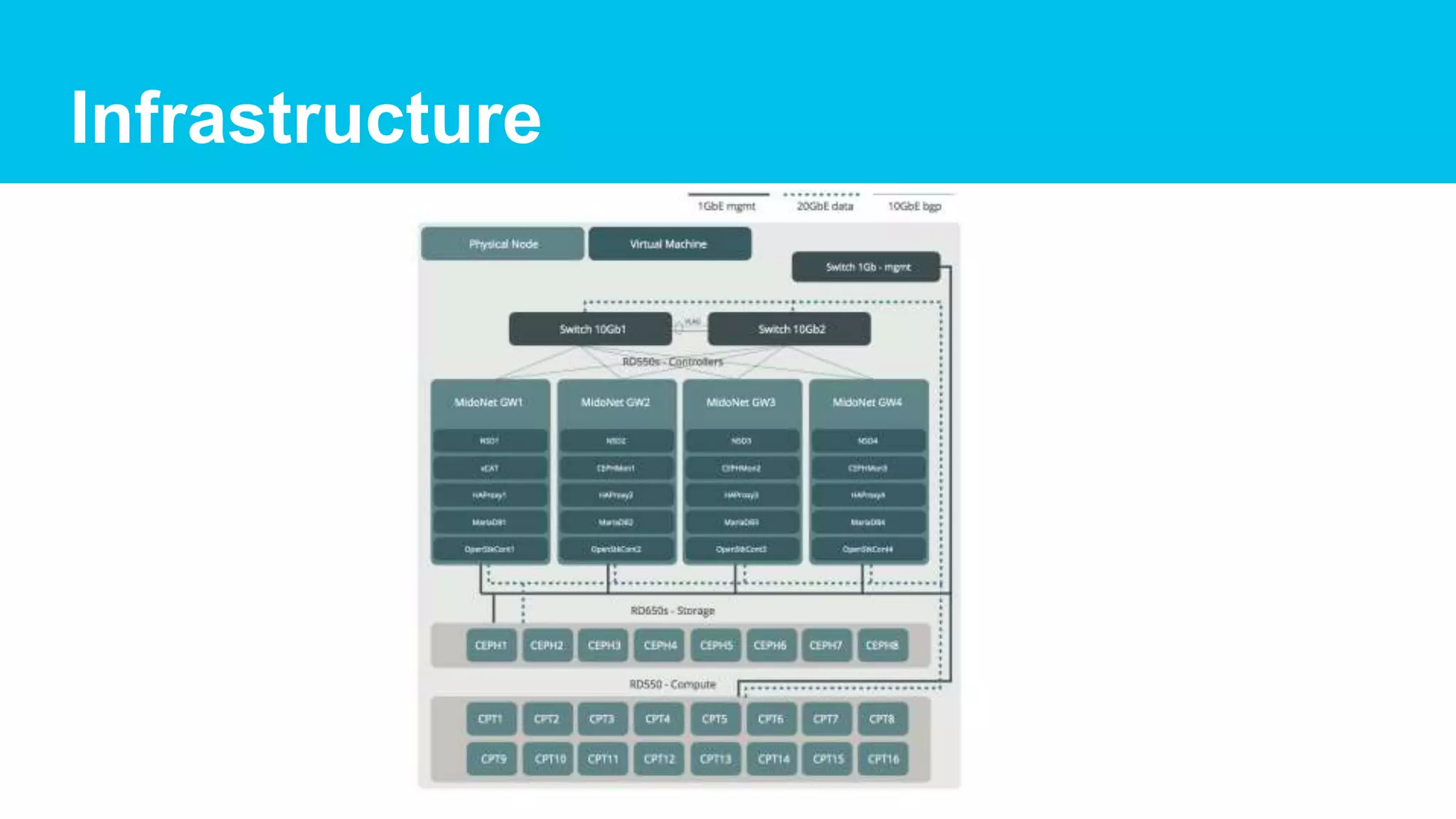

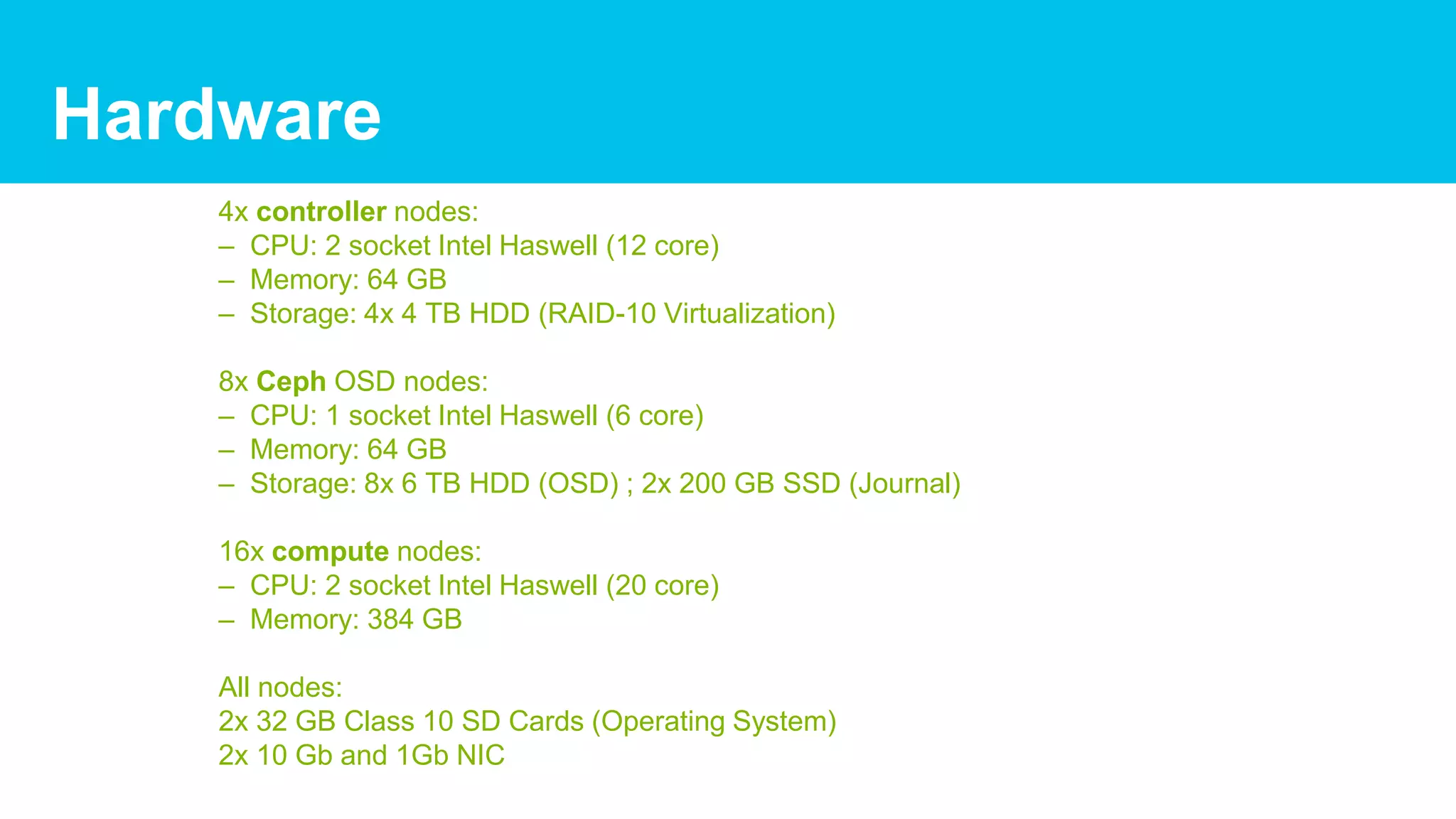

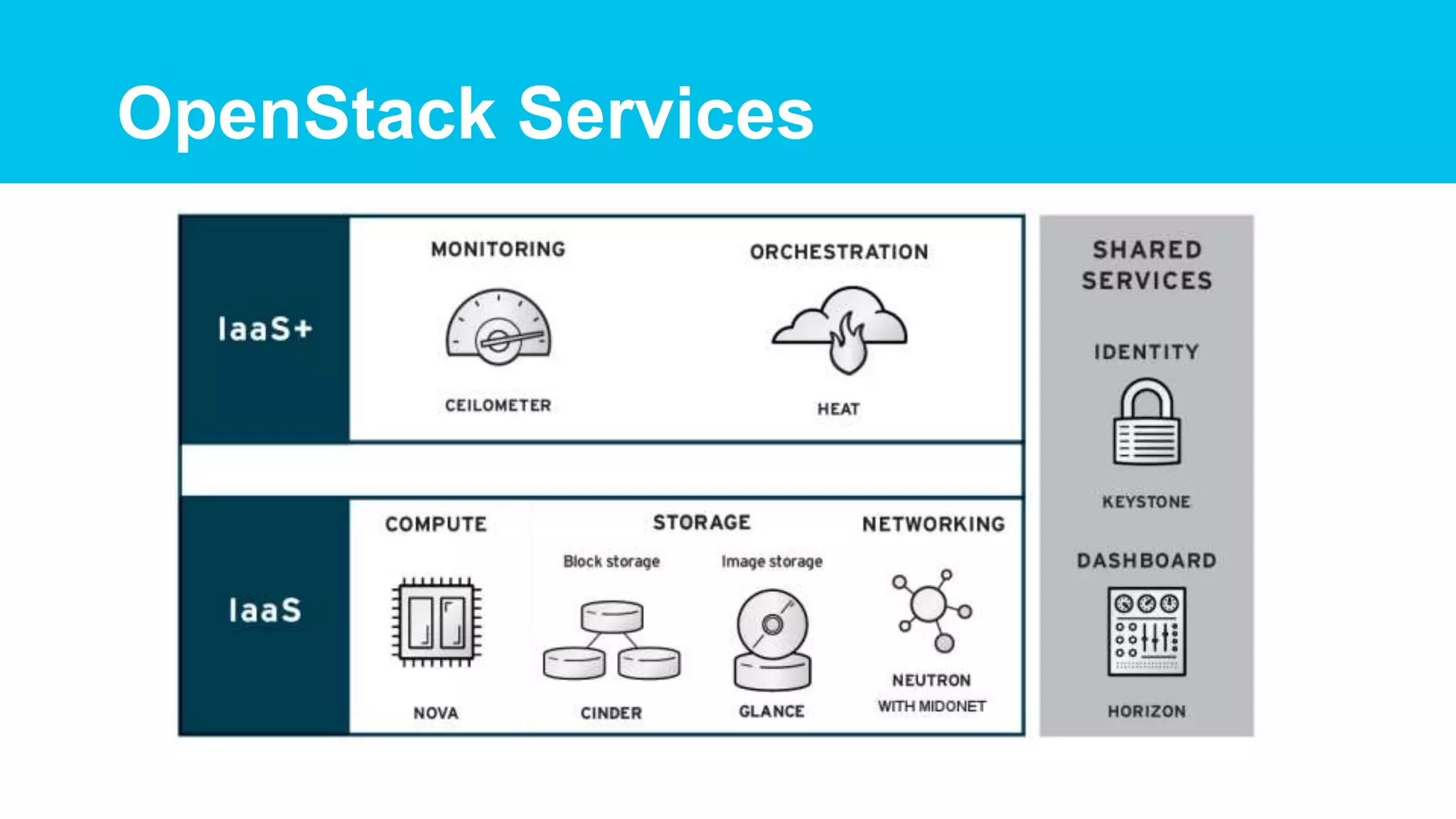

This document summarizes a proof of concept (POC) for a software-defined data center built on OpenStack and Midokura MidoNet software-defined networking. The POC used 4 controllers, 8 Ceph storage nodes, and 16 compute nodes, with MidoNet providing logical Layer 2 to 4 networking services. Key lessons learned included planning the underlay network configuration, optimizing ZooKeeper connections, and streamlining OpenStack deployment, which can be complex. Performance testing showed that Ceph throughput was higher for reads than for writes, and that SSD journaling improved IOPS. The streamlined workflow provided by the software-defined infrastructure could help organizations reduce costs and management complexity.