- The document is a slide deck for a webinar on building a basic blogging application using MongoDB.

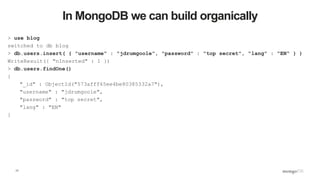

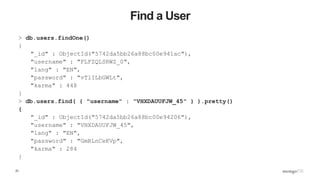

- It covers MongoDB concepts like documents, collections and indexes. It then demonstrates how to install MongoDB, connect to it using the mongo shell, and insert documents.

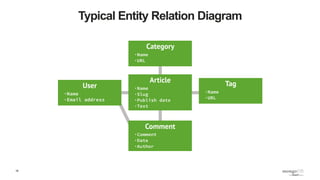

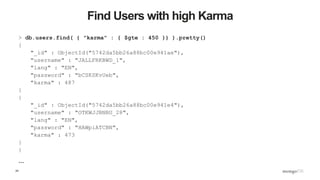

- The slide deck then models a basic blogging application using MongoDB, creating collections for users, articles and comments. It shows how to query and update documents and how to bulk-load a large volume of generated seed data.

![6

Document Store

{
  name : "Joe Drumgoole",
  title : "Director of Developer Advocacy",
  Address : {
    address1 : "Latin Hall",
    address2 : "Golden Lane",
    eircode : "D09 N623"
  },
  expertise : [ "MongoDB", "Python", "Javascript" ],
  employee_number : 320,
  location : [ 53.34, -6.26 ]

}](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-6-320.jpg)

![7

MongoDB Documents are Typed

{
  name : "Joe Drumgoole",
  title : "Director of Developer Advocacy",
  Address : {
    address1 : "Latin Hall",
    address2 : "Golden Lane",
    eircode : "D09 N623"
  },
  expertise : [ "MongoDB", "Python", "Javascript" ],
  employee_number : 320,
  location : [ 53.34, -6.26 ]
}

Strings

Nested Document

Array

Integer

Geo-spatial Coordinates](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-7-320.jpg)
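
From Python, the same document is an ordinary `dict`, and PyMongo maps nested documents to `dict`, arrays to `list`, strings to `str` and whole numbers to `int`. The sketch below needs no server; it only lists the Python type of each field of the slide's document. The variable names `document` and `field_types` are illustrative, not from the deck.

```python
# The document from the slide, written as a plain Python dict.
document = {
    "name": "Joe Drumgoole",
    "title": "Director of Developer Advocacy",
    "Address": {
        "address1": "Latin Hall",
        "address2": "Golden Lane",
        "eircode": "D09 N623",
    },
    "expertise": ["MongoDB", "Python", "Javascript"],
    "employee_number": 320,
    "location": [53.34, -6.26],
}

# Map each top-level field to the name of its Python type.
field_types = {field: type(value).__name__ for field, value in document.items()}

for field, type_name in field_types.items():
    print(field, "->", type_name)
```

The coordinate pair is simply a list of two floats here; what makes it geo-spatial is how it is indexed and queried, not a special Python type.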

![10

Running mongod

JD10Gen:mongodb jdrumgoole$ ./bin/mongod --dbpath /data/b2b

2016-05-23T19:21:07.767+0100 I CONTROL [initandlisten] MongoDB starting : pid=49209 port=27017 dbpath=/data/b2b 64-bit host=JD10Gen.local

2016-05-23T19:21:07.768+0100 I CONTROL [initandlisten] db version v3.2.6

2016-05-23T19:21:07.768+0100 I CONTROL [initandlisten] git version: 05552b562c7a0b3143a729aaa0838e558dc49b25

2016-05-23T19:21:07.768+0100 I CONTROL [initandlisten] allocator: system

2016-05-23T19:21:07.768+0100 I CONTROL [initandlisten] modules: none

2016-05-23T19:21:07.768+0100 I CONTROL [initandlisten] build environment:

2016-05-23T19:21:07.768+0100 I CONTROL [initandlisten] distarch: x86_64

2016-05-23T19:21:07.768+0100 I CONTROL [initandlisten] target_arch: x86_64

2016-05-23T19:21:07.768+0100 I CONTROL [initandlisten] options: { storage: { dbPath: "/data/b2b" } }

2016-05-23T19:21:07.769+0100 I - [initandlisten] Detected data files in /data/b2b created by the 'wiredTiger' storage engine, so setting the active storage engine to 'wiredTiger'.

2016-05-23T19:21:07.769+0100 I STORAGE [initandlisten] wiredtiger_open config: create,cache_size=4G,session_max=20000,eviction=(threads_max=4),config_base=false,statistics=(fast),log=(enabled=true,archive=true,path=journal,compressor=snappy),file_manager=(close_idle_time=100000),checkpoint=(wait=60,log_size=2GB),statistics_log=(wait=0),

2016-05-23T19:21:08.837+0100 I CONTROL [initandlisten]

2016-05-23T19:21:08.838+0100 I CONTROL [initandlisten] ** WARNING: soft rlimits too low. Number of files is 256, should be at least 1000

2016-05-23T19:21:08.840+0100 I NETWORK [HostnameCanonicalizationWorker] Starting hostname canonicalization worker

2016-05-23T19:21:08.840+0100 I FTDC [initandlisten] Initializing full-time diagnostic data capture with directory '/data/b2b/diagnostic.data'

2016-05-23T19:21:08.841+0100 I NETWORK [initandlisten] waiting for connections on port 27017

2016-05-23T19:21:09.148+0100 I NETWORK [initandlisten] connection accepted from 127.0.0.1:59213 #1 (1 connection now open)](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-10-320.jpg)

![11

Connecting Via The Shell

$ ./bin/mongo

MongoDB shell version: 3.2.6

connecting to: test

Server has startup warnings:

2016-05-17T11:46:03.516+0100 I CONTROL [initandlisten]

2016-05-17T11:46:03.516+0100 I CONTROL [initandlisten] ** WARNING: soft rlimits too low. Number of files is 256, should be at least 1000

>](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-11-320.jpg)

![18

How do we do this in a program?

'''
Created on 17 May 2016

@author: jdrumgoole
'''
import pymongo

# client defaults to localhost and port 27017, e.g. MongoClient('localhost', 27017)
client = pymongo.MongoClient()
blogDatabase = client[ "blog" ]
usersCollection = blogDatabase[ "users" ]

usersCollection.insert_one( { "username" : "jdrumgoole",
                              "password" : "top secret",
                              "lang" : "EN" } )

user = usersCollection.find_one()

print( user )](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-18-320.jpg)

![19

Next up Articles

…

articlesCollection = blogDatabase[ "articles" ]

author = "jdrumgoole"

article = { "title" : "This is my first post",
            "body" : "This is the longer body text for my blog post. We can add lots of text here.",
            "author" : author,
            "tags" : [ "joe", "general", "Ireland", "admin" ] }

#
# Let's check if our author exists
#
if usersCollection.find_one( { "username" : author } ) :
    articlesCollection.insert_one( article )
else:
    raise ValueError( "Author %s does not exist" % author )](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-19-320.jpg)

![20

Create a new type of article

#
# Let's add a new type of article with a posting date and a section
#
import datetime  # needed for the postDate value below

author = "jdrumgoole"
title = "This is a post on MongoDB"

newPost = { "title" : title,
            "body" : "MongoDB is the world's most popular NoSQL database. It is a document database",
            "author" : author,
            "tags" : [ "joe", "mongodb", "Ireland" ],
            "section" : "technology",
            "postDate" : datetime.datetime.now(),
          }

#
# Let's check if our author exists
#
if usersCollection.find_one( { "username" : author } ) :
    articlesCollection.insert_one( newPost )](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-20-320.jpg)
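
The two article slides repeat the same "does the author exist?" check. One way to avoid the repetition is a small helper, sketched below. The name `insert_article` and its parameters are hypothetical; `users` and `articles` are assumed to be PyMongo collections, or any objects offering `find_one` and `insert_one`.

```python
def insert_article(users, articles, article):
    """Insert `article` only if its author exists in `users`.

    Returns the _id of the inserted article, or raises ValueError
    when no user document has a matching username.
    """
    author = article["author"]
    if users.find_one({"username": author}) is None:
        raise ValueError("Author %s does not exist" % author)
    return articles.insert_one(article).inserted_id


# Possible usage against a local server (assumes the "blog" database from the slides):
#   import pymongo
#   blog = pymongo.MongoClient()["blog"]
#   insert_article(blog["users"], blog["articles"],
#                  {"title": "This is my first post", "author": "jdrumgoole"})
```

The check and the insert are two separate operations, so this is an application-level convention rather than a constraint enforced by the database.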

![21

Make a lot of articles 1

import pymongo
import string
import datetime
import random

# string.ascii_letters and range replace the Python 2-only string.letters and xrange
def randomString( size, letters = string.ascii_letters ):
    return "".join( [random.choice( letters ) for _ in range( size )] )

client = pymongo.MongoClient()

def makeArticle( count, author, timestamp ):
    return { "_id" : count,
             "title" : randomString( 20 ),
             "body" : randomString( 80 ),
             "author" : author,
             "postdate" : timestamp }

def makeUser( username ):
    return { "username" : username,
             "password" : randomString( 10 ) ,
             "karma" : random.randint( 0, 500 ),
             "lang" : "EN" }](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-21-320.jpg)

![22

Make a lot of articles 2

blogDatabase = client[ "blog" ]
usersCollection = blogDatabase[ "users" ]
articlesCollection = blogDatabase[ "articles" ]

# Note: initialize_ordered_bulk_op() is the PyMongo 3.x bulk API; it was removed in PyMongo 4
bulkUsers = usersCollection.initialize_ordered_bulk_op()
bulkArticles = articlesCollection.initialize_ordered_bulk_op()

ts = datetime.datetime.now()

for i in range( 1000000 ) :
    #username = randomString( 10, string.ascii_uppercase ) + "_" + str( i )
    username = "USER_" + str( i )
    bulkUsers.insert( makeUser( username ) )
    ts = ts + datetime.timedelta( seconds = 1 )
    bulkArticles.insert( makeArticle( i, username, ts ))
    # Flush the queued inserts every 500 documents and start a fresh batch
    if ( i % 500 == 0 ) :
        bulkUsers.execute()
        bulkArticles.execute()
        bulkUsers = usersCollection.initialize_ordered_bulk_op()
        bulkArticles = articlesCollection.initialize_ordered_bulk_op()

# Flush whatever is left after the loop
bulkUsers.execute()
bulkArticles.execute()](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-22-320.jpg)
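
The same batching idea can also be written with `insert_many`, which is available in both PyMongo 3 and 4. The sketch below is not the deck's code: `insert_in_batches` is a hypothetical helper, and the default batch size of 500 simply mirrors the slide. It accepts any object with an `insert_many` method, so it can be tried without a server.

```python
from itertools import islice


def insert_in_batches(collection, documents, batch_size=500):
    """Insert `documents` in fixed-size batches and return how many were sent.

    `documents` may be any iterable, including a generator, so the full
    data set never has to be held in memory at once.
    """
    iterator = iter(documents)
    inserted = 0
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            break  # nothing left, so no empty batch is ever sent
        collection.insert_many(batch, ordered=True)
        inserted += len(batch)
    return inserted


# Possible usage with the helpers from the previous slide:
#   users = pymongo.MongoClient()["blog"]["users"]
#   insert_in_batches(users, (makeUser("USER_" + str(i)) for i in range(1000000)))
```

Because the loop stops as soon as a batch comes back empty, there is no separate final flush to remember.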

![26

Update an article to add Comments 1

> db.articles.find( { "_id" : 19 } ).pretty()

{
    "_id" : 19,
    "body" : "nTzOofOcnHKkJxpjKAyqTTnKZMFzzkWFeXtBRuEKsctuGBgWIrEBrYdvFIVHJWaXLUTVUXblOZZgUqWu",
    "postdate" : ISODate("2016-05-23T12:02:46.830Z"),
    "author" : "ASWTOMMABN_19",
    "title" : "CPMaqHtAdRwLXhlUvsej"
}

> db.articles.update( { _id : 18 }, { $set : { comments : [] }} )

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-26-320.jpg)

![27

Update an article to add comments 2

> db.articles.find( { _id :18 } ).pretty()

{
    "_id" : 18,
    "body" : "KmwFSIMQGcIsRNTDBFPuclwcVJkoMcrIPwTiSZDYyatoKzeQiKvJkiVSrndXqrALVIYZxGpaMjucgXUV",
    "postdate" : ISODate("2016-05-23T16:04:39.497Z"),
    "author" : "USER_18",
    "title" : "wTLreIEyPfovEkBhJZZe",
    "comments" : [ ]
}

>](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-27-320.jpg)

![28

Update an article to add comments 3

> db.articles.update( { _id : 18 }, { $push : { comments : { username : "joe", comment : "hey first post" }}} )
WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })
> db.articles.find( { _id :18 } ).pretty()
{
    "_id" : 18,
    "body" : "KmwFSIMQGcIsRNTDBFPuclwcVJkoMcrIPwTiSZDYyatoKzeQiKvJkiVSrndXqrALVIYZxGpaMjucgXUV",
    "postdate" : ISODate("2016-05-23T16:04:39.497Z"),
    "author" : "USER_18",
    "title" : "wTLreIEyPfovEkBhJZZe",
    "comments" : [
        {
            "username" : "joe",
            "comment" : "hey first post"
        }
    ]
}

>](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-28-320.jpg)
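
The shell updates above have a direct PyMongo counterpart in `update_one`. The sketch below wraps the `$push` step in a hypothetical helper named `add_comment`; `articles` is assumed to be a PyMongo collection, or any object with a compatible `update_one`.

```python
def add_comment(articles, article_id, username, comment):
    """Append one comment to the article with the given _id.

    Returns True when exactly one article document was modified.
    """
    result = articles.update_one(
        {"_id": article_id},
        {"$push": {"comments": {"username": username, "comment": comment}}},
    )
    return result.modified_count == 1


# Possible usage, mirroring the shell session:
#   articles = pymongo.MongoClient()["blog"]["articles"]
#   add_comment(articles, 18, "joe", "hey first post")
```

`$push` creates the `comments` array if the field is missing, so the earlier `$set` to an empty array mainly serves to make the field explicit before the first comment arrives.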

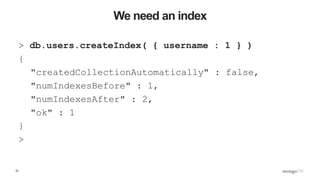

![37

Execution stage

"executionStages" : {
    "stage" : "FETCH",
    "nReturned" : 1,
    "executionTimeMillisEstimate" : 0,
    "docsExamined" : 1,
    "inputStage" : {
        "stage" : "IXSCAN",
        "nReturned" : 1,
        "executionTimeMillisEstimate" : 0,
        "keyPattern" : {
            "username" : 1
        },
        "indexName" : "username_1",
        "isMultiKey" : false,
        "isUnique" : false,
        "isSparse" : false,
        "isPartial" : false,
        "indexVersion" : 1,
        "direction" : "forward",
        "indexBounds" : {
            "username" : [
                "[\"USER_999999\", \"USER_999999\"]"
            ]
        },
        "keysExamined" : 1,
        "seenInvalidated" : 0
    }
}

}](https://image.slidesharecdn.com/b2b-webinar-2-yourfirstmongodbapplication-1-170126162857/85/Back-to-Basics-My-First-MongoDB-Application-37-320.jpg)
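
The plan above reads from the inside out: an `IXSCAN` on the `username_1` index feeds a `FETCH` that loads the single matching document. A small sketch of checking this programmatically follows; `stage_names` is a hypothetical helper, and the dictionary is a trimmed copy of the slide's output rather than a live result.

```python
def stage_names(stage):
    """Collect stage names from the outermost stage inward.

    Follows the single "inputStage" chain only; plans that branch
    through "inputStages" (plural) are not handled by this sketch.
    """
    names = []
    while stage is not None:
        names.append(stage["stage"])
        stage = stage.get("inputStage")
    return names


# Trimmed copy of the executionStages section shown on the slide.
execution_stages = {
    "stage": "FETCH",
    "nReturned": 1,
    "docsExamined": 1,
    "inputStage": {
        "stage": "IXSCAN",
        "indexName": "username_1",
        "keysExamined": 1,
    },
}

names = stage_names(execution_stages)
uses_index = "IXSCAN" in names
print(names, uses_index)

# With PyMongo, and assuming the explain output has an executionStats section:
#   plan = users.find({"username": "USER_999999"}).explain()
#   stage_names(plan["executionStats"]["executionStages"])
```

A query that cannot use an index reports a `COLLSCAN` stage instead, with `docsExamined` close to the size of the collection.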