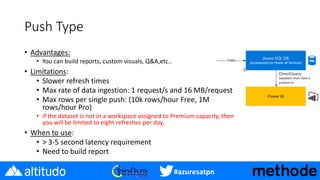

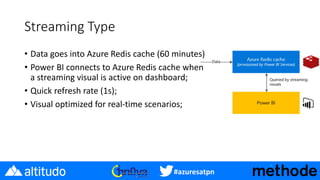

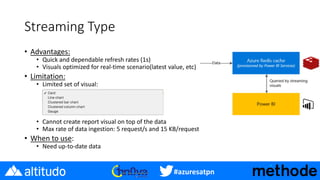

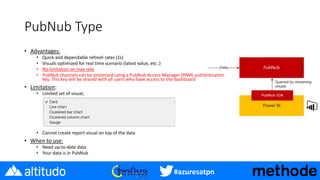

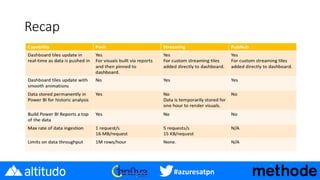

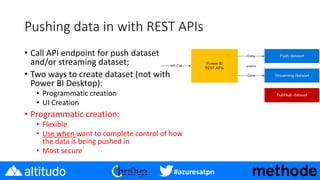

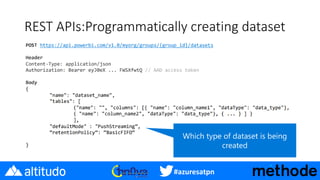

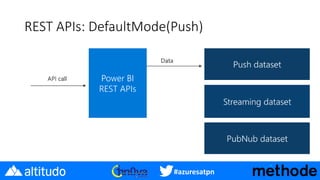

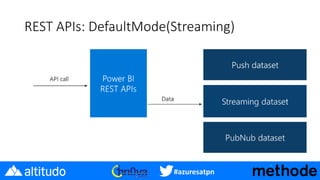

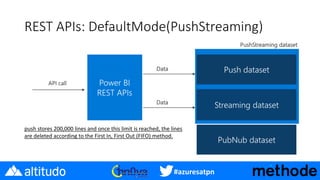

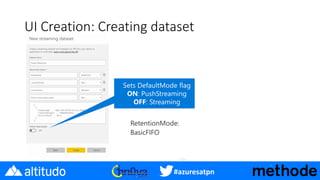

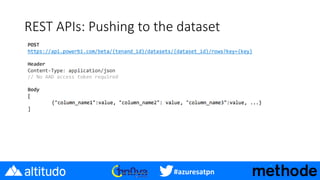

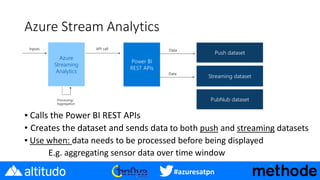

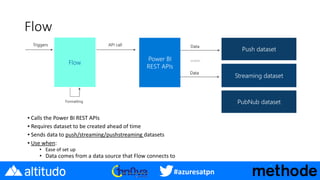

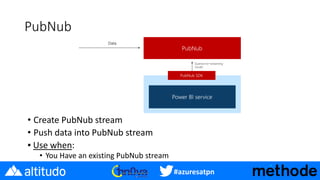

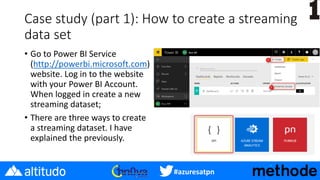

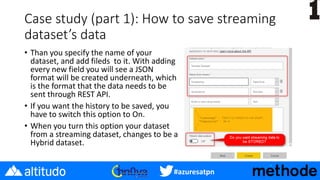

- The document discusses the real-time options in Power BI: push datasets, streaming datasets, and PubNub streaming datasets. It describes the characteristics of each option, including refresh rates, visual capabilities, advantages, and limitations.

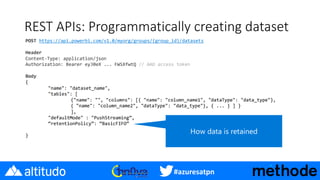

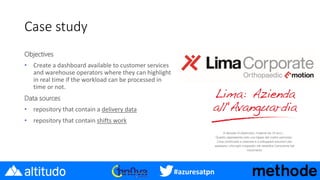

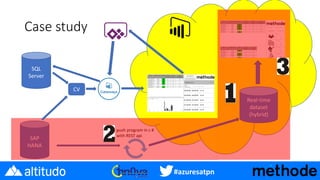

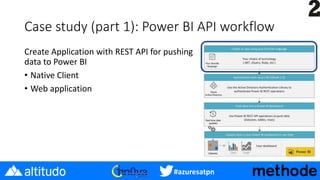

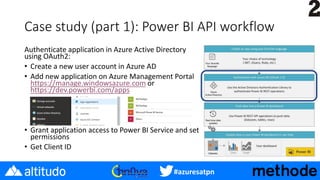

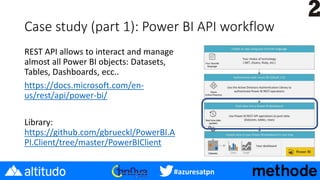

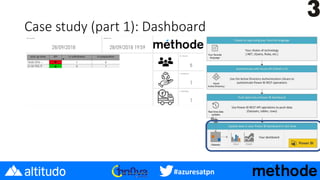

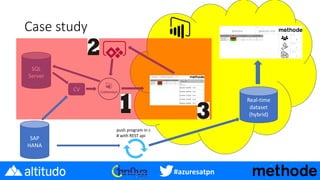

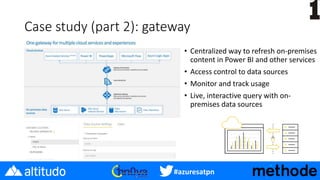

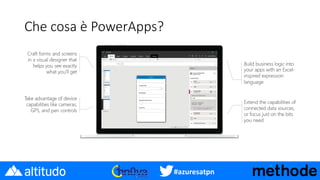

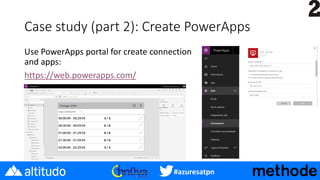

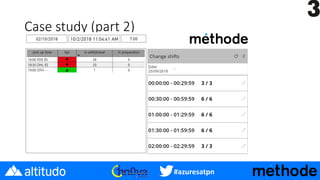

- A case study shows how to build a dashboard that monitors warehouse workload in real time. It uses a hybrid dataset, with data from SQL Server and SAP HANA pushed into Power BI through its REST APIs. PowerApps is also suggested for creating mobile apps connected to the real-time data.

- Additional resources cover the real-time streaming documentation, tutorials on building IoT dashboards and connecting Azure Stream Analytics, and the use of PubNub streams in Power BI.
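
The case study above pushes rows into Power BI through its REST API. The slides' own code is not reproduced here, so the following is only a minimal sketch of what such a push might look like, assuming the documented "post rows" endpoint for push datasets (`POST .../datasets/{datasetId}/tables/{tableName}/rows`) and an Azure AD access token obtained elsewhere. The dataset ID, the table name `Workload`, and the column names are hypothetical placeholders, not values from the presentation.

```python
import json
import urllib.request
from urllib.parse import quote

# Base URL of the Power BI REST API for datasets in "My workspace".
API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_push_rows_request(dataset_id, table_name, rows, access_token):
    """Build (but do not send) the HTTP request that appends rows to a push dataset table."""
    url = (
        f"{API_ROOT}/datasets/{quote(dataset_id, safe='')}"
        f"/tables/{quote(table_name, safe='')}/rows"
    )
    # The endpoint expects a JSON object with a single "rows" array.
    body = json.dumps({"rows": rows}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {access_token}",
        },
        method="POST",
    )


def push_rows(dataset_id, table_name, rows, access_token):
    """Send the rows to Power BI and return the HTTP status code."""
    request = build_push_rows_request(dataset_id, table_name, rows, access_token)
    with urllib.request.urlopen(request) as response:
        return response.status


if __name__ == "__main__":
    # Hypothetical warehouse-workload rows, e.g. the result of a SQL Server or SAP HANA query.
    sample_rows = [
        {"warehouse": "W1", "open_picks": 42, "timestamp": "2024-01-01T08:00:00Z"},
    ]
    request = build_push_rows_request("<dataset-id>", "Workload", sample_rows, "<token>")
    print(request.get_method(), request.full_url)
```

Keeping the request construction separate from the network call makes it possible to inspect the payload without valid credentials. In a setup like the case study, a scheduled job would query the source systems and call `push_rows` with each new batch.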