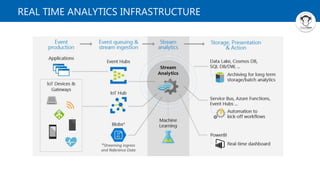

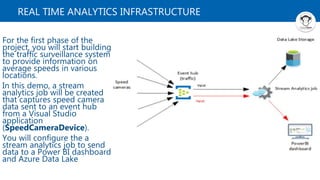

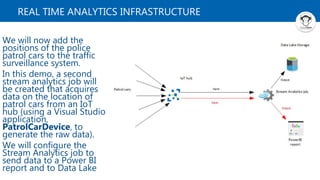

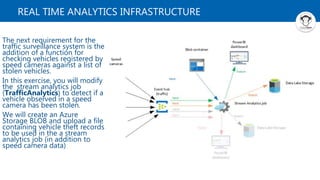

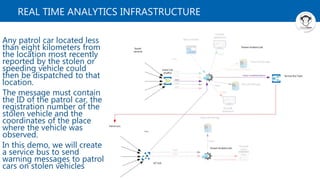

This document describes how to build a real-time traffic analytics infrastructure on Azure. It includes four demos: 1) creating a Stream Analytics job that captures speed camera data and sends it to Power BI and Data Lake; 2) adding patrol car location data from an IoT hub as an input to Stream Analytics; 3) modifying the Stream Analytics job to match speeding vehicles against a list of stolen vehicles stored in Blob storage; 4) configuring Service Bus to send warning messages to patrol cars about the locations of stolen vehicles observed by cameras. The overall goal is to provide real-time traffic and patrol information and alerts.
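
The matching and alerting logic of demos 3 and 4 can be illustrated with a small, self-contained Python sketch. It is not Azure code and does not reproduce the document's Stream Analytics query. It only models the idea of filtering camera readings for speeding vehicles, checking them against a stolen-vehicle list, and building a warning message for a patrol car. All names here (`CameraReading`, `PatrolCar`, `build_alerts`, the field names, the flat `x`/`y` coordinates) are hypothetical, and routing each alert to the nearest patrol car is an assumption, since the summary does not say how recipients are chosen.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class CameraReading:
    # Hypothetical shape of one speed camera event.
    camera_id: str
    plate: str
    speed: float        # observed speed, same unit as speed_limit
    speed_limit: float
    x: float            # camera position on a flat grid (assumption)
    y: float


@dataclass(frozen=True)
class PatrolCar:
    # Hypothetical shape of one patrol car location report.
    car_id: str
    x: float
    y: float


def find_stolen_speeders(readings, stolen_plates):
    """Keep readings that exceed the limit and whose plate is on the stolen list."""
    return [
        r for r in readings
        if r.speed > r.speed_limit and r.plate in stolen_plates
    ]


def nearest_patrol_car(reading, cars):
    """Pick the car with the smallest straight-line distance to the camera."""
    return min(cars, key=lambda c: hypot(c.x - reading.x, c.y - reading.y))


def build_alerts(readings, stolen_plates, cars):
    """Build one warning message per stolen, speeding vehicle."""
    alerts = []
    if not cars:
        return alerts  # nobody to notify
    for r in find_stolen_speeders(readings, stolen_plates):
        car = nearest_patrol_car(r, cars)
        alerts.append({"to": car.car_id, "plate": r.plate, "camera": r.camera_id})
    return alerts
```

In the architecture the summary describes, the stolen-vehicle list would come from Blob storage, the filtering would run in Stream Analytics, and the messages would be delivered through Service Bus. The sketch keeps everything in memory so the data flow is easy to follow.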