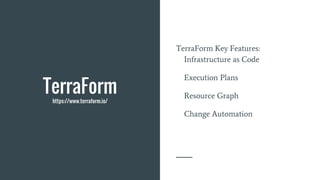

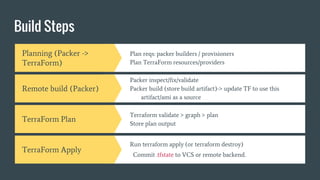

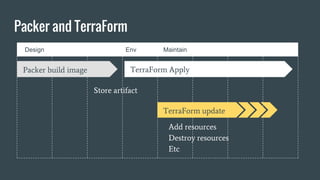

The document provides an overview of Packer and Terraform, two tools for automating infrastructure management through infrastructure as code (IaC). Packer builds consistent machine images from a template, while Terraform provisions and manages infrastructure resources from declarative configuration. Key benefits include reduced costs, increased speed, and lower risk when changing infrastructure.

![Create a template: a configuration file that defines what image we want built and how

Notes

Define the builders

Define provisioners

Define post-processors

Define variables (access keys etc)

NB: Parallel builds (all builders in a template run in parallel)

Example

{
  "builders": [],
  "description": "A packer example template",
  "min_packer_version": "0.8.0",
  "provisioners": [],
  "post-processors": [],
  "variables": {}
}](https://image.slidesharecdn.com/kqm5angxqlg3xjs6iexe-signature-9488c522bf2e1e752a1bcec9aee84053329e9255e4406882e00421281dda234a-poli-160527054400/85/Automation-with-Packer-and-TerraForm-8-320.jpg)
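The template sections listed on this slide can be sanity-checked before handing the file to Packer. The following is a minimal sketch, not Packer's own validation: `check_template` and `KNOWN_SECTIONS` are hypothetical names, the set of top-level keys is taken only from the slide's example, and the rules (at least one builder, a `type` on every builder and provisioner, `variables` as an object) are assumptions about the legacy JSON template format.

```python
import json

# Top-level sections taken from the slide's example template (an assumption,
# not an exhaustive list of what Packer accepts).
KNOWN_SECTIONS = {
    "builders", "description", "min_packer_version",
    "provisioners", "post-processors", "variables",
}


def check_template(template):
    """Return a list of human-readable problems (empty if none were found)."""
    problems = []
    # A template needs at least one builder to produce an image.
    if not template.get("builders"):
        problems.append("'builders' is missing or empty")
    # Every builder and provisioner entry should name its type.
    for section in ("builders", "provisioners"):
        for index, entry in enumerate(template.get(section, [])):
            if not isinstance(entry, dict) or "type" not in entry:
                problems.append(f"{section}[{index}] has no 'type'")
    # Variables are a name -> default-value mapping, not a list.
    if not isinstance(template.get("variables", {}), dict):
        problems.append("'variables' should be an object")
    # Flag keys that do not appear in the slide's skeleton.
    for key in sorted(set(template) - KNOWN_SECTIONS):
        problems.append(f"unexpected top-level key '{key}'")
    return problems


skeleton = json.loads("""
{
  "builders": [],
  "description": "A packer example template",
  "min_packer_version": "0.8.0",
  "provisioners": [],
  "post-processors": [],
  "variables": {}
}
""")
print(check_template(skeleton))  # ["'builders' is missing or empty"]
```

The empty skeleton is flagged only because it defines no builder yet. A check like this is a quick pre-flight at most; `packer validate` remains the authoritative test of a template.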

![Builders

Amazon EC2 (AMI)

DigitalOcean

Docker

Google Compute Engine

OpenStack

VirtualBox

Commands:

packer build

packer fix

packer inspect

packer validate

{
  "variables": {
    "aws_access_key": "YOURACCESSKEY",
    "aws_secret_key": "YOURSECRETKEY",
    "do_api_token": "YOURAPITOKEN"
  },
  "builders": [{
    "type": "amazon-ebs",
    "access_key": "{{user `aws_access_key`}}",
    "secret_key": "{{user `aws_secret_key`}}",
    "region": "us-east-1",
    "source_ami": "ami-fce3c696",
    "instance_type": "t2.micro",
    "ssh_username": "ubuntu",
    "ami_name": "packer-example {{timestamp}}"
  },{
    "type": "digitalocean",
    "api_token": "{{user `do_api_token`}}",
    "image": "ubuntu-14-04-x64",
    "region": "nyc3",
    "size": "512mb"
  }],
  "provisioners": [{
    "type": "shell",
    "inline": [
      "sleep 30",
      "sudo apt-get update",
      "sudo apt-get install -y redis-server"
    ]
  }]
}](https://image.slidesharecdn.com/kqm5angxqlg3xjs6iexe-signature-9488c522bf2e1e752a1bcec9aee84053329e9255e4406882e00421281dda234a-poli-160527054400/85/Automation-with-Packer-and-TerraForm-9-320.jpg)
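The example template wires its builders to user variables through the `{{user `name`}}` syntax. As a rough illustration of how those pieces relate, the sketch below lists the builder types in a template and reports any user variable that is referenced but not declared under `variables`. It is loosely in the spirit of `packer inspect`, not a reimplementation of it; `summarize` and `USER_VAR` are hypothetical names, and the regular expression only covers the plain `{{user `name`}}` form shown on the slide.

```python
import json
import re

# Matches the legacy template call {{user `name`}} and captures the name.
USER_VAR = re.compile(r"\{\{\s*user\s+`([^`]+)`\s*\}\}")


def summarize(template):
    """Return (builder types, declared variable names, undeclared variable names)."""
    builder_types = [b["type"] for b in template.get("builders", [])]
    declared = set(template.get("variables", {}))
    # Serialize everything except the declarations, then scan for references.
    body = {k: v for k, v in template.items() if k != "variables"}
    referenced = set(USER_VAR.findall(json.dumps(body)))
    return builder_types, sorted(declared), sorted(referenced - declared)


# A trimmed copy of the slide's template with one declaration left out on purpose.
template = {
    "variables": {"aws_access_key": "", "do_api_token": ""},
    "builders": [
        {"type": "amazon-ebs",
         "access_key": "{{user `aws_access_key`}}",
         "secret_key": "{{user `aws_secret_key`}}"},
        {"type": "digitalocean", "api_token": "{{user `do_api_token`}}"},
    ],
}
types, declared, undeclared = summarize(template)
print(types)       # ['amazon-ebs', 'digitalocean']
print(declared)    # ['aws_access_key', 'do_api_token']
print(undeclared)  # ['aws_secret_key']
```

Declaring variables with empty defaults, as here, keeps real credentials out of the template file; the values can then be supplied at build time, for example with `packer build -var 'aws_access_key=...'`.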