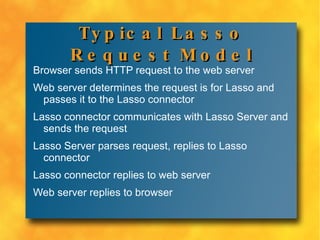

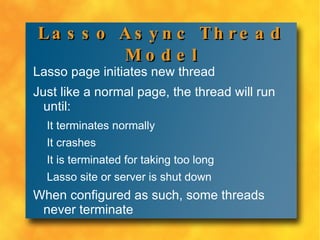

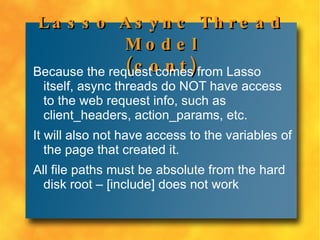

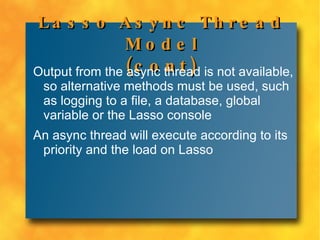

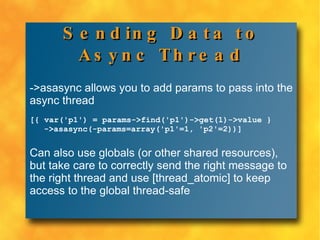

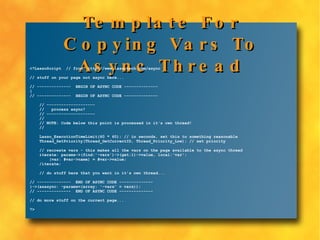

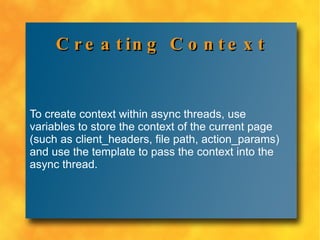

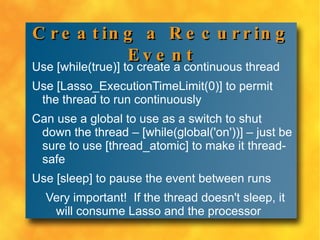

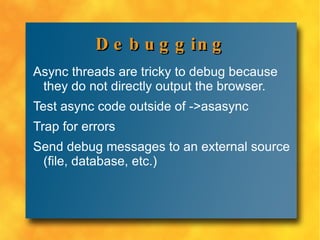

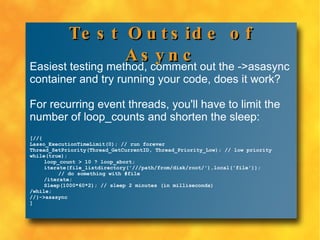

Async threads in Lasso let code run asynchronously, in parallel with the page currently being processed. They are useful for offloading slow tasks to improve response times and for recurring events such as scheduled jobs. Async threads have no access to web request data, and their output is not returned to the browser, so results must be recorded some other way, such as logging. Context is passed into an async thread through parameters or shared variables. Debugging async threads relies on techniques such as testing the code outside the async context, trapping errors, and sending debug messages to an external destination.
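The pattern described above (run detached, pass context in explicitly, trap errors, and report through an external channel) can be sketched in Python as an analogy. This is not Lasso code and not the presenter's implementation: `run_detached`, `resize_image`, and the in-memory `debug_log` sink are hypothetical names chosen for illustration, and a real setup would more likely log to a file or database.

```python
import logging
import threading

# Hypothetical stand-in for an external debug destination (file, database, etc.).
debug_log = []


class ListHandler(logging.Handler):
    """Collects formatted log messages in debug_log."""

    def emit(self, record):
        debug_log.append(self.format(record))


logger = logging.getLogger("async_job")
logger.setLevel(logging.DEBUG)
logger.addHandler(ListHandler())
logger.propagate = False


def run_detached(task, **context):
    """Start task in a background thread, passing its context in explicitly."""

    def wrapper():
        try:
            result = task(**context)
            # The caller never sees the return value, so record it instead.
            logger.debug("finished with %r", result)
        except Exception as exc:
            # Trap the error; otherwise the failure would go unnoticed.
            logger.error("failed: %s", exc)

    thread = threading.Thread(target=wrapper, daemon=True)
    thread.start()
    return thread


def resize_image(path):
    # Hypothetical task that insists on an absolute path.
    if not path.startswith("/"):
        raise ValueError("path must be absolute: " + path)
    return "resized " + path


# join() is used here only so the demonstration finishes deterministically.
run_detached(resize_image, path="/var/uploads/a.png").join()
run_detached(resize_image, path="relative/b.png").join()
```

After both calls, `debug_log` holds one success message and one trapped error. The caller received neither directly, which mirrors why a detached thread needs a log or a similar side channel.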

![All file paths must be absolute from the hard disk root – [include] does not work](https://image.slidesharecdn.com/corryasyncthreads-091124005754-phpapp02/85/Asynchronous-Threads-in-Lasso-8-5-17-320.jpg)

![Basic Example: Async threads are surrounded by curly braces and initiated using ->asasync: [{'Hello World'}->asasync] The above code doesn't show anything in the browser, but it does run asynchronously.](https://image.slidesharecdn.com/corryasyncthreads-091124005754-phpapp02/85/Asynchronous-Threads-in-Lasso-8-5-20-320.jpg)

![Sending Data to Async Thread: ->asasync allows you to add params to pass into the async thread: [{ var('p1') = params->find('p1')->get(1)->value } ->asasync(-params=array('p1'=1, 'p2'=2))] You can also use globals (or other shared resources), but take care to send the right message to the right thread, and use [thread_atomic] to keep access to the global thread-safe.](https://image.slidesharecdn.com/corryasyncthreads-091124005754-phpapp02/85/Asynchronous-Threads-in-Lasso-8-5-21-320.jpg)
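The two ideas on this slide, passing parameters into the thread and guarding a shared global, can be illustrated with a Python analogy. This is a sketch rather than Lasso code: a `threading.Lock` stands in for the role `[thread_atomic]` plays in the slide, and `counter`, `counter_lock`, and `add_params` are hypothetical names.

```python
import threading

# Shared state, analogous to a global that several threads touch.
counter = {"total": 0}
counter_lock = threading.Lock()


def add_params(params):
    # Read values from the params passed in, not from any request data.
    amount = params["p1"] + params["p2"]
    # Serialize the read-modify-write so concurrent updates are not lost.
    with counter_lock:
        counter["total"] += amount


threads = [
    threading.Thread(target=add_params, args=({"p1": 1, "p2": 2},))
    for _ in range(50)
]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

Each of the 50 threads adds 3 under the lock, so `counter["total"]` ends at 150. Without the lock, the read-modify-write on the shared value would not be guaranteed to be safe.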