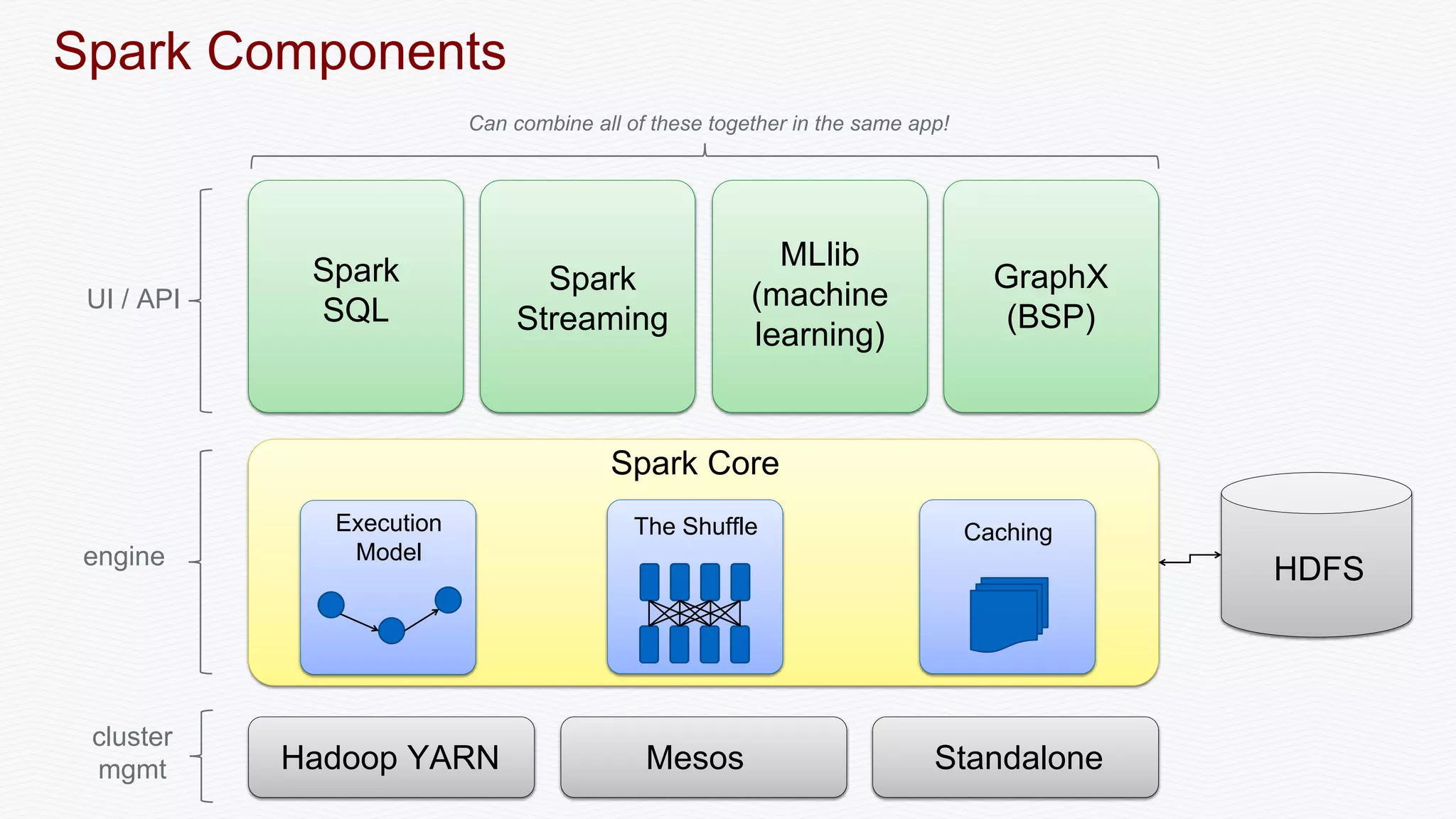

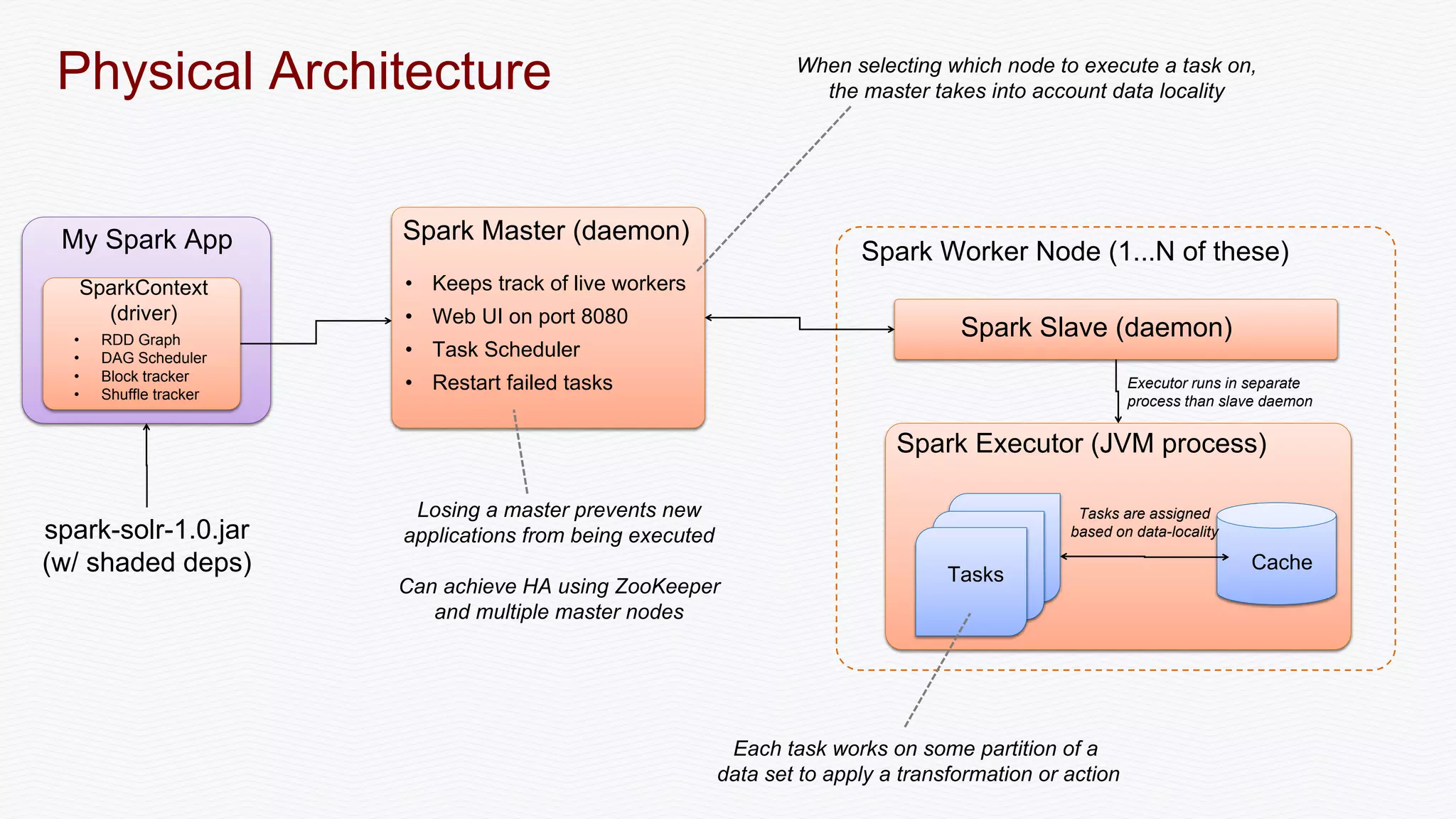

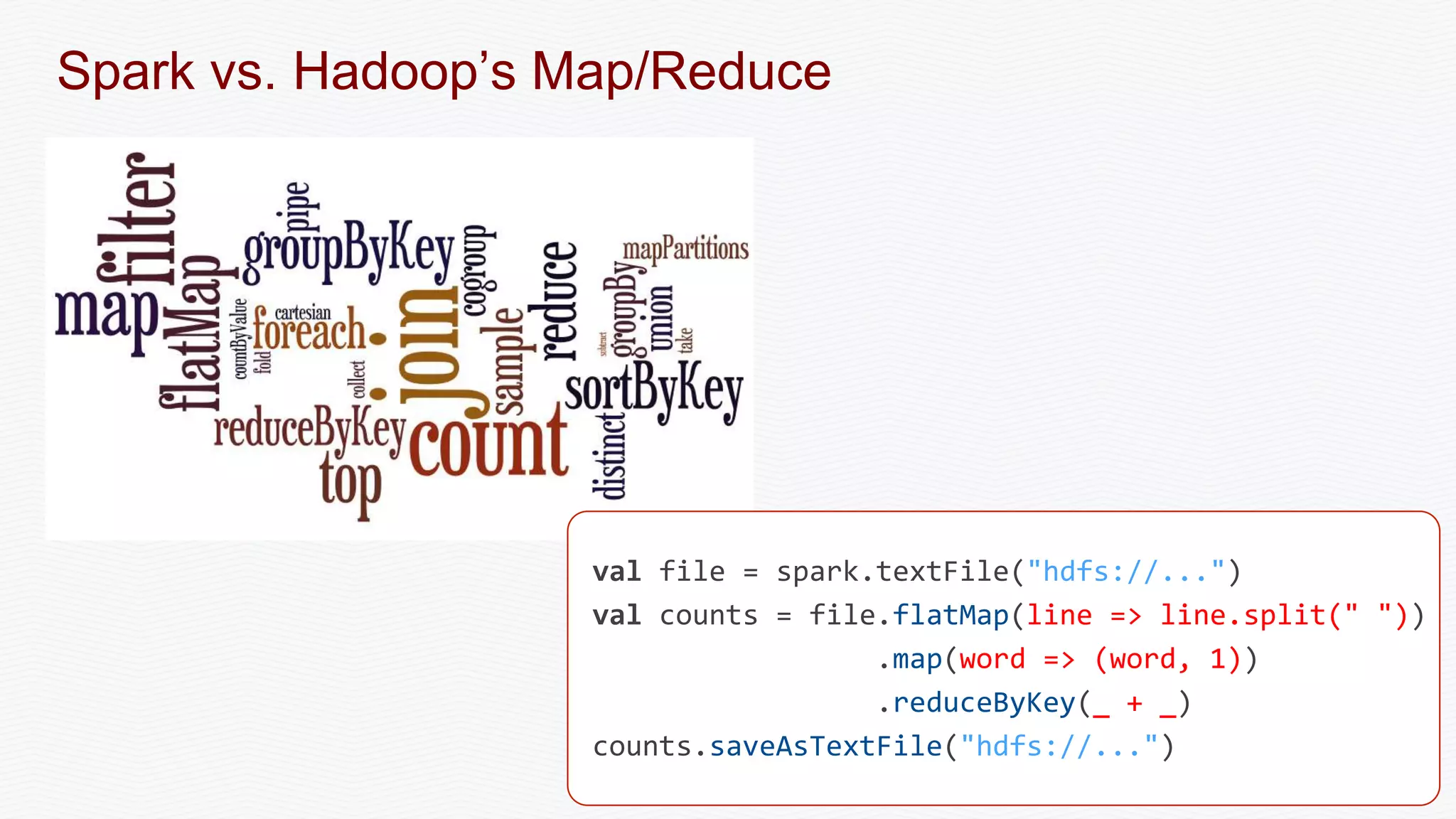

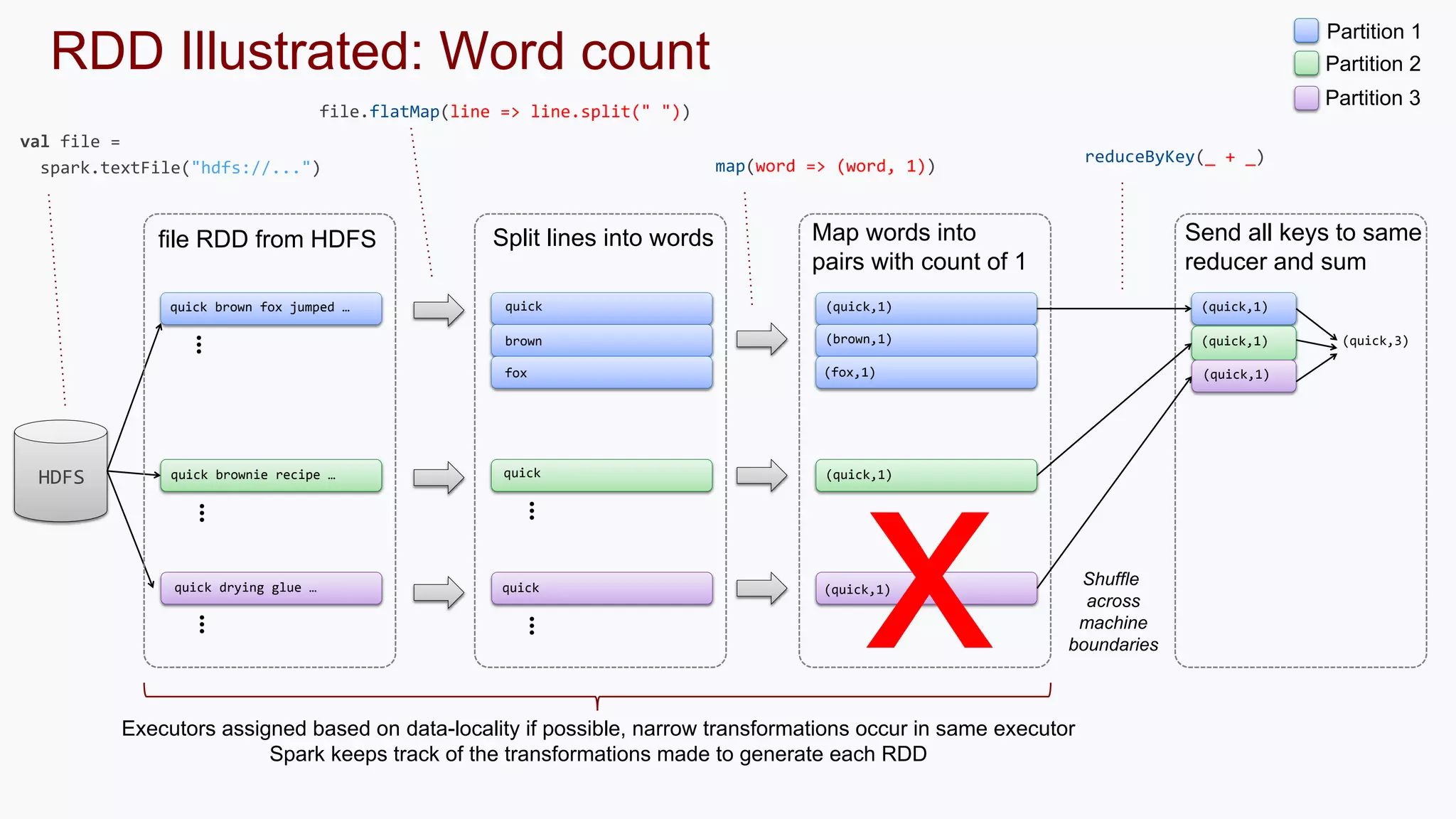

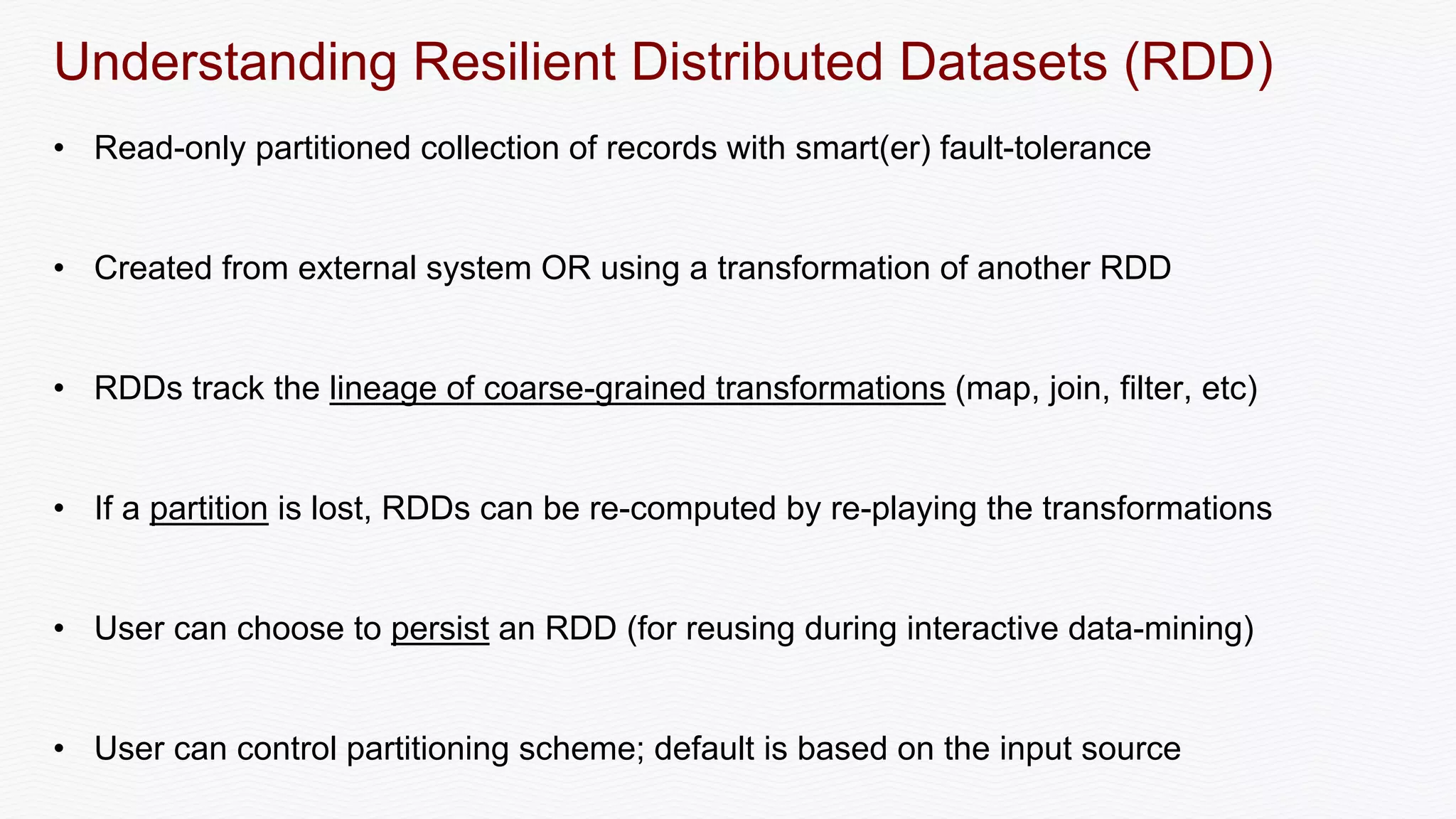

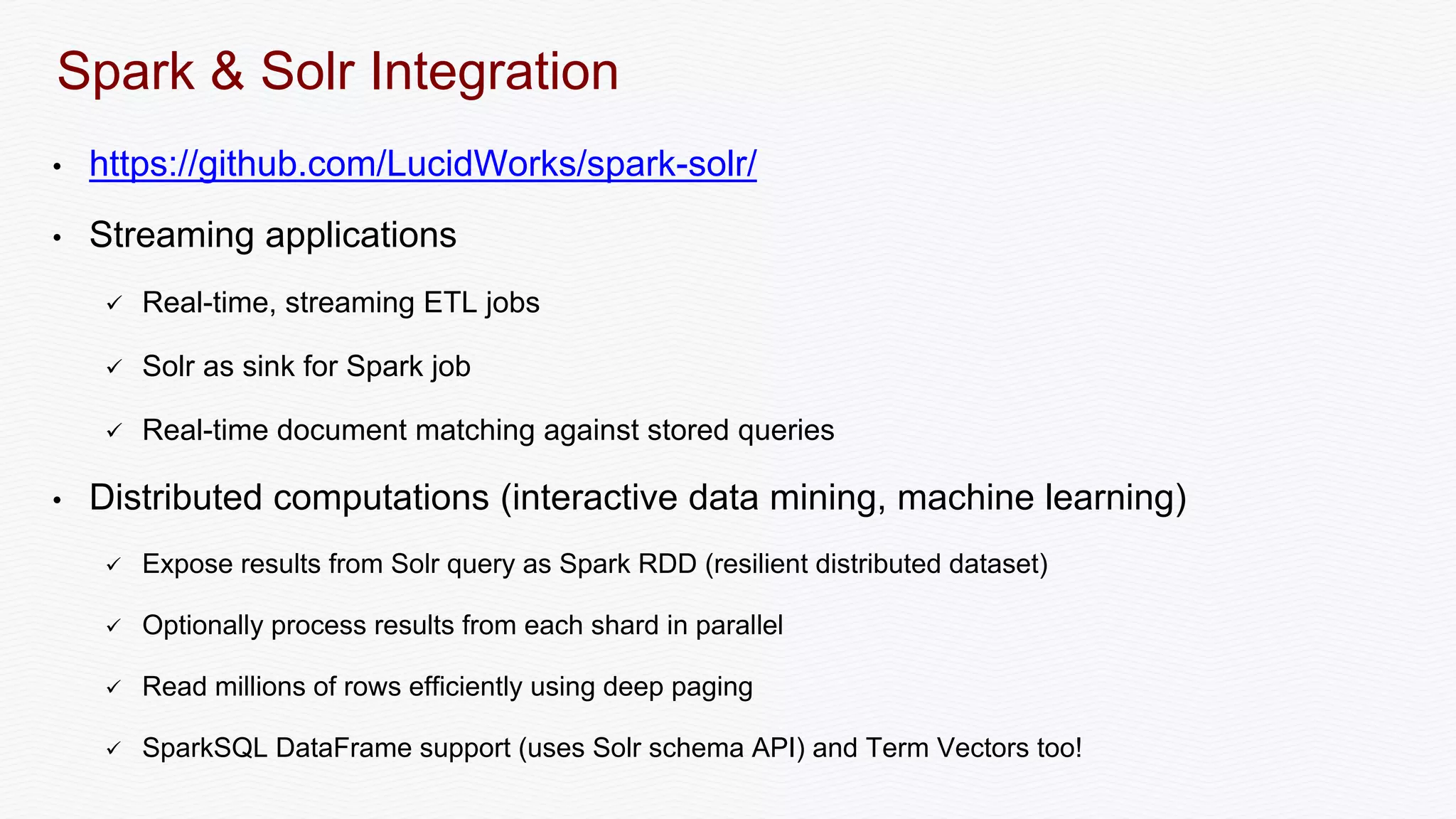

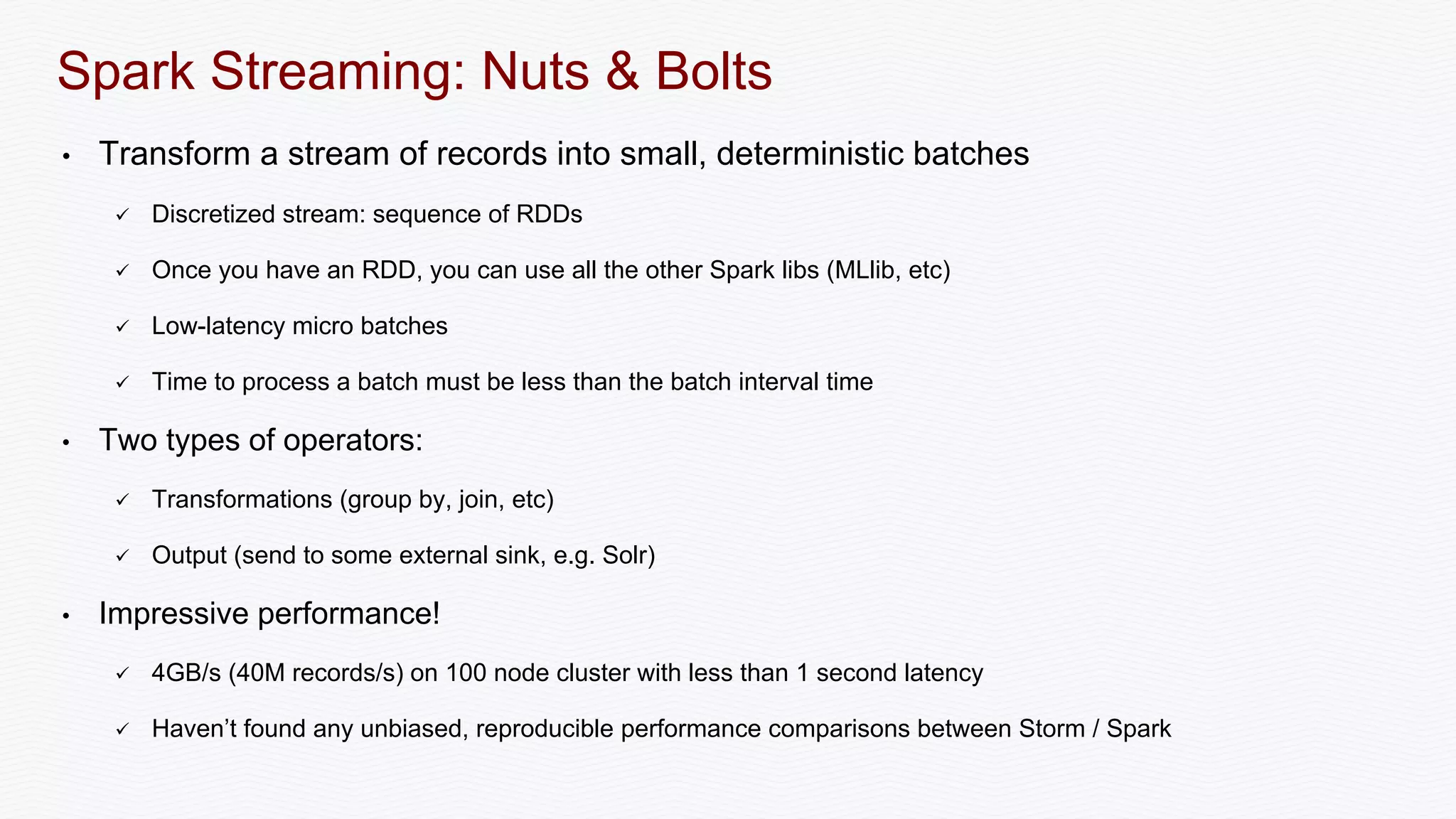

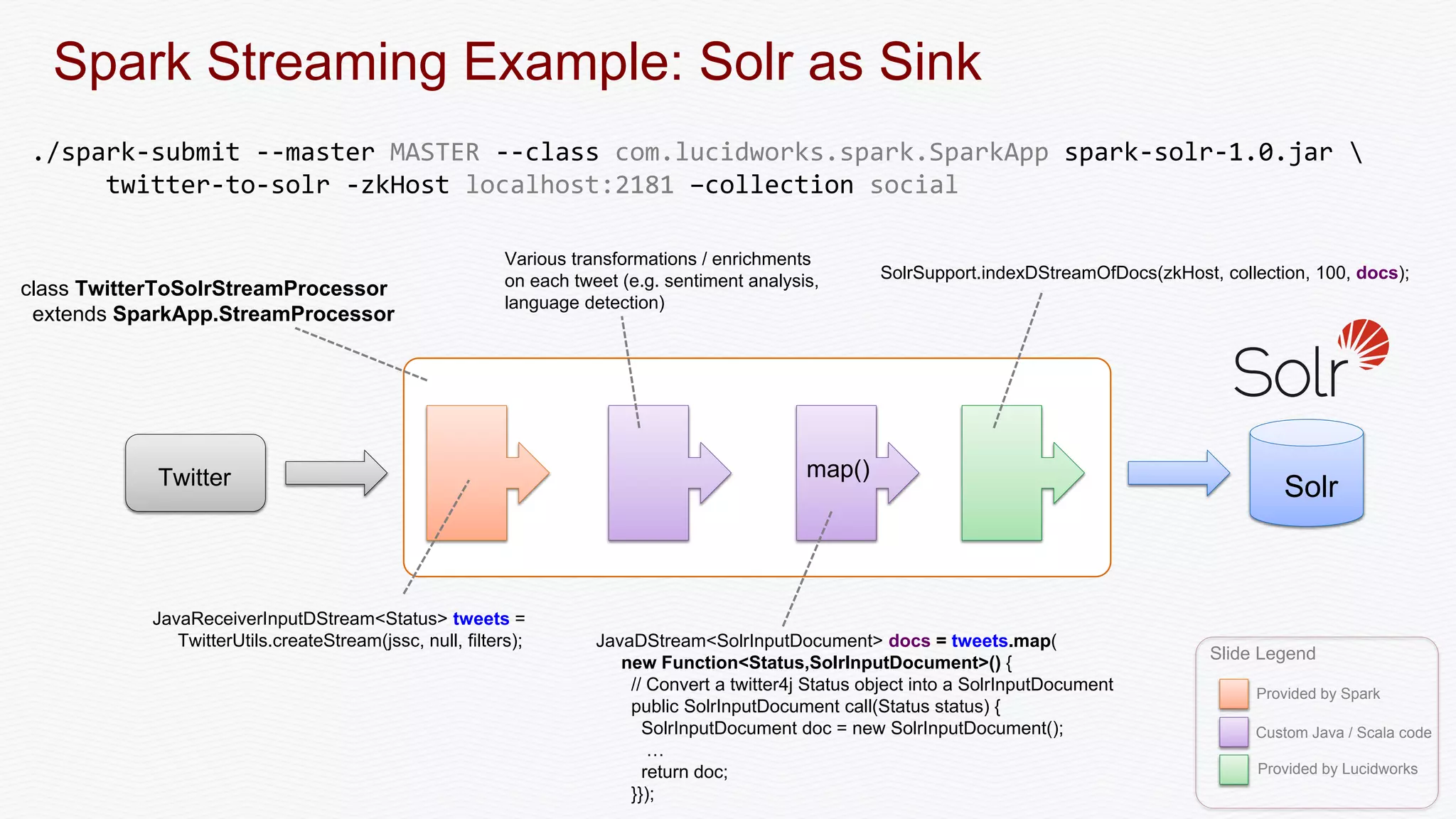

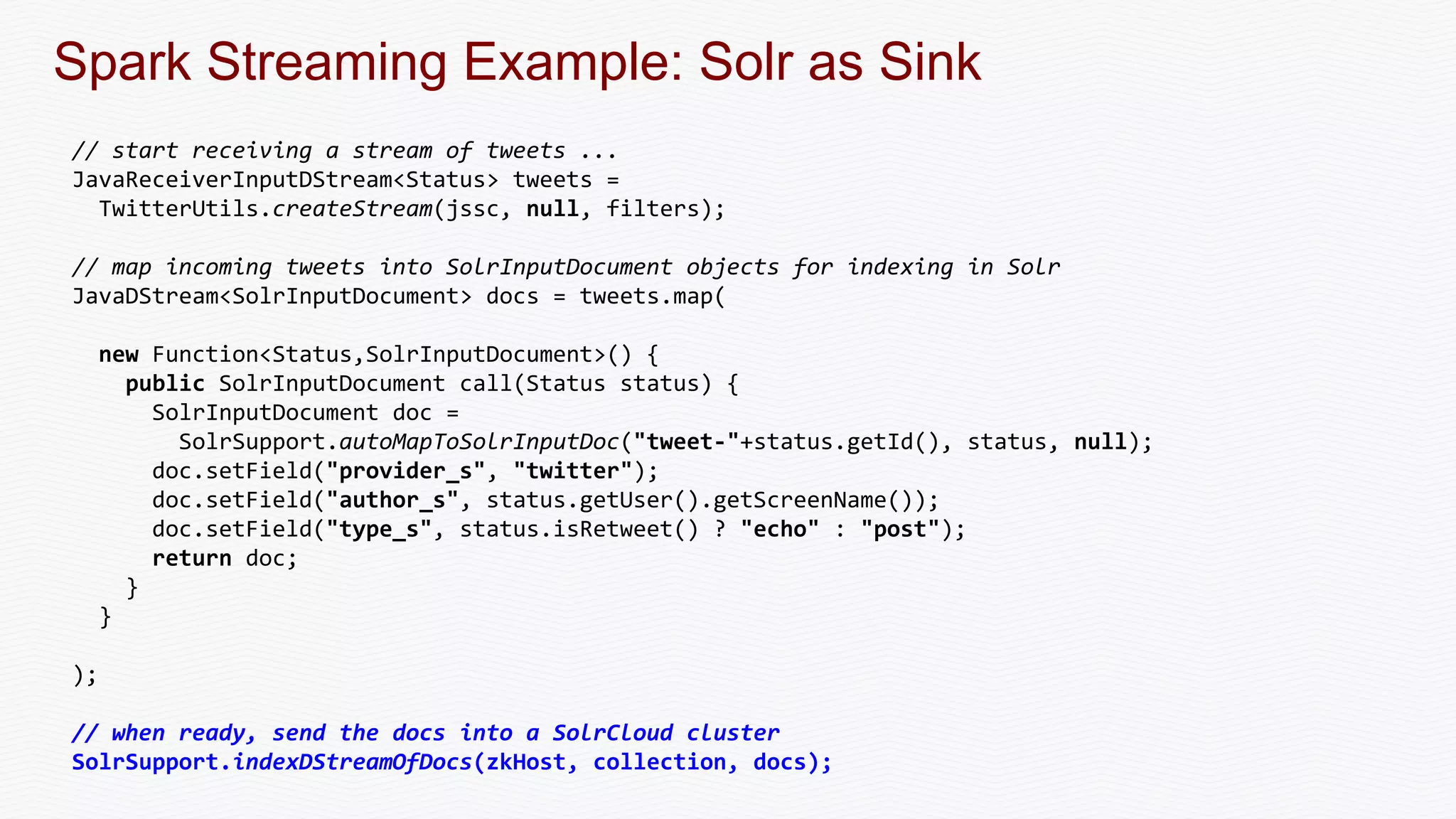

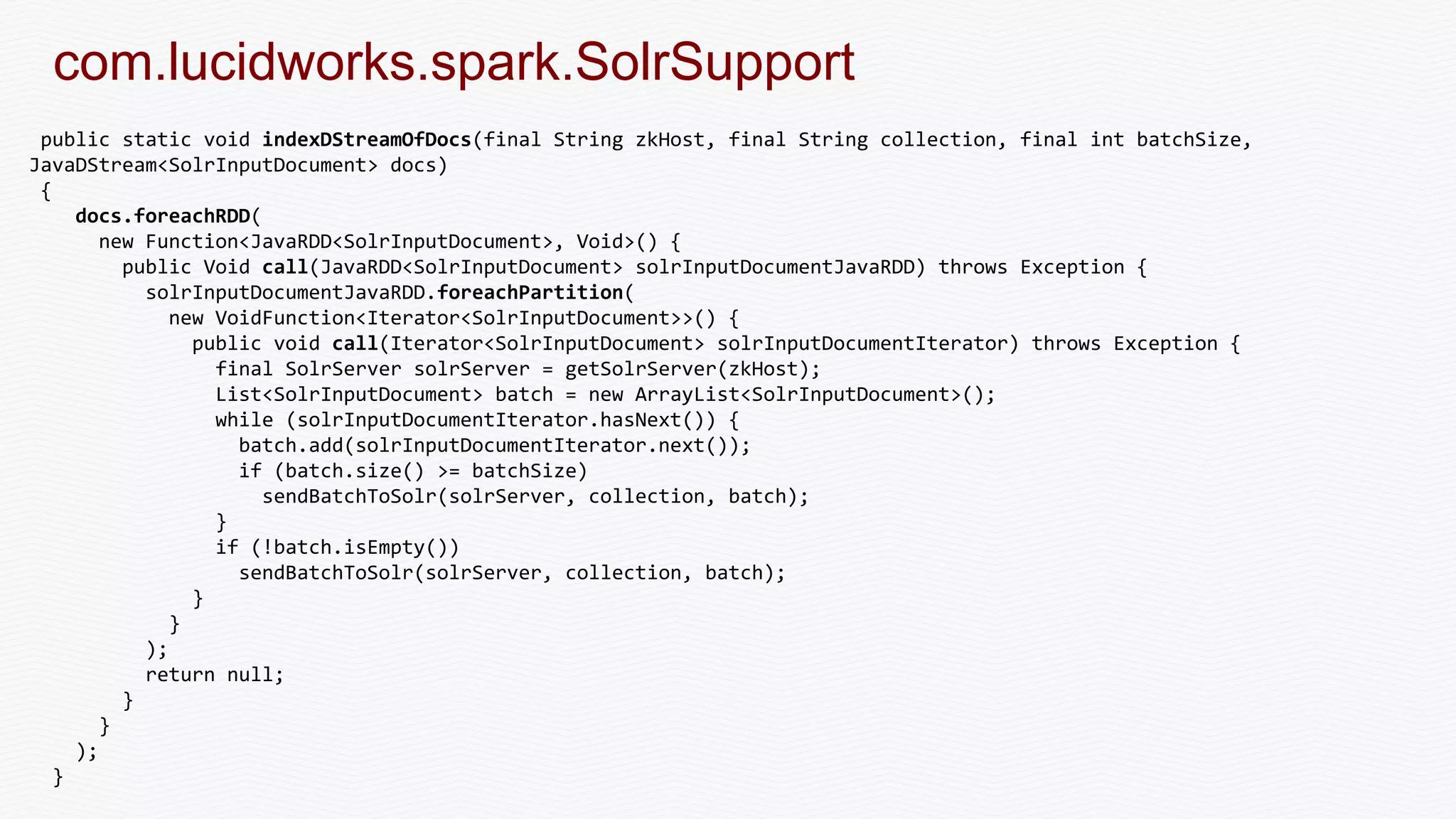

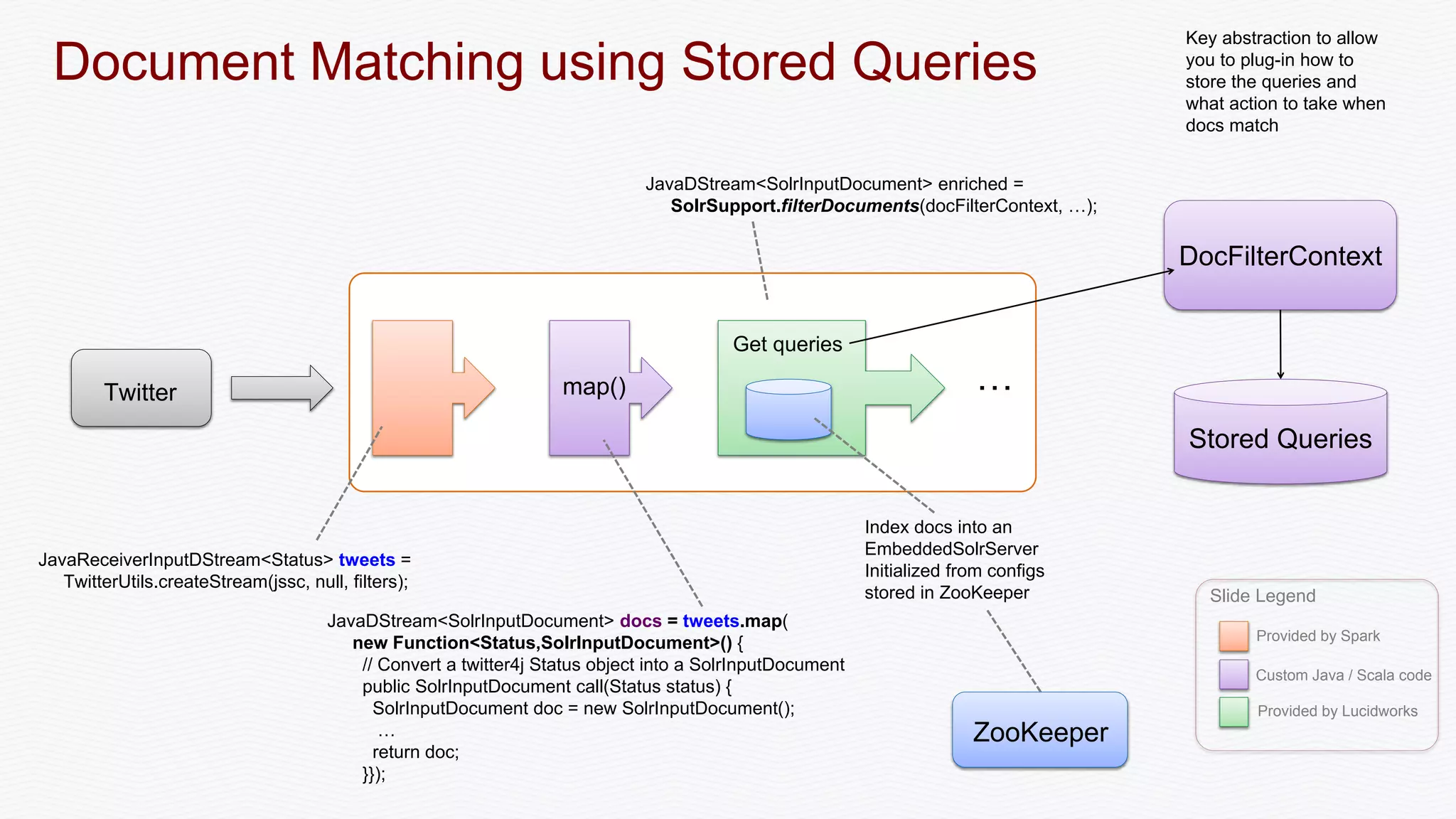

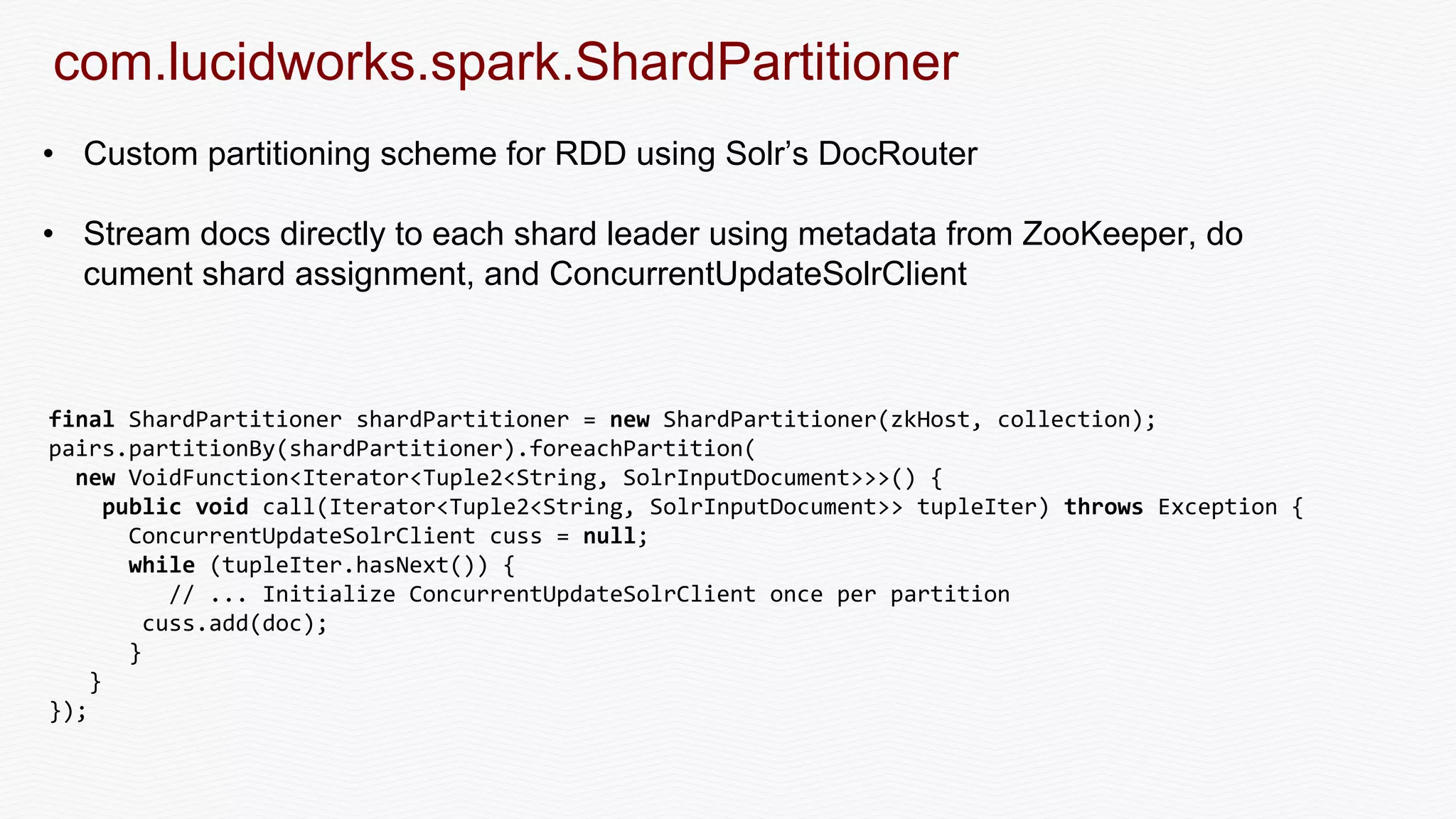

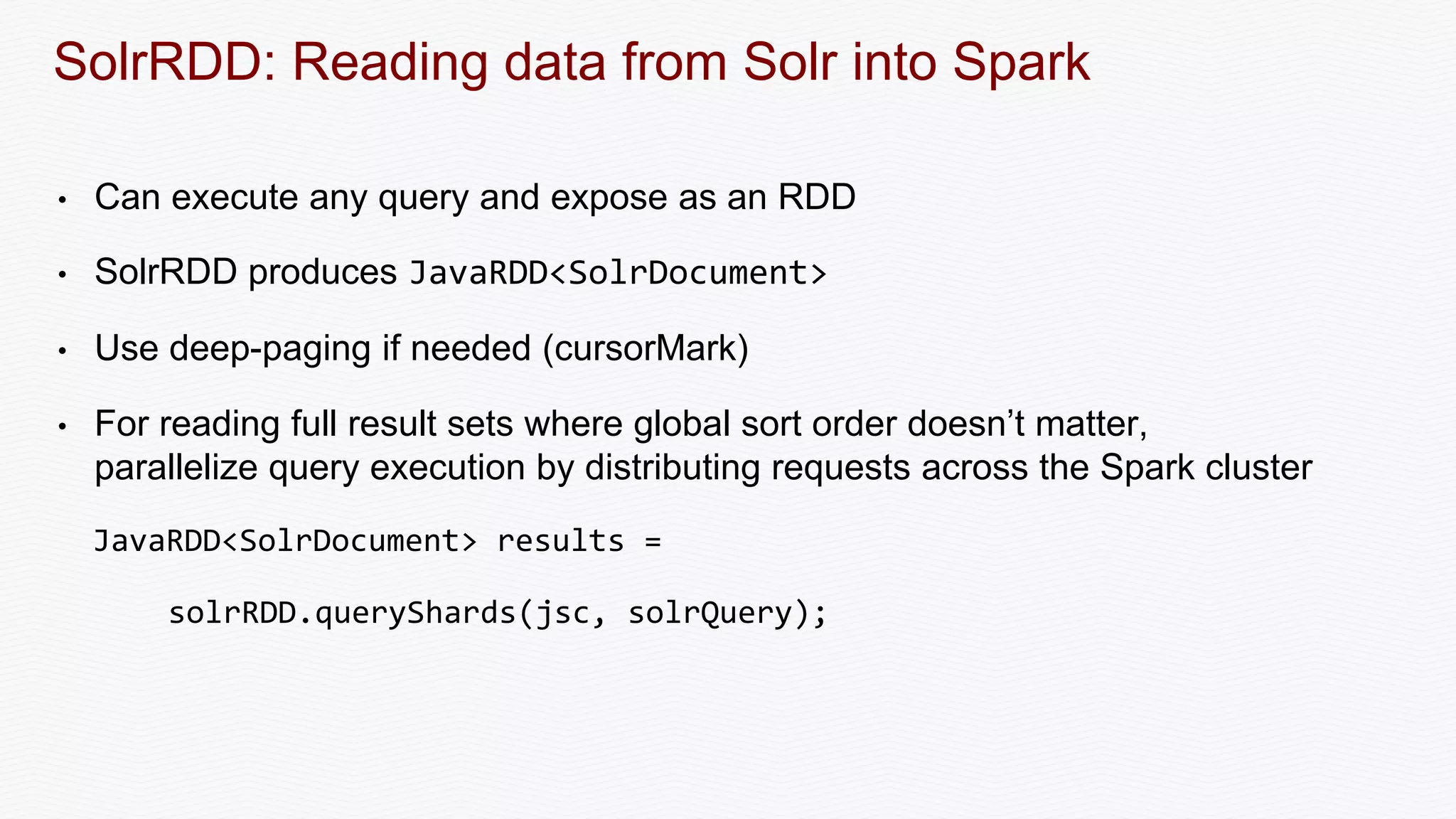

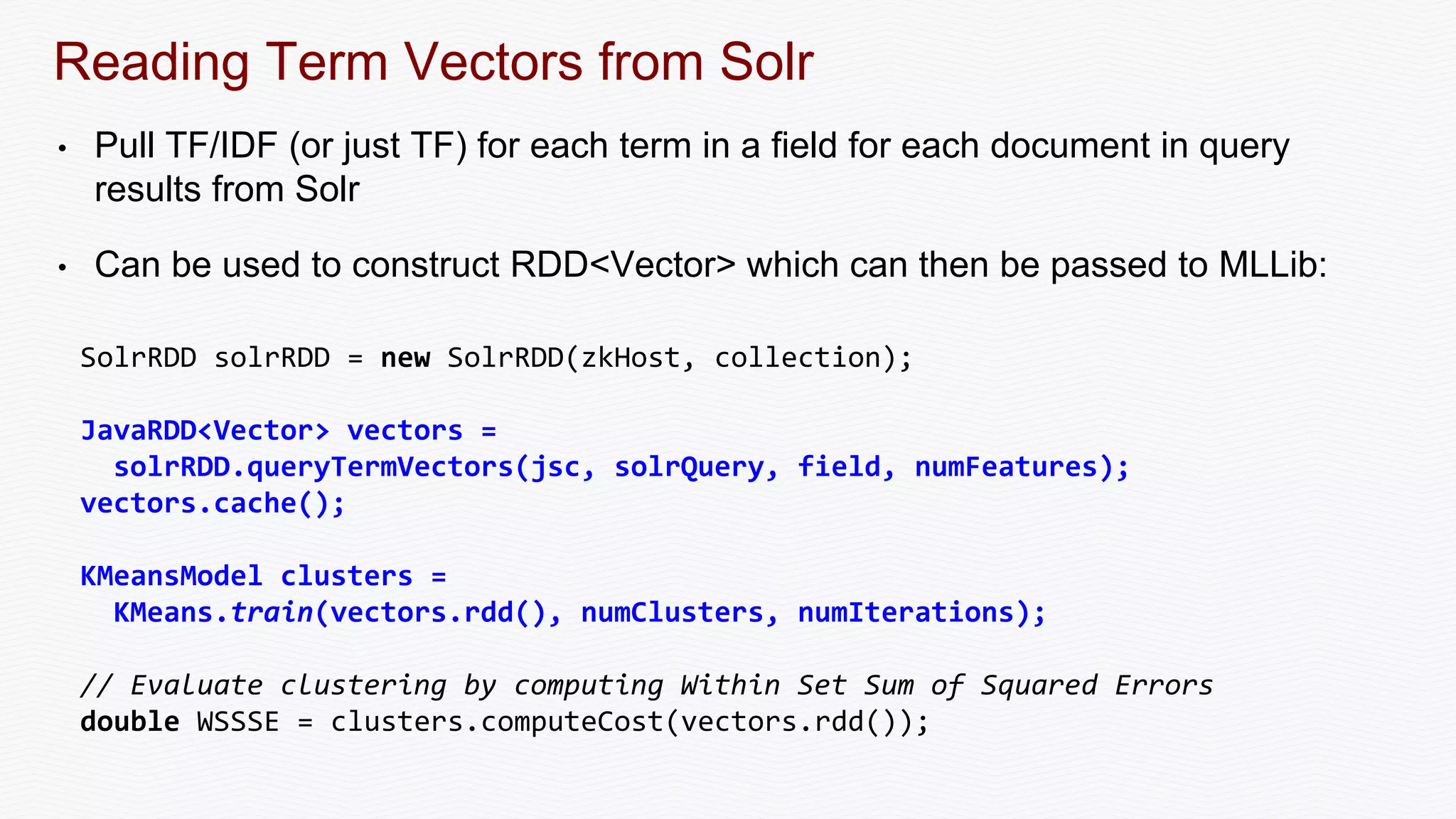

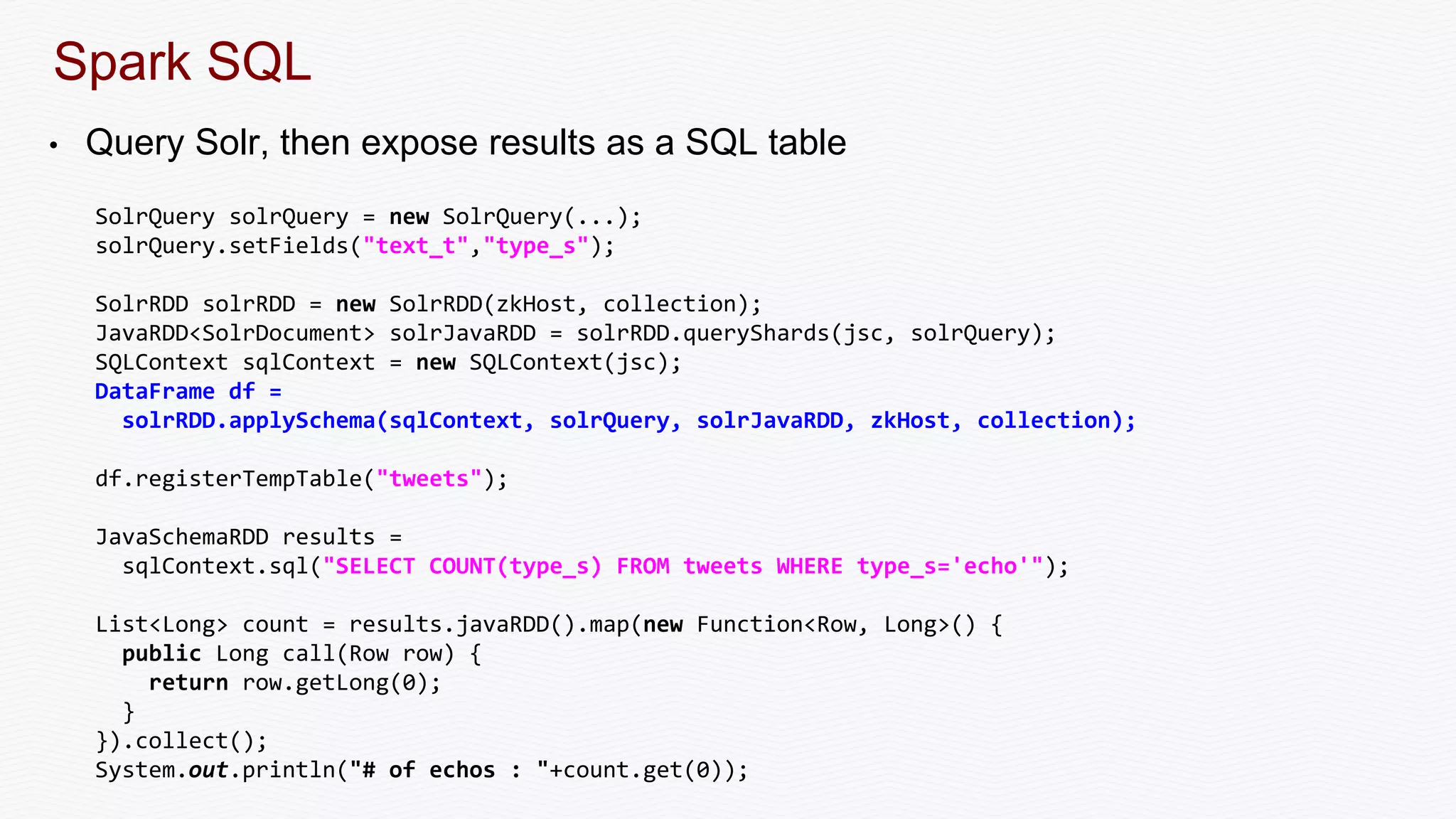

The document discusses the integration of Apache Spark with Solr, emphasizing Spark's advantages as a faster alternative to MapReduce for big data processing, particularly for iterative algorithms and real-time data analysis. It covers Spark's architecture, fault tolerance through Resilient Distributed Datasets (RDDs), and practical implementations such as document matching and streaming data into Solr as a sink. The content also includes performance benchmarks, resources for further reading, and several examples of Spark and Solr working together for efficient data processing.