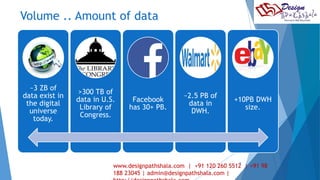

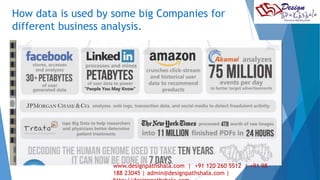

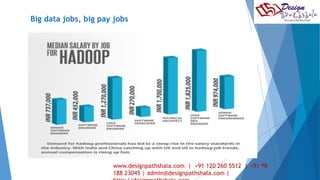

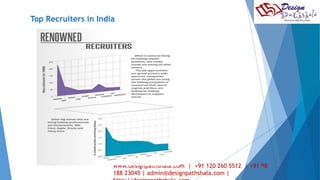

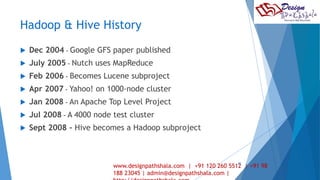

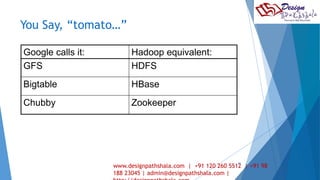

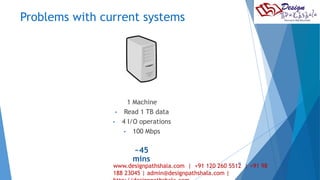

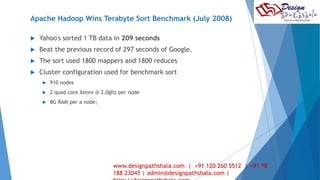

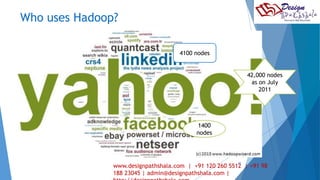

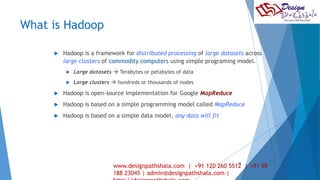

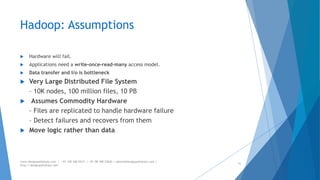

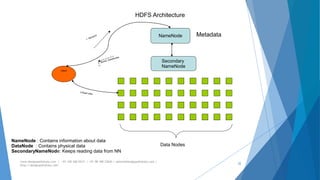

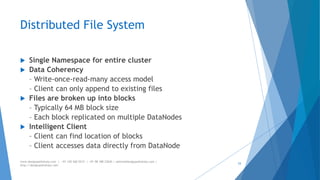

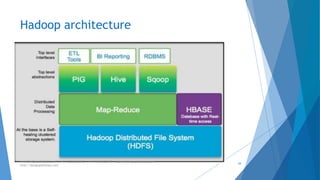

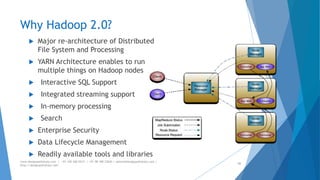

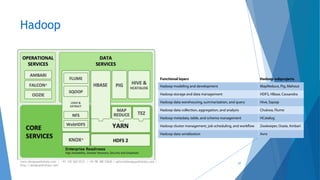

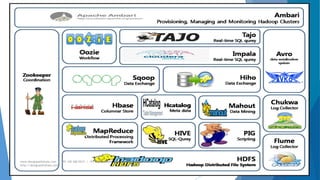

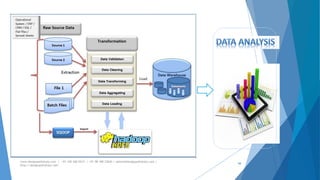

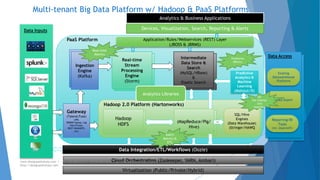

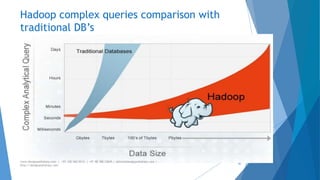

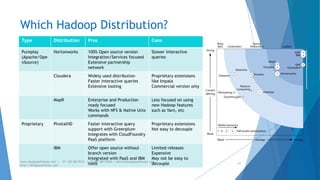

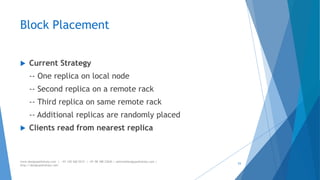

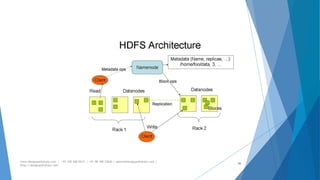

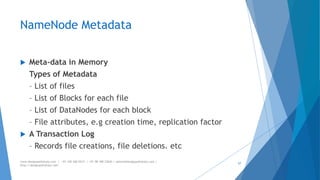

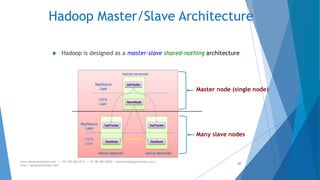

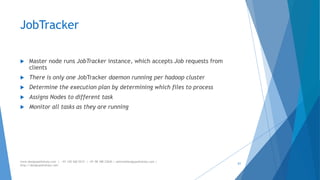

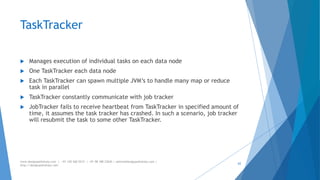

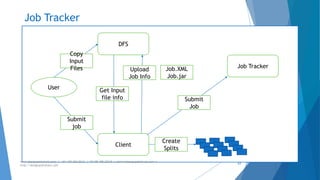

The document provides an overview of Apache Hadoop, covering its motivation, basic concepts, the MapReduce programming model, and ecosystem tools such as Hive, Pig, and Sqoop. It emphasizes handling big data by distributing processing across clusters of commodity computers, and it cites scalability, efficiency, and reliability as key features. It also covers the history of Hadoop's development and its applications in data analysis and business intelligence.
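The MapReduce model mentioned above can be illustrated with the classic word-count example. The sketch below is a minimal, single-process Python simulation, not Hadoop's Java API or Hadoop Streaming. It assumes that each inner list stands in for one input split handled by a separate map task. The function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are hypothetical and chosen for illustration only.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Iterable, Iterator


def map_phase(line: str) -> Iterator[tuple[str, int]]:
    """Map: emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[tuple[str, int]]) -> dict[str, list[int]]:
    """Shuffle/sort: group all intermediate values by key.

    In a real cluster this step moves data between nodes; here it is
    simulated with an in-memory dictionary (an assumption of this sketch).
    """
    groups: dict[str, list[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: list[int]) -> tuple[str, int]:
    """Reduce: combine every value seen for one key into a single result."""
    return key, sum(values)


def word_count(splits: list[list[str]]) -> dict[str, int]:
    """Run map over every split, then shuffle, then reduce each key group."""
    # Each inner list simulates one input split processed by one map task.
    intermediate = [
        pair for split in splits for line in split for pair in map_phase(line)
    ]
    grouped = shuffle(intermediate)
    # Keys are processed in sorted order, mirroring the sort before reduce.
    return dict(reduce_phase(k, v) for k, v in sorted(grouped.items()))


if __name__ == "__main__":
    splits = [["the quick brown fox"], ["the lazy dog", "the end"]]
    print(word_count(splits))
```

Because each map call depends only on its own line and each reduce call only on one key's values, both phases can run in parallel across machines. That independence is what lets the model scale out over a cluster of commodity computers.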