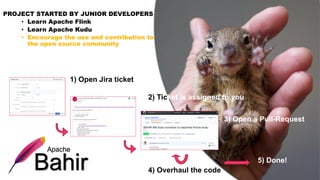

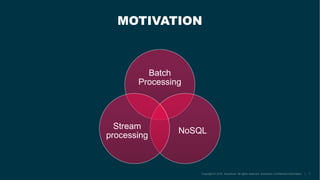

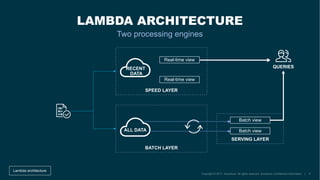

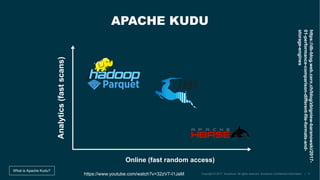

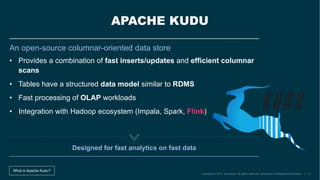

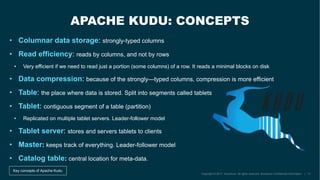

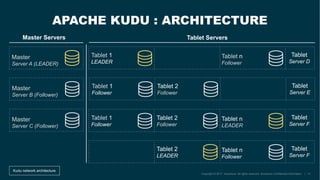

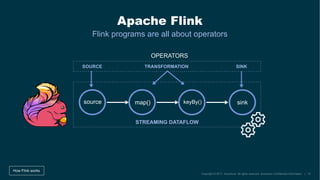

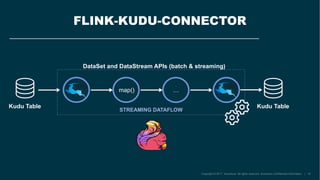

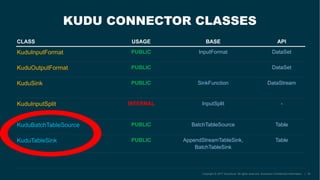

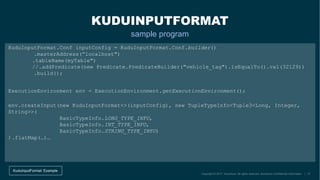

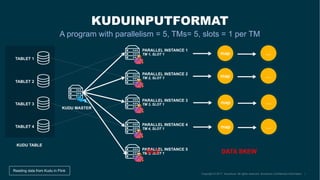

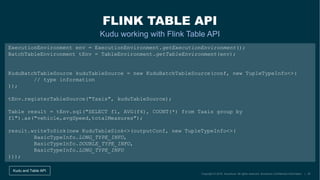

The document describes how a team at Accenture developed the Flink-Kudu connector to support Kappa architectures for big data processing. It outlines the features and architectures of Apache Kudu and Apache Flink, including their handling of batch and stream processing. It then introduces the code used to integrate Flink with Kudu and discusses ongoing contributions and planned improvements to the connector.