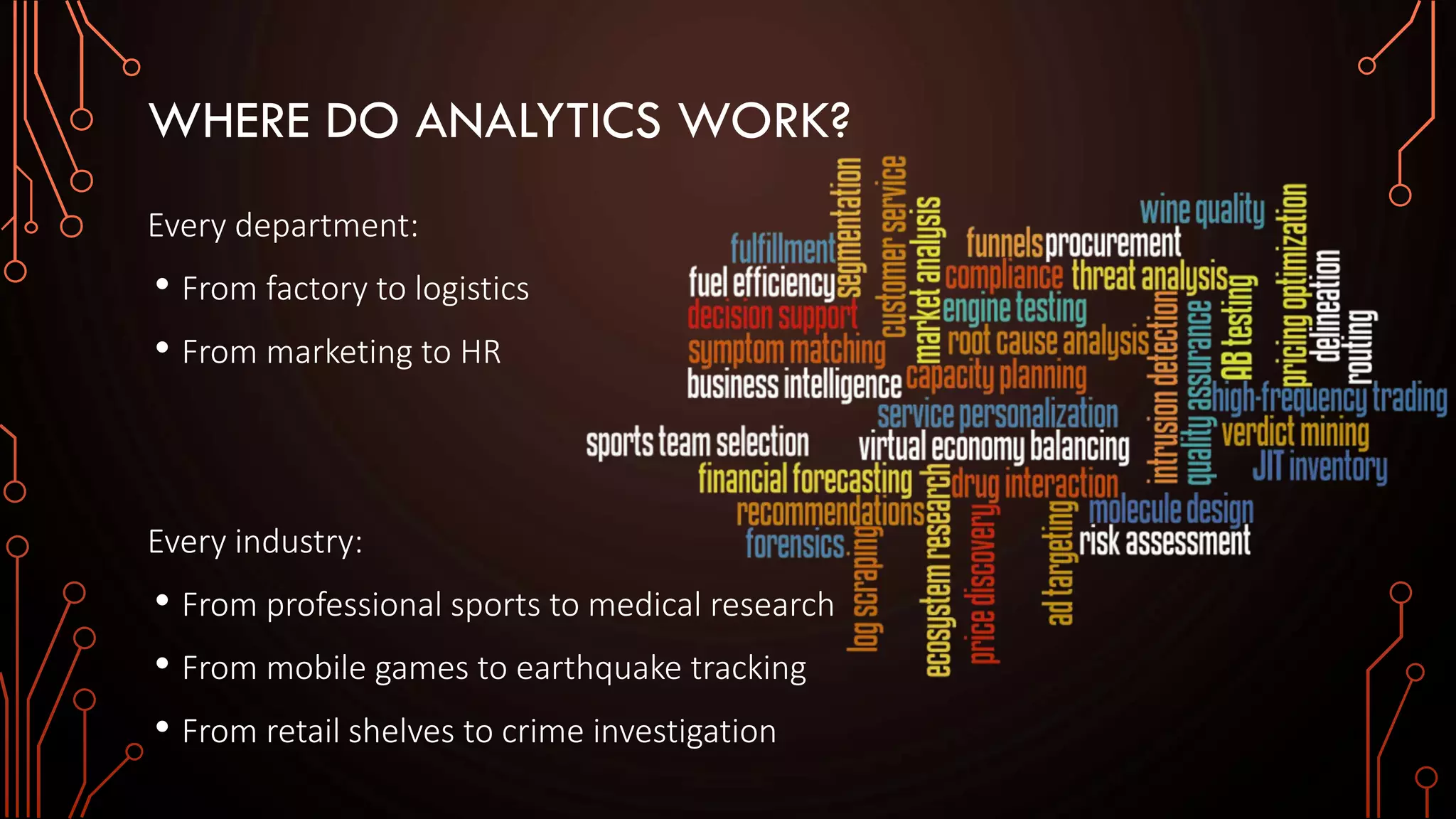

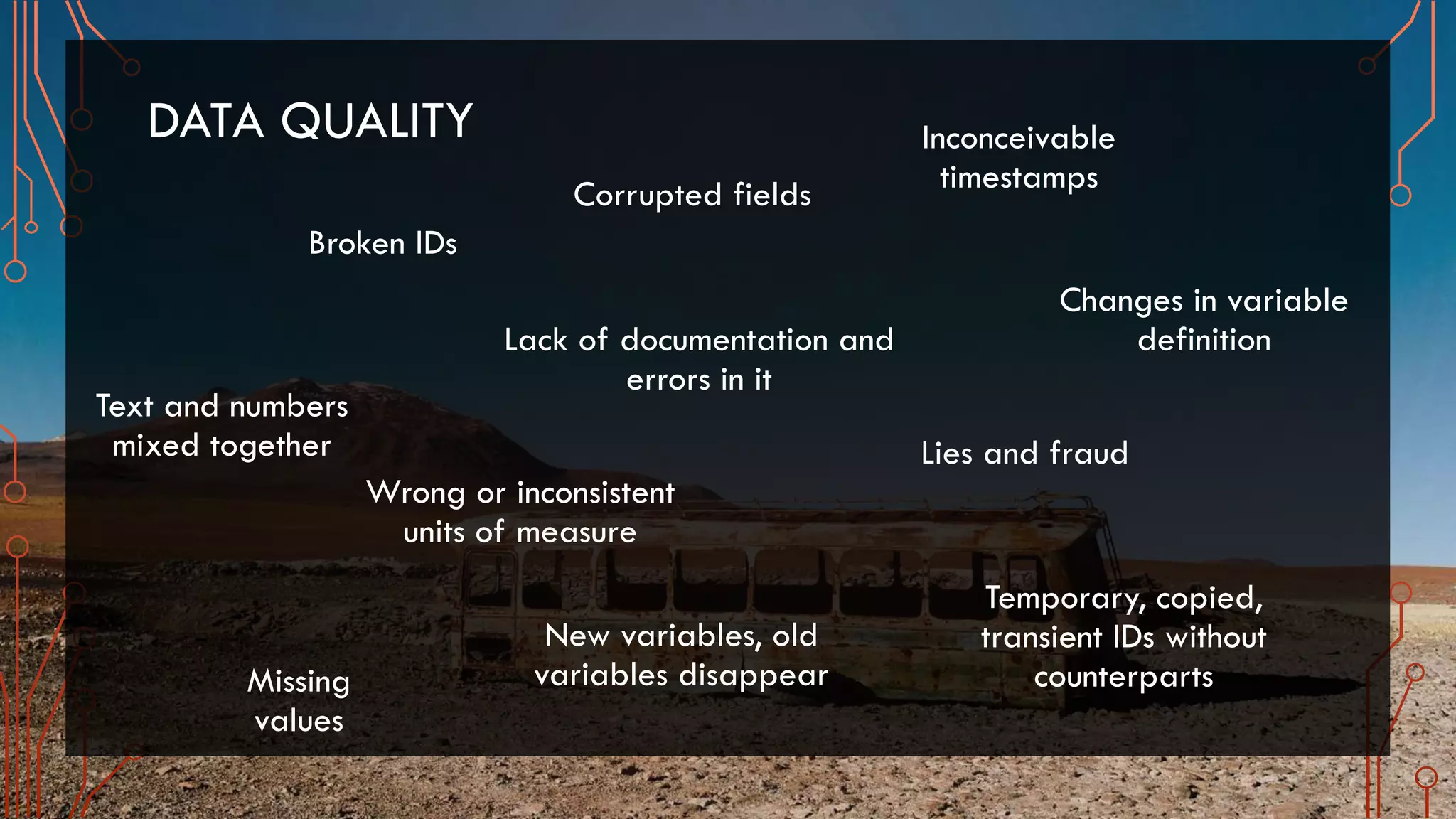

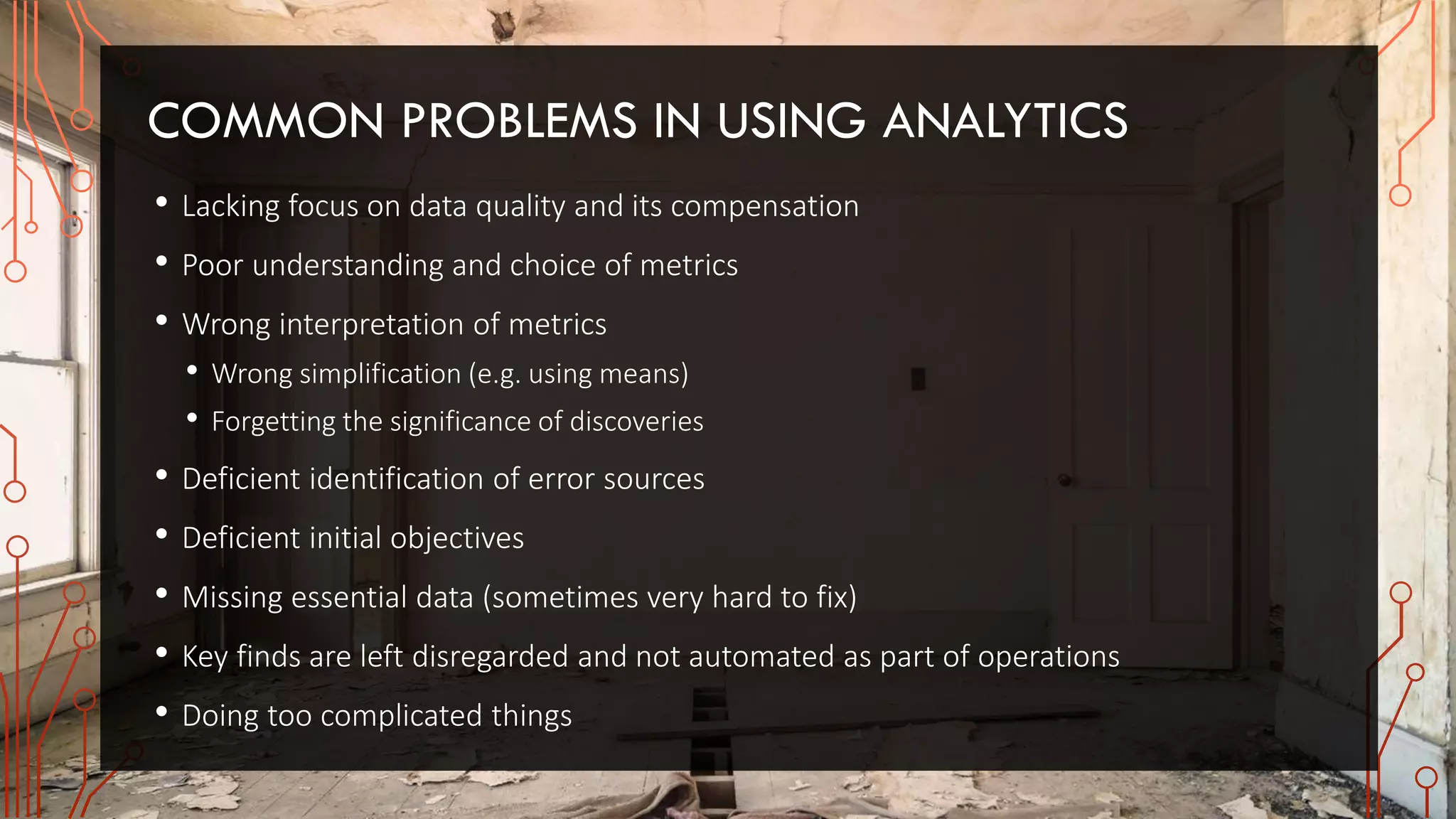

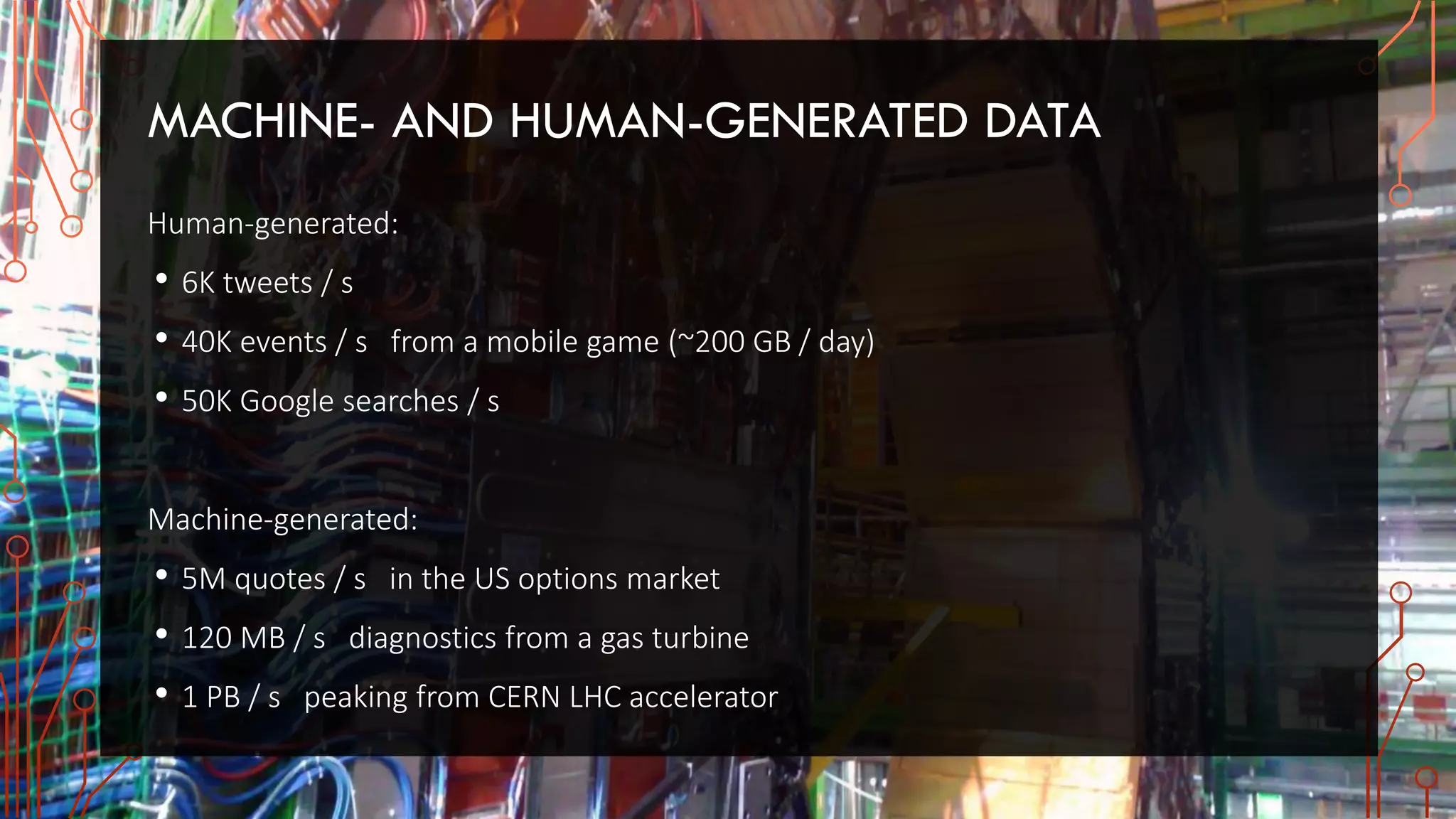

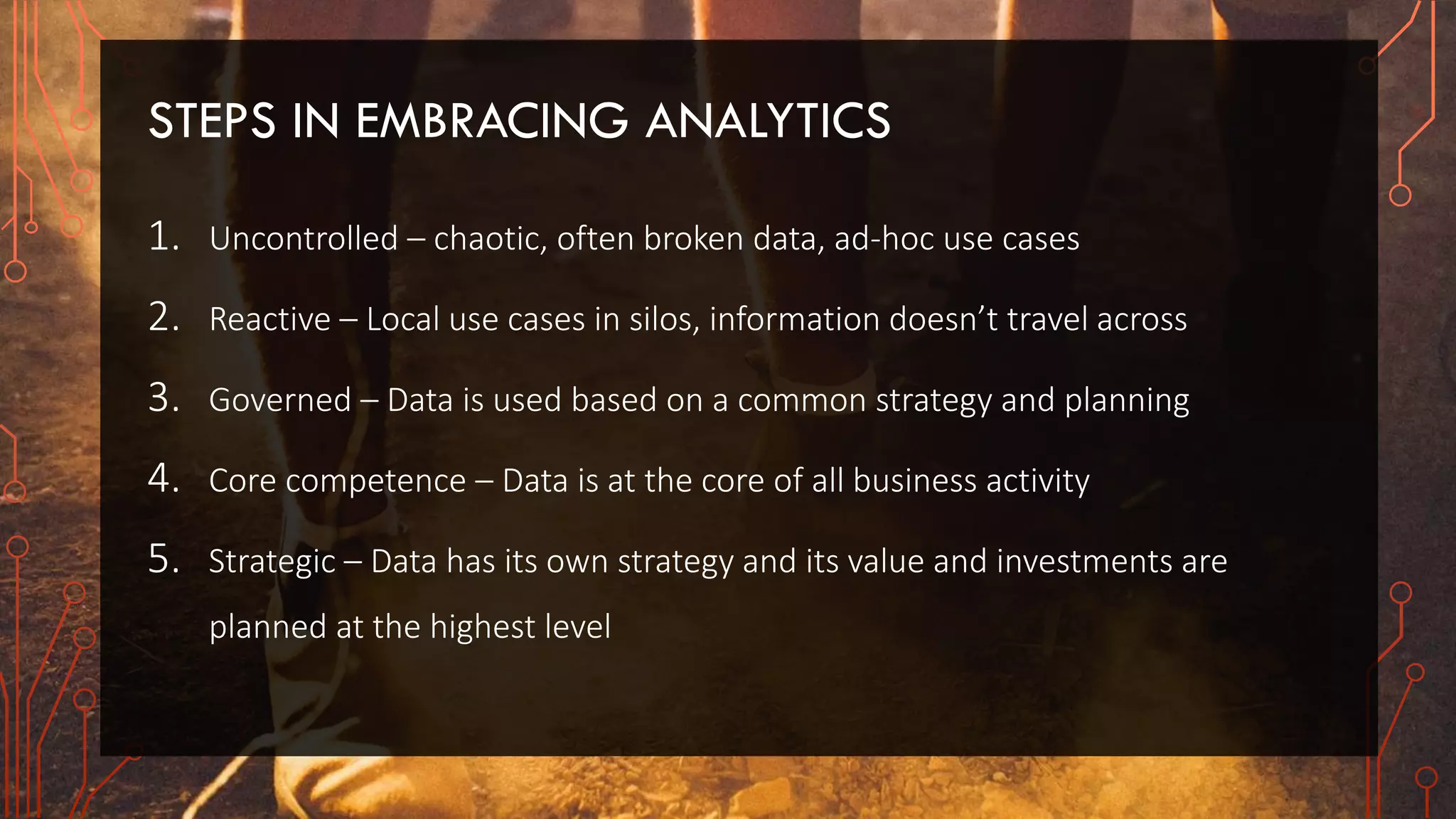

The document discusses the critical role of analytics in modern business across departments and industries, emphasizing data quality and the skilled handling of big data. It outlines the challenges and methods of analytics, including the distinction between correlation and causation, the importance of real-time and operational analytics, and the need to align metrics with business objectives. It also covers evolving data systems, such as cloud computing and the Internet of Things, and the cultural implications for organizations adopting data-driven strategies.