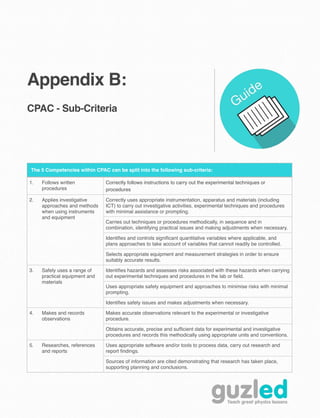

This document provides guidance and resources for teachers implementing the practical assessment component of A-Level physics courses in the UK. It covers the record-keeping requirements for student practical work, feedback from exam board monitoring visits, and planning considerations for the second year of the two-year A-Level course. Key recommendations include completing five or six of the required practical experiments by the end of the first year, developing students' planning and decision-making skills, and aiming to finish all practical work by Easter of the second year. The appendices provide FAQs and further details on the assessment criteria.