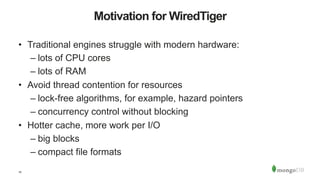

This document provides an overview of WiredTiger, an open-source embedded database engine that provides high performance through its in-memory architecture, record-level concurrency control using multi-version concurrency control (MVCC), and compression techniques. It is used as the storage engine for MongoDB and supports key-value data with a schema layer and indexing. The document discusses WiredTiger's architecture, in-memory structures, concurrency control, compression, durability through write-ahead logging, and potential future features including encryption and advanced transactions.

![27

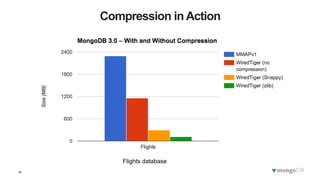

On-disk Compression

• Compression algorithms:

– snappy [default]: good compression, low overhead

– LZ4: good compression, low overhead, better page layout

– zlib: better compression, high overhead

– pluggable

• Optional

– compressing filesystem instead](https://image.slidesharecdn.com/wiredtiger-boston-150917231853-lva1-app6891/85/A-Technical-Introduction-to-WiredTiger-27-320.jpg)