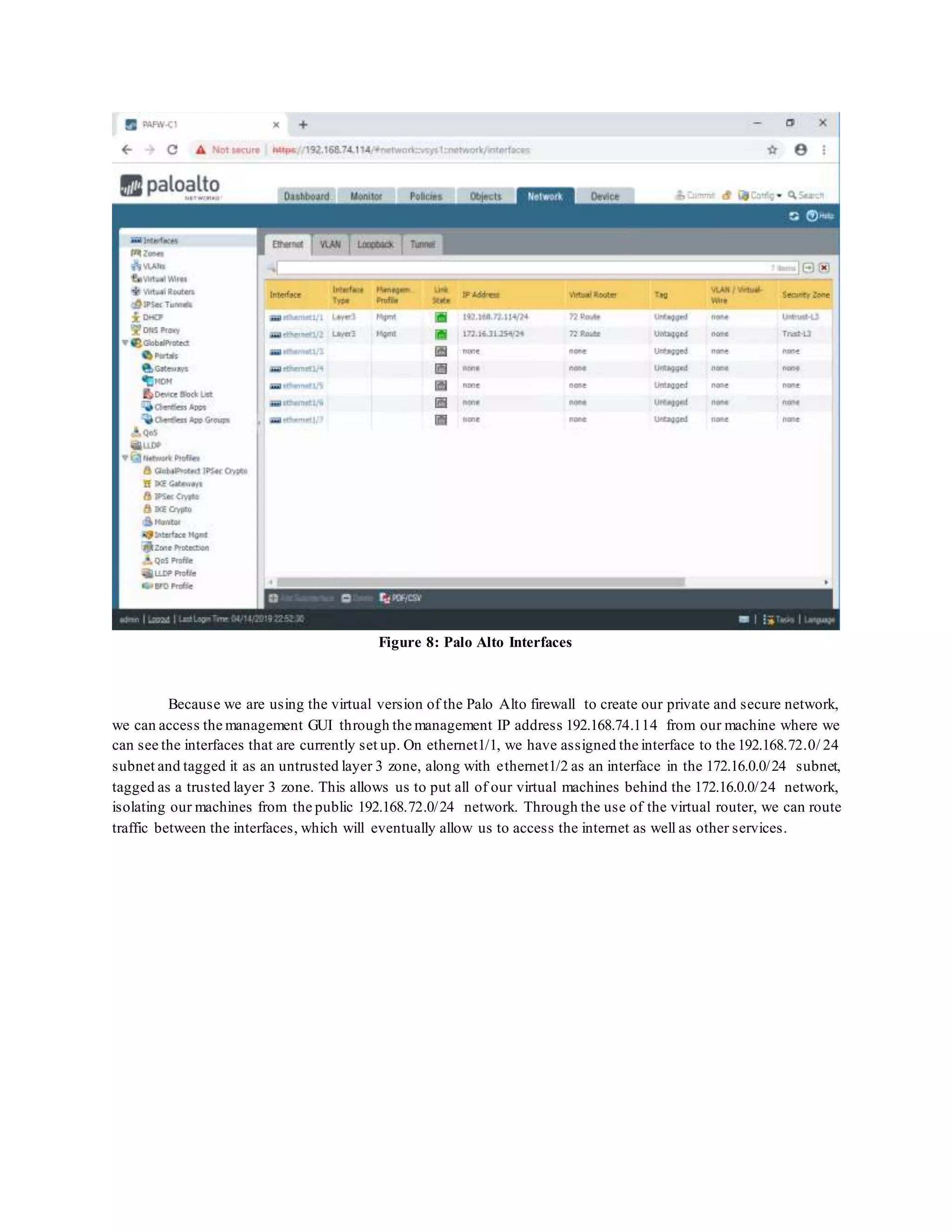

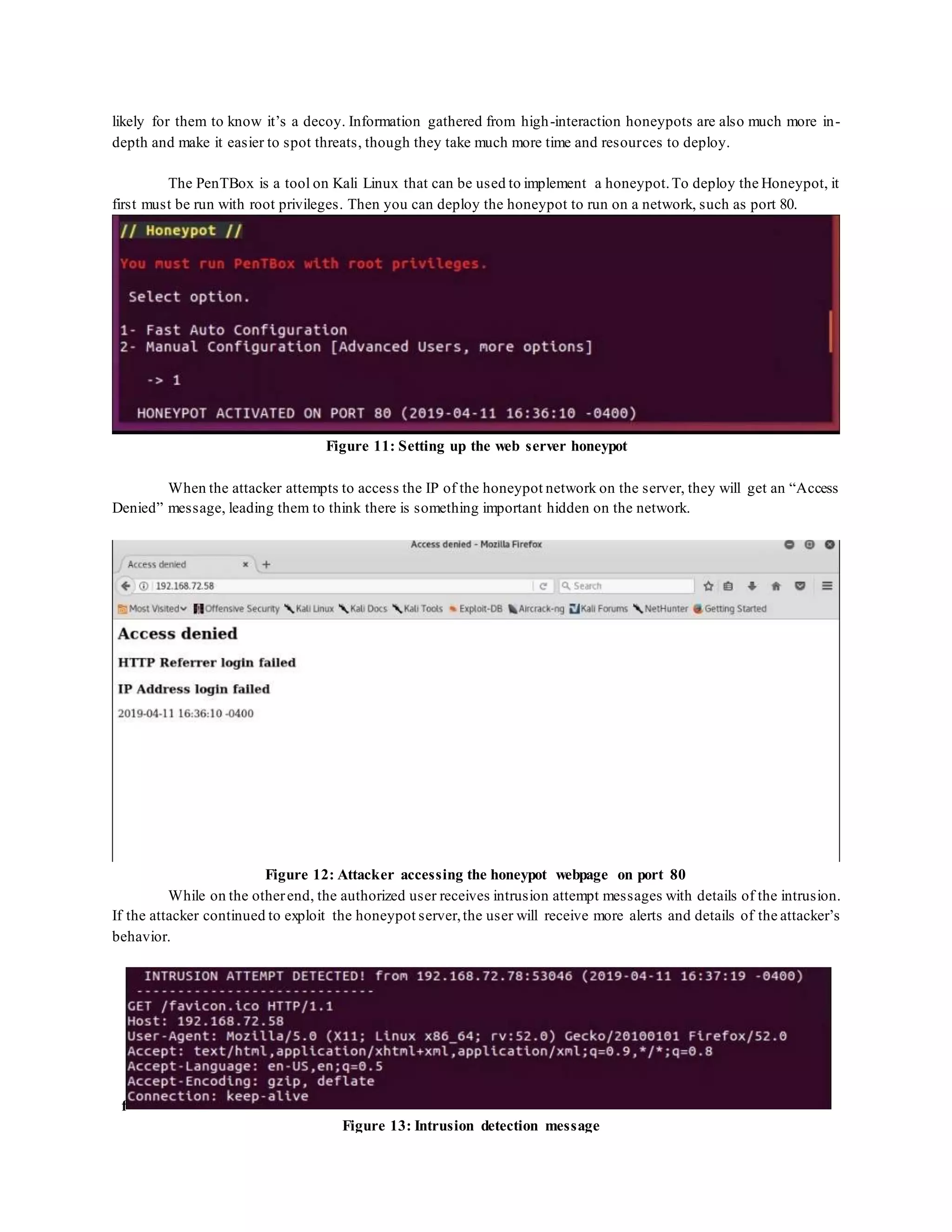

This document discusses the role of digital forensics in cybersecurity, emphasizing the need for continuous monitoring to detect both overt and subtle attacks on systems. It outlines a strategy that combines several tools to gather data on network intrusions, such as deploying honeypots to capture malicious traffic and using the captured data to refine firewall rules and strengthen network security. The document also highlights how big data analysis helps identify vulnerabilities and improve threat detection in cybersecurity practice.