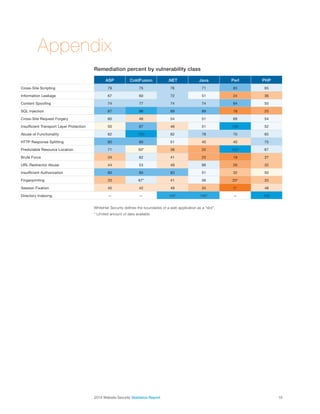

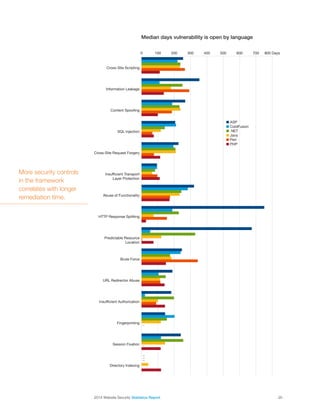

The 2014 Website Security Statistics Report compares the security posture of web programming languages and frameworks, based on an analysis of more than 30,000 websites. It finds no significant difference in the average number of vulnerabilities across popular platforms such as .NET, Java, ASP, and PHP, which suggests that the choice of language or framework does not significantly affect security outcomes. The report argues that security should be an integral part of the software development process, and it provides data on vulnerability types and remediation rates to help teams understand the security implications of their technology choices.