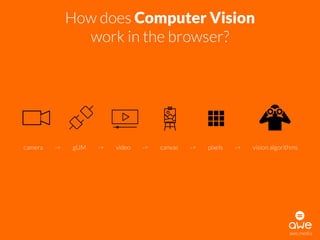

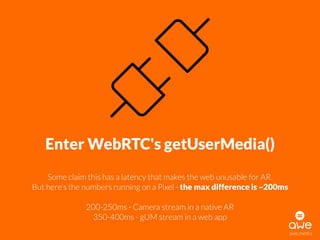

This document surveys advances in computer vision, tracing historical milestones from 1957 through recent web-based applications. It explains how computer vision is brought into the browser through technologies such as WebRTC, and it mentions libraries including OpenCV and ARToolKit. It also discusses the challenges of implementing augmented reality in web environments, along with solutions to them.