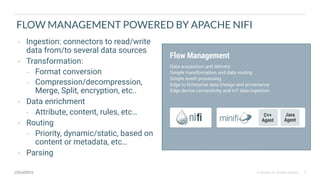

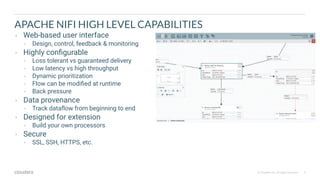

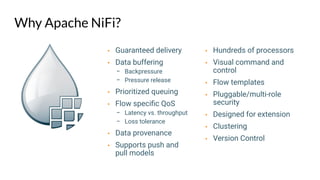

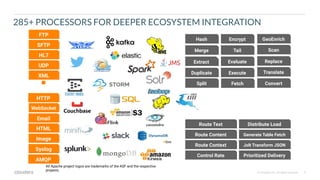

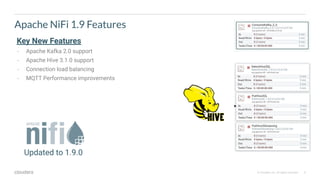

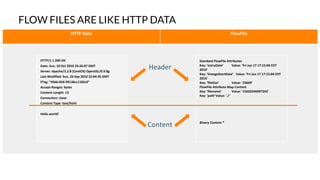

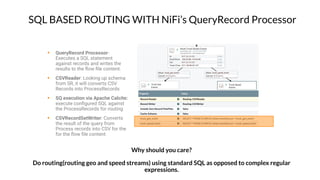

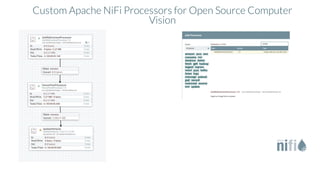

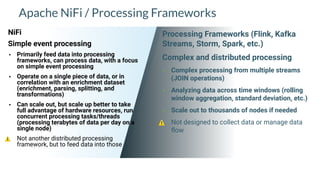

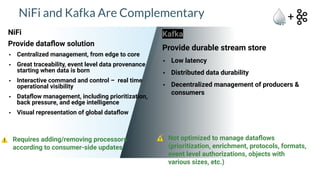

Apache NiFi is a data flow management tool that handles the ingestion, transformation, and routing of data through a web-based user interface, with built-in data provenance, security, and dynamic configuration. It provides numerous processors and handles both structured and unstructured data, which makes it flexible across use cases in big data ecosystems. Key features include integration with Apache Kafka and Hive and the ability to execute SQL queries against records, which makes it well suited to data integration and monitoring.
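To make "SQL queries against records" concrete, here is a minimal Python sketch of the idea. It is not NiFi code and does not use any NiFi API: it loads a batch of records into an in-memory SQLite table and filters them with a query. The helper `query_records` and the sample sensor data are hypothetical, invented for illustration. The table is named `FLOWFILE` only to mirror the convention used by NiFi's QueryRecord processor, where the incoming records are addressed as a table of that name.

```python
import sqlite3


def query_records(records, sql):
    """Run `sql` against `records`, a non-empty list of dicts sharing the same keys.

    Hypothetical helper for illustration; it stands in for the idea of
    querying a batch of records with SQL, not for NiFi's implementation.
    """
    columns = list(records[0].keys())
    conn = sqlite3.connect(":memory:")
    try:
        # Expose the batch as a table named FLOWFILE (assumed naming, see lead-in).
        conn.execute(f"CREATE TABLE FLOWFILE ({', '.join(columns)})")
        placeholders = ", ".join("?" for _ in columns)
        conn.executemany(
            f"INSERT INTO FLOWFILE VALUES ({placeholders})",
            [tuple(record[c] for c in columns) for record in records],
        )
        cursor = conn.execute(sql)
        names = [description[0] for description in cursor.description]
        # Return the matching rows as dicts keyed by result column name.
        return [dict(zip(names, row)) for row in cursor.fetchall()]
    finally:
        conn.close()


# Made-up sample batch: three sensor readings.
records = [
    {"sensor": "a", "temperature": 71.5},
    {"sensor": "b", "temperature": 102.3},
    {"sensor": "c", "temperature": 98.9},
]

# Keep only the readings above a threshold, as a routing step might.
hot = query_records(
    records,
    "SELECT sensor, temperature FROM FLOWFILE "
    "WHERE temperature > 95 ORDER BY sensor",
)
print(hot)
```

In a real flow the query would be configured on a processor rather than written in application code; the sketch only shows why treating a batch of records as a queryable table is convenient for filtering and routing.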