A 3D Visualization System For Hurricane Storm-Surge Flooding

Hurricanes are the most devastating natural hazard affecting the populated US East and Gulf coasts. One of the major threats associated with hurricanes is storm surge. The storm surge can damage buildings and infrastructure, block escape routes, and drown people by flooding low-lying coastal areas. More than 8,000 people were killed, largely by storm-surge flooding, when a hurricane made landfall at Galveston, Texas, in 1900. The death toll in the US from storm-surge hazards has declined drastically over the last several decades because of low hurricane activity and the implementation of timely evacuation from storm-surge zones. The evacuation zones are determined through a combination of near-shore elevations and the storm surge predicted by the numerical model Sea, Lake, and Overland Surges from Hurricanes (Slosh) [1], developed by the National Oceanic and Atmospheric Administration (NOAA). As coastal populations grow and decades of increased hurricane activity occur [2], the risk of drowning increases for the thousands of people living in low-lying areas such as South Florida and New Orleans, Louisiana, as Hurricanes Katrina and Rita demonstrated in 2005. Several hundred people were killed along the Mississippi coast by the storm-surge flooding induced by Katrina.

Coastal residents are warned and advised about evacuations by the National Hurricane Center (NHC) of NOAA through media such as TV, the Web, radio, and newspapers. However, lacking first-hand experience of hurricane impact, many coastal residents tend to overlook the danger of storm surge and choose not to evacuate. This could cause avoidable loss of life and injury. On the other hand, some residents choose to evacuate even though they are not in an evacuation zone. Overevacuation not only inconveniences evacuees, but also increases traffic and consequently slows down the evacuation process.
A major reason for under- and overevacuation is that people don’t properly relate the 2D maps of the evacuation zones to their 3D life experience. Currently, TV meteorologists use a colored line buffer over a map or satellite image to display hurricane warning and watch zones. This information, in combination with 2D evacuation maps, raises the public’s awareness of the need to follow the evacuation order. However, hurricane warning and evacuation maps don’t provide coastal residents with a detailed account of the effect of storm surge on people and property.

Three-dimensional computer visualization and animation can provide a substitute for coastal residents’ lack of personal experience with hurricane-surge flooding. Tremendous progress has been made in 3D animation in the last decade, as movies such as The Perfect Storm and The Day After Tomorrow have demonstrated. However, a 3D visualization and animation system for storm-surge flooding differs from those in Hollywood movies in three respects.

First, objects such as buildings, roads, and trees in a synthetic 3D visualization environment not only have to duplicate the real-world features visually, but also have to be georeferenced so users can find real locations through addresses or spatial coordinates. The sizes and shapes of buildings and trees have to be accurate so users can sense the severity of flooding by comparing the water level with the heights of familiar objects.

Second, the magnitude, extent, and process of storm-surge flooding have to be accurate enough to represent the real situation. This information has to be based on the hydrodynamics of storm surge.

Third, the extent of damage to a property caused by storm surge and waves, such as the collapse of a house, must be determined by engineering rules. Recent advances in high-resolution remote-sensing technology and numerical modeling make it possible to provide accurate data for the earth’s surface features and storm-surge flooding.
Objectives and goals Here, we present a prototype 3D visualization and animation system for storm-surge flooding. Our system includes three major components: ■ a 3D synthetic visualization environment represent- ing terrain, buildings, roads, vegetation, and the sea; ■ a module for animating surge flooding and waves; and ■ a high-resolution storm-surge model to serve as an engine to drive the animation of flooding (see Figure 1). Thestorm-surgemodel(seethe“FurtherReading”side- bar) that we used in our study is an improvement of NOAA’s Slosh model, which enables us to refine the spa- Keqi Zhang, Shu-Ching Chen, Peter Singh, Khalid Saleem, and Na Zhao Florida International University A 3D Visualization System for Hurricane Storm-Surge Flooding ______________________________ Applications Editor: Mike Potel http://www.wildcrest.com 18 January/February 2006 Published by the IEEE Computer Society 0272-1716/06/$20.00 © 2006 IEEE

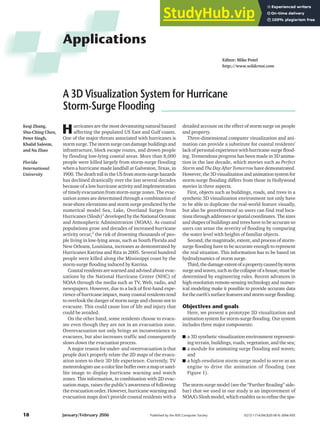

- 2. tial resolution of surge modeling from the kilo- meter to the hundred-meter scale. We built the 3D visualization environment and animation components, which are the focus of this article, based on the Virtual Terrain Project (VTP; see http://www. vterrain.org) using OpenGL. The major goals of our storm-surge flooding visualization system are to perform ■ near-real-time animation for forecasted hur- ricanes and ■ offlineanimationforhypotheticalhurricanes. The NHC issues public advisories that contain forecasted storm positions and intensity for three and five days in advance. There is con- siderable uncertainty in forecasting hurricane tracks and intensity, which are inputs of storm-surge models, because of our limited scientific knowledge of hurricanes. The longer the forecast period is, the larger the un- certainty. The uncertainty of forecasted hurricane tracks is displayed as a cone on TV with the best prediction in the center. Usually, the NHC releases a public advisory every 6 hours during a storm event and every 2 to 3 hours near land- fall. The location, size, and shape of the fore- casted cone are updated and improved as a hurricaneapproachestheshore.Thisrequiresa near-real-time modeling and animation of storm-surge flooding to present the public with themostrecentinformation.Also,thescenarios for most severe, most possible, and slightest flooding have to be produced based on the uncertainty in the hurricane track and intensi- tyforecasttoprovidethepublicwithacomplete picture of possible storm-surge flooding. In addition to informing the public, the real-time prediction and visualization of a storm surge lets emergency managers plan and respond to hurricane impacts more effectively. We used offline visualization and anima- tion to simulate the effect of storm-surge flooding from a hypothetical hurricane. These simu- lations display what the effect of storm-surge flood- ing would be if a hypothetical hurricane makes a landfall at a designated area. 
Offline visualization is useful for planning and education purposes because it displays the possible impact of a storm surge on existing and future coastal development.

We selected two study sites, South Beach and the Rickenbacker Causeway, both located in Miami, Florida, to apply our 3D visualization and animation system. According to the historical record, Miami is frequently struck by hurricanes. South Beach is part of a barrier island chain facing the Atlantic Ocean. It’s a typical example of the important coastal tourism sites that are vulnerable to storm-surge flooding. The Rickenbacker Causeway is a highway connecting Virginia Key and Key Biscayne and is the only available escape route for island residents during a hurricane.

Figure 1. Framework for 3D visualization and animation of storm-surge flooding. (Diagram labels: Lidar measurements; feature extraction; data assimilation and representation; digital terrain model; building; vegetation; road; photographs; GIS databases; visualization environment; animation component; numerical models.)

Further Reading

For more information on key aspects of our system, please see the following articles:

■ Storm-surge modeling: C. Xiao, K. Zhang, and J. Shen, A Three-Dimensional Numerical Model for Storm Surge Flooding, Part I: Model Description and Tide Simulation, tech. report, Int’l Hurricane Research Center, 2004, p. 30.
■ Lidar data classification: K. Zhang et al., “A Progressive Morphological Filter for Removing Non-ground Measurements from Airborne LIDAR Data,” IEEE Trans. Geoscience and Remote Sensing, vol. 41, no. 4, 2003, pp. 872-882.
■ Tree animation: P. Singh et al., “Tree Animation for a 3D Interactive Visualization System for Hurricane Impacts,” Proc. IEEE Int’l Conf. Multimedia and Expo (ICME), IEEE Press, 2005, pp. 598-601.

Constructing a 3D synthetic visualization environment

A realistic and georeferenced 3D visualization environment needs to be built for animating and displaying the effect of storm-surge flooding. The major components of the visualization environment include a digital terrain model, buildings (location and 3D shapes), vegetation (type, location, height, size, and density), roads, rivers, lakes, and oceans. The data required for building these components can be derived through remote-sensing technology, ground surveys, and existing geographic information system (GIS) databases.

Airborne Lidar measurements, feature extraction, and texture derivation

High-resolution measurements of the terrain and the earth’s surface objects are essential to constructing a realistic 3D visualization environment. Until recent advances in airborne light detection and ranging (Lidar) technology, it was difficult to obtain measurements of the earth’s surface topography and objects over large areas with sufficient resolution for realistic 3D visualization. Airborne Lidar is an active remote-sensing technology. A typical airborne Lidar mapping system consists of three basic components: a laser scanner, a kinematic GPS, and an inertial measurement unit (IMU); see Figure 2. The laser scanner determines the range from the aircraft to an object on the ground using the travel time of emitted and reflected laser pulses. The GPS and IMU record the aircraft position and orientation. We can determine an object’s 3D coordinates (x, y, and z), referenced to the earth’s center, by combining the laser range, GPS, and IMU data.

With vertical accuracies of up to 15 cm and submeter horizontal resolution, Lidar measurements provide accurate and detailed geometric information on the terrain, buildings, and trees for constructing a synthetic visualization environment (see Figure 3). However, airborne Lidar systems produce irregularly spaced 3D point measurements of objects scanned by the laser beneath the aircraft.
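As a rough check on the survey geometry, the parameters quoted in Figure 2 (500-m altitude, 36° scan angle, 33-kHz pulse rate, 50 m/s aircraft speed) can be turned into a swath width and an average point density. The sketch below is illustrative arithmetic only: it assumes flat terrain, one return per pulse, and a uniform spread of points, which an oscillating mirror does not actually give. The function names are hypothetical and are not part of the system described here.

```python
import math


def swath_width(altitude_m, scan_angle_deg):
    """Ground swath width over flat terrain for a scanner whose
    full (edge-to-edge) scan angle is scan_angle_deg."""
    return 2.0 * altitude_m * math.tan(math.radians(scan_angle_deg) / 2.0)


def mean_point_density(pulse_rate_hz, swath_m, speed_m_s):
    """Average points per square meter, assuming one return per pulse
    and a uniform spread over the strip swept each second."""
    return pulse_rate_hz / (swath_m * speed_m_s)


def mean_point_spacing(density_per_m2):
    """Side of the square that one point would occupy on average."""
    return 1.0 / math.sqrt(density_per_m2)


# Parameters as quoted in the Figure 2 caption and labels.
swath = swath_width(500.0, 36.0)
density = mean_point_density(33_000.0, swath, 50.0)
spacing = mean_point_spacing(density)

print(f"swath width   : {swath:.0f} m")
print(f"point density : {density:.2f} points per square meter")
print(f"mean spacing  : {spacing:.2f} m")
```

The computed swath of about 325 m is close to the 320-m swath labeled in the figure, and the density comes out at roughly two points per square meter. Real along-track and across-track spacings differ from this mean because the mirror sweeps back and forth while the aircraft moves forward.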
To construct a 3D synthetic visualization environment, we have to extract geometric information for the terrain, buildings, and trees from voluminous point data.

We have developed a framework that includes a series of methods to extract the features from Lidar measurements. Under this framework, measurements for the terrain and nonground objects are first classified using a progressive morphological filter (see the “Further Reading” sidebar). Then, buildings and vegetation are further separated within the nonground measurements using the parameters of a plane fitted to the Lidar measurements within a local window (see Figure 4). With classified terrain, building, and vegetation Lidar measurements, we generate the digital terrain model by interpolating ground measurements, extract the footprints and 3D shapes of buildings, and derive the locations and heights of trees from nonground measurements.

Figure 2. Schematic diagram showing data acquisition parameters for a Lidar system. We obtained the point spacings from a scanner with an oscillating mirror and a forward aircraft speed of 50 meters per second. We can achieve a high point density by setting a low flying altitude, slow flying speed, and narrow scan angle and by performing overlapping surveys. (Diagram labels: 500-m flight altitude; 320-m-wide scan swath; 13-cm-wide laser footprint; 33-kHz laser pulse rate; 36° scan angle; point spacings of 0.7 m and 1.4 m; GPS satellites; GPS ground station; differential GPS navigation; inertial measurement unit.)
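The plane-fitting step used to separate buildings from vegetation can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes an ordinary least-squares fit of z = a·x + b·y + c to the points in one window, and it uses synthetic points in place of real Lidar returns. The function names and sample data are hypothetical. It returns the two quantities named in the Figure 4 caption, the unit normal of the fitted plane and the sum of squares due to elevation deviations (SSED).

```python
import math


def fit_plane(points):
    """Least-squares fit of z = a*x + b*y + c to (x, y, z) points."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    mz = sum(p[2] for p in points) / n
    # Centered sums keep the 2x2 normal equations well conditioned.
    sxx = sum((x - mx) ** 2 for x, _, _ in points)
    syy = sum((y - my) ** 2 for _, y, _ in points)
    sxy = sum((x - mx) * (y - my) for x, y, _ in points)
    sxz = sum((x - mx) * (z - mz) for x, _, z in points)
    syz = sum((y - my) * (z - mz) for _, y, z in points)
    det = sxx * syy - sxy * sxy
    if abs(det) < 1e-12:
        raise ValueError("points are collinear in the x-y plane")
    a = (sxz * syy - syz * sxy) / det
    b = (syz * sxx - sxz * sxy) / det
    c = mz - a * mx - b * my
    return a, b, c


def describe_window(points):
    """Return (unit normal, SSED) for the plane fitted to one window."""
    a, b, c = fit_plane(points)
    length = math.sqrt(a * a + b * b + 1.0)
    normal = (-a / length, -b / length, 1.0 / length)
    ssed = sum((z - (a * x + b * y + c)) ** 2 for x, y, z in points)
    return normal, ssed


# Synthetic 4 x 4 windows: a gently sloping roof and a rough tree canopy.
roof = [(float(x), float(y), 10.0 + 0.1 * x)
        for x in range(4) for y in range(4)]
canopy = [(float(x), float(y), 5.0 + 0.75 * ((7 * x + 13 * y) % 5))
          for x in range(4) for y in range(4)]

roof_normal, roof_ssed = describe_window(roof)
canopy_normal, canopy_ssed = describe_window(canopy)
print("roof   SSED:", roof_ssed)
print("canopy SSED:", canopy_ssed)
```

A near-zero SSED marks the planar roof, while the canopy window leaves a large residual. A classifier built on this idea would still need thresholds tuned to the data, which the article does not specify.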

Furthermore, we extract shape data for roads, coastlines, canals, and lakes from existing GIS databases. Combining these data, we can construct wireframes of 3D objects for visualization. However, object wireframes, like the skeleton of the human body, only represent the shape of 3D objects. To make objects look realistic, we have to provide a real-life skin (texture) over a wireframe. We obtained the textures for buildings and trees from color aerial and ground photographs.

Figure 3. Image interpolated from Lidar point measurements for a coastal section at South Beach, Miami, Florida. The right corner is the ocean side.

Figure 4. (a) Aerial photograph of a building surrounded by trees, (b) Lidar point measurements for the same area, (c) unit normal vectors of the fitting plane for a Lidar point and its neighbors within a window, and (d) the sum of squares due to elevation deviations (SSEDs) of Lidar measurements within a window from the fitting plane. The unit normal vectors are consistent and SSEDs are small for building roof points, while unit normal vectors are variable and SSEDs are large for vegetation points.

3D models for buildings and trees

We have to create 3D building and tree models based on wireframe and texture data to develop a 3D visualization environment in the VTP. Unfortunately, the VTP system can directly read only digital terrain models (usually stored as binary files) and GIS shape files for roads, and generate the corresponding visual representations using its C++ libraries. It cannot create complex 3D building and vegetation models, but it provides an API to read files (.3DS) from the commercial 3D modeling software Autodesk 3ds Max. We created building and plant models in 3ds Max in two steps. First, we created geometric skeletons of objects from primitive parts such as boxes and cylinders and their transformations, based on geometric data from Lidar measurements. Second, we created textures, including material property, color, reflection, and opacity, to cover the skeletons, using ground and aerial photographs. Figure 5 shows models for a building and a palm tree in South Beach. In addition, we developed other models, including bridges, cars, and wake effects, using 3ds Max.

Figure 5. (a) Building and (b) tree models created in 3ds Max.

3D visualization environment

We imported digital terrain models, building and vegetation models, and road shape files into the VTP to generate a 3D synthetic environment using C++ libraries and OpenGL APIs. Figure 6 shows a 3D visualization environment for an area in South Beach. VTP also has a user-friendly interface that lets users fly or walk around the 3D environment, zoom in or out of a scene, and select designated locations within the environment. This gives users an interactive way to navigate through a 3D synthetic environment and to reach their location of interest.

Animation of storm-surge flooding

The animation component reproduces the storm-surge flooding processes in a synthetic 3D visualization environment. Data for storm-surge flooding come from numerical modeling based on hurricane track and intensity. However, animating storm-surge flooding alone is not enough to produce a realistic flooding scene because storm surge is only one of the impacts caused by hurricanes. High winds and heavy rain, which often accompany a hurricane, can also alter the environment significantly. To make the visualization of the surge-flooding process more closely representative of a natural situation, we constructed six animation engines to simulate water, wind, rain, and other phenomena caused by storms. The six animation engines are

■ flooding and wave;
■ cloud, rain, and lightning;
■ tree;
■ debris;
■ vehicle; and
■ 3D sound.

Flooding and wave animation

The water-level change due to storm surge is easy to animate because it approximates a sine curve and is a relatively slow and large-scale process. Animating ocean-surface waves is much more challenging. Ocean-surface waves in deep water caused by hurricanes have multiple frequencies that are determined by local wind waves and by swell waves propagated from the area covered by storms.
In shallow coastal water, waves transform due to interactions with the ocean bottom, which makes the animation even more complicated. Many numerical models can simulate the generation and propagation of multispectral waves. However, the demand on computational resources for the simulation and rendering is tremendous. To save computational cost, we implemented only a simple deep-ocean wave model to enhance the visualization effect [3]. The wave animation changes as wind speeds and directions vary, which allows it to represent changes in wave size during a hurricane. Figure 7 shows a scene of flooding animation from our system. Superimposing an animated surface wave over the rising water makes surge flooding look more realistic.

Figure 6. 3D visualization environment for South Beach.

Figure 7. (a) Ocean wave animation and (b) overland flooding animation.
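The idea of superimposing a surface wave on a slowly rising water level can be sketched in a few lines. This is an assumption-laden illustration rather than the system's wave engine: the surge is modeled as a half-sine rise and fall, and the wave is a single trochoidal (Gerstner-type) component using the deep-water dispersion relation ω² = g·k. All names, amplitudes, wavelengths, and durations are hypothetical. In a wind-driven animation, the amplitude and travel direction would be the natural places to feed in wind speed and direction.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def surge_level(t_s, peak_m, duration_s):
    """Half-sine rise and fall of the still-water level, zero outside the event."""
    if t_s < 0.0 or t_s > duration_s:
        return 0.0
    return peak_m * math.sin(math.pi * t_s / duration_s)


def gerstner_offset(x0_m, t_s, amplitude_m, wavelength_m):
    """Horizontal and vertical displacement of the surface particle whose
    rest position is x0_m, for one deep-water trochoidal wave component."""
    k = 2.0 * math.pi / wavelength_m   # wavenumber
    omega = math.sqrt(G * k)           # deep-water dispersion relation
    phase = k * x0_m - omega * t_s
    return -amplitude_m * math.sin(phase), amplitude_m * math.cos(phase)


def water_surface(x0_m, t_s, peak_m, duration_s, amplitude_m, wavelength_m):
    """Position (x, z) of a surface point: the wave rides on the surge level."""
    dx, dz = gerstner_offset(x0_m, t_s, amplitude_m, wavelength_m)
    return x0_m + dx, surge_level(t_s, peak_m, duration_s) + dz


# Hypothetical event: 3-m peak surge over one hour, 0.5-m waves, 40-m wavelength.
for minute in (0, 15, 30, 45, 60):
    x, z = water_surface(0.0, minute * 60.0, 3.0, 3600.0, 0.5, 40.0)
    print(f"t = {minute:2d} min  x = {x:6.2f} m  z = {z:5.2f} m")
```

Keeping the product of wavenumber and amplitude below 1 avoids self-intersecting crests in this kind of model. A renderer would evaluate many such components over a grid of rest positions each frame.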

Cloud, rain, and lightning animation

The weather of a hurricane has distinct features such as dark clouds, heavy rain, and sometimes thunder and lightning. To make the hurricane impact look more realistic, these features have to be animated appropriately. We created two cloud patterns to represent the sky in hurricane and normal conditions. The hurricane sky is filled with dark, thick clouds (see Figure 8), while a normal sky is clear with few clouds. Cloud animation provides viewers with a sense of the severe weather from a hurricane. We animated the raindrops using a particle engine [4]. The engine stores a list of raindrops and updates them at a fixed time interval. The rain animation is driven by variables including wind direction, wind speed, and rain intensity. We animated the lightning by randomly assigning depth to each lightning structure.

Tree animation

The tree animation engine displays the wind effect on trees. We employed a billboard model, in which a tree is represented by planar texture-mapped geometry at various angles (see http://www.vterrain.org/Plants/Modelling/), to perform a simple tree animation. The advantage of a billboard model is that it is easy to implement and consumes few computing resources. The disadvantage is that the model only allows a tree to bend or swing as a whole around a pivot point. This method is reasonable for animating trees far away from the center of a scene, where details are not needed. However, the method lacks the flexibility and detail needed to animate the trees at the scene’s center. We used a vertex weighting technique (see the “Further Reading” sidebar) to improve the tree animation at the center of a scene. This method allows trunk bending and branch swinging in different directions, making tree animation more visually realistic. Wind speed and direction determine the magnitude and direction of tree bending and swinging in the tree animation engine.

Debris animation

High winds during a hurricane often cause flying debris that can result in serious damage to unprotected windows.
The debris can be any solid object movable by wind, such as rocks on the ground, snapped tree branches, and loosened roof tiles. We used the Open Dynamics Engine (see http://www.ode.org) along with VTP to animate flying debris. The wind speed and direction variables control the animation. Figure 9 shows debris animation around the South Beach region.

Vehicle animation

To display the effect of storm-surge flooding on evacuation traffic, the vehicles have to be animated. Vehicles traveling on a highway or a road during or after rain produce a wake effect that results in splashes around the vehicle and a trail of water behind it. We created .3DS models that render the wake effect around a vehicle and manipulated their size and texture using the VTP to animate car movement under rain conditions. Figure 10 shows the wake effect caused by car movement during rain.

3D sound animation

Creating sound effects from wind, waves, rain, and thunder makes storm-surge flooding animation more realistic. We implemented 3D sound animation using OpenAL (see http://www.openal.org). The sound animation includes a background sound for ocean waves and wind, which becomes louder as the wind speed increases. The strength of the sound is determined with reference to the listener’s position, which is the camera location in our system. We also blended sounds for thunder and rain into the 3D sound effect. The volume of thunder depends on the visibility of the lightning and its distance from the listener (camera). The sound of rain increases in volume as listeners move close to the road or ocean.

Figure 8. Cloud, rain, and lightning during a hurricane. We added the blue background to the lower portion to make raindrops more evident.

Figure 9. Debris animation.
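The distance dependence of the sound effects can be illustrated with the inverse-distance-clamped attenuation formula, one of the standard distance models defined by OpenAL. The sketch below only reproduces that formula in plain Python to show how gain falls off with camera distance. It does not call OpenAL, and the article does not state which distance model or parameter values the system uses. The wind-to-volume mapping, the rule that invisible lightning is silent, and all default distances are hypothetical choices made for the example.

```python
import math


def inverse_distance_clamped_gain(distance, reference_distance=1.0,
                                  max_distance=1000.0, rolloff=1.0):
    """Gain under the inverse-distance-clamped model: the distance is
    clamped to [reference_distance, max_distance] before attenuation."""
    d = min(max(distance, reference_distance), max_distance)
    return reference_distance / (
        reference_distance + rolloff * (d - reference_distance))


def wind_background_gain(wind_speed_m_s, full_volume_speed_m_s=60.0):
    """Hypothetical linear mapping from wind speed to background volume."""
    return min(max(wind_speed_m_s / full_volume_speed_m_s, 0.0), 1.0)


def thunder_gain(listener_pos, strike_pos, lightning_visible,
                 reference_distance=100.0, max_distance=10_000.0):
    """Thunder volume from lightning visibility and distance to the camera.
    Treating invisible lightning as silent is an assumption of this sketch."""
    if not lightning_visible:
        return 0.0
    distance = math.dist(listener_pos, strike_pos)
    return inverse_distance_clamped_gain(
        distance, reference_distance, max_distance)


camera = (0.0, 0.0, 2.0)
for strike_y in (200.0, 1000.0, 5000.0):
    gain = thunder_gain(camera, (0.0, strike_y, 2.0), True)
    print(f"strike at {strike_y:6.0f} m -> gain {gain:.3f}")
print("wind gain at 30 m/s:", wind_background_gain(30.0))
```

In an OpenAL program, the library applies this attenuation itself once the listener position is updated from the camera and each source is given its position and reference distance.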

Discussion and conclusions

Our visualization and animation system shows how a rising storm surge can stop and submerge vehicles in low-elevation road sections during a hypothetical hurricane, leading to a blockage of the escape route. This vivid animation, in combination with a realistic 3D visualization environment, has impressed local coastal residents in several demos (see Figure 11).

Displaying and animating 3D objects is a computation- and memory-intensive task. The current prototype, running on a PC workstation with a 3.2-GHz processor, 2 Gbytes of RAM, and a 256-Mbyte graphics card, has achieved acceptable performance for a coastal area extending several kilometers, but has difficulty rendering large-scale visualizations. We can improve the performance for large-scale 3D visualization and animation in several ways.

First, we can increase the animation speed by optimizing the graphics-rendering algorithms. Pyramid algorithms need to be introduced into the system to compress components for a large-scale animation.

Second, we can improve the animation speed by running the system on a computer cluster. Doing this requires breaking down the large-scale project into many small-scale components and developing the corresponding parallel algorithms.

Third, if resources are limited for visualizing and animating the entire area impacted by storm surge, we can perform visualization and animation for landmark buildings, evacuation bottlenecks, and key locations instead of the whole area. The public can form an impression of storm-surge impact by watching the animation at landmarks close to where they live.

We designed our current prototype for real-time visualization and animation of storm-surge flooding, which has higher requirements for computing power than non-real-time visualization. An emphasis on real-time visualization and animation limits the detail of the 3D visualization environment. Real-time animation is not required for answering what-if questions about the possible impacts of hypothetical hurricanes of different intensities. These questions are best addressed in offline animations, which can take hours to produce frames in batch mode. Greater detail of the 3D environment and flooding processes can be included in offline visualization and animation. Therefore, we must treat real-time and offline animation separately.

Our 3D visualization and animation system is based on real measurements and georeferenced data. By entering the address or spatial coordinates of a specific house, users can view the impact of storm surge on their property. Users can also view the spatial changes of storm-surge flooding by flying or walking through an animated environment. Therefore, this system can help emergency managers educate coastal residents to take appropriate evacuation action by letting them view the potential impact and damage of storm surge to their living environment. This system is also useful for urban planning and flood damage mitigation because it can simulate possible storm-surge impact. Moreover, we can extend the current system to visualize and animate fresh-water flooding and wind damage to buildings. Georeferenced 3D synthetic visualization environments created for hurricane impact animation can also be used for other animation tasks such as routine traffic visualization.

Figure 10. Water wake effect animation.

Figure 11. Flooding and traffic animation for the Rickenbacker Causeway during a hypothetical hurricane. The right panel shows a traffic blockage due to storm-surge flooding.

Acknowledgments

This work was partially supported by a grant from the Federal Emergency Management Agency and by the Florida Hurricane Alliance Research Program sponsored by NOAA. This article is based on our paper from the Proceedings of the IEEE International Conference on Multimedia and Expo 2005 (see the sidebar for the complete reference). We thank Mike Potel, associate editor in chief of IEEE Computer Graphics and Applications, for reviewing our article and providing valuable comments.

References

1. C.P. Jelesnianski, J. Chen, and W.A. Shaffer, Slosh: Sea, Lake and Overland Surges from Hurricanes, tech. report, Nat’l Oceanic and Atmospheric Administration, 1992, p. 71.
2. S.B. Goldenberg et al., “The Recent Increase in Atlantic Hurricane Activity: Causes and Implications,” Science, vol. 293, no. 5529, 2001, pp. 474-479.
3. A. Fournier and W.T. Reeves, “A Simple Model of Ocean Waves,” Proc. Siggraph, vol. 4, ACM Press, 1986, pp. 75-84.
4. W.T. Reeves, “Particle System—A Technique for Modeling a Class of Fuzzy Objects,” ACM Trans. Graphics, vol. 2, no. 2, 1983, pp. 91-108.

Readers may contact Keqi Zhang at zhangk@fiu.edu.

Readers may contact editor Mike Potel at potel@wildcrest.com.