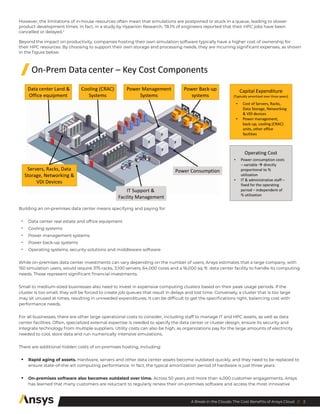

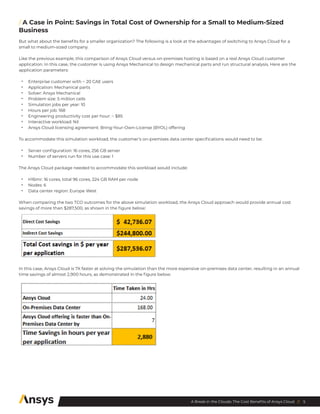

The document discusses the cost and performance benefits of using Ansys Cloud for engineering simulations. It emphasizes flexible, on-demand computing resources that can significantly shorten simulation solve times while reducing annual costs. Case studies show substantial savings and efficiency gains for both large enterprises and smaller organizations compared with maintaining on-premises data centers. The document concludes that Ansys Cloud lets engineering teams focus on innovation and product development rather than on outdated hardware and complex infrastructure management.