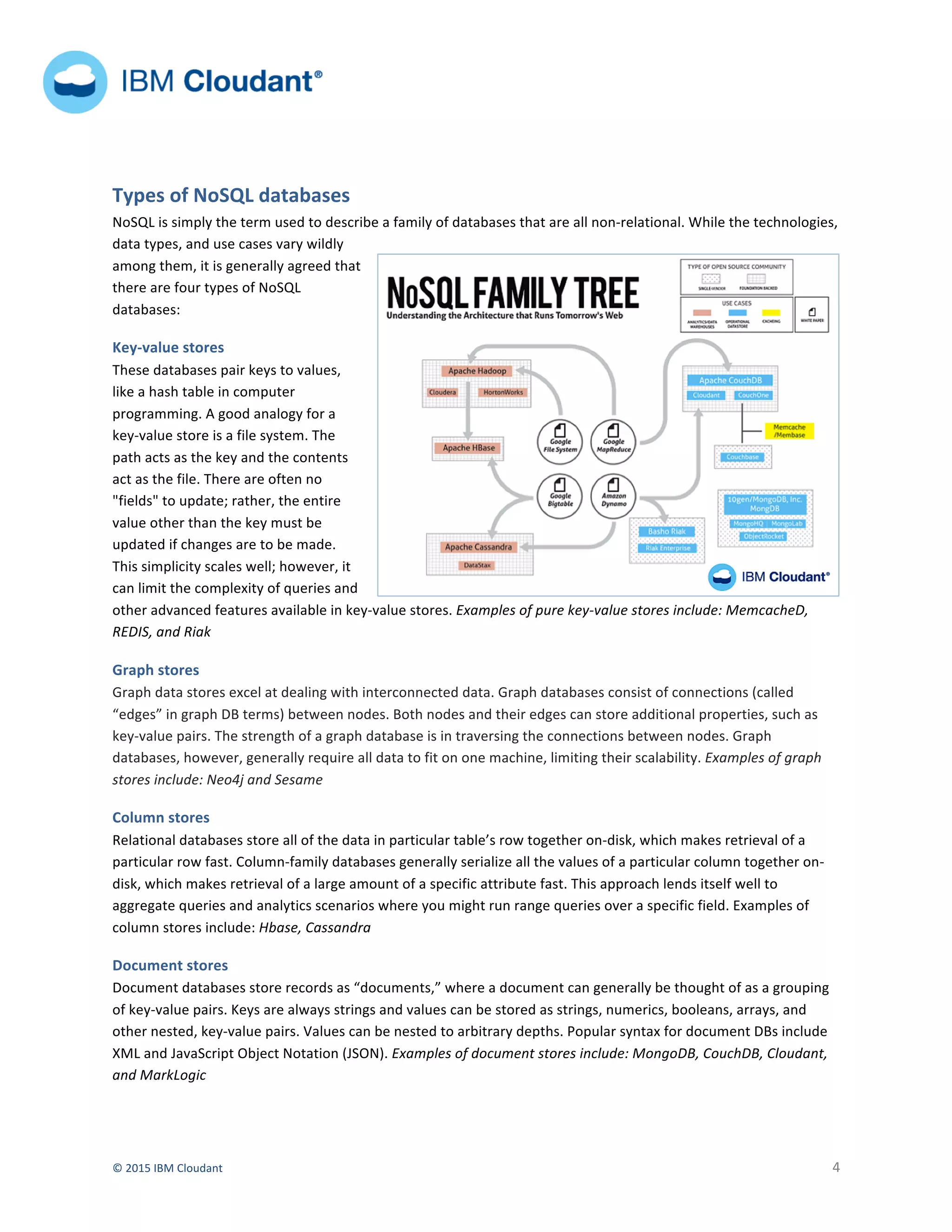

The document discusses the rise of NoSQL databases as an alternative to traditional relational databases. It gives a brief history of NoSQL, noting that new types of applications and data led developers to look for databases offering more flexibility and scalability. It also describes the main types of NoSQL databases (key-value stores, graph stores, column stores, and document stores) and discusses some of their advantages, such as flexibility, scalability, availability, and lower costs.