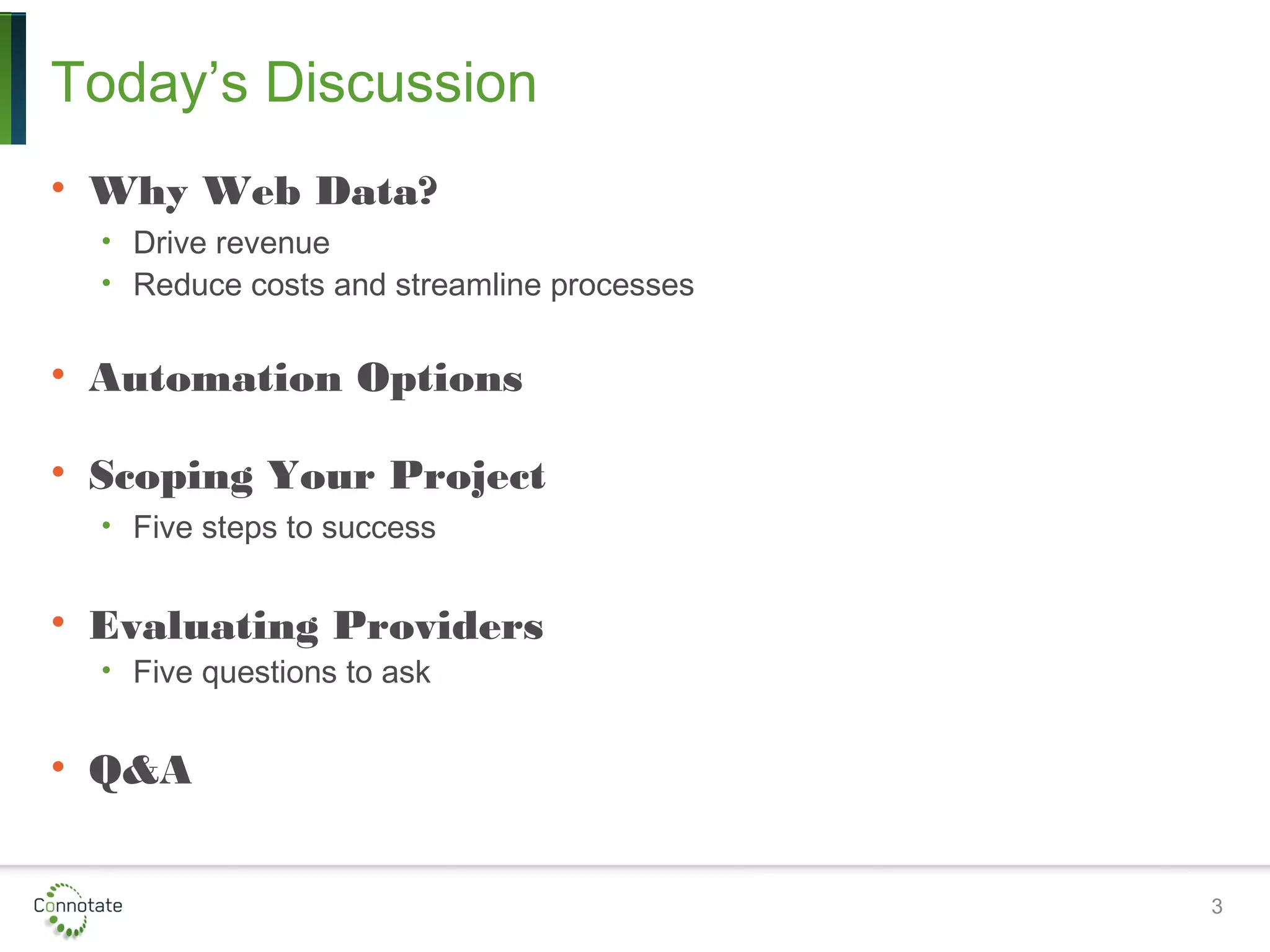

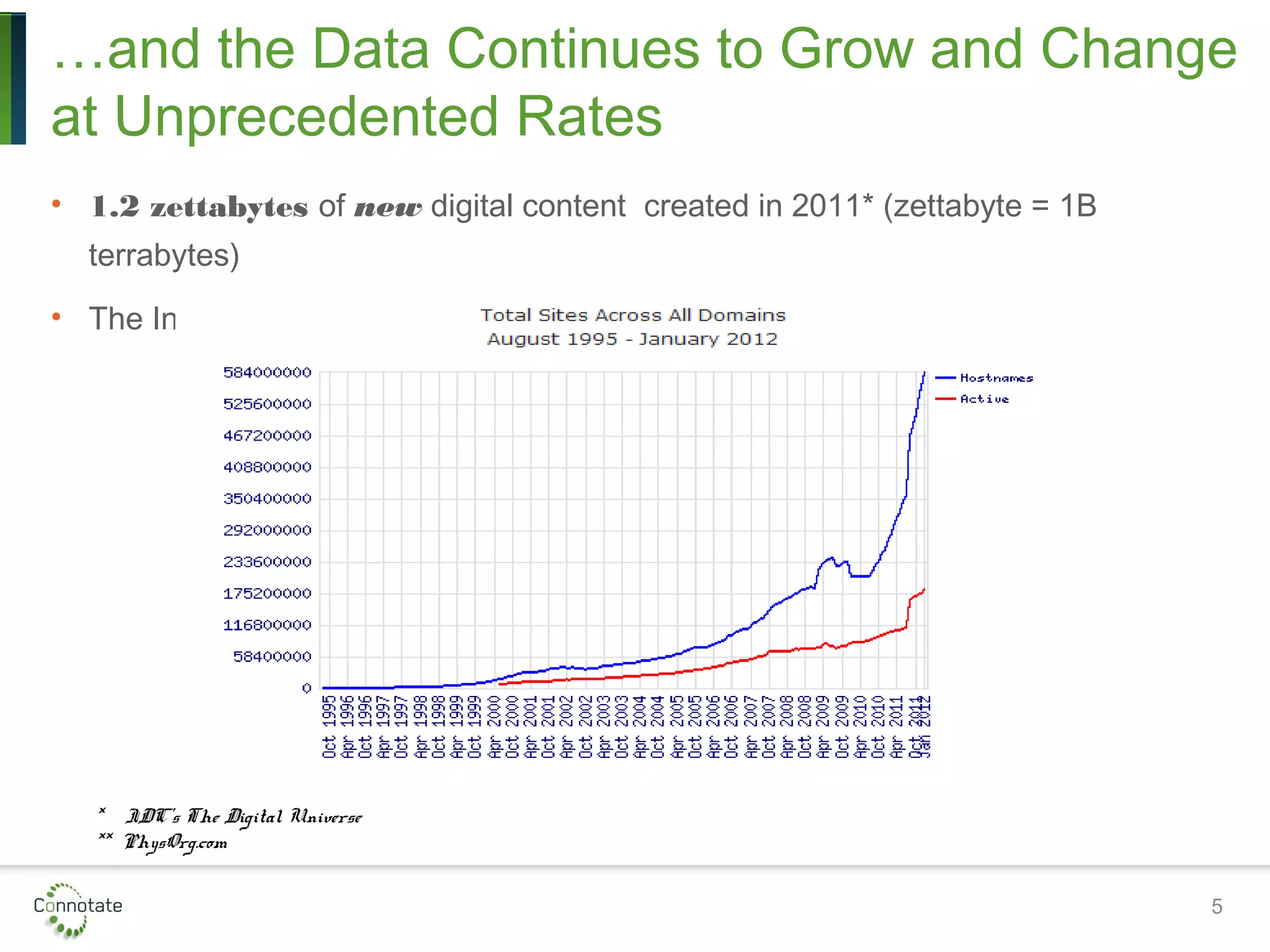

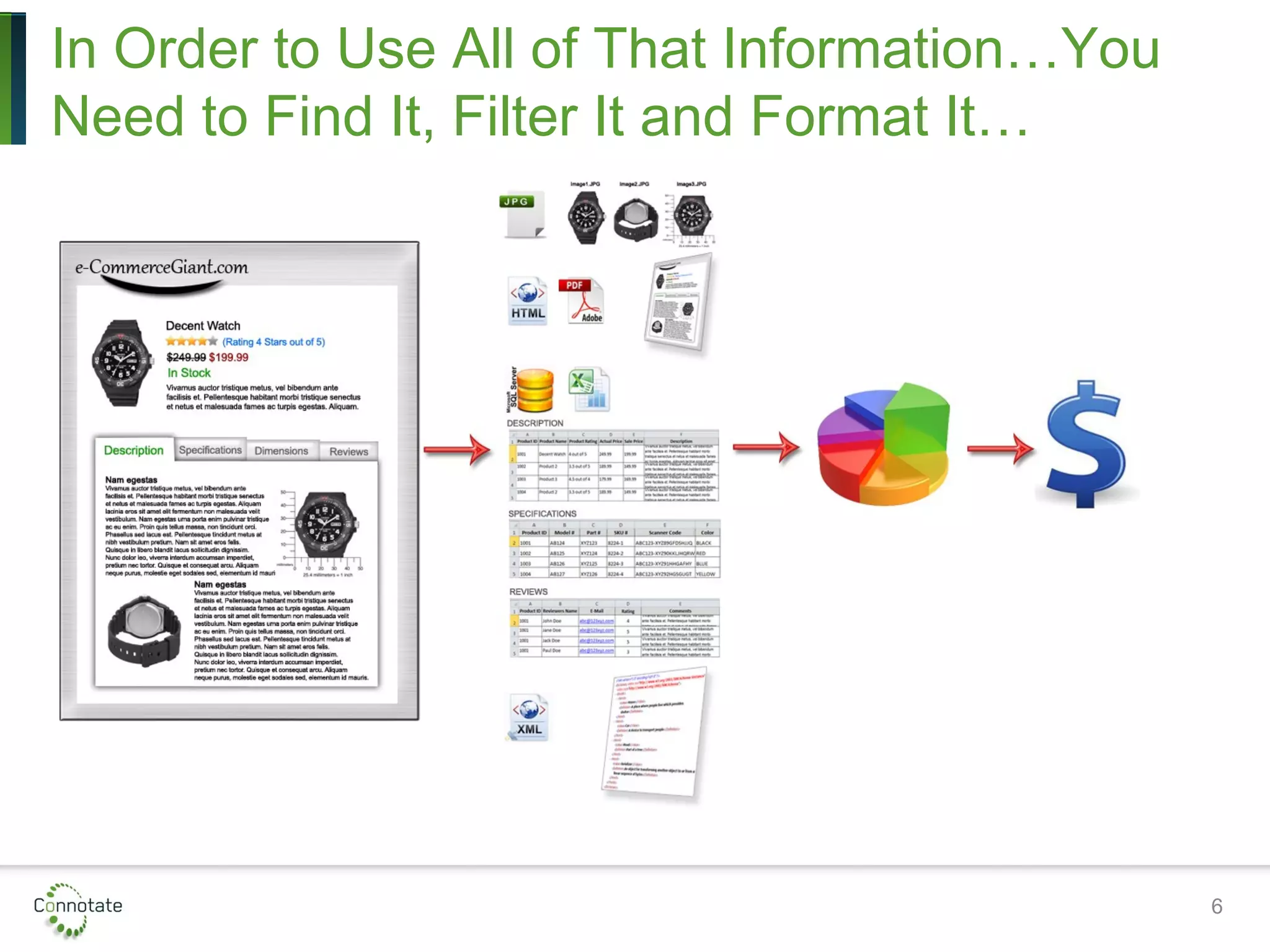

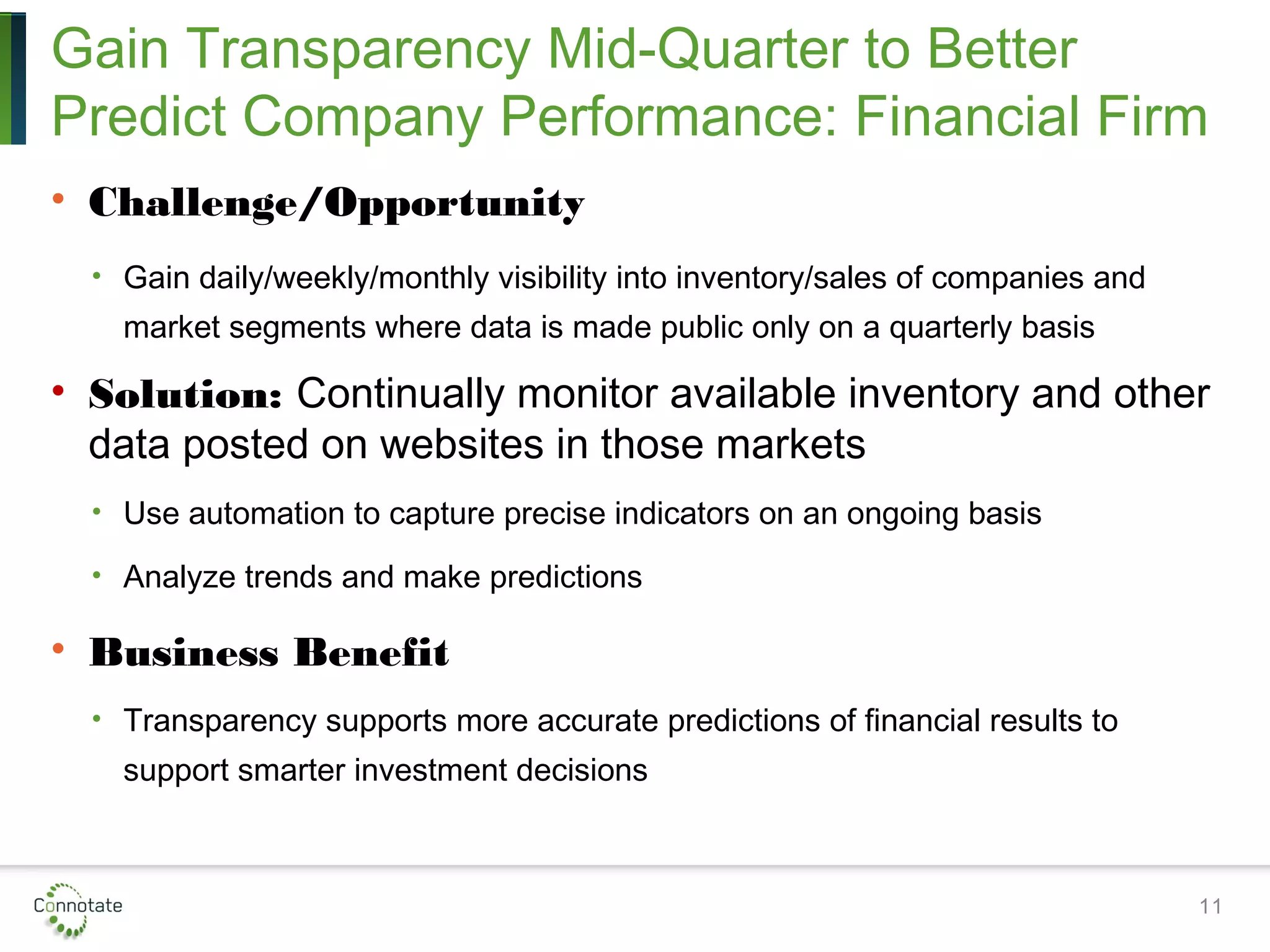

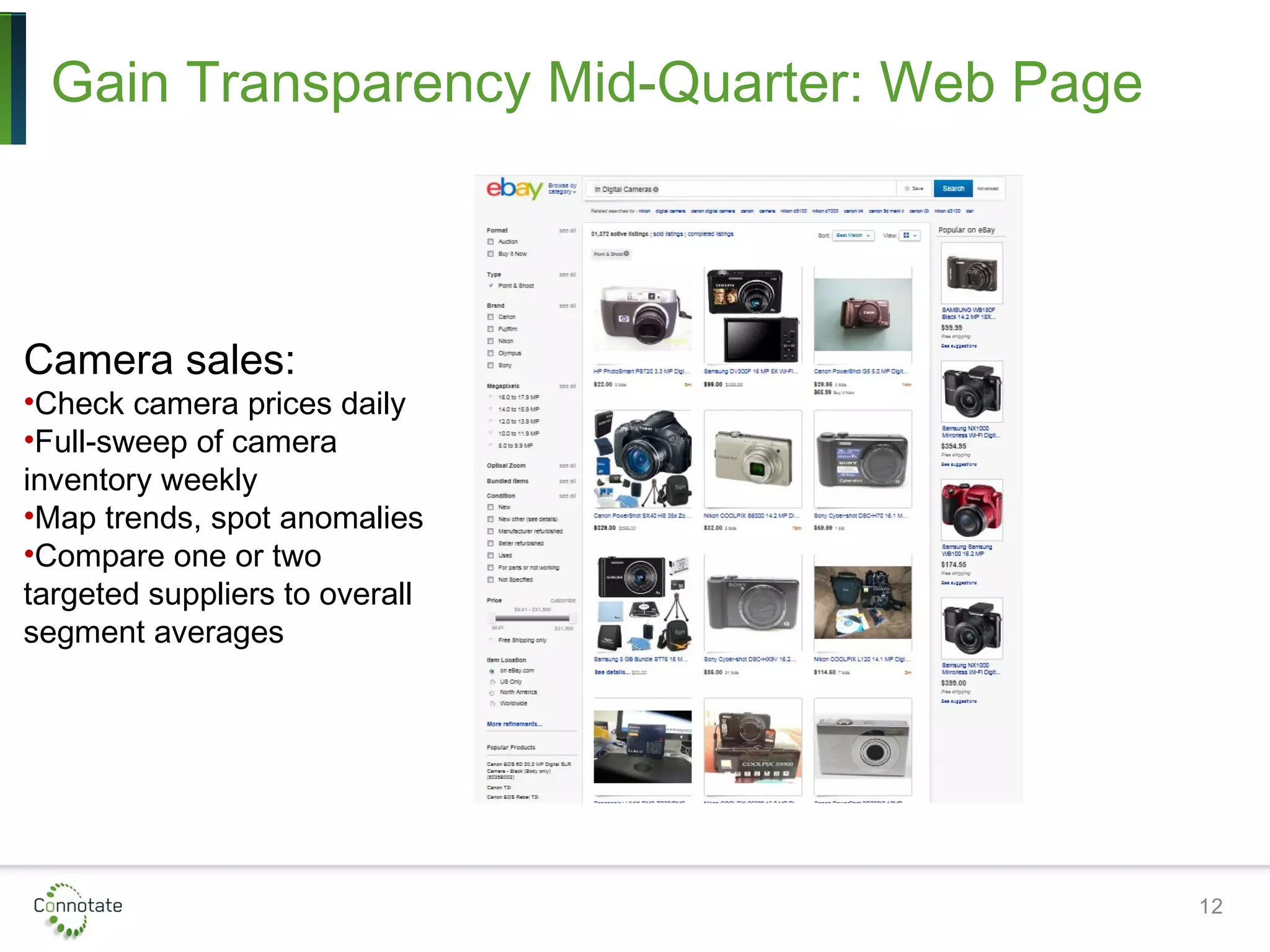

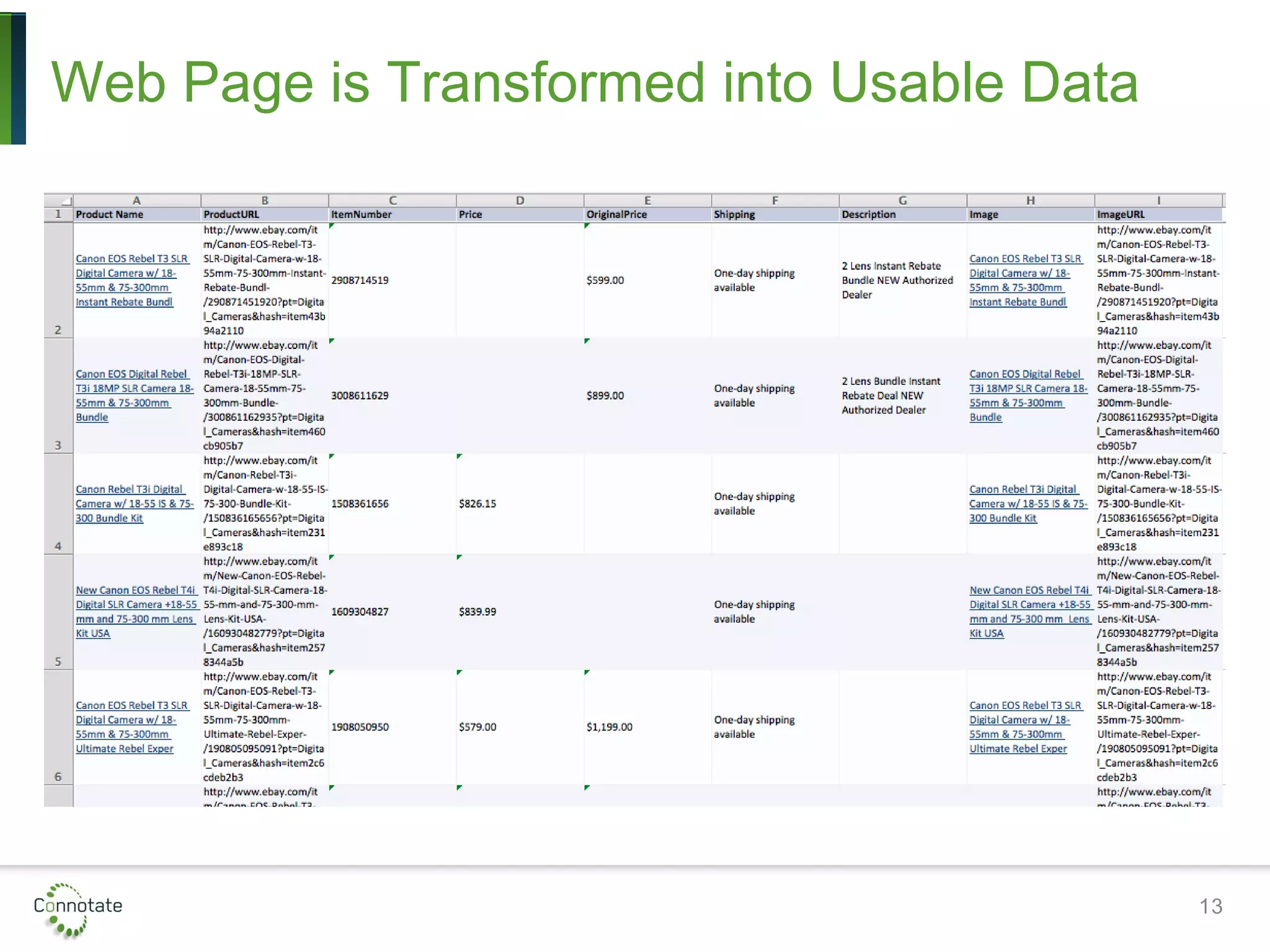

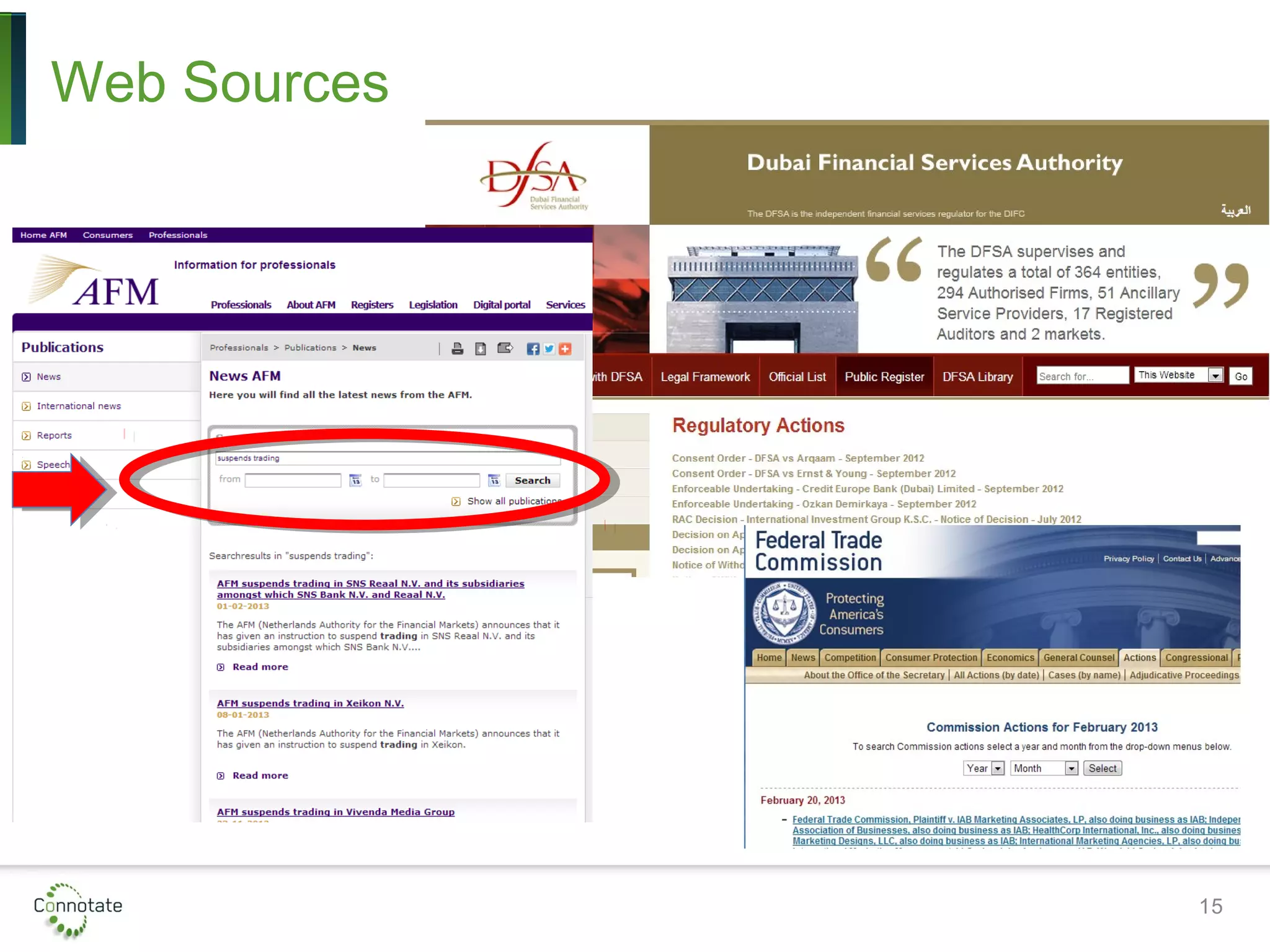

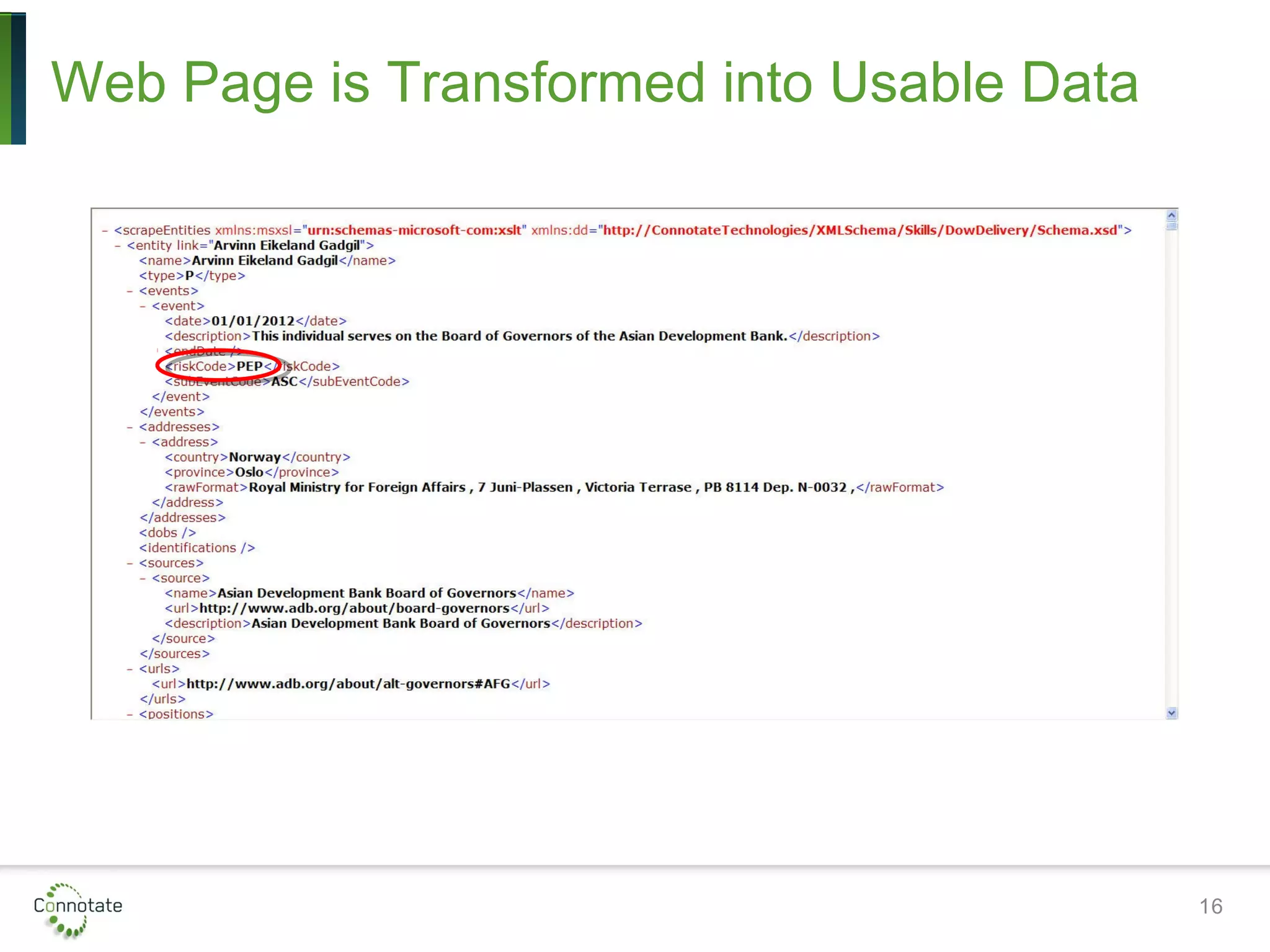

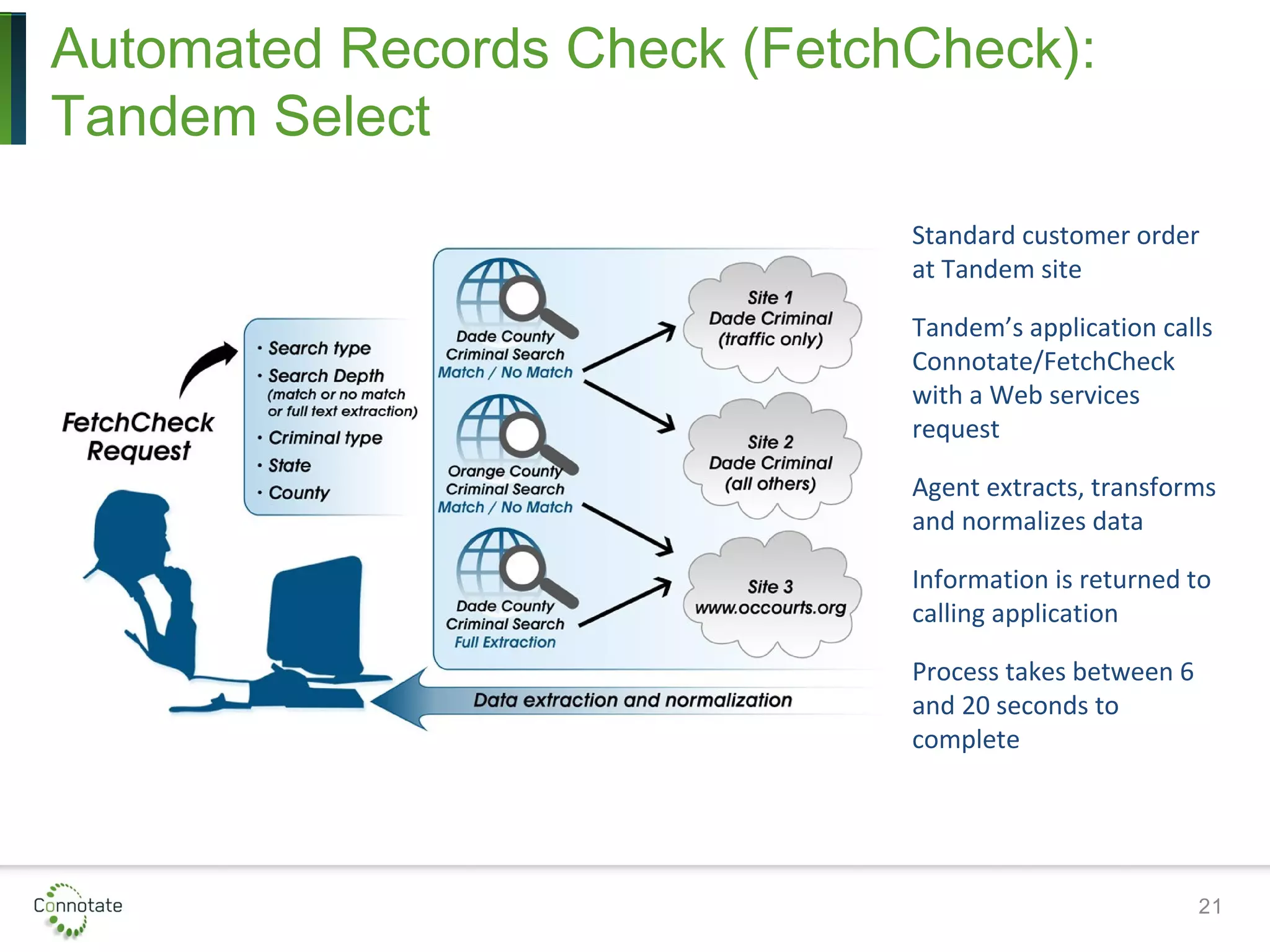

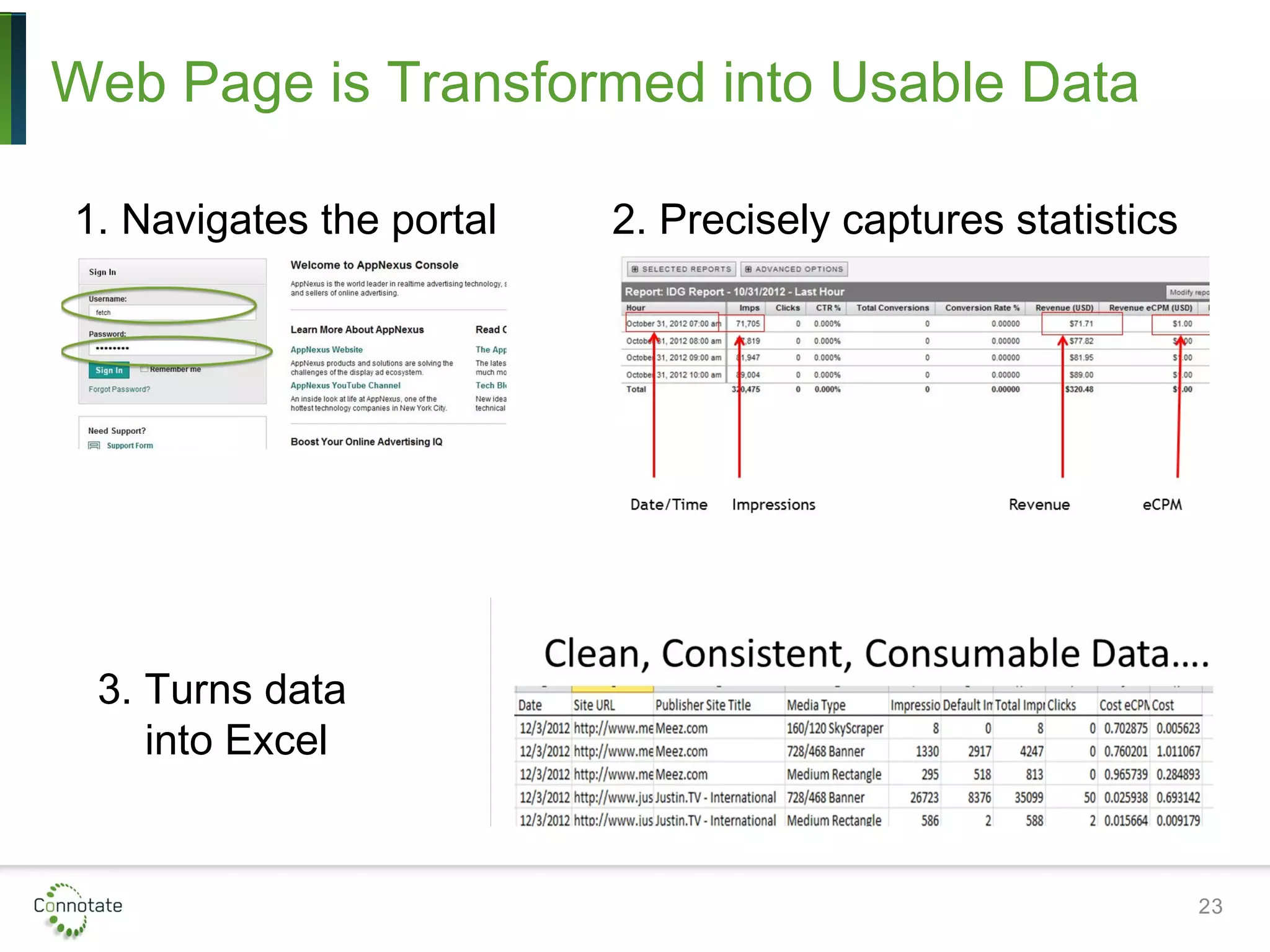

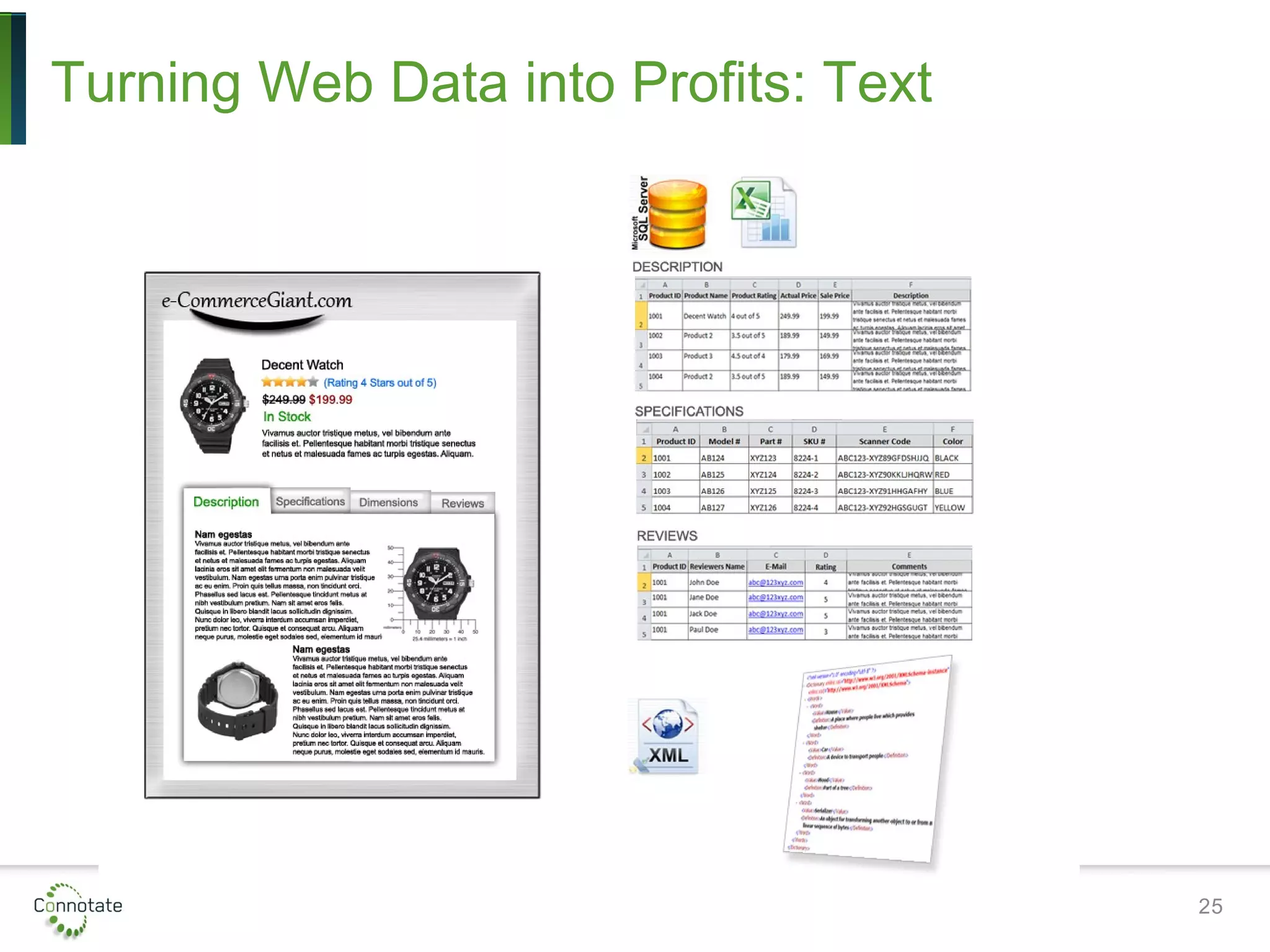

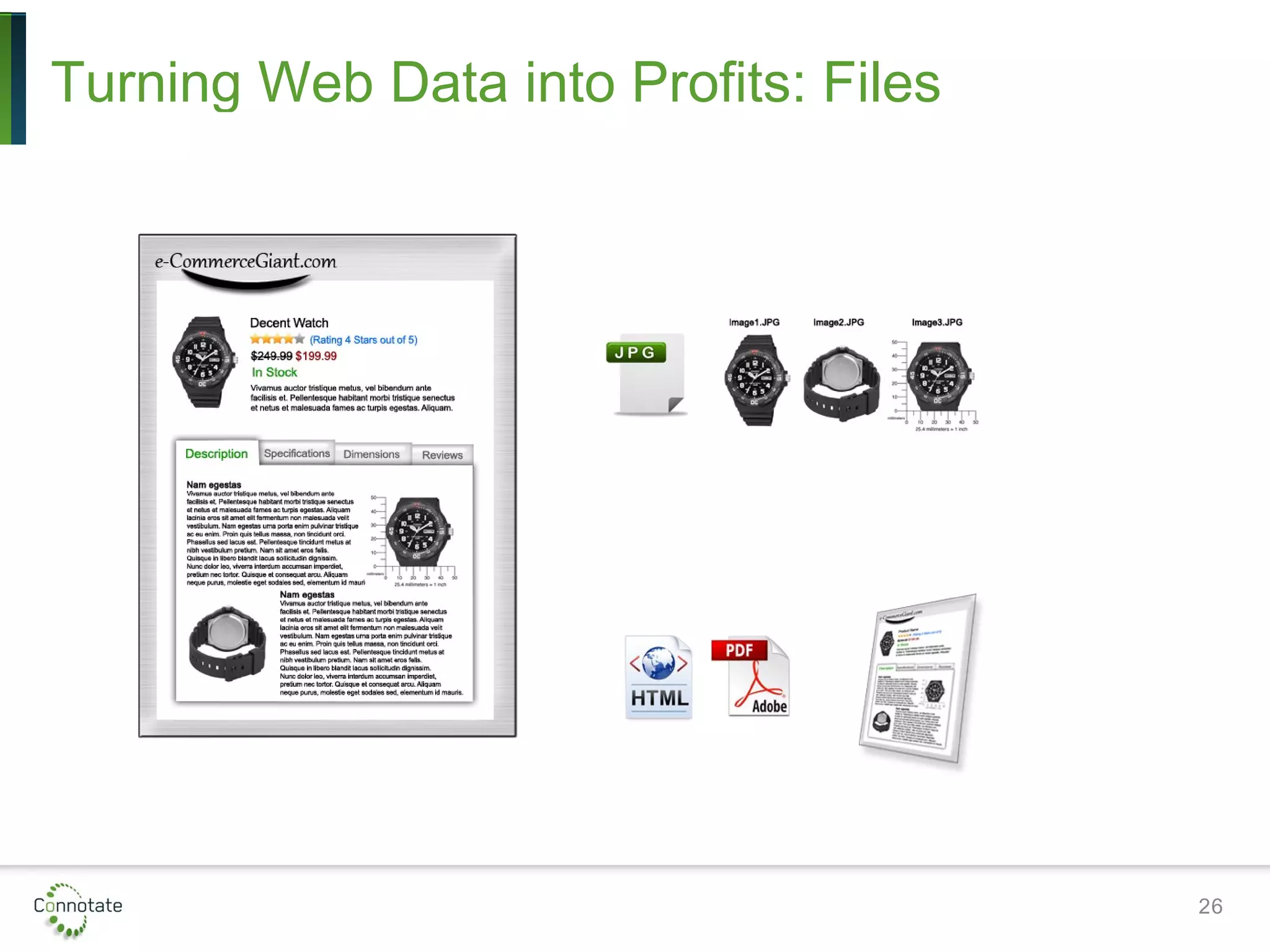

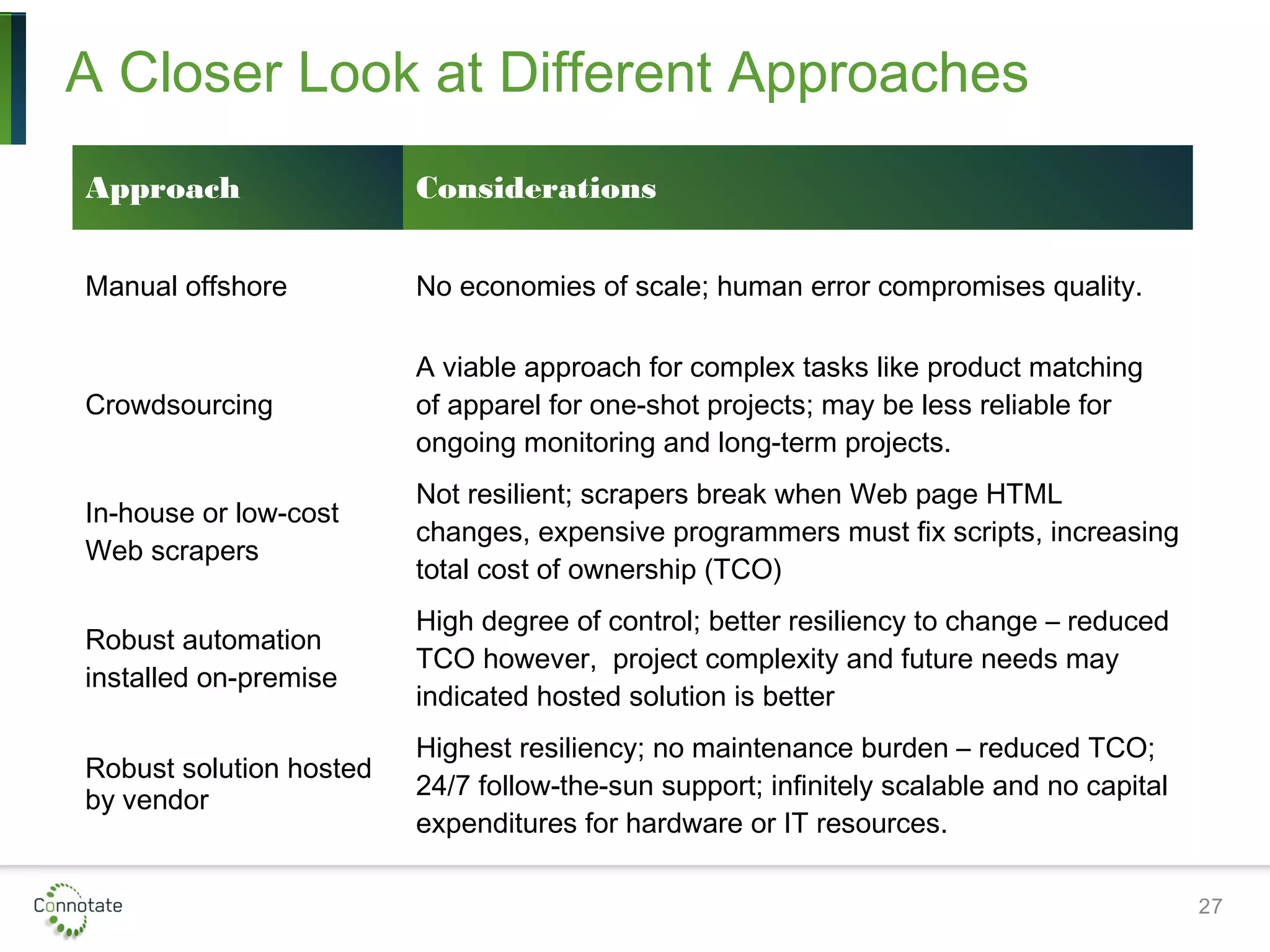

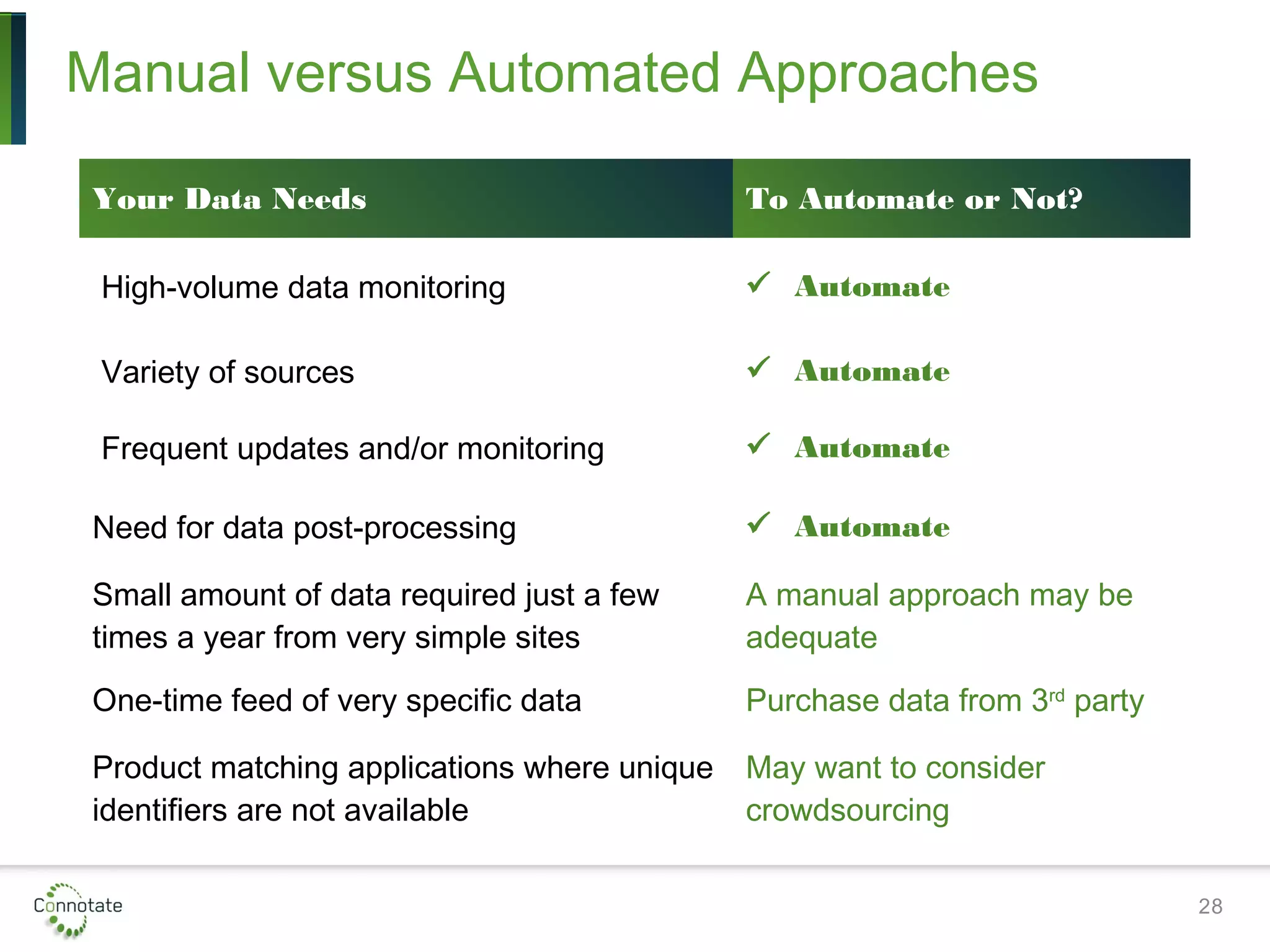

The presentation discusses how web data can drive revenue and reduce operational costs through automation and effective data management. It outlines applications including competitive intelligence, risk assessment, and price optimization, and emphasizes the benefits of moving from manual to automated processes. It also covers key considerations for scoping projects and evaluating data providers to support successful implementation.