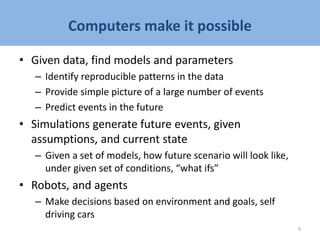

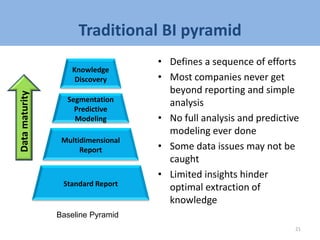

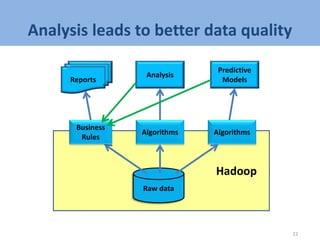

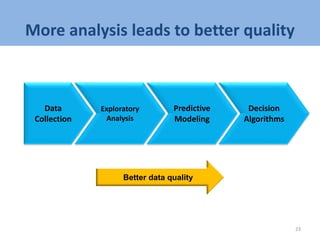

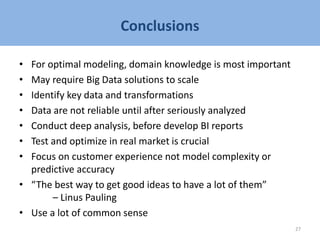

- The document discusses big data analytics and business intelligence processes for analyzing large datasets.

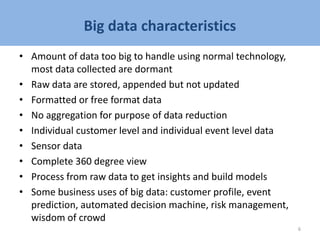

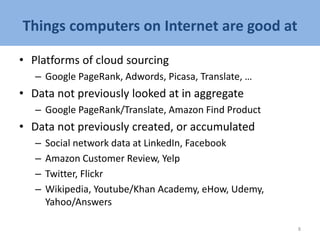

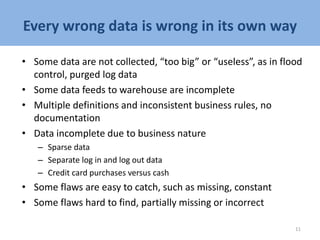

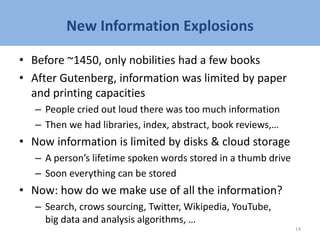

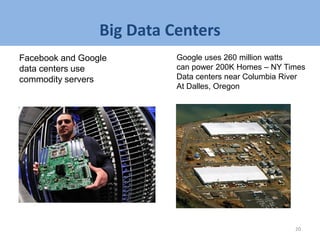

- It provides an overview of the characteristics and challenges of big data, and of how cloud computing makes it possible to analyze massive amounts of data.

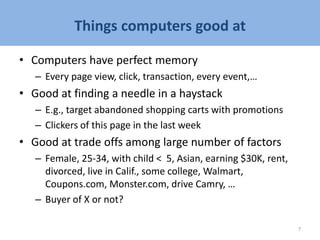

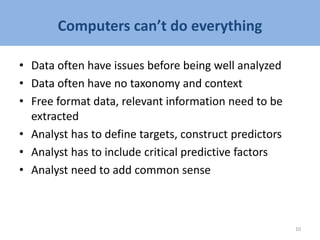

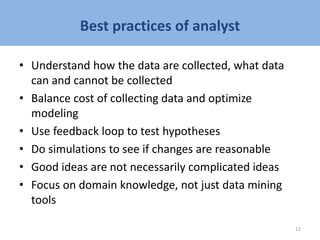

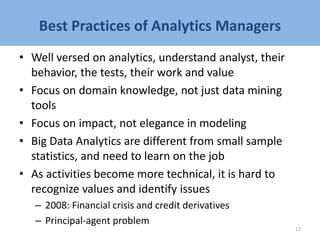

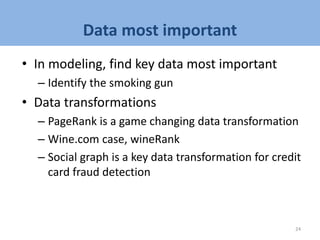

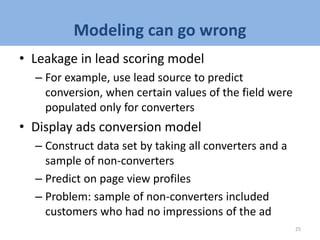

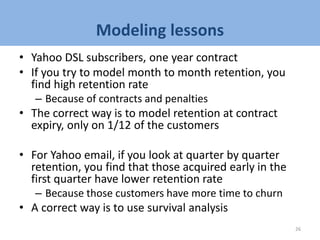

- It describes example applications of big data analytics, including customer profiling, predictive modeling, and the optimization of online marketing campaigns. It also discusses lessons for effective modeling, including the importance of domain expertise and of identifying the key data transformations and variables.