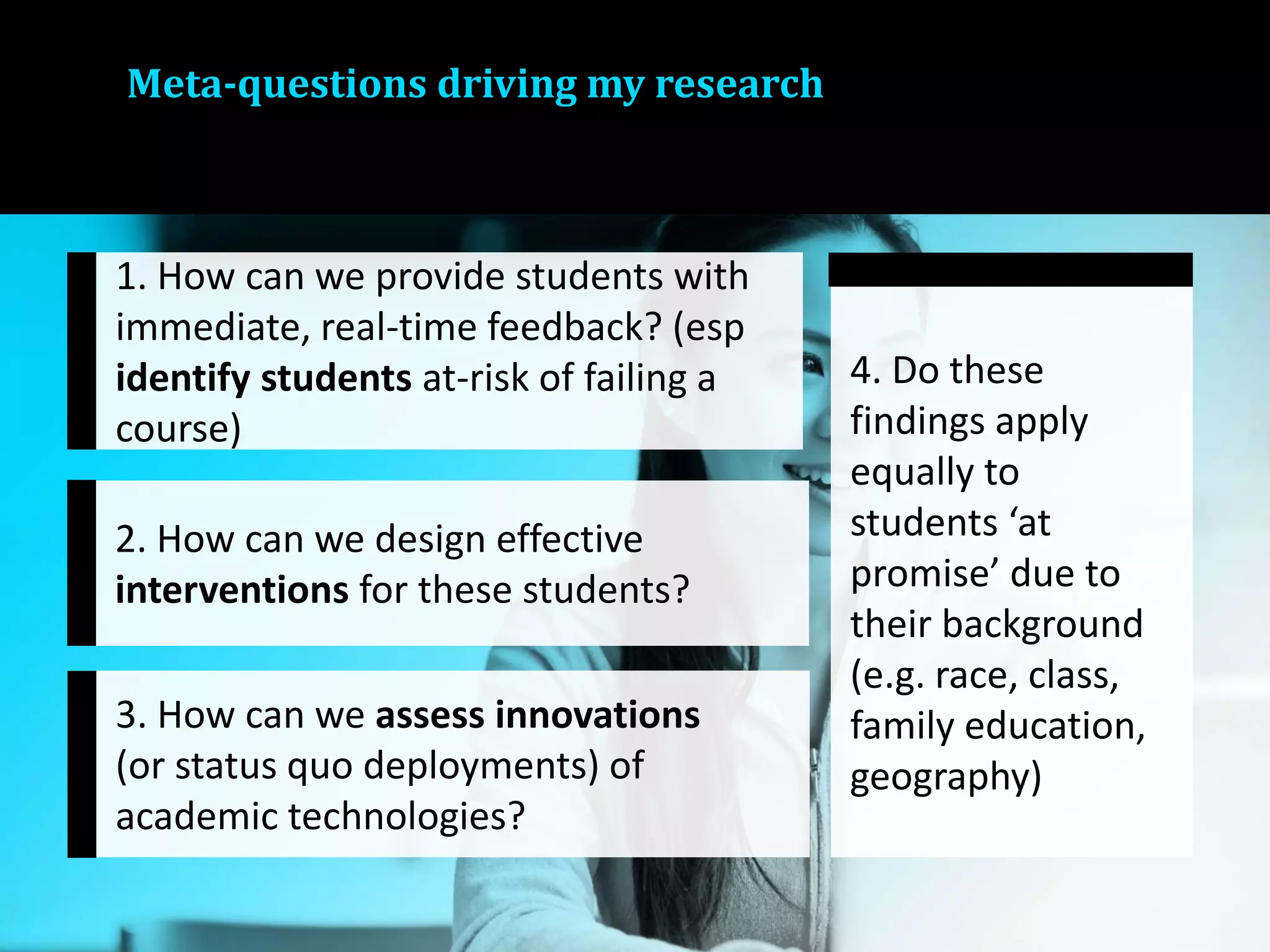

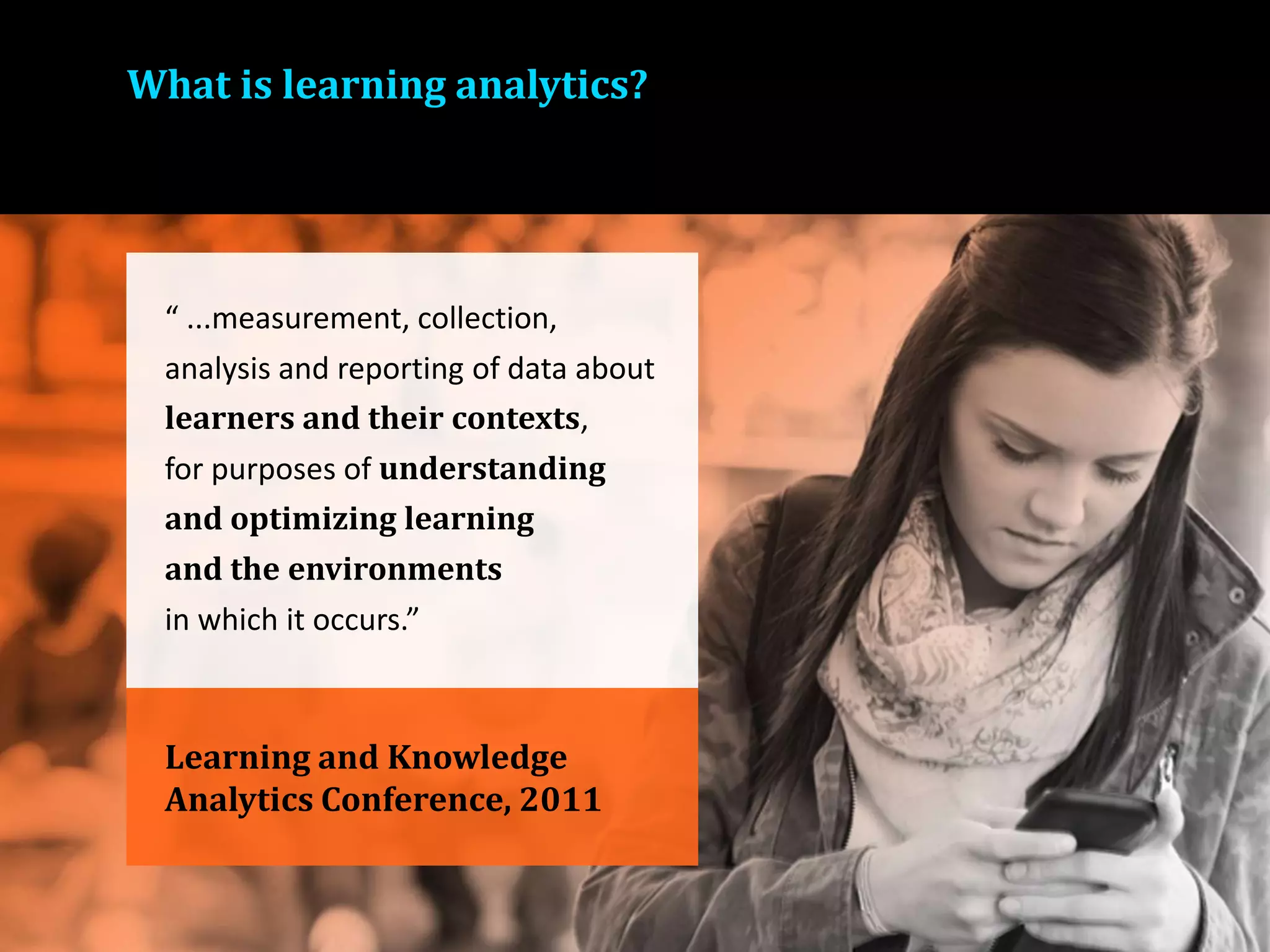

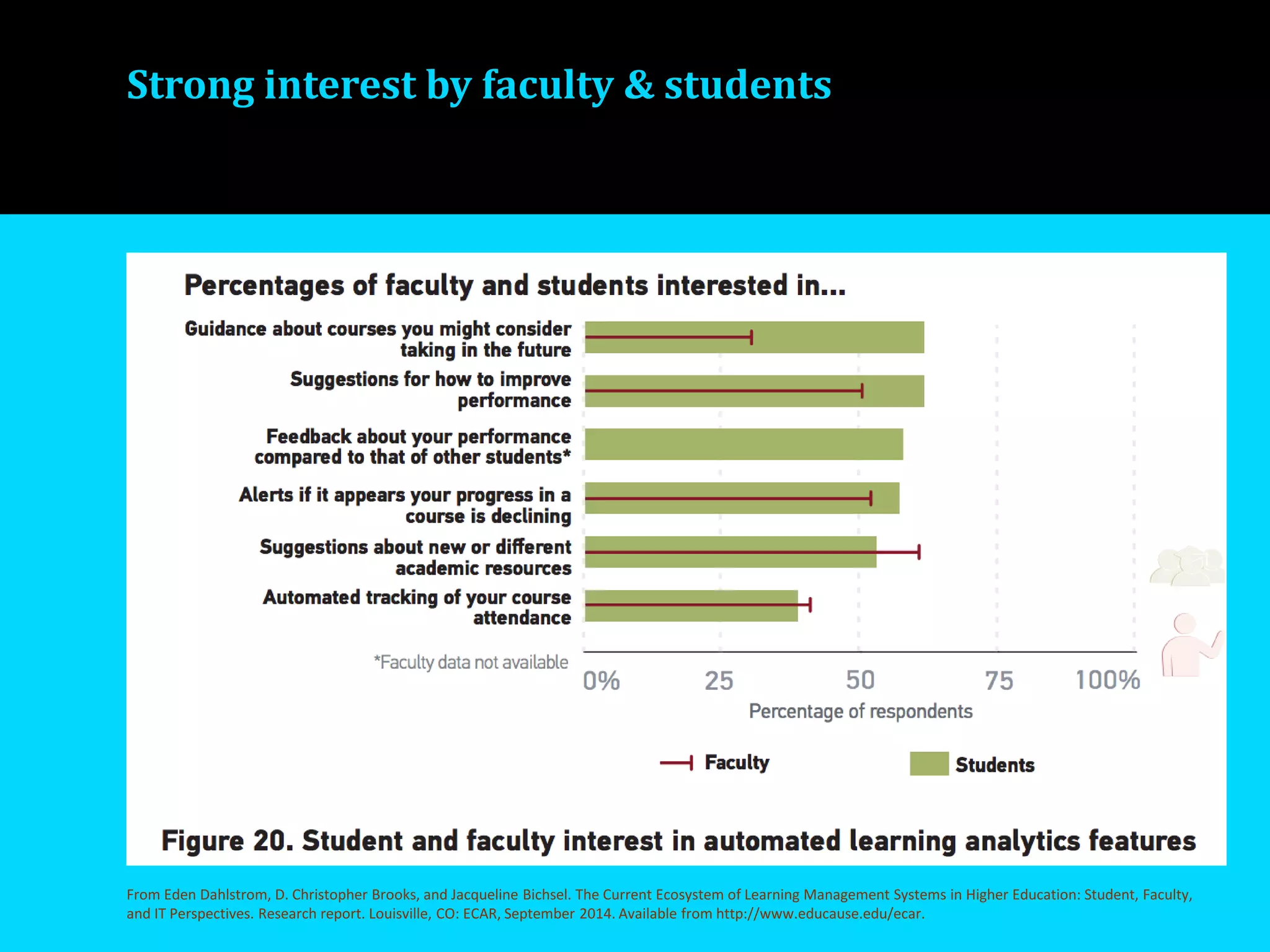

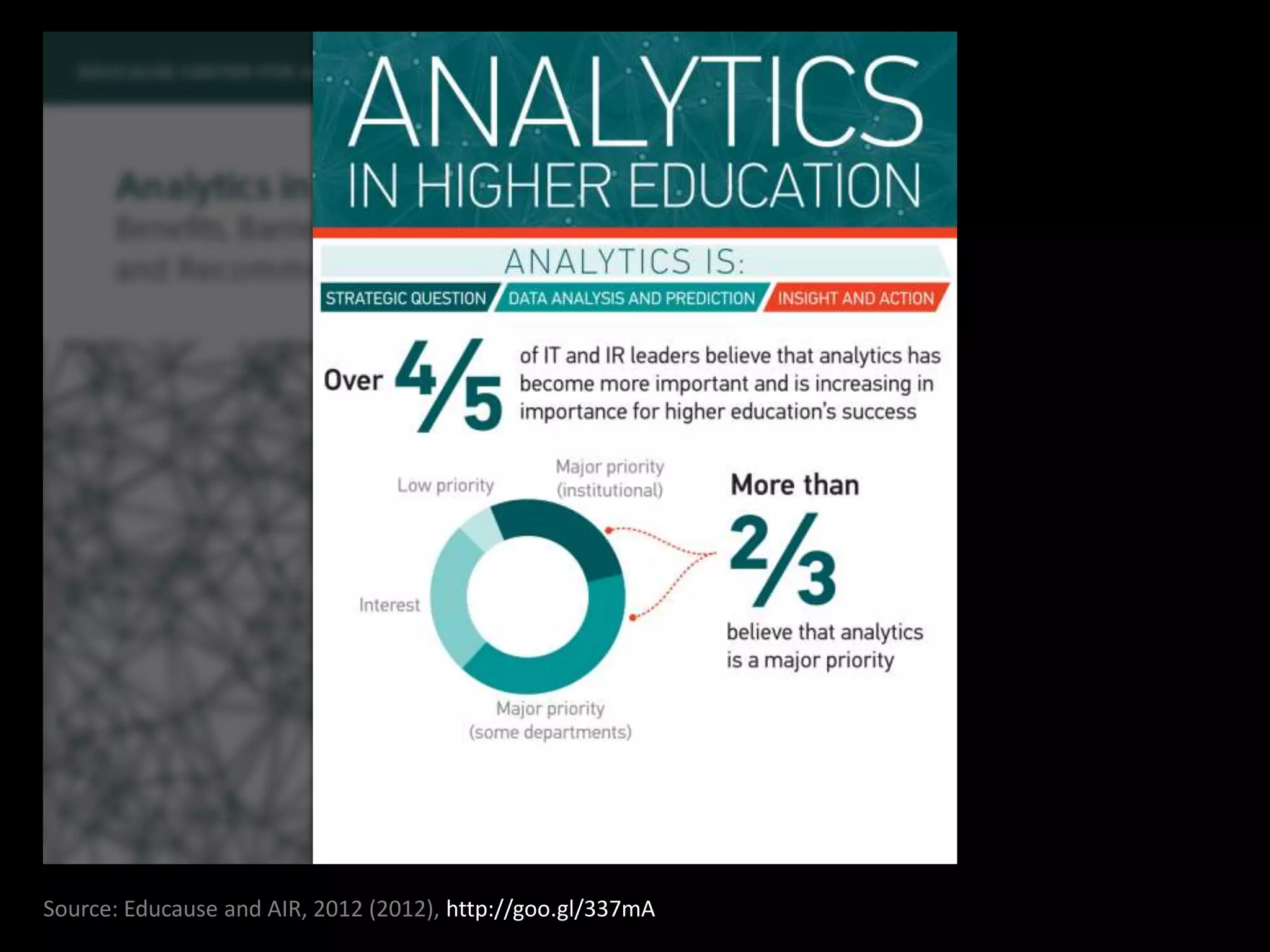

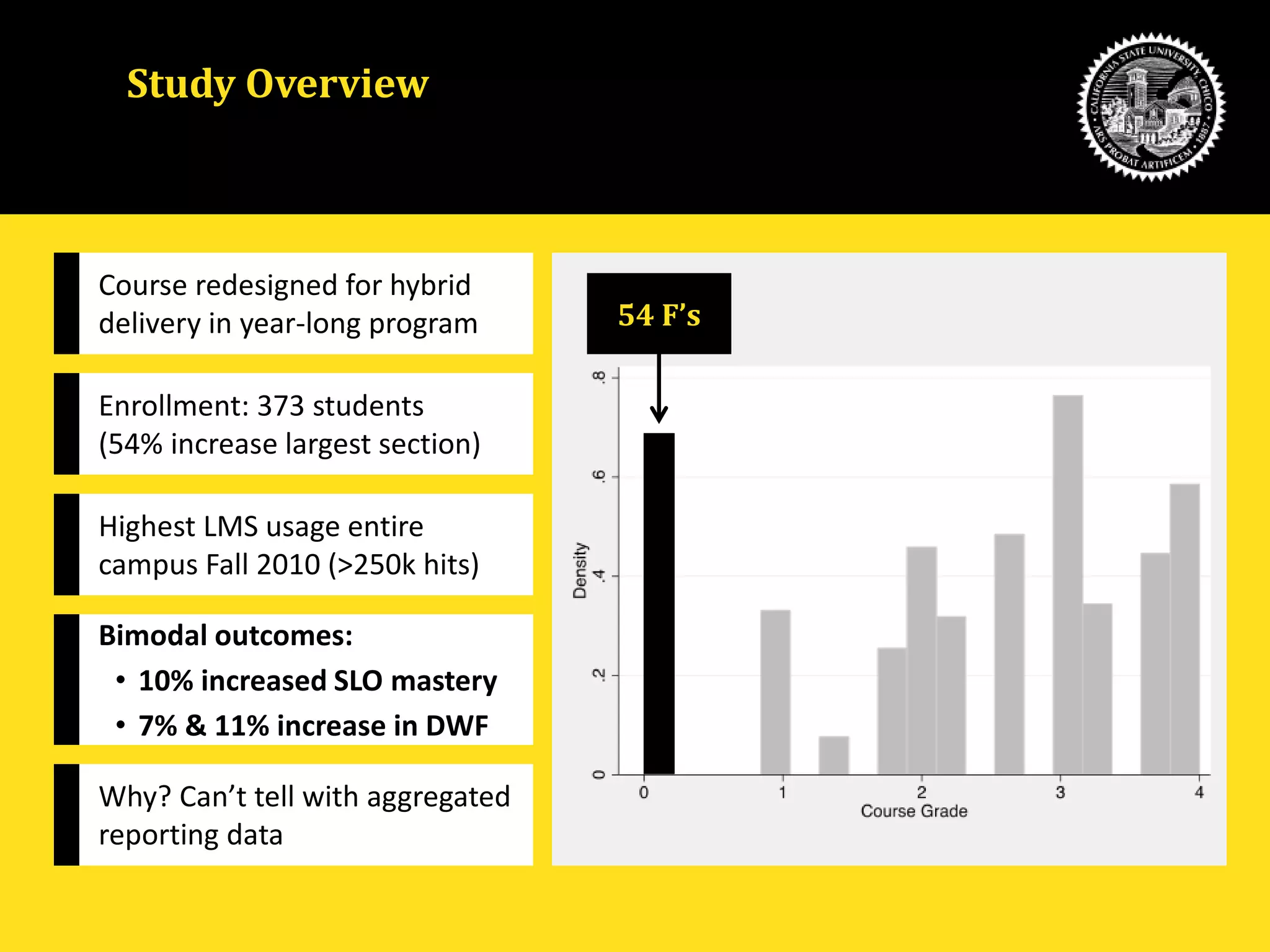

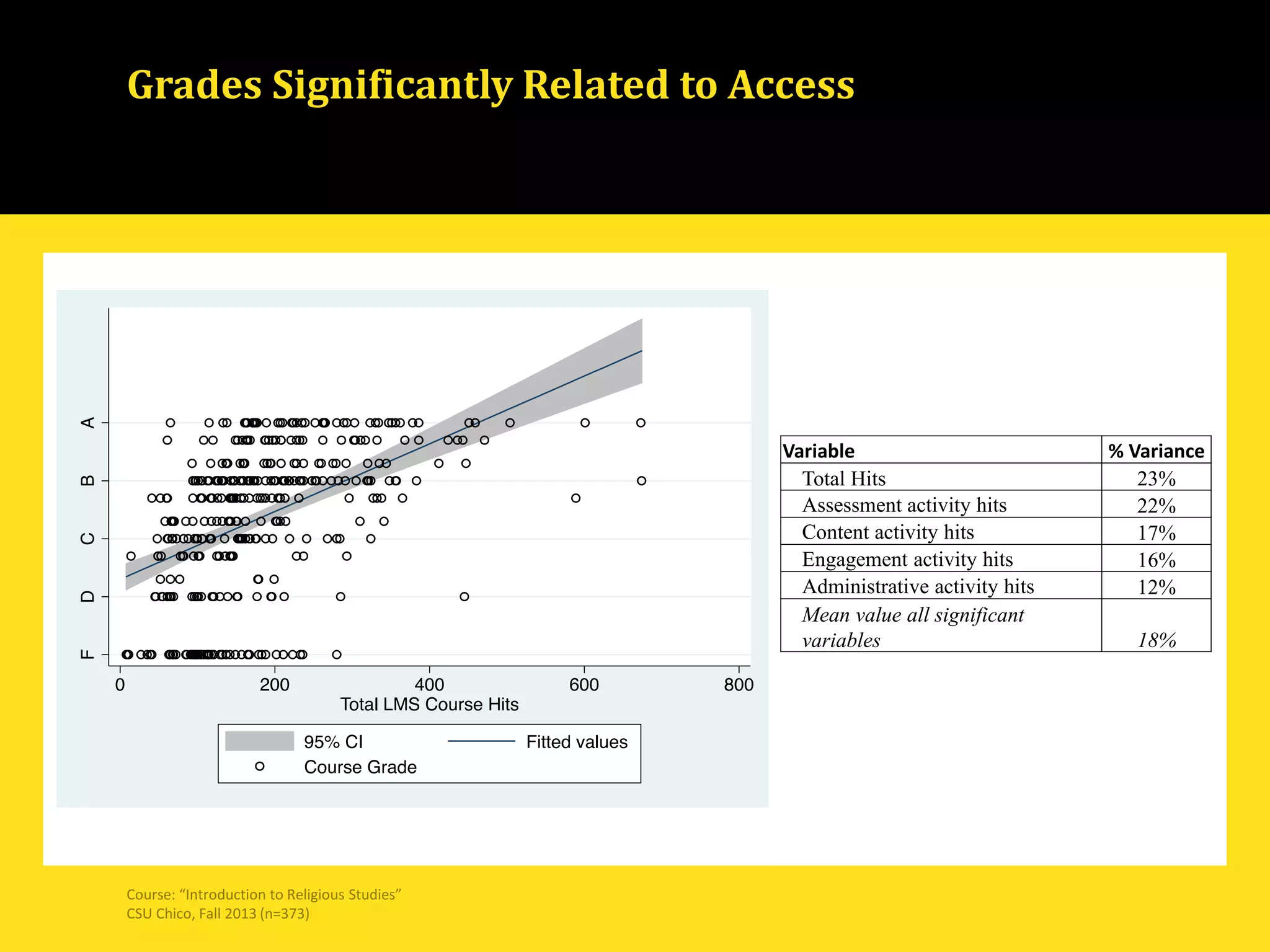

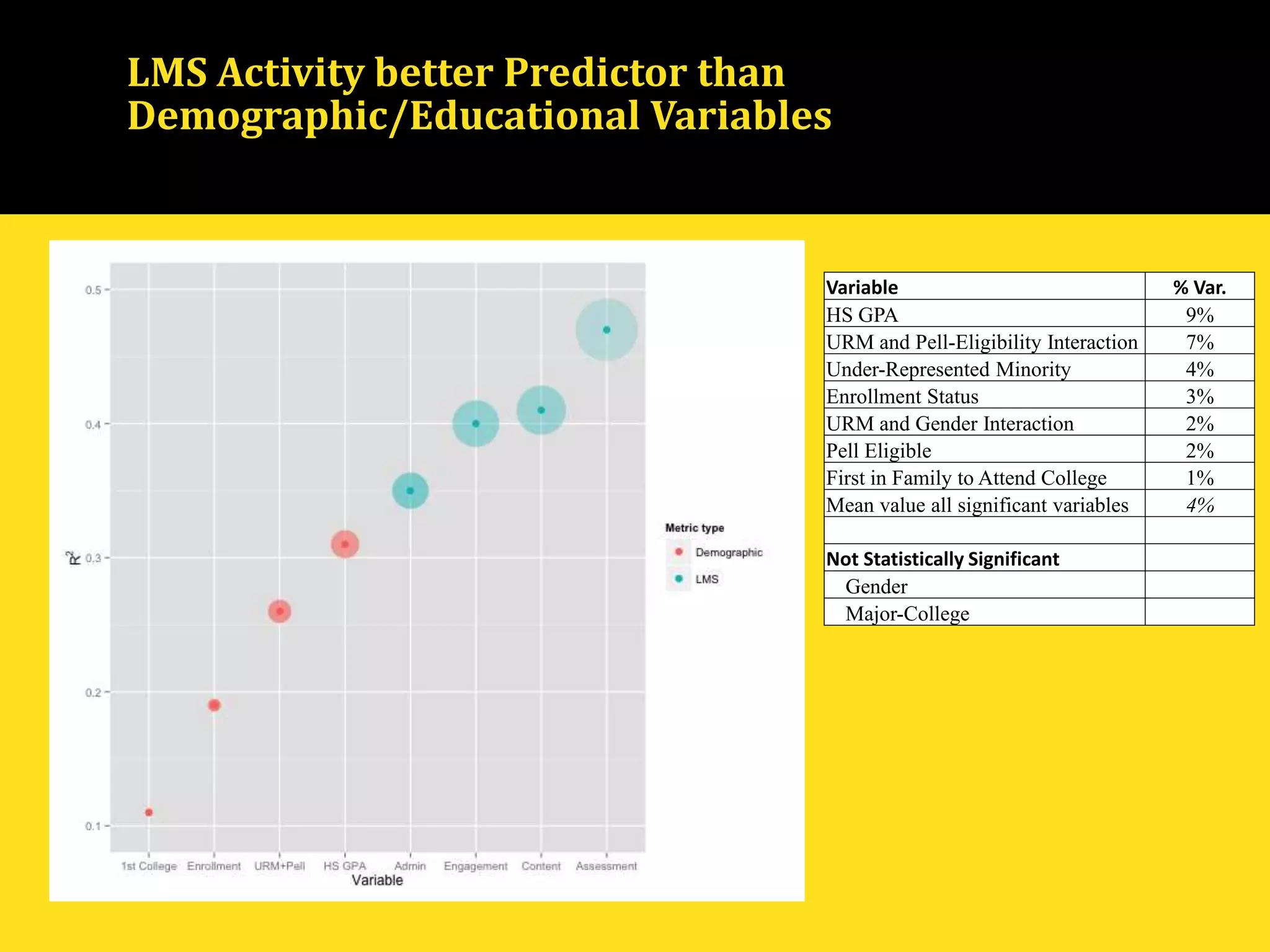

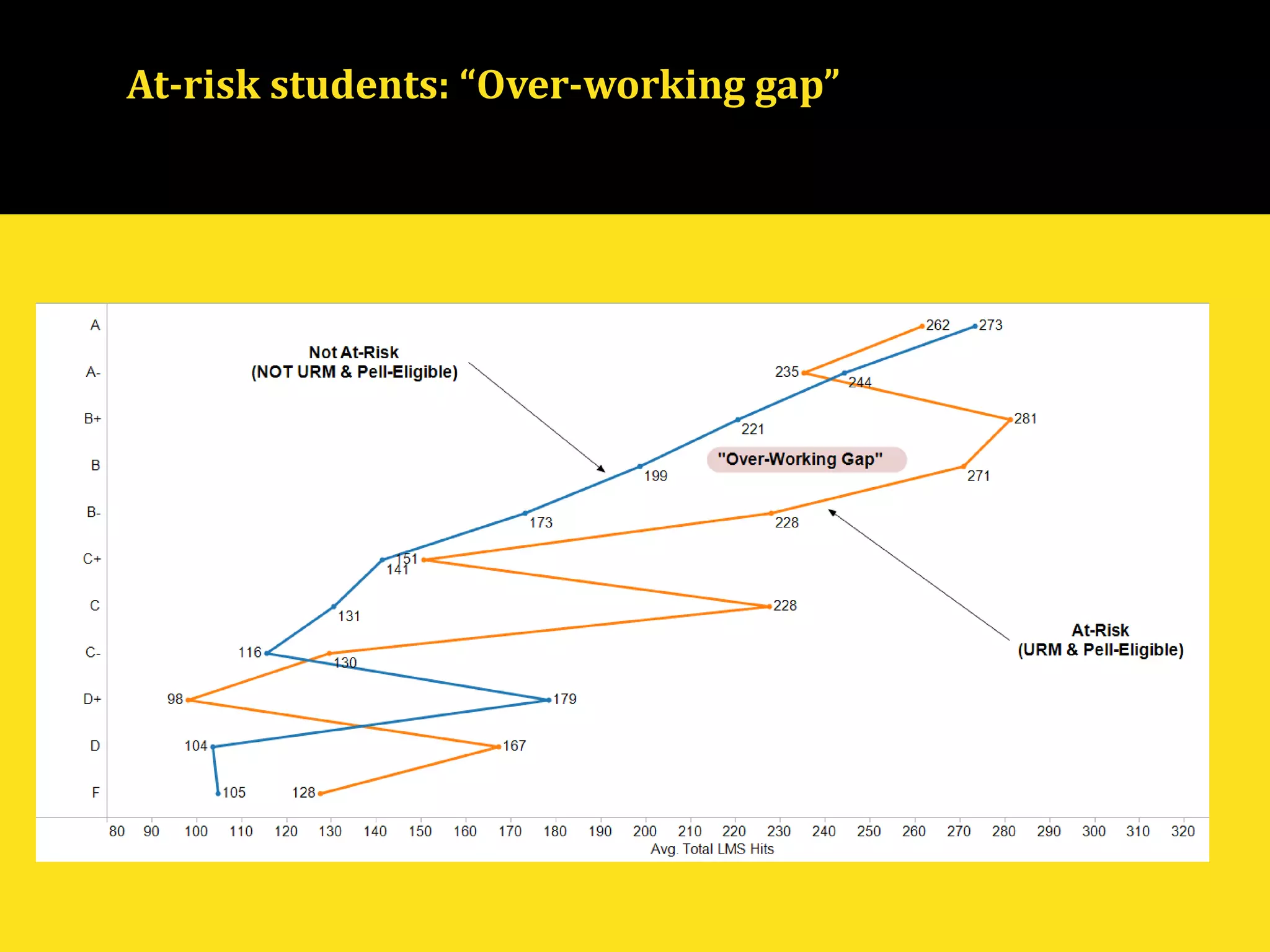

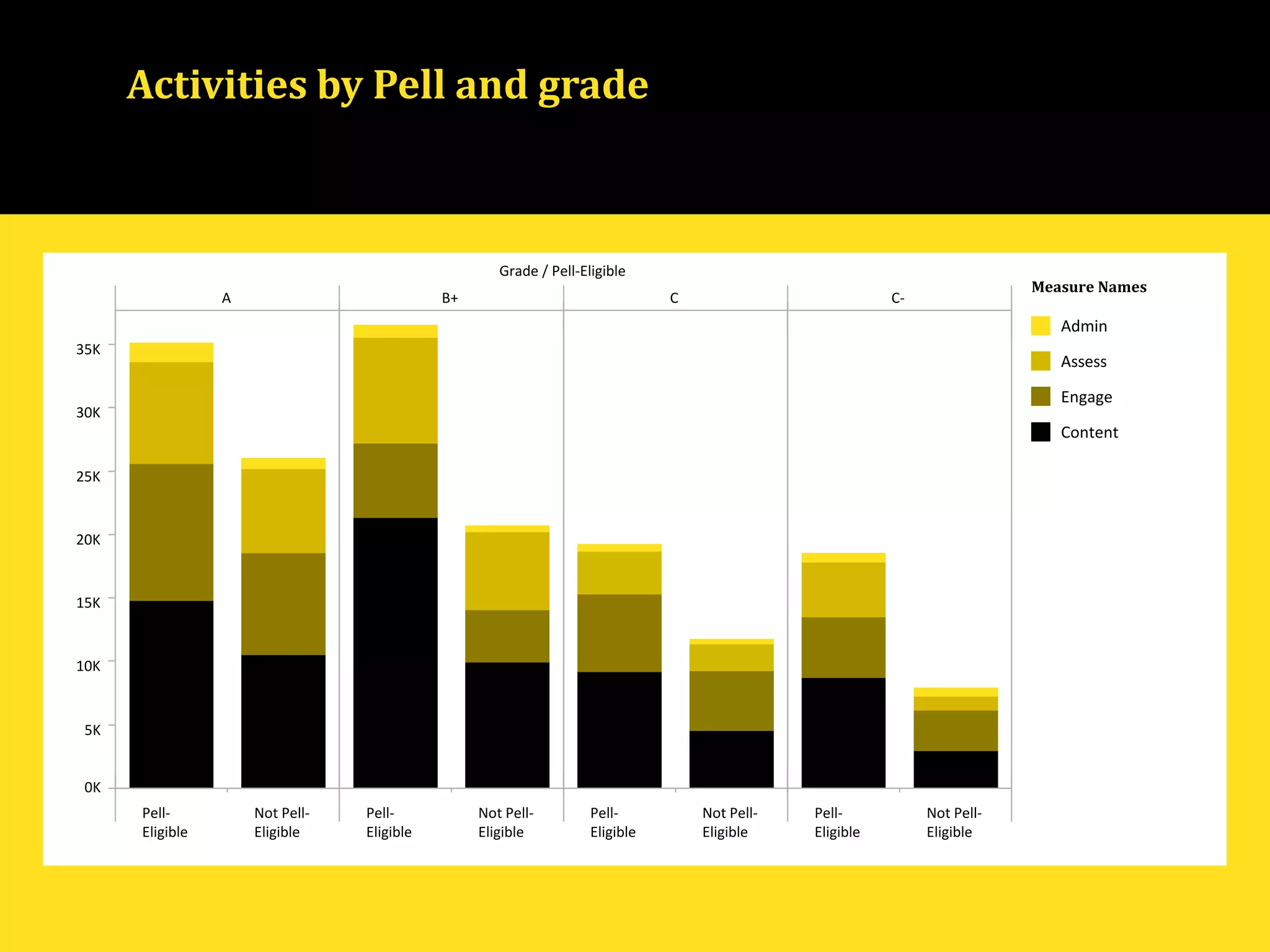

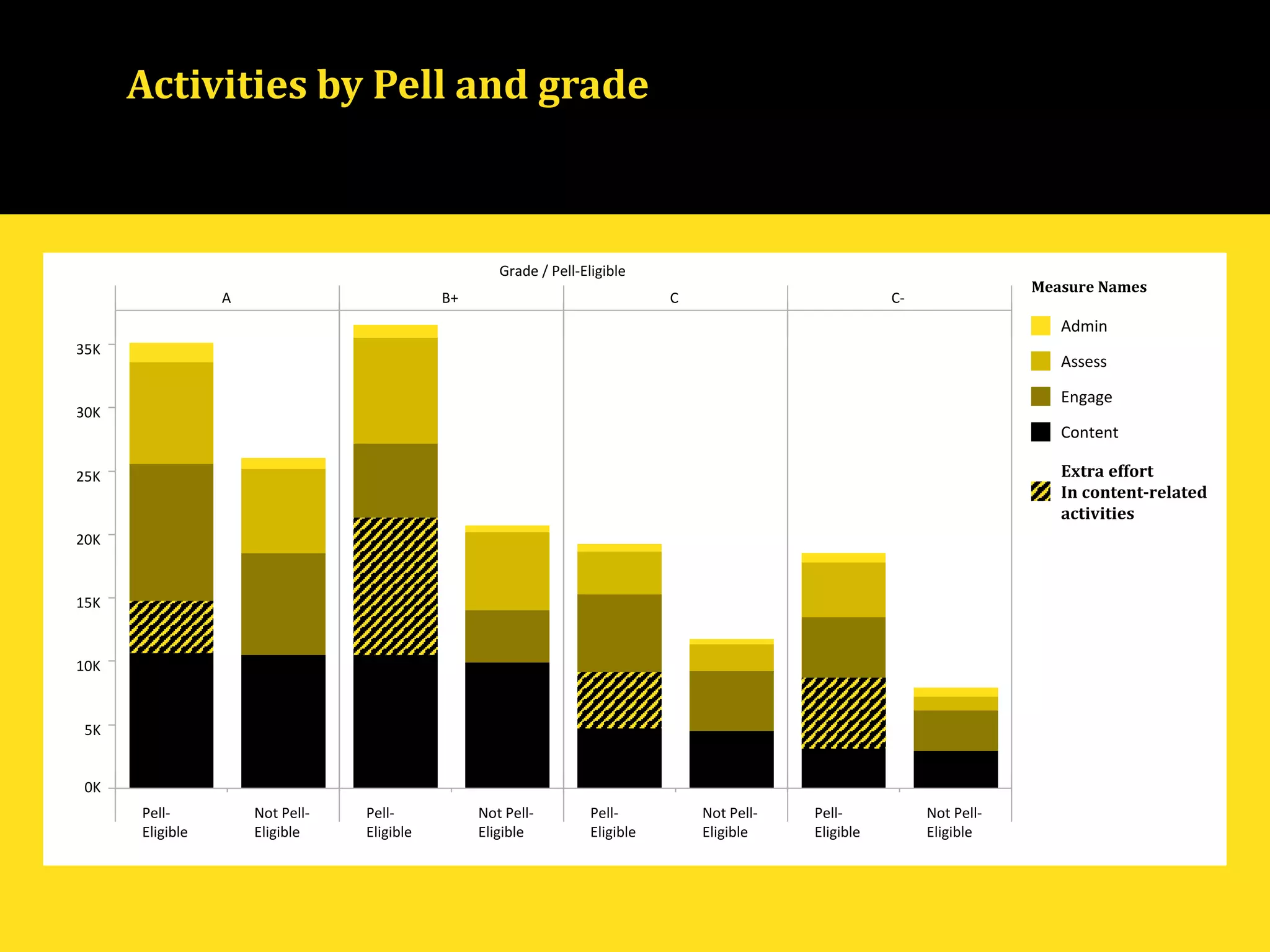

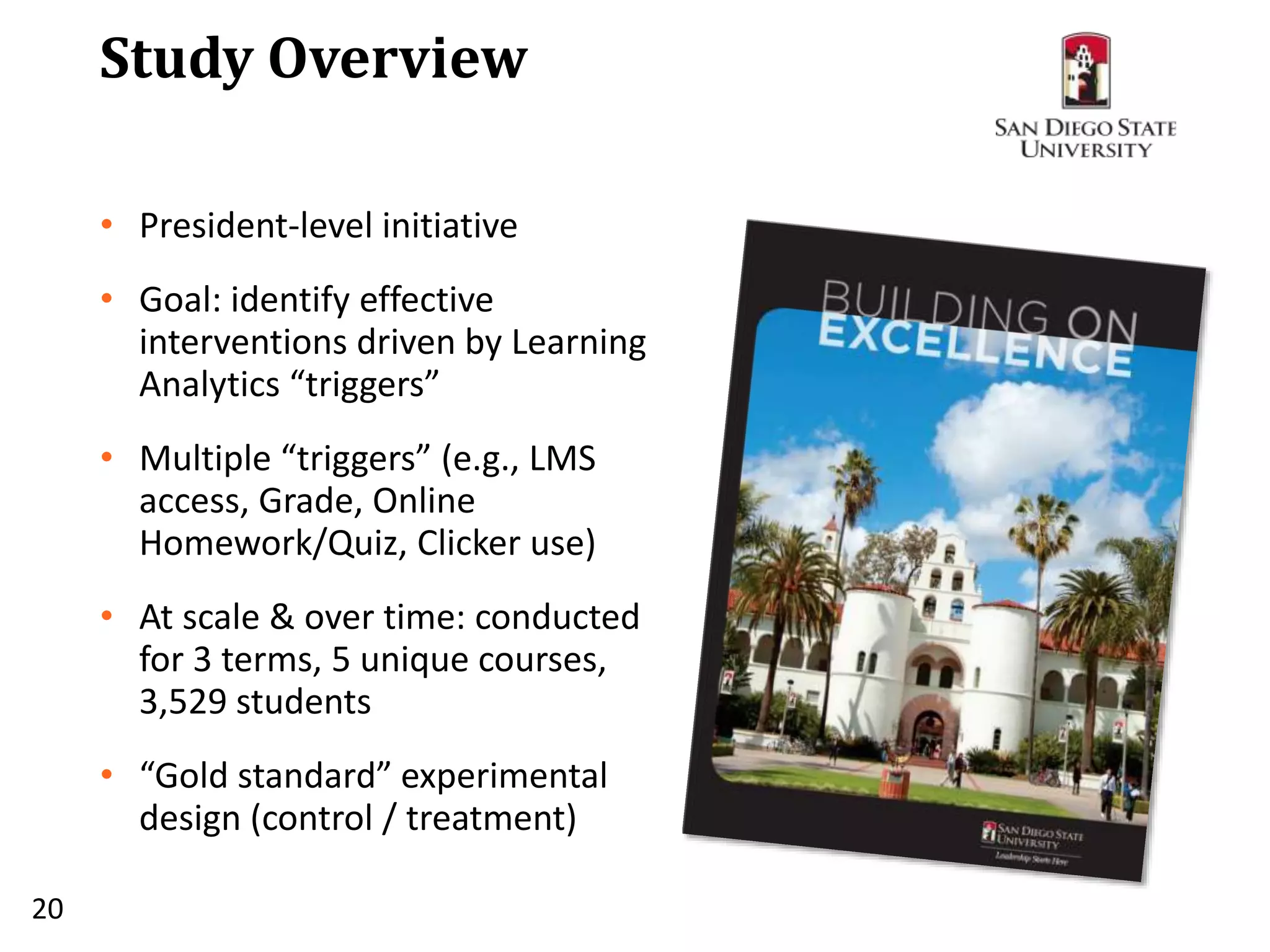

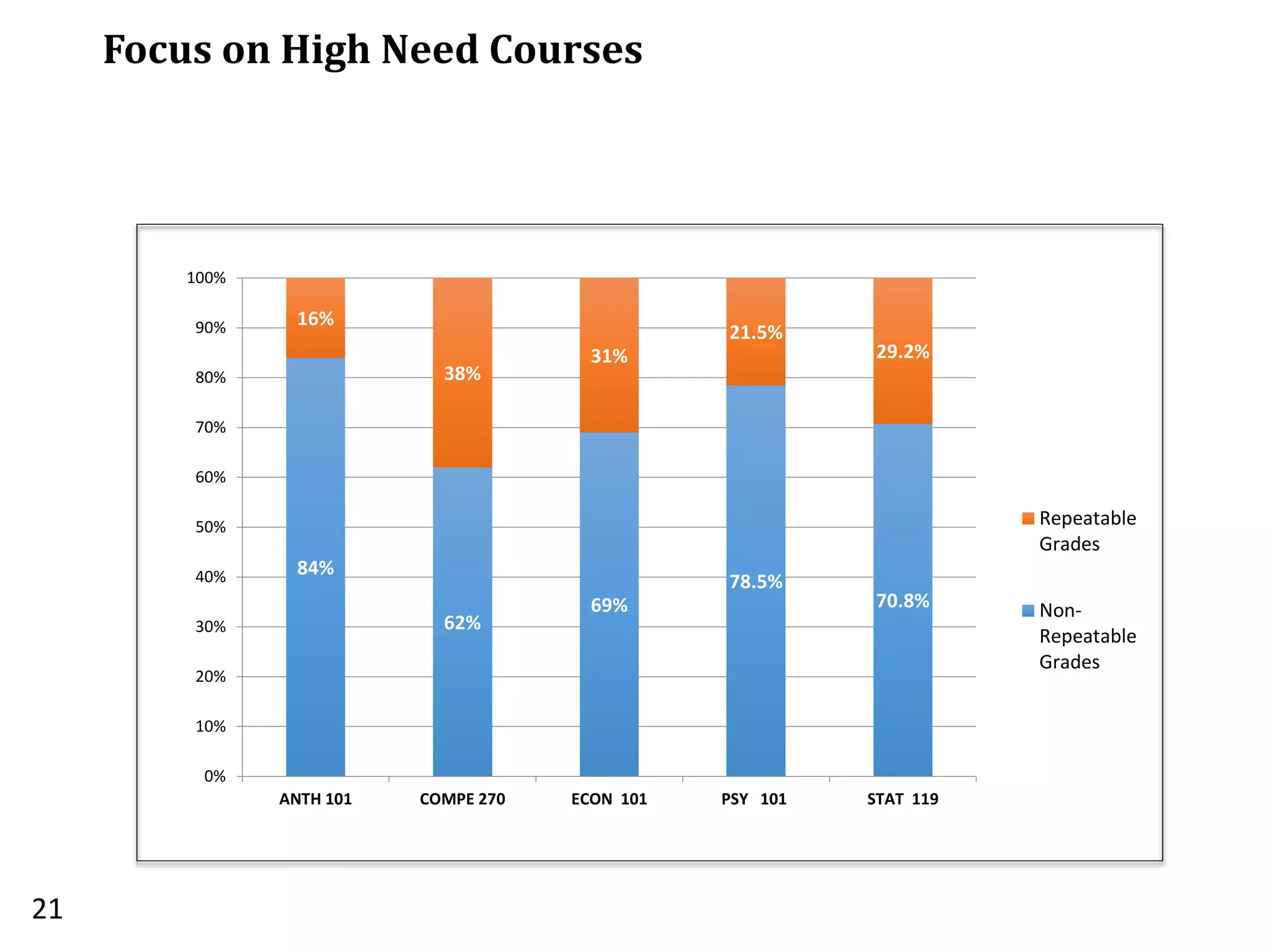

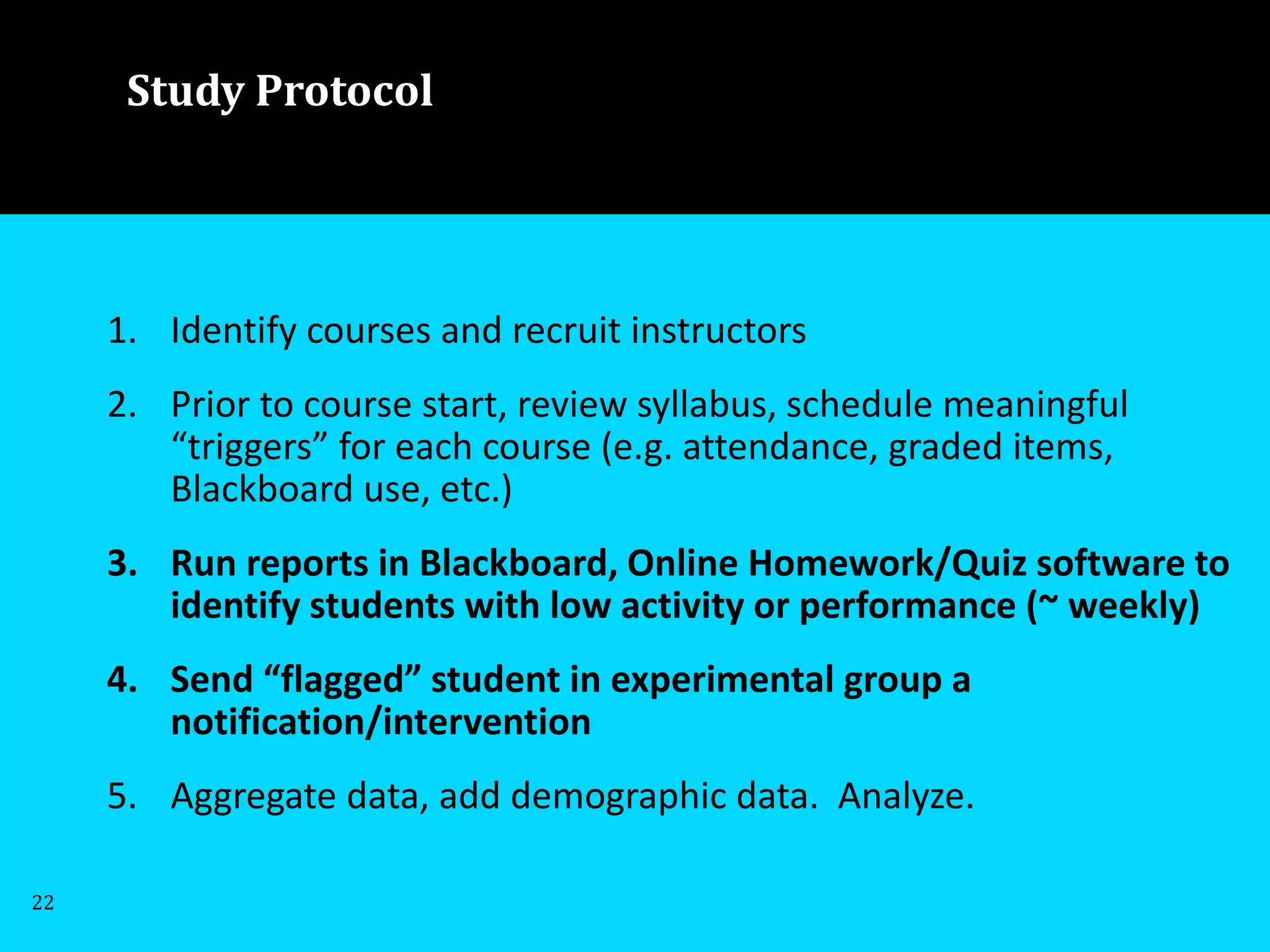

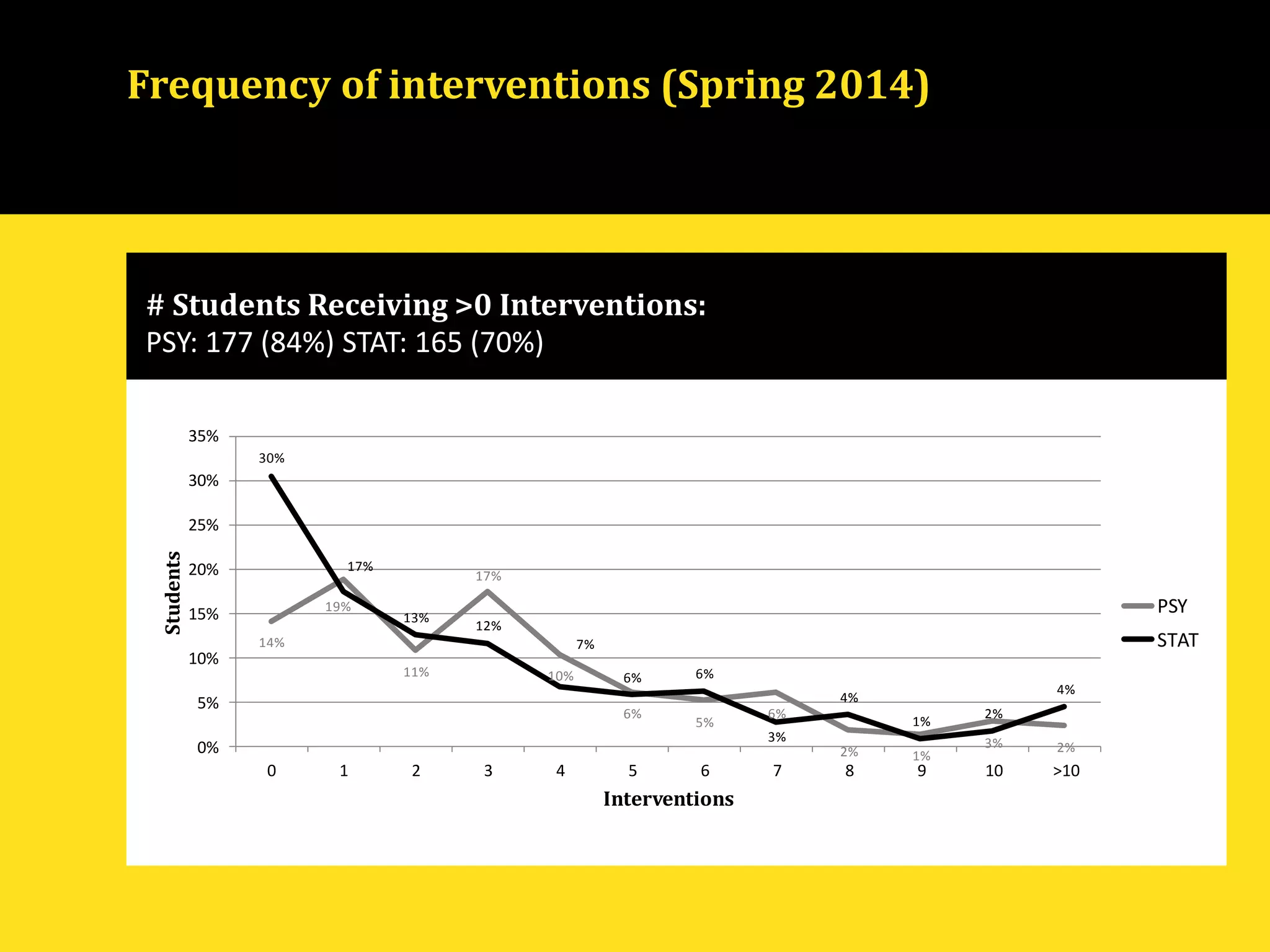

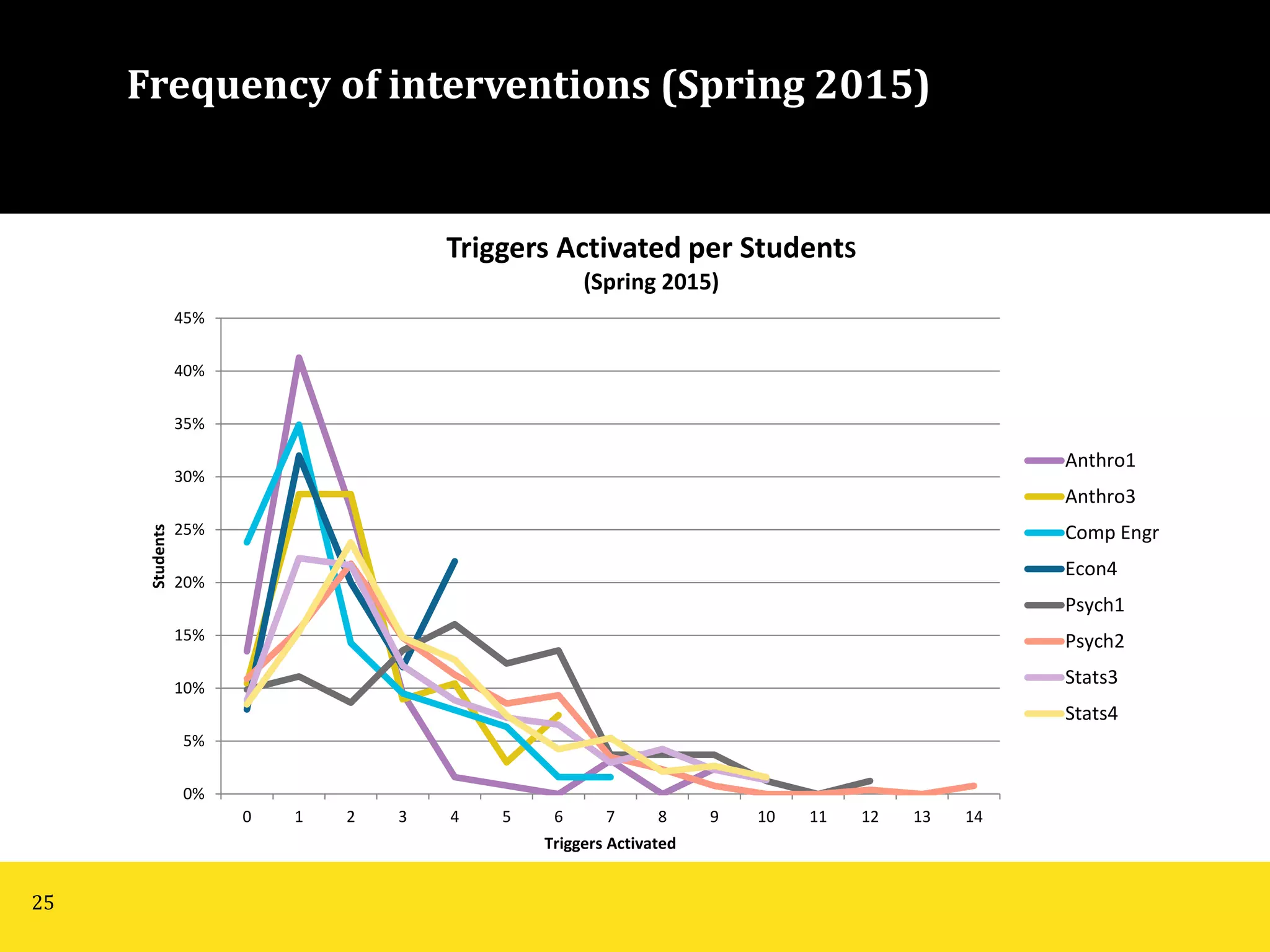

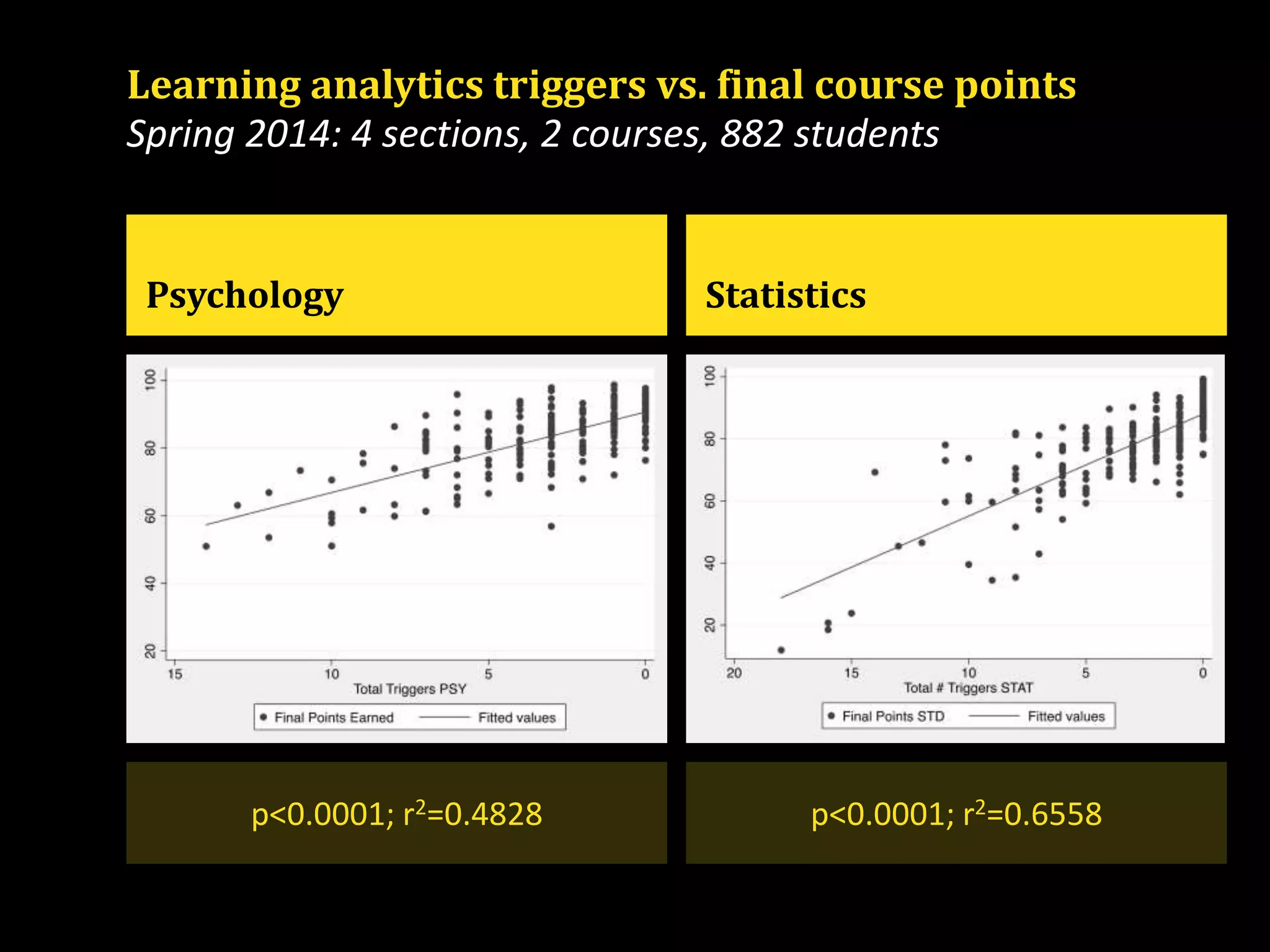

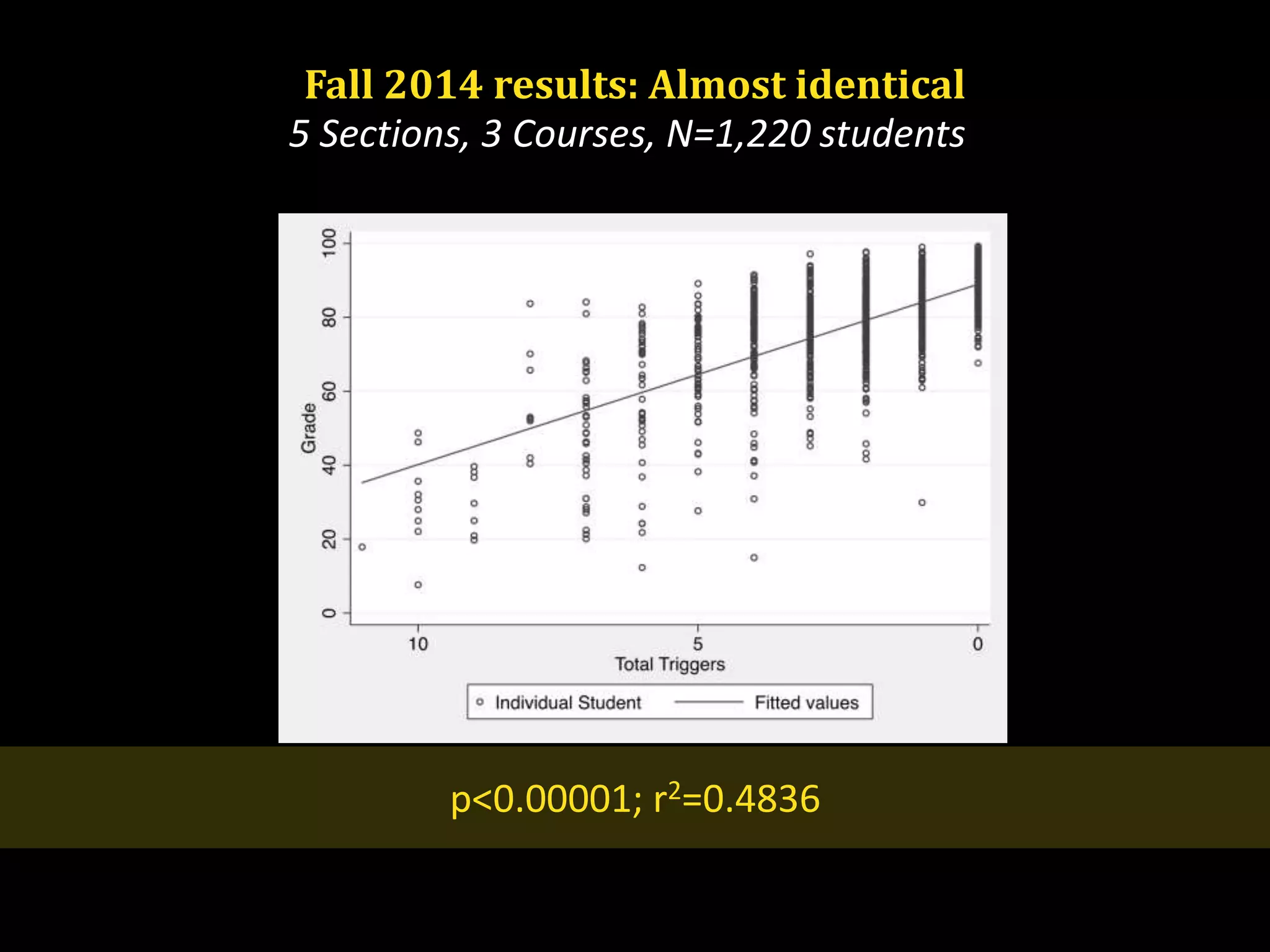

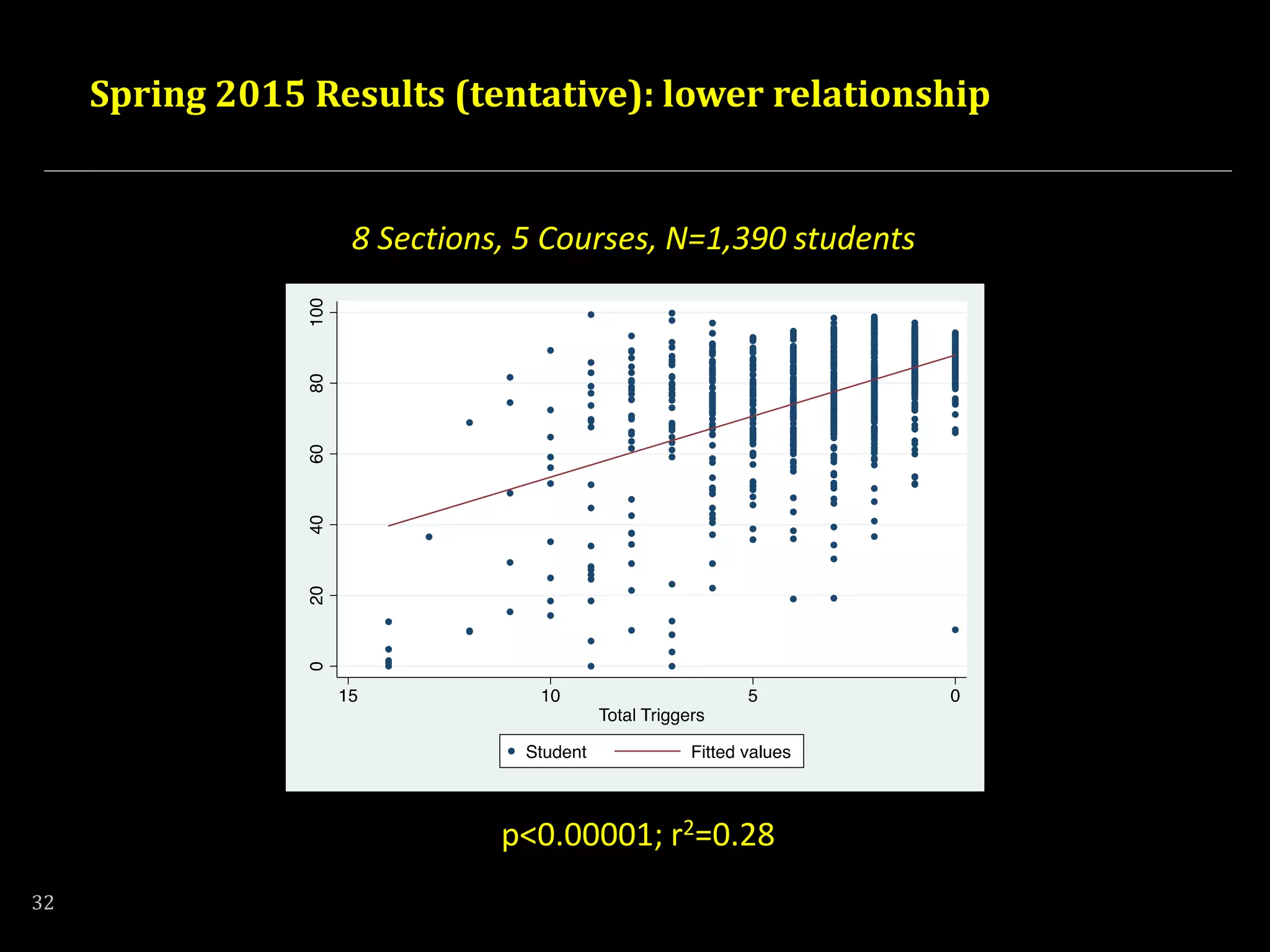

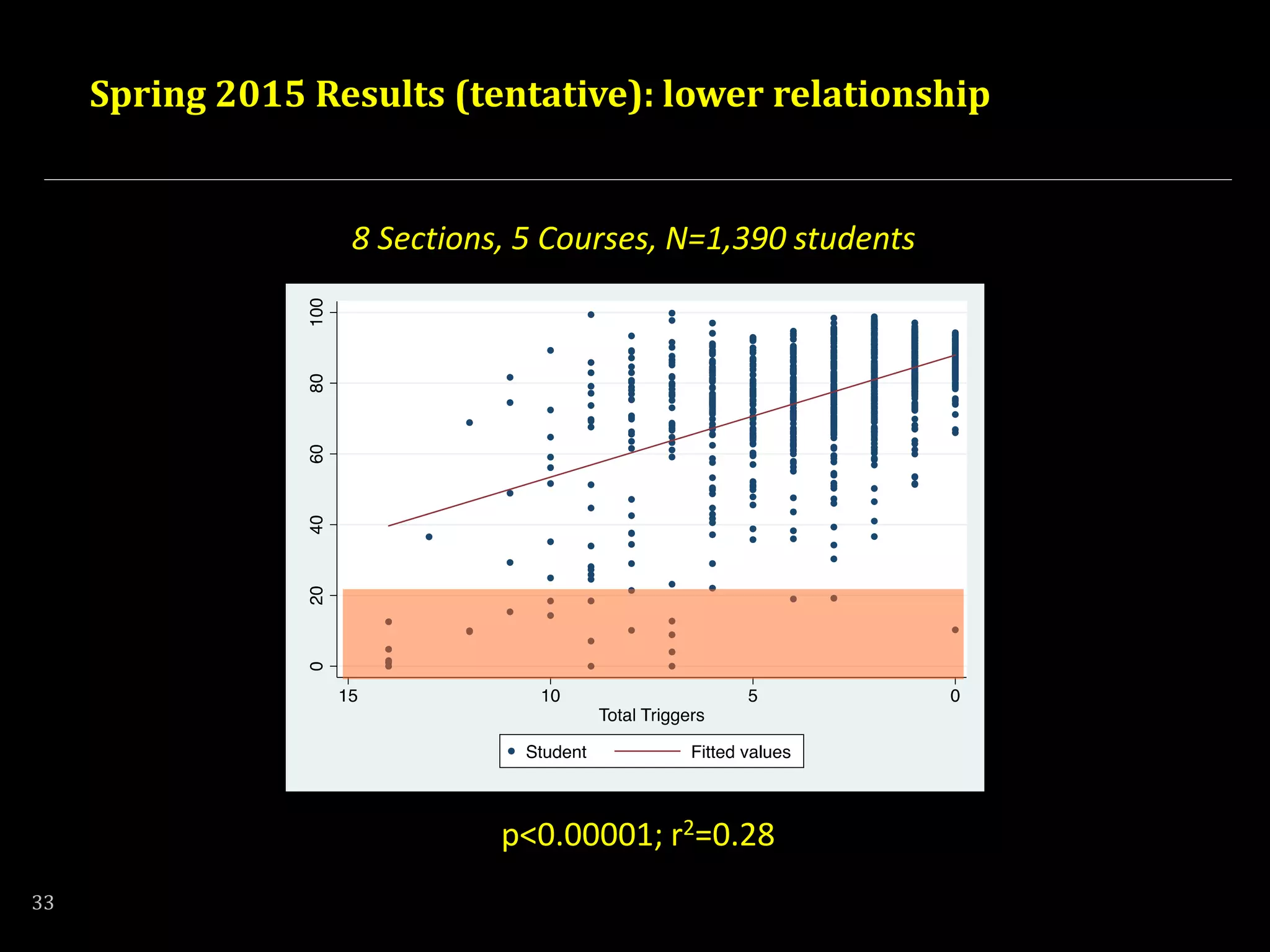

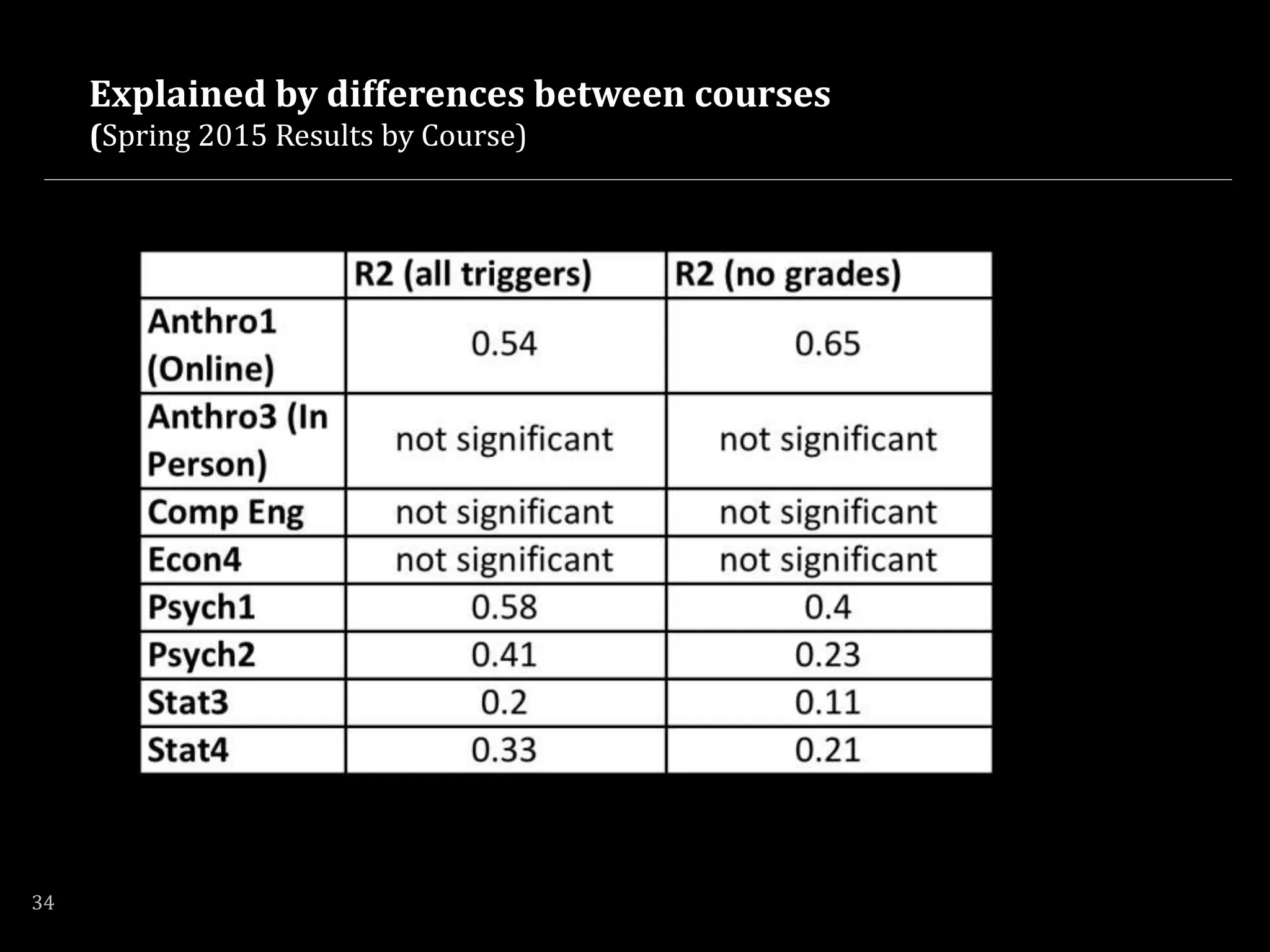

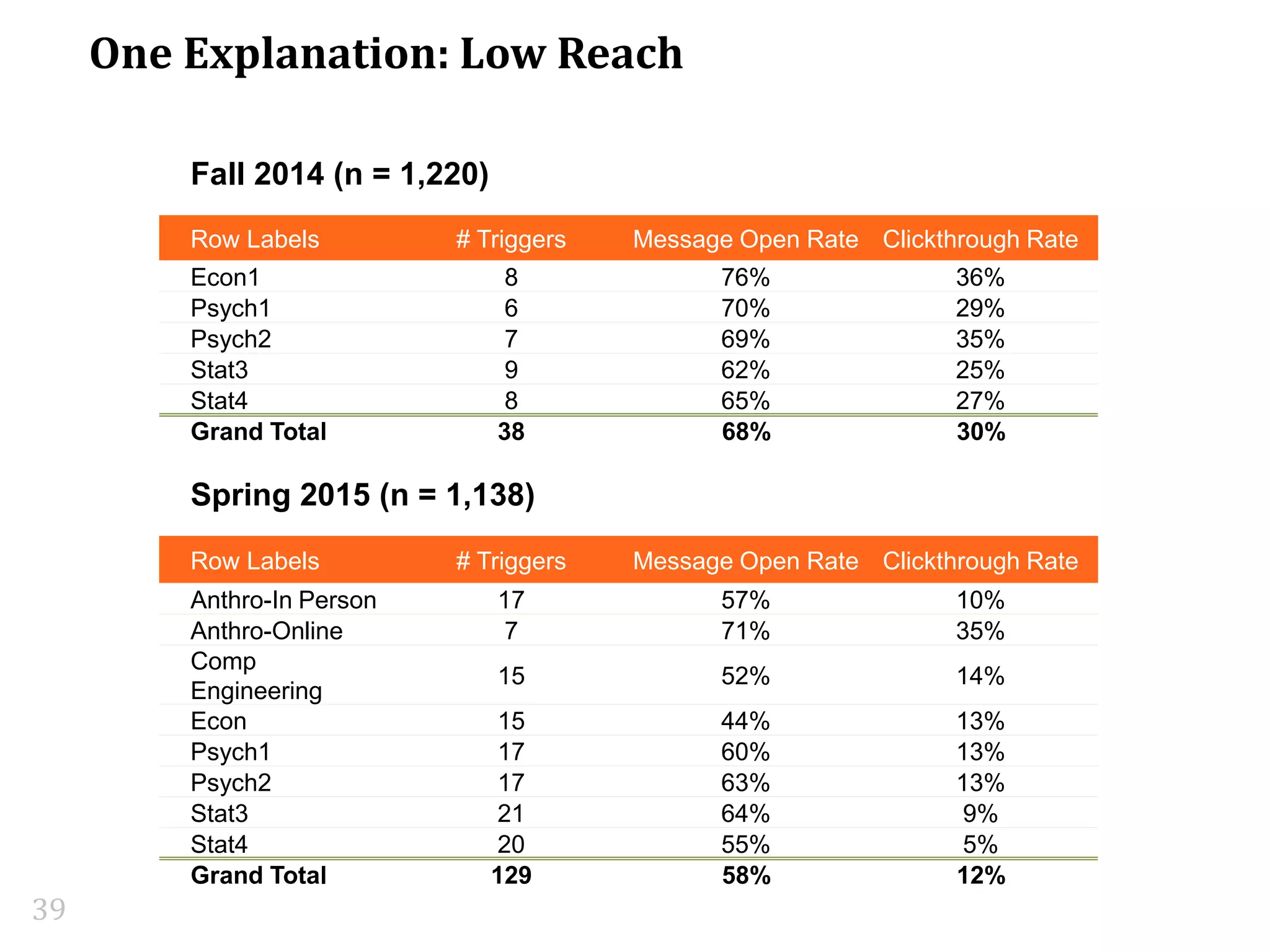

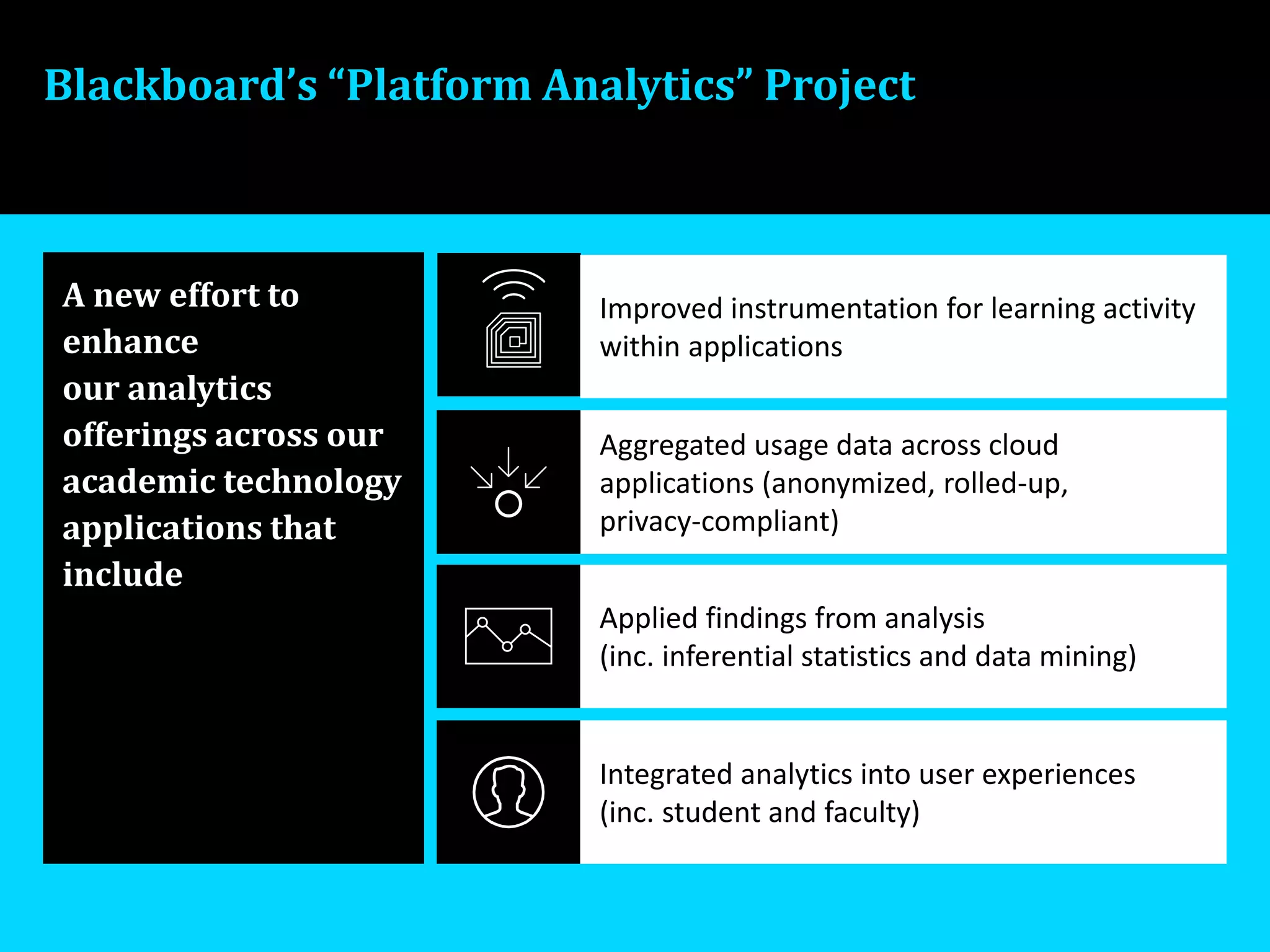

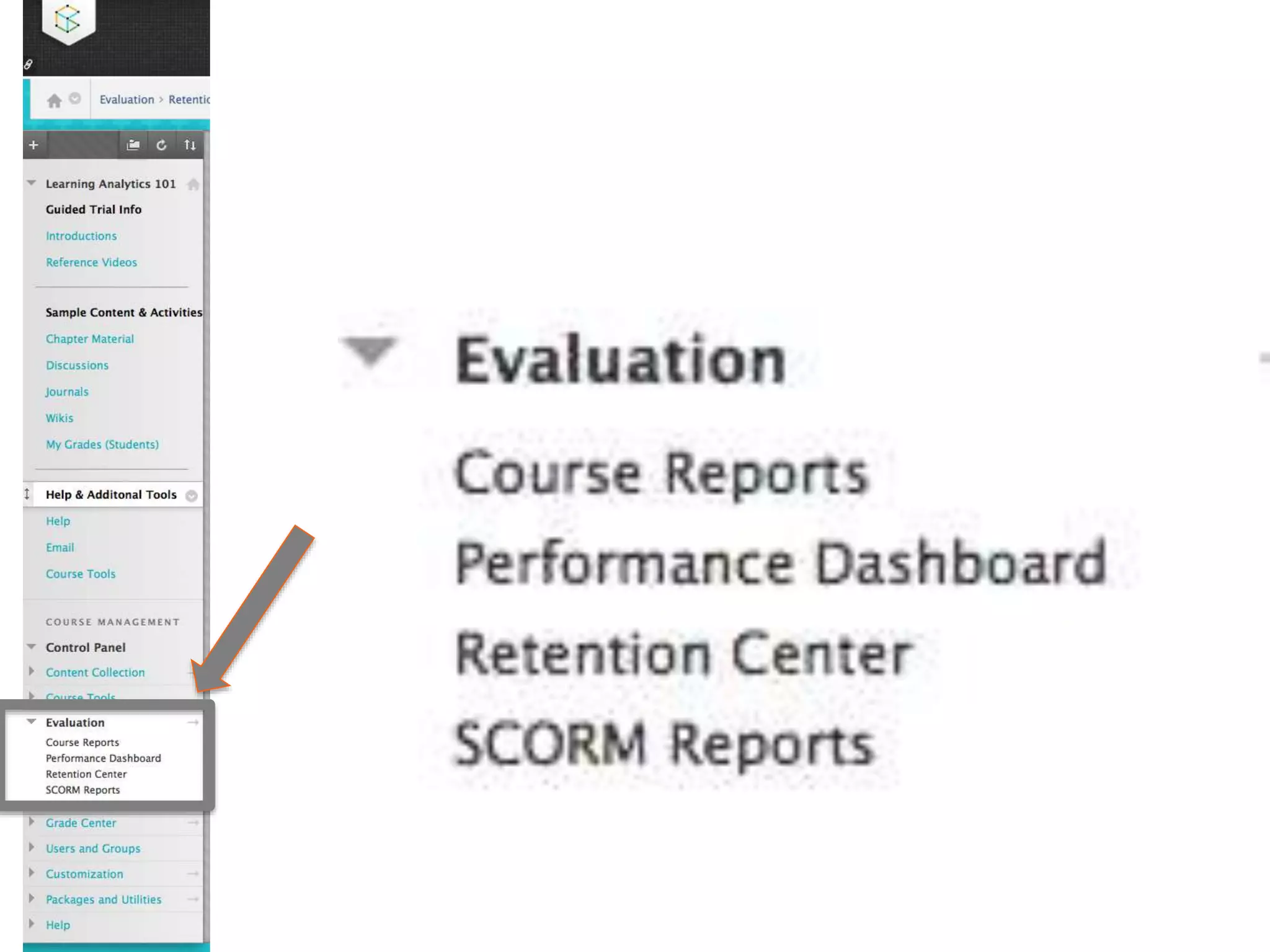

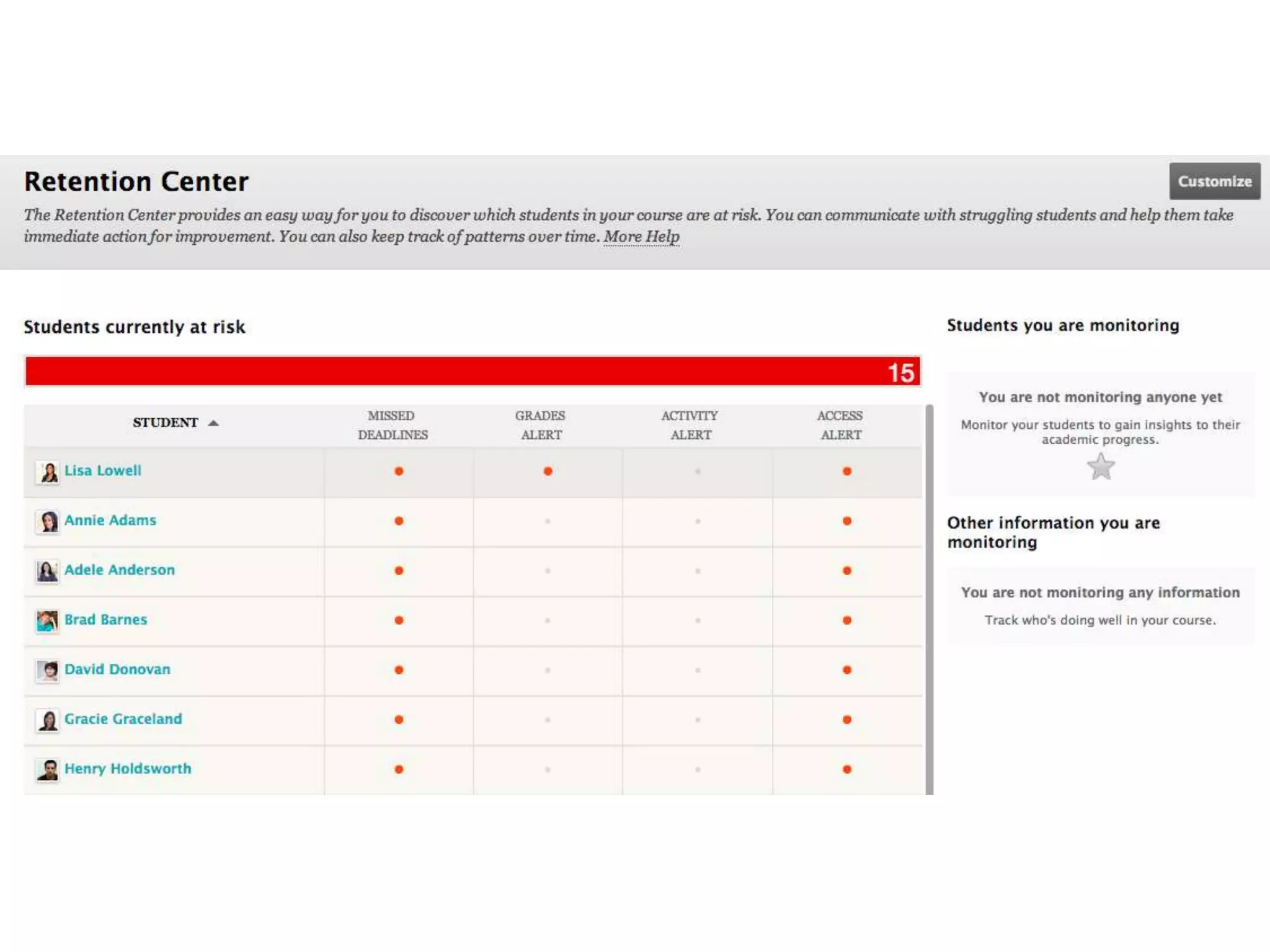

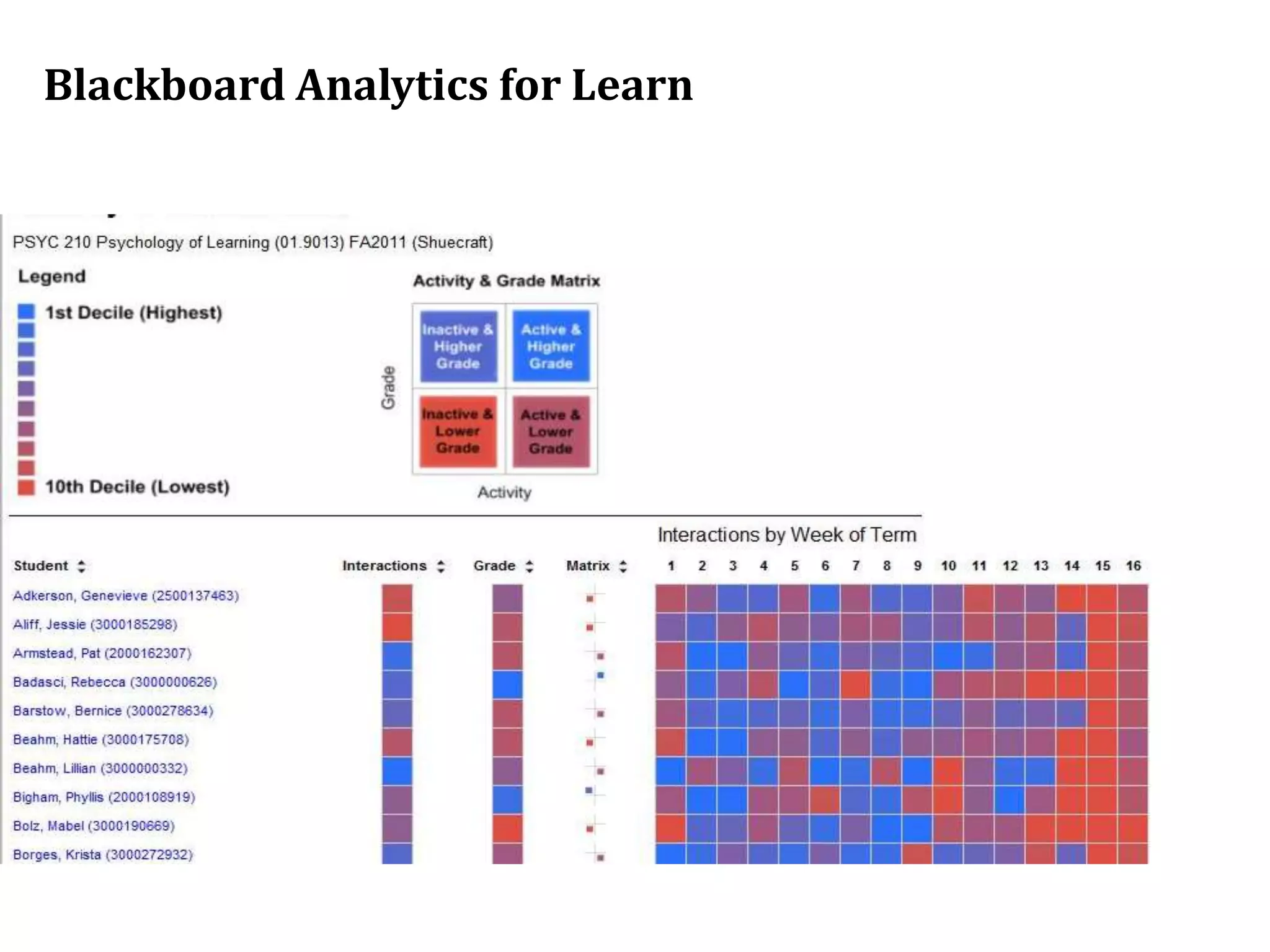

The document discusses the use of learning analytics to enhance student achievement and determine effective interventions for at-risk students, based on research findings from various studies. It highlights the importance of data analytics in predicting course success by analyzing student interactions with academic technologies, with a focus on engagement and performance metrics. Additionally, it emphasizes the ongoing development of analytics tools at Blackboard to improve educational outcomes across diverse student populations.

![Call to action [with amendments]

(from a May 2012 Keynote Presentation @ San Diego State U)

You’re not behind the curve, this is a rapidly emerging area

that we can (should) lead... [together with interested partners]

Metrics reporting is the foundation for analytics

[don’t under or over-estimate the importance]

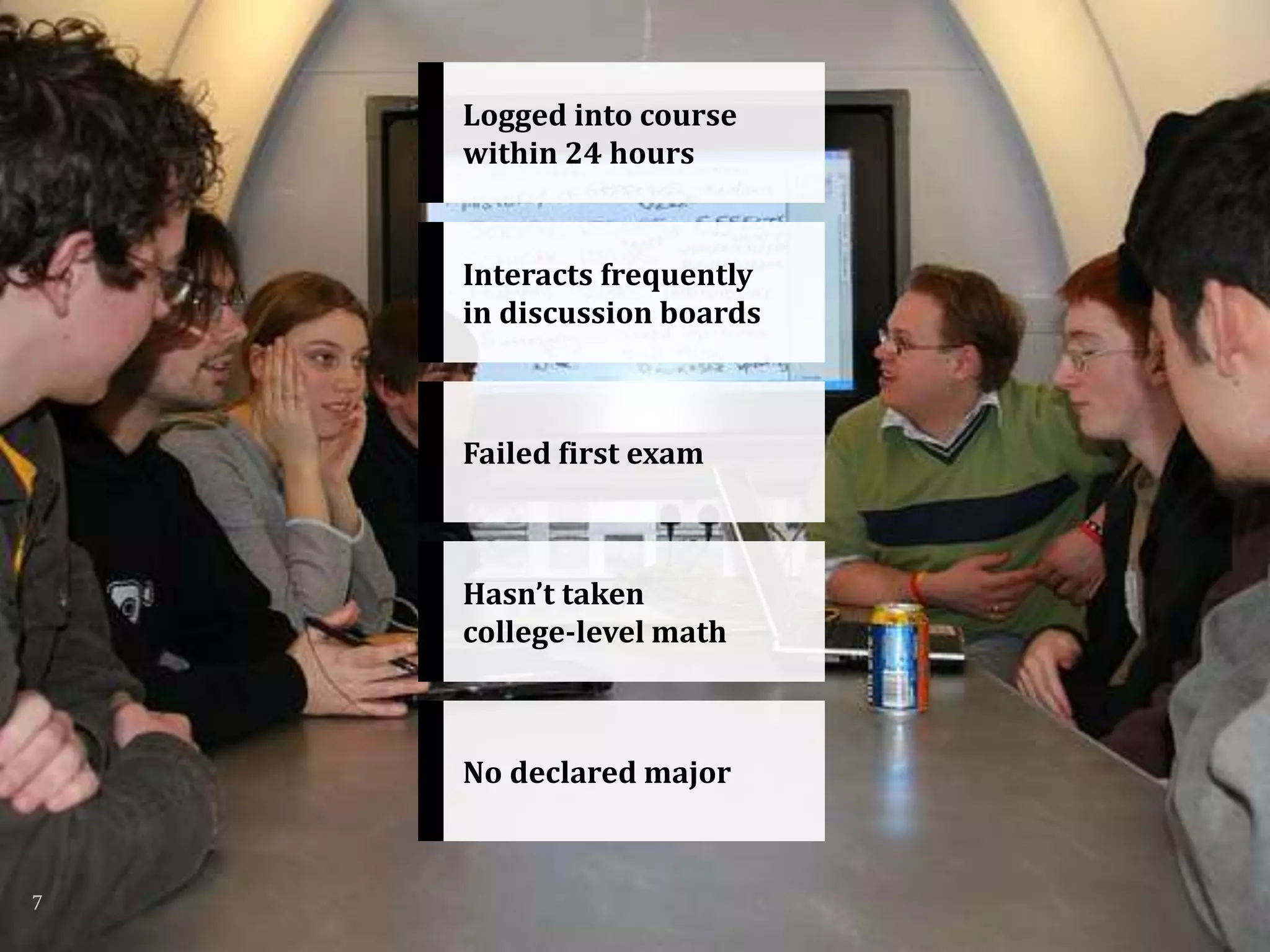

Start with what you have! Don’t wait for student characteristics and

detailed database information; LMS data can provide significant insights

If there’s any ed tech software folks in the audience,

please help us with better reporting!

[we’re working on it and feel your pain!]](https://image.slidesharecdn.com/2015624jisc-final-150623201524-lva1-app6891/75/Using-Learning-Analytics-to-Assess-Innovation-Improve-Student-Achievement-49-2048.jpg)
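As one illustration of "start with what you have," the sketch below relates raw LMS activity to course outcomes without any student-characteristic data. It assumes a hypothetical export format (an activity log of `(student_id, event_type)` rows plus a dictionary of final grades); the function names are illustrative, not part of any LMS or Blackboard API. It counts events per student and computes a Pearson correlation between activity and grade.

```python
from collections import Counter
from math import sqrt


def activity_counts(log_rows):
    """Count LMS events per student from hypothetical (student_id, event_type) rows."""
    return Counter(student_id for student_id, _event in log_rows)


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


def activity_grade_correlation(log_rows, grades):
    """Correlate per-student LMS activity with final grades.

    Students who have a grade but no logged events count as zero activity.
    """
    counts = activity_counts(log_rows)
    students = sorted(grades)
    xs = [counts.get(s, 0) for s in students]
    ys = [grades[s] for s in students]
    return pearson(xs, ys)


# Toy, made-up data purely to show the call shape (not study results).
log = [("s1", "login"), ("s1", "view"), ("s1", "post"),
       ("s2", "login"), ("s3", "login"), ("s3", "view")]
grades = {"s1": 90, "s2": 60, "s3": 75, "s4": 50}
r = activity_grade_correlation(log, grades)
```

A correlation like this only indicates association; it is a starting metric for the kind of reporting the slide calls for, not a predictive model or evidence that activity causes success.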