The document defines key concepts in reinforcement learning, including:

- Multi-armed bandit problems, in which an agent repeatedly chooses among several options (arms) and receives rewards drawn from distributions that are unknown to it

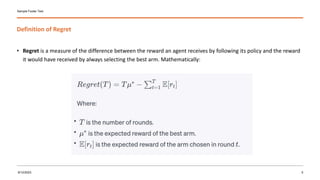

- Regret, which measures the difference between the reward an agent actually accumulated and the reward it could have accumulated by always selecting the best option

- The UCB (upper confidence bound) algorithm, which balances exploration and exploitation by selecting, at each step, the option with the highest upper confidence bound on its estimated reward

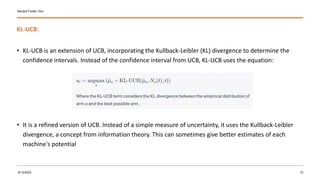

- The KL-UCB algorithm, an extension of UCB that uses the Kullback-Leibler divergence to determine its confidence intervals

- Thompson sampling, a Bayesian approach that maintains a posterior distribution over each option's reward and samples from these posteriors to select options
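
The concepts above can be illustrated with a minimal sketch. It is not the document's own code, and it rests on several assumptions: rewards are Bernoulli, the UCB rule is the common UCB1 form with exploration bonus `sqrt(2 ln t / n)`, and Thompson sampling uses a uniform Beta(1, 1) prior. All function and variable names (`run_bandit`, `ucb1`, `thompson`, `uniform_random`) are hypothetical and chosen only for illustration.

```python
import math
import random


def run_bandit(means, horizon, choose, seed=0):
    """Simulate a Bernoulli bandit; return cumulative (pseudo-)regret.

    `means` holds the true success probability of each arm. The policy
    `choose` never sees them; it only sees pull and success counts.
    """
    rng = random.Random(seed)
    k = len(means)
    pulls = [0] * k      # times each arm has been selected
    successes = [0] * k  # reward-1 outcomes observed per arm
    best = max(means)
    regret = 0.0
    for t in range(1, horizon + 1):
        arm = choose(t, pulls, successes, rng)
        reward = 1 if rng.random() < means[arm] else 0
        pulls[arm] += 1
        successes[arm] += reward
        # Regret: expected reward lost versus always playing the best arm.
        regret += best - means[arm]
    return regret


def ucb1(t, pulls, successes, rng):
    """Assumed UCB1 rule: empirical mean plus sqrt(2 ln t / n)."""
    # Play every arm once so that each count is non-zero.
    for arm, n in enumerate(pulls):
        if n == 0:
            return arm
    scores = [
        successes[a] / pulls[a] + math.sqrt(2.0 * math.log(t) / pulls[a])
        for a in range(len(pulls))
    ]
    return max(range(len(pulls)), key=scores.__getitem__)


def thompson(t, pulls, successes, rng):
    """Thompson sampling with an assumed Beta(1, 1) prior per arm."""
    samples = [
        rng.betavariate(1 + successes[a], 1 + pulls[a] - successes[a])
        for a in range(len(pulls))
    ]
    return max(range(len(pulls)), key=samples.__getitem__)


def uniform_random(t, pulls, successes, rng):
    """Baseline that ignores all feedback."""
    return rng.randrange(len(pulls))


if __name__ == "__main__":
    arm_means = [0.2, 0.5, 0.8]  # illustrative values, not from the document
    for name, policy in [("random", uniform_random),
                         ("UCB1", ucb1),
                         ("Thompson", thompson)]:
        print(name, round(run_bandit(arm_means, 2000, policy), 1))
```

Running the script prints the cumulative regret of each policy on the same three-armed problem. The uniform baseline never learns, so its regret grows linearly with the horizon, whereas the two adaptive policies concentrate their pulls on the best arm as evidence accumulates.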