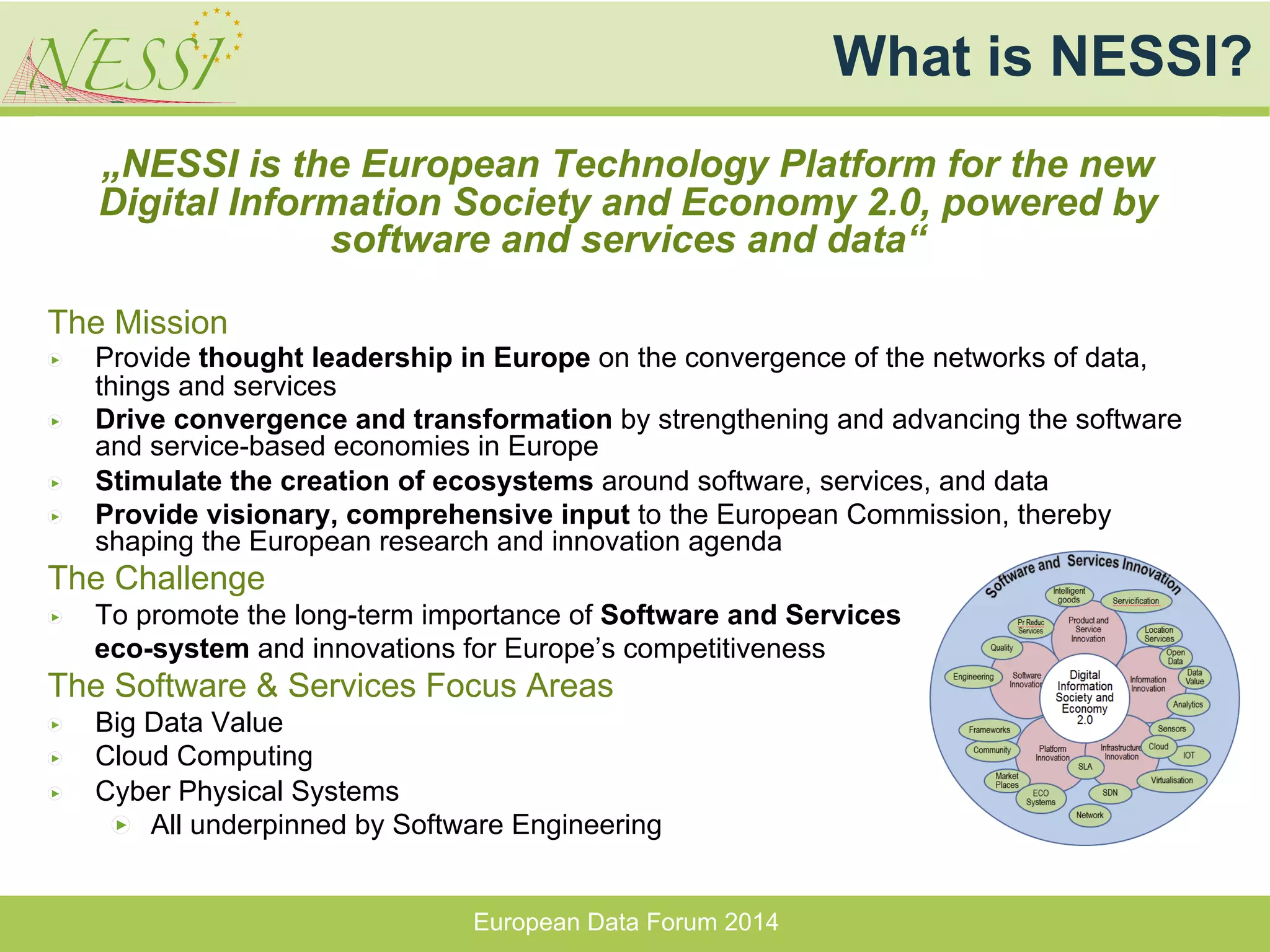

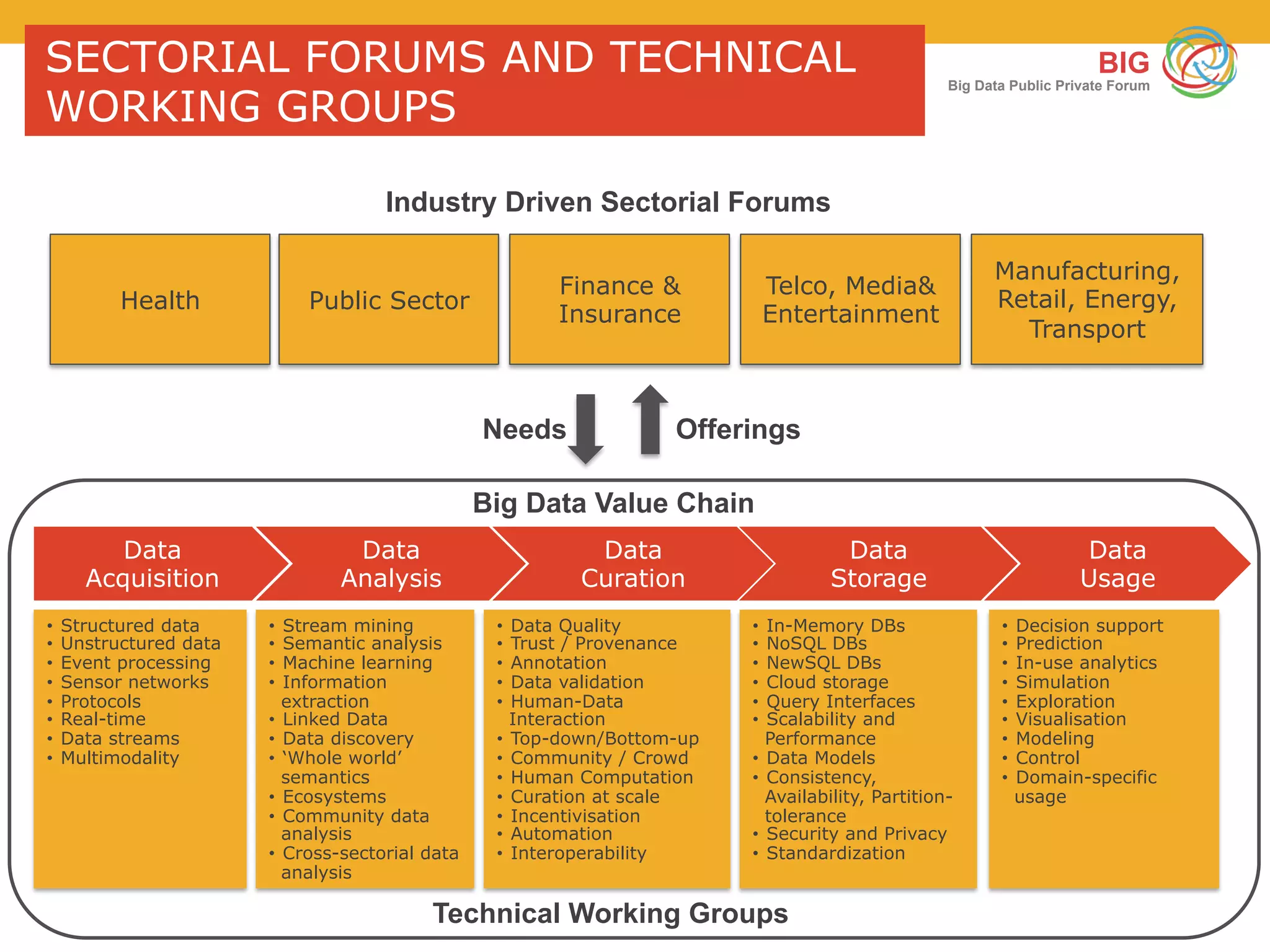

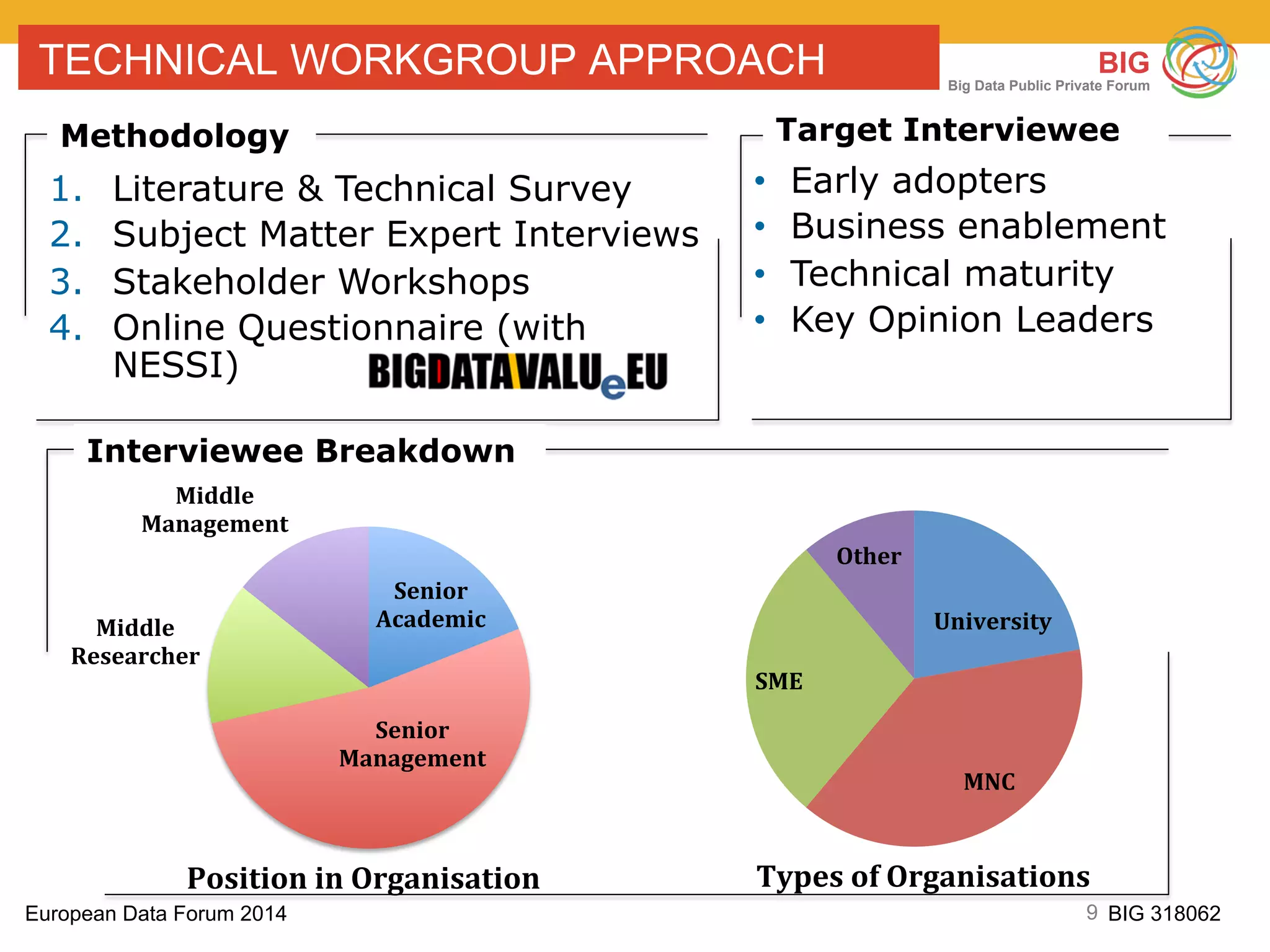

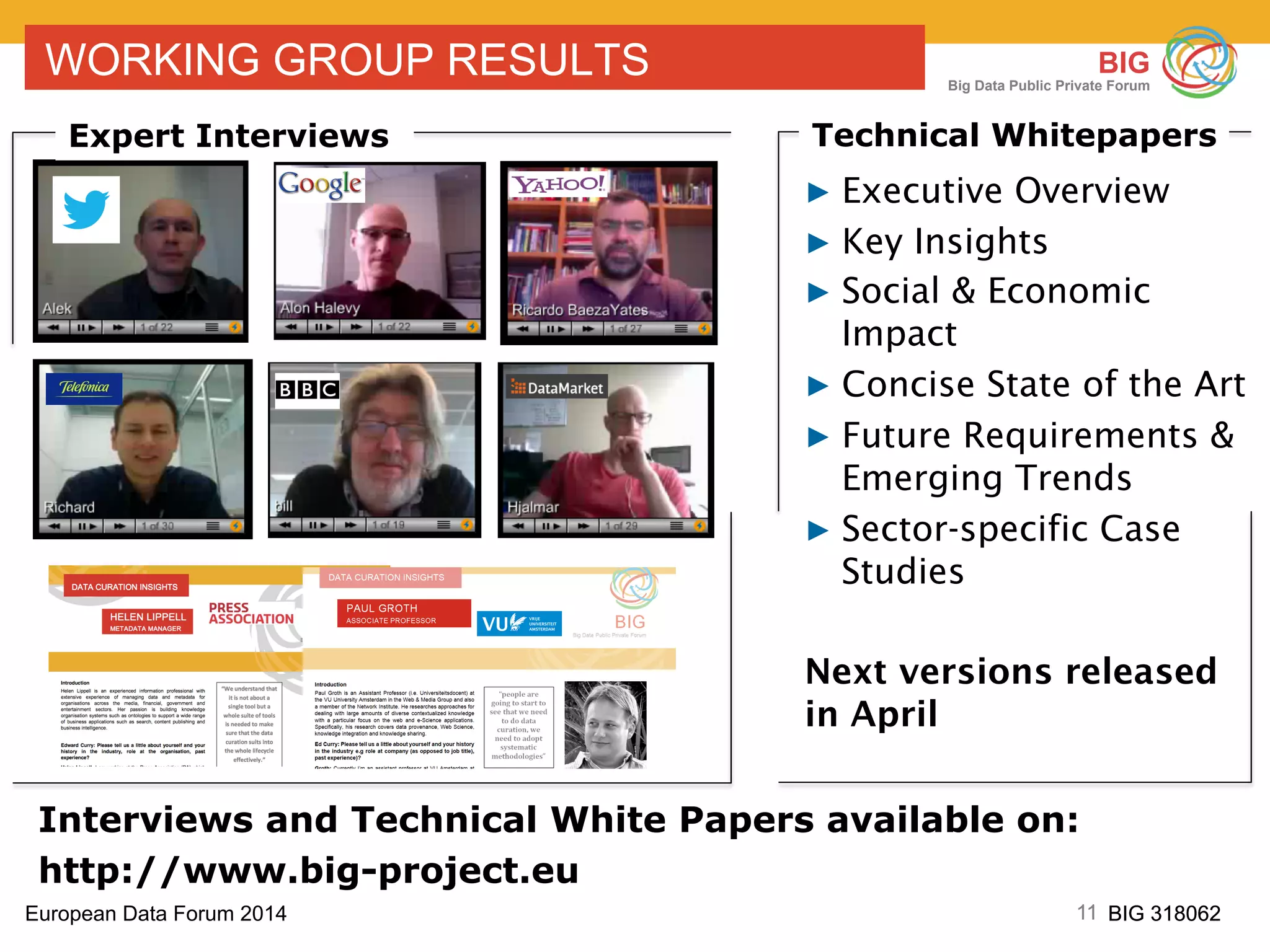

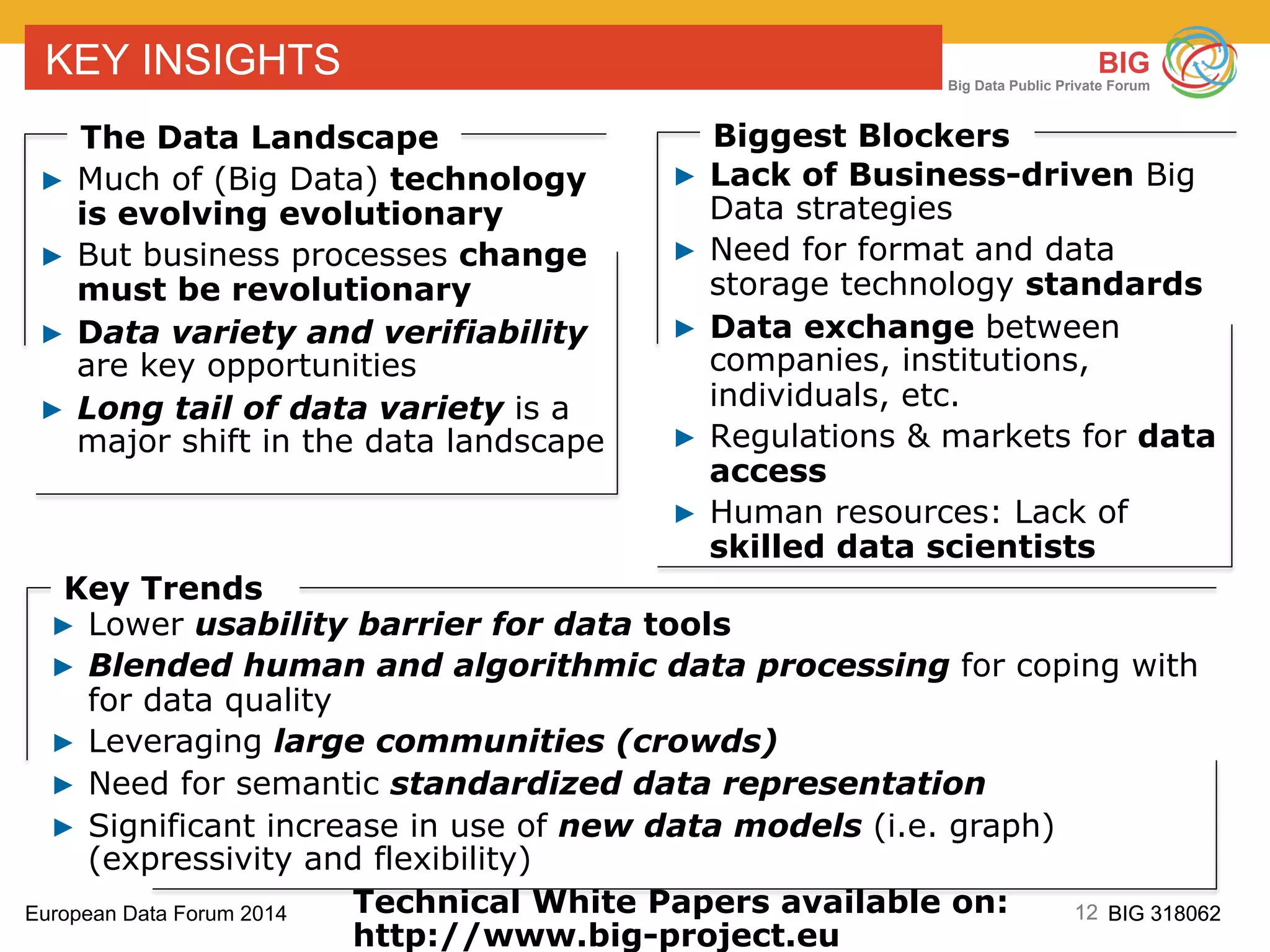

The European Data Forum 2014 emphasized the importance of public-private partnerships in strengthening Europe’s big data capabilities. Key discussions focused on creating a strategic research and innovation agenda, defining a clear big data value chain, and securing Europe’s long-term competitiveness through industry-led initiatives. The forum also highlighted the need for collaboration, technical standards, and skilled personnel to overcome challenges in the big data landscape.