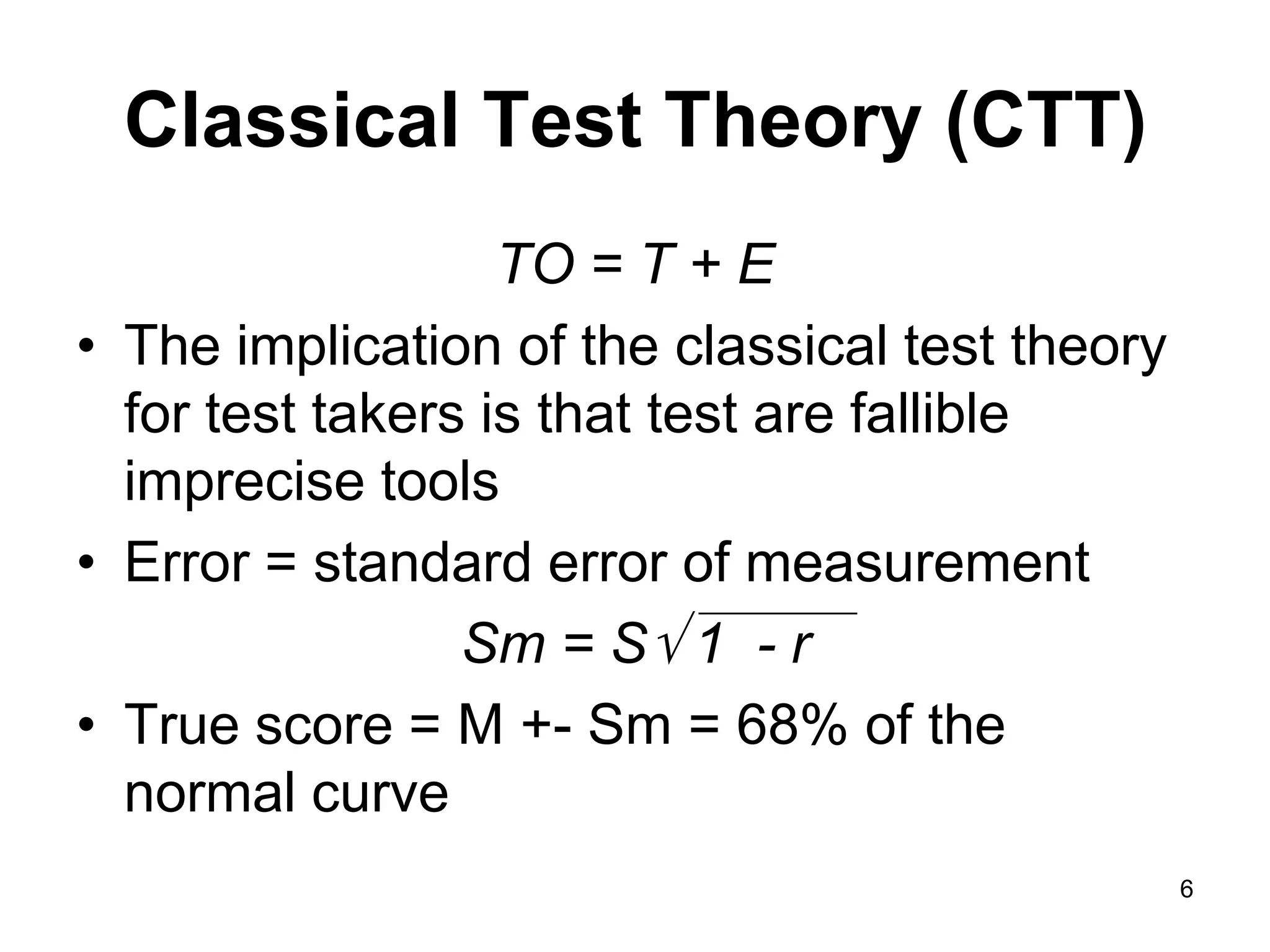

The document discusses the application of Item Response Theory (IRT) using the Rasch model to construct cognitive measures. It provides an overview of psychometric theory, classical test theory, and IRT approaches like the Rasch model. The Rasch model assumes that the probability of a correct response depends only on the difference between a person's ability and the item difficulty. When the data fit the model, it yields item calibrations that do not depend on the particular sample of persons, and person measures that do not depend on the particular set of items. The document outlines the assumptions, uses, and procedures of the Rasch model for test analysis.

![Rasch Model

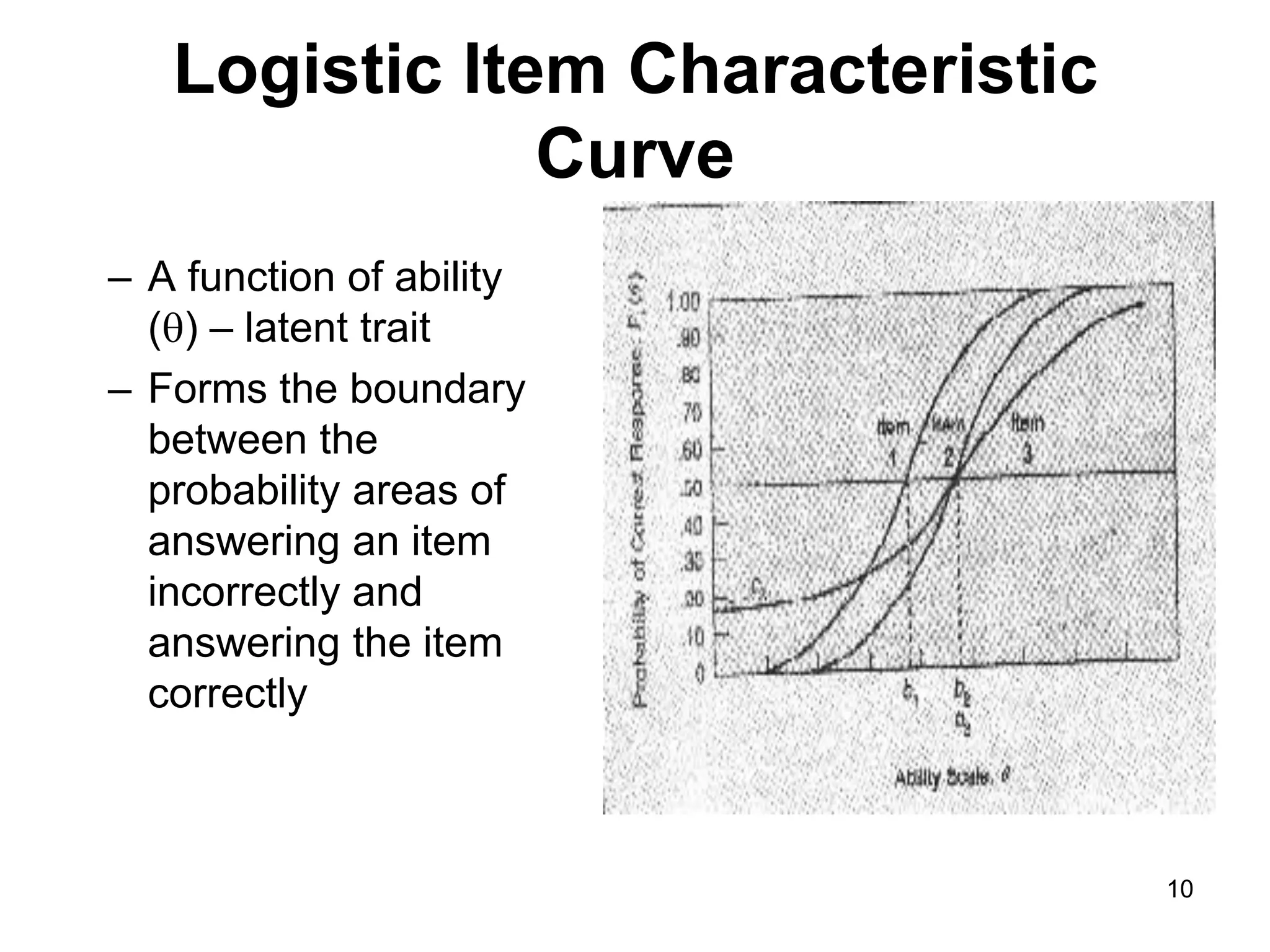

• The model was enhanced to assume that the probability that a student will correctly answer a question is a logistic function of the difference between the student's ability [θ] and the difficulty of the question [β] (i.e. the ability required to answer the question correctly), and a function of that difference only. This assumption gives the Rasch model.

• Thus, when data fit the model, the relative difficulties of the questions are independent of the relative abilities of the students, and vice versa (Rasch, 1977).

16](https://image.slidesharecdn.com/theapplicationofirtusingtheraschmodel-presnetation1-121012222709-phpapp02/75/The-application-of-irt-using-the-rasch-model-presnetation1-16-2048.jpg)
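
The two points on this slide can be illustrated with a minimal Python sketch. It assumes the standard dichotomous form of the model, P(correct) = exp(θ − β) / (1 + exp(θ − β)), with ability and difficulty on the same logit scale. The function names and all numeric values are hypothetical and chosen only for illustration; they do not come from the presentation.

```python
import math


def rasch_probability(theta: float, beta: float) -> float:
    """Probability of a correct answer under the dichotomous Rasch model.

    theta: person ability in logits; beta: item difficulty in logits.
    Only the difference (theta - beta) enters the formula.
    """
    return 1.0 / (1.0 + math.exp(-(theta - beta)))


def log_odds(p: float) -> float:
    """Natural log of the odds p / (1 - p)."""
    return math.log(p / (1.0 - p))


# Hypothetical abilities and item difficulties (illustrative values only).
abilities = [-1.0, 0.0, 2.0]
beta_easy, beta_hard = -0.5, 1.0

gaps = []
for theta in abilities:
    p_easy = rasch_probability(theta, beta_easy)
    p_hard = rasch_probability(theta, beta_hard)
    # Difference in log-odds between the two items for this person.
    gap = log_odds(p_easy) - log_odds(p_hard)
    gaps.append(gap)
    print(f"theta={theta:+.1f}  P(easy)={p_easy:.3f}  "
          f"P(hard)={p_hard:.3f}  log-odds gap={gap:.3f}")
```

The probabilities change with each student's ability, but the log-odds gap between the two items stays at `beta_hard - beta_easy` for every student. This is the sense in which the relative difficulties of the questions do not depend on who answers them, provided the data fit the model.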