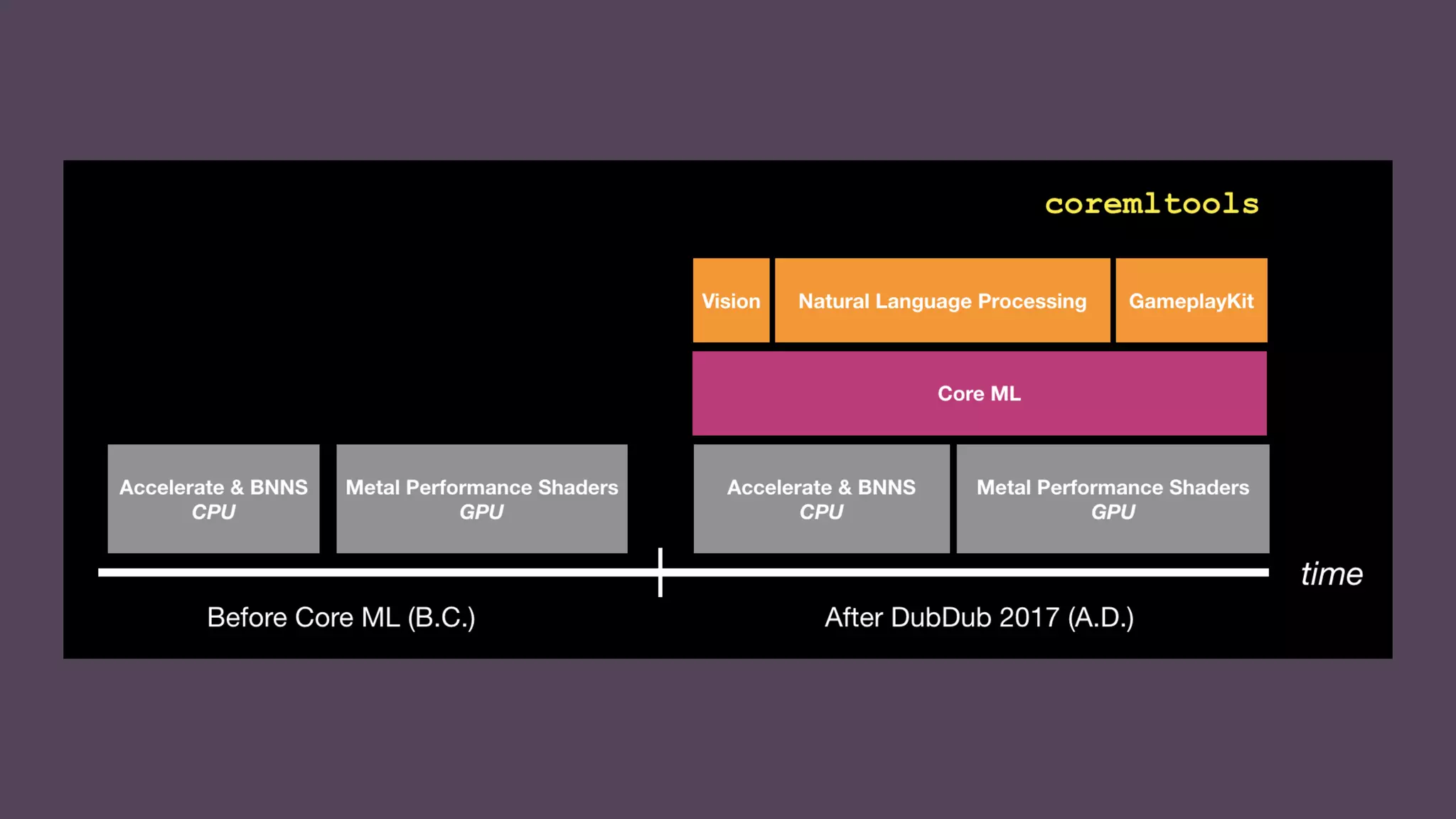

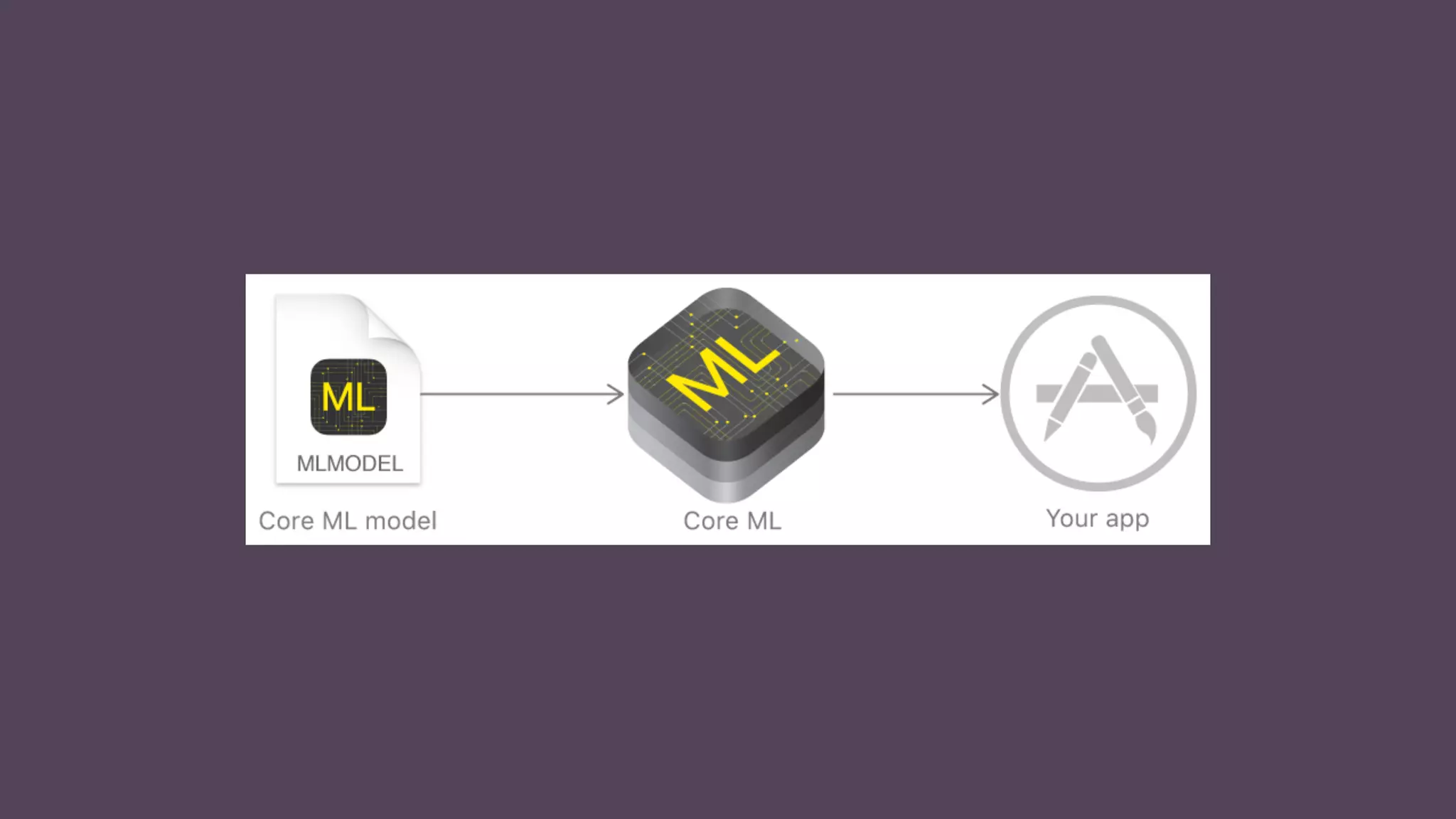

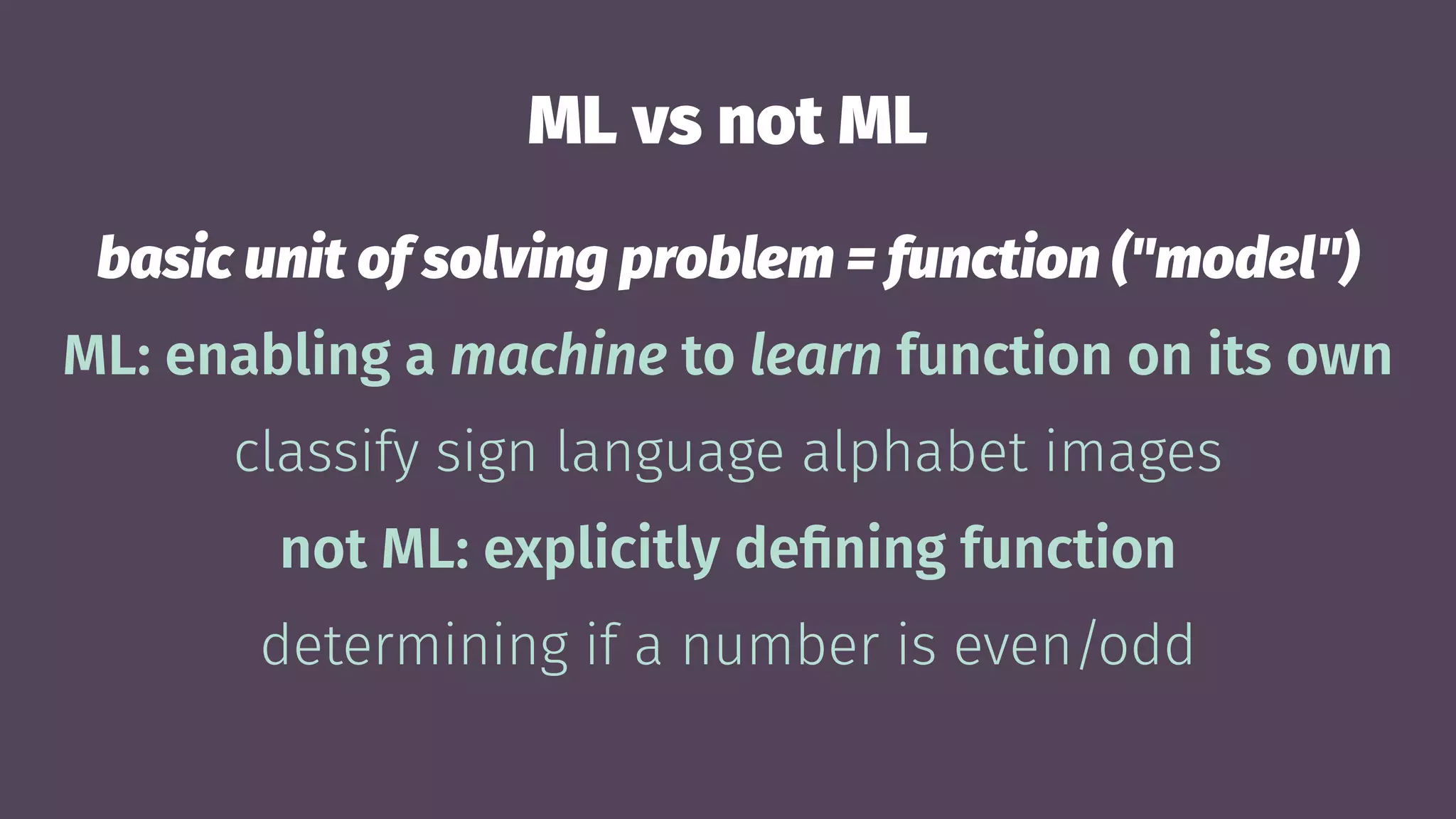

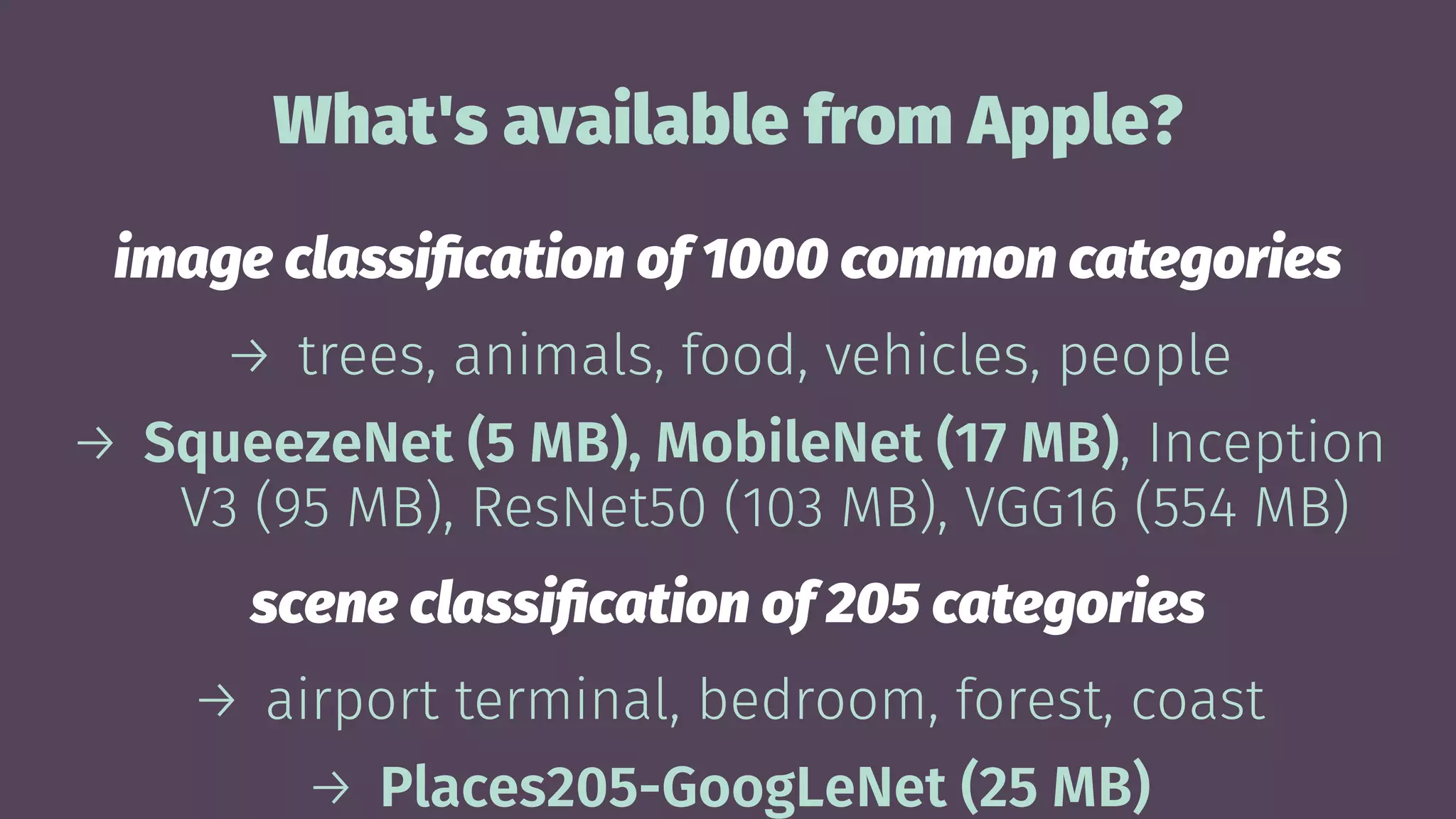

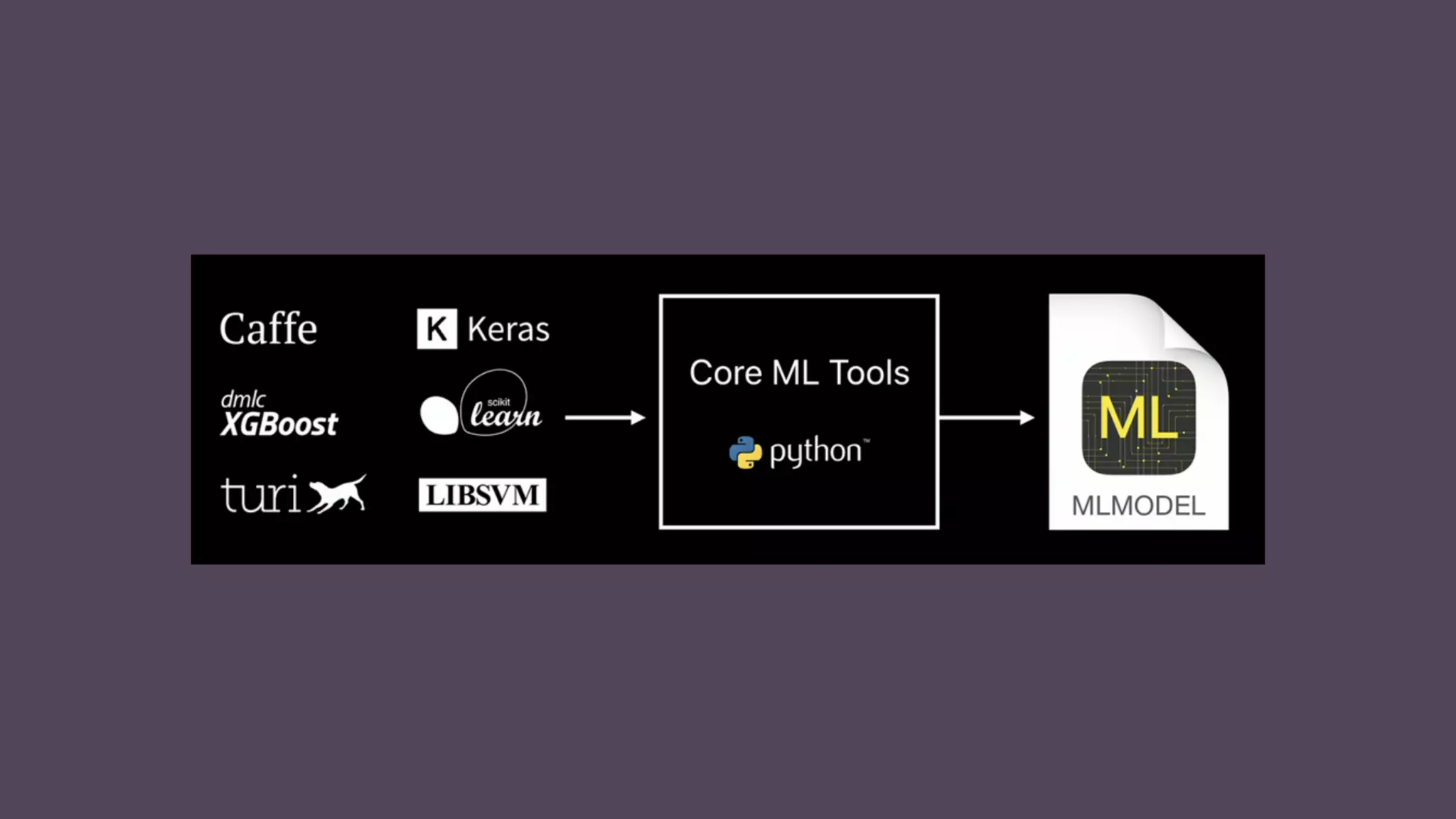

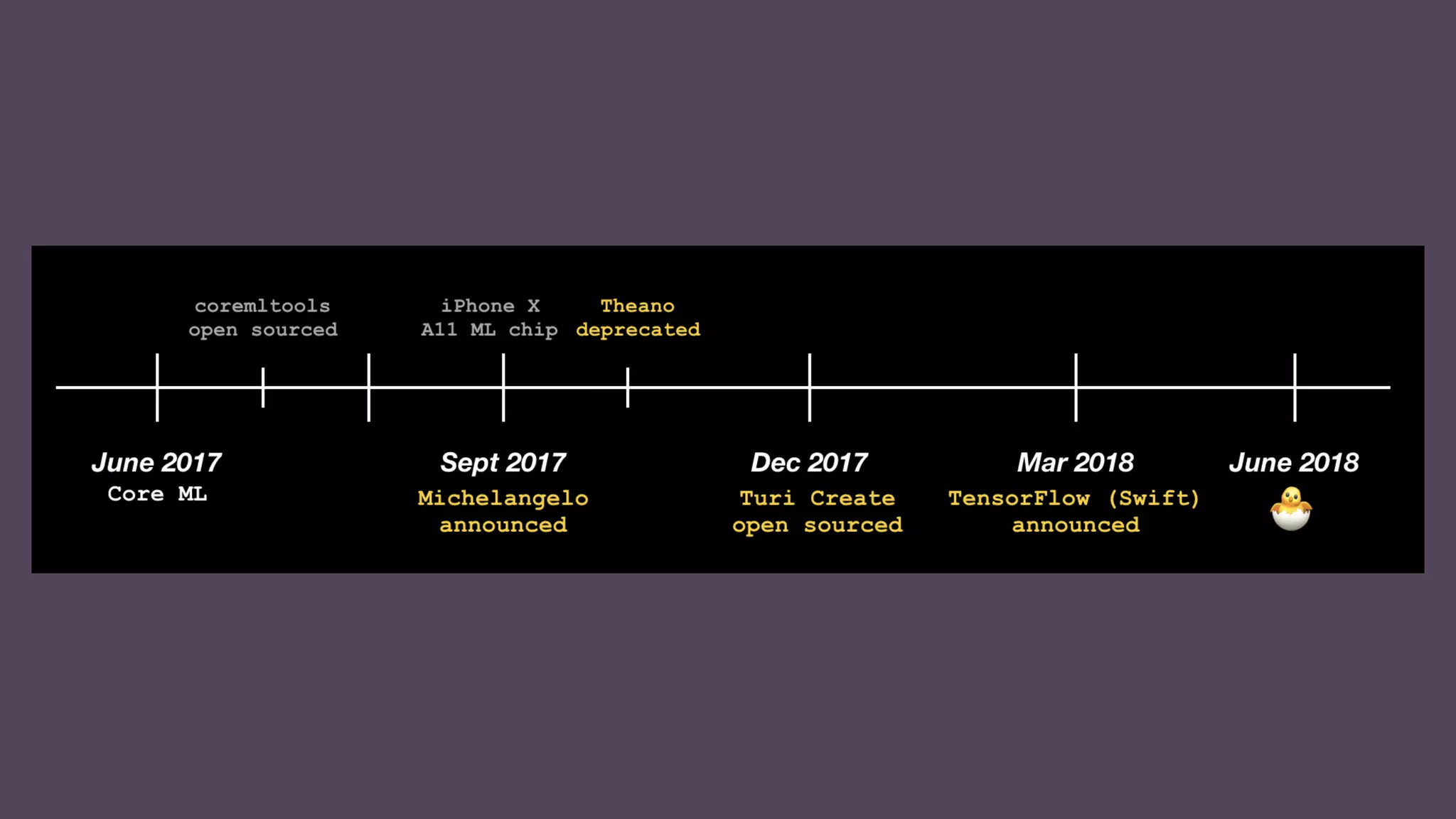

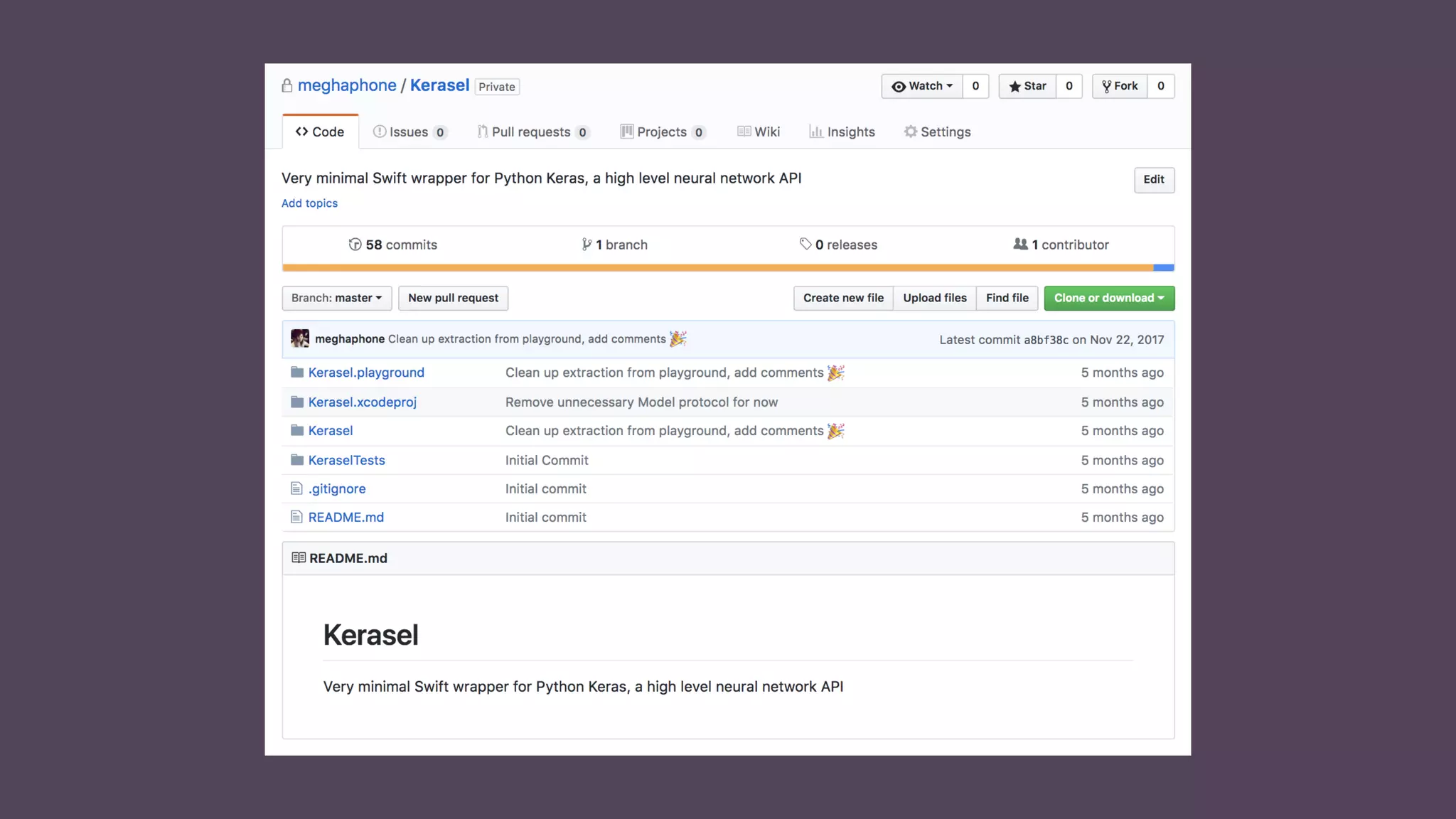

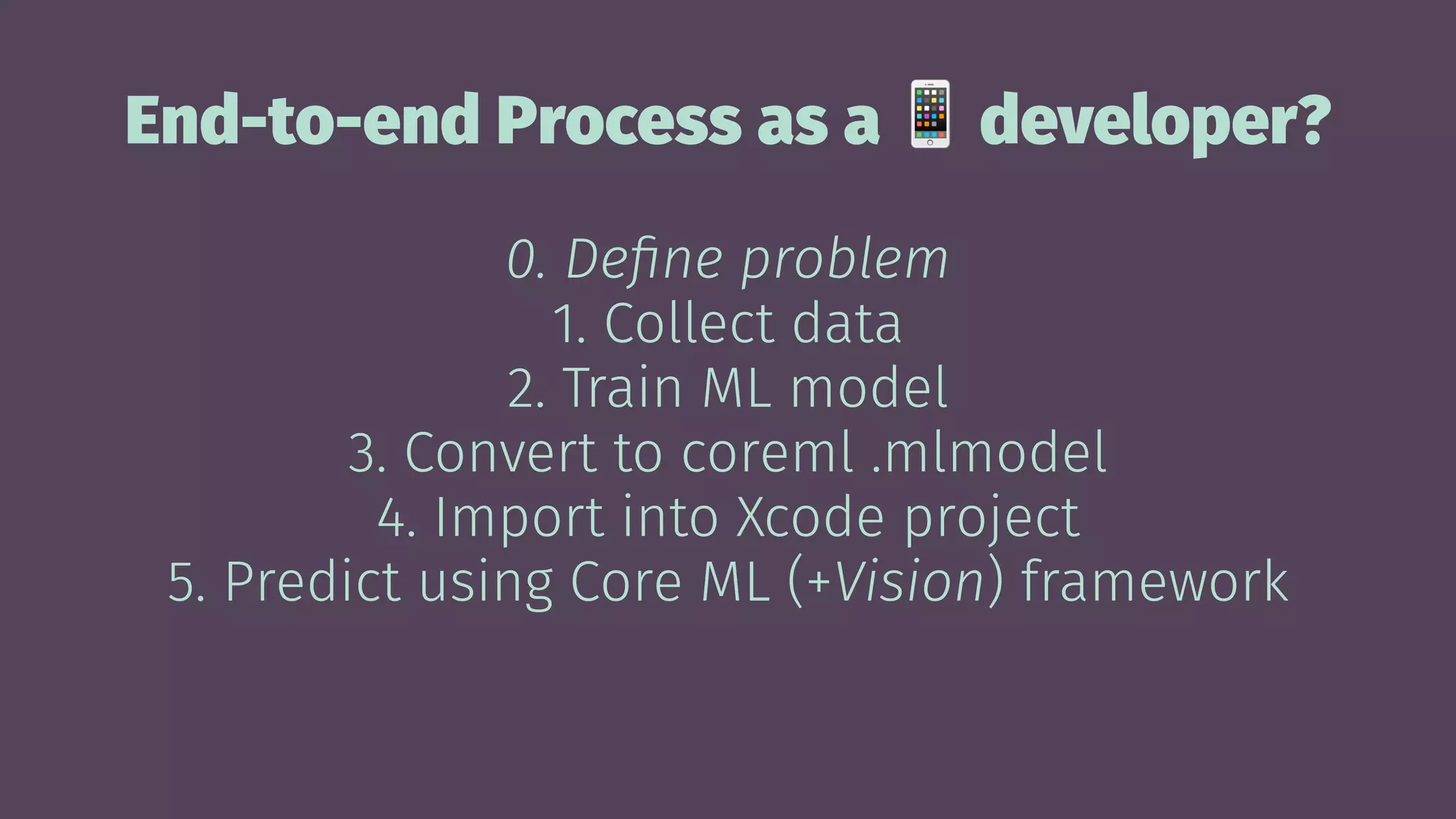

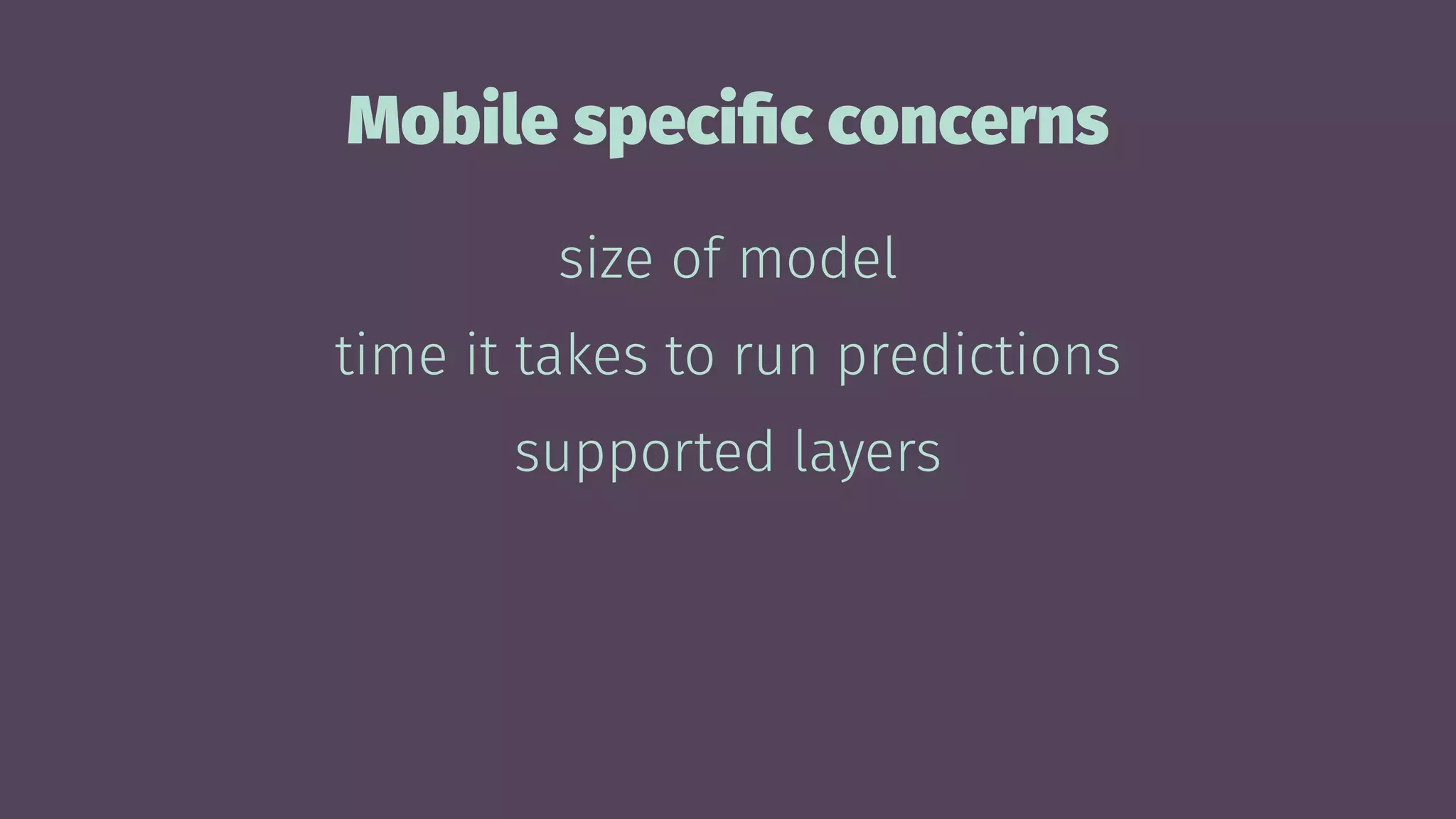

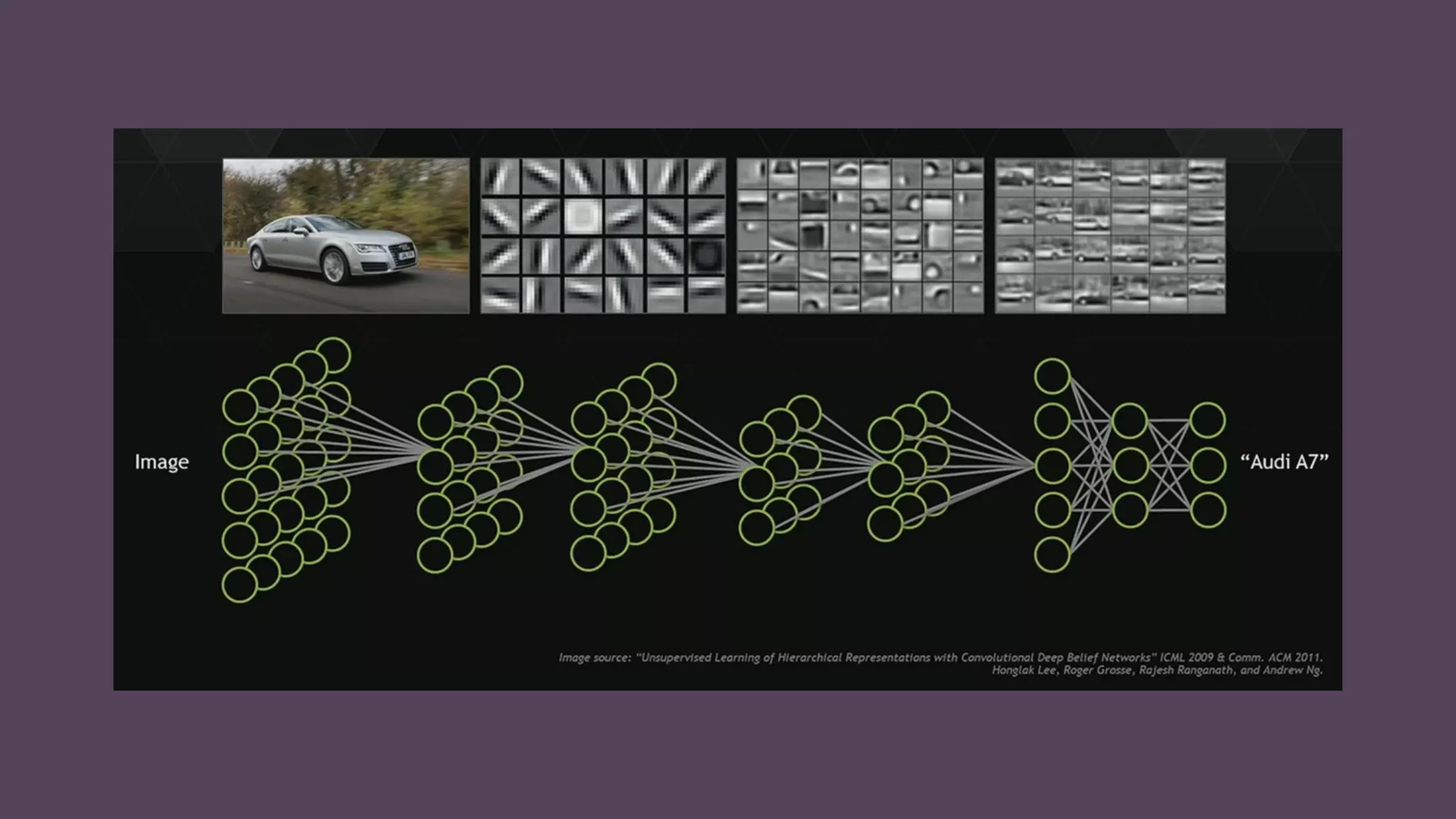

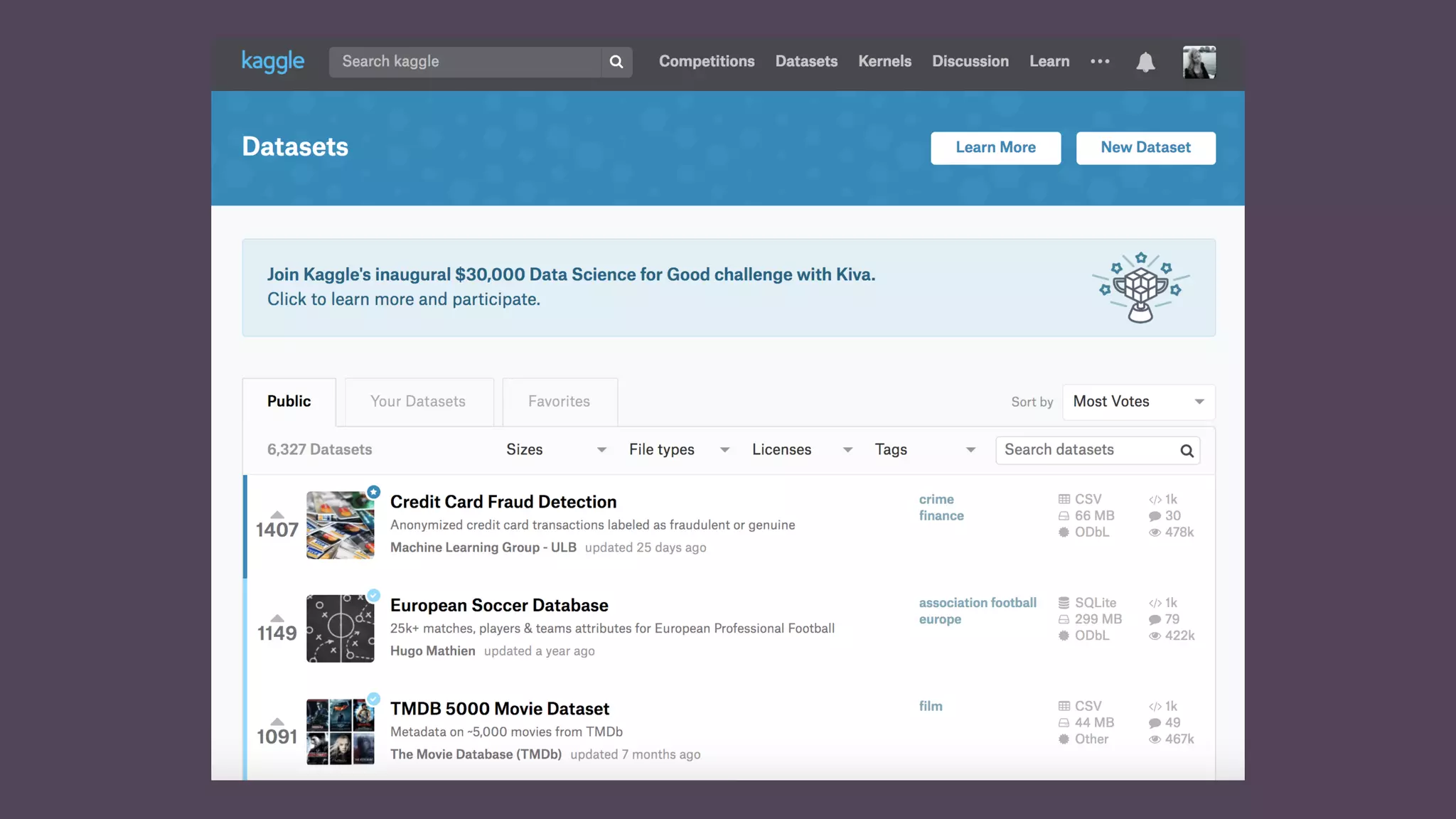

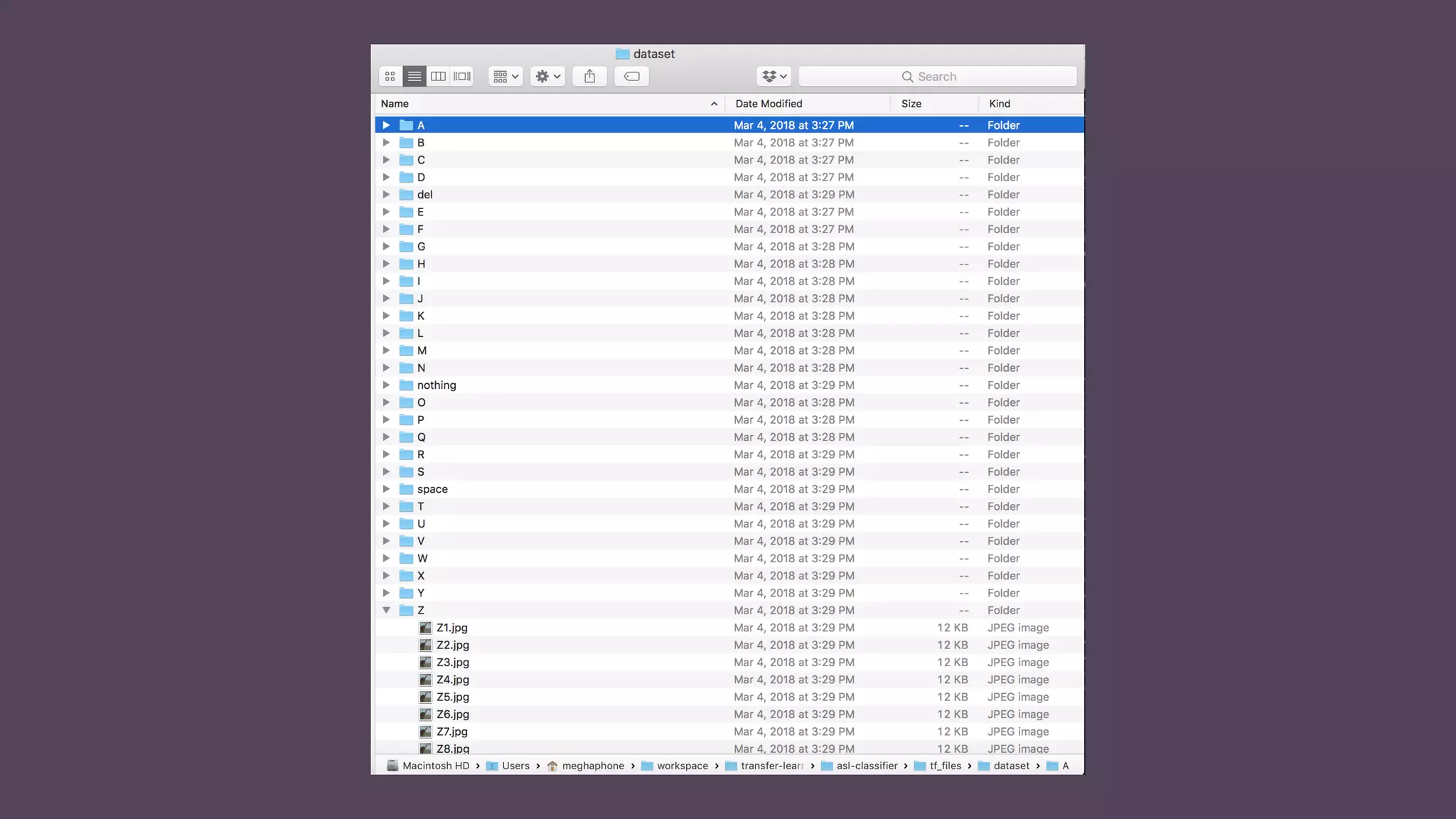

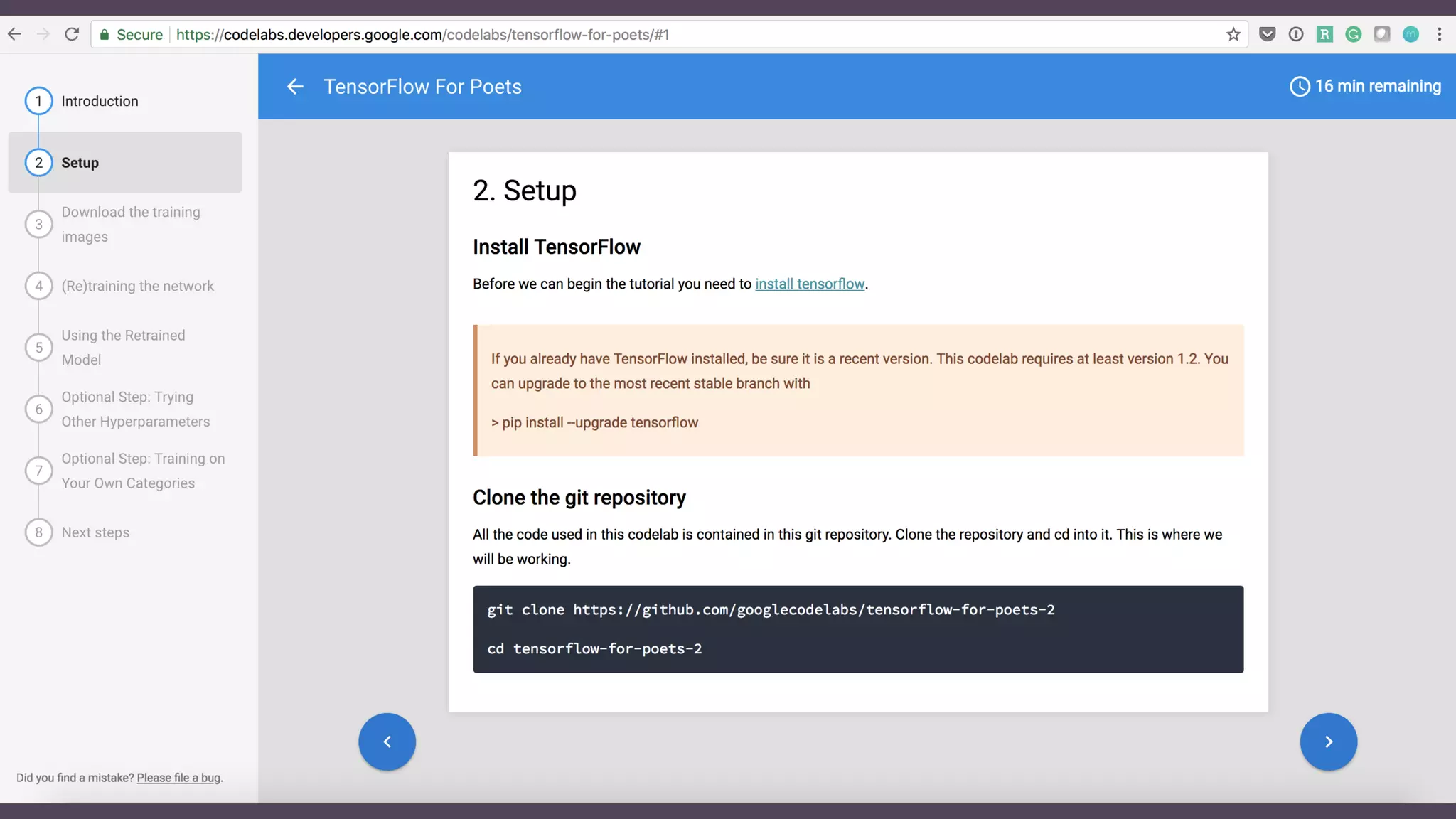

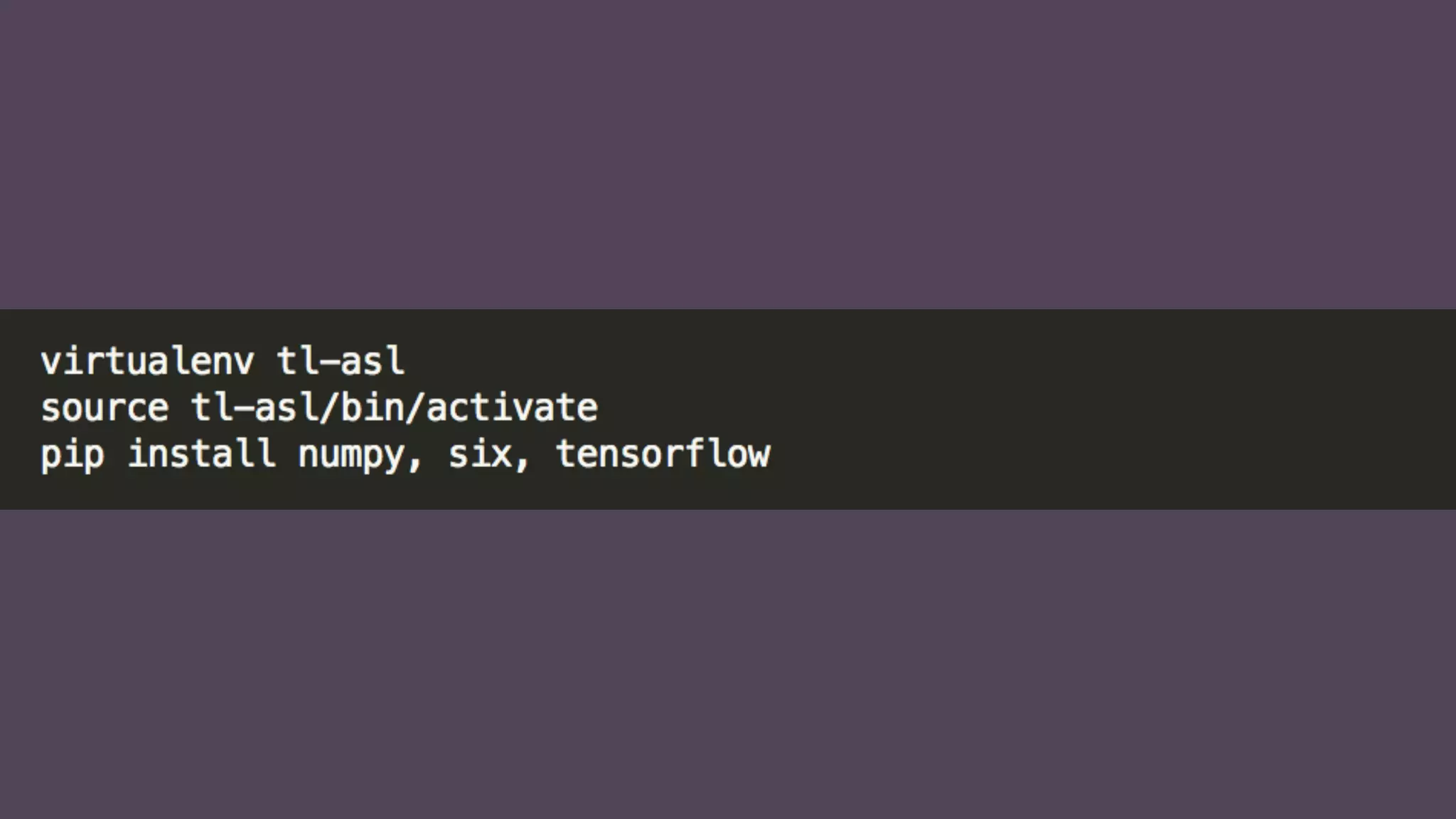

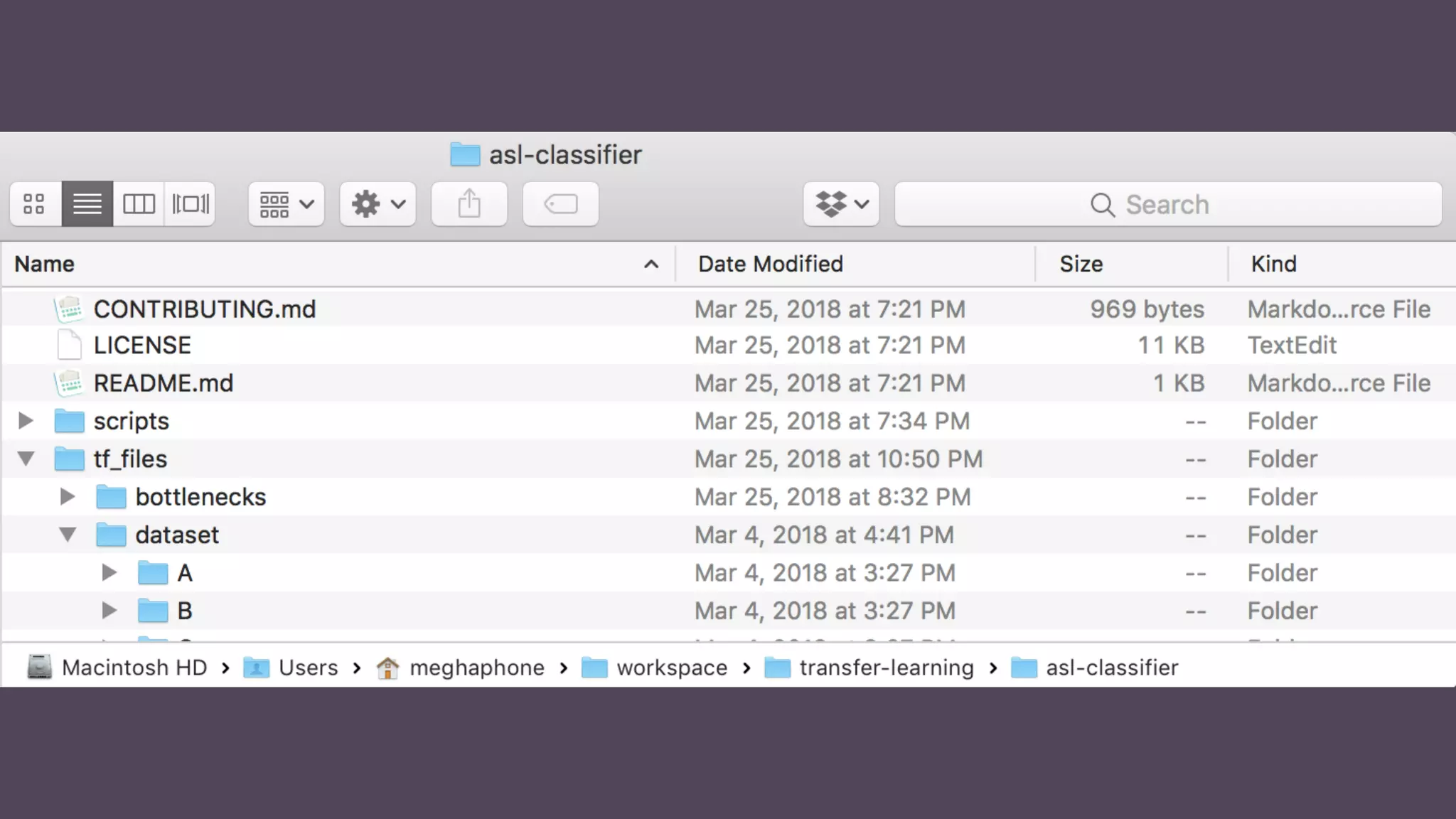

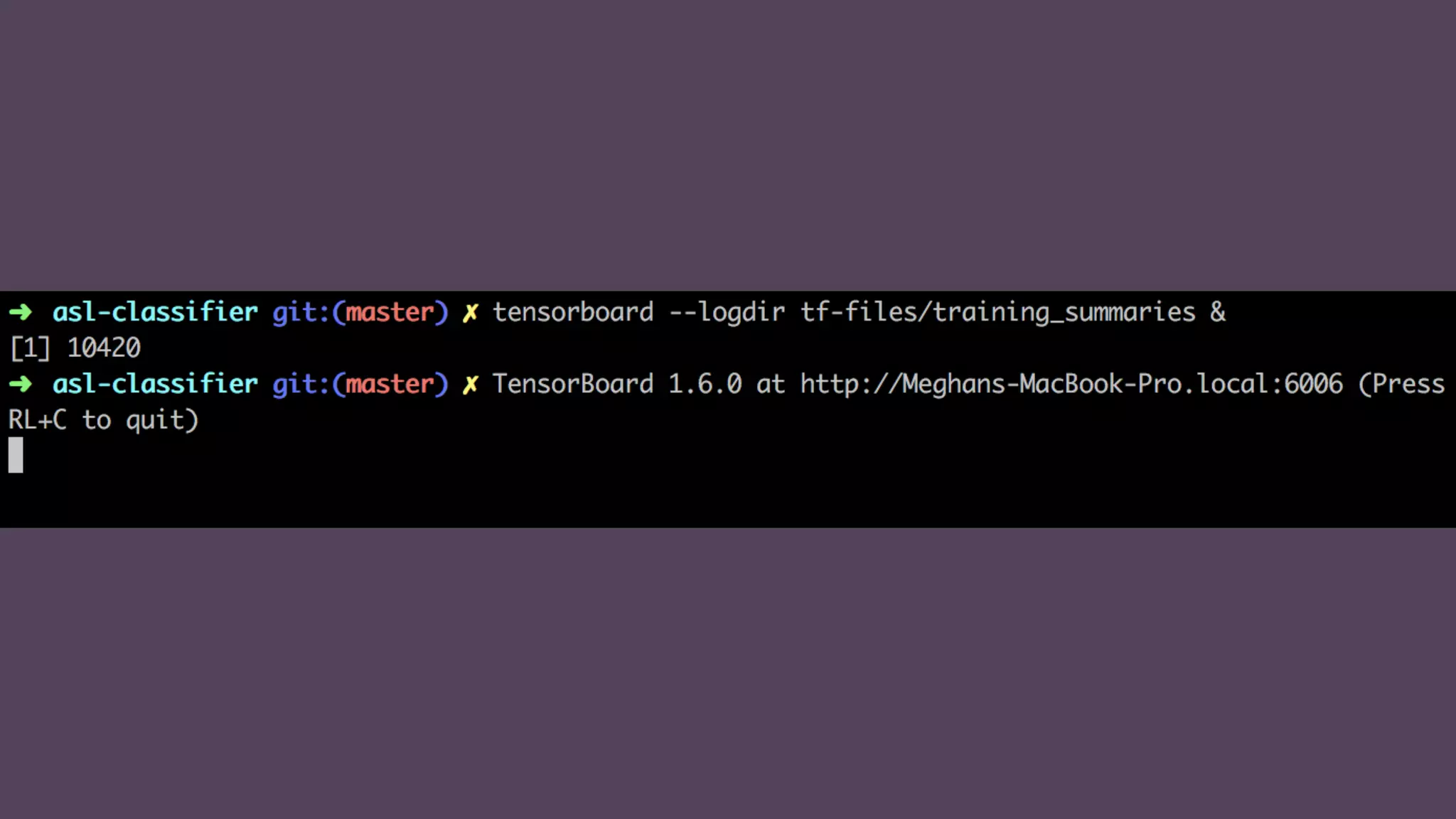

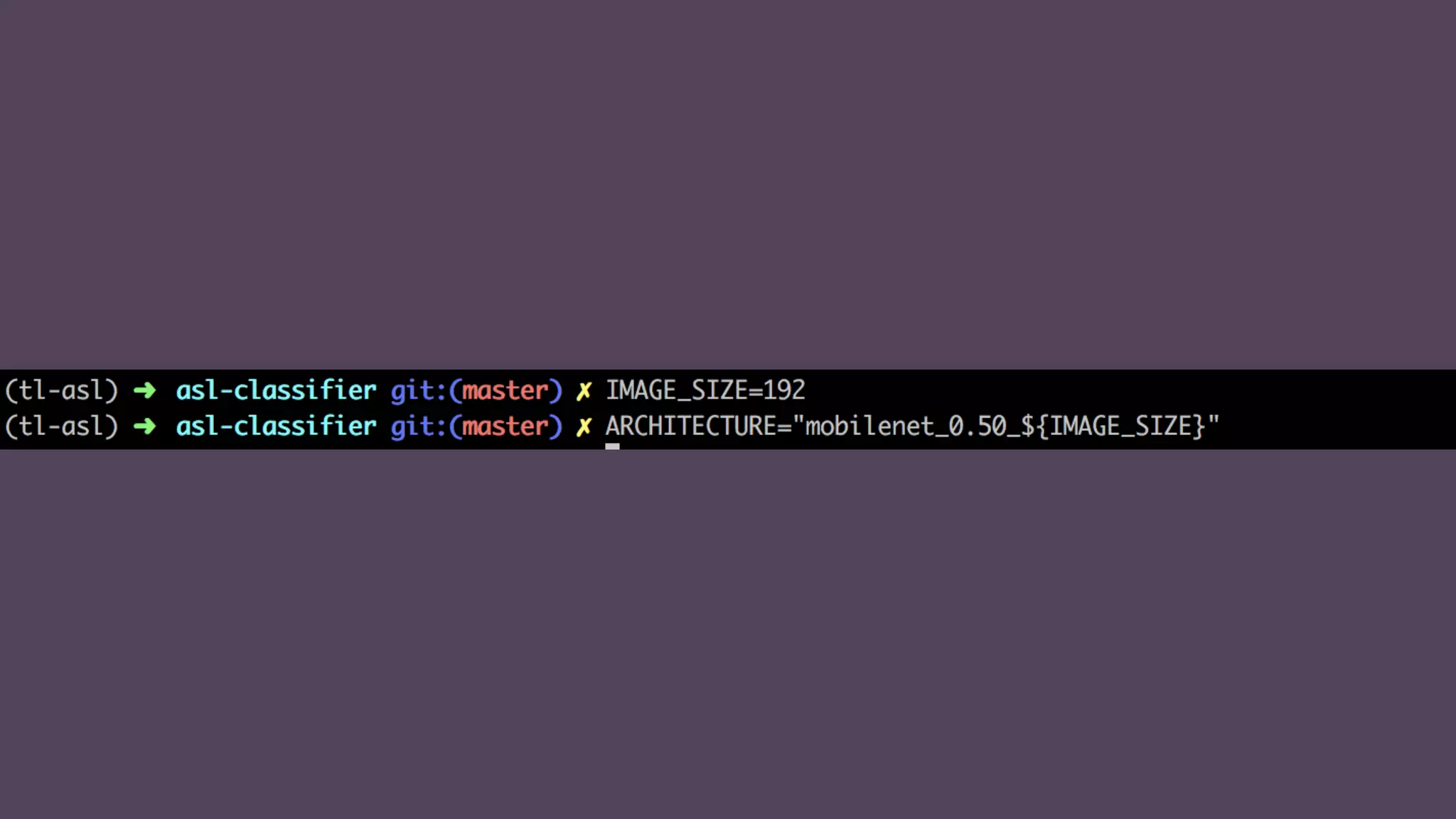

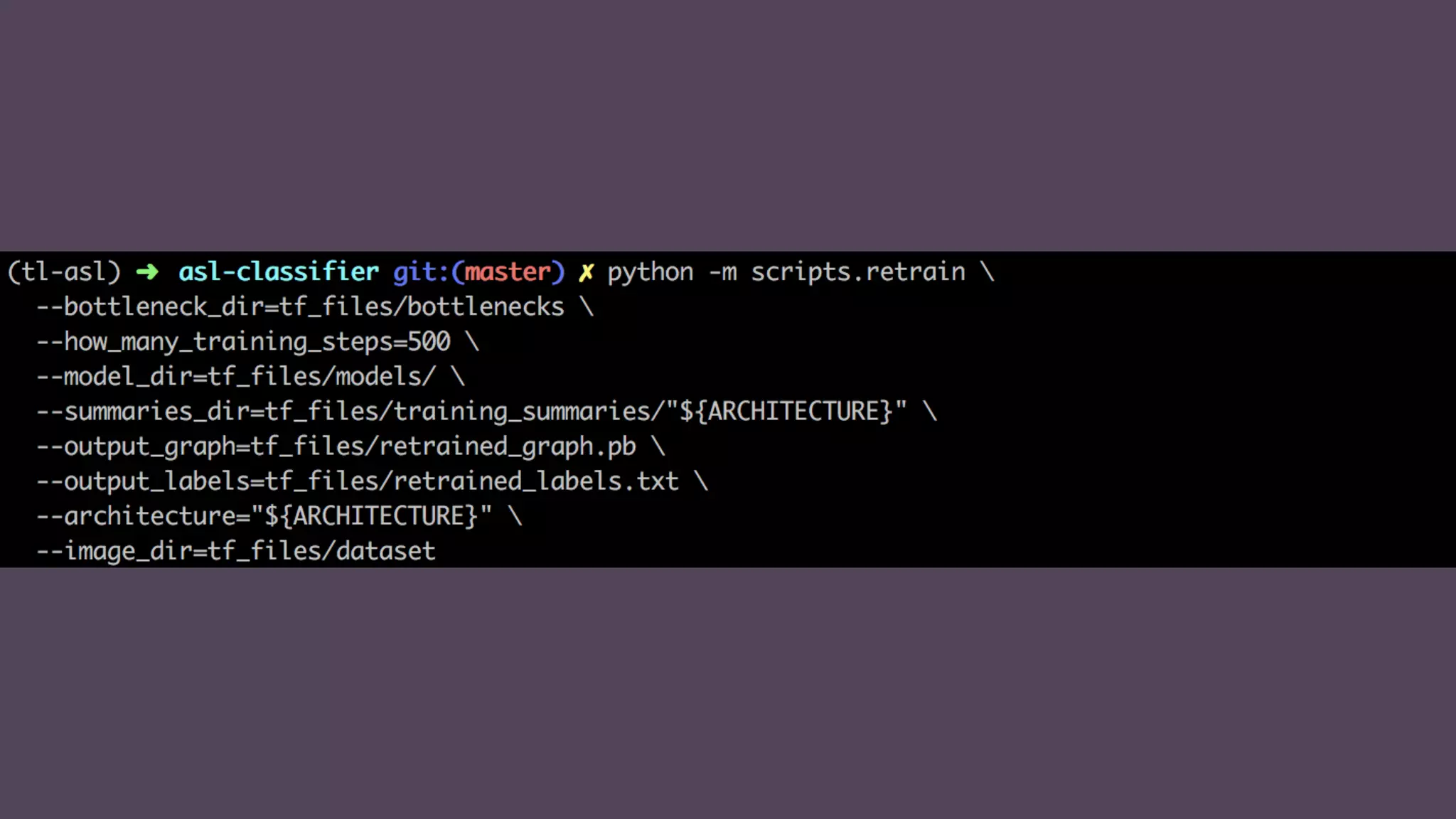

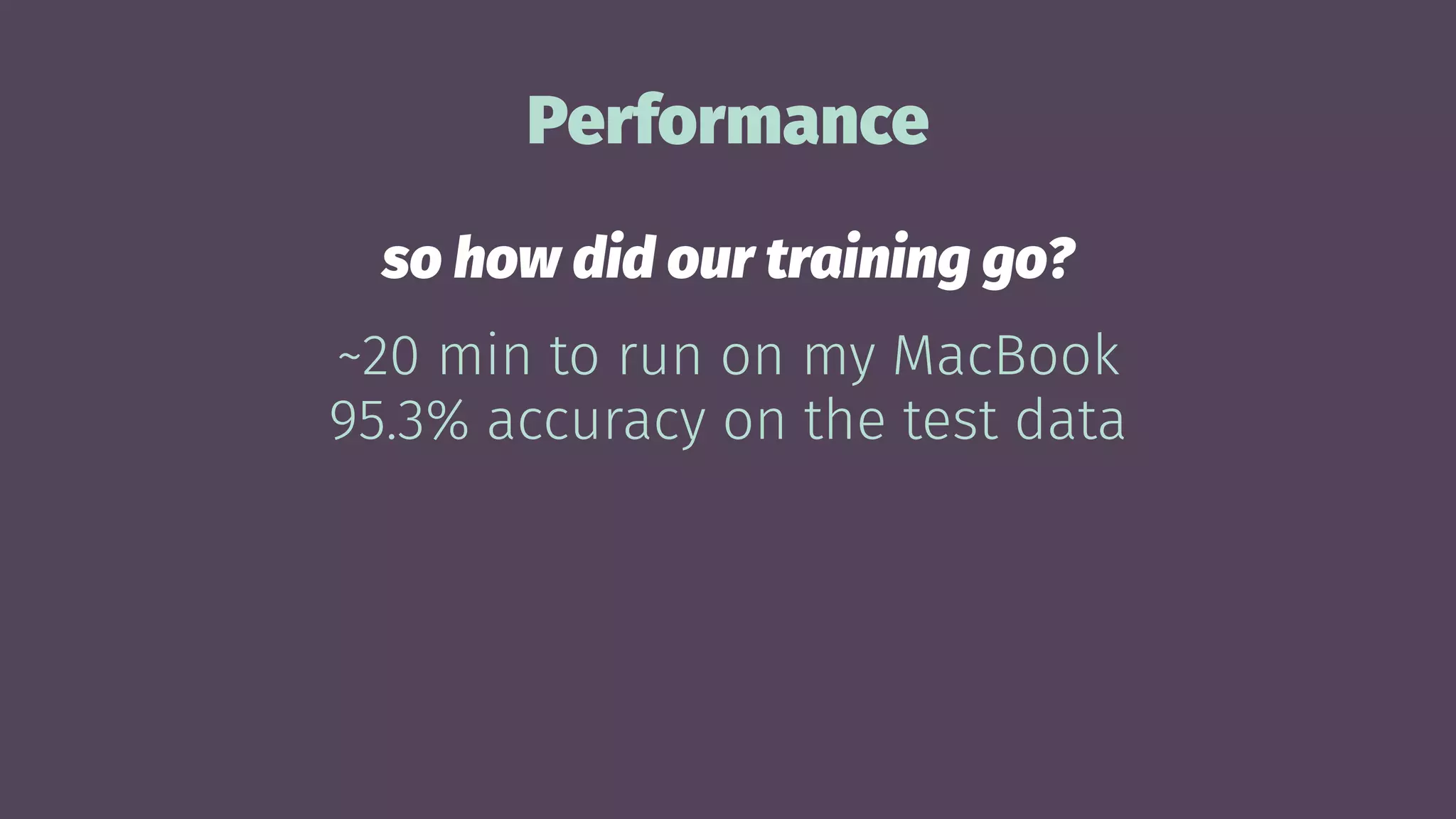

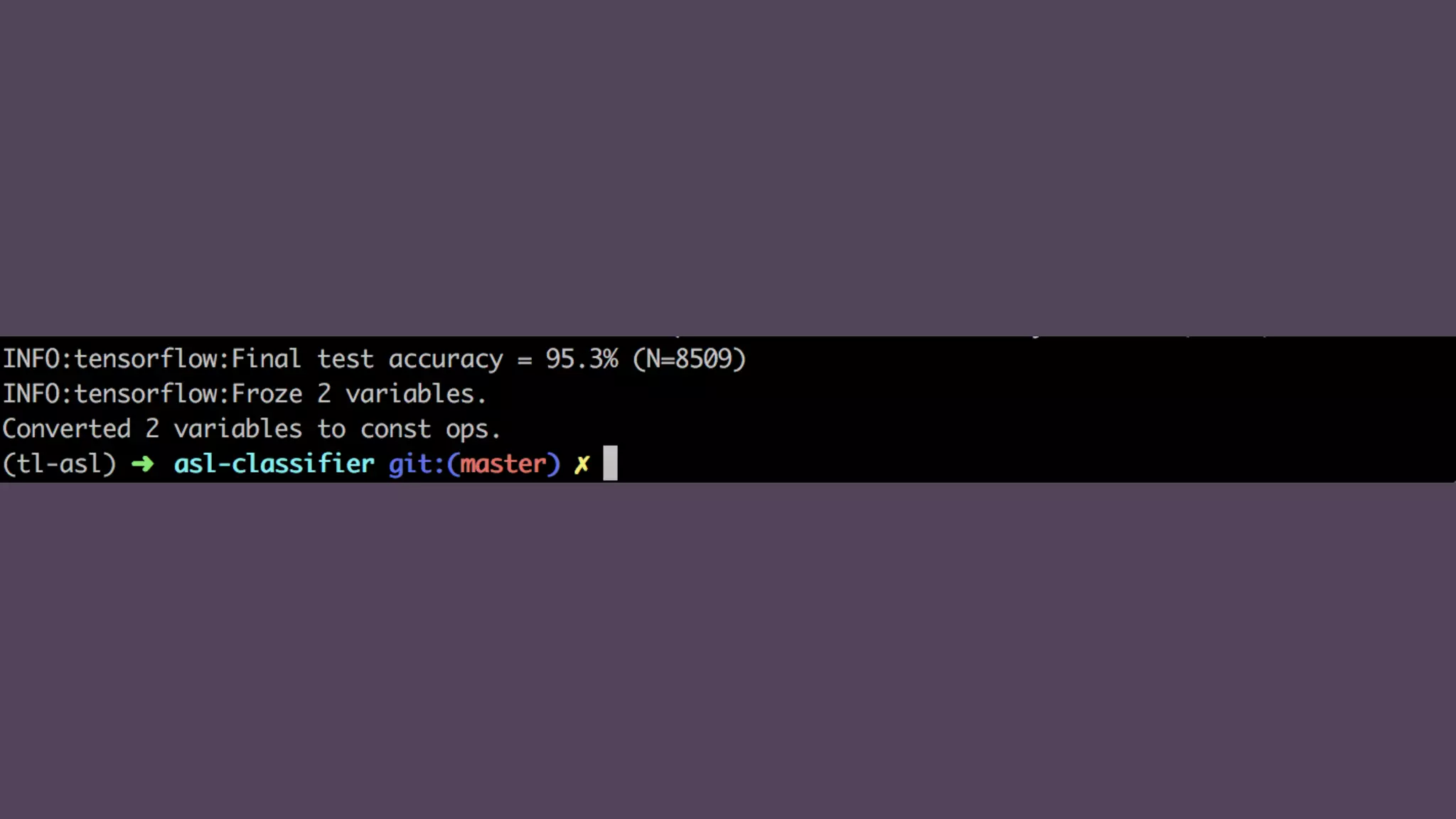

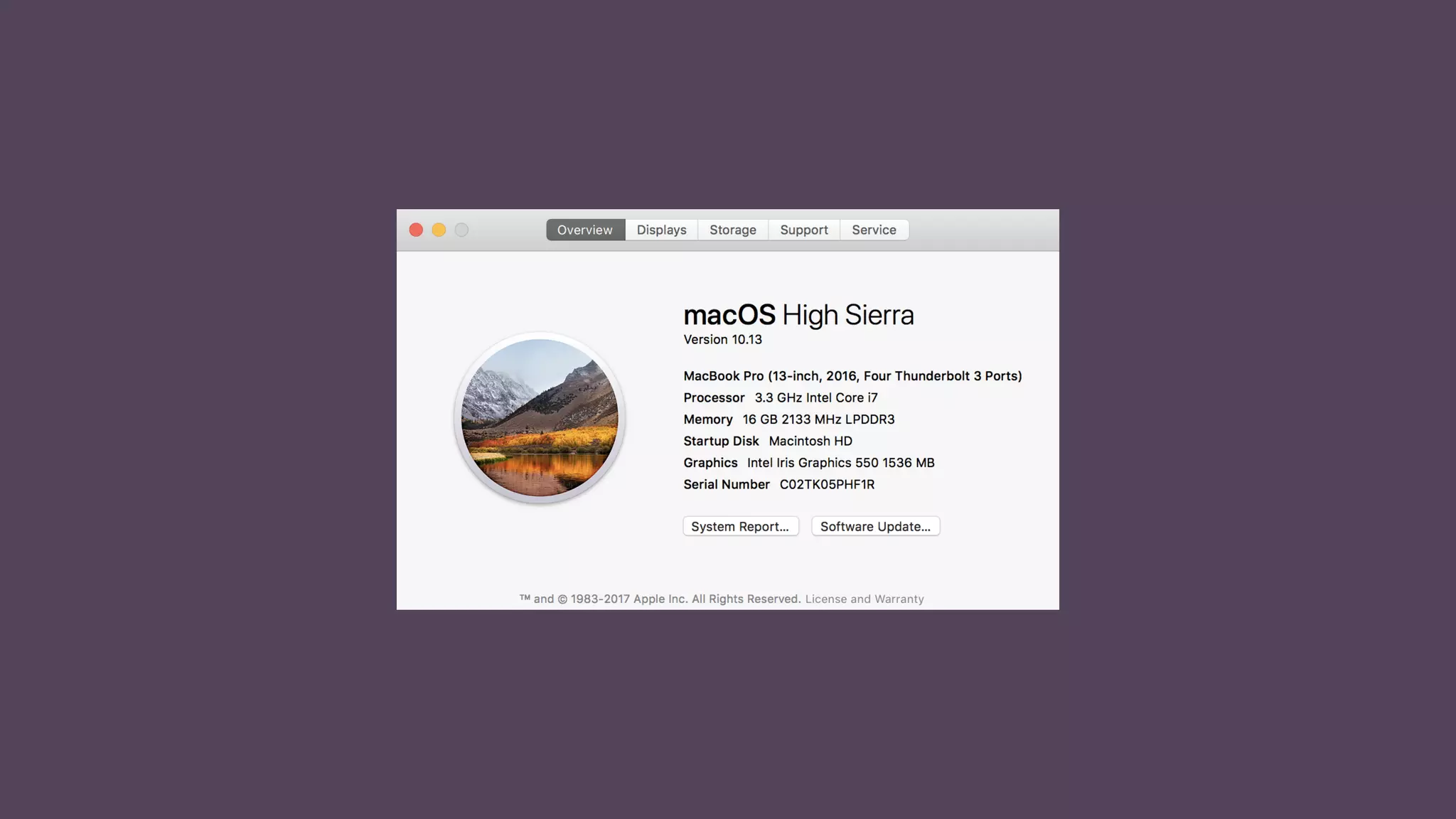

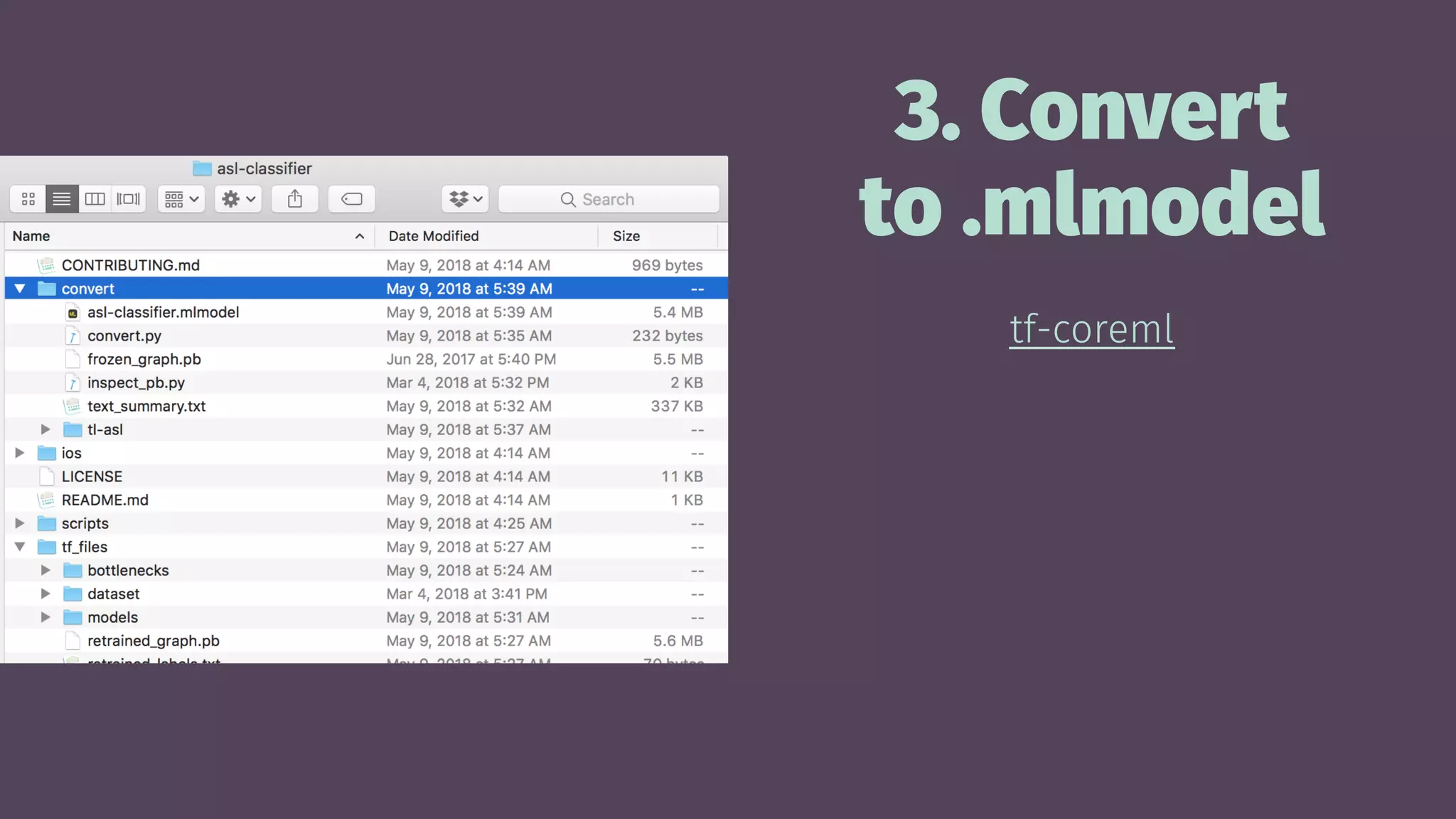

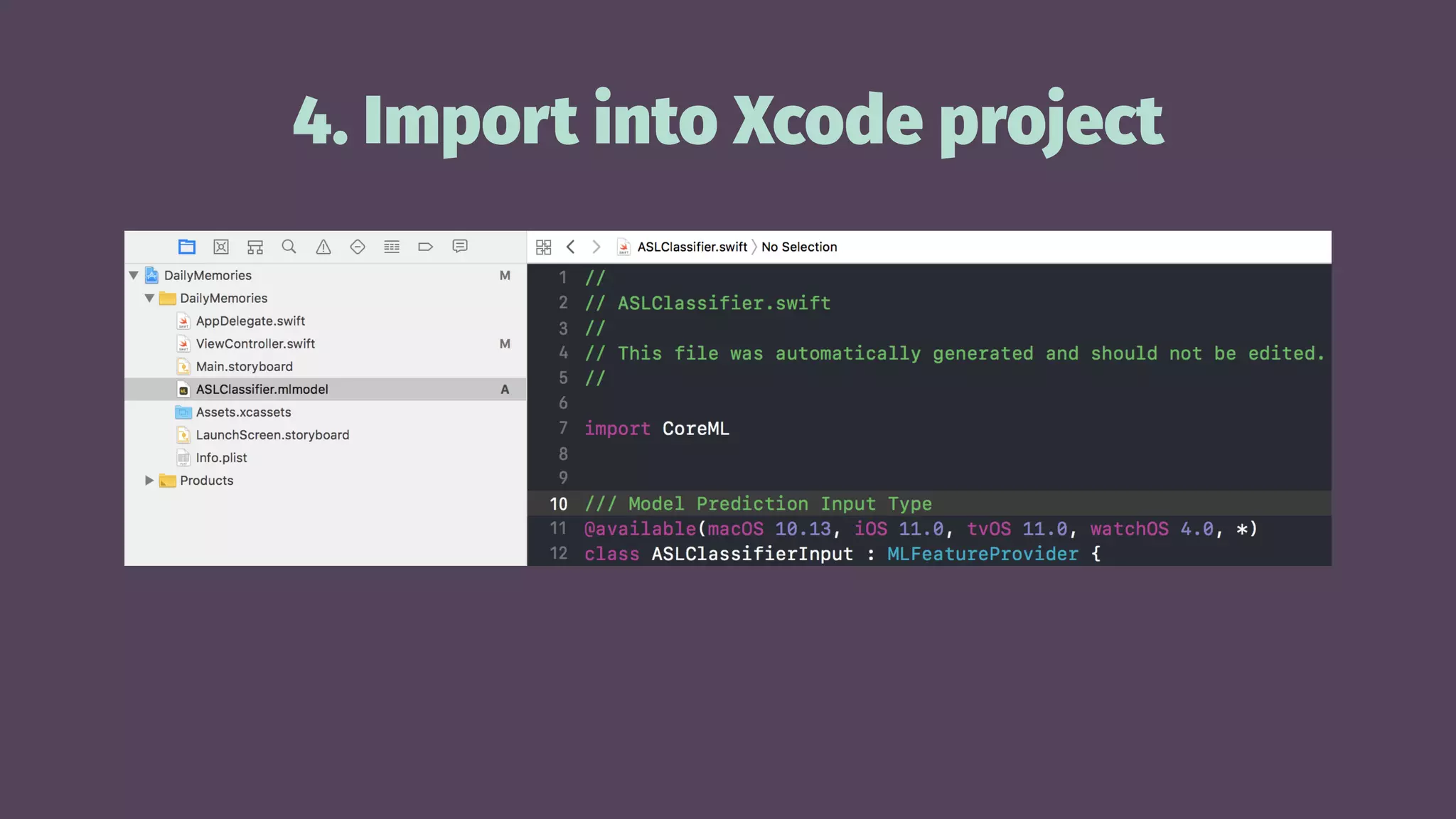

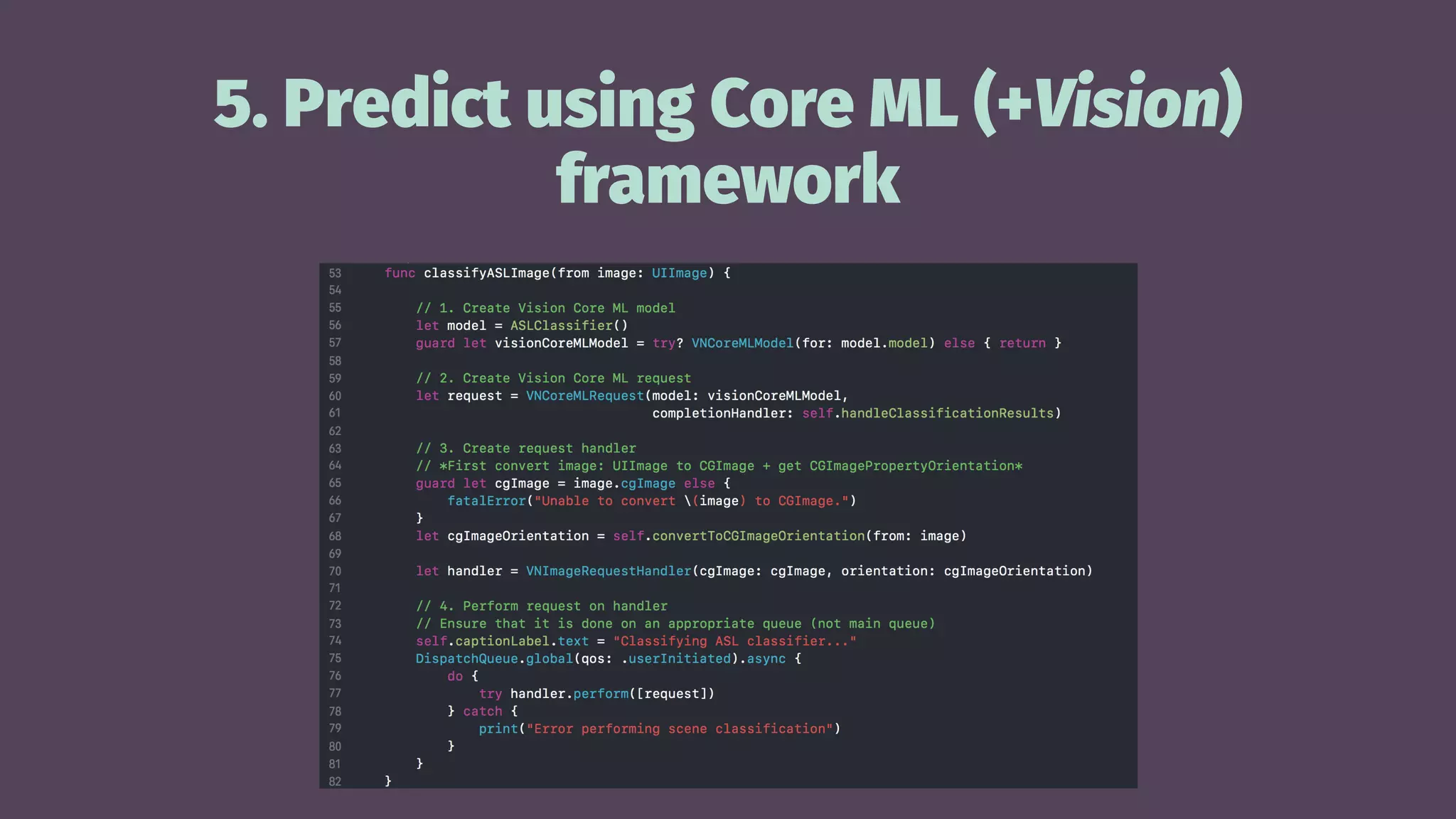

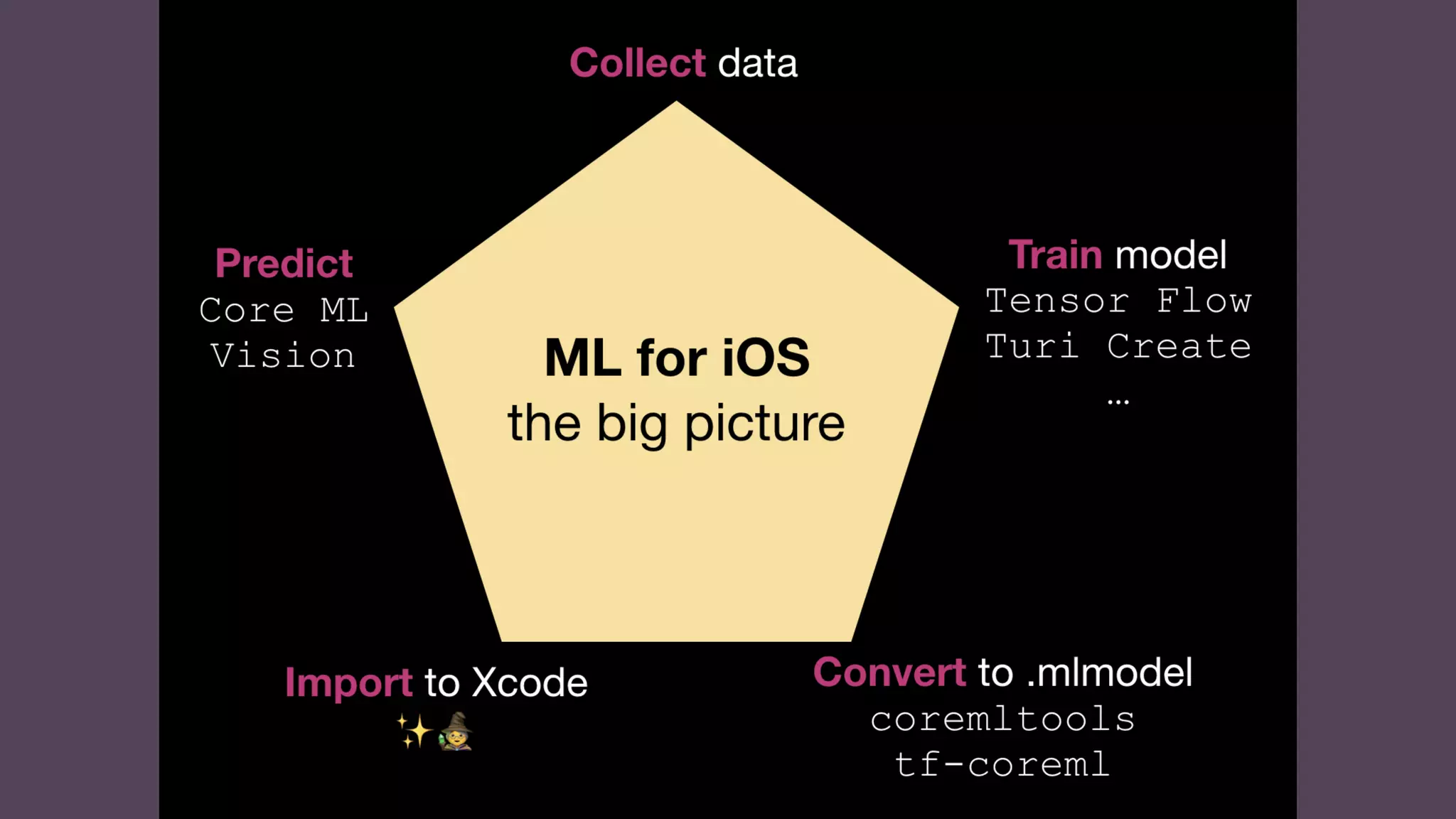

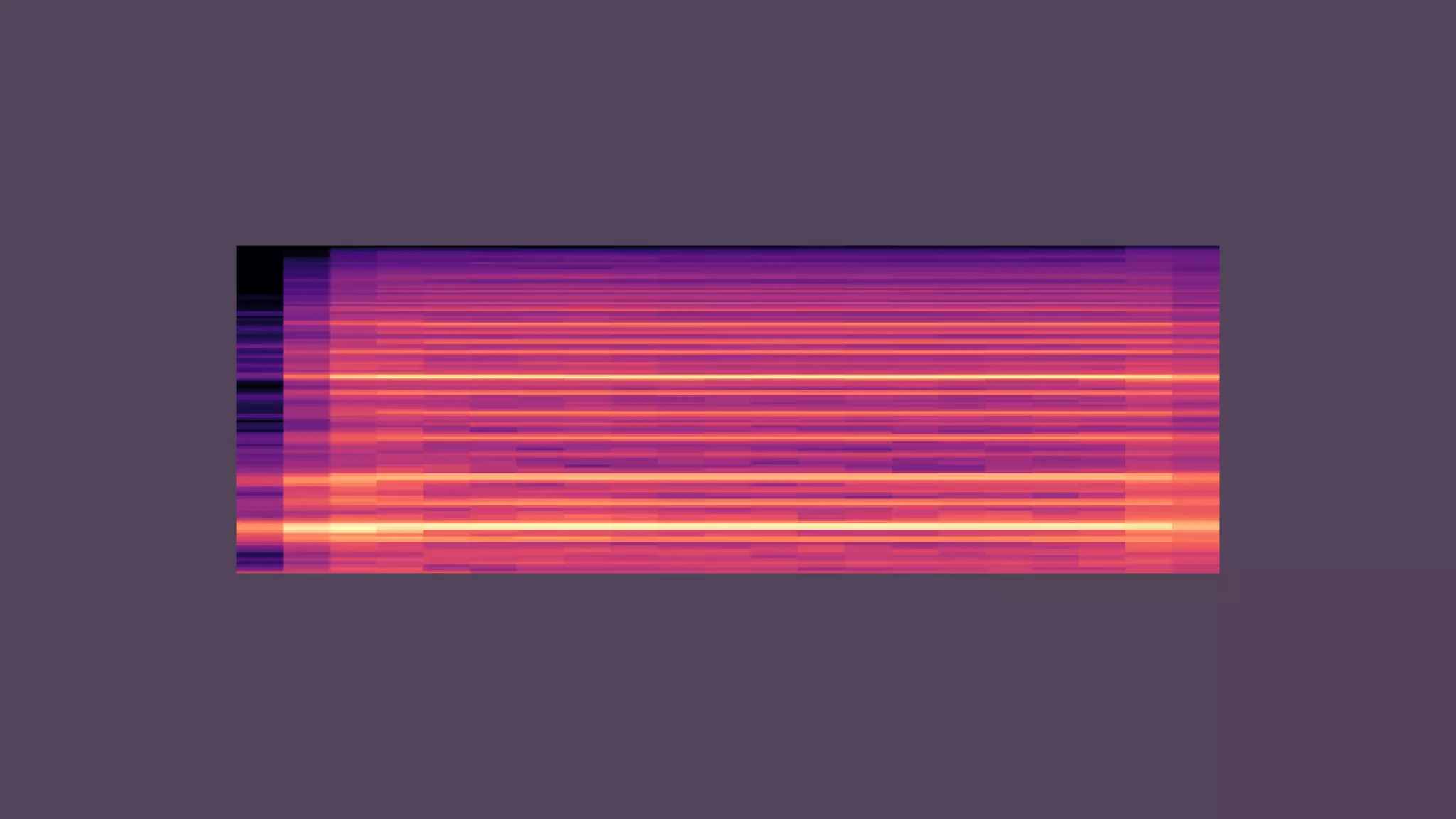

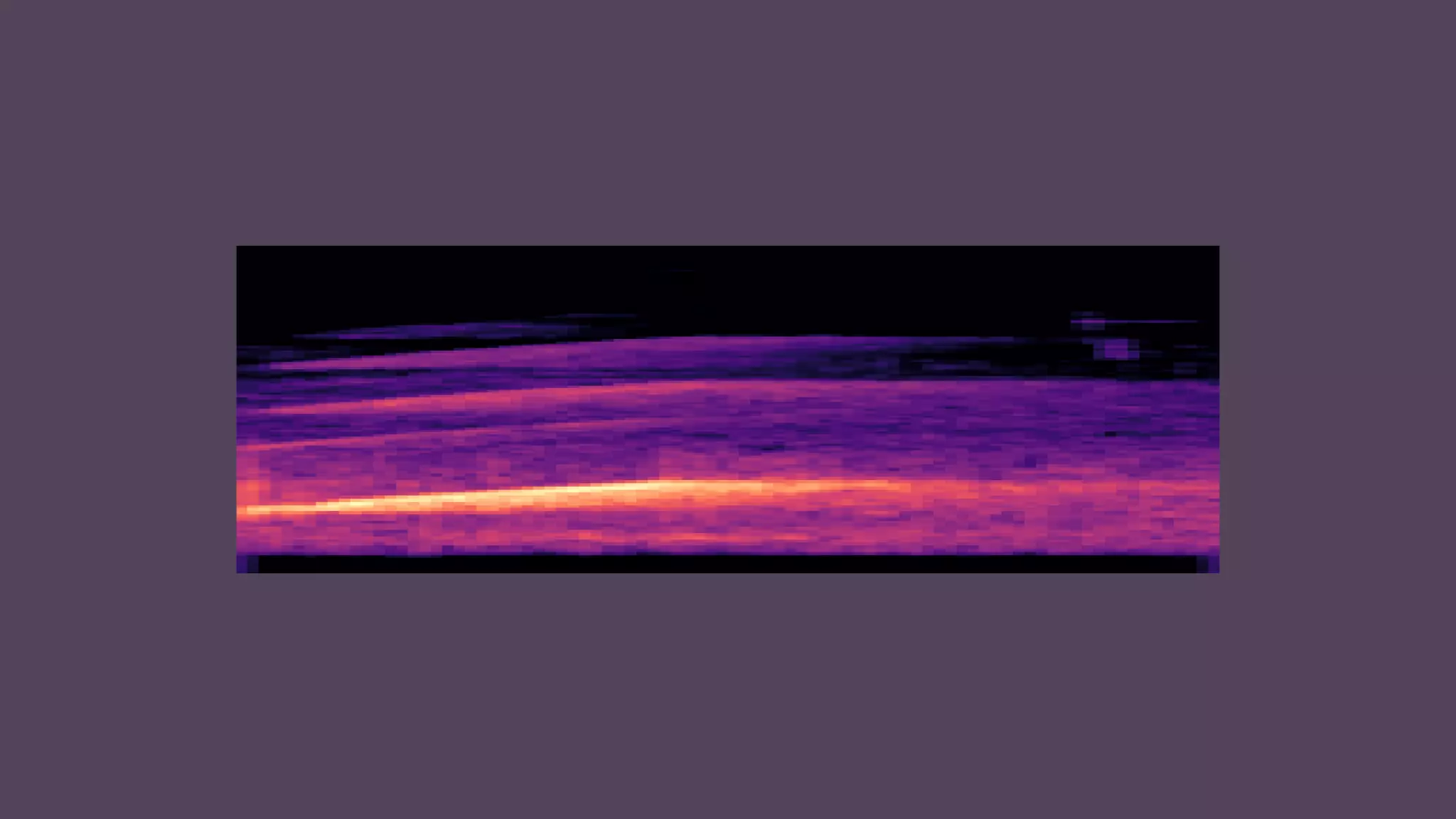

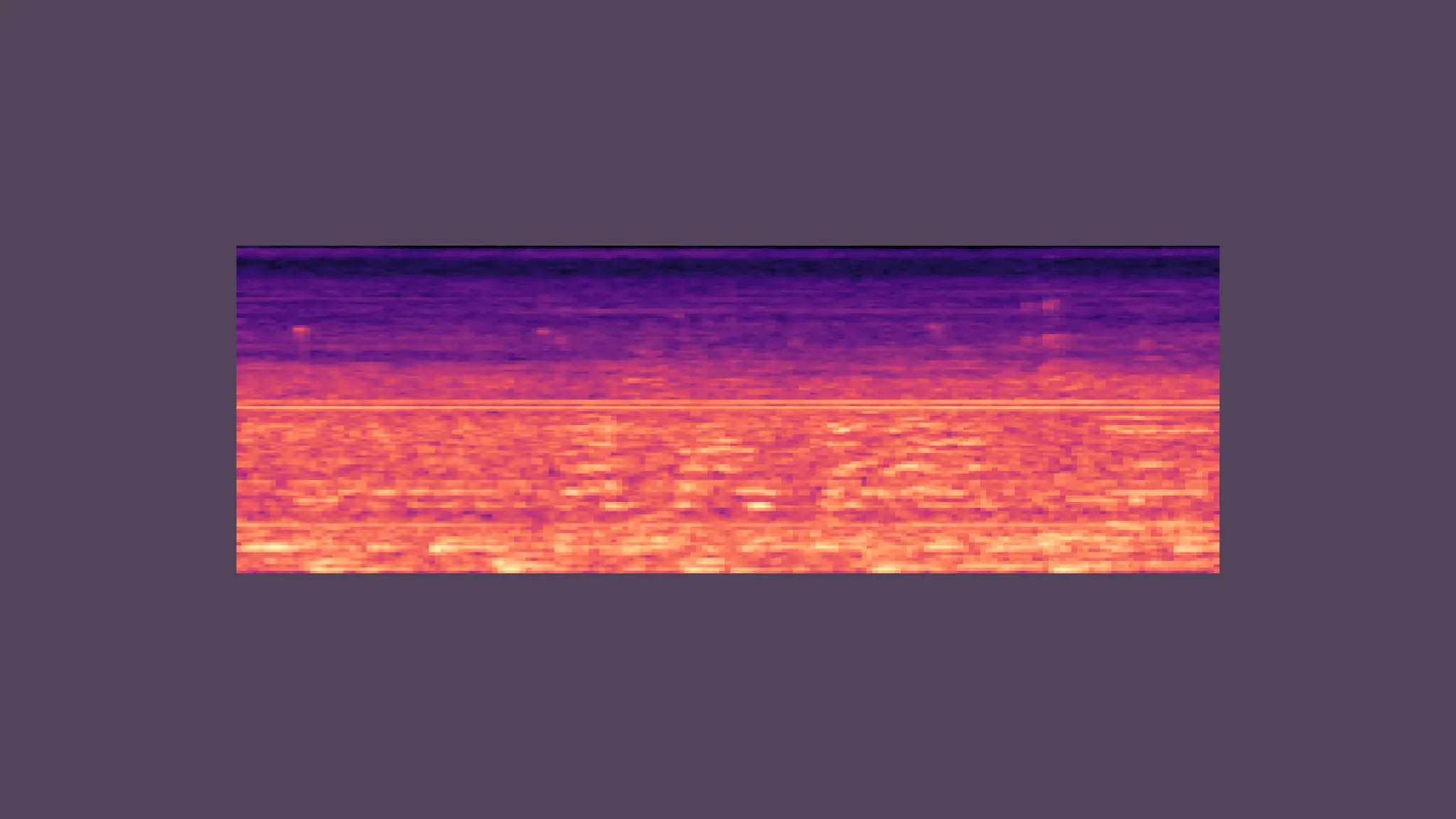

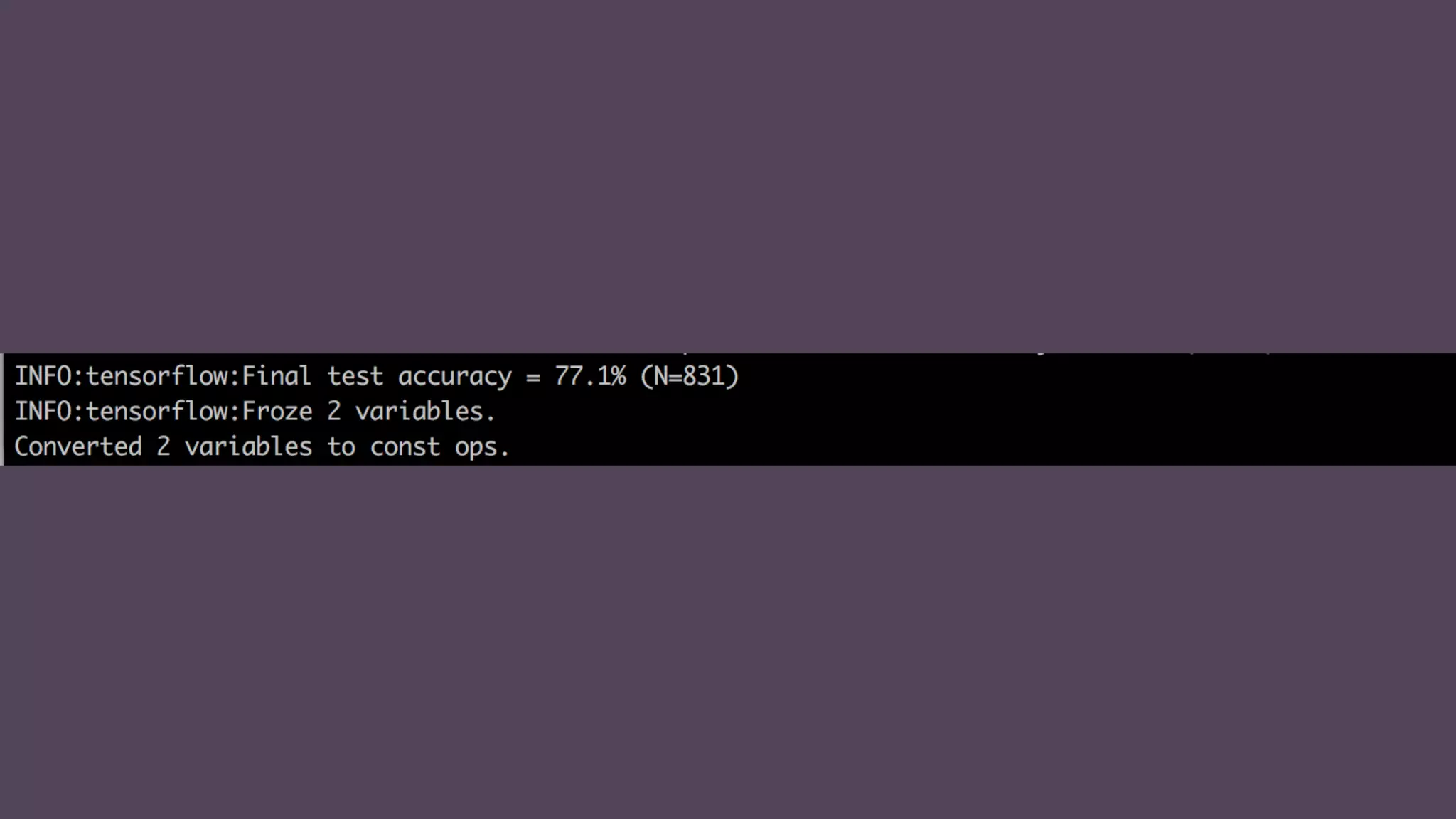

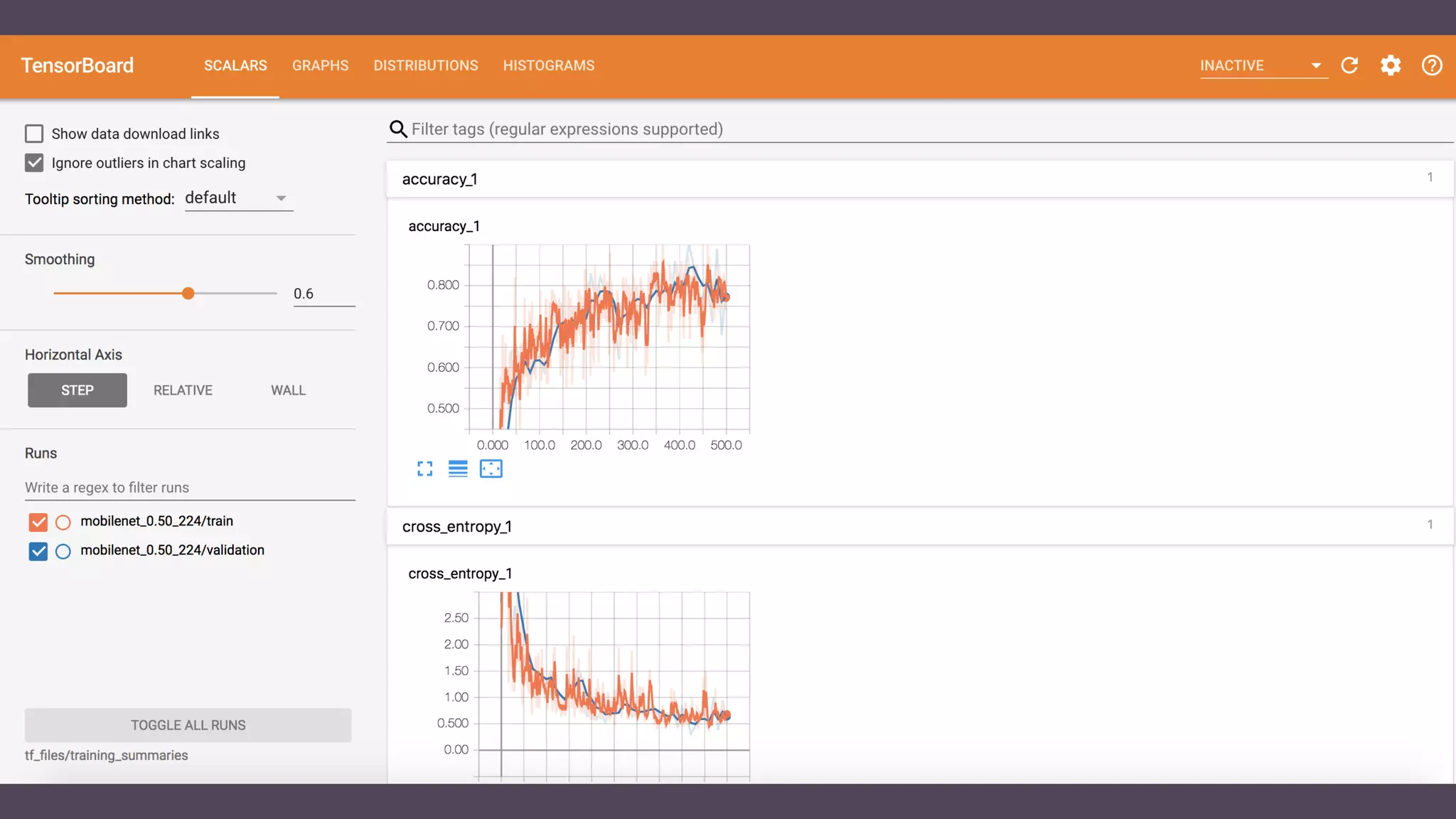

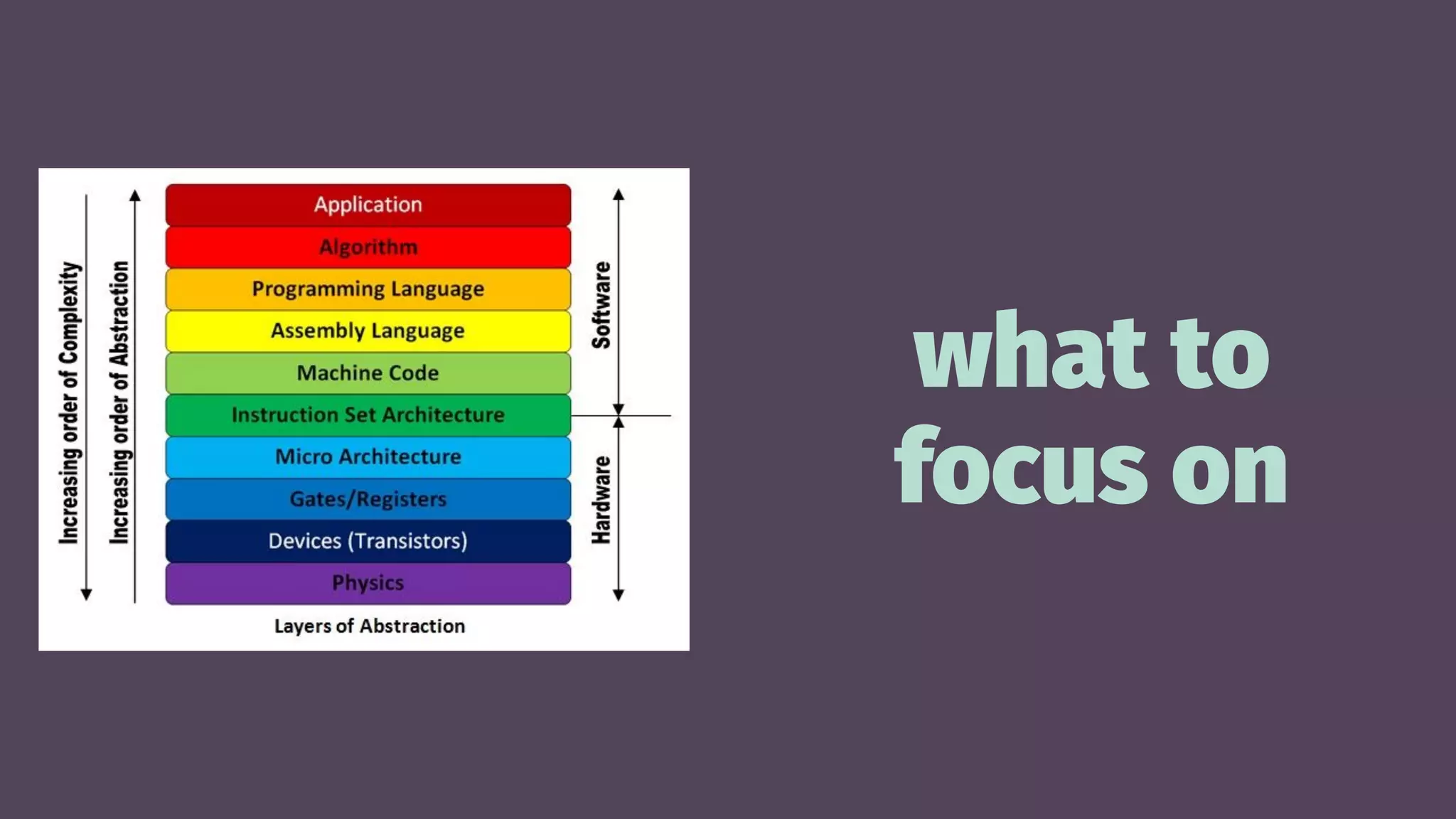

The document discusses the practical application of machine learning (ML) in iOS development, covering the available tools, the end-to-end process, and use cases such as image and audio classification. It emphasizes choosing the simplest solution that works and encourages developers to use frameworks like Core ML and techniques like transfer learning. It also provides links to further learning resources and examples, along with practical insights into training models and deploying them on mobile devices.