Terraform day02

Recommended

Developing Terraform Modules at Scale - HashiTalks 2021

Terraform at Scale - All Day DevOps 2017

HashiCorp’s infrastructure management tool, Terraform, is no doubt very flexible and powerful. The question is, how do we write Terraform code and construct our infrastructure in a reproducible fashion that makes sense? How can we keep code DRY, segment state, and reduce the risk of making changes to our service/stack/infrastructure?

This talk describes a design pattern to help answer these questions. It is divided into two sections: the first describes and defines the design pattern with a Deployment Example, and the second uses a multi-repository GitHub organization to create a Real World Example of the design pattern.

Intro to Terraform

Introduction to Terraform, presented at the Perth Python & Django meetup on March 1, 2018. The demo code repository is at https://github.com/jaymickey/terraform-demo

Terraform introduction

This beginner Terraform workshop teaches you how to safely create and provision Infrastructure as Code (IaC) using HashiCorp Terraform in an AWS environment. In this class you will learn how to set up and install Terraform, and you will get a walkthrough of Terraform fundamentals. You will be led through deploying a single server, deploying a cluster, and setting up a load balancer. You will also learn how to author Terraform modules, work with Route 53, and manage DNS.

Requirements: an existing AWS account, Terraform v0.9.3 installed, and Git installed to download the workshop material.

You can find more information on how to install Terraform here: https://www.terraform.io/intro/getting-started/install.html. You can sign up for an AWS account here: https://aws.amazon.com/account/

https://github.com/jasonvance/terraform-introduction

Terraform in deployment pipeline

My talk at FullStackFest, 4.9.2017. Become more familiar with managing infrastructure using Terraform, Packer, and a deployment pipeline. Code repository: https://github.com/antonbabenko/terraform-deployment-pipeline-talk

Using Terraform.io (Human Talks Montpellier, Epitech, 2014/09/09)

How to build infrastructure and handle change with HashiCorp's Terraform.

The talk was oriented toward distributed teams.

"Continuously delivering infrastructure using Terraform and Packer" training ...

Slides from "Continuously delivering infrastructure using Terraform and Packer" training by Anton Babenko, May 2017

Introductory Overview to Managing AWS with Terraform

From the AWS NZ Auckland Community Meetup - May 4th 2017

https://www.meetup.com/AWS_NZ/events/236169428/

We take a first look at HashiCorp's Terraform and how to use it for Infrastructure as Code with Amazon Web Services.

We'll also share how it fits into our current CI/CD workflow at the Invenco cloud services team.

Sample code available at https://github.com/beanaroo/aws_nz_meetup-terraform_intro

Terraform at Scale

A presentation from HashiConf 2016.

Terraform is a wonderful tool for describing infrastructure as code. It’s fast and flexible, automatically resolves dependencies, and is rapidly improving.

But in some ways, Terraform is flexible like AWS is flexible. You can do pretty much anything, but it’s also easy to shoot yourself in the foot if you aren’t careful.

In the past year, we’ve started managing thousands of resources with Terraform, allowing much more of the dev team to change the underlying infrastructure. During that time, we’ve learned a lot about how to set up our Terraform modules so that they are easy to manage and reuse.

This talk will cover how we manage tfstate, separate environments, define specific modules, and use Terraform to boot new services in production. I’ll also discuss the challenges we’re currently facing and how we plan to tackle them going forward.
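Managing tfstate and separating environments, as described above, is commonly done with a remote state backend. The fragment below is a minimal sketch, not the speaker's actual setup: it assumes an S3 backend (backend blocks were introduced in Terraform 0.9), a hypothetical pre-existing bucket named `example-terraform-state`, and an assumed region, and it gives each environment its own state key.

```hcl
# Remote state for the staging environment (all names are hypothetical).
terraform {
  backend "s3" {
    bucket = "example-terraform-state"       # hypothetical bucket that must already exist
    key    = "staging/app/terraform.tfstate" # one key per environment keeps state files separate
    region = "us-east-1"                     # assumed region
  }
}
```

A production configuration could point at the same bucket with a different `key`, such as `production/app/terraform.tfstate`, so each environment has its own state file. Backend blocks cannot reference variables, which is why every value here is a literal; changing them requires re-running `terraform init`.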

Infrastructure as Code: Introduction to Terraform

Presented at the Greater Philadelphia AWS User Group Meetup

07.27.2016

An intro to Docker, Terraform, and Amazon ECS

This talk is a very quick intro to Docker, Terraform, and Amazon's EC2 Container Service (ECS). In just 15 minutes, you'll see how to take two apps (a Rails frontend and a Sinatra backend), package them as Docker containers, run them using Amazon ECS, and define all of the infrastructure as code using Terraform.

Terraform 0.9 + good practices

What's new in Terraform 0.9 and what are some of the good practices in writing your Terraform configs.

A Hands-on Introduction on Terraform Best Concepts and Best Practices

At our OC DevOps Meetup, we invited Rami Al-Ghami, a Sr. Software Engineer at Workday, to deliver a hands-on presentation on Terraform best concepts and best practices.

The software lifecycle does not end when the developer packages their code and makes it ready for deployment. The delivery of this code is an integral part of shipping a product. Infrastructure orchestration and resource configuration should follow a similar lifecycle (and process) to that of the software delivered on it. In this talk, Rami will discuss how to use Terraform to automate your infrastructure and software delivery.

Refactoring Terraform

Slides from my talk "Untangling Terraform through Refactoring," given at HashiConf 2016.

Introduction To Terraform

Basics of Terraform and how to create a sample S3 bucket, EC2 instance, and CloudFront distribution.

Infrastructure as Code with Terraform

Introduction to Infrastructure as Code with Terraform and Amazon Web Services

AWS DevOps - Terraform, Docker, HashiCorp Vault

From basic topics to advanced uses of Terraform, including ECS and a Vault setup with Consul.

From a three-day training.

Version 1.0

Terraform - Taming Modern Clouds

Slides from Config Management Camp, looking at how you can take a collaborative GitFlow approach to Terraform using Remote State, Modules, and Dynamically Generated Credentials using Vault.

Terraform in action

Slides of my presentation to the AWS User Group Meetup in Montpellier.

Describes our use of Terraform at Teads (http://www.teads.tv)

Administering and Monitoring SolrCloud Clusters

37 slides about taking care of your SolrCloud cluster: Collections API, Core API, dynamic schema modification, segment merging, hard vs. soft commit, caches, monitoring, performance, JMX; it's all in here.

Comprehensive Terraform Training

A comprehensive walkthrough of how to manage infrastructure-as-code using Terraform. This presentation includes an introduction to Terraform, a discussion of how to manage Terraform state, how to use Terraform modules, an overview of best practices (e.g. isolation, versioning, loops, if-statements), and a list of gotchas to look out for.

For a written and more in-depth version of this presentation, check out the "Comprehensive Guide to Terraform" blog post series: https://blog.gruntwork.io/a-comprehensive-guide-to-terraform-b3d32832baca
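Terraform has no dedicated loop or if keywords; the "loops" and "if-statements" mentioned above are usually expressed with the `count` and `for_each` meta-arguments plus a conditional expression. The sketch below is illustrative only: it assumes Terraform 0.12.6 or later (for `for_each` on resources and the `bool` type), an AWS provider configured elsewhere, and hypothetical variable and user names.

```hcl
variable "user_names" {
  description = "IAM users to create (hypothetical example values)"
  type        = list(string)
  default     = ["alice", "bob"]
}

variable "create_admin" {
  description = "Whether to create the optional admin user (hypothetical)"
  type        = bool
  default     = false
}

# Loop: one aws_iam_user per name in var.user_names.
resource "aws_iam_user" "users" {
  for_each = toset(var.user_names)
  name     = each.value
}

# If-statement: count is 1 when create_admin is true, otherwise 0 (resource not created).
resource "aws_iam_user" "admin" {
  count = var.create_admin ? 1 : 0
  name  = "admin"
}
```

With the defaults, `terraform plan` would propose `aws_iam_user.users["alice"]` and `aws_iam_user.users["bob"]` and no admin user. `for_each` is often preferred over `count` for collections because removing one name does not shift the index-based addresses of the others.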

Terraform Modules and Continuous Deployment

A demonstration of how they use Terraform modules, Packer, and Jenkins to build a continuous delivery pipeline to AWS.

Infrastructure as Code - Terraform - Devfest 2018

How to practice Infrastructure as Code with Terraform, and how to scale your Terraform code

Terraform Abstractions for Safety and Power

https://www.youtube.com/watch?v=IeweKUdHJc4

My presentation from HashiConf 2017, discussing our use of Terraform and our techniques to help make it safe and accessible.

Everything as Code with Terraform

Terraform is an Infrastructure as Code tool for declaratively building and maintaining complex infrastructures on one or more cloud providers/services. But Terraform also supports over 80 non-infrastructure providers! In this demo-driven talk, we will dive into the internals of Terraform and see how it works. We will show examples of how Terraform can be used for non-infrastructure use cases. We’ll also look at how you can extend Terraform to manage anything with an API.

Terraform infraestructura como código

Presentation given at the first AWS Meetup of the Valencia user group.

Infrastructure as code using Terraform. It presents the main features of this technology, which lets us be more agile and faster when deploying our platforms on AWS.

Similar to Terraform day02

Building and Deploying Application to Apache Mesos

Building and Deploying Application to Apache Mesos using Marathon, Apache Aurora and Custom Frameworks

Understanding OpenStack Deployments - PuppetConf 2014

Understanding OpenStack Deployments - Chris Hoge, OpenStack Foundation

Introduction To Apache Mesos

Apache Mesos abstracts CPU, memory, storage, and other compute resources away from machines (physical or virtual), enabling fault-tolerant and elastic distributed systems to easily be built and run effectively.

Burn down the silos! Helping dev and ops gel on high availability websites

HA websites are where the rubber meets the road - at 200km/h. Traditional separation of dev and ops just doesn't cut it.

Everything is related to everything. Code relies on performant and resilient infrastructure, but highly performant infrastructure will only get a poorly written application so far. Worse still, root cause analysis in HA sites will more often than not identify problems that don't clearly belong to either devs or ops.

The two options are collaborate or die.

This talk will introduce 3 core principles for improving collaboration between operations and development teams: consistency, repeatability, and visibility. These principles will be investigated with real world case studies and associated technologies audience members can start using now. In particular, there will be a focus on:

- fast provisioning of test environments with configuration management

- reliable and repeatable automated deployments

- application and infrastructure visibility with statistics collection, logging, and visualisation

Programando sua infraestrutura com o AWS CloudFormation

Programming your infrastructure with AWS CloudFormation, by Michel Pereir, Solutions Architect at AWS.

Apache Kafka, HDFS, Accumulo and more on Mesos

Slides from my talk about running persistent services such as Apache Kafka, Apache HDFS, and Apache Accumulo on Apache Mesos with Marathon.

Application Stack - TIAD Camp Microsoft Cloud Readiness

TIAD Camp Microsoft Cloud Readiness, June 20, 2017

Aprovisionamiento multi-proveedor con Terraform - Plain Concepts DevOps day

Infrastructure as code (IaC) is one of the DevOps-related practices gaining the most traction in software development, and Terraform is one of the most recommended tools for it.

It is usually associated mainly with creating infrastructure on the big cloud services (AWS, Azure, Google Cloud, …), but it also applies to other areas of IT, such as creating users in third-party or in-house services (GitHub, databases, …), configuring domains (Dyn, GoDaddy, …), or configuring alerts (Grafana, OpsGenie), among others.

This session explains how it works at a basic level, and we will watch live deployments to several of these platforms.

Programming the Physical World with Device Shadows and Rules Engine

Learn more about how to use AWS IoT's Device Shadows and Rules Engine to build powerful IoT applications. With Device Shadows, you can build applications that interact with your devices by providing always available REST APIs. By taking advantage of AWS IoT's topic-based rules and built-in integrations, you can build IoT applications that gather, process, analyze, and act on data generated by connected devices at global scale, without having to manage any infrastructure.

DevOps Enabling Your Team

Speaker: Jacob Aae Mikkelsen

Once you have successfully developed your application in Grails, Ratpack, or your other favorite framework, you would like to see it deployed as quickly and painlessly as possible, right?

This talk will cover some of the supporting cast members of a successful modern infrastructure that developers can understand and use efficiently, along with good DevOps practices.

Key elements are:

Docker

Infrastructure as Code

Container Orchestration

The demo gods will hopefully be on our side, as this talk includes quite a few live demos!

Deployment and Management on AWS: A Deep Dive on Options and Tools

AWS Elastic Beanstalk

AWS OpsWorks

AWS CloudFormation

Amazon EC2

Integrating icinga2 and the HashiCorp suite

We all love infrastructure as code; we automate everything ™. But how many of us can really say we could destroy and recreate our core infrastructure without human intervention? Can you be sure there isn't a DNS problem, or that all the things ™ are done in the right order? This talk walks the audience through a greenfield exercise that sets up service discovery using Consul and infrastructure as code using Terraform, with images built with Packer and configured using Puppet.

Disaster Recovery Site on AWS - Minimal Cost Maximum Efficiency (STG305) | AW...

Implementation of a disaster recovery (DR) site is crucial for the business continuity of any enterprise. Due to the fundamental nature of features like elasticity, scalability, and geographic distribution, DR implementation on AWS can be done at 10-50% of the conventional cost. In this session, we do a deep dive into proven DR architectures on AWS and the best practices, tools and techniques to get the most out of them.

More from Gourav Varma

Recently uploaded

Architectural Portfolio Sean Lockwood

This portfolio contains selected projects I completed during my undergraduate studies. 2018 - 2023

一比一原版(SFU毕业证)西蒙菲莎大学毕业证成绩单如何办理

SFU毕业证原版定制【微信:176555708】【西蒙菲莎大学毕业证成绩单-学位证】【微信:176555708】(留信学历认证永久存档查询)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

◆◆◆◆◆ — — — — — — — — 【留学教育】留学归国服务中心 — — — — — -◆◆◆◆◆

【主营项目】

一.毕业证【微信:176555708】成绩单、使馆认证、教育部认证、雅思托福成绩单、学生卡等!

二.真实使馆公证(即留学回国人员证明,不成功不收费)

三.真实教育部学历学位认证(教育部存档!教育部留服网站永久可查)

四.办理各国各大学文凭(一对一专业服务,可全程监控跟踪进度)

如果您处于以下几种情况:

◇在校期间,因各种原因未能顺利毕业……拿不到官方毕业证【微信:176555708】

◇面对父母的压力,希望尽快拿到;

◇不清楚认证流程以及材料该如何准备;

◇回国时间很长,忘记办理;

◇回国马上就要找工作,办给用人单位看;

◇企事业单位必须要求办理的

◇需要报考公务员、购买免税车、落转户口

◇申请留学生创业基金

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分→ 【关于价格问题(保证一手价格)

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

选择实体注册公司办理,更放心,更安全!我们的承诺:可来公司面谈,可签订合同,会陪同客户一起到教育部认证窗口递交认证材料,客户在教育部官方认证查询网站查询到认证通过结果后付款,不成功不收费!

学历顾问:微信:176555708

AKS UNIVERSITY Satna Final Year Project By OM Hardaha.pdf

AKS UNIVERSITY Satna Final Year Project By OM Hardaha.

Thank me later.

samsarthak31@gmail.com

Governing Equations for Fundamental Aerodynamics_Anderson2010.pdf

Governing Equations for Fundamental Aerodynamics

Standard Reomte Control Interface - Neometrix

About

Indigenized remote control interface card suitable for MAFI system CCR equipment. Compatible for IDM8000 CCR. Backplane mounted serial and TCP/Ethernet communication module for CCR remote access. IDM 8000 CCR remote control on serial and TCP protocol.

• Remote control: Parallel or serial interface.

• Compatible with MAFI CCR system.

• Compatible with IDM8000 CCR.

• Compatible with Backplane mount serial communication.

• Compatible with commercial and Defence aviation CCR system.

• Remote control system for accessing CCR and allied system over serial or TCP.

• Indigenized local Support/presence in India.

• Easy in configuration using DIP switches.

Technical Specifications

Indigenized remote control interface card suitable for MAFI system CCR equipment. Compatible for IDM8000 CCR. Backplane mounted serial and TCP/Ethernet communication module for CCR remote access. IDM 8000 CCR remote control on serial and TCP protocol.

Key Features

Indigenized remote control interface card suitable for MAFI system CCR equipment. Compatible for IDM8000 CCR. Backplane mounted serial and TCP/Ethernet communication module for CCR remote access. IDM 8000 CCR remote control on serial and TCP protocol.

• Remote control: Parallel or serial interface

• Compatible with MAFI CCR system

• Copatiable with IDM8000 CCR

• Compatible with Backplane mount serial communication.

• Compatible with commercial and Defence aviation CCR system.

• Remote control system for accessing CCR and allied system over serial or TCP.

• Indigenized local Support/presence in India.

Application

• Remote control: Parallel or serial interface.

• Compatible with MAFI CCR system.

• Compatible with IDM8000 CCR.

• Compatible with Backplane mount serial communication.

• Compatible with commercial and Defence aviation CCR system.

• Remote control system for accessing CCR and allied system over serial or TCP.

• Indigenized local Support/presence in India.

• Easy in configuration using DIP switches.

The Benefits and Techniques of Trenchless Pipe Repair.pdf

Explore the innovative world of trenchless pipe repair with our comprehensive guide, "The Benefits and Techniques of Trenchless Pipe Repair." This document delves into the modern methods of repairing underground pipes without the need for extensive excavation, highlighting the numerous advantages and the latest techniques used in the industry.

Learn about the cost savings, reduced environmental impact, and minimal disruption associated with trenchless technology. Discover detailed explanations of popular techniques such as pipe bursting, cured-in-place pipe (CIPP) lining, and directional drilling. Understand how these methods can be applied to various types of infrastructure, from residential plumbing to large-scale municipal systems.

Ideal for homeowners, contractors, engineers, and anyone interested in modern plumbing solutions, this guide provides valuable insights into why trenchless pipe repair is becoming the preferred choice for pipe rehabilitation. Stay informed about the latest advancements and best practices in the field.

Immunizing Image Classifiers Against Localized Adversary Attacks

This paper addresses the vulnerability of deep learning models, particularly convolutional neural networks

(CNN)s, to adversarial attacks and presents a proactive training technique designed to counter them. We

introduce a novel volumization algorithm, which transforms 2D images into 3D volumetric representations.

When combined with 3D convolution and deep curriculum learning optimization (CLO), itsignificantly improves

the immunity of models against localized universal attacks by up to 40%. We evaluate our proposed approach

using contemporary CNN architectures and the modified Canadian Institute for Advanced Research (CIFAR-10

and CIFAR-100) and ImageNet Large Scale Visual Recognition Challenge (ILSVRC12) datasets, showcasing

accuracy improvements over previous techniques. The results indicate that the combination of the volumetric

input and curriculum learning holds significant promise for mitigating adversarial attacks without necessitating

adversary training.

Nuclear Power Economics and Structuring 2024

Title: Nuclear Power Economics and Structuring - 2024 Edition

Produced by: World Nuclear Association Published: April 2024

Report No. 2024/001

© 2024 World Nuclear Association.

Registered in England and Wales, company number 01215741

This report reflects the views

of industry experts but does not

necessarily represent those

of World Nuclear Association’s

individual member organizations.

一比一原版(IIT毕业证)伊利诺伊理工大学毕业证成绩单专业办理

IIT毕业证原版定制【微信:176555708】【伊利诺伊理工大学毕业证成绩单-学位证】【微信:176555708】(留信学历认证永久存档查询)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

◆◆◆◆◆ — — — — — — — — 【留学教育】留学归国服务中心 — — — — — -◆◆◆◆◆

【主营项目】

一.毕业证【微信:176555708】成绩单、使馆认证、教育部认证、雅思托福成绩单、学生卡等!

二.真实使馆公证(即留学回国人员证明,不成功不收费)

三.真实教育部学历学位认证(教育部存档!教育部留服网站永久可查)

四.办理各国各大学文凭(一对一专业服务,可全程监控跟踪进度)

如果您处于以下几种情况:

◇在校期间,因各种原因未能顺利毕业……拿不到官方毕业证【微信:176555708】

◇面对父母的压力,希望尽快拿到;

◇不清楚认证流程以及材料该如何准备;

◇回国时间很长,忘记办理;

◇回国马上就要找工作,办给用人单位看;

◇企事业单位必须要求办理的

◇需要报考公务员、购买免税车、落转户口

◇申请留学生创业基金

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分→ 【关于价格问题(保证一手价格)

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

选择实体注册公司办理,更放心,更安全!我们的承诺:可来公司面谈,可签订合同,会陪同客户一起到教育部认证窗口递交认证材料,客户在教育部官方认证查询网站查询到认证通过结果后付款,不成功不收费!

学历顾问:微信:176555708

Terraform day02

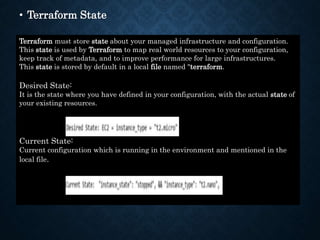

- 1. Terraform State: Terraform must store state about your managed infrastructure and configuration. Terraform uses this state to map real-world resources to your configuration, keep track of metadata, and improve performance for large infrastructures. By default, this state is stored in a local file named "terraform.

  Desired state: the state you have defined in your configuration, which Terraform compares with the actual state of your existing resources. Current state: the configuration that is currently running in the environment, as recorded in the local state file.

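- 3. To refresh the current state, run `terraform refresh`. It updates the state file to match the real resources. In Terraform v0.15.4 and later, this command is deprecated in favor of `terraform apply -refresh-only`. Scenario: you manually change a parameter of an AWS service and then want to roll it back to its previous value. That parameter must be defined in your desired state (the configuration files), because Terraform can only restore values your configuration specifies.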
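Here is a minimal sketch of the scenario above. It assumes a single EC2 instance whose configuration is the desired state. The tag value is hypothetical. The AMI ID is reused from the later slides, but AMI IDs are region-specific, so substitute one that exists in your region.

```hcl
# Desired state: Terraform enforces the values written here.
resource "aws_instance" "myec2" {
  ami           = "ami-082b5a644766e0e6f" # AMI ID taken from the later slides
  instance_type = "t2.micro"

  tags = {
    Name = "state-demo" # hypothetical tag value
  }
}
```

Suppose someone manually changes this instance's type to t2.small in the AWS console. A refresh records t2.small as the current value. The next `terraform plan` then proposes changing it back to t2.micro, because that is what the configuration asks for. An argument you never wrote in the configuration generally has no desired value, so Terraform has nothing to roll it back to.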

- 4. D. Interpolation, Attributes & Variables

  Attributes and output values: each resource instance managed by Terraform exports attributes whose values can be used elsewhere in the configuration. Output values are a way to expose some of that information to the user of your module. Note: for brevity, output values are often referred to simply as "outputs" when the meaning is clear from context.

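- 6. Example: outputs that expose whole resources

```hcl
provider "aws" {
  region = "us-west-2"
  # Placeholder credentials: avoid committing real keys. The provider can also
  # read AWS_ACCESS_KEY_ID / AWS_SECRET_ACCESS_KEY from the environment.
  access_key = "PUT-YOUR-ACCESS-KEY-HERE"
  secret_key = "PUT-YOUR-SECRET-KEY-HERE"
}

resource "aws_eip" "lb" {
  vpc = true # deprecated in AWS provider v5+, where domain = "vpc" replaces it
}

# Prints every exported attribute of the EIP (public_ip, id, ...).
output "eip" {
  value = aws_eip.lb
}

resource "aws_s3_bucket" "mys3" {
  bucket = "kplabs-attribute-demo-001"
}

# Prints every exported attribute of the bucket (arn, id, ...).
output "mys3bucket" {
  value = aws_s3_bucket.mys3
}
```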
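The outputs above print entire resource objects, which helps you discover which attributes exist. Once you know the attribute you need, you can expose only that value. In this sketch the output names are hypothetical, and the resources are the ones defined on this slide.

```hcl
# Expose individual attributes of the resources defined on this slide.
output "eip_public_ip" {
  value = aws_eip.lb.public_ip
}

output "mys3bucket_arn" {
  value = aws_s3_bucket.mys3.arn
}
```

After `terraform apply`, running `terraform output eip_public_ip` prints just that value.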

- 8. Example: referencing one resource's attributes from another

```hcl
provider "aws" {
  region     = "us-west-2"
  access_key = "PUT-YOUR-ACCESS-KEY-HERE"
  secret_key = "PUT-YOUR-SECRET-KEY-HERE"
}

resource "aws_instance" "myec2" {
  ami           = "ami-082b5a644766e0e6f"
  instance_type = "t2.micro"
}

resource "aws_eip" "lb" {
  vpc = true
}

resource "aws_eip_association" "eip_assoc" {
  instance_id   = aws_instance.myec2.id
  allocation_id = aws_eip.lb.id
}
```
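The `aws_eip` resource also accepts an `instance` argument, which is an alternative to the separate association resource. This sketch assumes the same `aws_instance.myec2` as above. It is meant to replace both the `aws_eip` and `aws_eip_association` blocks, not to sit beside them. Combining both approaches for the same address is likely to produce conflicting changes.

```hcl
resource "aws_eip" "lb" {
  vpc      = true                   # on AWS provider v5+, use domain = "vpc" instead
  instance = aws_instance.myec2.id  # this reference makes Terraform create the instance first
}
```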

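- 10. Example: interpolating an attribute into a string

```hcl
resource "aws_security_group" "allow_tls" {
  name = "kplabs-security-group"

  ingress {
    from_port = 443
    to_port   = 443
    protocol  = "tcp"
    # String interpolation builds "<public_ip>/32".
    cidr_blocks = ["${aws_eip.lb.public_ip}/32"]
    # Does not work: HCL would read /32 as numeric division.
    # cidr_blocks = [aws_eip.lb.public_ip/32]
  }
}
```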
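The commented-out line fails because HCL treats `/` as numeric division, so the `/32` suffix has to be built as a string. This small sketch, with hypothetical local value names and the same `aws_eip.lb` resource, shows two equivalent ways to do that:

```hcl
locals {
  # String template: the same form used on the slide.
  eip_cidr_template = "${aws_eip.lb.public_ip}/32"

  # Built-in format() function: produces the same "<public_ip>/32" string.
  eip_cidr_format = format("%s/32", aws_eip.lb.public_ip)
}
```

Either value can then be used as `cidr_blocks = [local.eip_cidr_format]`.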

- 13. Example: reading a variable in a security group

```hcl
resource "aws_security_group" "var_demo" {
  name = "kplabs-variables"

  ingress {
    from_port   = 443
    to_port     = 443
    protocol    = "tcp"
    cidr_blocks = [var.vpn_ip]
  }

  ingress {
    from_port   = 80
    to_port     = 80
    protocol    = "tcp"
    cidr_blocks = [var.vpn_ip]
  }

  ingress {
    from_port   = 53
    to_port     = 53
    protocol    = "tcp"
    cidr_blocks = [var.vpn_ip]
  }
}
```

- 14. Variable definition:

```hcl
variable "vpn_ip" {
  default = "116.50.30.50/32"
}
```

Source:
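Slides 13 and 14 could be refined by giving the variable a type and description and generating the repeated `ingress` blocks with a `dynamic` block. This sketch assumes all three rules share the same protocol and source CIDR. The `allowed_ports` variable is hypothetical, and the block replaces the slide versions rather than adding to them.

```hcl
variable "vpn_ip" {
  type        = string
  description = "CIDR block allowed to reach the security group"
  default     = "116.50.30.50/32"
}

# Hypothetical list of ports, replacing the three hand-written ingress blocks.
variable "allowed_ports" {
  type    = list(number)
  default = [443, 80, 53]
}

resource "aws_security_group" "var_demo" {
  name = "kplabs-variables"

  # One ingress block is generated per element of var.allowed_ports.
  dynamic "ingress" {
    for_each = var.allowed_ports
    content {
      from_port   = ingress.value
      to_port     = ingress.value
      protocol    = "tcp"
      cidr_blocks = [var.vpn_ip]
    }
  }
}
```

You can override the default at run time, for example with `terraform apply -var="vpn_ip=203.0.113.10/32"`.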

- 15. Understanding Provisioners in Terraform

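- 19. Local exec provisioners:

```hcl
resource "aws_instance" "myec2" {
  ami           = "ami-082b5a644766e0e6f"
  instance_type = "t2.micro"

  # Runs on the machine executing Terraform after the instance is created.
  # Inside a resource's own provisioner, refer to it as self, not aws_instance.myec2.
  provisioner "local-exec" {
    command = "echo ${self.private_ip} >> private_ips.txt"
  }
}
```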
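This sketch shows two further local-exec options on the slide's instance. The first passes values through the `environment` argument instead of interpolating them into the command. The second is a destroy-time provisioner (`when = destroy`). The `$PRIVATE_IP` syntax assumes a Unix-like shell on the machine running Terraform, and `destroyed_ids.txt` is a hypothetical file name. The block replaces the slide's resource rather than adding a second `aws_instance.myec2`.

```hcl
resource "aws_instance" "myec2" {
  ami           = "ami-082b5a644766e0e6f"
  instance_type = "t2.micro"

  # Creation-time provisioner: the IP is passed as an environment variable
  # rather than interpolated into the command string.
  provisioner "local-exec" {
    command = "echo $PRIVATE_IP >> private_ips.txt"
    environment = {
      PRIVATE_IP = self.private_ip
    }
  }

  # Destroy-time provisioner: runs before Terraform destroys the instance.
  # Destroy-time provisioners may refer only to self, count.index, or each.key.
  provisioner "local-exec" {
    when    = destroy
    command = "echo 'destroying ${self.id}' >> destroyed_ids.txt"
  }
}
```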

- 21. Remote exec provisioners:

```hcl
resource "aws_instance" "myec2" {
  ami           = "ami-082b5a644766e0e6f"
  instance_type = "t2.micro"
  key_name      = "kplabs-terraform"

  provisioner "remote-exec" {
    inline = [
      "sudo amazon-linux-extras install -y nginx1.12",
      "sudo systemctl start nginx"
    ]

    connection {
      type        = "ssh"
      user        = "ec2-user"
      private_key = file("./kplabs-terraform.pem")
      host        = self.public_ip
    }
  }
}
```
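When several provisioners need SSH, you can place the `connection` block at the resource level so they all share it. This sketch assumes a hypothetical local script `install_nginx.sh`. It copies the script to the instance with the `file` provisioner and then runs it with `remote-exec`.

```hcl
resource "aws_instance" "myec2" {
  ami           = "ami-082b5a644766e0e6f"
  instance_type = "t2.micro"
  key_name      = "kplabs-terraform"

  # Resource-level connection, shared by every provisioner below.
  connection {
    type        = "ssh"
    user        = "ec2-user"
    private_key = file("./kplabs-terraform.pem")
    host        = self.public_ip
  }

  # Copy the hypothetical install script to the instance.
  provisioner "file" {
    source      = "install_nginx.sh"
    destination = "/tmp/install_nginx.sh"
  }

  # Make the copied script executable and run it.
  provisioner "remote-exec" {
    inline = [
      "chmod +x /tmp/install_nginx.sh",
      "sudo /tmp/install_nginx.sh",
    ]
  }
}
```

The instance also needs a security group that allows inbound SSH (port 22) from the machine running Terraform. Neither slide shows one.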

- 22. Integrating Ansible with Terraform

- 23. Steps:

  1. Install Ansible on the system.
  2. Write a playbook to install and configure the applications.
  3. Copy the .pem private key file of the key pair assigned to the instance, so Ansible can connect over SSH.
  4. Write your Terraform code to build and provision the instance, and call the Ansible playbook from inside it (see ec2.tf below).
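The transcript does not include the contents of ec2.tf, so the following is only a hypothetical sketch of step 4, not the author's file. It assumes `ansible-playbook` is on the PATH of the machine running Terraform and reuses the key pair and `nginx.yml` names from these slides. A no-op `remote-exec` waits for SSH before `local-exec` hands over to Ansible.

```hcl
resource "aws_instance" "myec2" {
  ami           = "ami-082b5a644766e0e6f"
  instance_type = "t2.micro"
  key_name      = "kplabs-terraform"

  connection {
    type        = "ssh"
    user        = "ec2-user"
    private_key = file("./kplabs-terraform.pem")
    host        = self.public_ip
  }

  # Blocks until SSH accepts connections, so Ansible does not start too early.
  provisioner "remote-exec" {
    inline = ["echo 'SSH is ready'"]
  }

  # Runs the playbook locally against the new instance.
  # The trailing comma makes Ansible treat the IP as an inline host list.
  provisioner "local-exec" {
    command = "ansible-playbook -i '${self.public_ip},' -u ec2-user --private-key ./kplabs-terraform.pem nginx.yml"
    environment = {
      ANSIBLE_HOST_KEY_CHECKING = "False"
    }
  }
}
```

Disabling host key checking avoids an interactive prompt for a freshly created host. Whether that is acceptable depends on your environment.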

- 24. Files: nginx.yml

- 25. ec2.tf