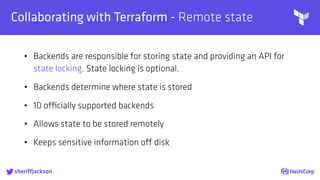

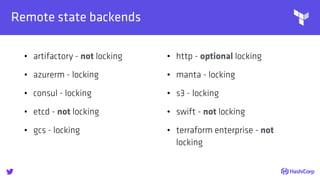

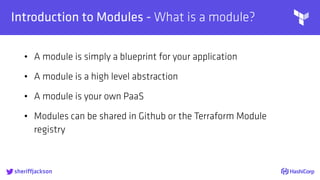

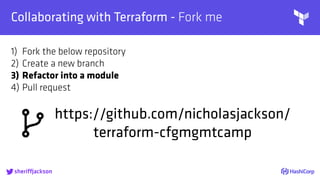

The document discusses managing modern cloud environments using Terraform, emphasizing infrastructure as code and collaboration through Terraform's features. It covers key concepts such as remote state management, module creation, CI/CD integration, and credential management using Vault for secure operations. The content serves as a guide for developers and organizations to effectively utilize Terraform in complex cloud infrastructures.

![

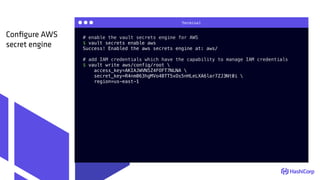

AWS Secrets

Managing Credentials with Vault - hierarchy

sheriffjackson

role A

(aws_read)

role B

(aws_write)

policy A

(aws_read)

[read]

policy C

(aws_write)

[read]

policy B

(aws_read)

[read, create]

GitHub Auth

user B

(ricksanchez)

user A

(nicholasjackson)](https://image.slidesharecdn.com/terraform-tamingmoderclouds-180205150036/85/Terraform-Taming-Modern-Clouds-43-320.jpg)

![Slide 46: Create a read-only AWS IAM policy (terraform.tf)](https://image.slidesharecdn.com/terraform-tamingmoderclouds-180205150036/85/Terraform-Taming-Modern-Clouds-46-320.jpg)

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": ["s3:ListBucket"],
          "Resource": ["arn:aws:s3:::tfremotestatenic"]
        },
        {
          "Effect": "Allow",
          "Action": [
            "s3:GetObject"
          ],
          "Resource": ["arn:aws:s3:::tfremotestatenic/*"]
        },
        {
          "Effect": "Allow",
          "Action": "ec2:Describe*",
          "Resource": "*"
        },
        {
          "Effect": "Allow",
          "Action": "elasticloadbalancing:Describe*",
          "Resource": "*"
        },
        ...

https://github.com/nicholasjackson/terraform-cfgmgmtcamp/blob/master/vault/templates/aws_read.json

![Slide 47: Create a write AWS IAM policy (terraform.tf)](https://image.slidesharecdn.com/terraform-tamingmoderclouds-180205150036/85/Terraform-Taming-Modern-Clouds-47-320.jpg)

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": ["s3:ListBucket"],
          "Resource": ["arn:aws:s3:::tfremotestatenic"]
        },
        {
          "Effect": "Allow",
          "Action": [
            "s3:PutObject",
            "s3:GetObject",
            "s3:DeleteObject"
          ],
          "Resource": ["arn:aws:s3:::tfremotestatenic/*"]
        },
        {
          "Action": "ec2:*",
          "Effect": "Allow",
          "Resource": "*"
        },
        {
          "Effect": "Allow",
          "Action": "elasticloadbalancing:*",
          "Resource": "*"
        },

https://github.com/nicholasjackson/terraform-cfgmgmtcamp/blob/master/vault/templates/aws_write.json
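An IAM policy document like the one above only takes effect once it is attached to a role in Vault's AWS secrets engine. The slides do not show that step, so the following is a minimal sketch rather than the author's configuration. It assumes the Terraform Vault provider is in use, that the AWS secrets engine is already mounted at `aws`, and that the full JSON file lives at `templates/aws_write.json` relative to the module (the path is taken from the repository URL on the slide). The `iam_user` credential type is also an assumption.

```hcl
# Sketch only: registers the write IAM policy as a Vault AWS role.
# Assumption: the AWS secrets engine is already mounted at "aws".
resource "vault_aws_secret_backend_role" "aws_write" {
  backend = "aws"

  # The role name becomes the last path segment: aws/creds/aws_write
  name = "aws_write"

  # Assumption: Vault creates a temporary IAM user per credential request.
  credential_type = "iam_user"

  # Loads the complete policy file; the slide shows only part of it.
  policy_document = file("${path.module}/templates/aws_write.json")
}
```

A role defined this way would be readable at `aws/creds/aws_write`, which is the path the write Vault policy refers to.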

![Slide 49: Create a read-only Vault policy (terraform.tf)](https://image.slidesharecdn.com/terraform-tamingmoderclouds-180205150036/85/Terraform-Taming-Modern-Clouds-49-320.jpg)

    path "aws/creds/aws_readonly" {
      capabilities = ["read"] # "create", "read", "update", "delete", "list"
    }
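Since the slide is labelled `terraform.tf`, one plausible way to manage this policy is through the Terraform Vault provider's `vault_policy` resource. The sketch below is an assumption about how that could look, not a copy of the author's file. The policy name `aws_read` is borrowed from the hierarchy slide; the resource label is arbitrary.

```hcl
# Sketch only: stores the read-only Vault policy via Terraform.
resource "vault_policy" "aws_read" {
  # Assumption: policy name taken from the hierarchy slide.
  name = "aws_read"

  # Policy body as shown on the slide.
  policy = <<EOT
path "aws/creds/aws_readonly" {
  capabilities = ["read"]
}
EOT
}
```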

![Slide 50: Create a write Vault policy (terraform.tf)](https://image.slidesharecdn.com/terraform-tamingmoderclouds-180205150036/85/Terraform-Taming-Modern-Clouds-50-320.jpg)

    path "aws/creds/aws_write" {
      capabilities = ["read"]
    }
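To show where these policies pay off, here is a hedged sketch of a Terraform configuration that asks Vault for short-lived AWS credentials instead of using static keys. It assumes the Terraform Vault provider, an AWS secrets engine mounted at `aws`, and a Vault token whose policy allows reading `aws/creds/aws_write`. The region is a hypothetical placeholder.

```hcl
# Sketch only: Terraform obtains AWS credentials from Vault at plan/apply time.
provider "vault" {
  # The provider reads its address and token from the
  # VAULT_ADDR and VAULT_TOKEN environment variables.
}

# Reads aws/creds/aws_write, which makes Vault issue fresh credentials.
data "vault_aws_access_credentials" "creds" {
  backend = "aws"
  role    = "aws_write"
}

provider "aws" {
  region     = "eu-west-1" # hypothetical region
  access_key = data.vault_aws_access_credentials.creds.access_key
  secret_key = data.vault_aws_access_credentials.creds.secret_key
}
```

Both Vault policies grant only `read` because reading `aws/creds/<role>` is the operation that issues credentials. What those credentials can do in AWS is decided by the IAM policy attached to the role, not by the Vault capability.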