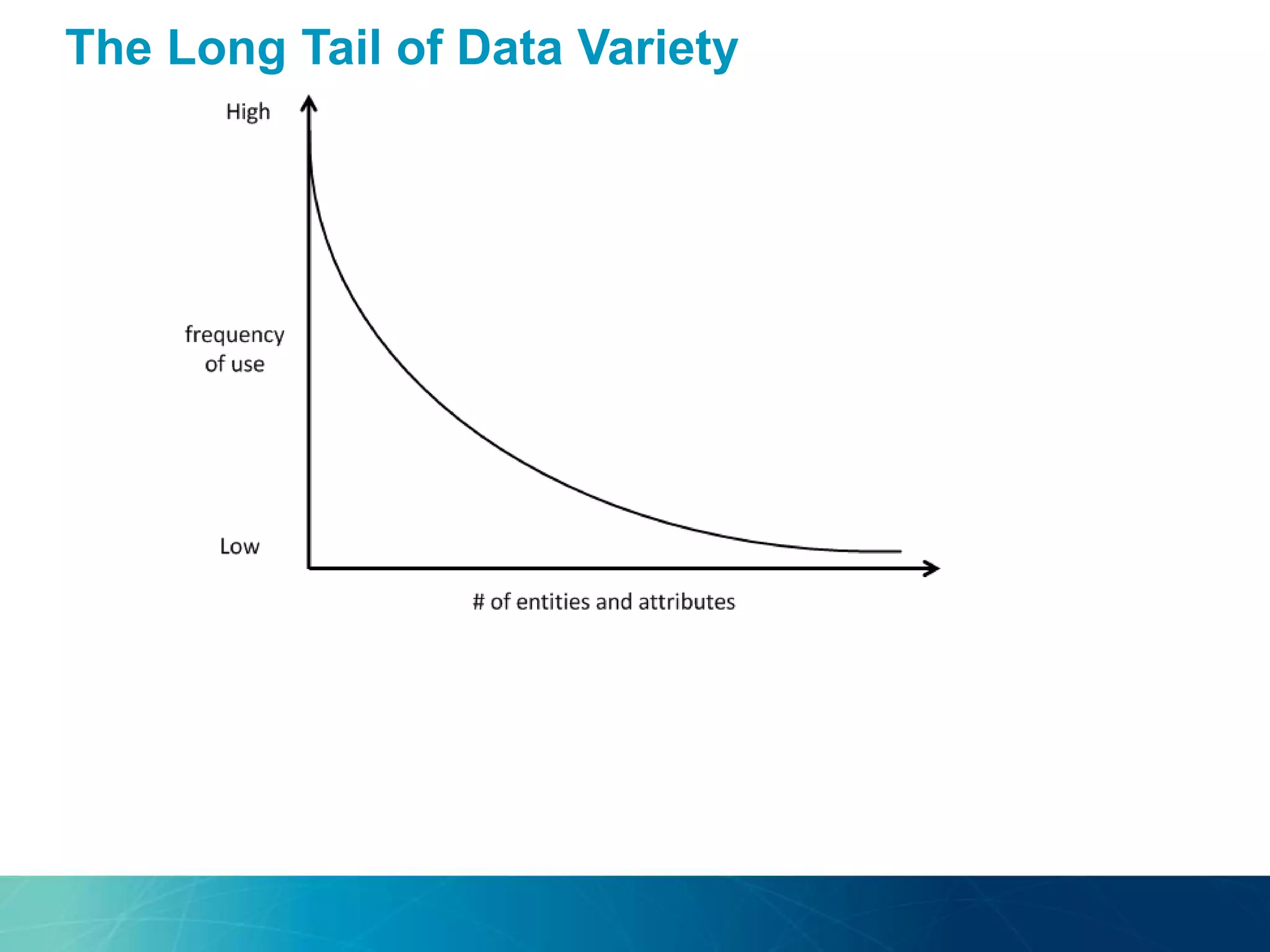

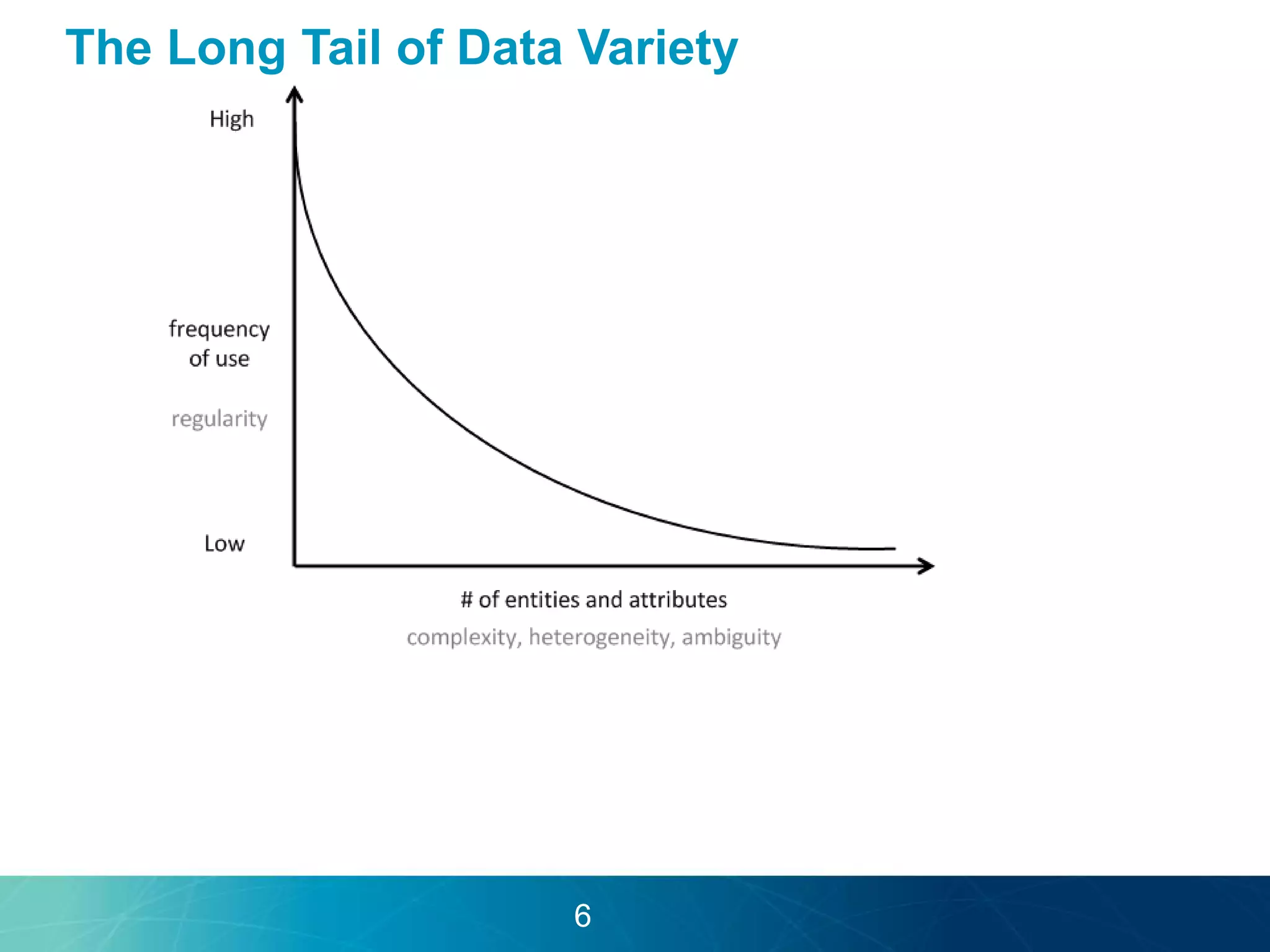

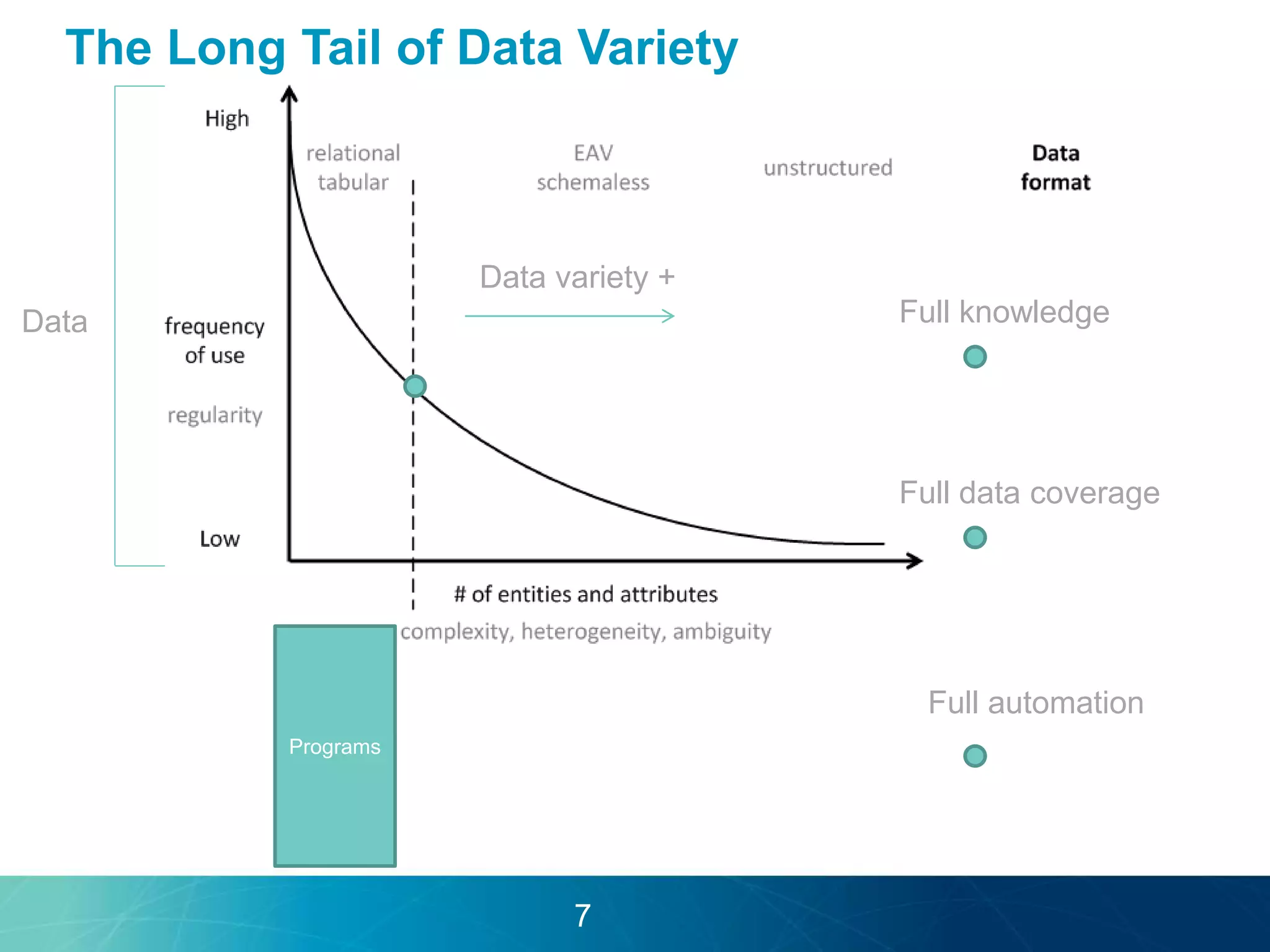

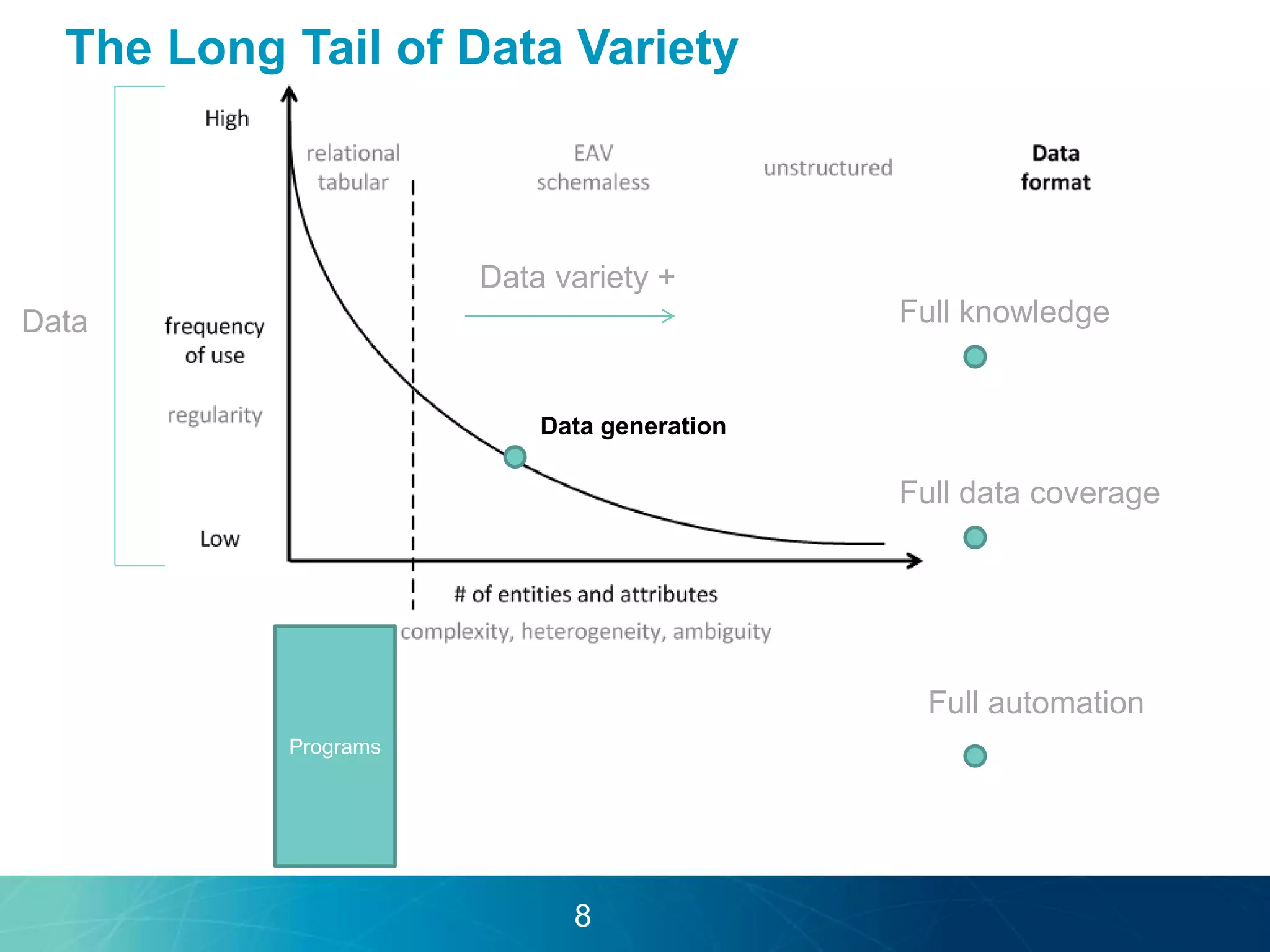

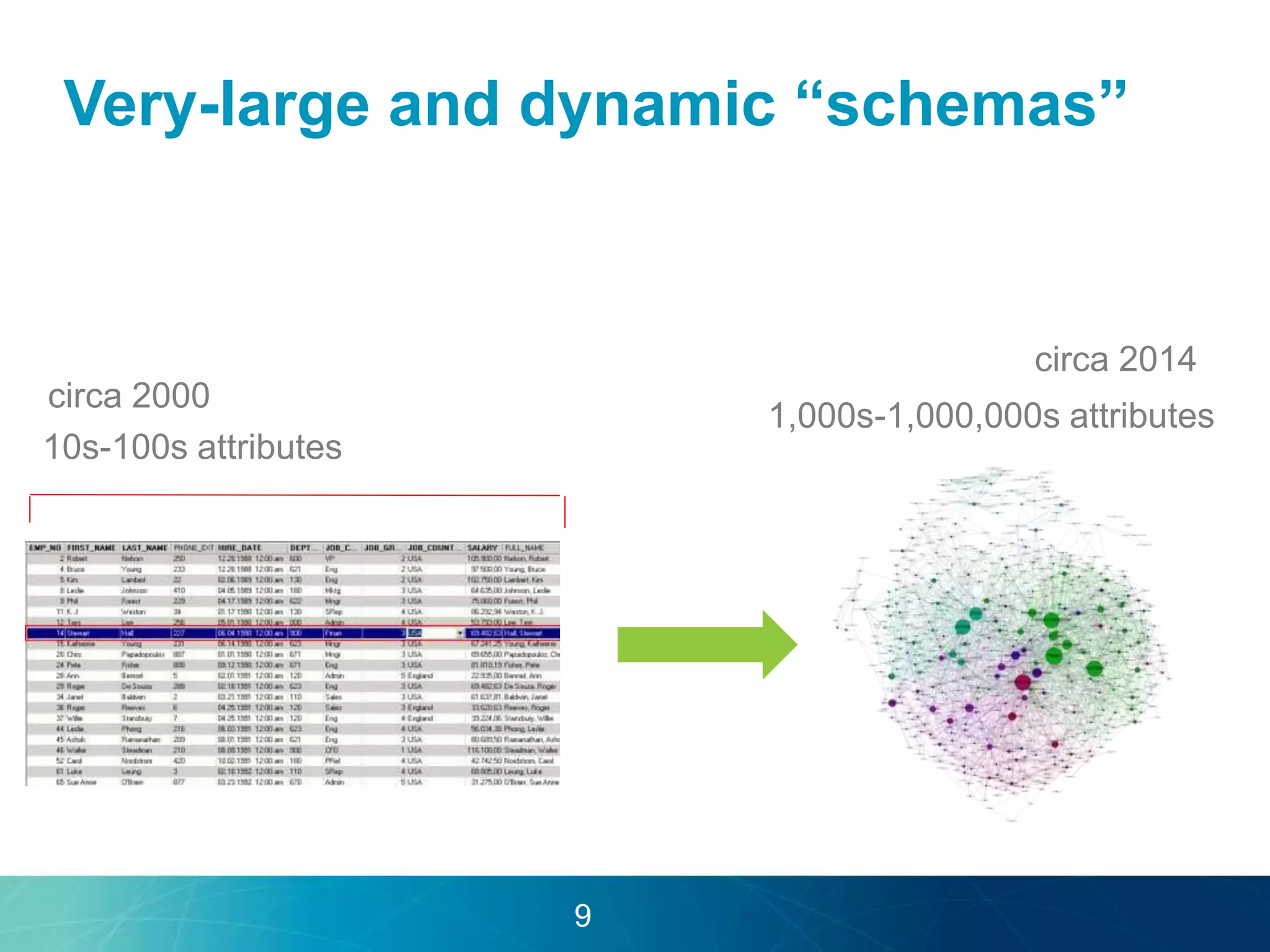

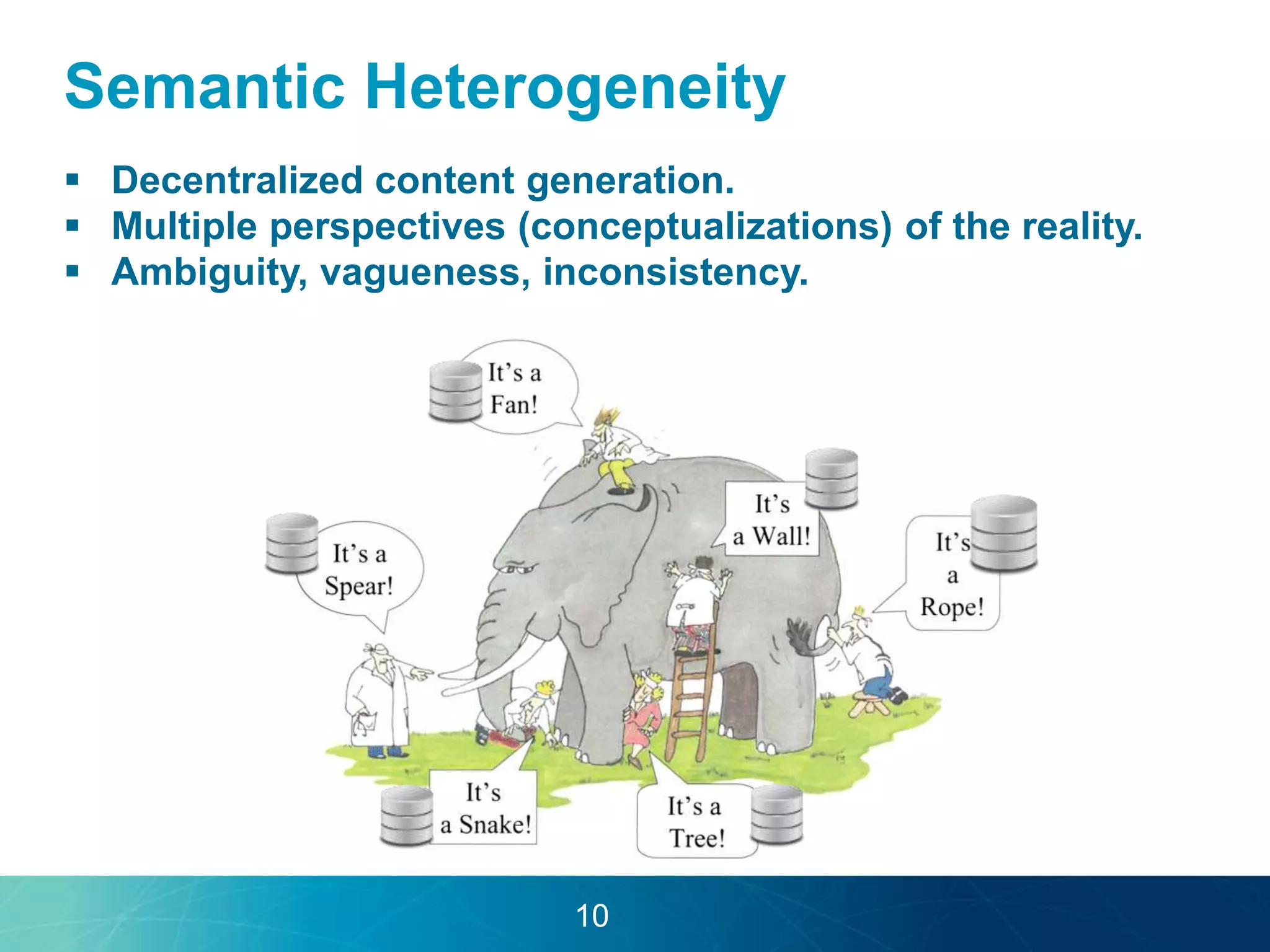

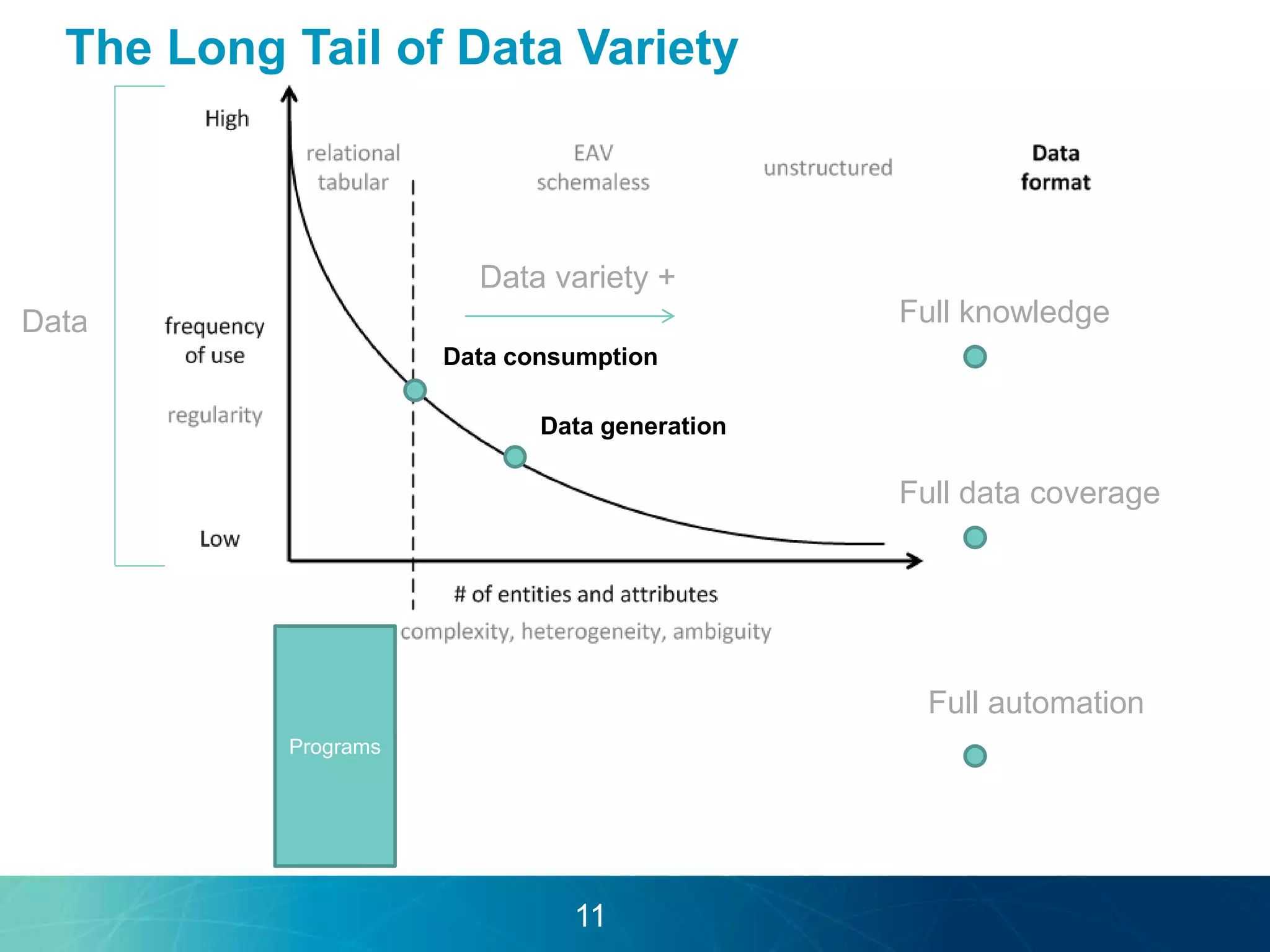

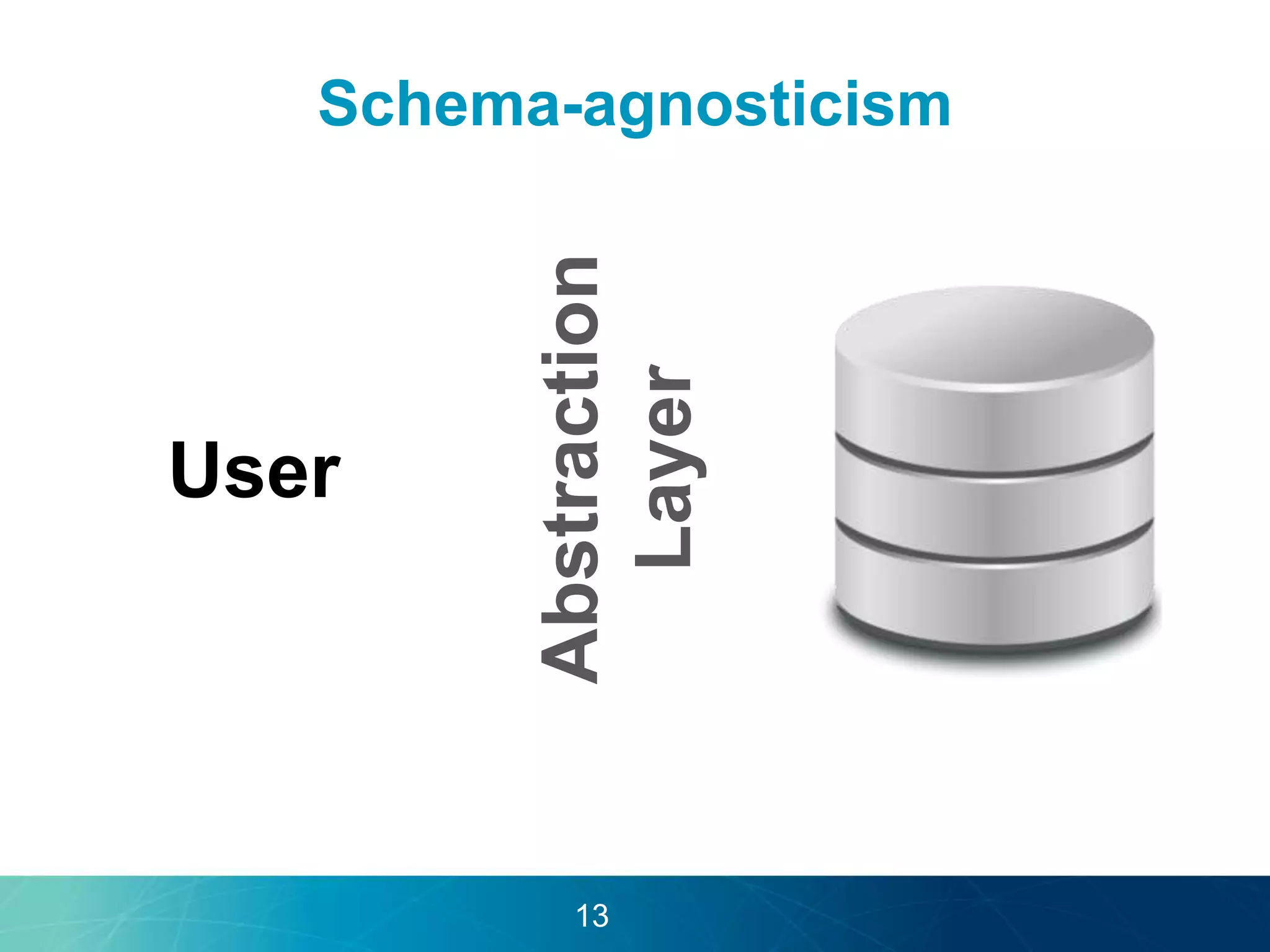

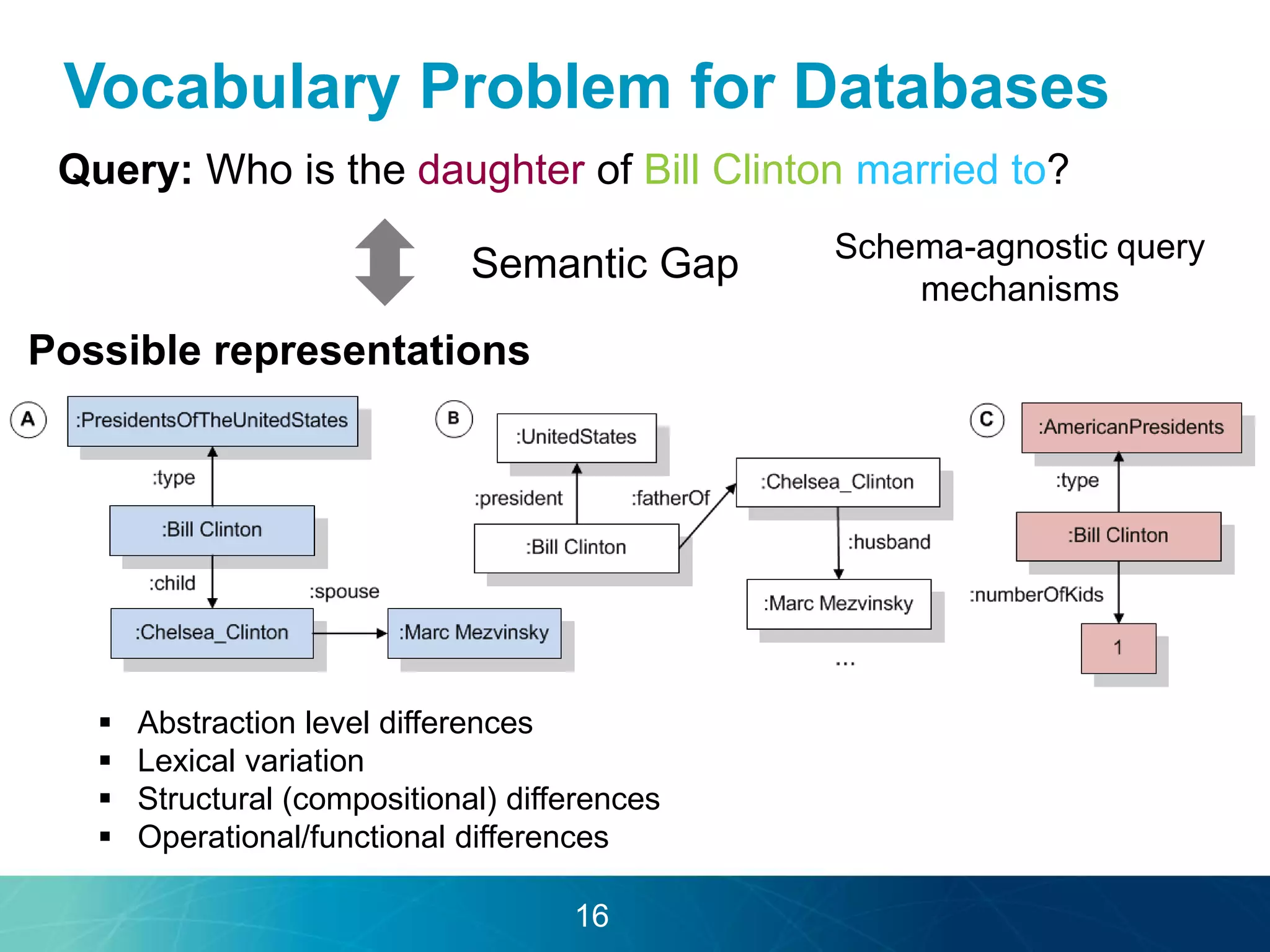

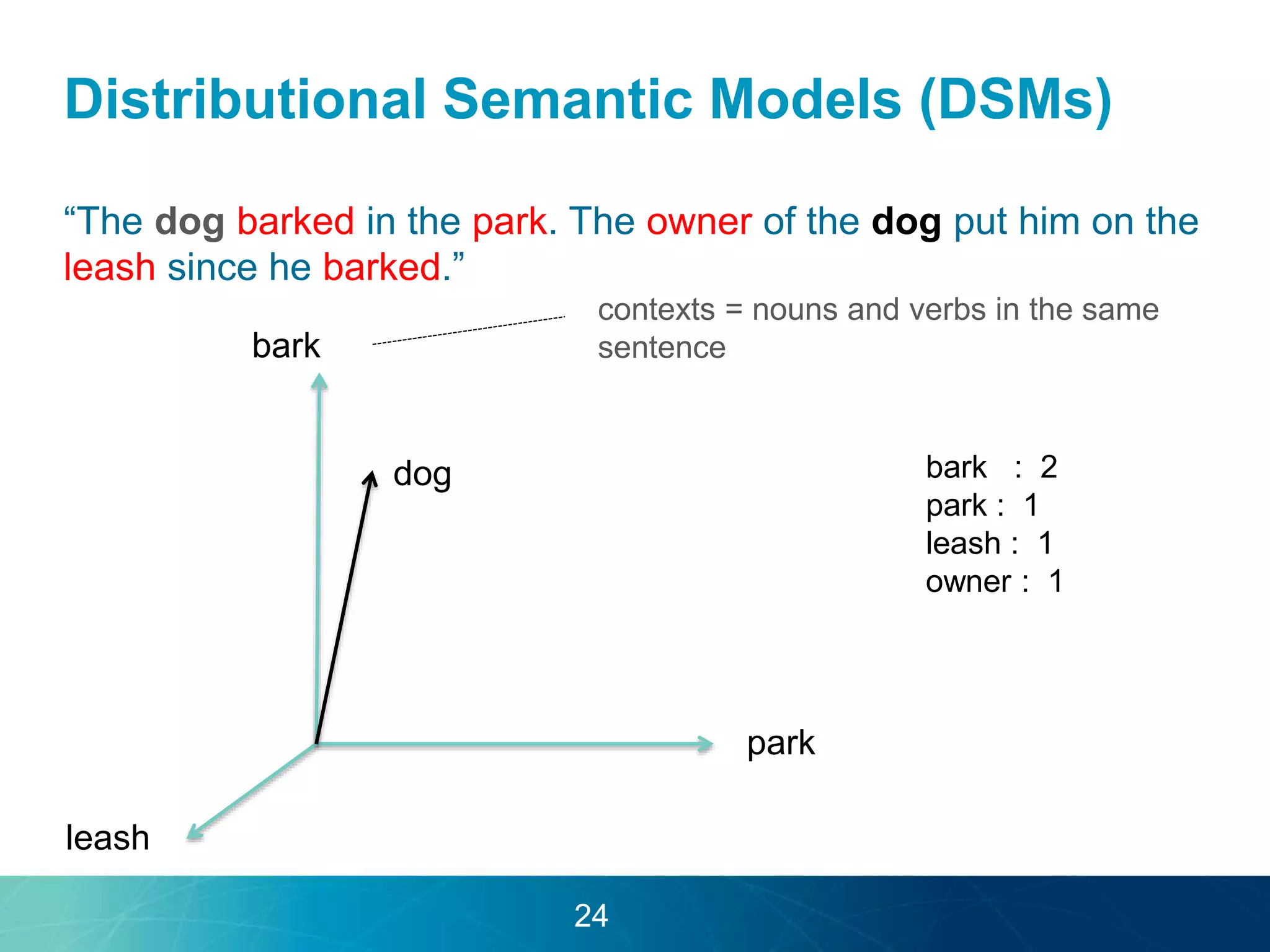

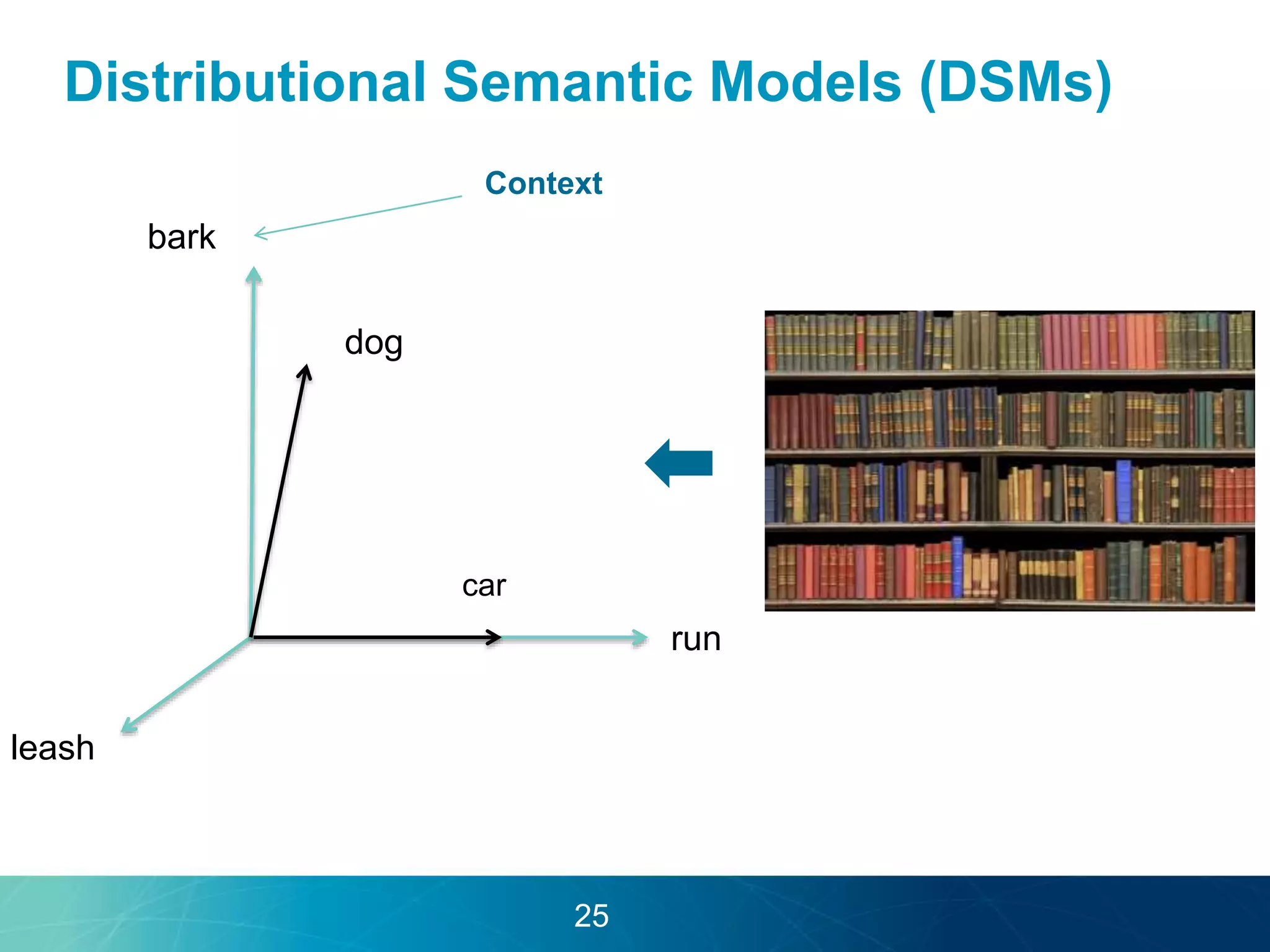

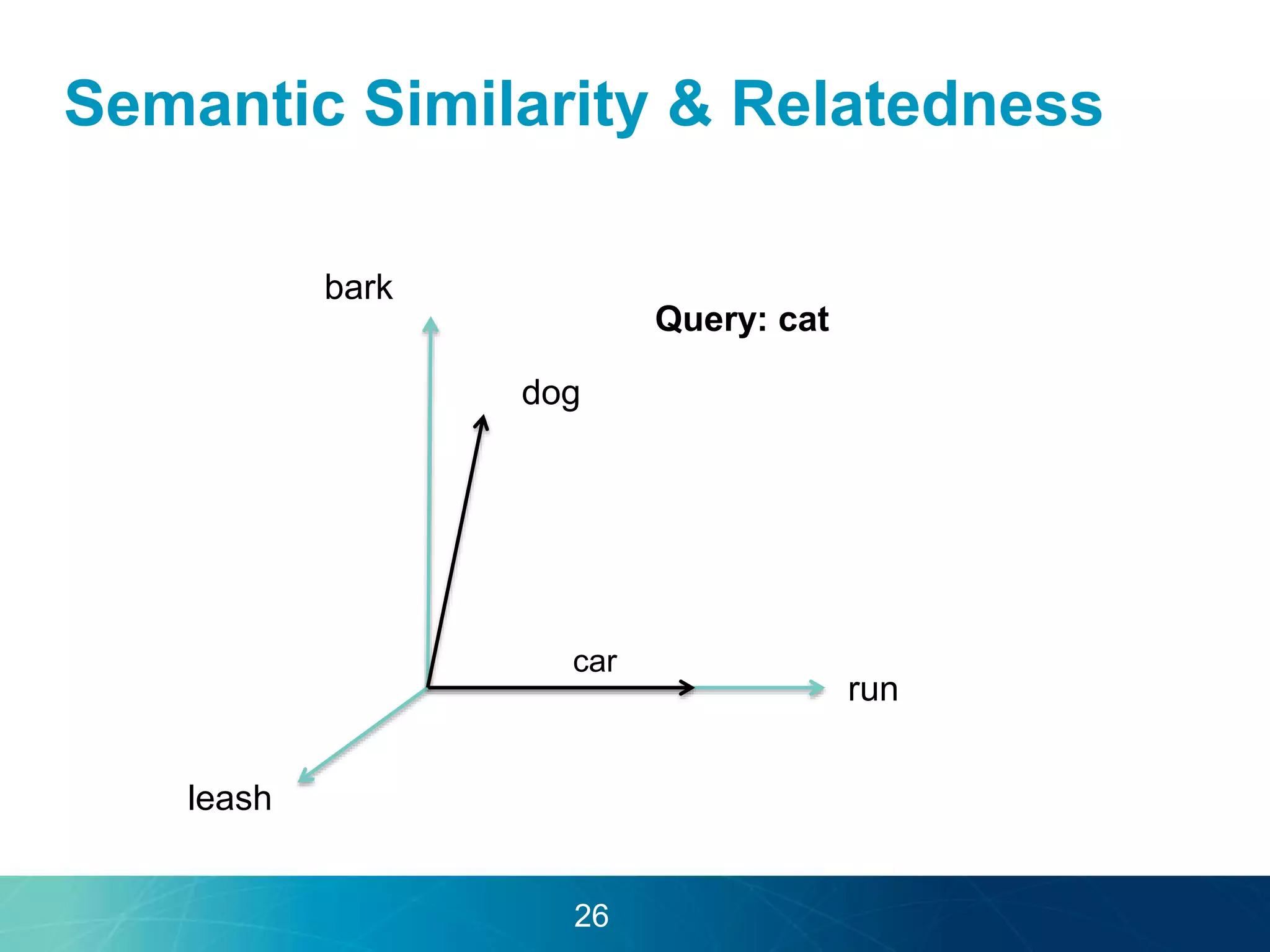

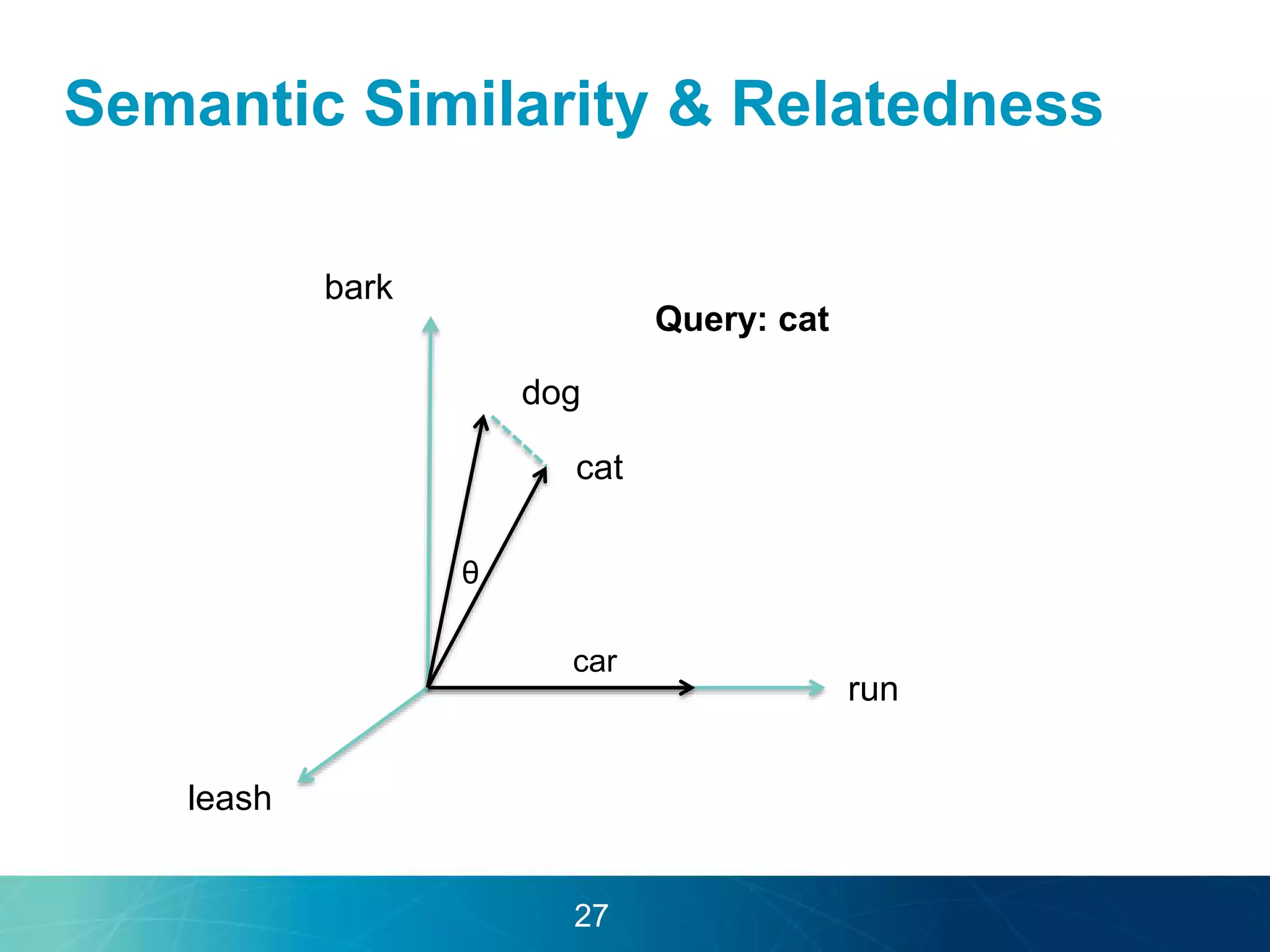

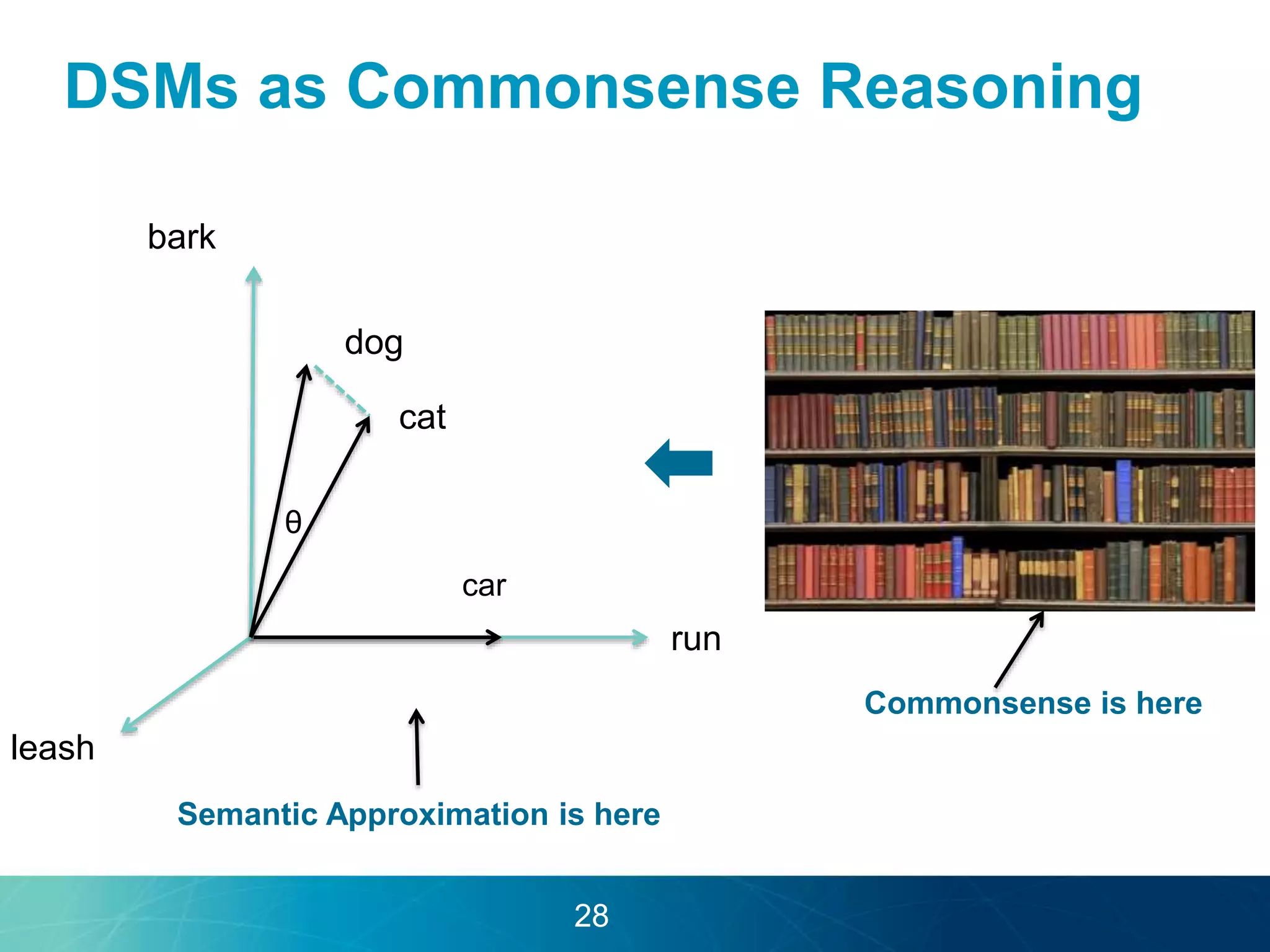

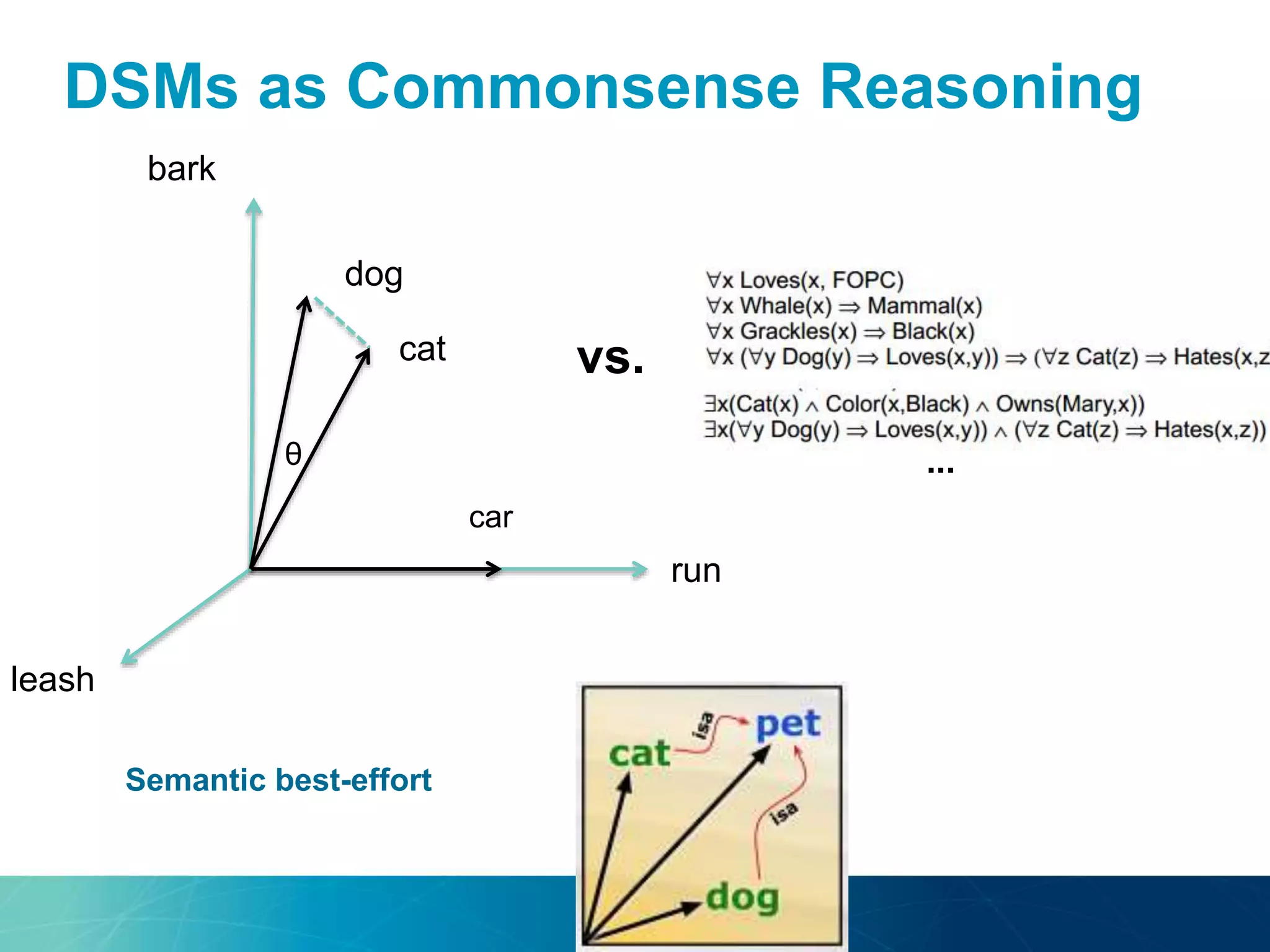

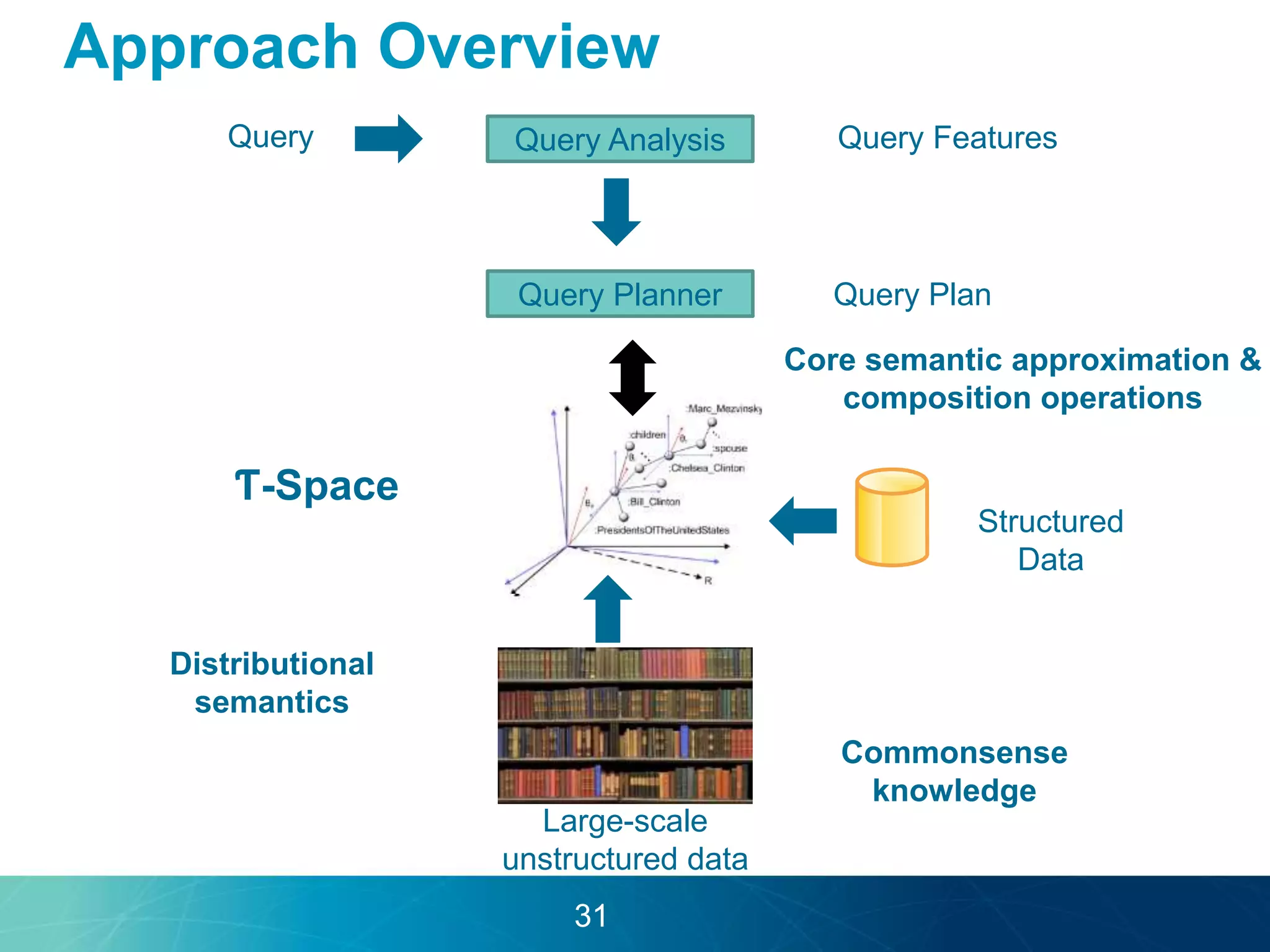

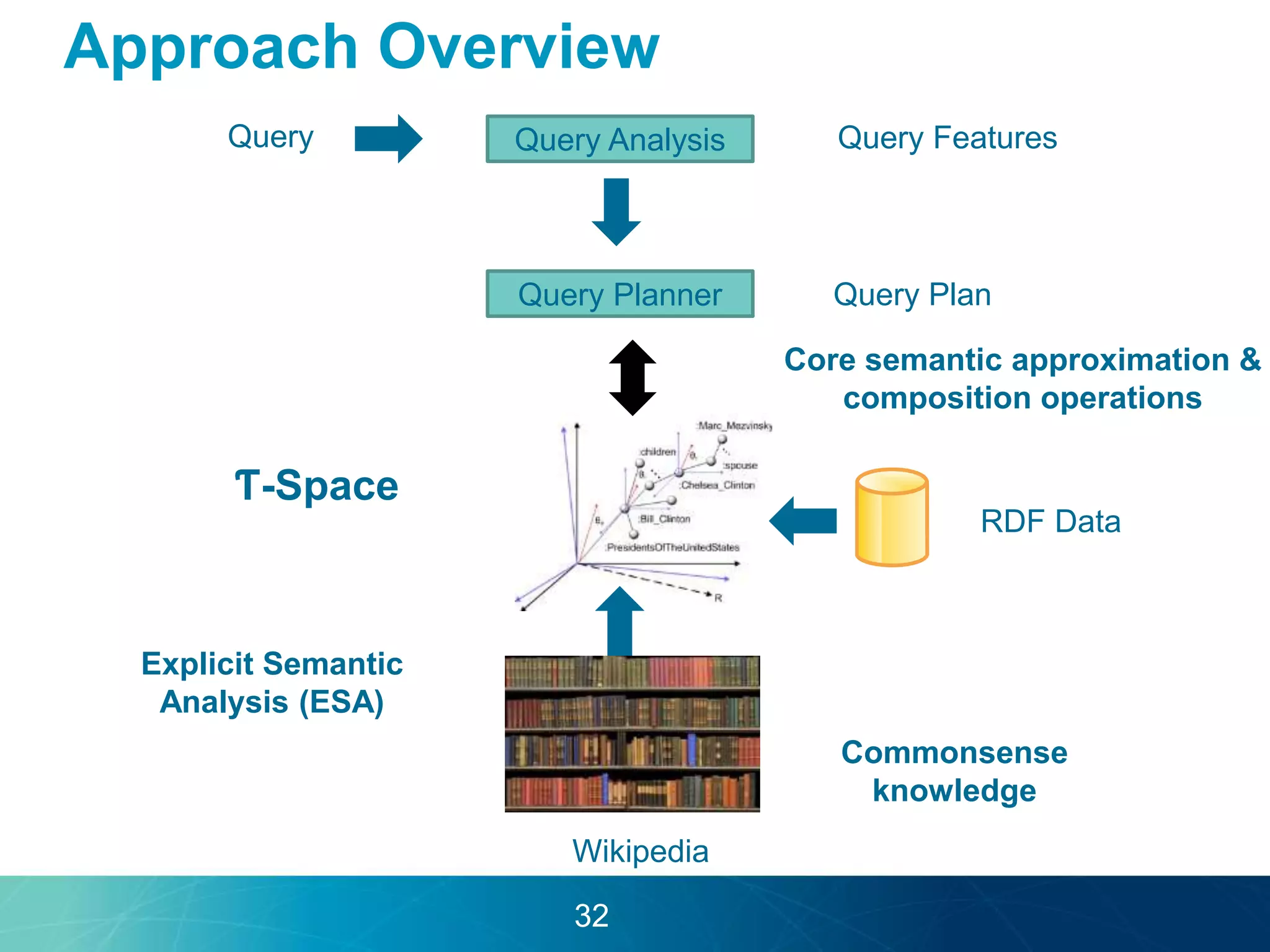

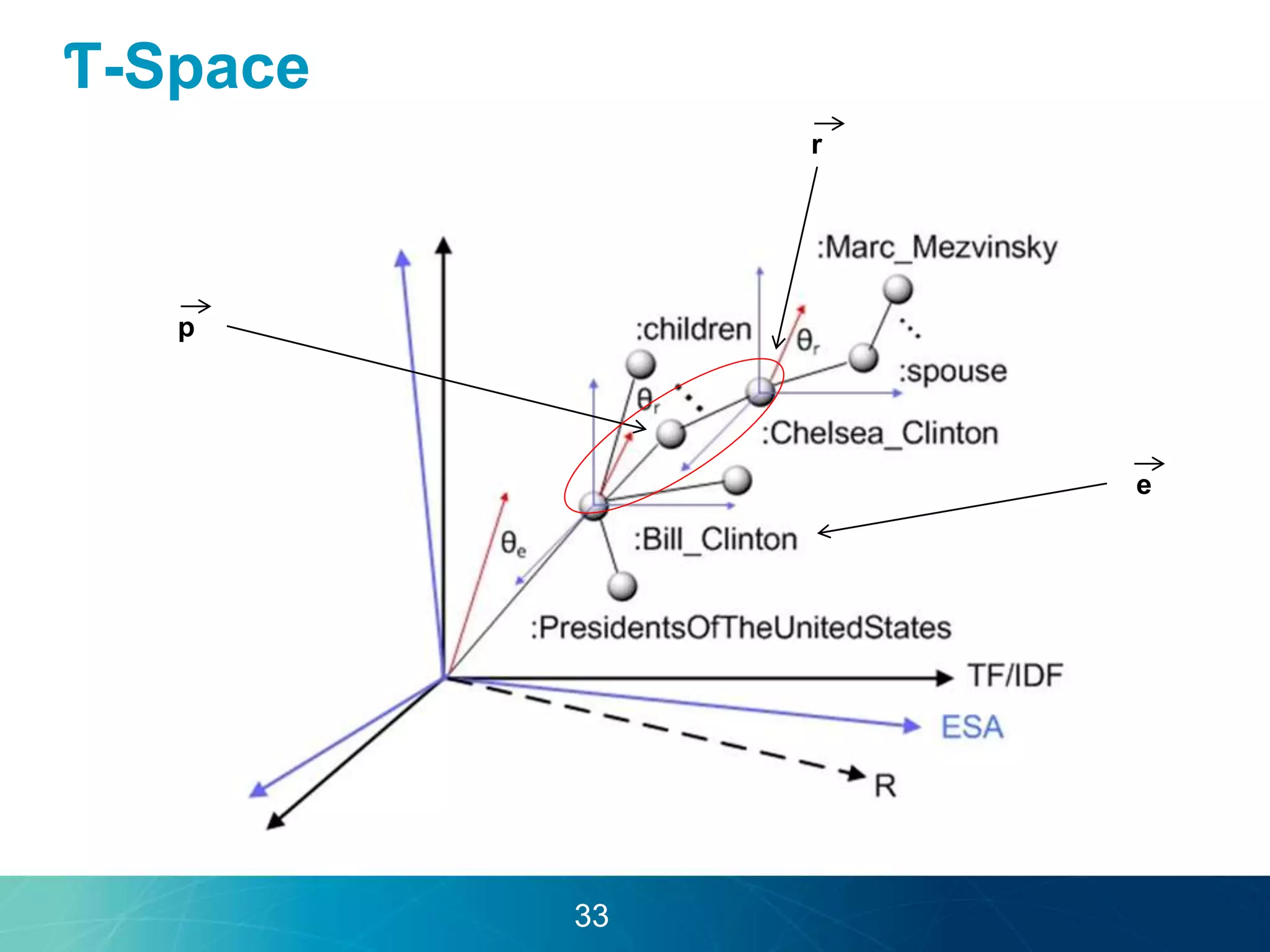

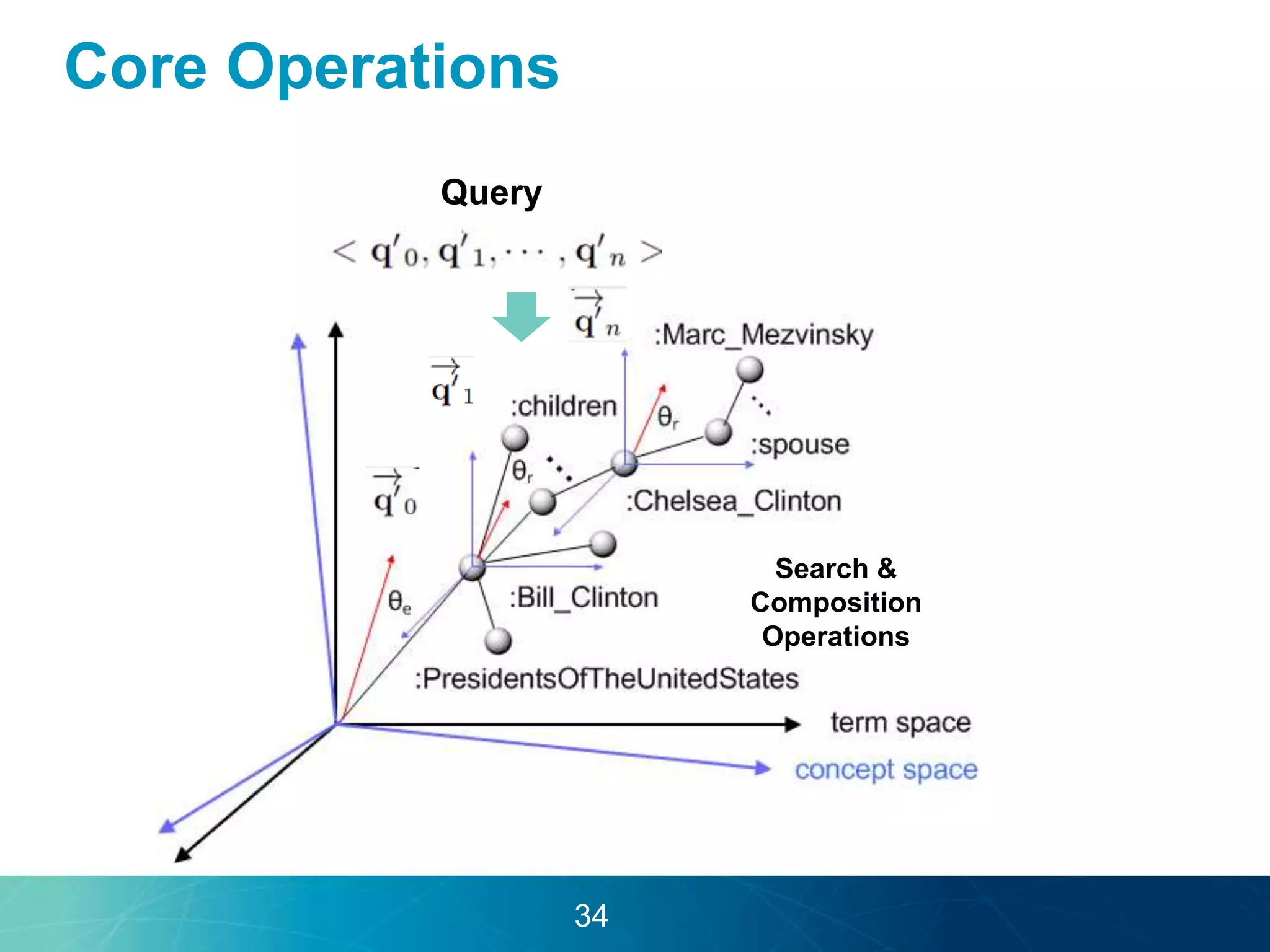

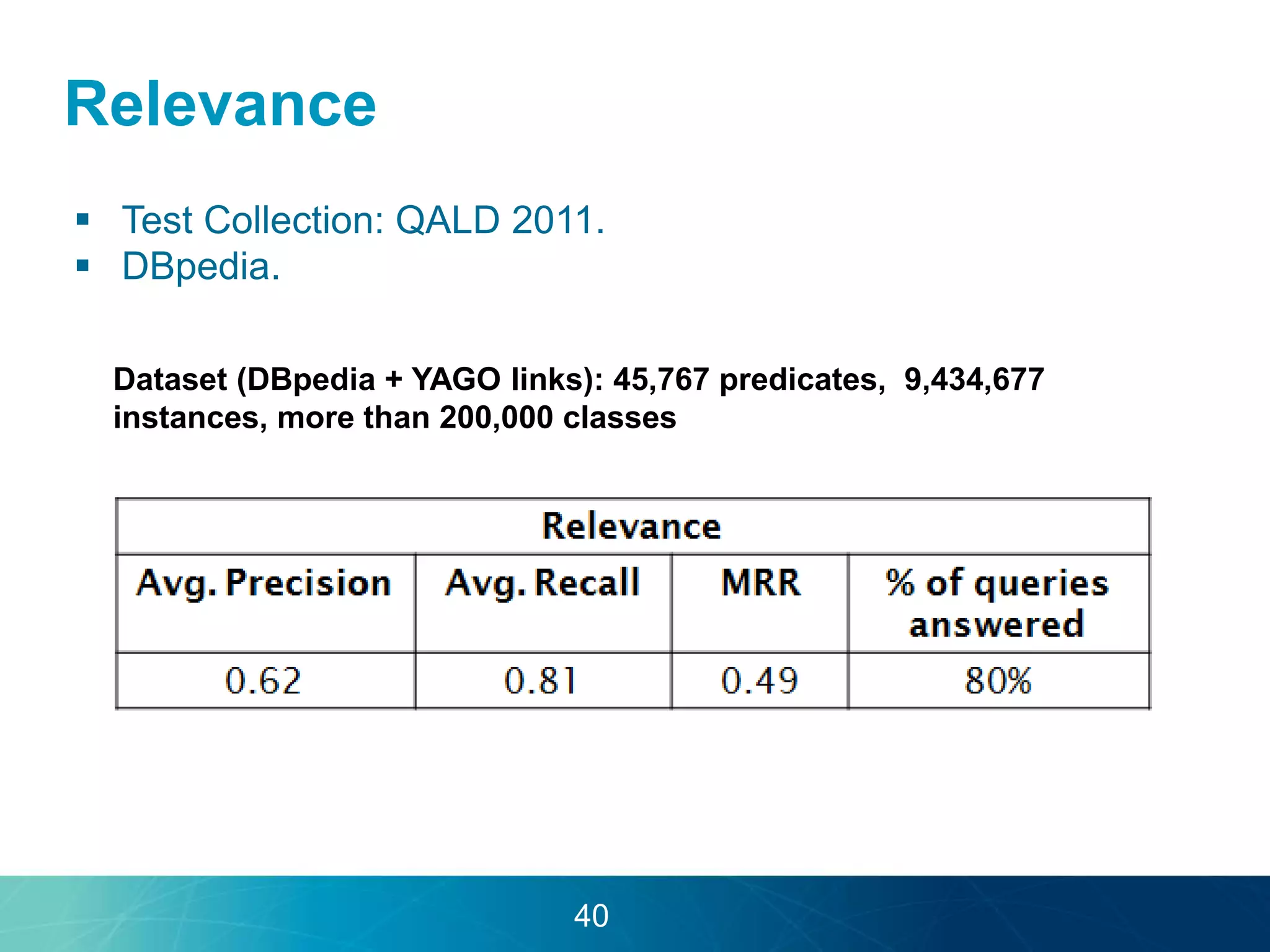

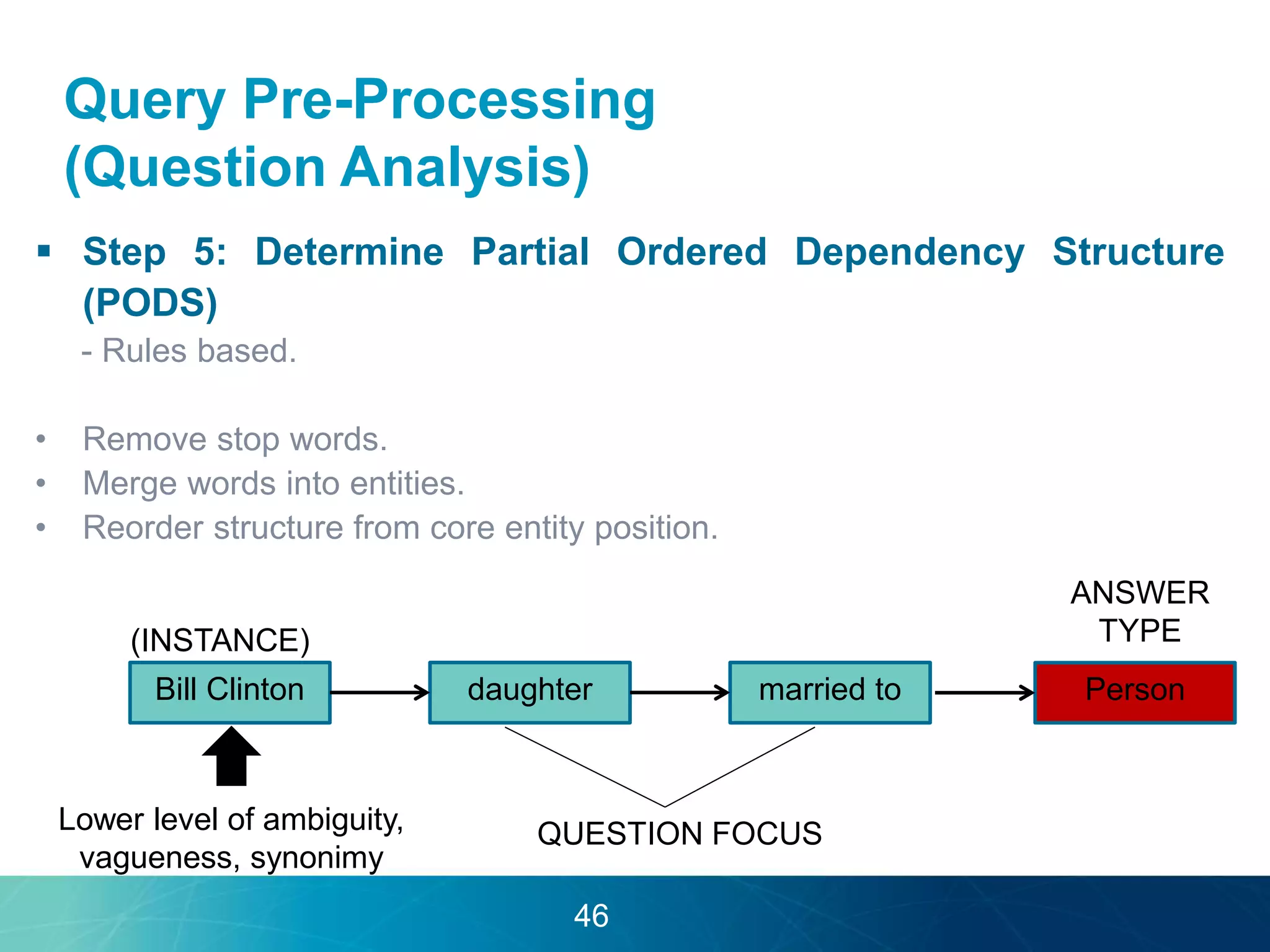

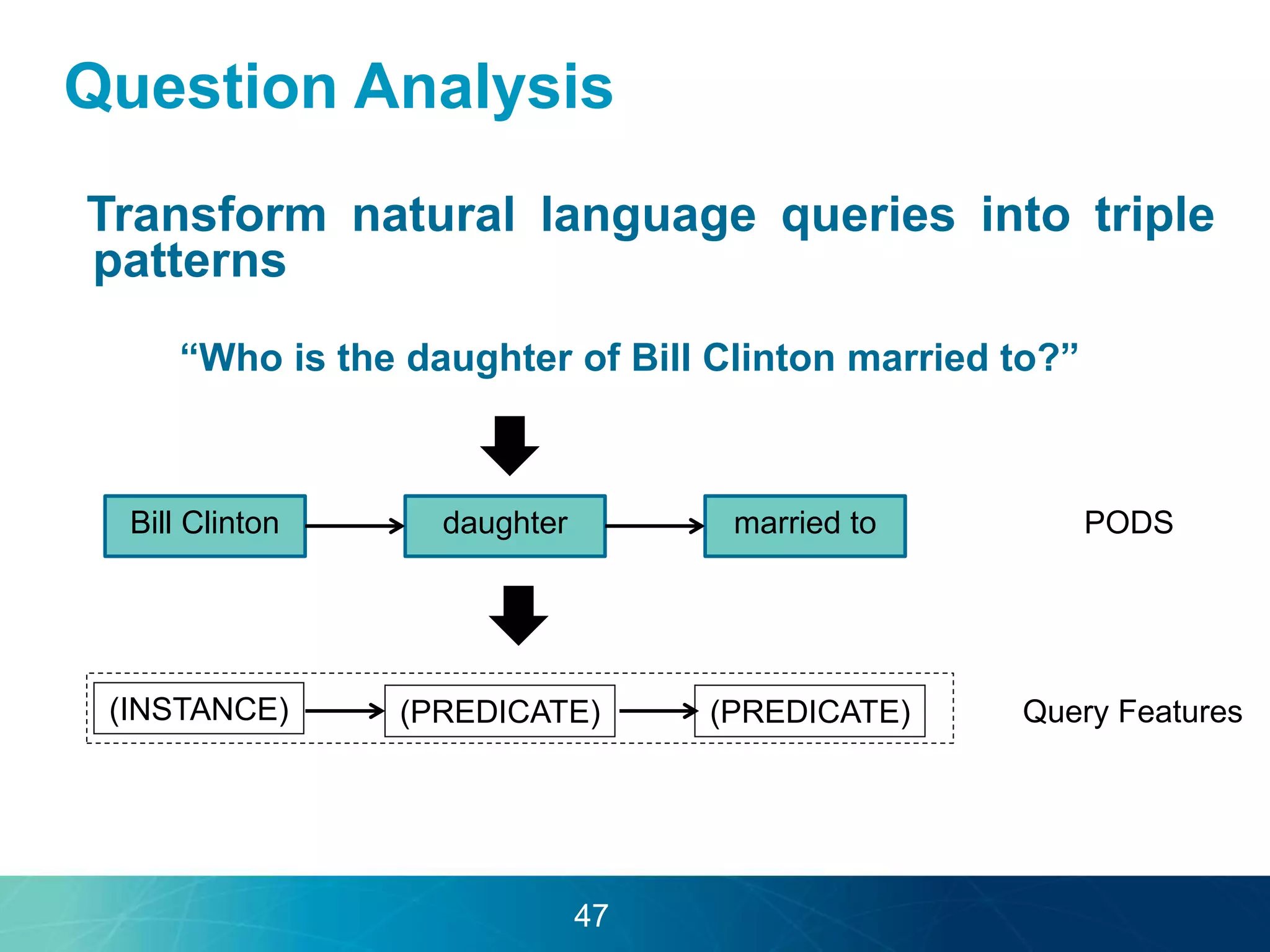

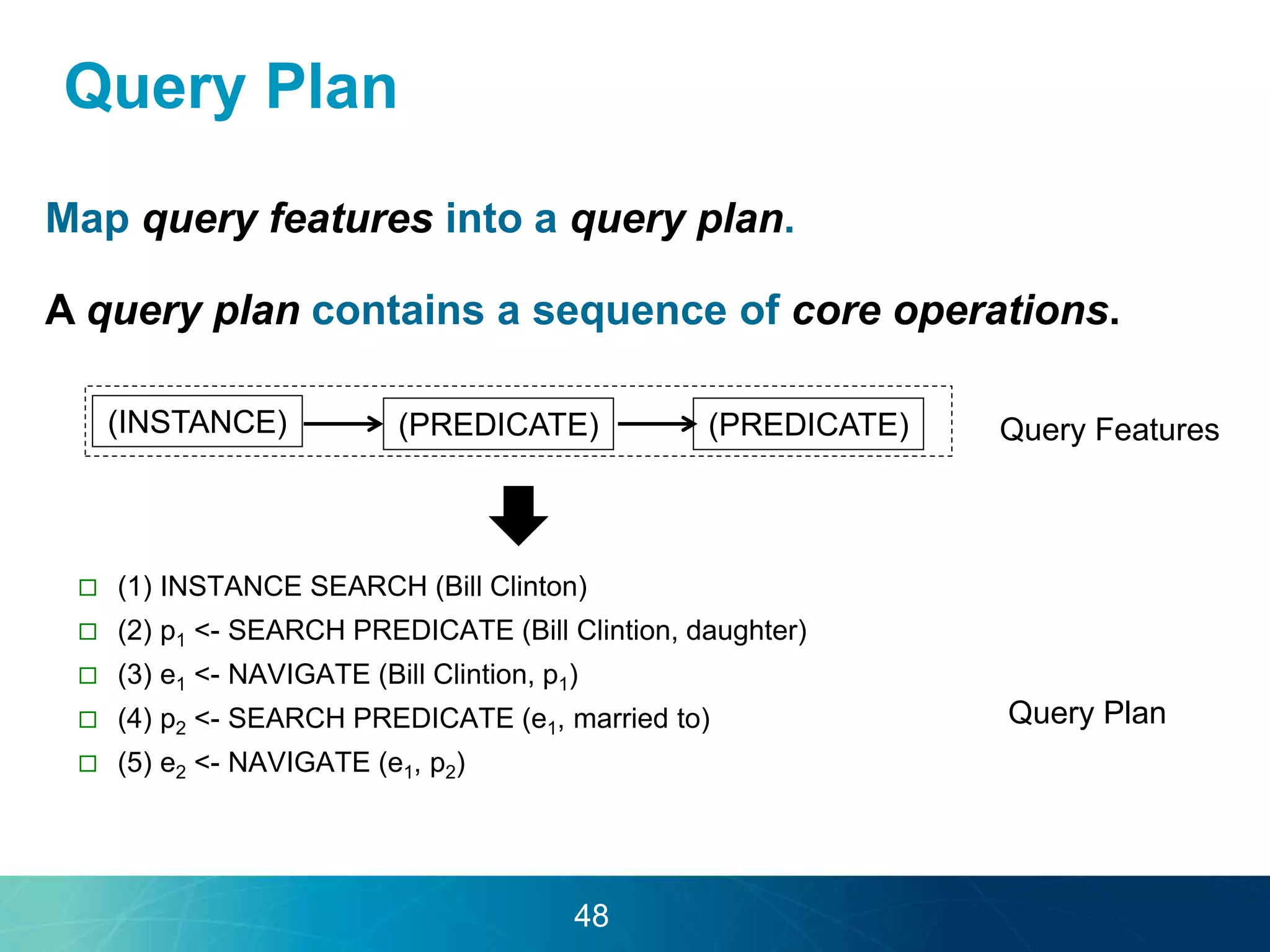

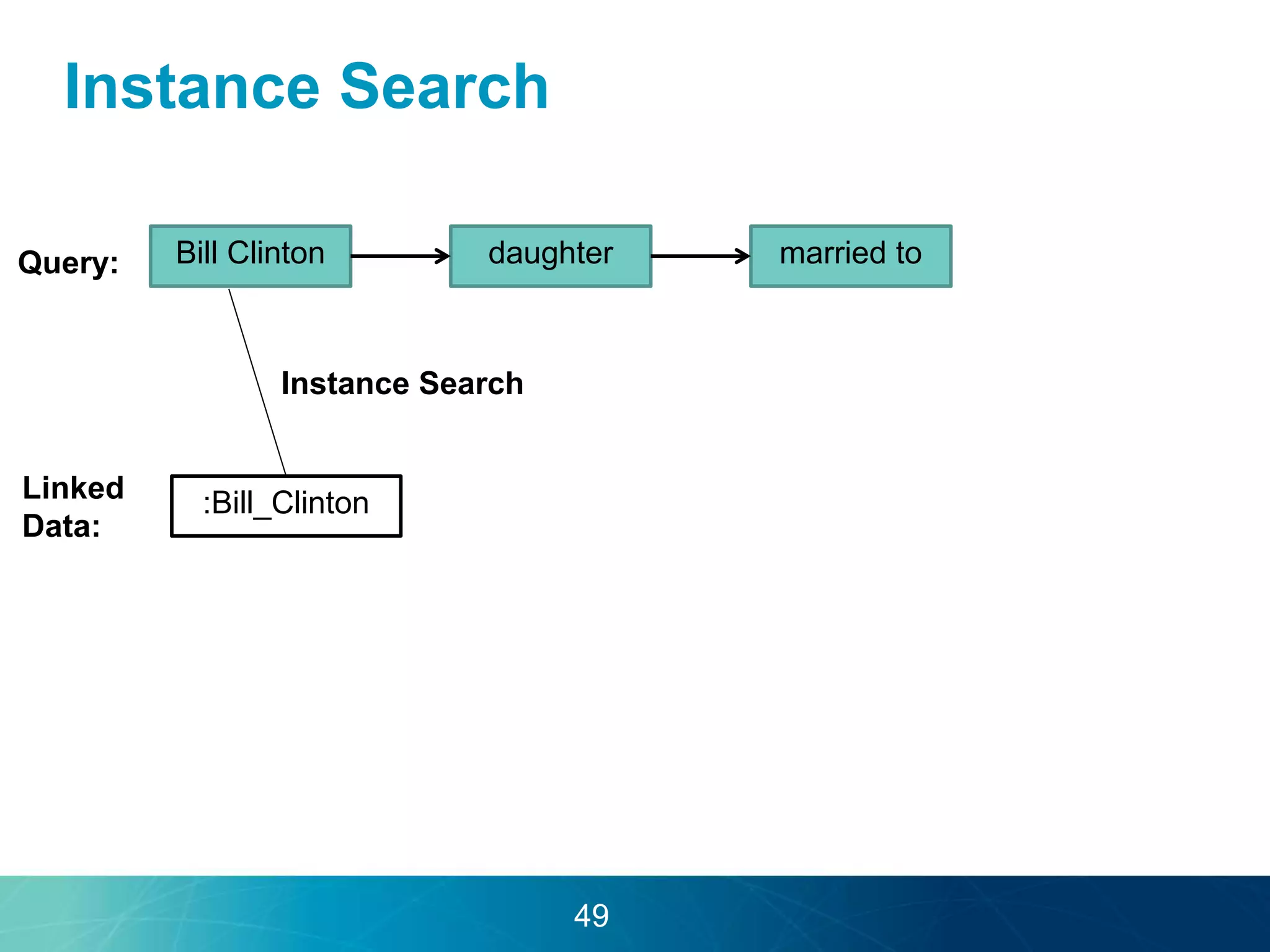

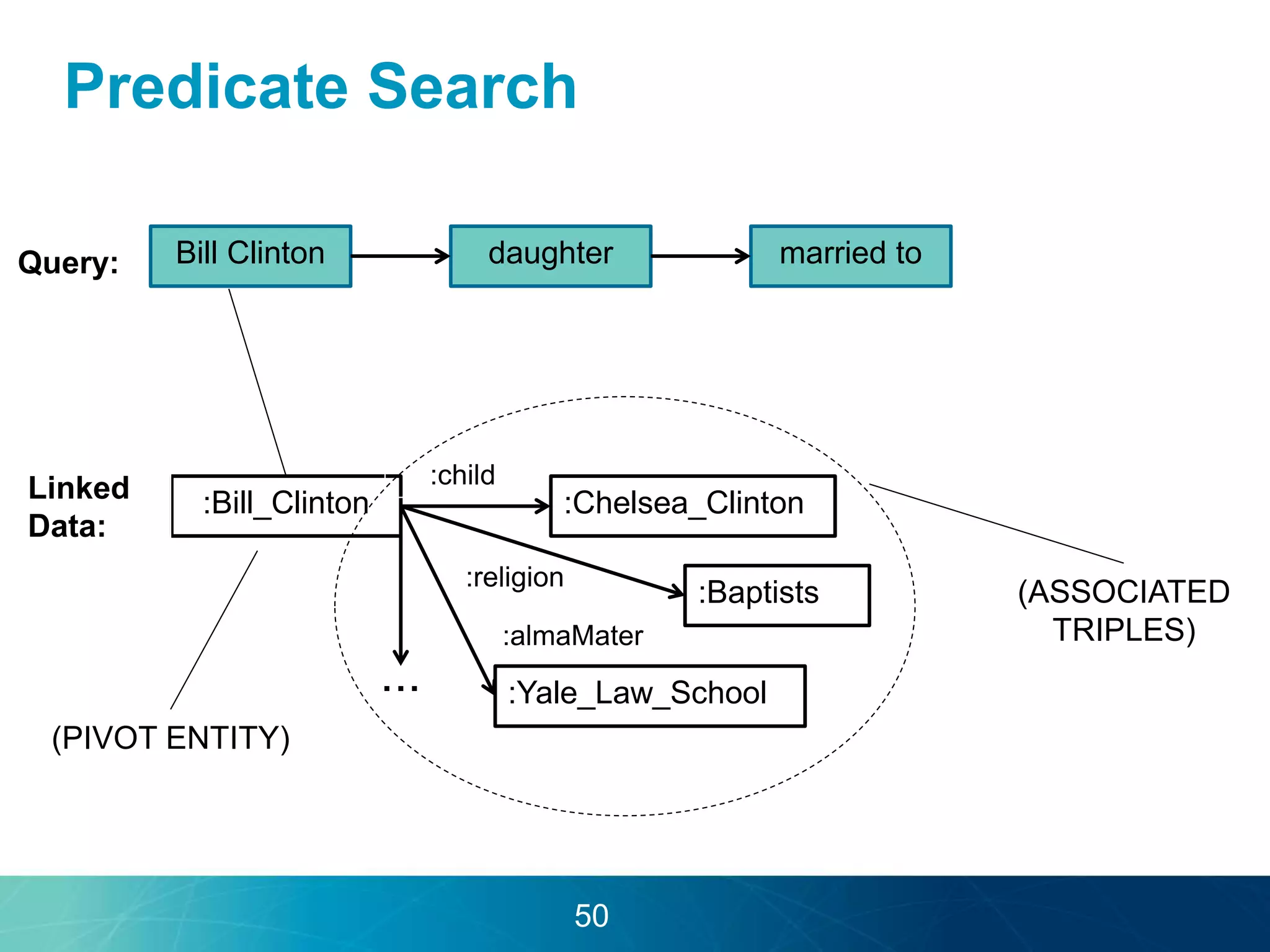

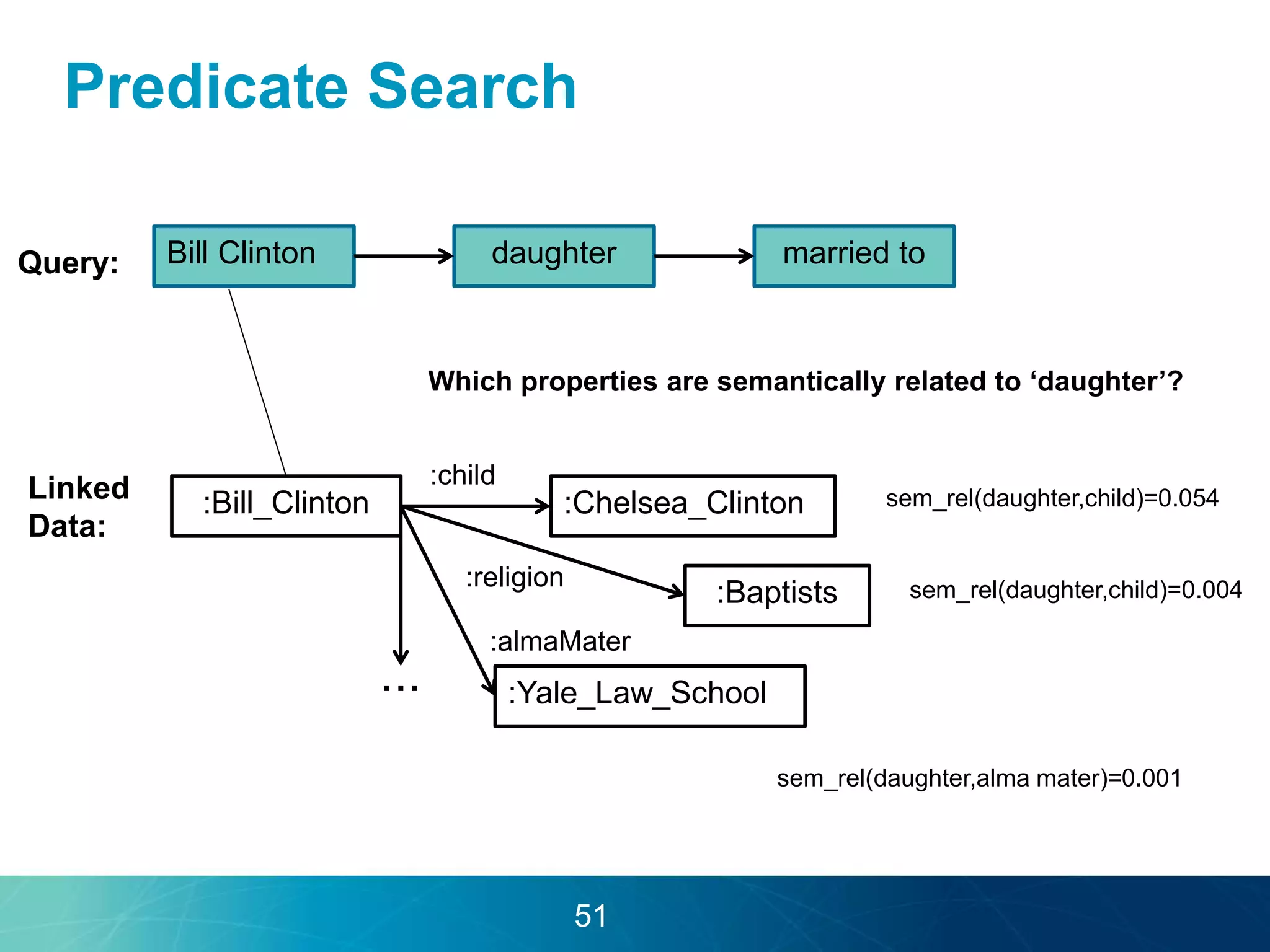

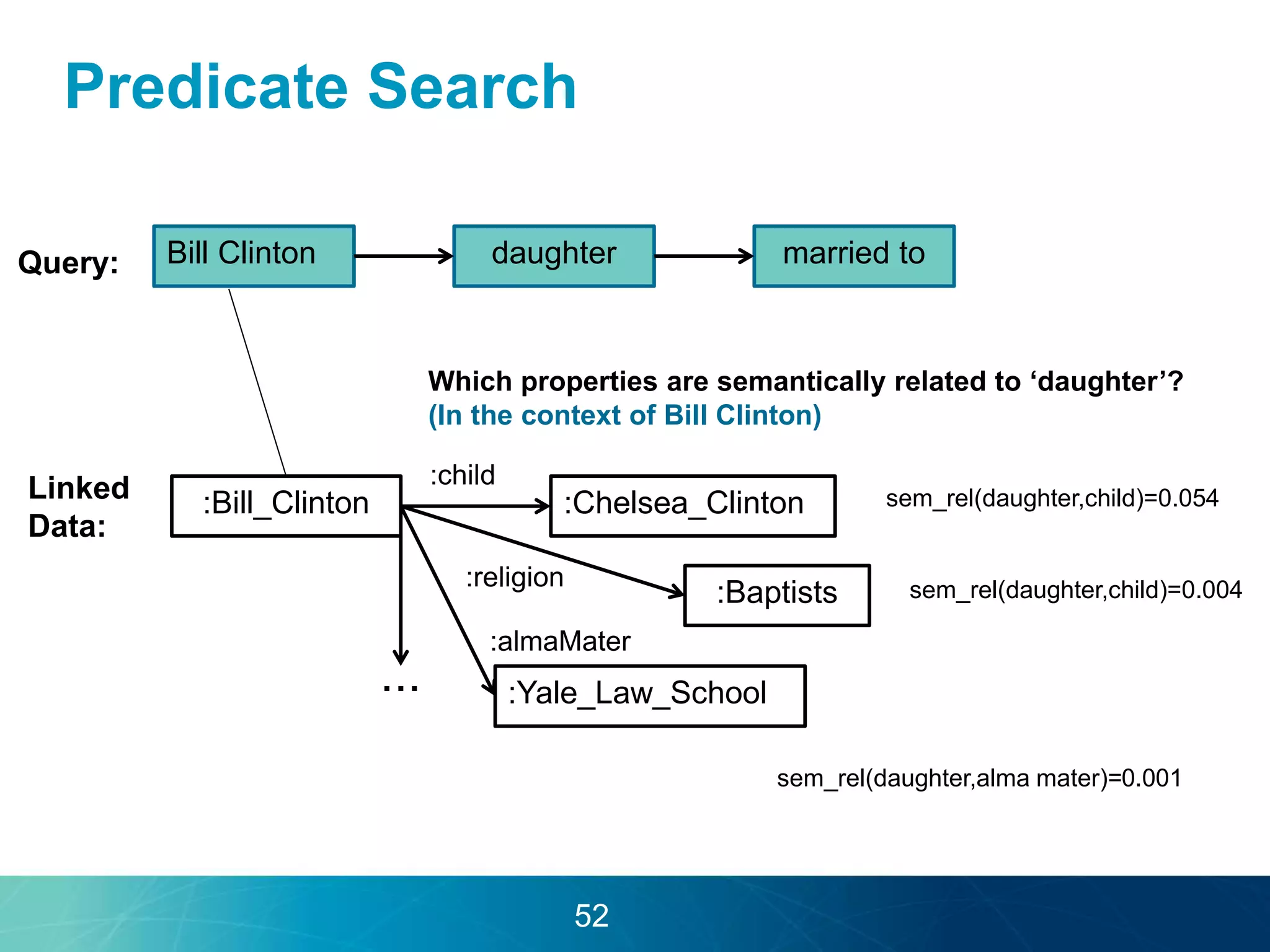

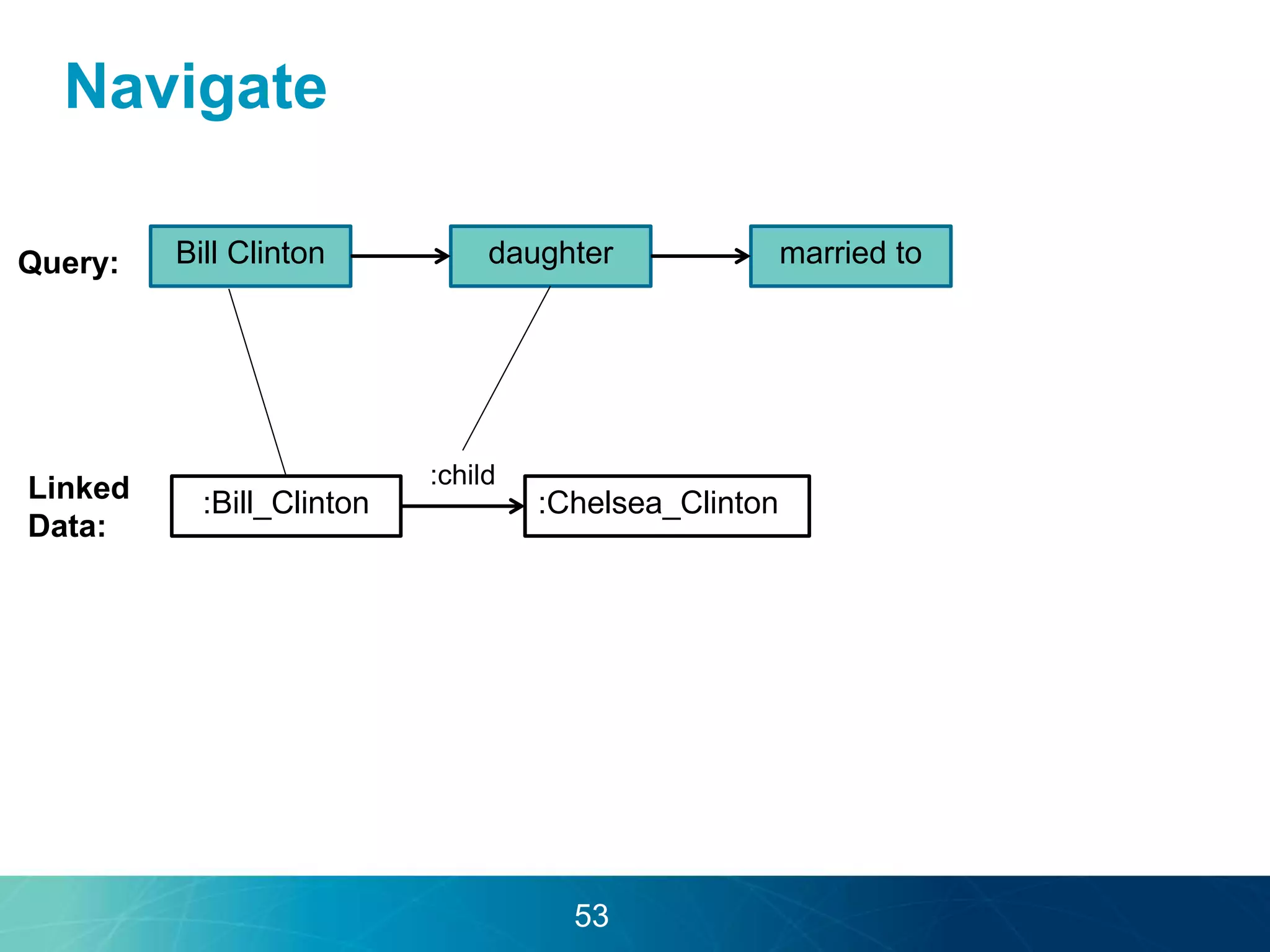

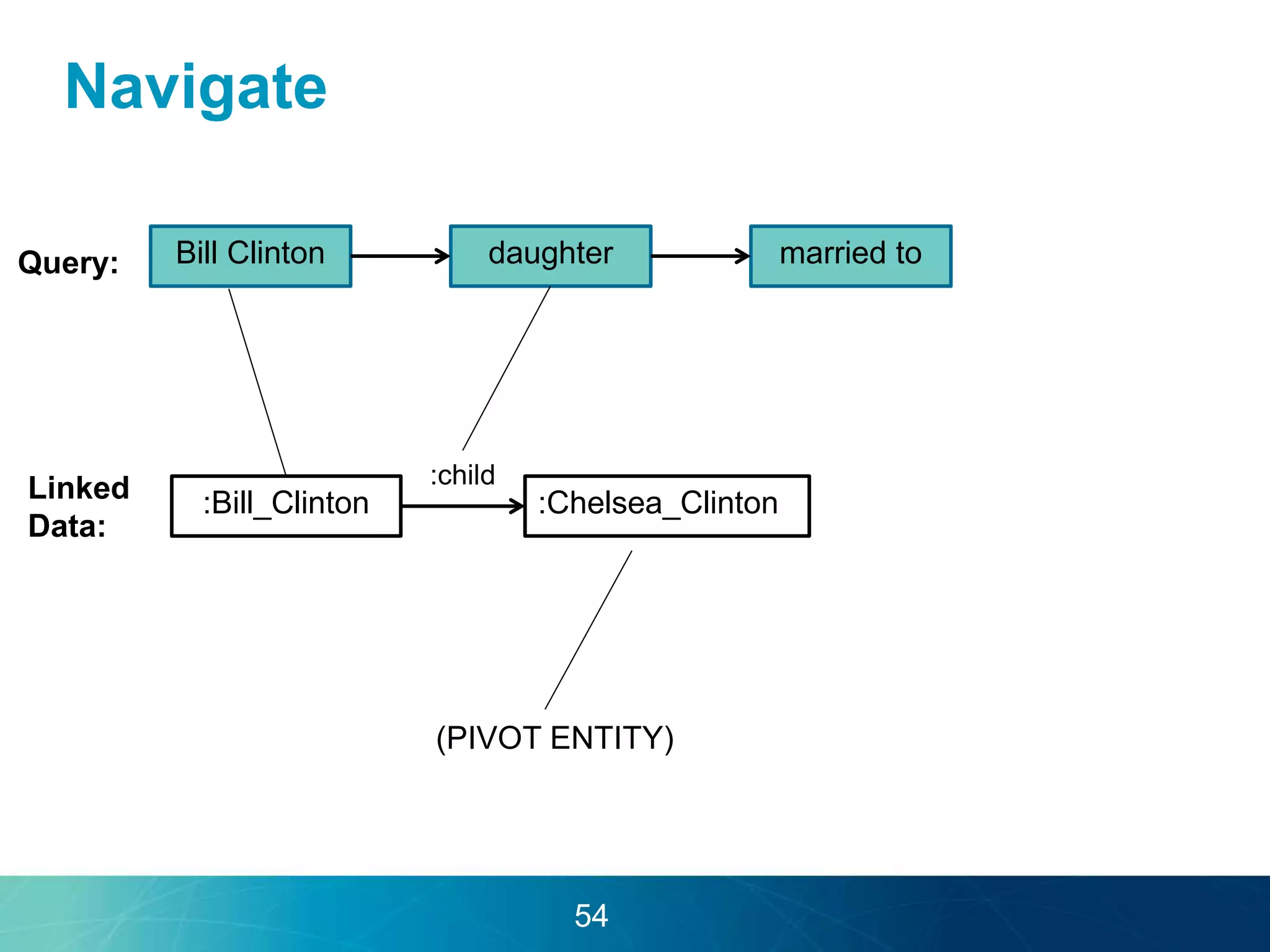

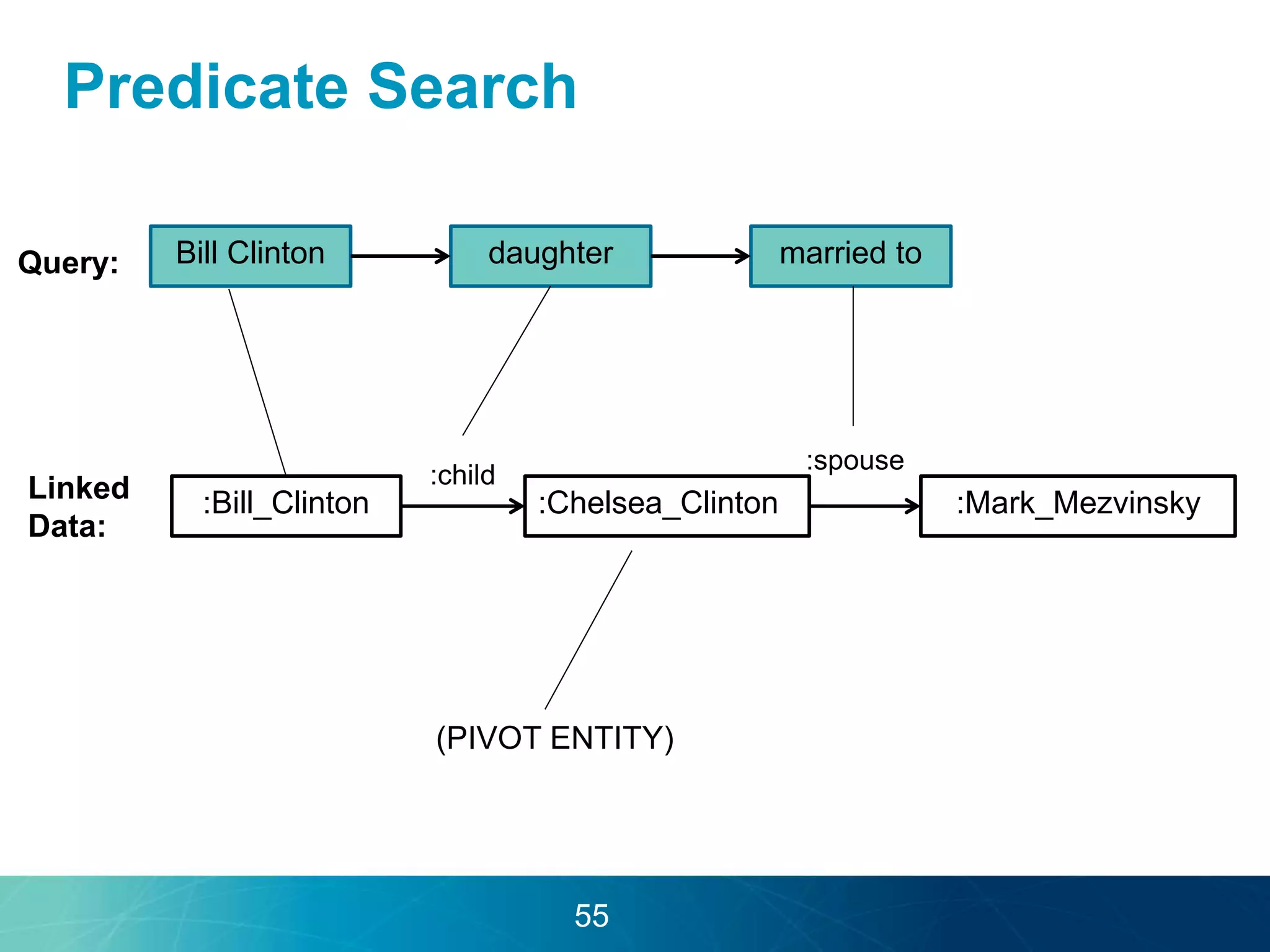

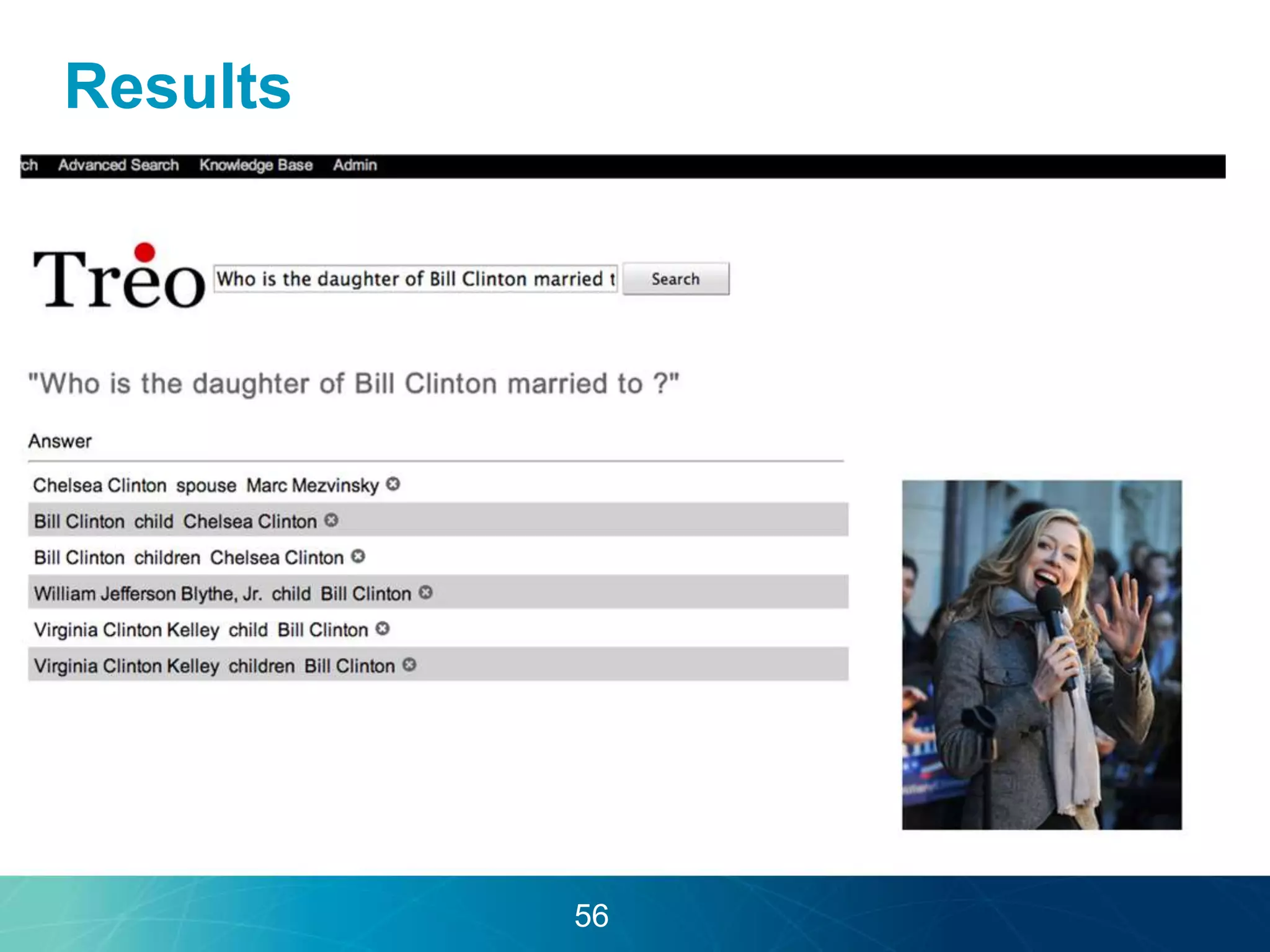

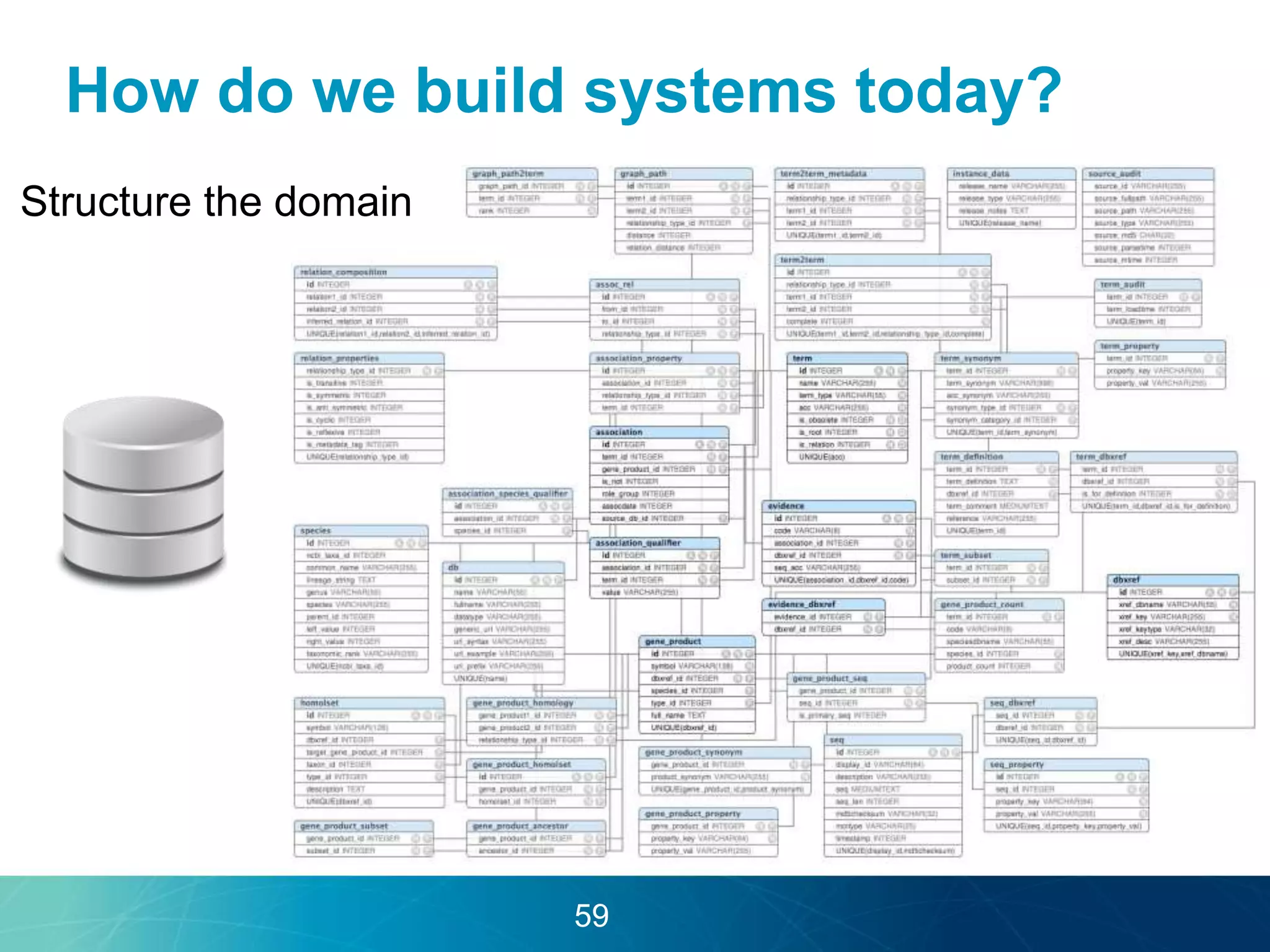

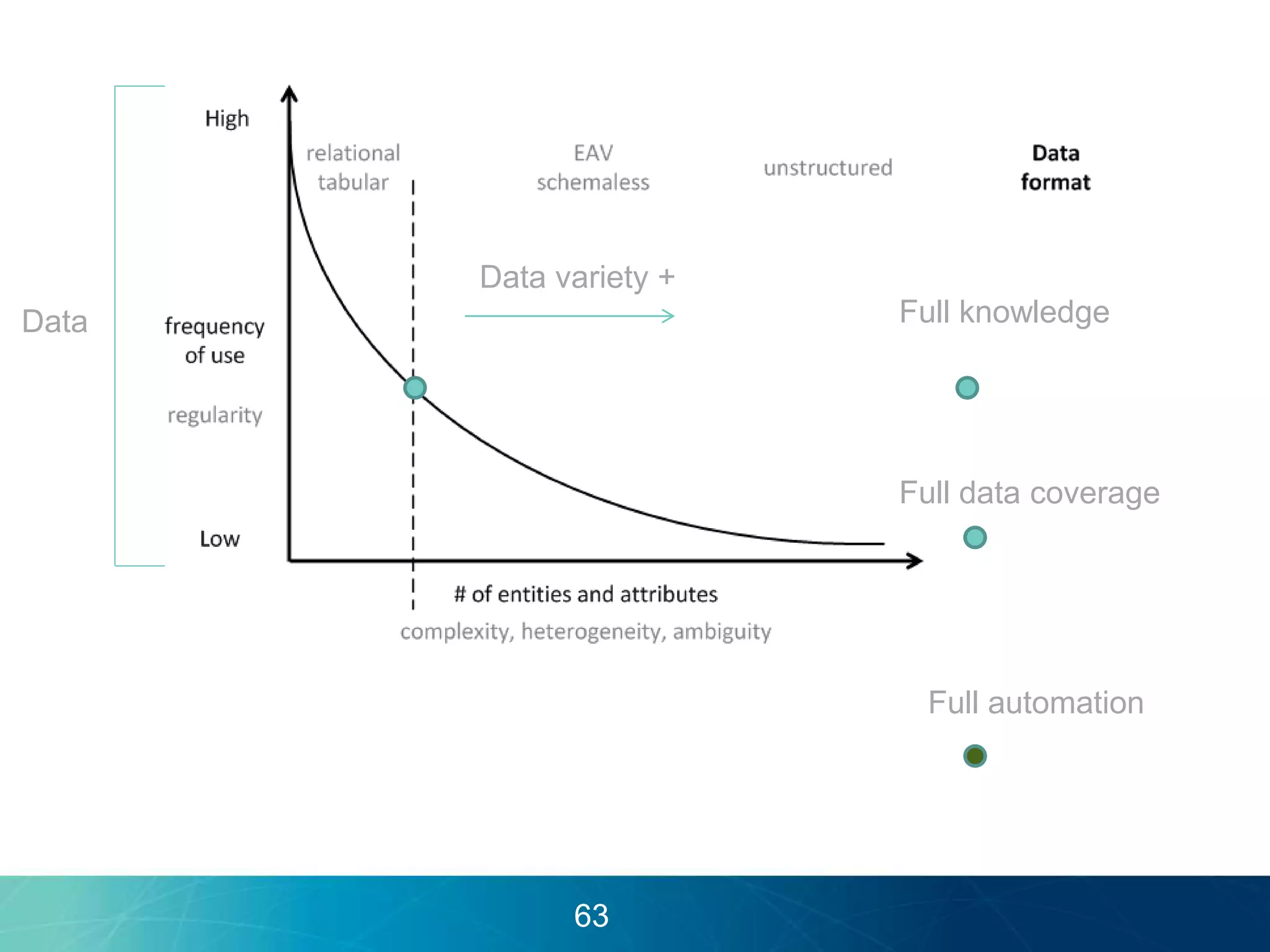

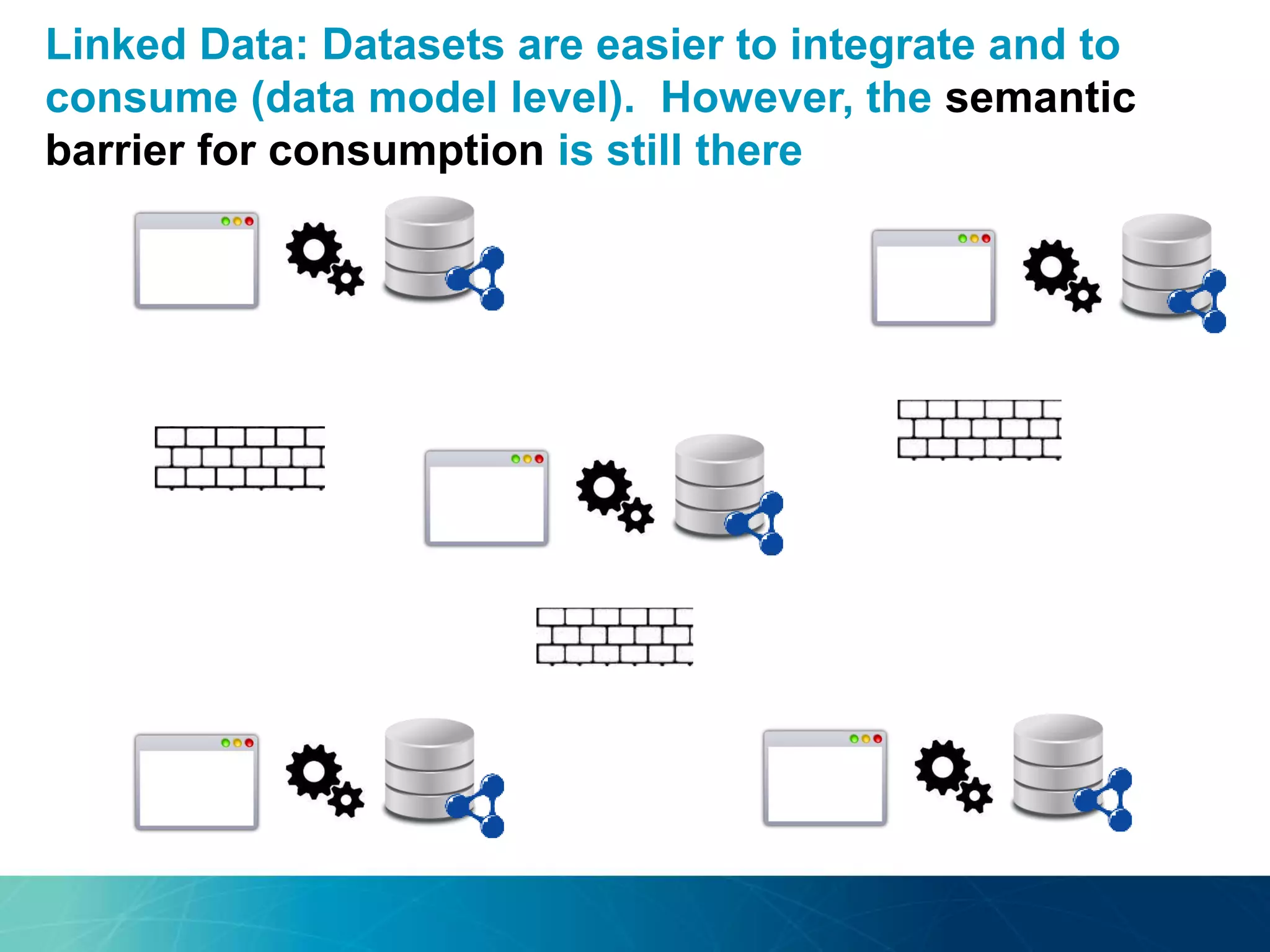

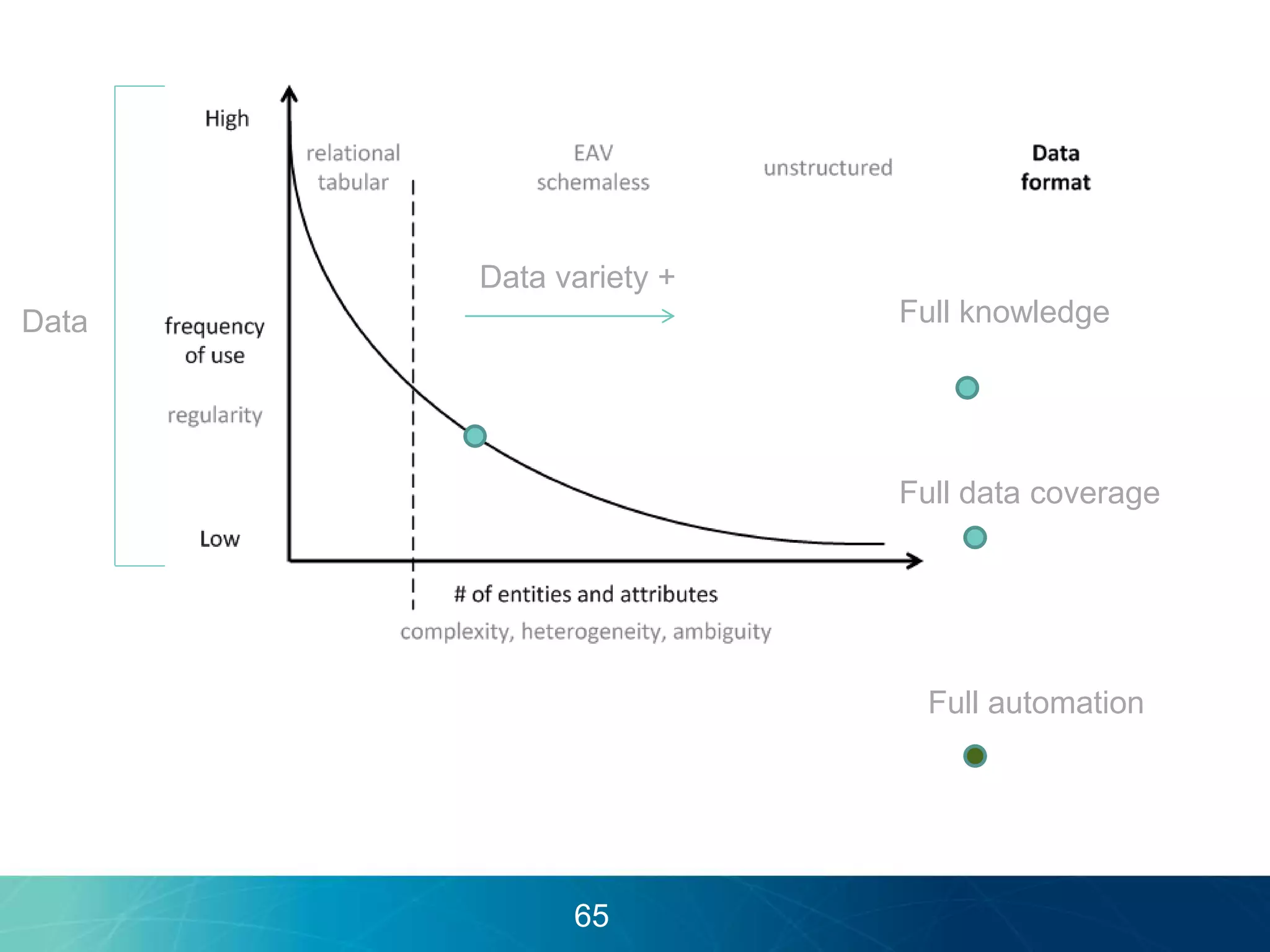

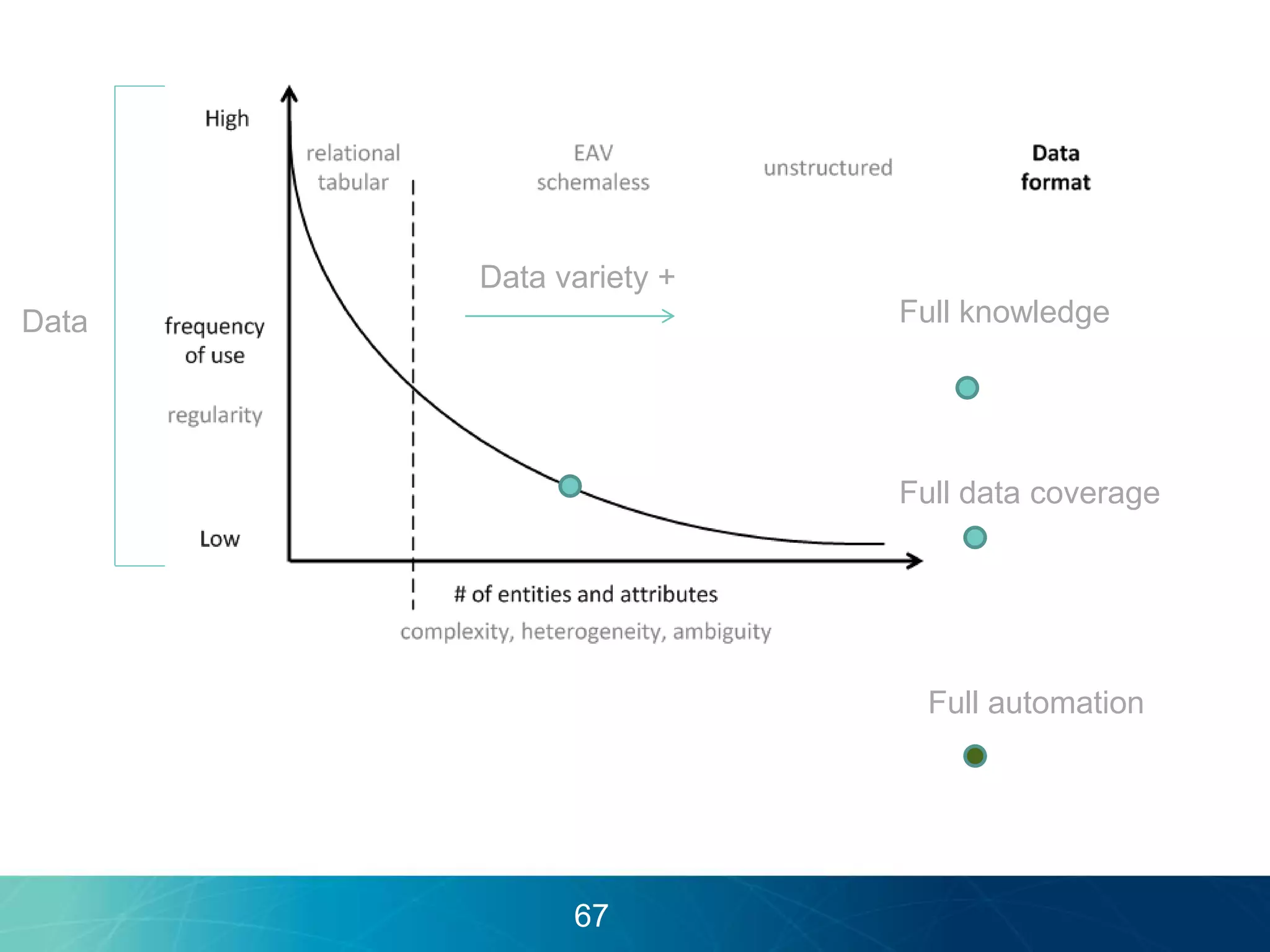

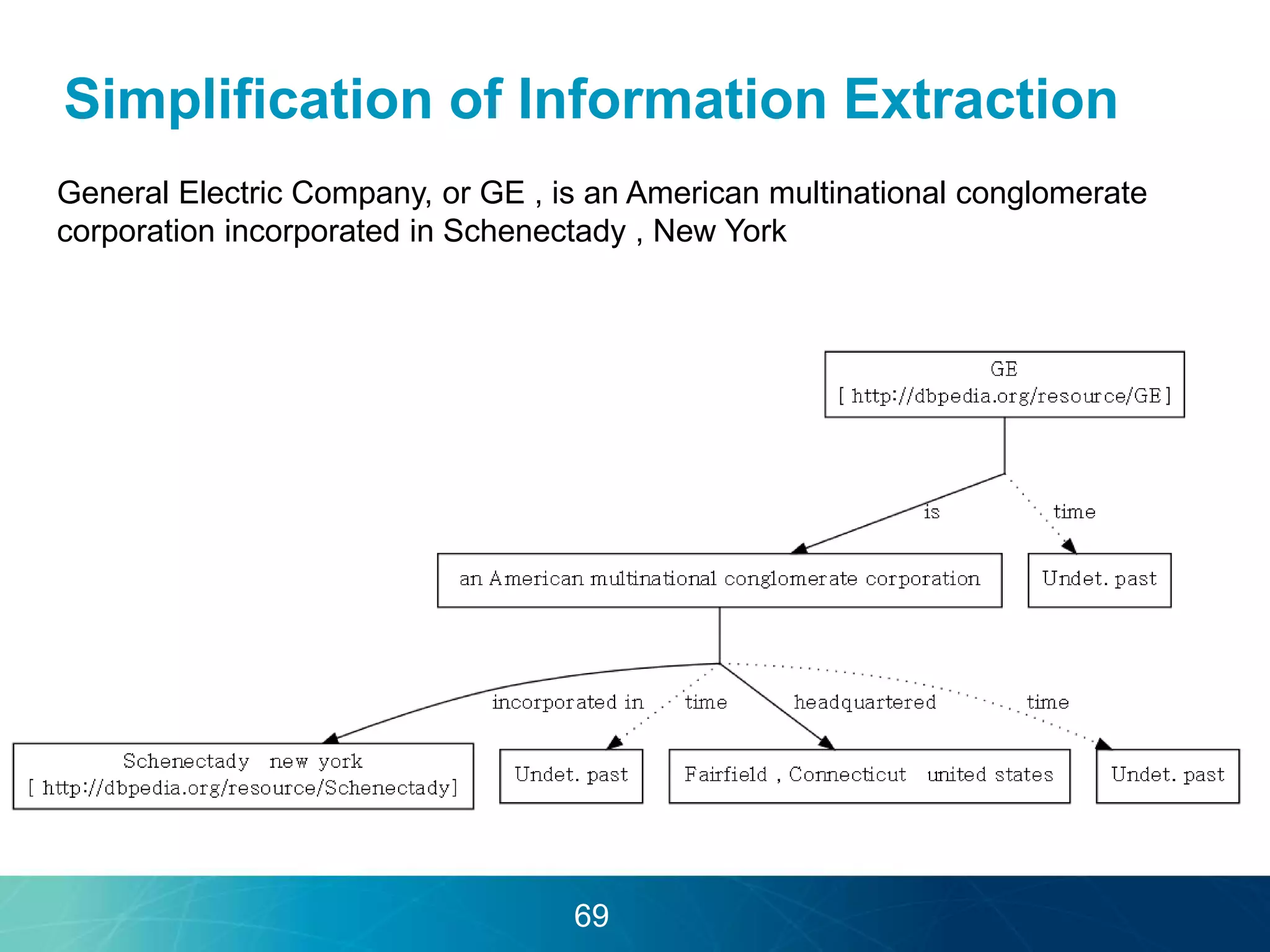

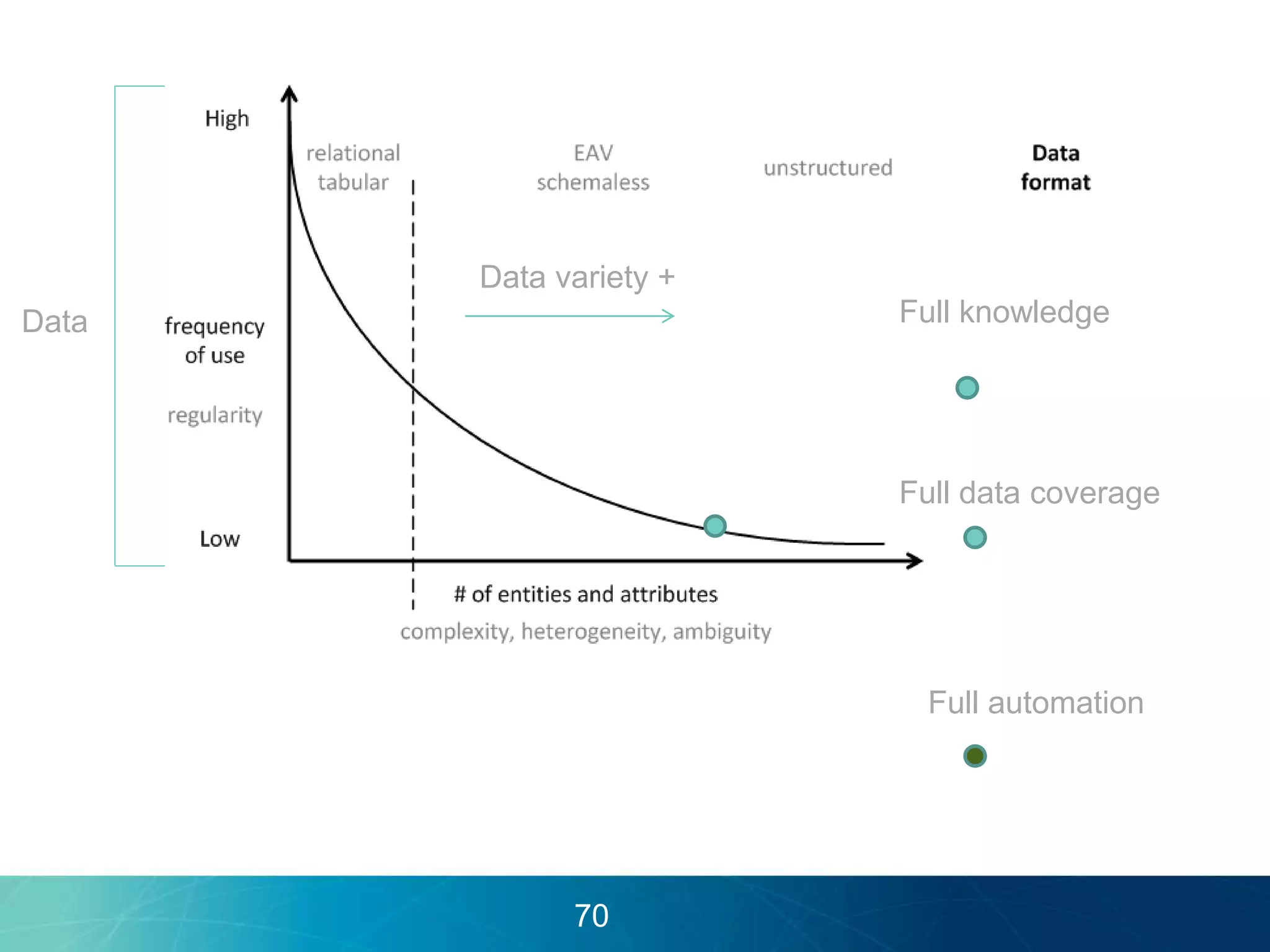

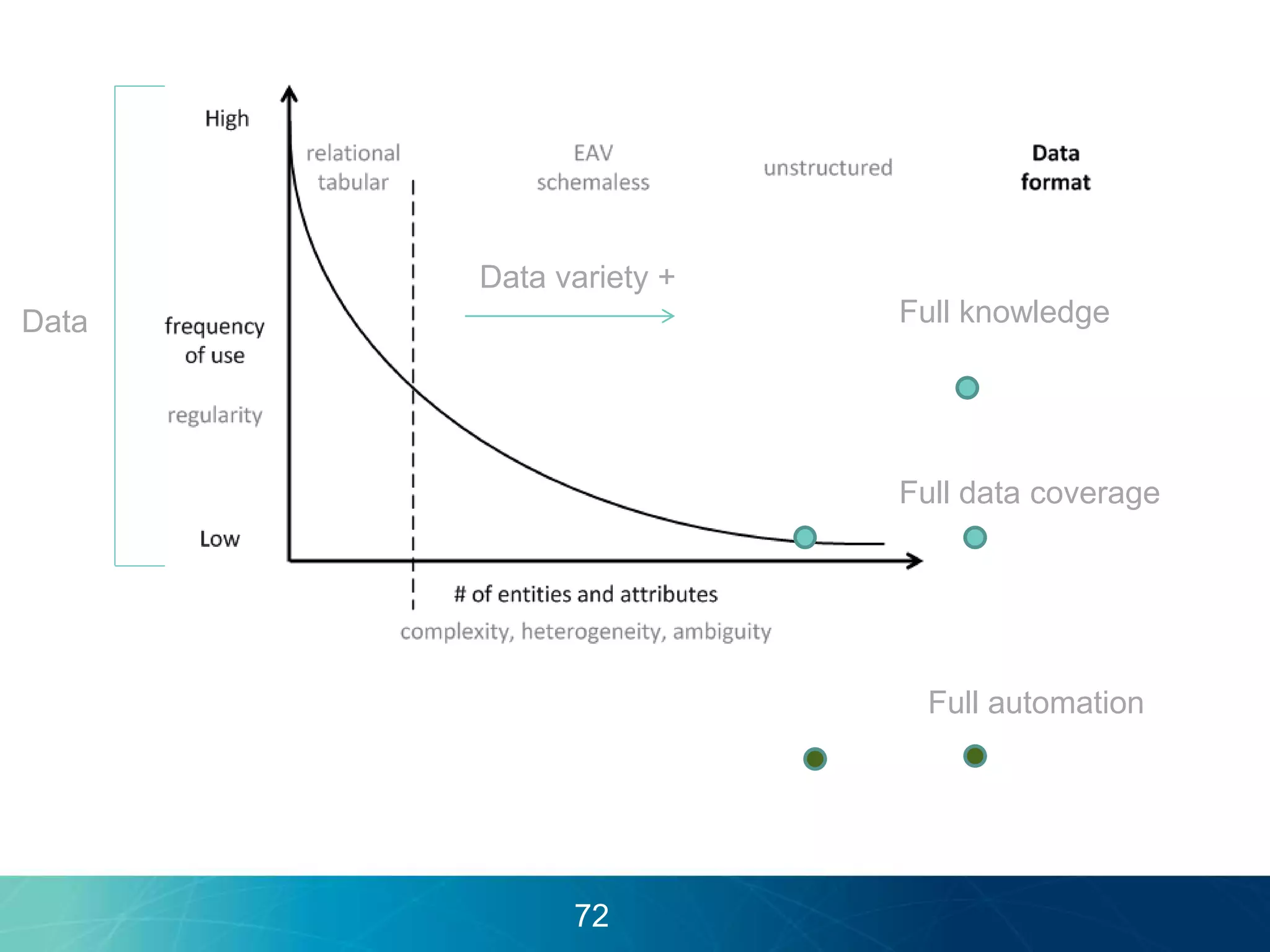

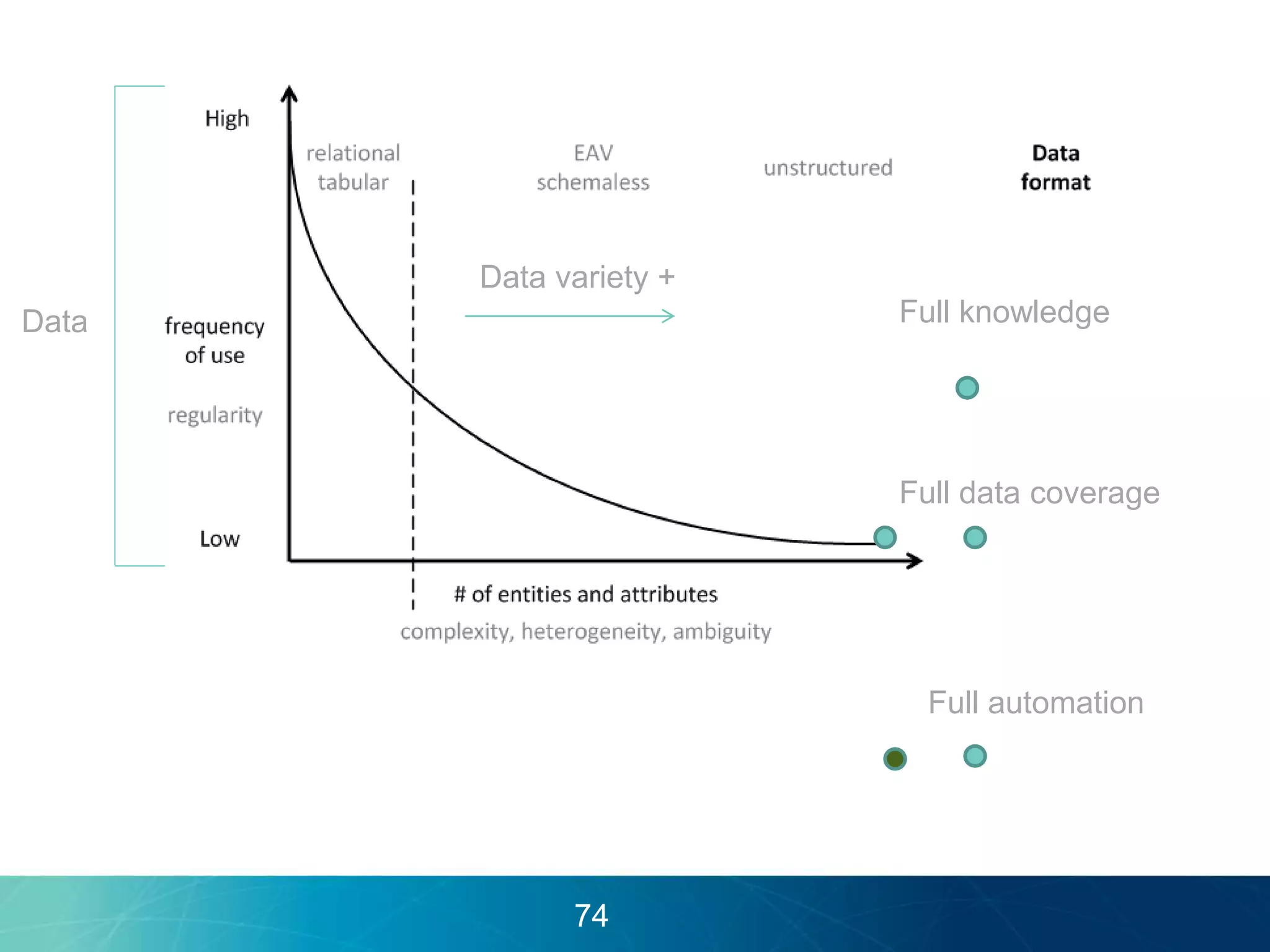

This document traces the evolution of database technologies toward schema-agnosticism and examines the challenges that semantic heterogeneity poses in a schema-less world. It highlights how distributional semantic models support querying complex, unstructured data through natural language interfaces, using the Treo QA system as a case study. The main takeaway is that existing semantic technologies can address major data management problems, and that schema-agnosticism is a key approach to making use of the variety and complexity of data.