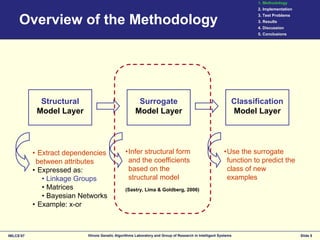

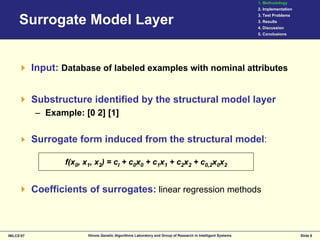

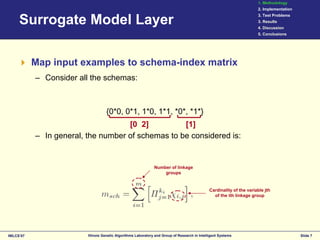

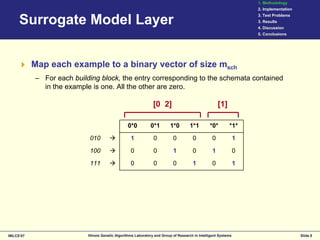

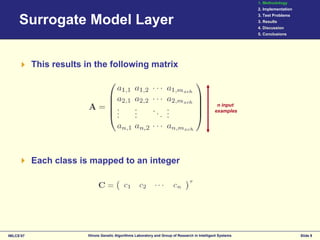

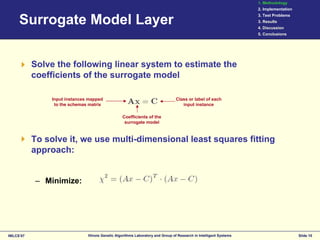

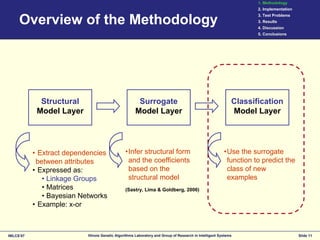

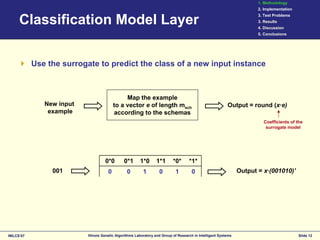

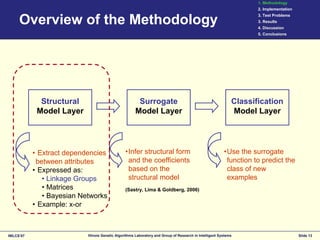

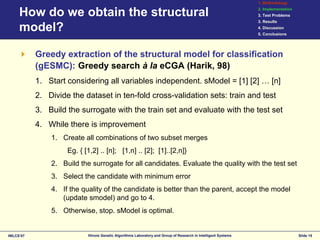

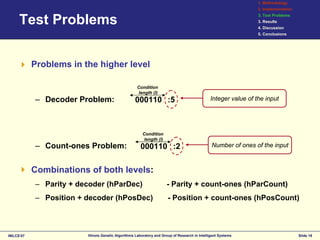

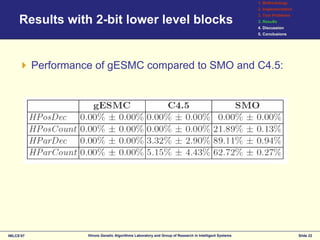

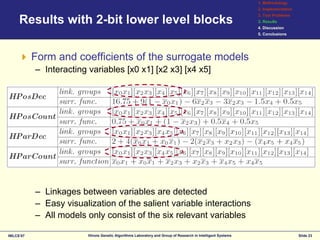

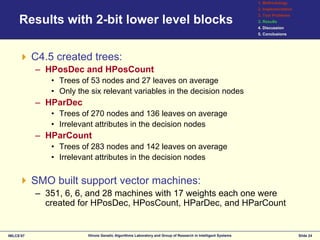

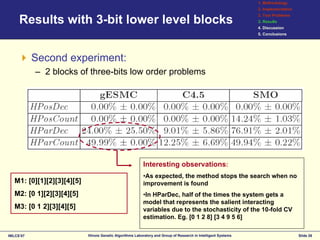

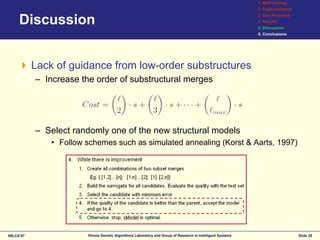

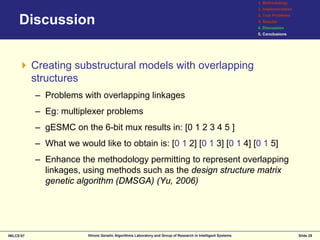

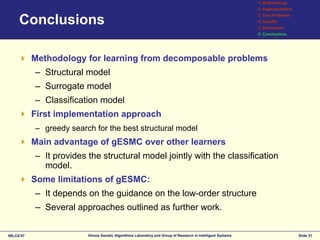

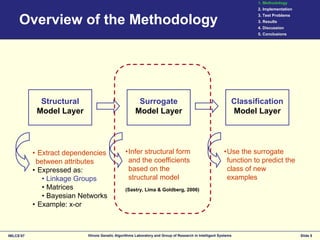

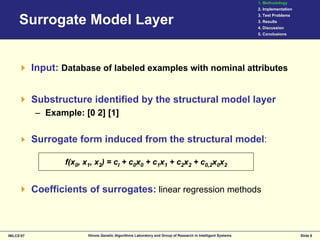

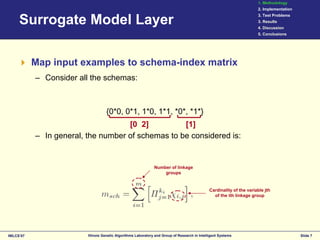

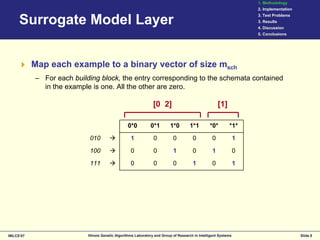

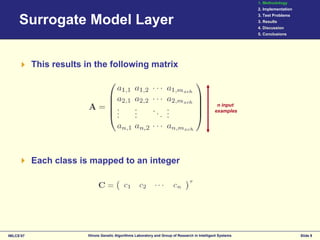

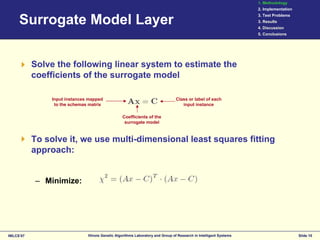

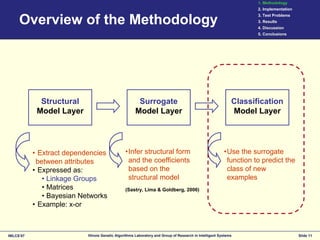

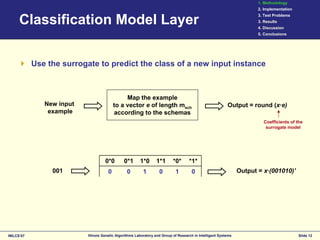

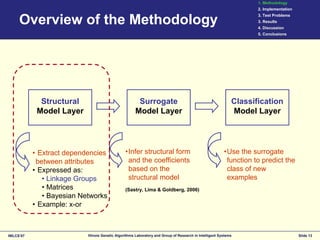

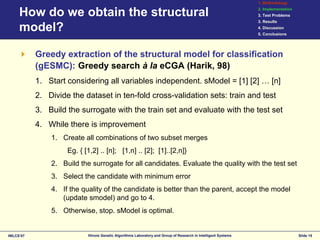

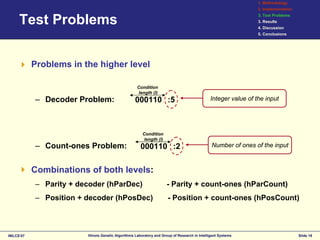

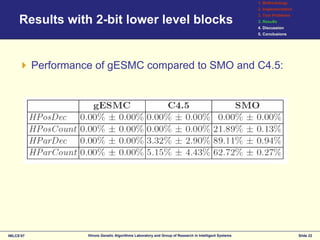

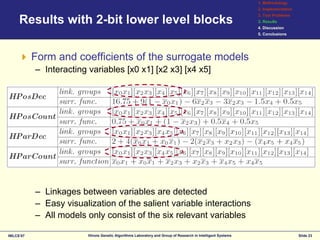

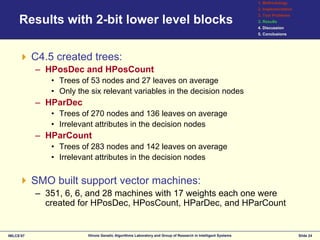

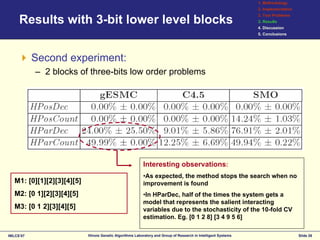

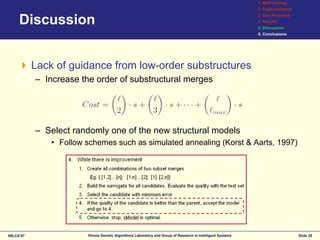

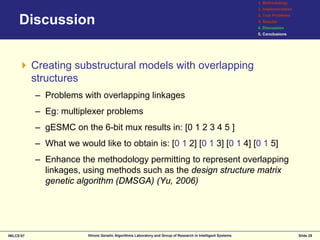

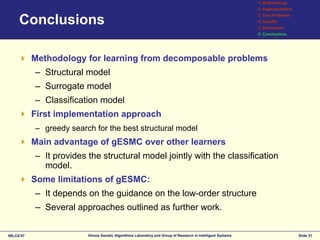

This presentation discusses the development of surrogates for decomposable classification problems using probabilistic models and regression techniques. It outlines a methodology for building structural surrogate models that classify new examples and evaluates their effectiveness in experiments on several test problems. The results indicate advantages of the proposed greedy extraction method over traditional approaches; limitations and potential improvements are also identified.