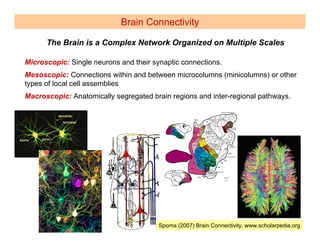

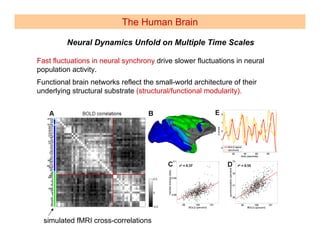

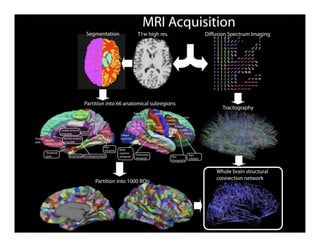

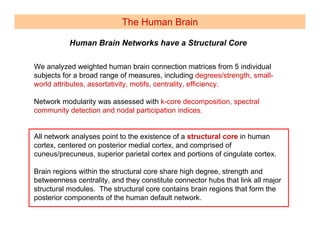

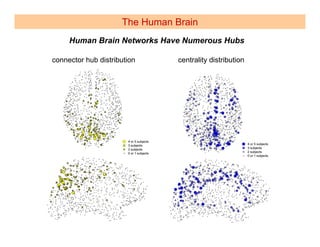

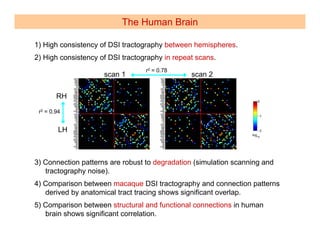

The document discusses the structure and function of the brain as a complex network. It notes that the brain exhibits both segregation and integration at multiple scales, from individual neurons to whole regions. The brain's structural connectivity forms a small-world network: high local clustering supports specialized processing within clusters, while short path lengths support integrated processing between regions. Computational models built on this structural connectivity can capture large-scale brain activity and dynamics.
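
The small-world property mentioned above is usually quantified with two graph measures: the average clustering coefficient (a proxy for segregation) and the average shortest path length (a proxy for integration). The following is a minimal sketch on a toy graph, not the document's own method or data. It assumes an undirected, unweighted graph stored as a dictionary of neighbour sets, and it uses the Newman-Watts variant of the small-world construction (random shortcuts are added to a ring lattice rather than rewiring existing edges), so the graph always stays connected. The function names, the graph size, and the shortcut probability are all illustrative assumptions.

```python
import random
from collections import deque
from itertools import combinations


def ring_lattice(n, k):
    """Ring of n nodes, each linked to its k nearest neighbours (k even)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj


def add_shortcuts(adj, p, seed=0):
    """Return a copy of adj with random extra edges (Newman-Watts variant).

    For each existing edge, with probability p, one endpoint gains a link
    to a randomly chosen node. No edge is ever removed.
    """
    rng = random.Random(seed)
    new = {u: set(vs) for u, vs in adj.items()}
    nodes = sorted(adj)
    edges = sorted((u, v) for u in adj for v in adj[u] if u < v)
    for u, _ in edges:
        if rng.random() < p:
            w = rng.choice(nodes)
            if w != u:
                new[u].add(w)
                new[w].add(u)
    return new


def average_clustering(adj):
    """Mean fraction of each node's neighbour pairs that are themselves linked."""
    total = 0.0
    for nbrs in adj.values():
        d = len(nbrs)
        if d < 2:
            continue  # nodes with fewer than two neighbours contribute 0
        links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        total += 2.0 * links / (d * (d - 1))
    return total / len(adj)


def average_path_length(adj):
    """Mean shortest-path length over all reachable ordered node pairs (BFS)."""
    total, pairs = 0, 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs


# Toy comparison: a regular lattice versus the same lattice with shortcuts.
lattice = ring_lattice(20, 4)
small_world = add_shortcuts(lattice, p=0.2, seed=1)
for name, g in [("lattice", lattice), ("with shortcuts", small_world)]:
    print(name, round(average_clustering(g), 3), round(average_path_length(g), 3))
```

Because shortcuts are only added, the average path length of the modified graph can never exceed that of the lattice, while most of the local clustering is typically retained. This combination is what the small-world description refers to. Real structural connectomes are weighted and often directed, so an analysis of actual brain data would need weighted versions of both measures.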