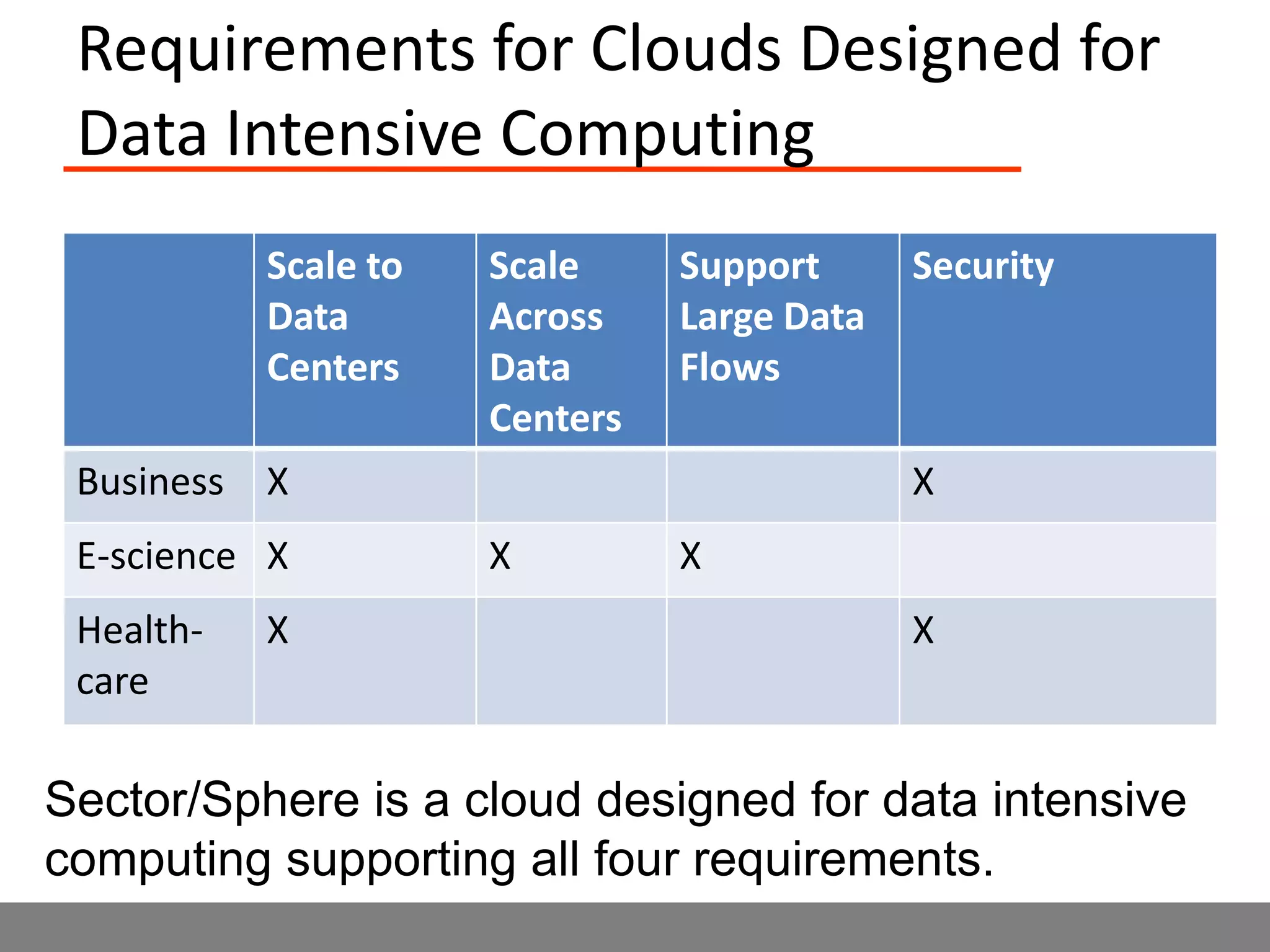

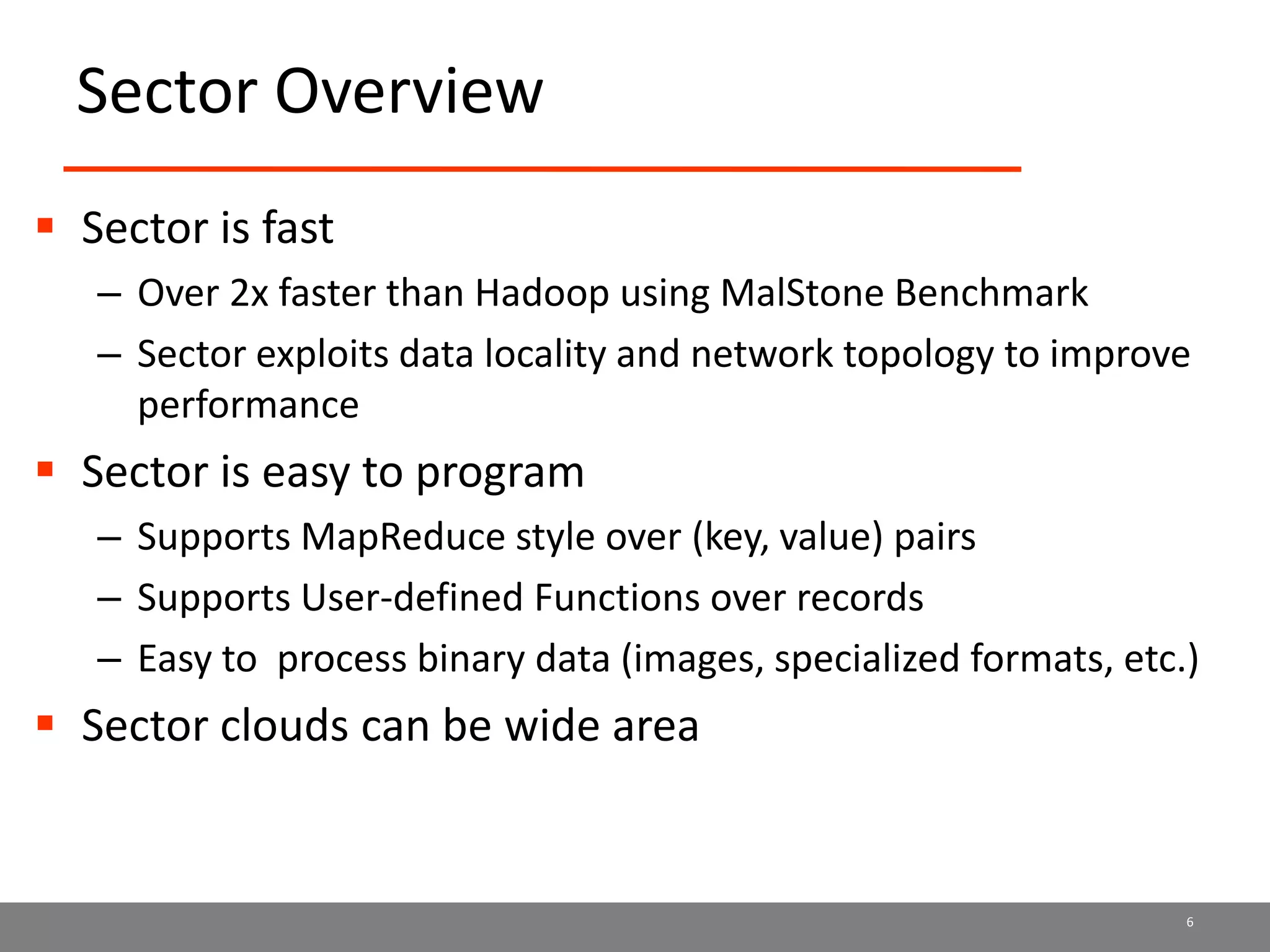

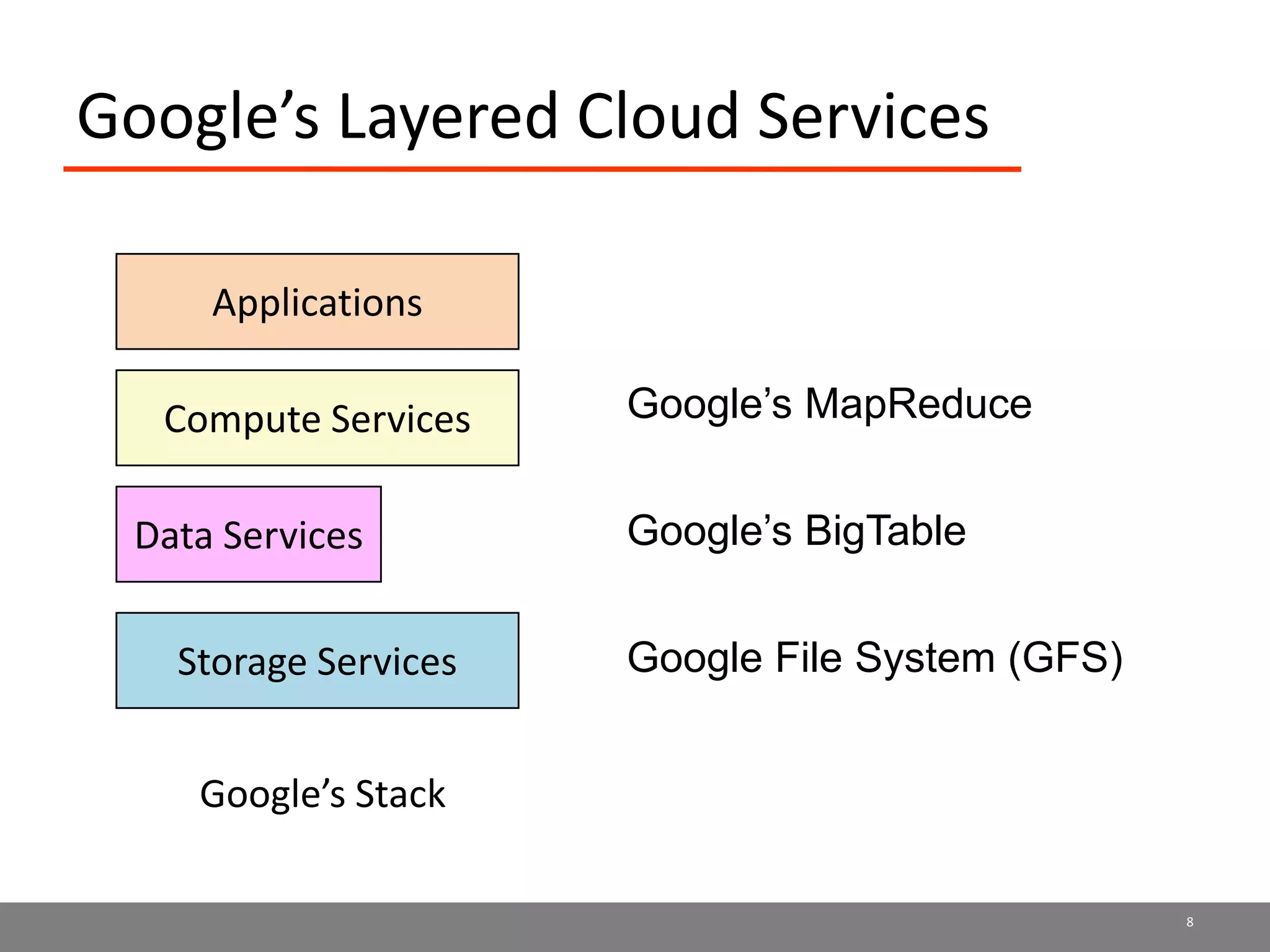

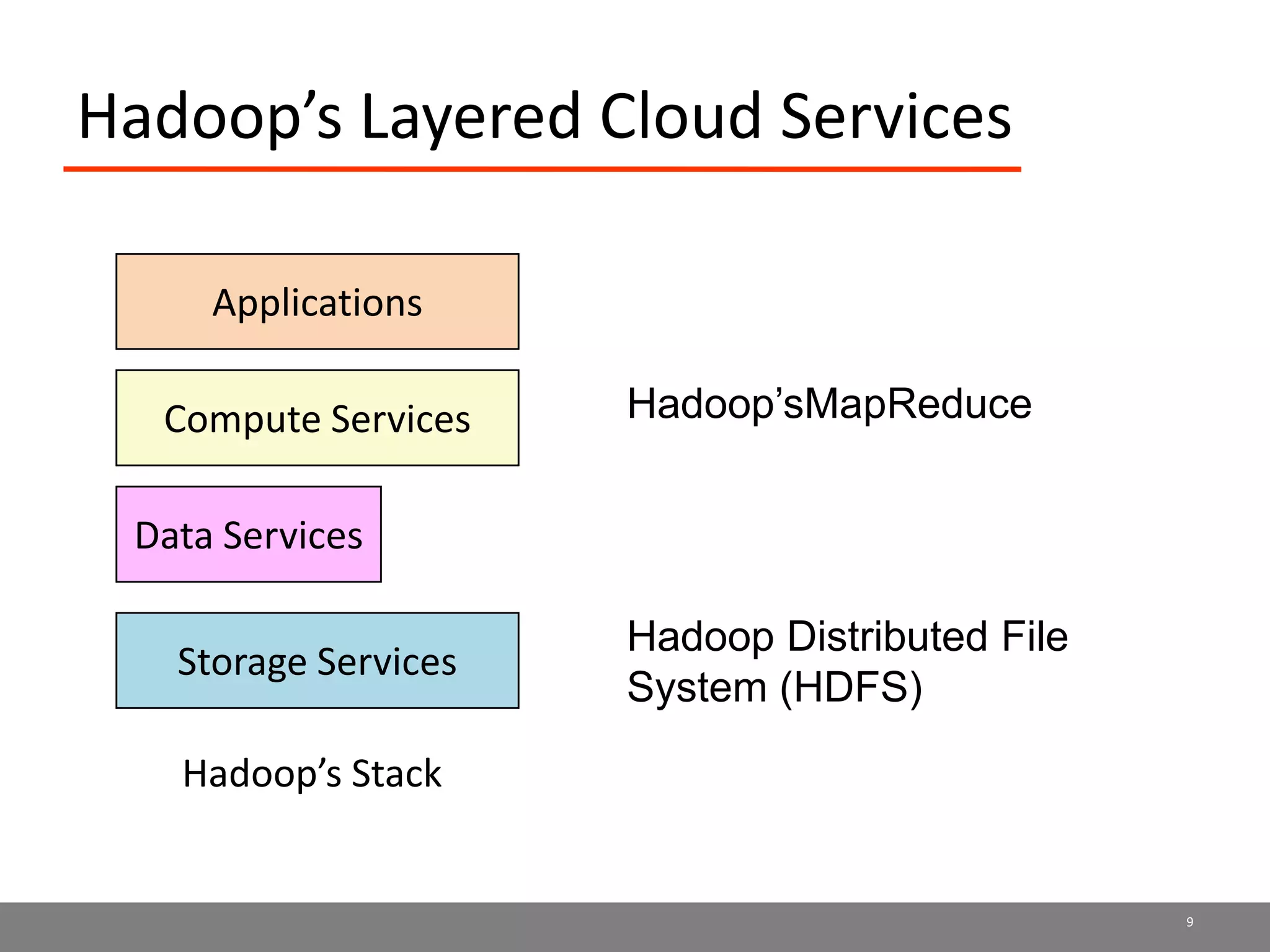

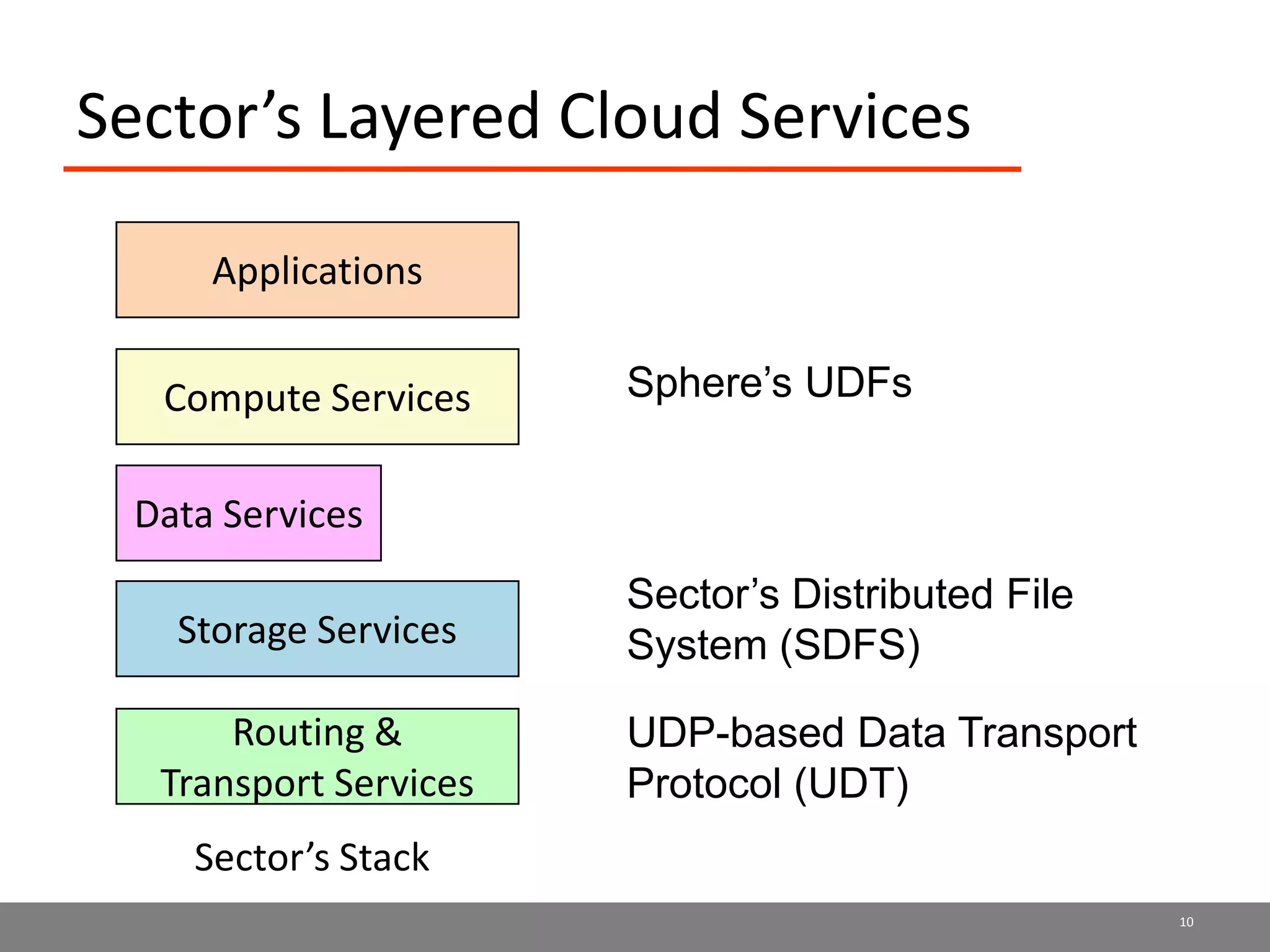

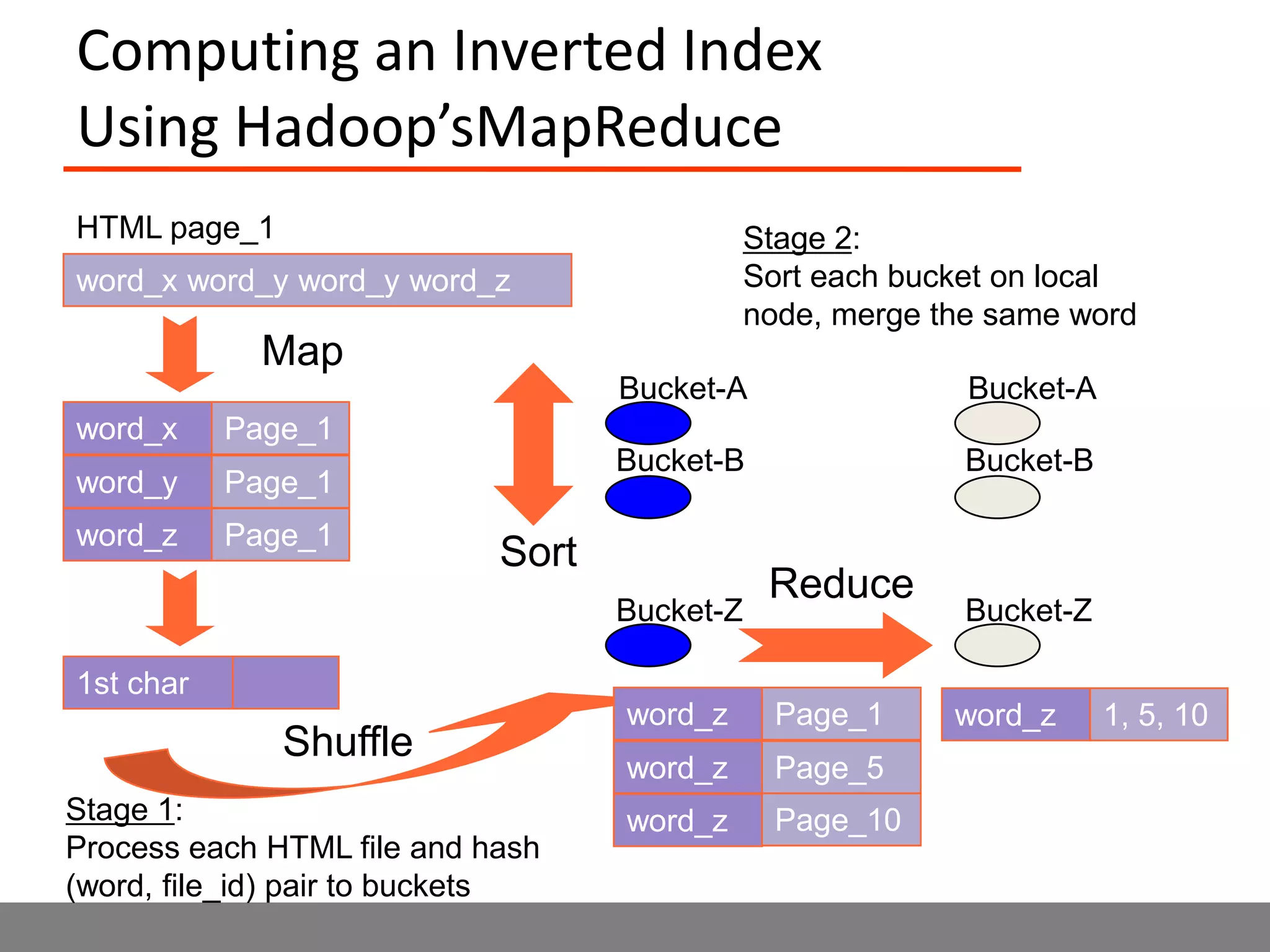

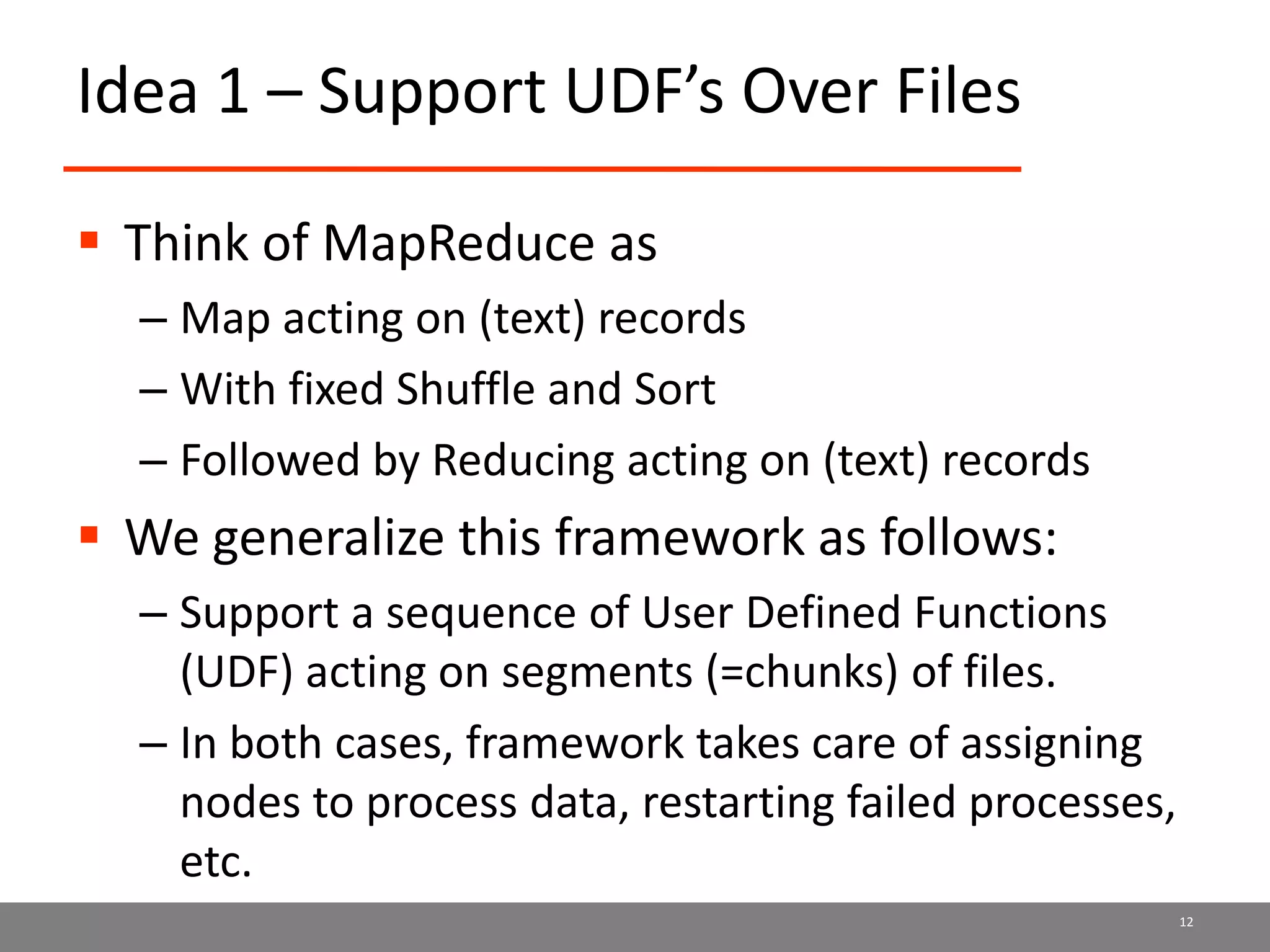

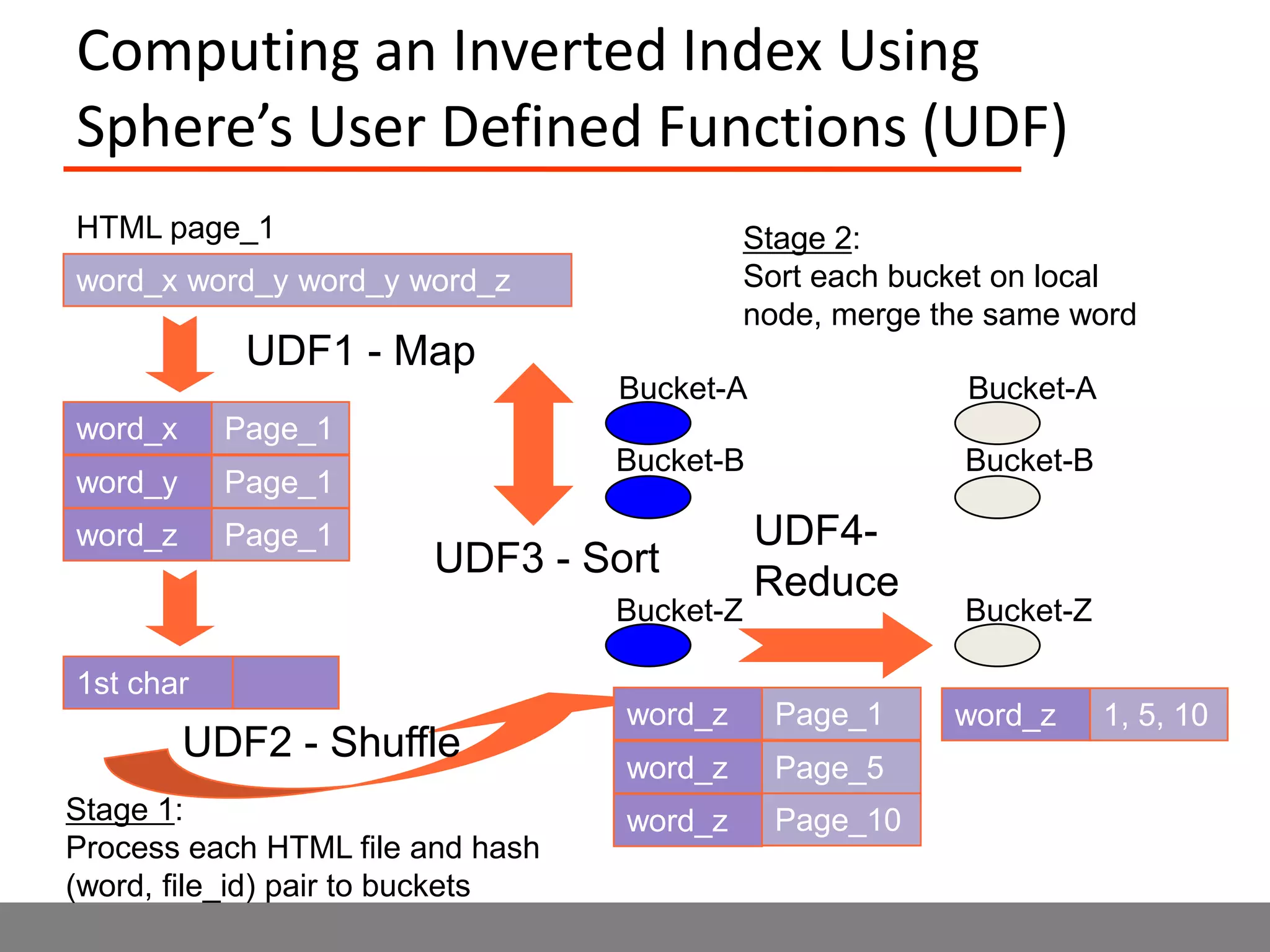

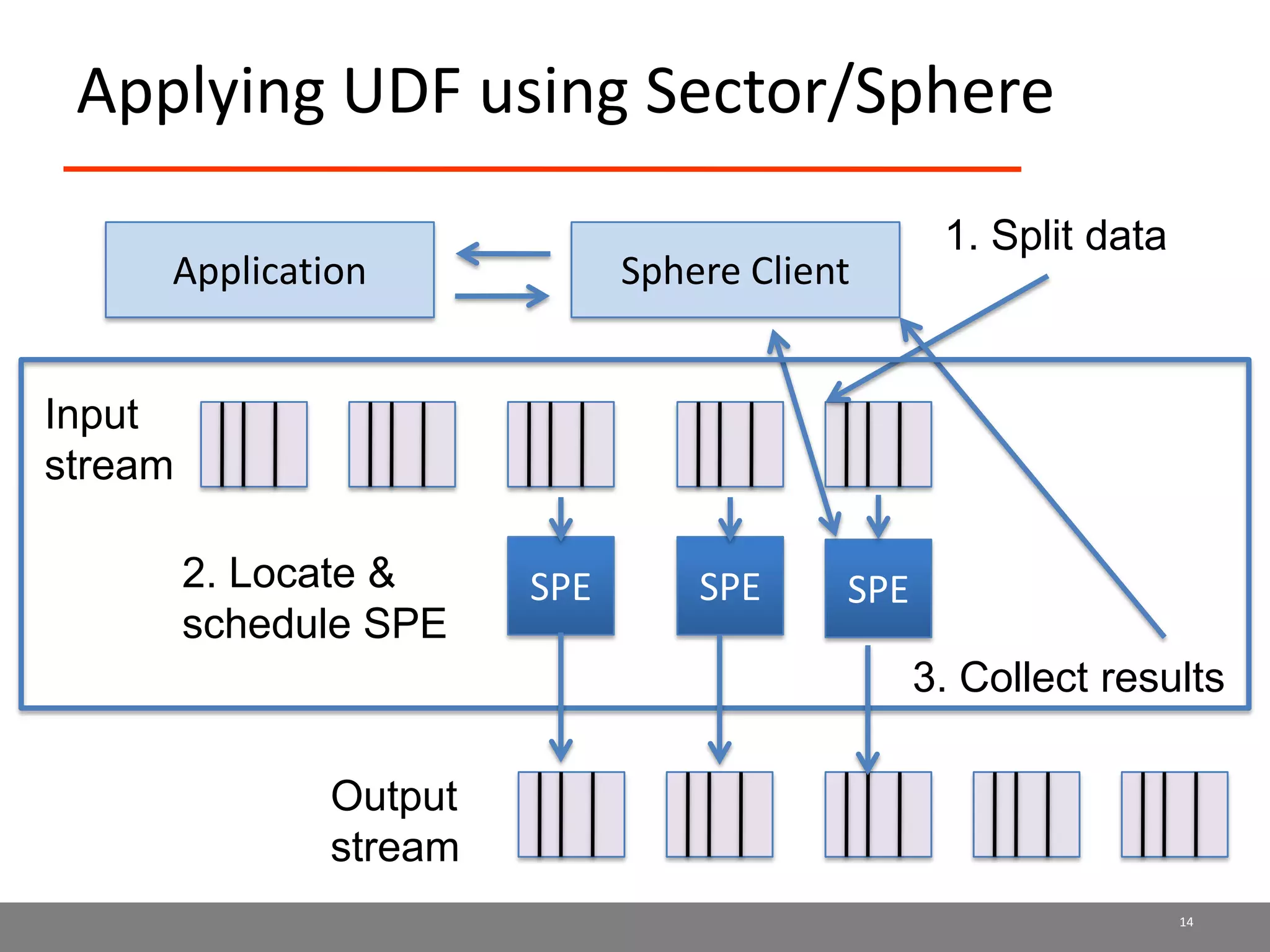

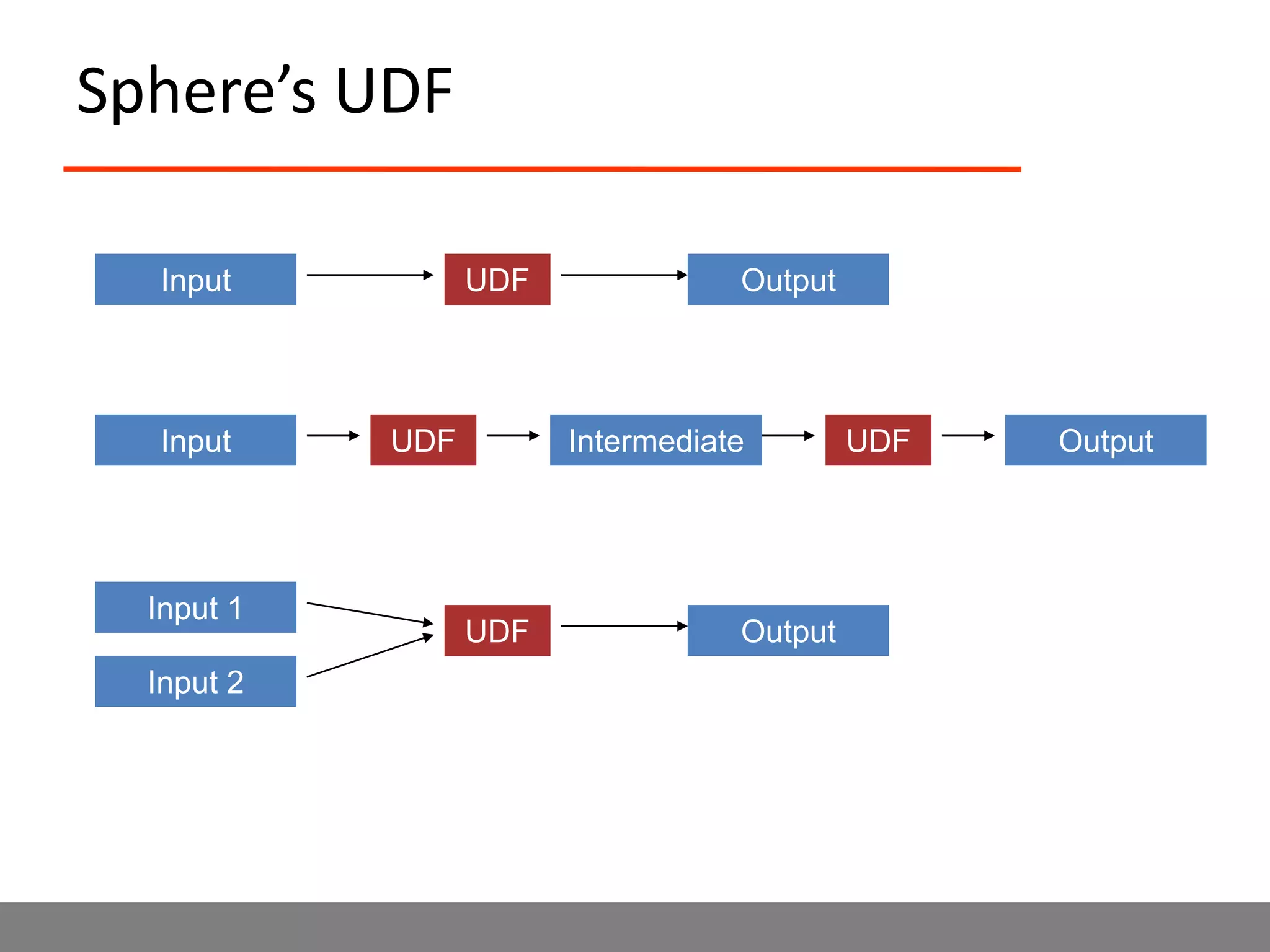

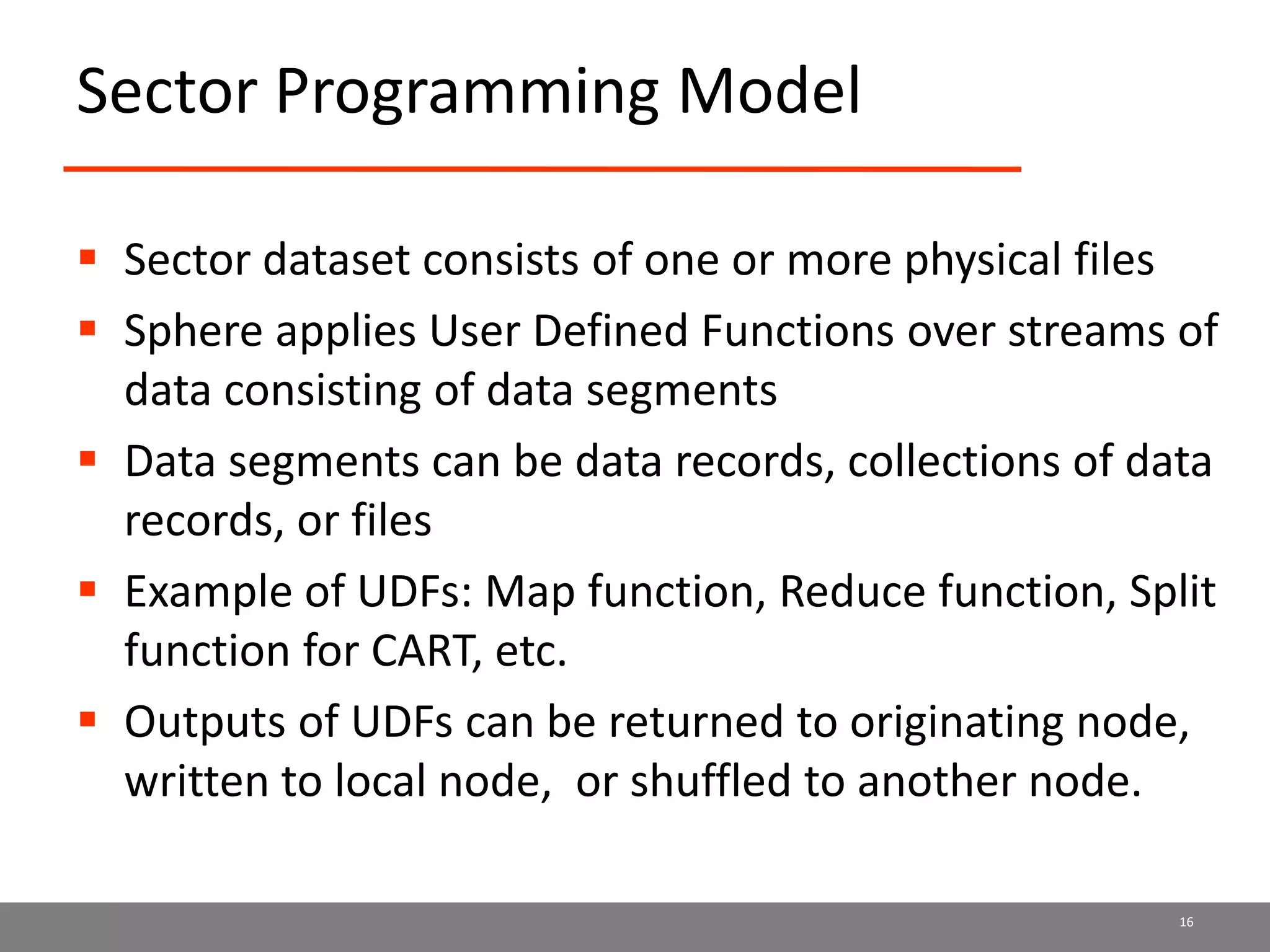

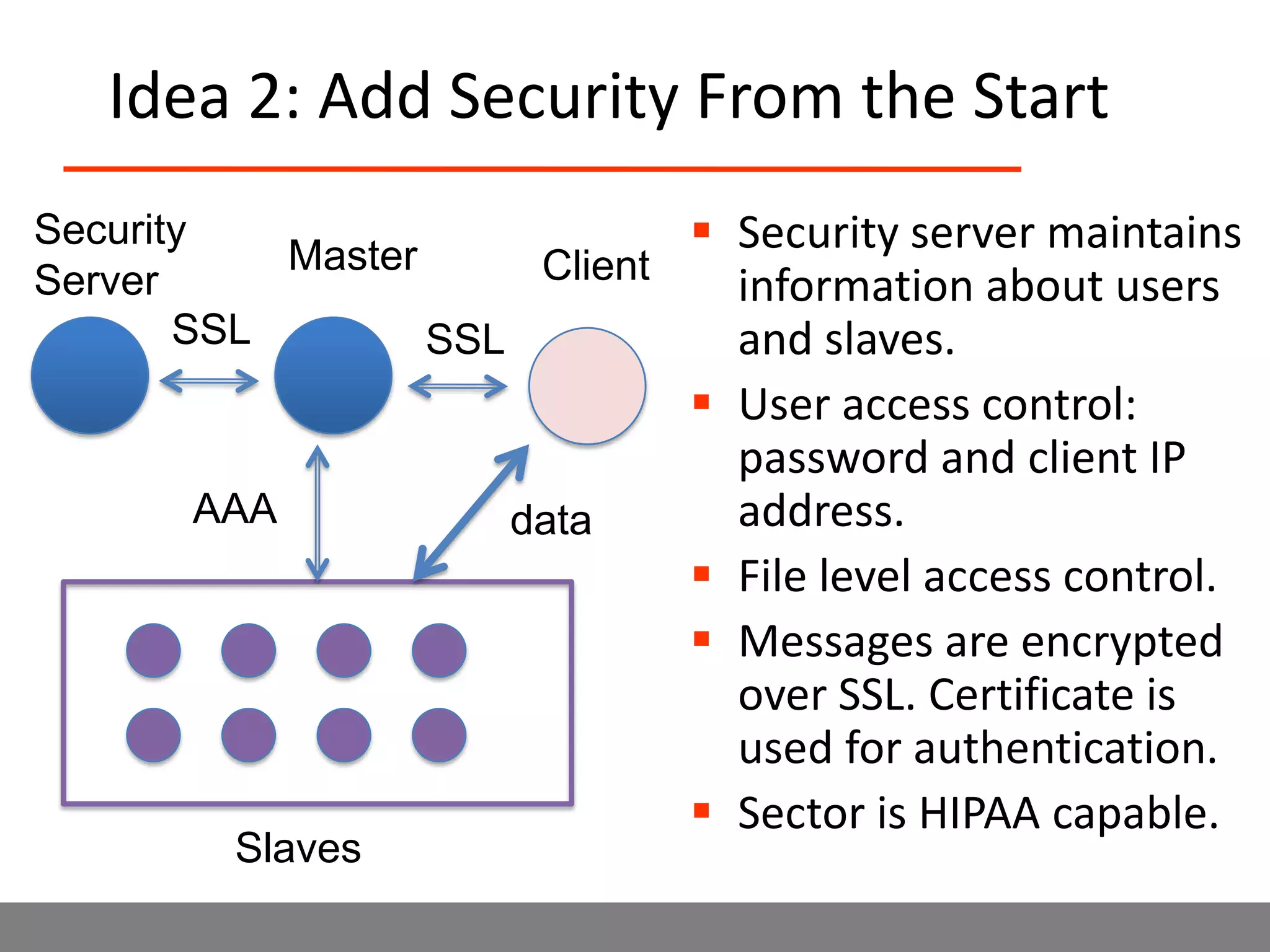

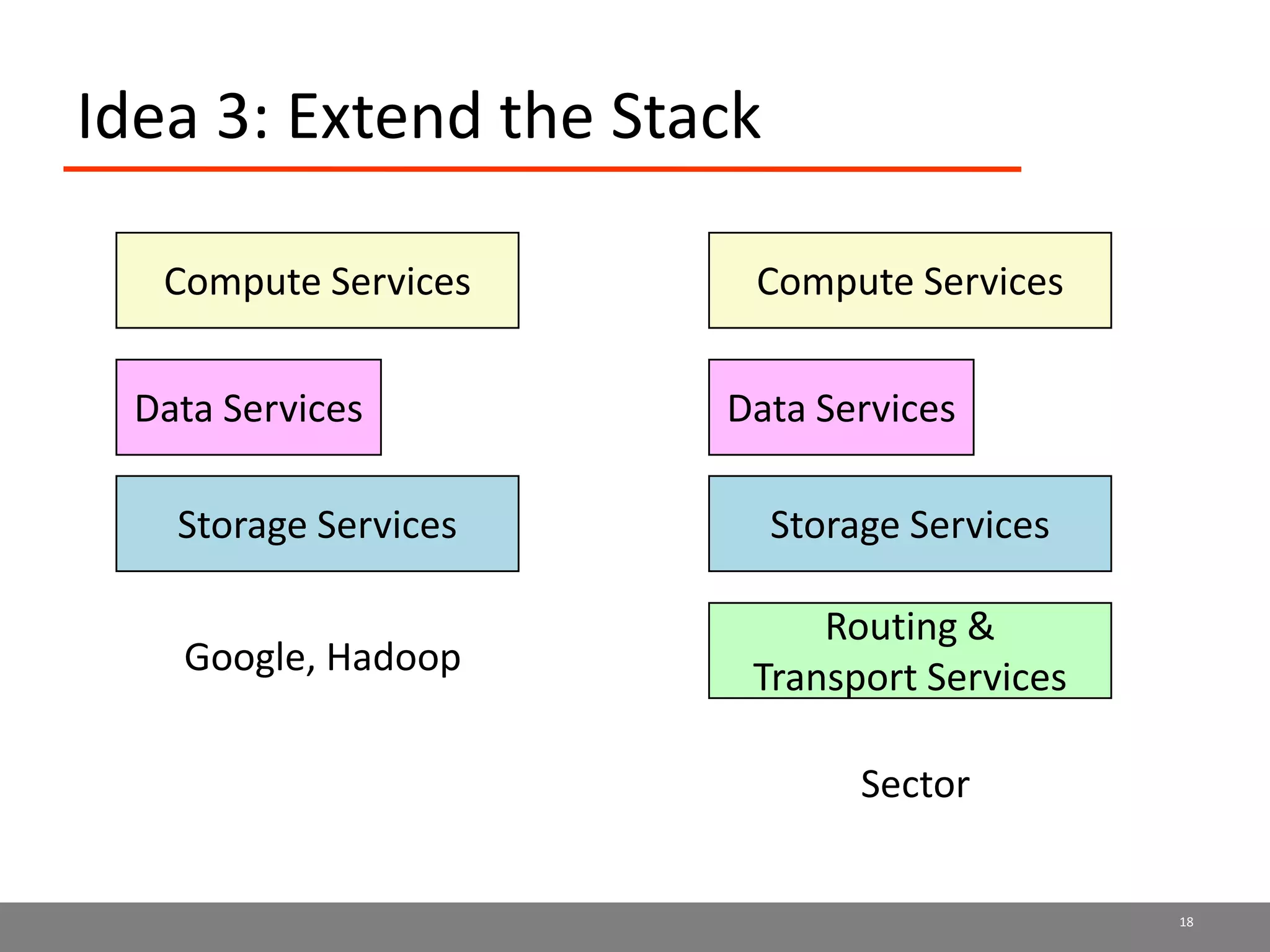

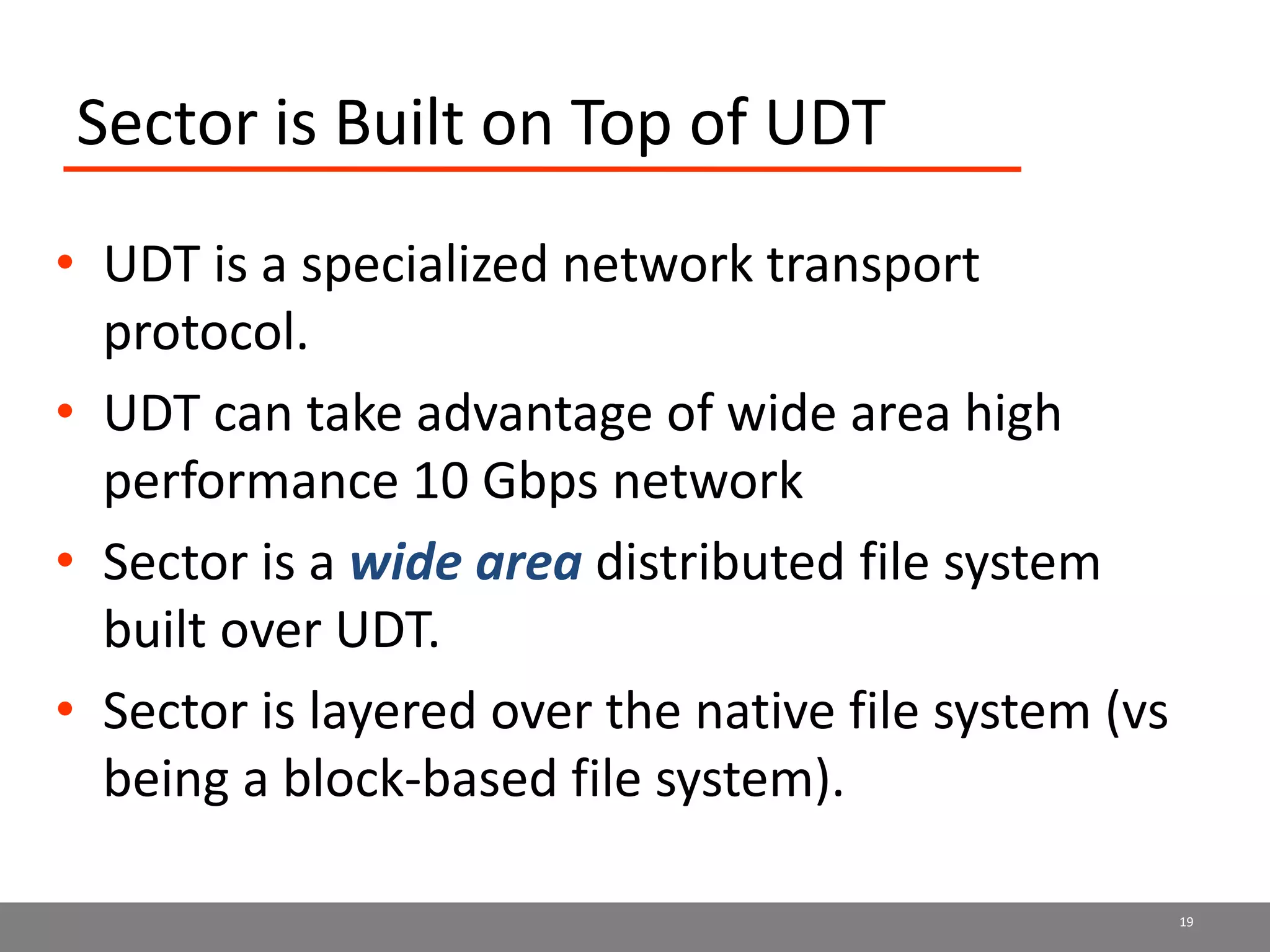

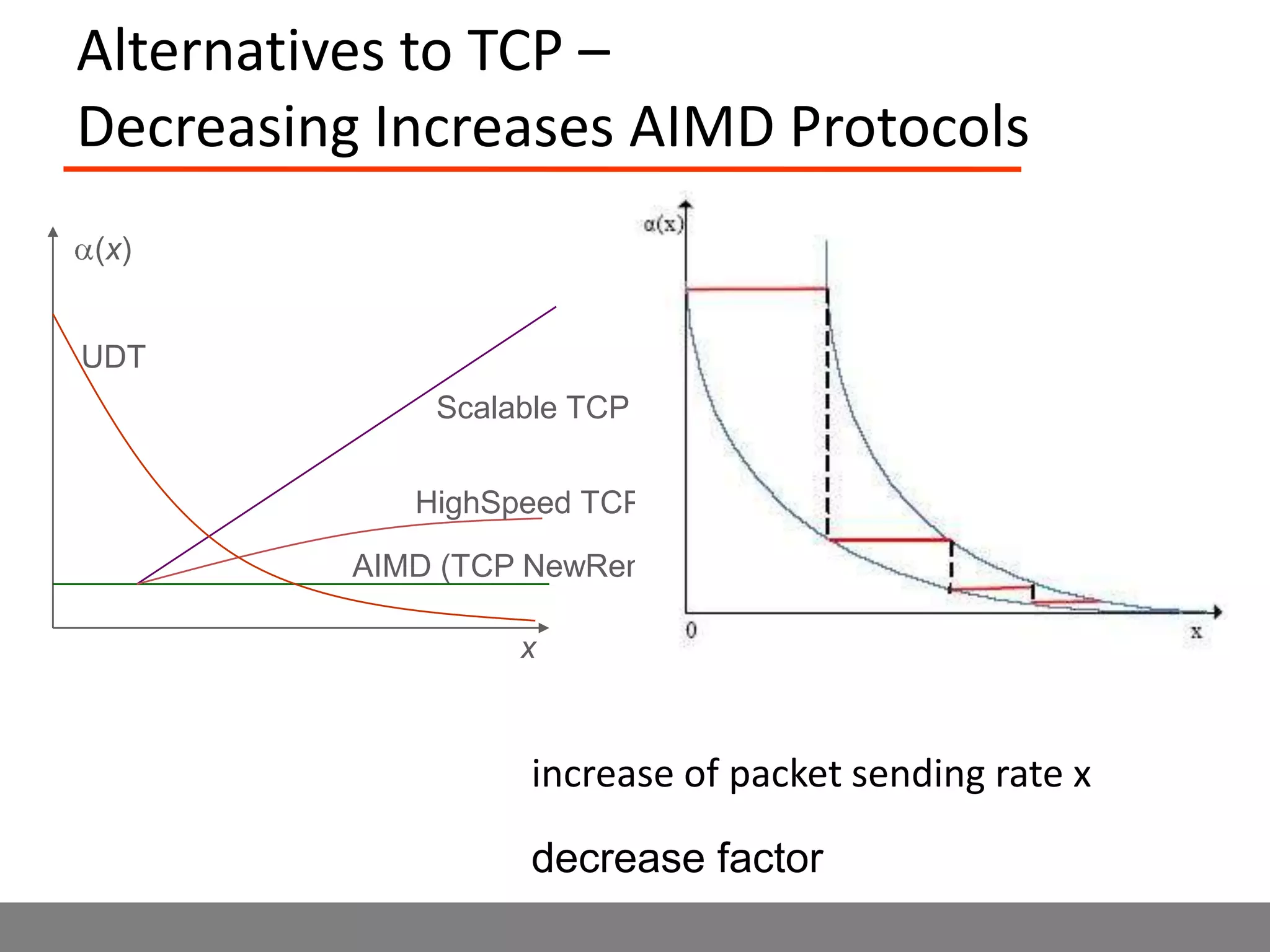

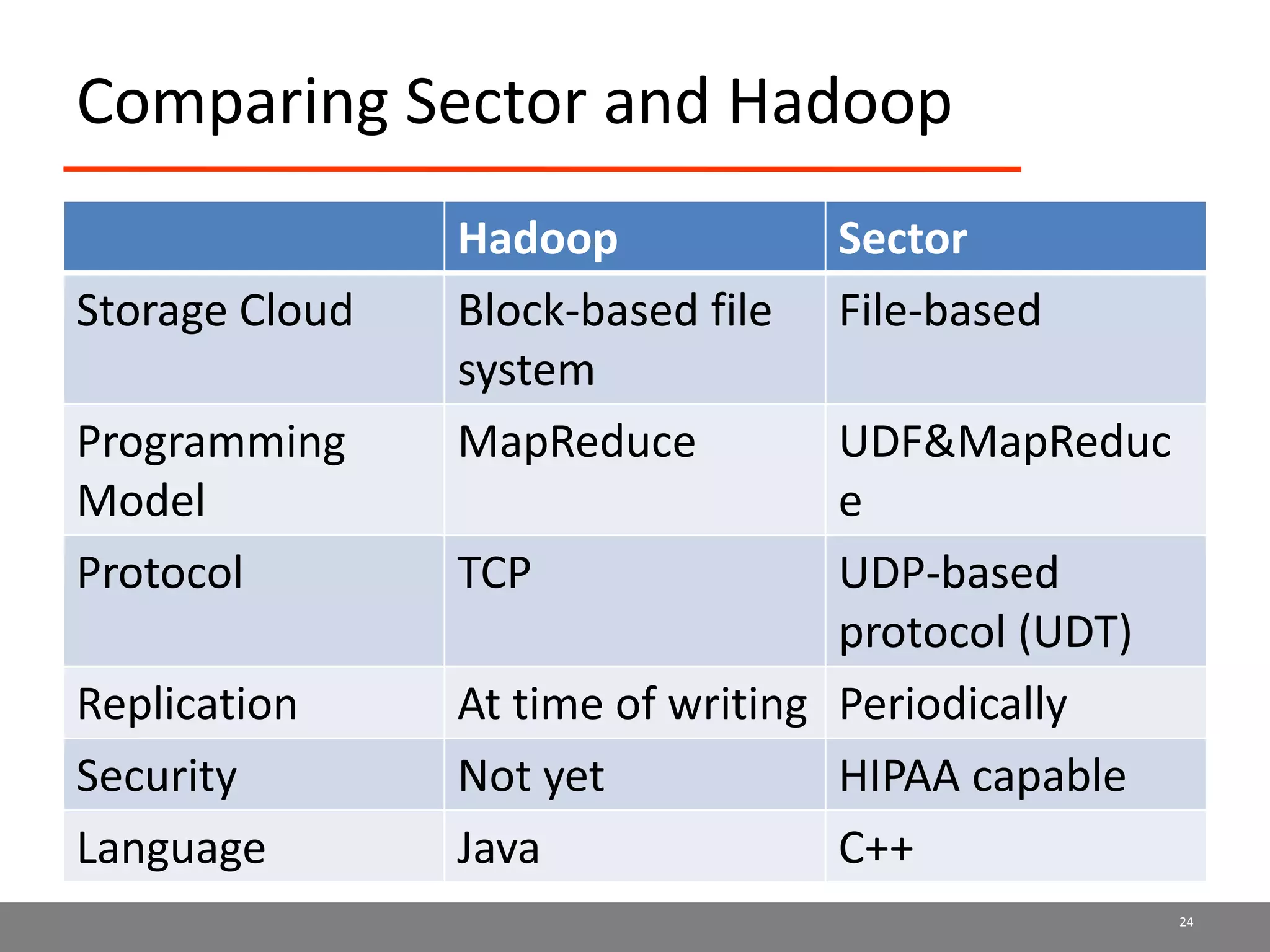

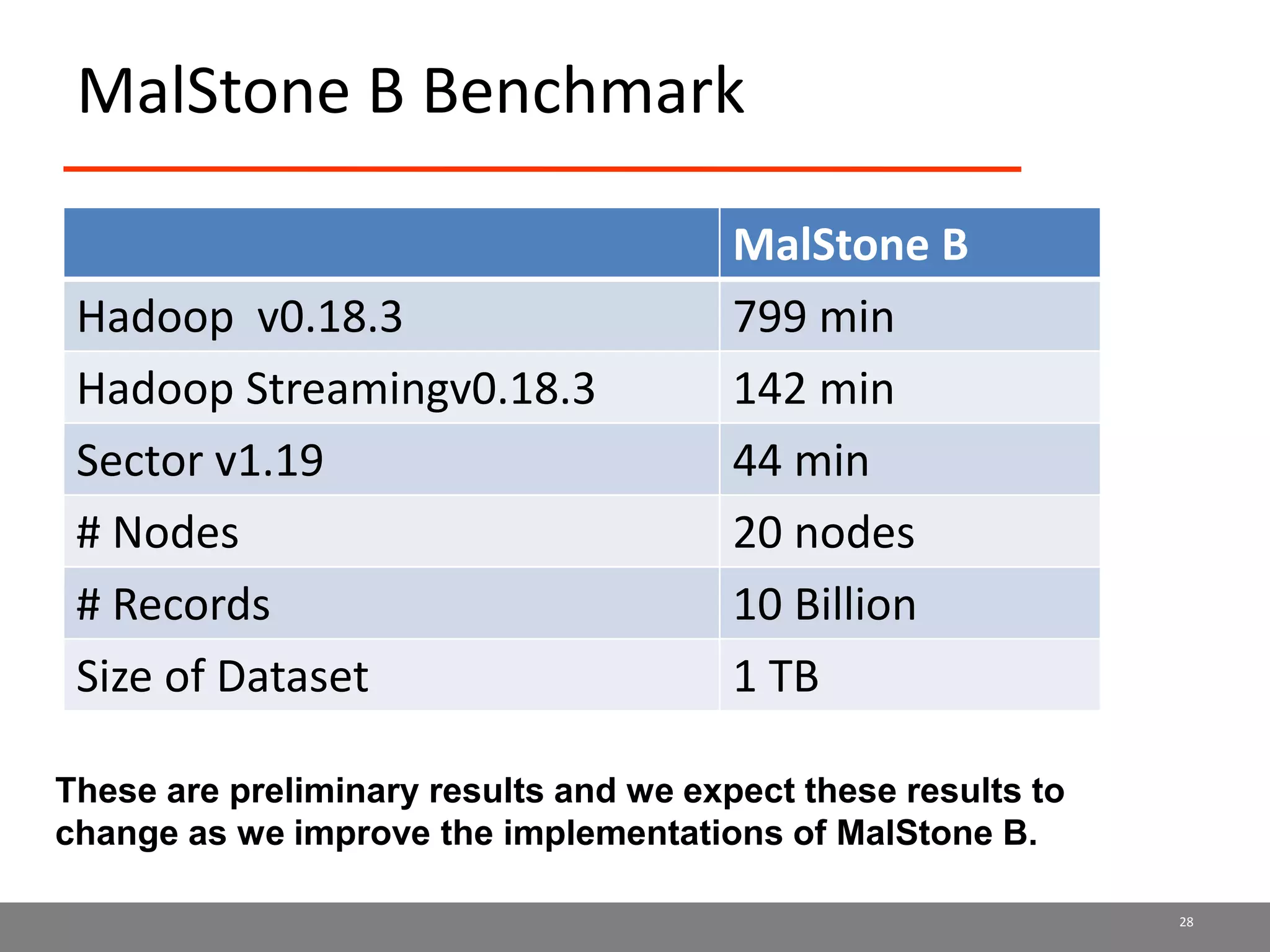

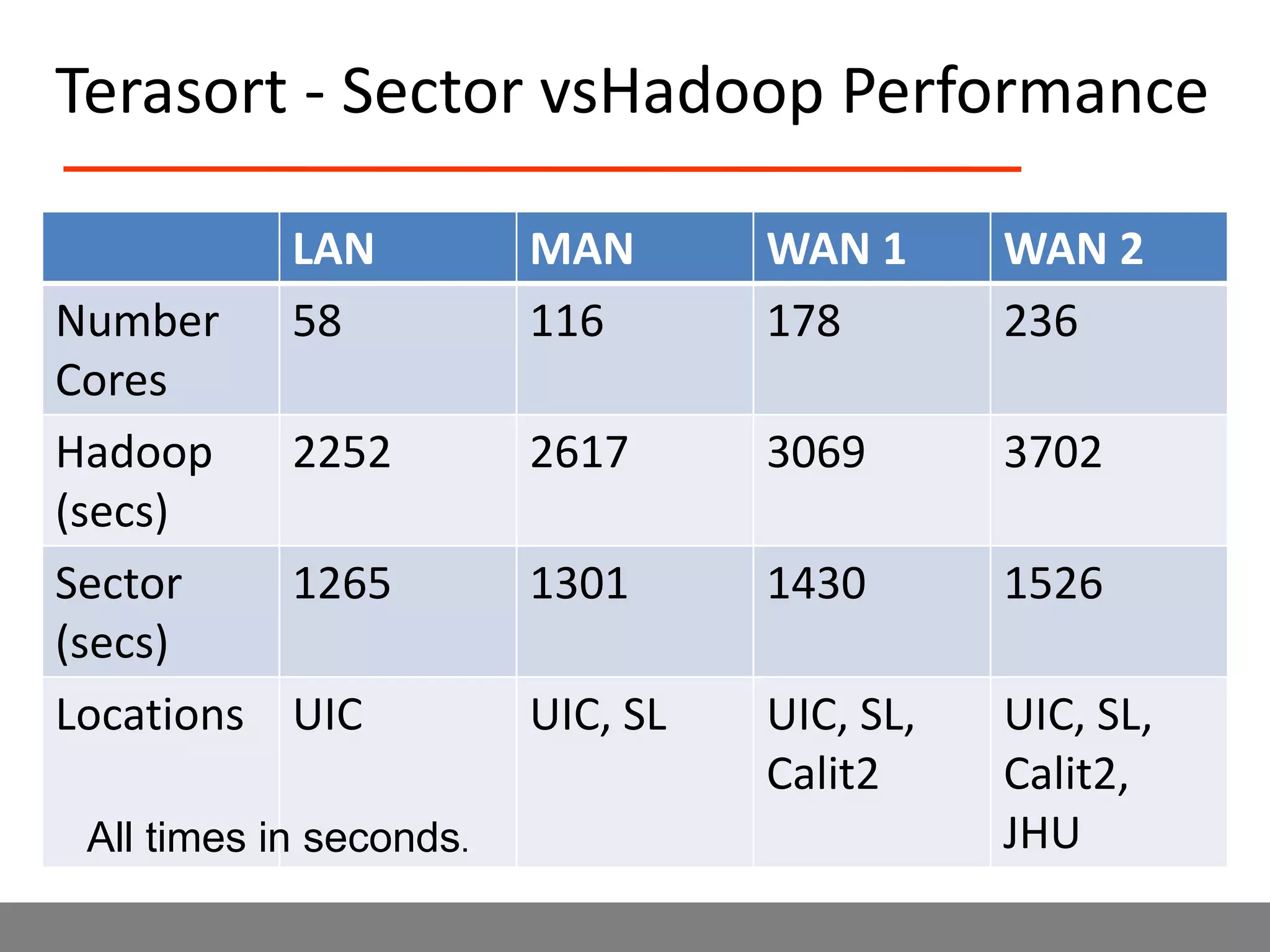

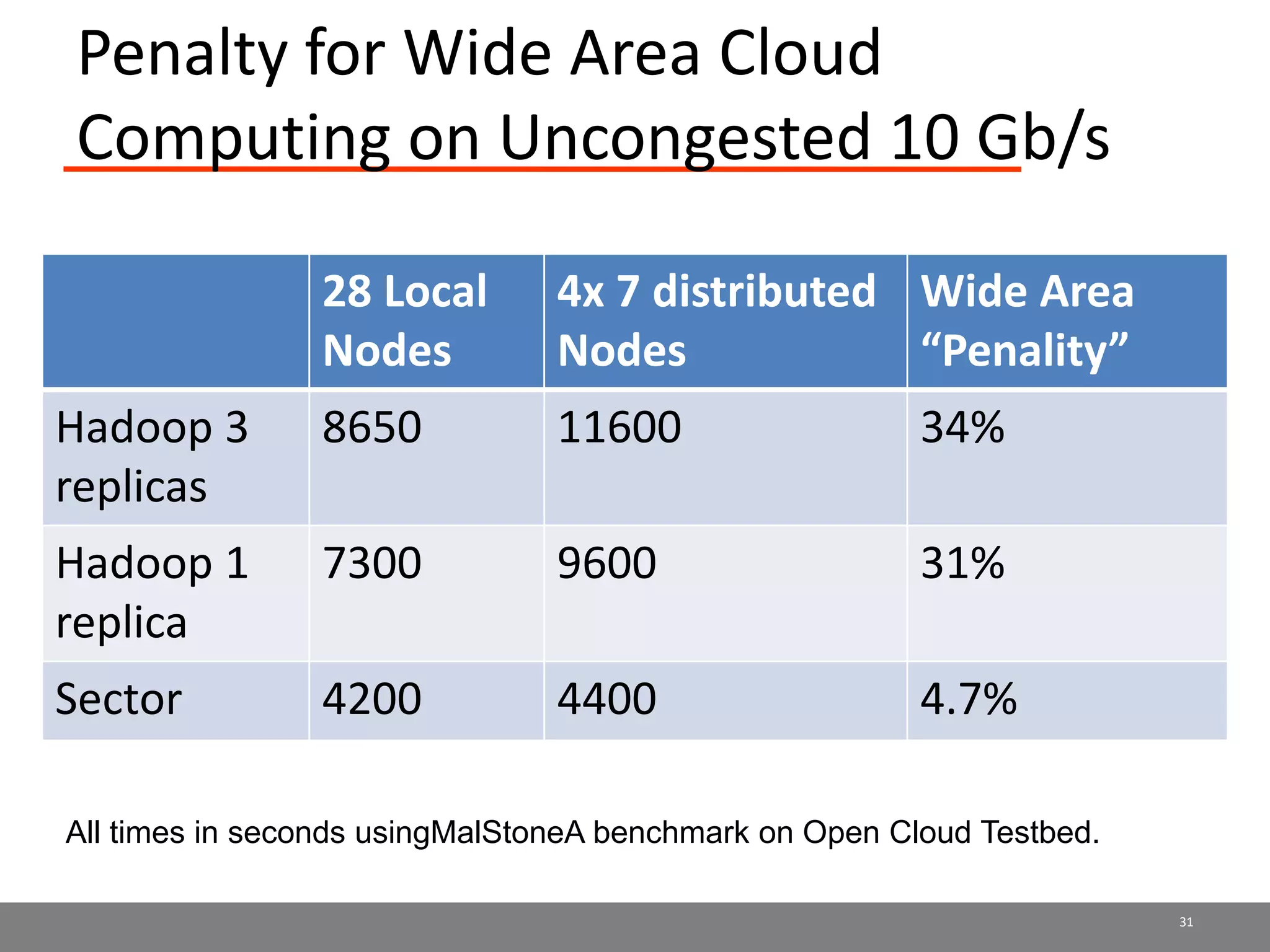

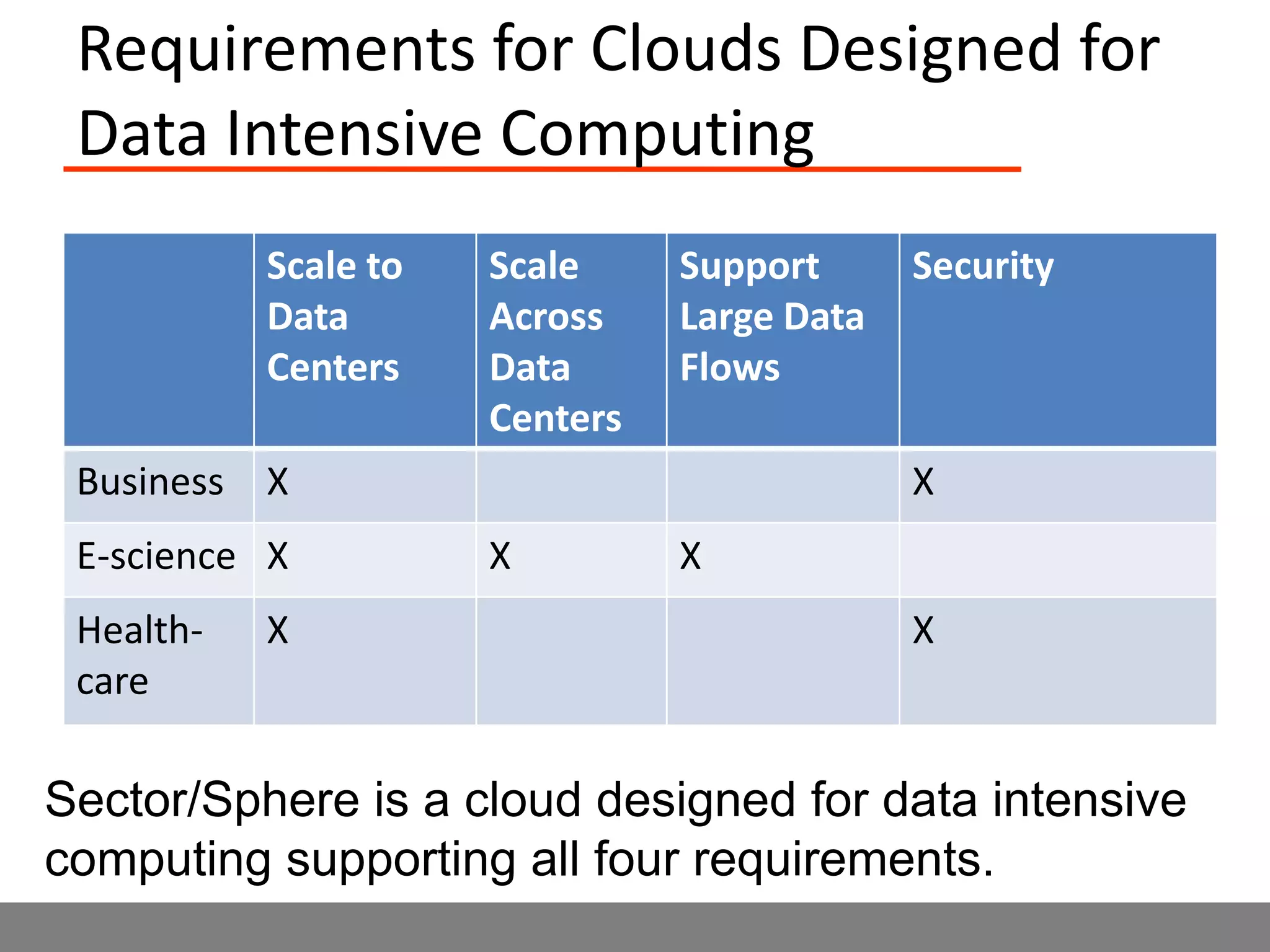

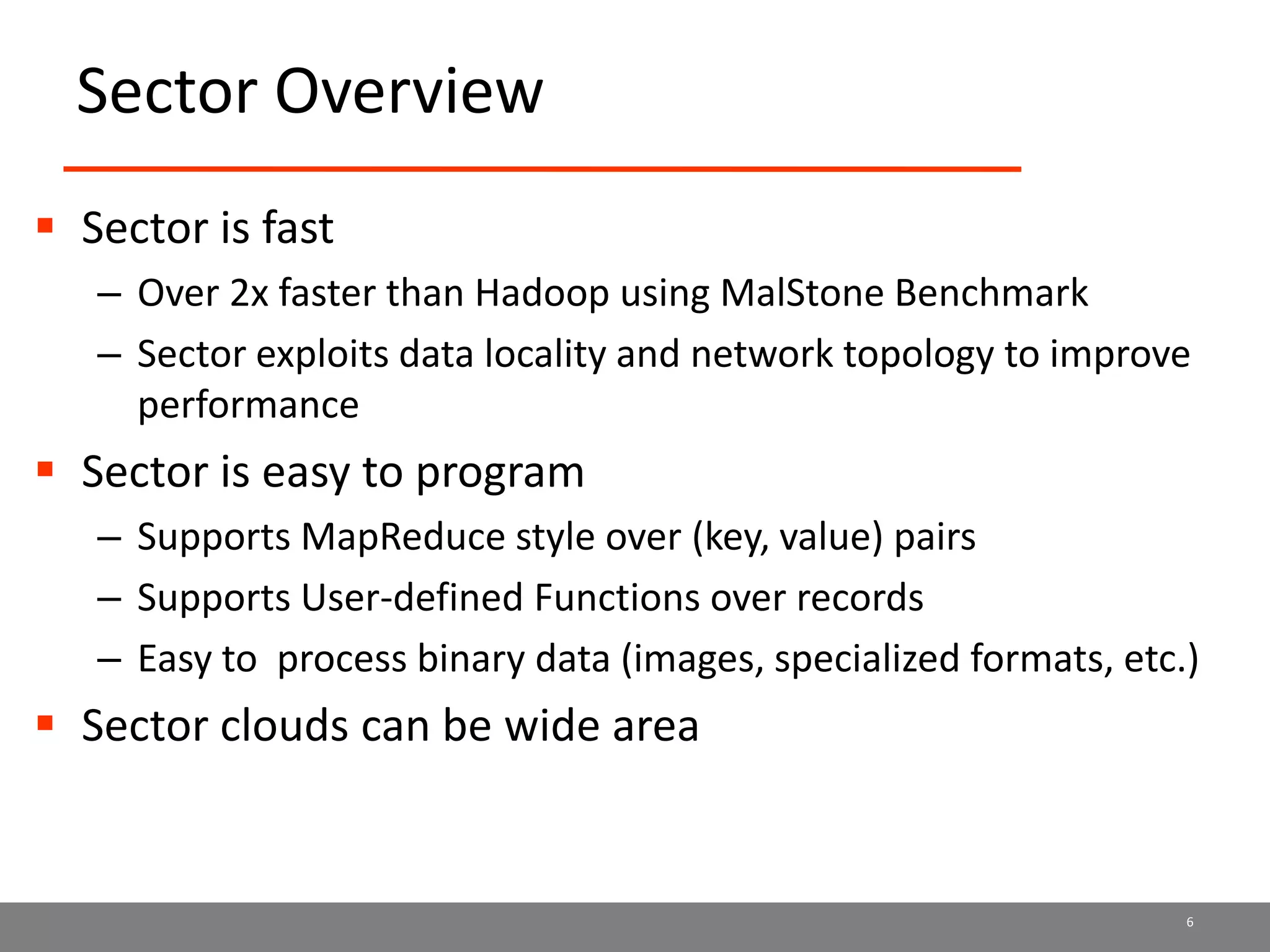

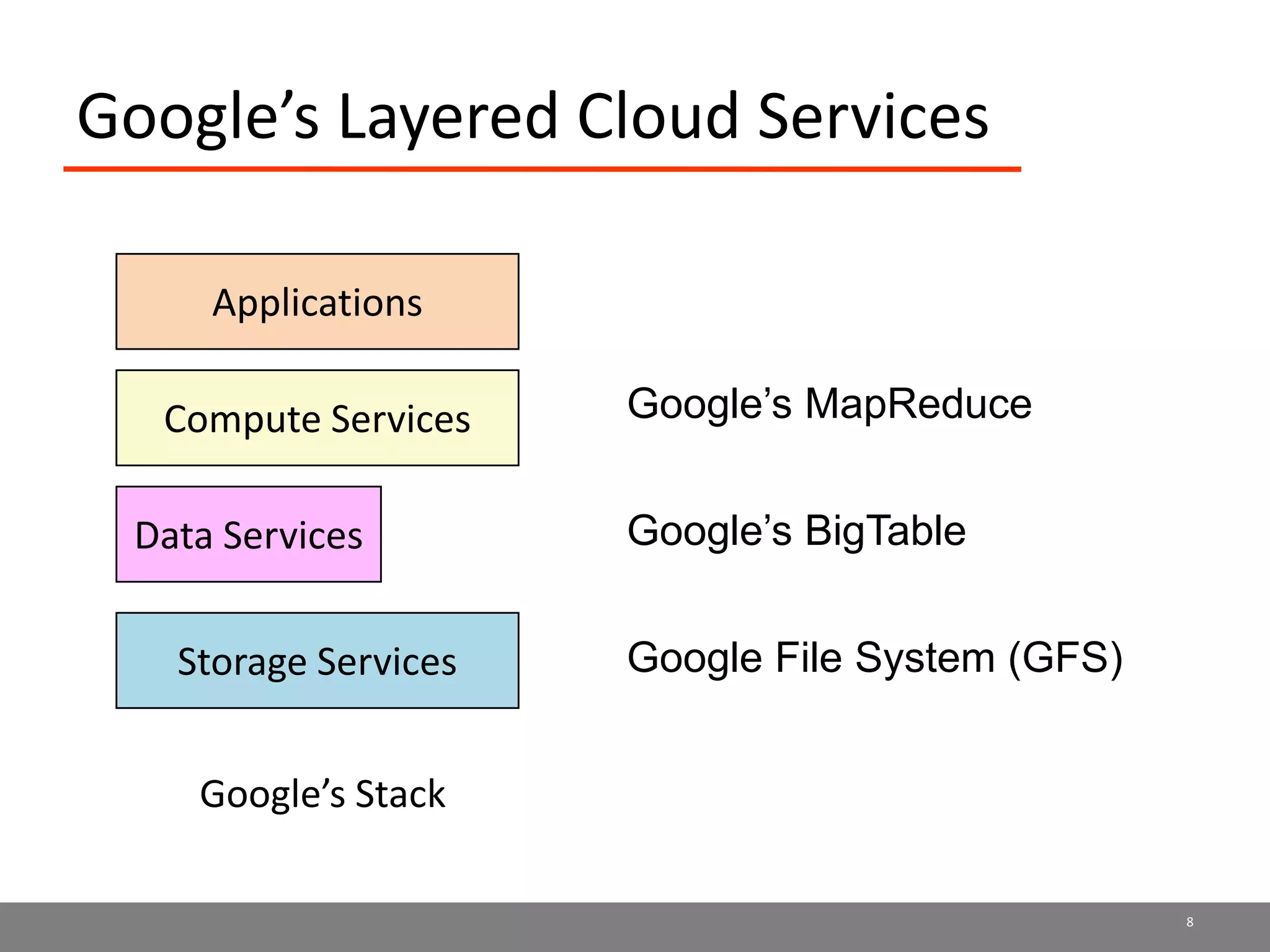

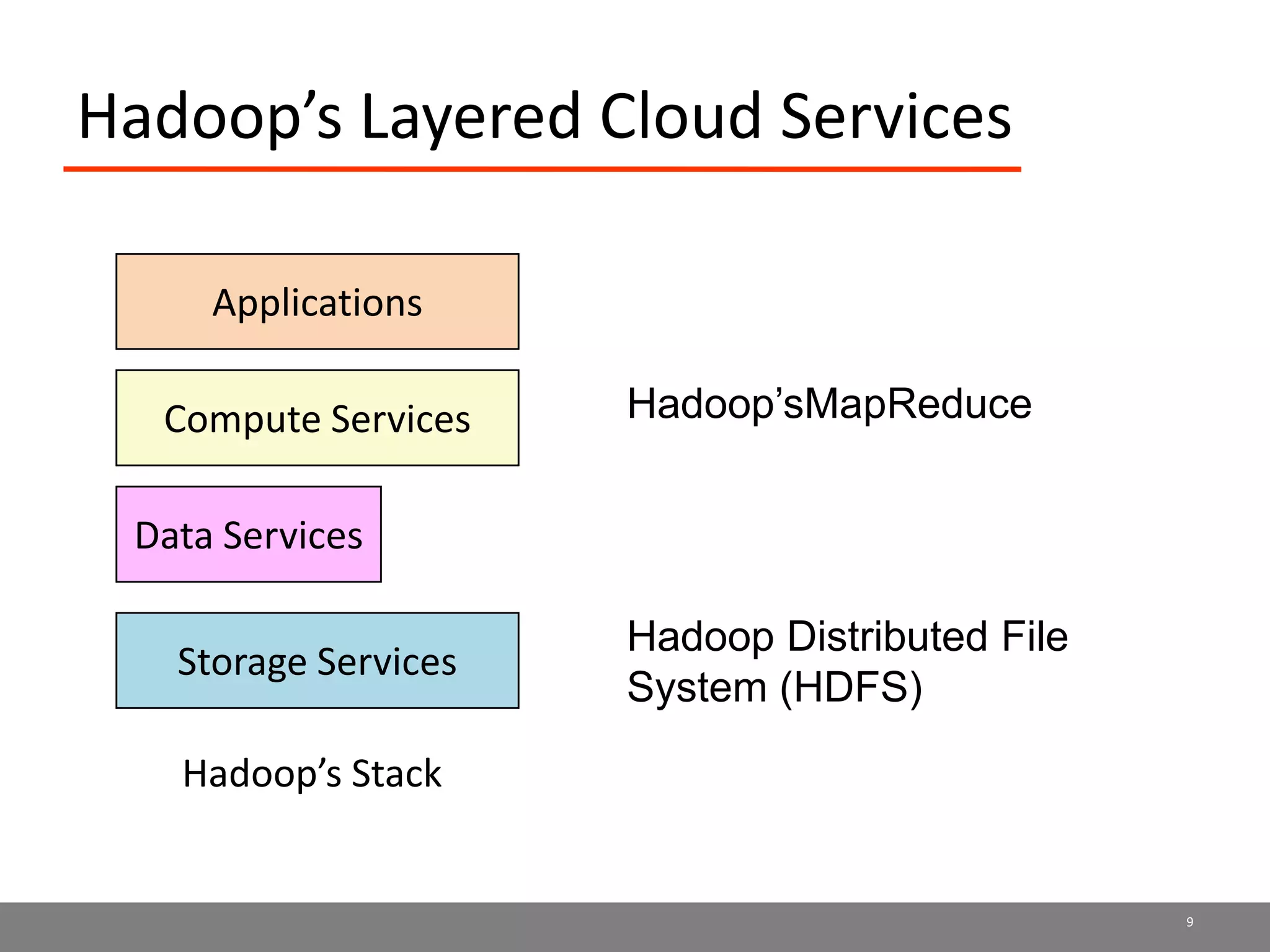

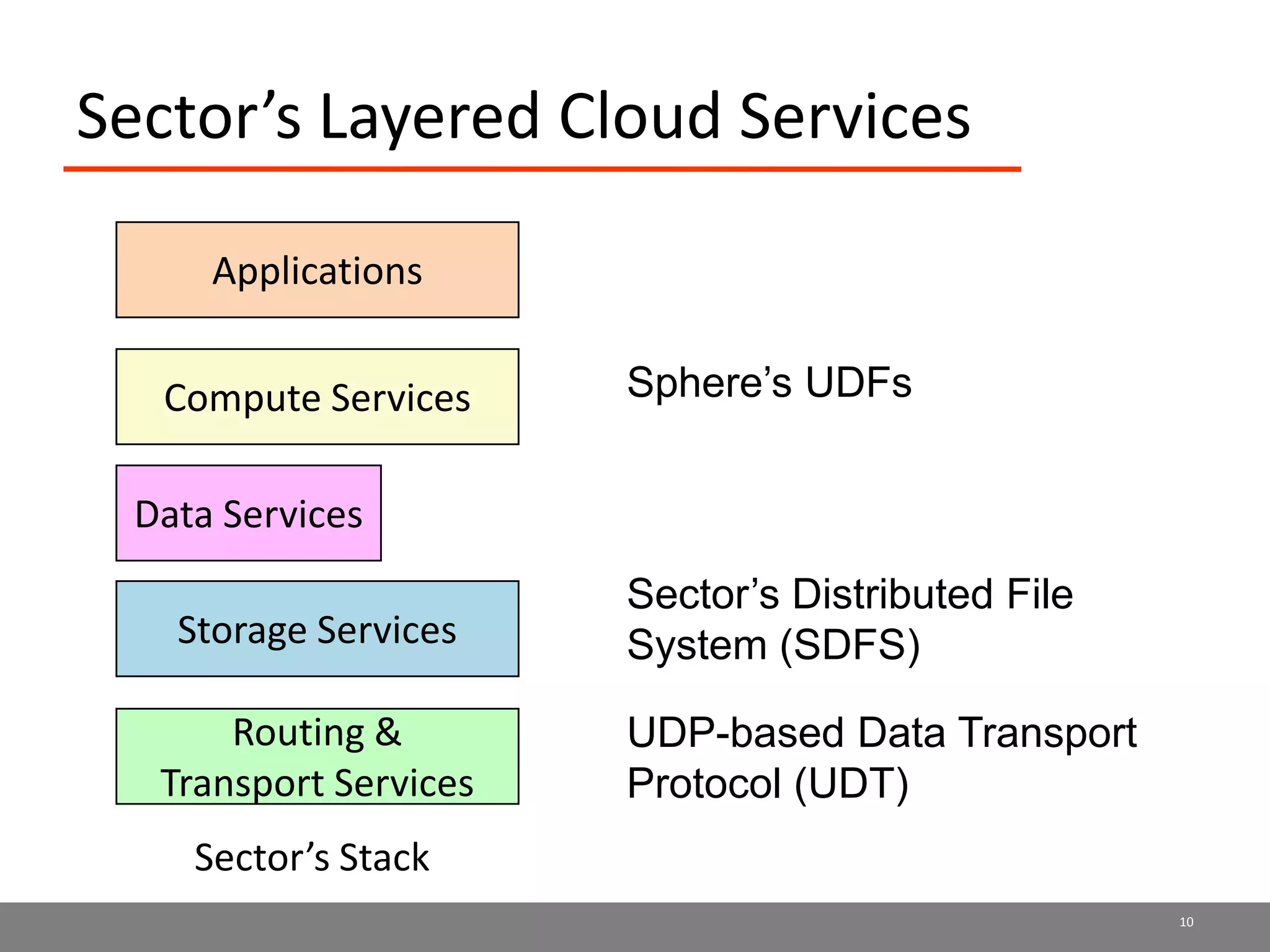

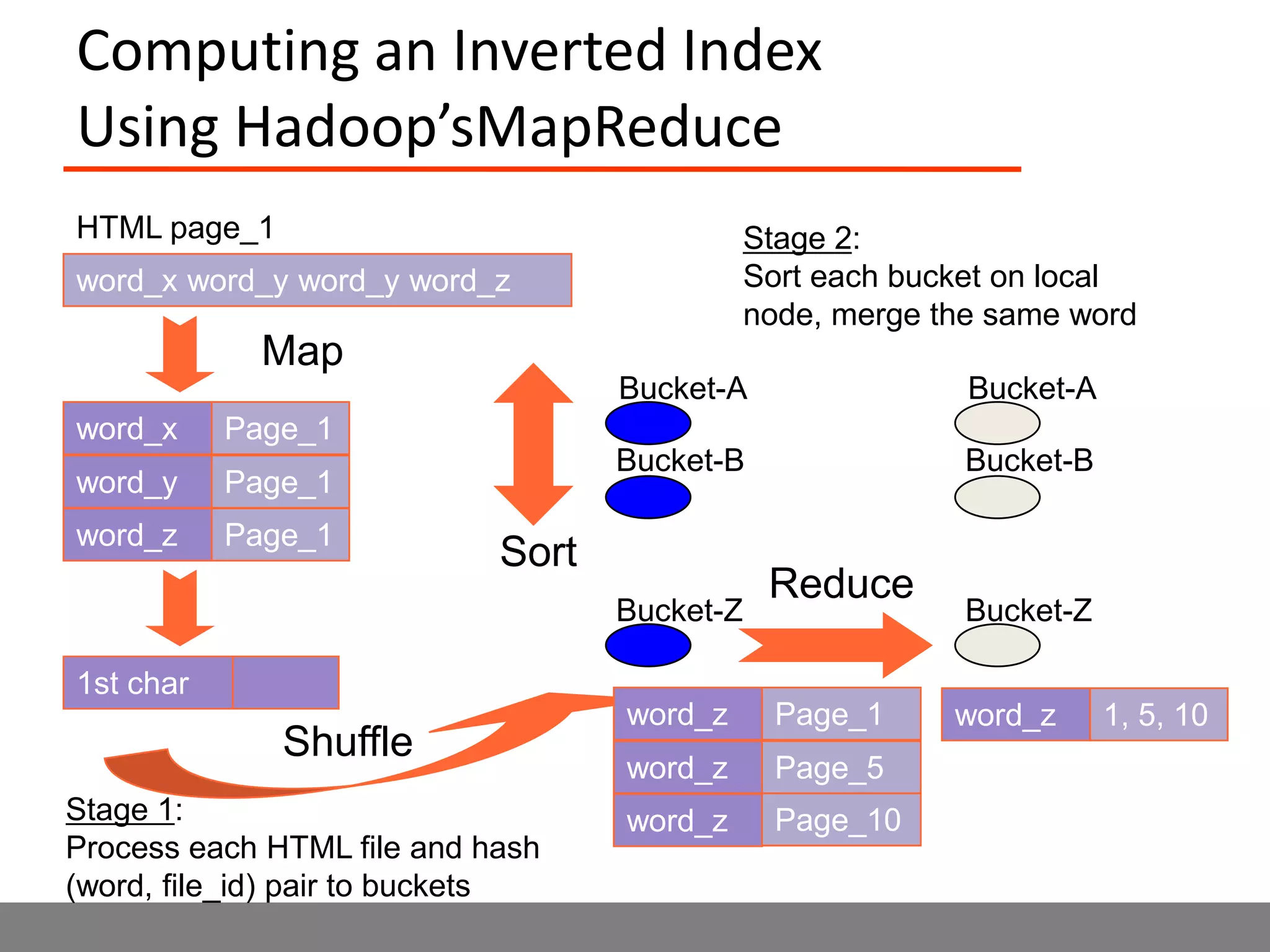

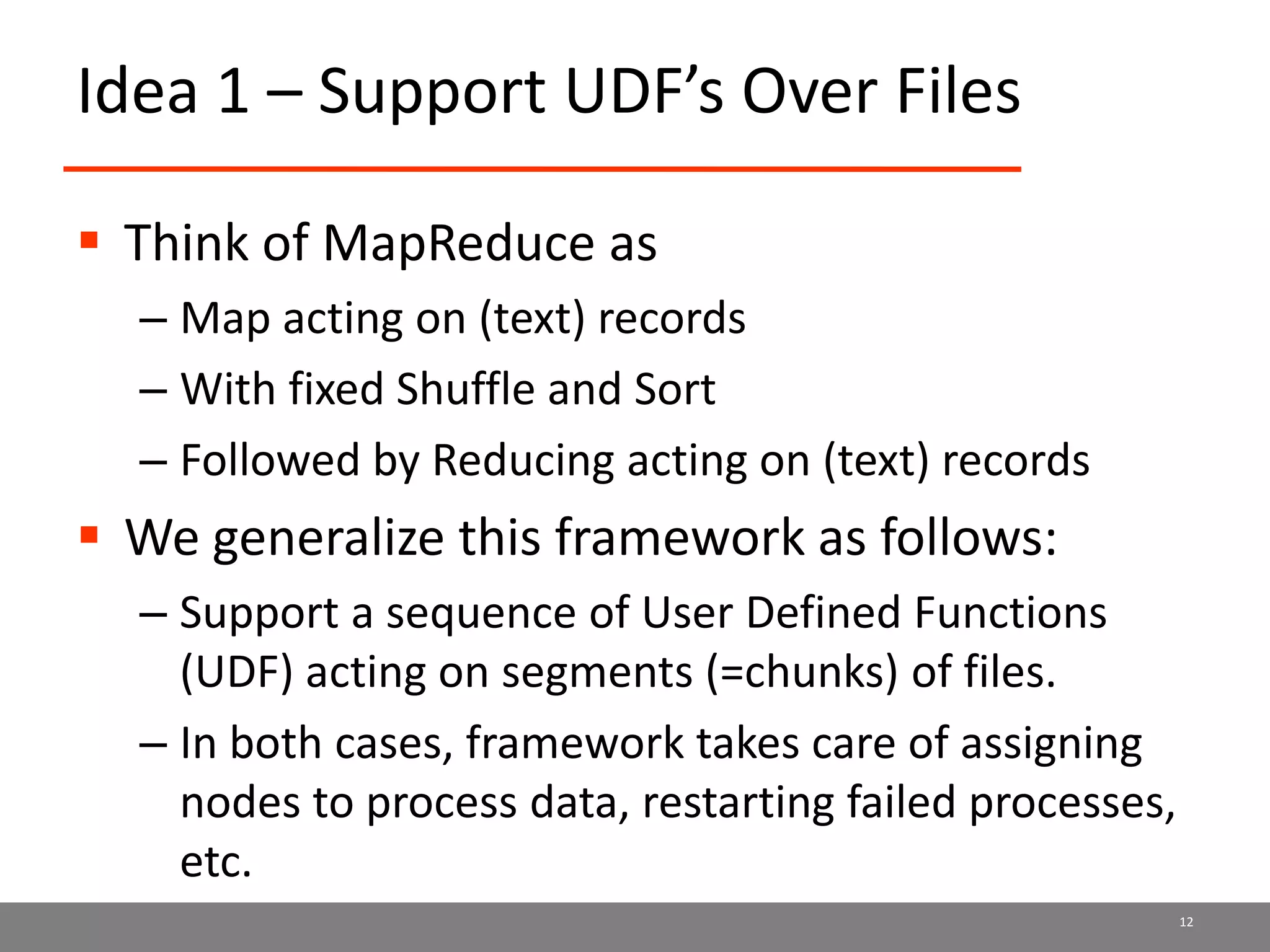

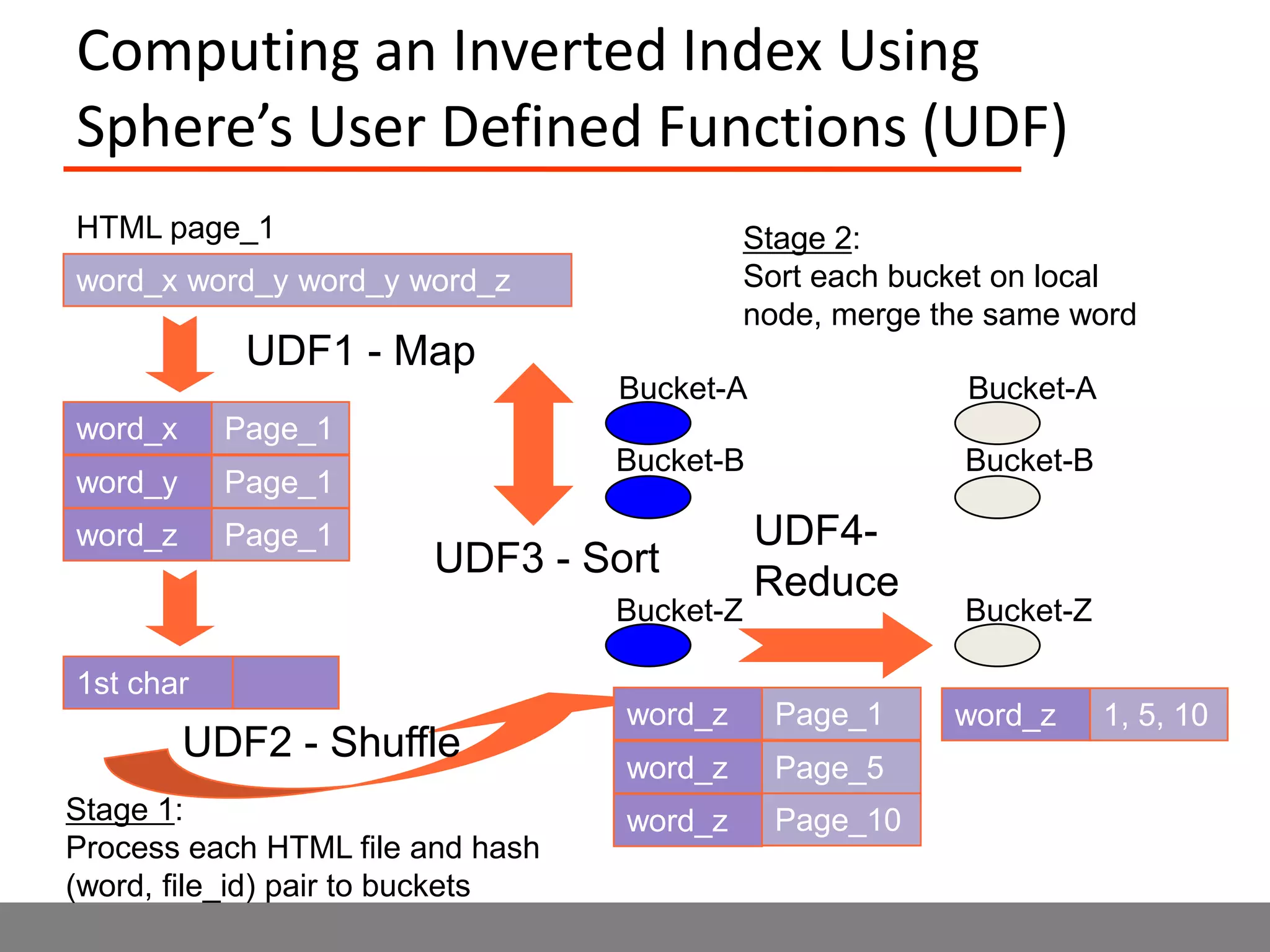

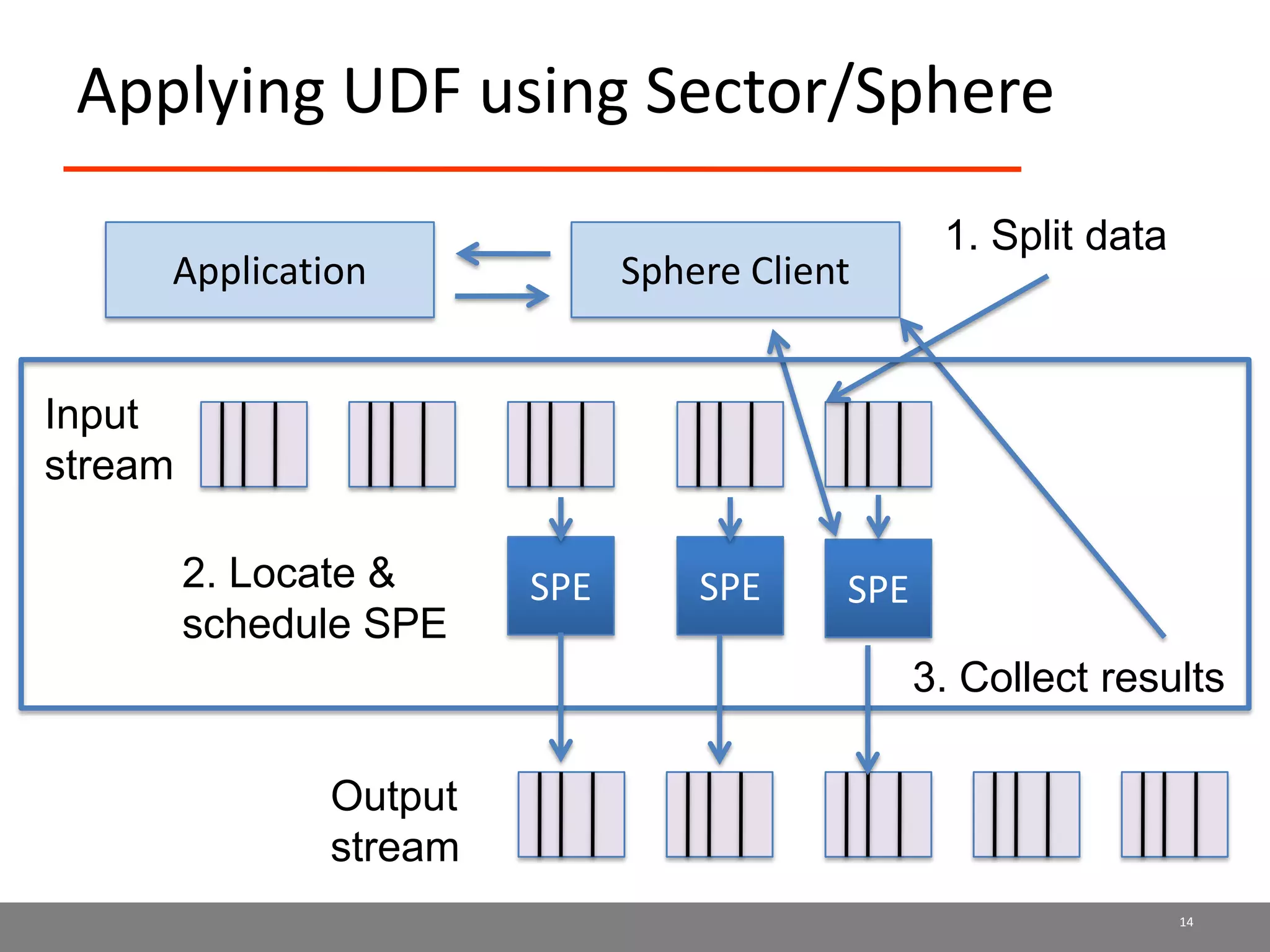

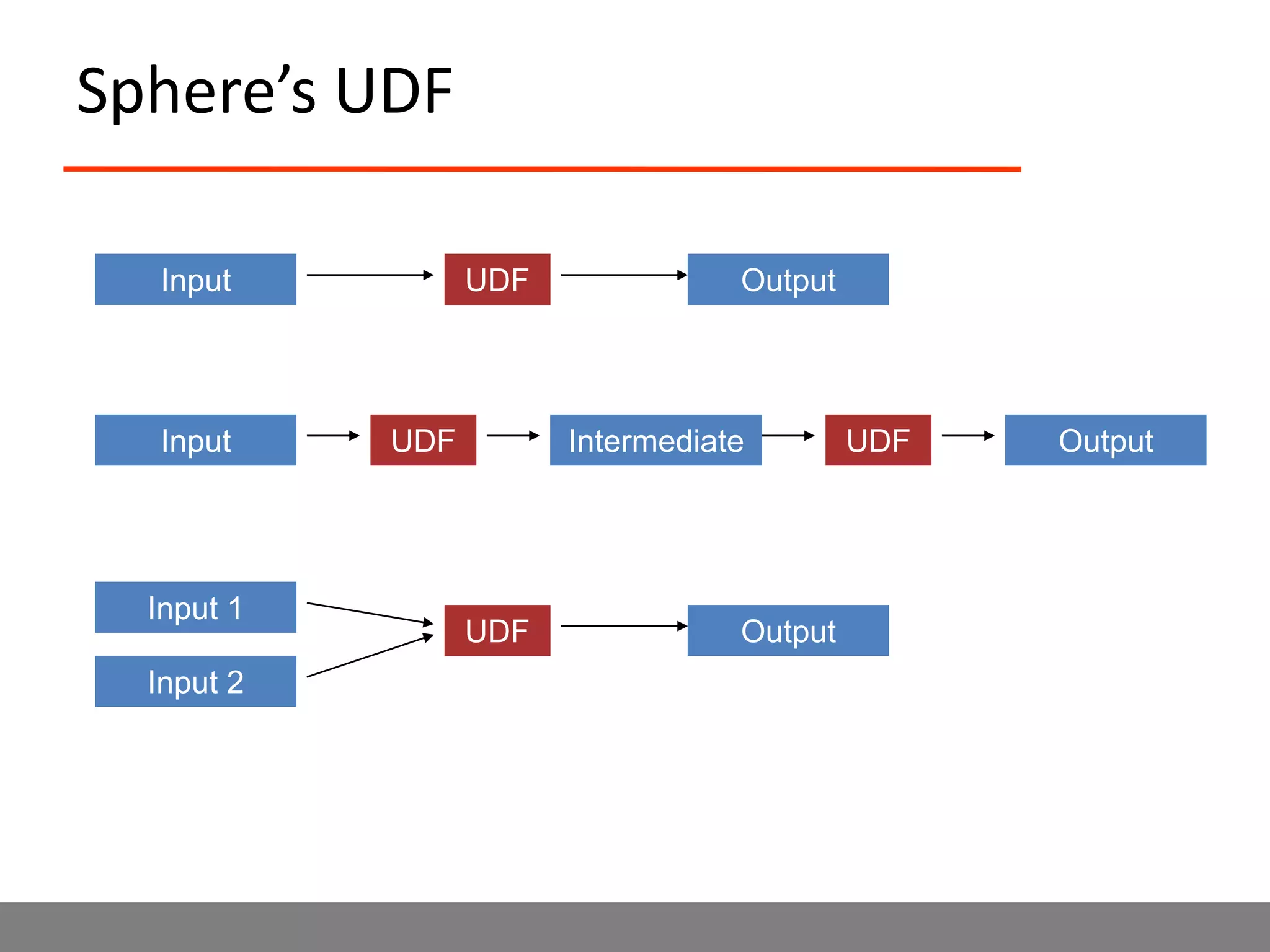

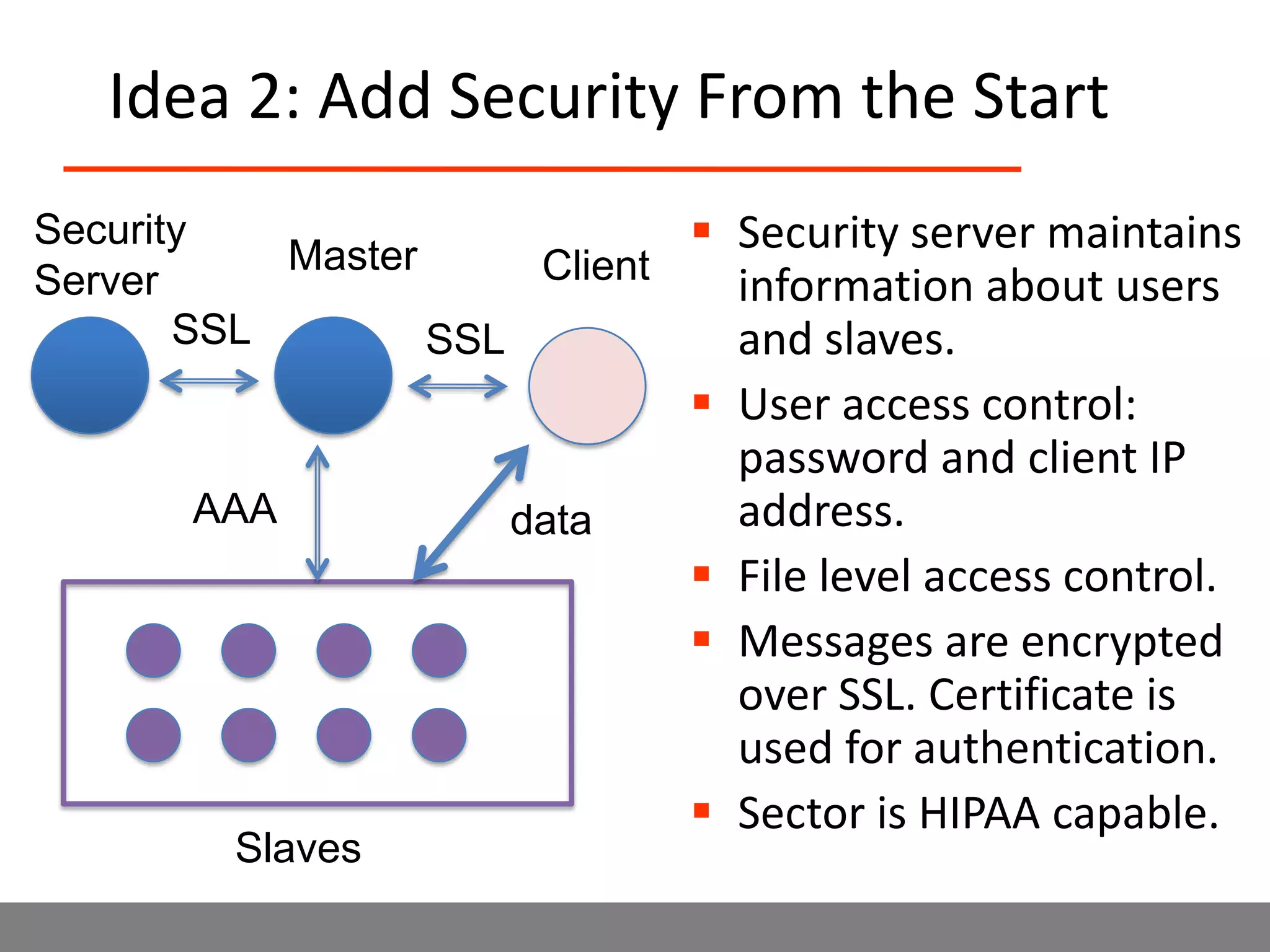

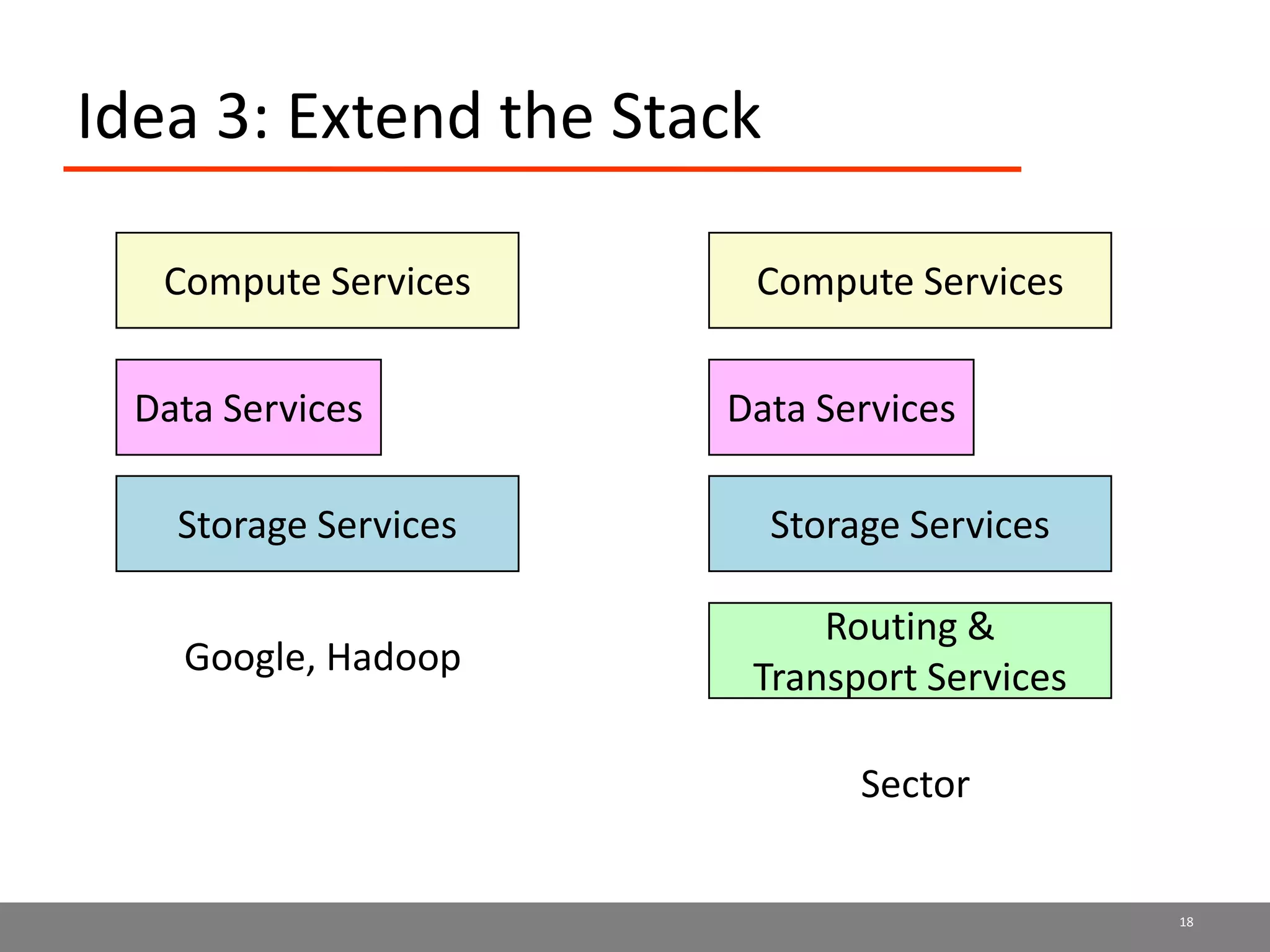

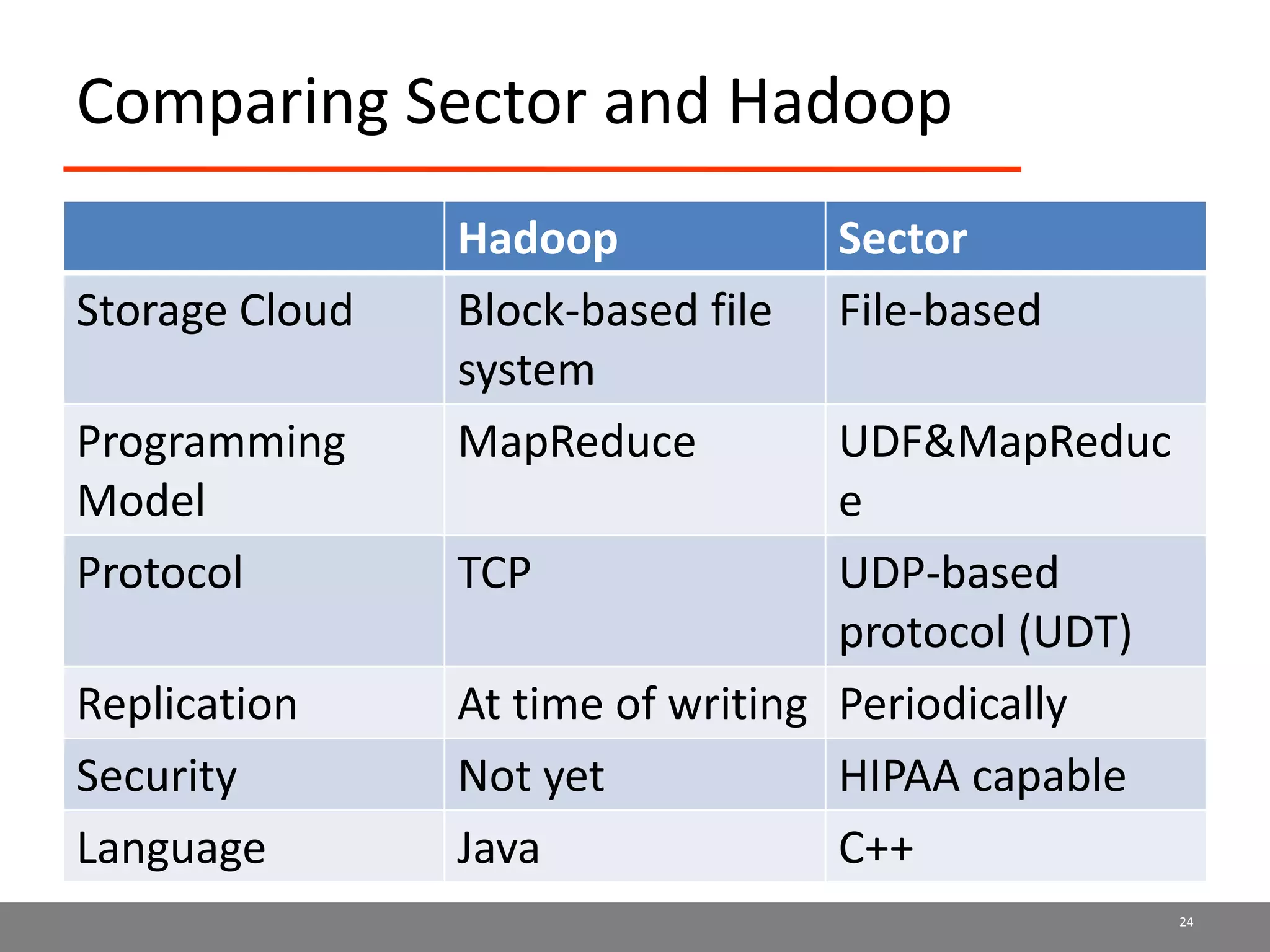

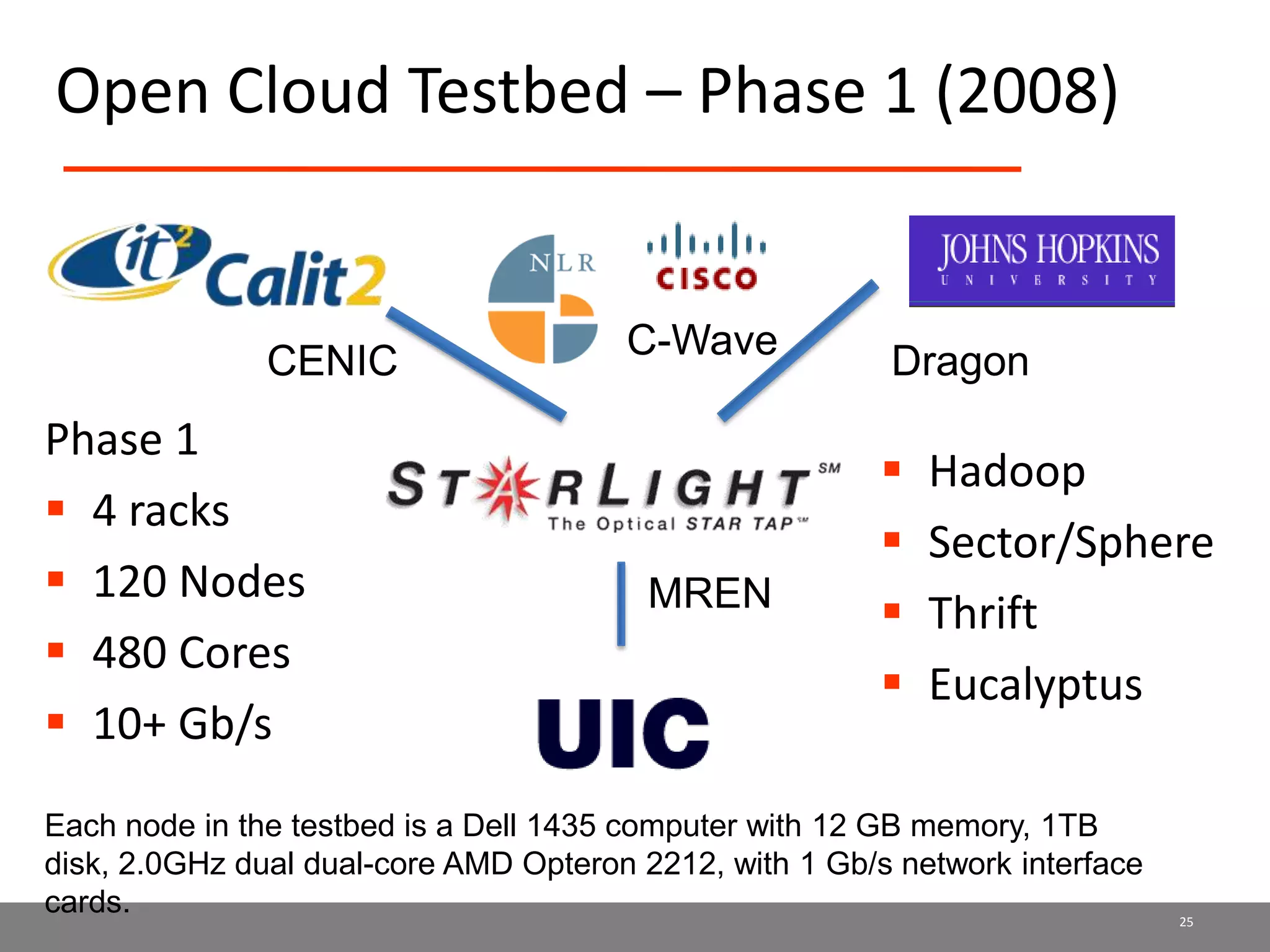

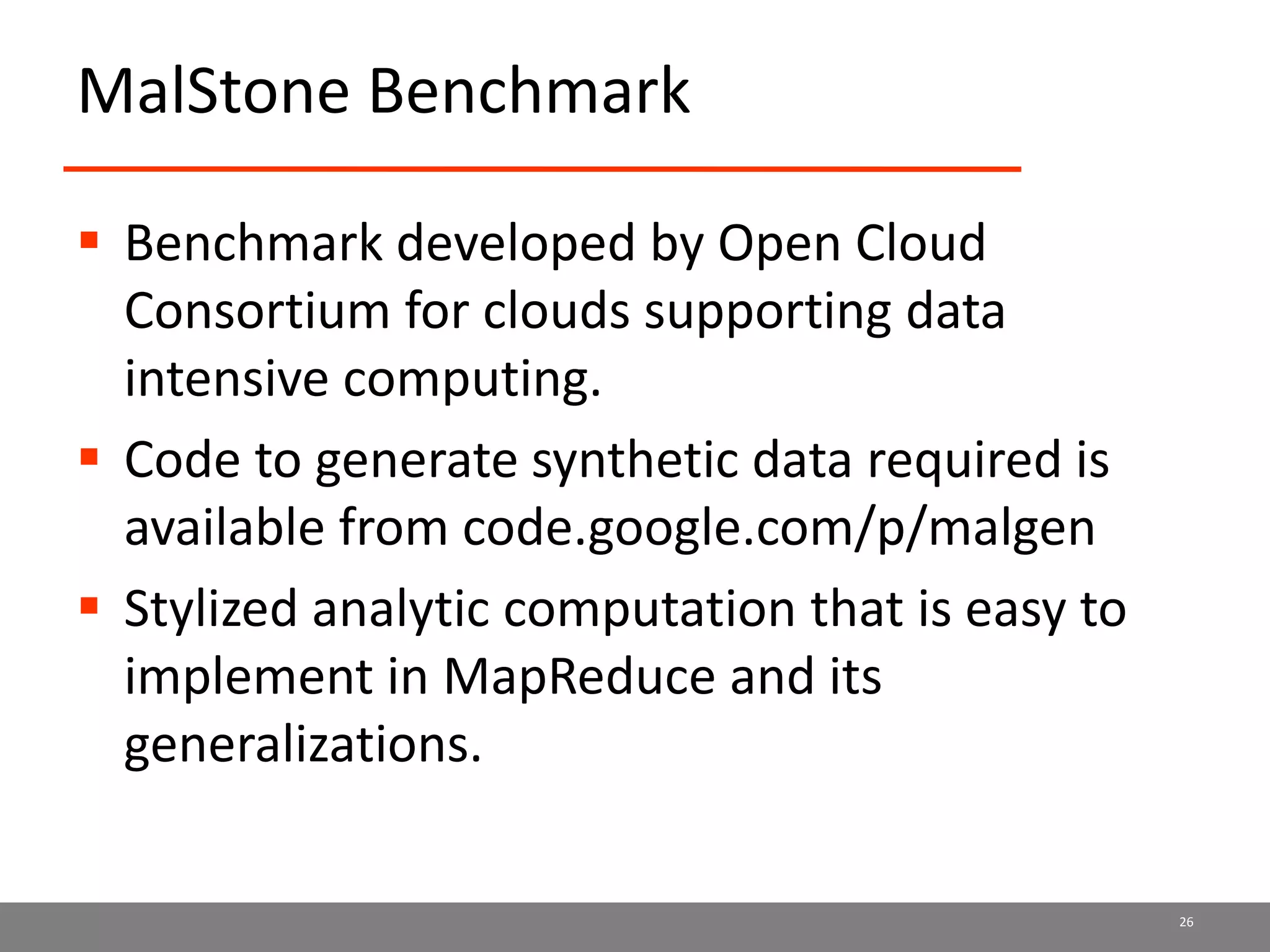

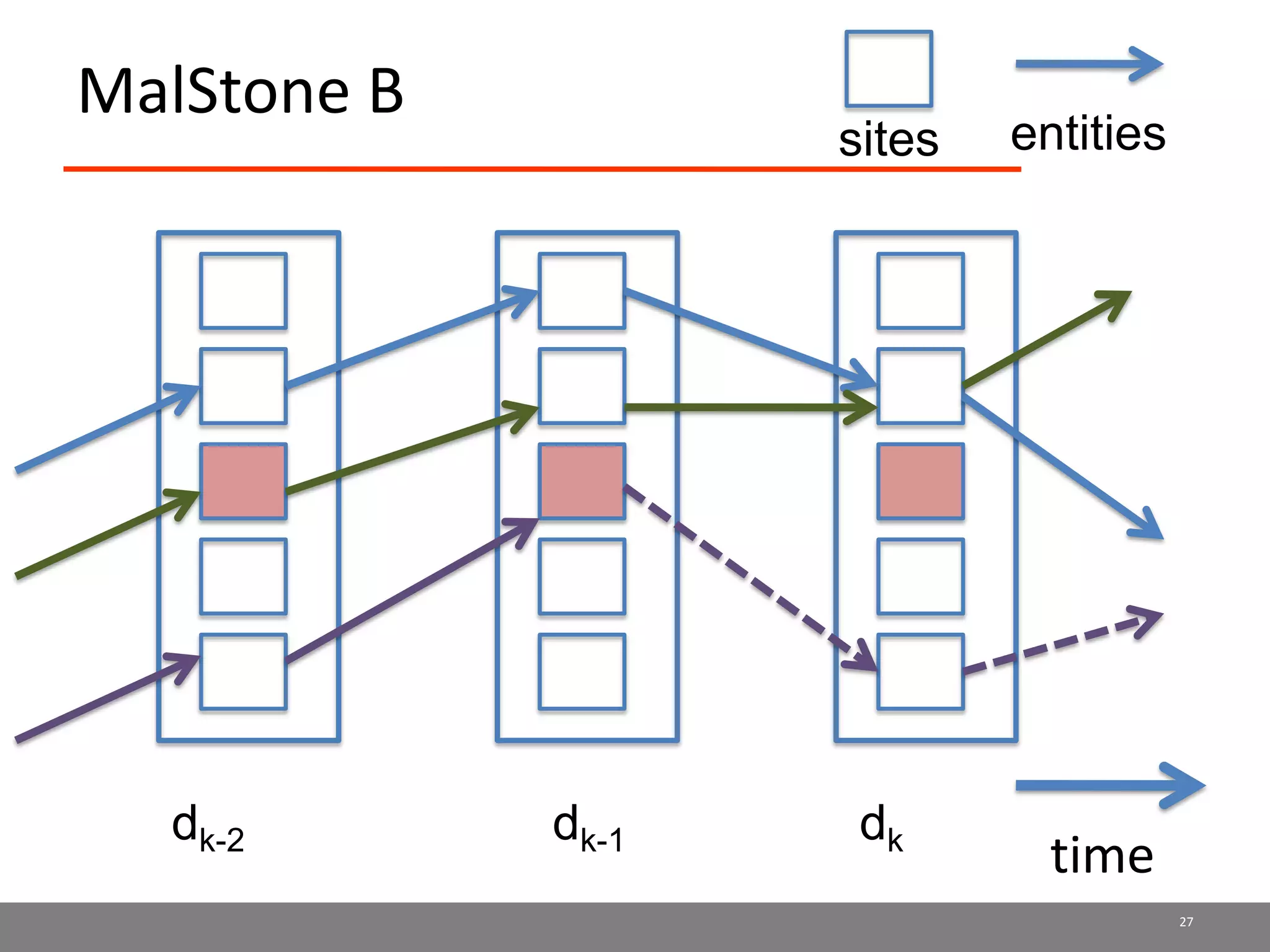

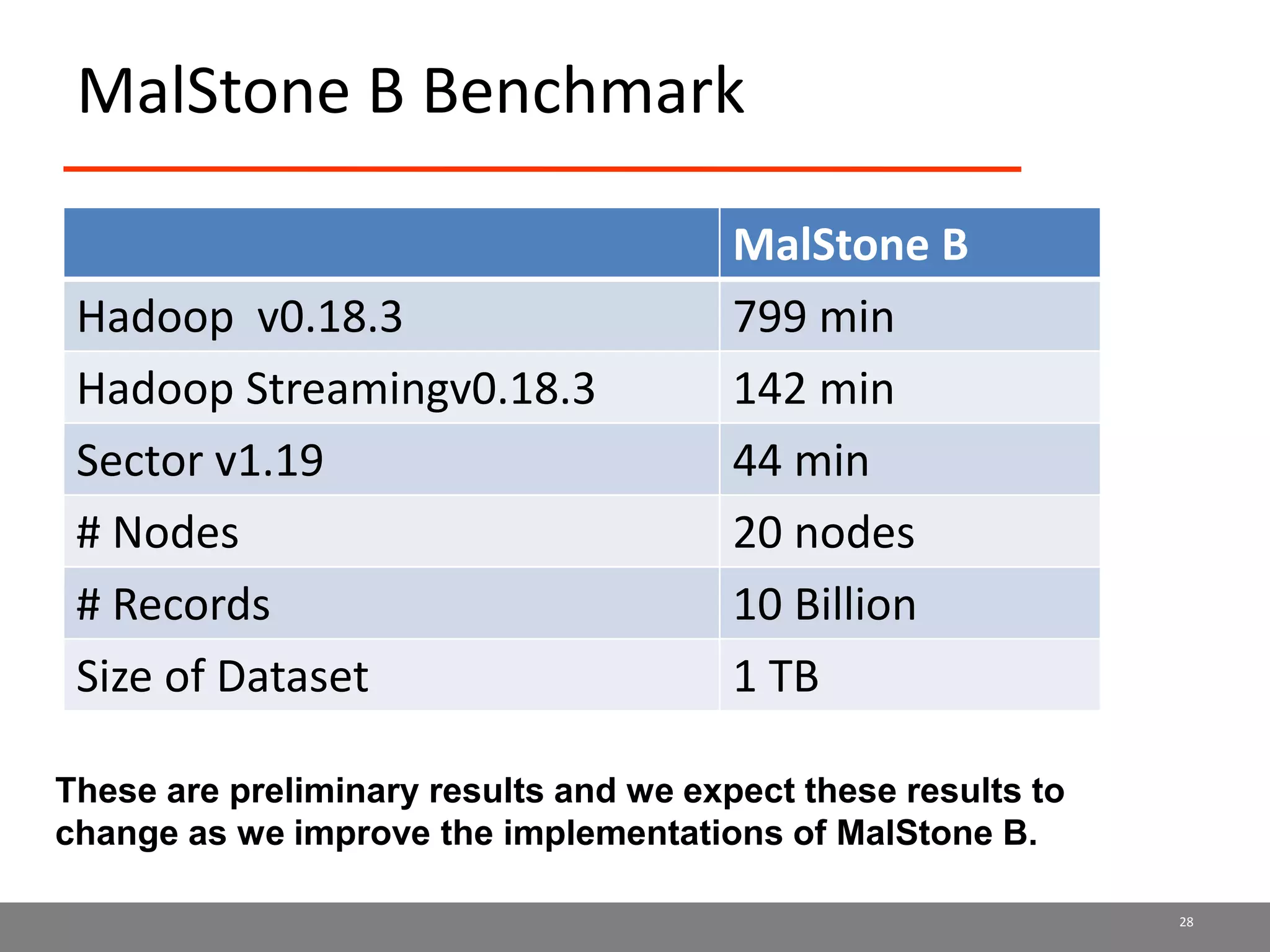

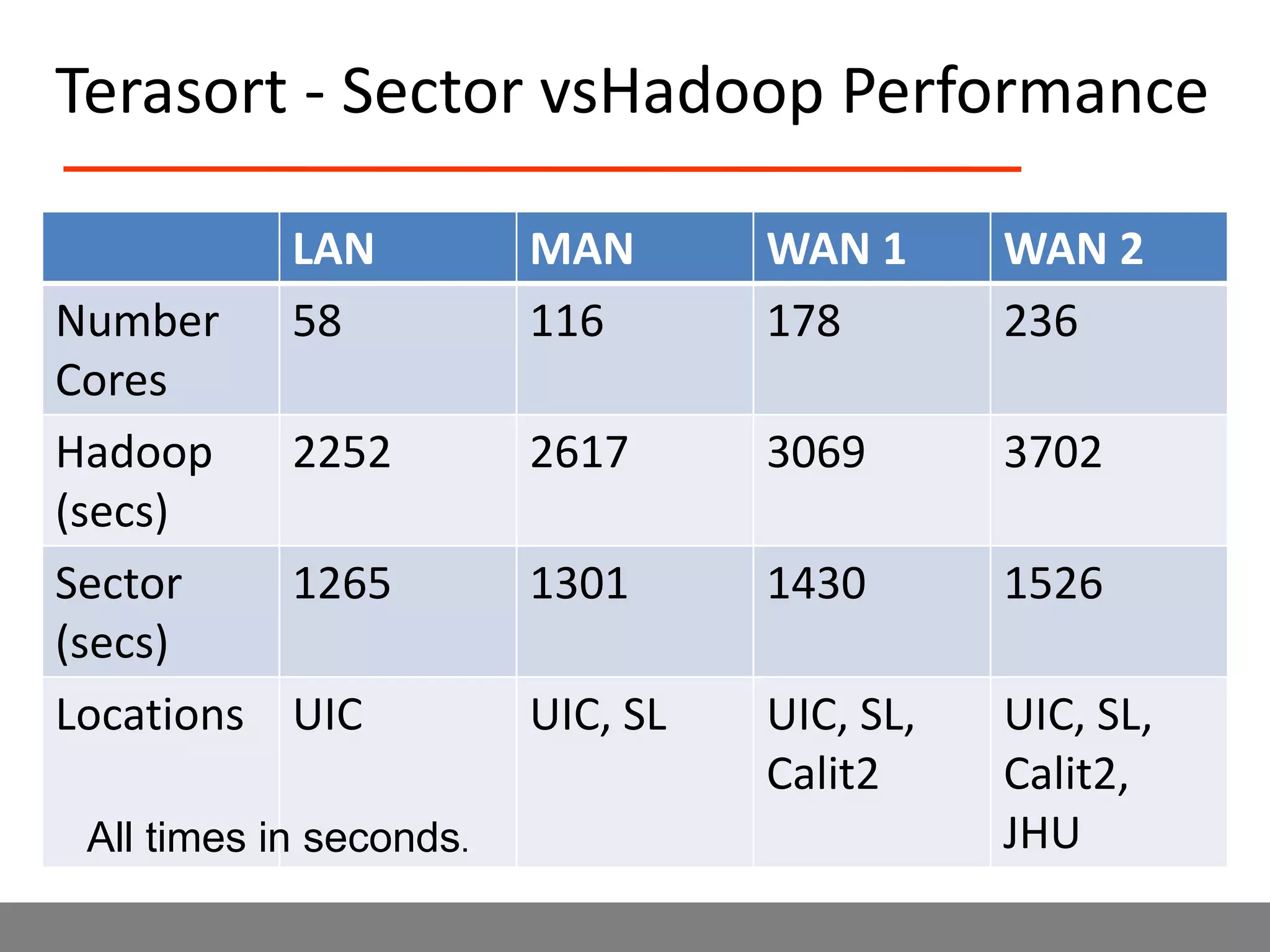

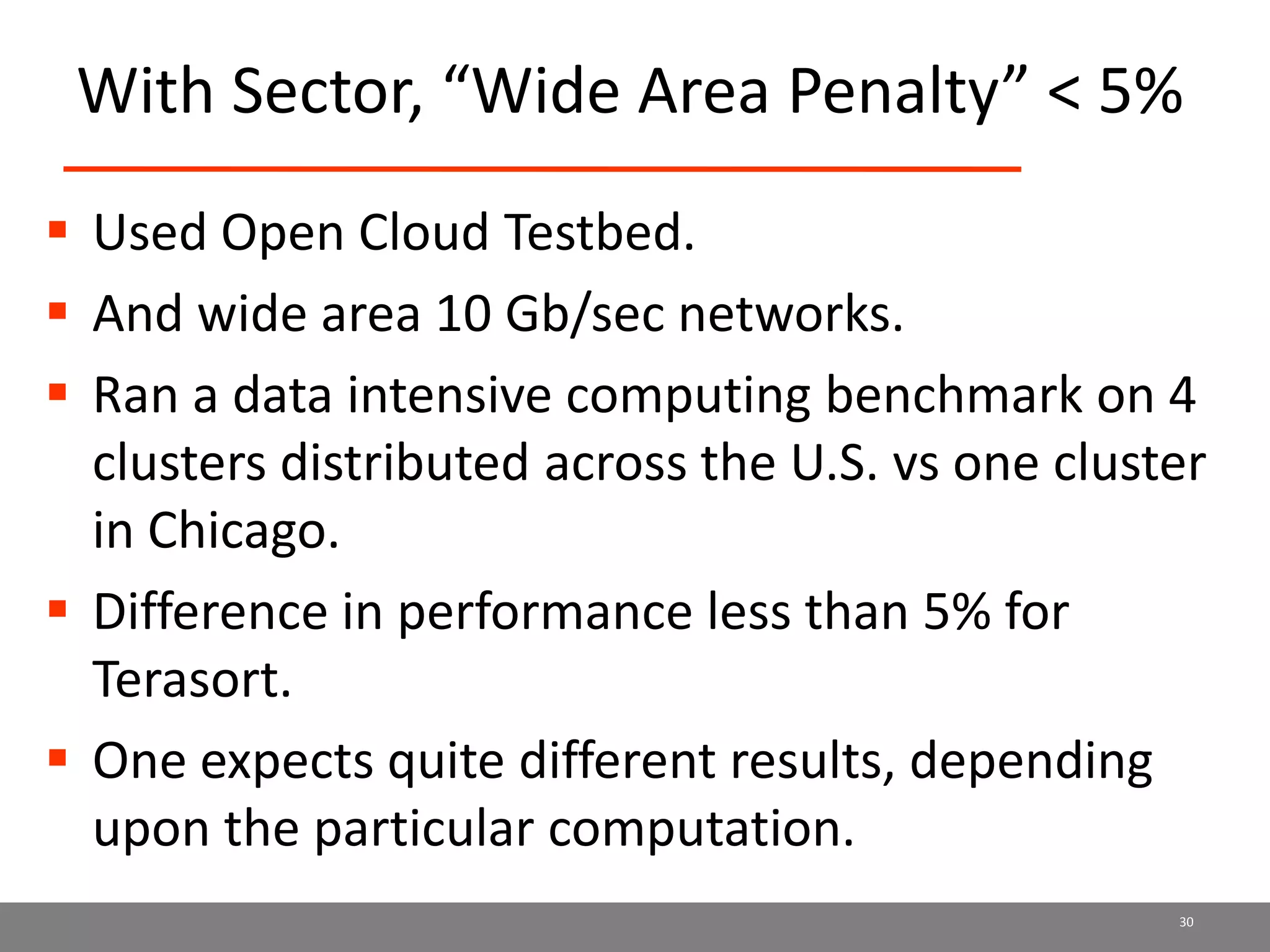

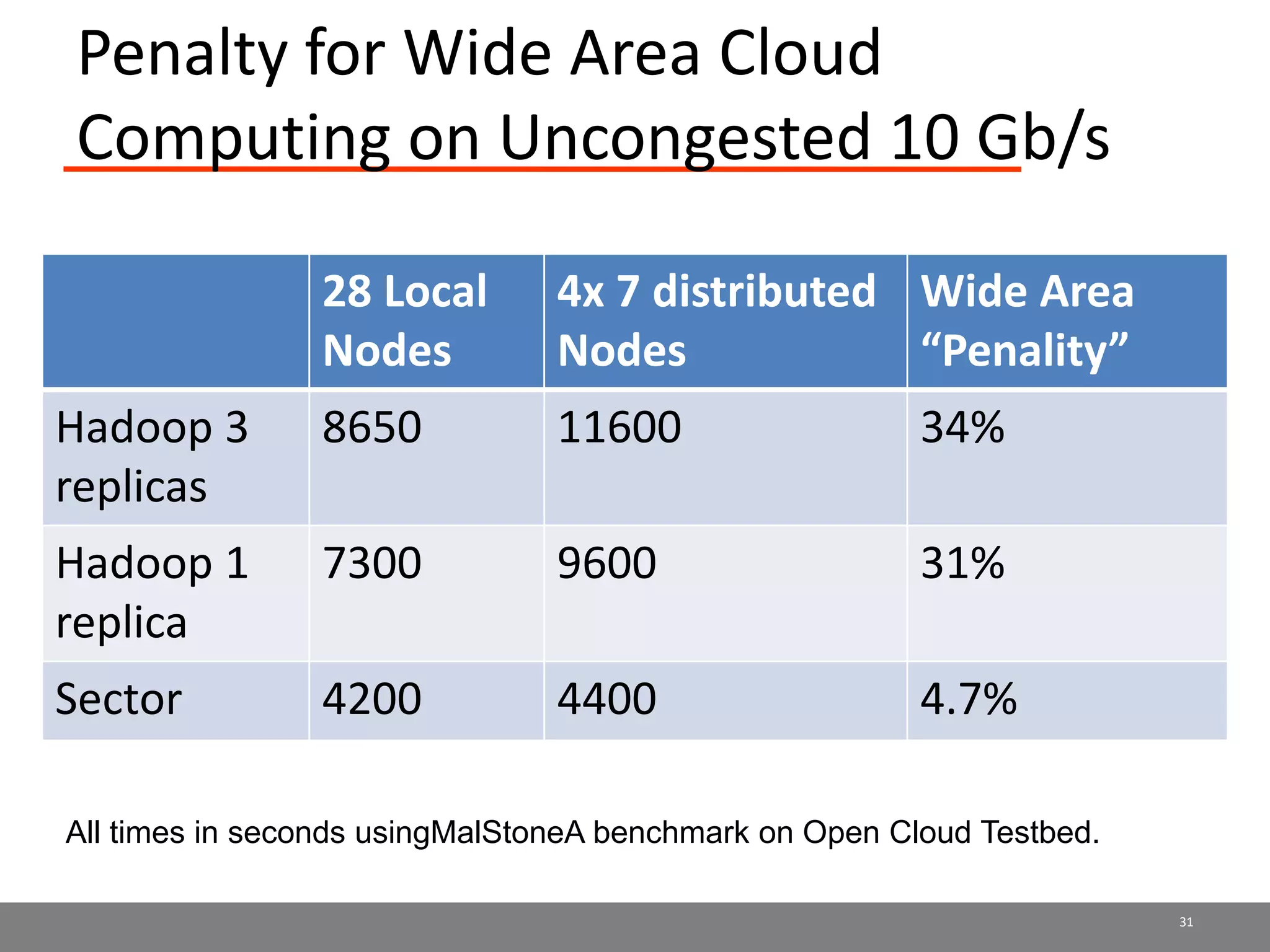

Sector is an open-source cloud platform designed for data-intensive computing. The presentation lists several advantages over Hadoop: it is up to 2x faster, supports user-defined functions, and exploits data locality and network topology. Sector uses a layered architecture consisting of user-defined functions, a distributed file system, and a UDP-based transport protocol. In the reported experiments, Sector outperforms Hadoop on benchmarks, and running it on distributed wide-area clusters connected by 10 Gbps networks incurs a performance penalty of less than 5% compared with a local cluster.
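
To make the idea of user-defined functions combined with data locality more concrete, here is a minimal Python sketch. It is not Sector's API: the segment layout and the names `assign_local` and `run_udf` are hypothetical, chosen only for illustration. Under those assumptions, it shows a scheduler that prefers to process each data segment on the node that already stores it, then applies an arbitrary user-supplied function to every segment.

```python
from typing import Callable, Dict, List

# Hypothetical segment layout (an assumption for this sketch):
# {"id": segment name, "node": node storing it, "records": list of values}
Segment = Dict[str, object]


def assign_local(segments: List[Segment], nodes: List[str]) -> Dict[str, List[str]]:
    """Map each segment to a worker node, preferring the node that stores it.

    If the storing node is not among the available workers, fall back to
    the worker with the fewest segments assigned so far.
    """
    plan: Dict[str, List[str]] = {}
    for seg in segments:
        home = seg["node"]
        if home in nodes:
            target = home  # data-local: no transfer needed
        else:
            target = min(nodes, key=lambda n: len(plan.get(n, [])))
        plan.setdefault(target, []).append(seg["id"])
    return plan


def run_udf(
    segments: List[Segment],
    plan: Dict[str, List[str]],
    udf: Callable[[list], object],
) -> Dict[str, object]:
    """Apply the user-defined function to each segment named in the plan."""
    by_id = {seg["id"]: seg for seg in segments}
    results: Dict[str, object] = {}
    for node, seg_ids in plan.items():
        for seg_id in seg_ids:
            # In a real system this call would execute on `node`;
            # here everything runs in one process.
            results[seg_id] = udf(by_id[seg_id]["records"])
    return results


if __name__ == "__main__":
    segments = [
        {"id": "s0", "node": "A", "records": [1, 2, 3]},
        {"id": "s1", "node": "B", "records": [4, 5]},
        {"id": "s2", "node": "C", "records": [6]},  # node C is not a worker
    ]
    plan = assign_local(segments, ["A", "B"])
    print(plan)
    print(run_udf(segments, plan, sum))
```

The UDF here is simply Python's built-in `sum`; any callable that takes a list of records would work the same way. A real scheduler would also weigh network topology when no data-local worker is available, which this sketch reduces to a least-loaded fallback.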